Introduction to KEDA and Event-Driven Autoscaling

How KEDA extends Kubernetes HPA with 65+ scalers, scale-to-zero, and a two-phase architecture for event-driven pod autoscaling.

Mastering the Kubernetes ecosystem — depth-first, no hype.

Posts by KubeDojo

How KEDA's Kafka and SQS scalers calculate lag and queue depth, with TriggerAuthentication patterns and production edge cases.

Using KEDA's Prometheus scaler to drive autoscaling from any PromQL query — replacing Prometheus Adapter with a simpler, more flexible approach.

How the KEDA HTTP Add-on intercepts traffic to scale HTTP workloads to zero, and when the Prometheus scaler is better.

ScaledJob creates one Kubernetes Job per event, scales dynamically, and lets long-running batch workloads terminate cleanly.

Combining KEDA's event-driven pod scaling with Karpenter's just-in-time node provisioning for a fully reactive, cost-efficient Kubernetes autoscaling stack.

Scraping KEDA operator metrics, building Grafana dashboards for scaling events, and diagnosing common ScaledObject issues in production.

What KubeDojo is and what you'll find here: deep dives into real code, honest explorations of the Kubernetes ecosystem, and structured learning paths to master every certification.

A practical map of the five CNCF Kubernetes certifications — what each one covers, how exams work, and which path fits your career.

How kagent, Agent Sandbox, KEDA, and OPA/Kyverno form the production stack for agentic AI on Kubernetes.

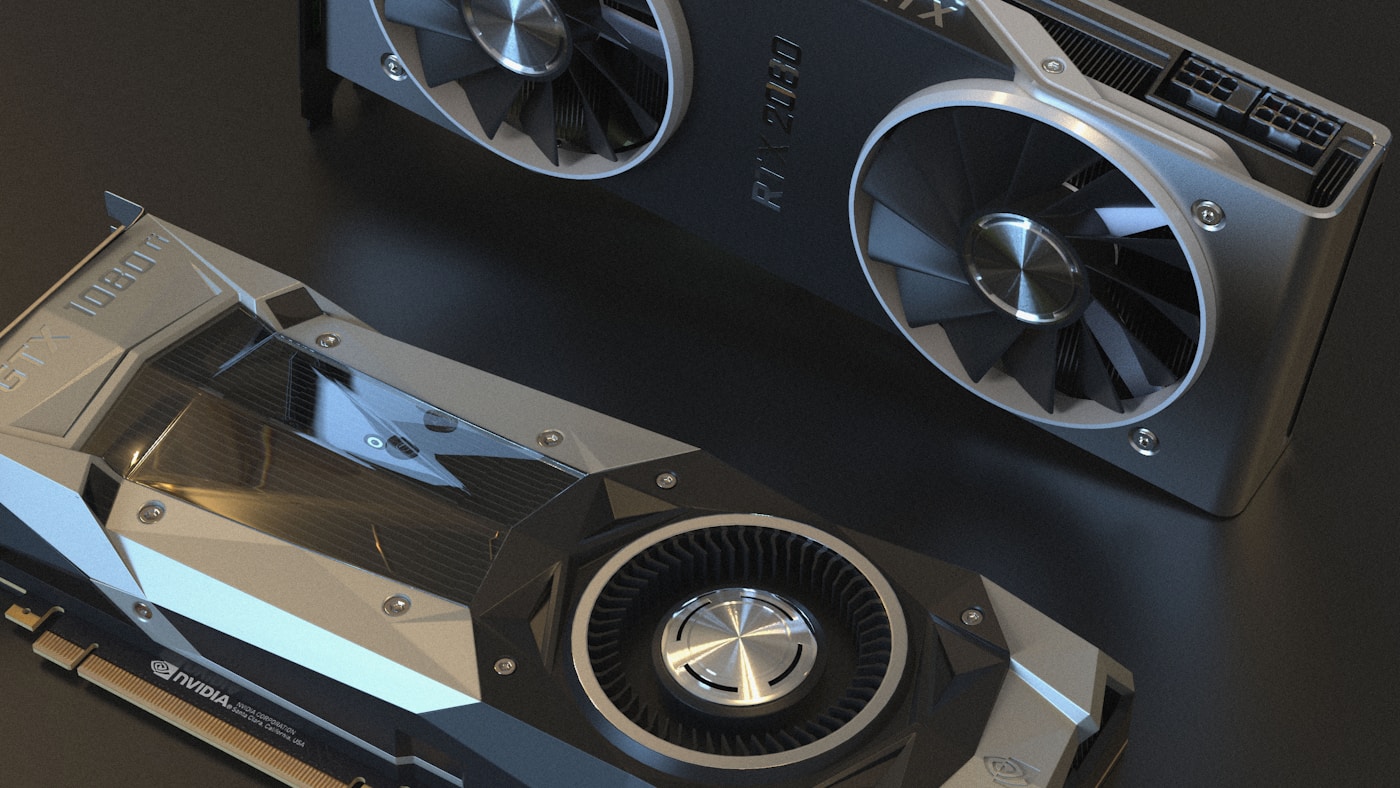

NVIDIA's GPU sharing mechanisms — MIG, time-slicing, and MPS — are gaining traction as teams run multiple inference workloads per GPU.

NVIDIA released AI Cluster Runtime, an open-source project providing validated, version-locked Kubernetes configurations for GPU infrastructure.

Kueue manages GPU quotas, enforces fair sharing across teams, and dispatches jobs to remote HPC clusters — the standard for production AI batch scheduling.

Kubernetes 1.36 decouples scheduling policy from runtime instances with Workload API v1alpha2, standalone PodGroups, and a dedicated group scheduling cycle.

CNCF launched v1.0 of the Kubernetes AI Conformance Program defining baseline capabilities for running AI workloads across conformant clusters.

Deploy vLLM with KServe on Kubernetes: InferenceService CRD, KEDA autoscaling on queue depth, and distributed KV cache with LMCache for production inference.

NVIDIA open-sourced KAI Scheduler (Apache 2.0), a Kubernetes-native GPU scheduling solution originally from the Run:ai platform.

llm-d was accepted as a CNCF Sandbox project, providing Kubernetes-native distributed inference with KV-cache-aware routing, prefill/decode disaggregation, and accelerator-agnostic serving.

Curated KubeCon EU 2026 picks: agentic AI on Kubernetes, sovereign inference, GPU scheduling with Kueue, and platform engineering under pressure.

Production deployment patterns for NVIDIA Dynamo 1.0 on EKS and GKE — disaggregated serving, KV-aware routing, and gotchas from real deployments.

HAMi (CNCF Sandbox) emerged at KubeCon EU 2026 as the reference implementation for GPU resource management across NVIDIA, AMD, Huawei, and Cambricon accelerators.

Gateway API Inference Extension GA standardizes model-aware routing for self-hosted LLMs with KV-cache-aware scheduling and LoRA adapter affinity.

Dynamic Resource Allocation (DRA) went GA in Kubernetes v1.34 and continues to evolve, replacing Device Plugins with richer semantics, including DeviceClass, ResourceClaim, CEL-based filtering, and topology awareness.

Armada treats multiple Kubernetes clusters as a single resource pool for GPU-intensive AI workloads, with global queue management, gang scheduling, and production-scale throughput.

Ingress2Gateway 1.0 provides stable migration from ingress-nginx to Gateway API with 30+ supported annotations and integration-tested translation.

Cloudflare made AI Security for Apps GA with prompt injection protection, while RFC 9457 structured error responses cut AI agent token costs by 98%.

Build teams of specialized AI agents with Docker Agent: declarative YAML config, multi-agent orchestration, and secure execution in sandboxed microVMs with Docker Desktop 4.63+.

How Karpenter's groupless, pod-driven provisioning model solves the scaling limitations that plagued Kubernetes Cluster Autoscaler for years.

A hands-on walkthrough of installing Karpenter on EKS, configuring NodePools and EC2NodeClasses, and hardening the setup for production workloads.

How Karpenter automatically replaces drifted nodes, consolidates underutilized capacity, and respects disruption budgets to keep clusters lean and current.

Dedicated GPU NodePools, cold start fixes for 10GB+ AI images, disruption protection for training jobs, and gang scheduling for distributed workloads.

Spot-to-Spot consolidation, instance diversification, right-sizing pod requests, and the real-world strategies that cut Kubernetes compute costs by 20-40%.

Setting up Prometheus metrics scraping, building Grafana dashboards for node lifecycle events, and diagnosing common Karpenter issues in production.