NVIDIA AI Cluster Runtime: Validated GPU Kubernetes Recipes

Introduction

A fully specialized GPU training stack on Kubernetes touches 268 configuration values across 16 components. Kernel versions, drivers, container runtimes, NCCL parameters, operator settings. Get one cluster working, and you spend days getting the next to match. Upgrade a single component and something downstream breaks. Move to a new cloud provider and start from scratch.

Small differences produce failures that are difficult to diagnose and expensive to reproduce. The GPU Operator version must match the driver version, which must match the kernel version, which must match the OS. This kind of version coupling is exactly why teams end up hand-tuning clusters.

AI Cluster Runtime (AICR) is an open-source project from NVIDIA that publishes validated, version-locked Kubernetes configurations as recipes. Each recipe captures a known-good combination of components tested together for a specific environment. You describe your target, the CLI generates a recipe, validates it against your cluster, and renders deployment-ready Helm charts.

How AI Cluster Runtime Works

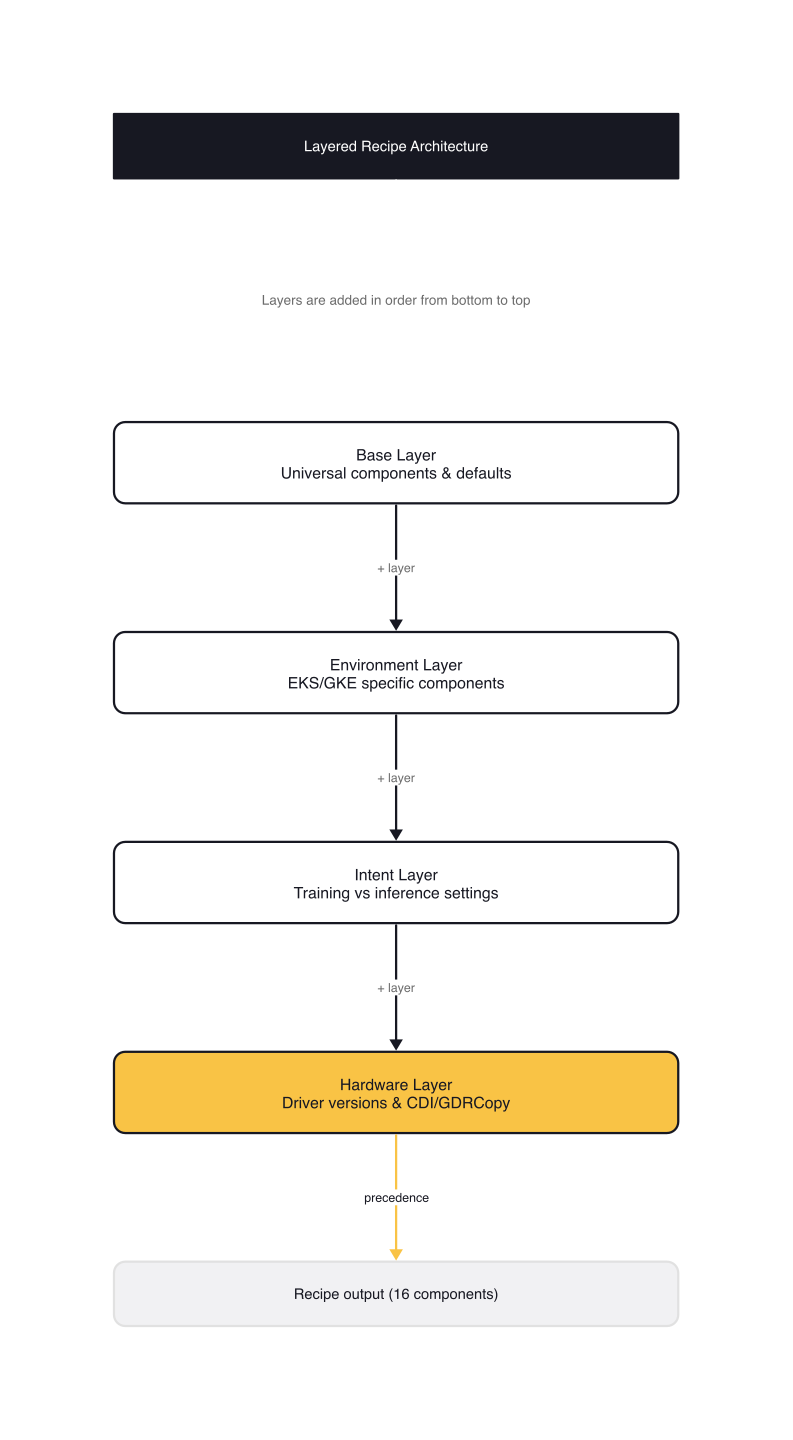

Recipes compose from layers rather than existing as monolithic configurations:

- Base layers define universal components and default versions

- Environment layers add cloud-specific components. On Amazon EKS, that means the EBS CSI driver and EFA plugin. On AKS, the Azure-specific node configurations.

- Intent layers configure workload-specific settings. Training recipes include NCCL tuning parameters. Inference recipes target NVIDIA Dynamo with different resource profiles.

- Hardware layers pin driver versions and enable accelerator-specific features like CDI and GDRCopy for H100 or GB200.

Each layer is added in order, with more specific values taking precedence. The recipe engine resolves the full inheritance chain at generation time:

base → eks → eks-training → h100-eks-training → h100-eks-ubuntu-training

A fully specialized recipe (H100 + EKS + Ubuntu + training + Kubeflow) carries 268 configuration values across 16 components. A generic EKS query returns 200 values. The delta between training and inference intent can swap 5 components and change 41 values, producing completely different deployment stacks from the same base.
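
The precedence rule can be pictured as a deep merge applied left to right along the inheritance chain. The following is a minimal Python sketch of that idea, not AICR's actual implementation; the function names and the layer contents (including the placeholder version strings) are made up for illustration.

```python
from copy import deepcopy
from typing import Any


def _merge_into(target: dict[str, Any], overlay: dict[str, Any]) -> None:
    """Merge overlay into target in place; overlay values take precedence."""
    for key, value in overlay.items():
        if isinstance(value, dict) and isinstance(target.get(key), dict):
            _merge_into(target[key], value)  # recurse into nested mappings
        else:
            target[key] = deepcopy(value)  # scalars and lists are replaced


def merge_layers(*layers: dict[str, Any]) -> dict[str, Any]:
    """Deep-merge layers left to right; later (more specific) layers win."""
    result: dict[str, Any] = {}
    for layer in layers:
        _merge_into(result, layer)
    return result


# Hypothetical layer contents, for illustration only.
base = {"gpu-operator": {"driver": {"version": "default"}, "cdi": {"enabled": False}}}
eks = {"aws-efa": {"enabled": True}}
h100 = {"gpu-operator": {"driver": {"version": "pinned-for-h100"}, "cdi": {"enabled": True}}}

recipe = merge_layers(base, eks, h100)
print(recipe["gpu-operator"]["driver"]["version"])
```

Because every layer is copied rather than referenced, the base layer stays untouched and can be reused for the next recipe.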

Recipe Walkthrough: H100 on EKS for Kubeflow Training

The recipe overlay h100-eks-ubuntu-training-kubeflow.yaml demonstrates the architecture. It inherits from h100-eks-ubuntu-training and adds the Kubeflow Training Operator for distributed training with TrainJob:

    # recipes/overlays/h100-eks-ubuntu-training-kubeflow.yaml
    kind: RecipeMetadata
    apiVersion: aicr.nvidia.com/v1alpha1
    metadata:
      name: h100-eks-ubuntu-training-kubeflow
    spec:
      base: h100-eks-ubuntu-training
      criteria:
        service: eks
        accelerator: h100
        os: ubuntu
        intent: training
        platform: kubeflow
      # Constraint names use fully qualified measurement paths: {type}.{subtype}.{key}
      constraints:
        - name: K8s.server.version
          value: ">= 1.32.4"
        - name: OS.release.ID
          value: ubuntu
        - name: OS.release.VERSION_ID
          value: "24.04"
        - name: OS.sysctl./proc/sys/kernel/osrelease
          value: ">= 6.8"
      componentRefs:
        - name: kubeflow-trainer
          type: Helm
          valuesFile: components/kubeflow-trainer/values.yaml
          manifestFiles:
            - components/kubeflow-trainer/manifests/torch-distributed-cluster-training-runtime.yaml
          dependencyRefs:
            - cert-manager
            - kube-prometheus-stack
            - gpu-operator

The constraints section pins the OS to Ubuntu 24.04 and enforces minimum versions for Kubernetes and the kernel. The dependencyRefs list under each componentRefs entry declares dependencies explicitly: the recipe won't deploy unless cert-manager, the Prometheus stack, and the GPU Operator are present. Every release ships with SLSA Level 3 provenance, signed SBOMs, and image attestations.
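
To make the constraint semantics concrete, here is a rough Python sketch of how a value such as ">= 1.32.4" could be checked against a measured value. The parsing rules (compare only the leading dotted numeric part, treat a bare value as an exact match) are assumptions made for this example; AICR's real matcher may behave differently.

```python
import operator
import re

_OPS = {">=": operator.ge, "<=": operator.le, "==": operator.eq,
        ">": operator.gt, "<": operator.lt}


def _version_tuple(text: str) -> tuple[int, ...]:
    """Leading dotted numeric part of a version: '6.8.0-1021-aws' -> (6, 8, 0)."""
    match = re.match(r"v?(\d+(?:\.\d+)*)", text.strip())
    if match is None:
        raise ValueError(f"not a version: {text!r}")
    return tuple(int(part) for part in match.group(1).split("."))


def _pad(version: tuple[int, ...], width: int) -> tuple[int, ...]:
    """Right-pad with zeros so that 6.8 and 6.8.0 compare as equal."""
    return version + (0,) * (width - len(version))


def satisfies(constraint: str, measured: str) -> bool:
    """Evaluate one constraint value against one snapshot measurement."""
    match = re.match(r"\s*(>=|<=|==|>|<)\s*(.+)", constraint)
    if match is None:
        return constraint.strip() == measured.strip()  # bare value: exact match
    op, wanted = match.groups()
    have, want = _version_tuple(measured), _version_tuple(wanted)
    width = max(len(have), len(want))
    return _OPS[op](_pad(have, width), _pad(want, width))


# Measured values below are invented examples of what a snapshot might hold.
print(satisfies(">= 1.32.4", "v1.33.1"))       # Kubernetes server version
print(satisfies(">= 6.8", "6.5.0-1018-aws"))   # kernel release string
print(satisfies("ubuntu", "debian"))           # OS.release.ID
```

Comparing versions as integer tuples avoids the classic string-comparison trap where "1.9" sorts after "1.32".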

The aicr CLI

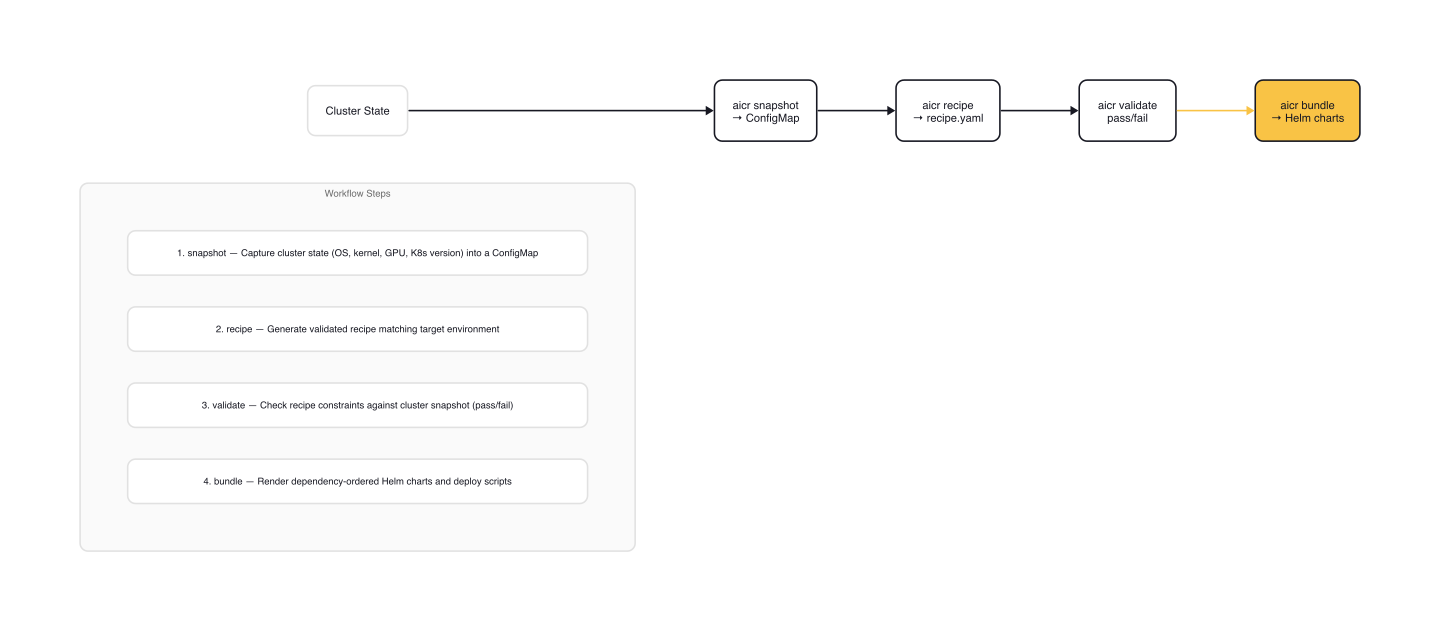

The aicr CLI implements a four-step workflow: snapshot, recipe, validate, bundle. A fifth command, query, lets you extract individual hydrated values from a recipe match.

Snapshot

Capture your cluster's current state. The CLI deploys a short-lived Job onto a target node, collects system measurements in parallel (OS, GPU, Kubernetes, systemd), and writes the results to a ConfigMap or local file:

    # aicr snapshot -- capture cluster state into a ConfigMap
    aicr snapshot \
      --node-selector nodeGroup=gpu-worker \
      --output cm://gpu-operator/aicr-snapshot

The snapshot agent collects OS release, kernel version, GPU hardware, driver version, CUDA version, MIG settings, Kubernetes version, and installed operators. Each collector runs concurrently with a 30-second timeout. The snapshot becomes the baseline for validation.
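
The concurrent-collectors-with-timeout pattern looks roughly like the Python sketch below. It is only an illustration of the pattern: the collector names and return values are invented, and the real snapshot agent is not written this way.

```python
import time
from concurrent.futures import ThreadPoolExecutor, wait
from typing import Any, Callable


def collect_all(collectors: dict[str, Callable[[], Any]],
                timeout_s: float = 30.0) -> dict[str, Any]:
    """Run every collector concurrently under one shared deadline."""
    pool = ThreadPoolExecutor(max_workers=max(1, len(collectors)))
    futures = {pool.submit(fn): name for name, fn in collectors.items()}
    done, not_done = wait(futures, timeout=timeout_s)

    results: dict[str, Any] = {}
    for future in done:
        error = future.exception()
        results[futures[future]] = {"error": str(error)} if error else future.result()
    for future in not_done:
        results[futures[future]] = {"error": f"timed out after {timeout_s}s"}

    pool.shutdown(wait=False)  # do not block on stragglers
    return results


# Hypothetical collectors; the second one simulates a hang.
snapshot = collect_all(
    {
        "os": lambda: {"ID": "ubuntu", "VERSION_ID": "24.04"},
        "gpu": lambda: time.sleep(1.0),
    },
    timeout_s=0.2,
)
print(snapshot)
```

A slow collector costs at most the timeout and is recorded as an error, so one stuck probe does not hold up the rest of the snapshot.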

Recipe

Generate a validated recipe for your target environment:

    # aicr recipe -- generate a recipe from environment parameters
    aicr recipe \
      --service eks \
      --accelerator h100 \
      --intent training \
      --os ubuntu \
      --platform kubeflow \
      --output recipe.yaml

The recipe command matches your parameters against the overlay library, resolves the inheritance chain, and merges all layers into a single recipe with exact component versions and settings. Recipe generation runs entirely in memory and completes in under 100ms.

You can also generate recipes from a snapshot, letting the CLI detect your environment automatically:

    # Generate recipe from live cluster state
    aicr recipe \
      --snapshot cm://gpu-operator/aicr-snapshot \
      --intent training \
      --platform kubeflow \
      --output recipe.yaml

Query

Inspect a specific hydrated value without generating the full recipe:

    # aicr query -- extract a single configuration value
    aicr query \
      --service aks --accelerator h100 --os ubuntu --intent training \
      --selector components.gpu-operator.values.driver.version

This is useful for CI pipelines that need a specific version number or for debugging which value a particular overlay sets.
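
Conceptually, a dotted selector is a walk through nested mappings. The Python sketch below shows that walk over an invented recipe structure; the `select` helper and the placeholder version string are assumptions for illustration, and the real selector syntax may support more than plain dotted keys.

```python
from typing import Any


def select(recipe: dict[str, Any], selector: str) -> Any:
    """Follow a dotted selector through nested mappings."""
    node: Any = recipe
    for key in selector.split("."):
        if not isinstance(node, dict) or key not in node:
            raise KeyError(f"{selector!r}: no key {key!r}")
        node = node[key]
    return node


# Hypothetical hydrated recipe; the version string is a placeholder.
hydrated = {
    "components": {
        "gpu-operator": {"values": {"driver": {"version": "example-version"}}}
    }
}

print(select(hydrated, "components.gpu-operator.values.driver.version"))
```

Note that this simple split cannot address keys that themselves contain dots.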

Validate

Validate a recipe against your cluster snapshot. Validation runs in phases:

- Readiness: Compares recipe constraints against snapshot measurements (Kubernetes version, OS, kernel, GPU hardware). Fails immediately if constraints don't match.

- Deployment: Checks component health after installation.

- Conformance: Verifies requirements from the CNCF Certified Kubernetes AI Conformance Program: DRA support, gang scheduling, job-level networking.

    # aicr validate -- check recipe against cluster state
    aicr validate \
      --recipe recipe.yaml \
      --snapshot snapshot.yaml \
      --phase all \
      --output report.json

Validation results use the CTRF (Common Test Report Format), making them consumable by CI/CD systems. The command exits non-zero on failures by default.
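
Since CTRF is plain JSON, a CI step can inspect the report with a few lines of code. The Python sketch below follows the general CTRF layout (a `results` object holding `tool`, `summary`, and `tests`); the tool name, test names, and failure message are invented, so check them against a real report.json before relying on them.

```python
import json

# Invented sample in the general CTRF shape; real reports will differ.
SAMPLE_REPORT = json.loads("""
{
  "results": {
    "tool": {"name": "aicr"},
    "summary": {"tests": 3, "passed": 2, "failed": 1, "pending": 0,
                "skipped": 0, "other": 0, "start": 0, "stop": 1},
    "tests": [
      {"name": "readiness/k8s-version", "status": "passed", "duration": 3},
      {"name": "readiness/os-release", "status": "passed", "duration": 2},
      {"name": "readiness/kernel-version", "status": "failed", "duration": 2,
       "message": "hypothetical failure message"}
    ]
  }
}
""")


def failed_tests(report: dict) -> list[str]:
    """Names of every test whose CTRF status is 'failed'."""
    return [t["name"] for t in report["results"]["tests"] if t["status"] == "failed"]


def gate(report: dict) -> int:
    """Exit code for a CI step: 0 when nothing failed, 1 otherwise."""
    failures = failed_tests(report)
    for name in failures:
        print(f"FAILED {name}")
    return 1 if failures else 0


exit_code = gate(SAMPLE_REPORT)
```

In a pipeline you would load the file written by `--output report.json` with `json.load` and pass the result of `gate` to `sys.exit`.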

Bundle

Render the recipe into deployment-ready artifacts:

    # aicr bundle -- render Helm charts with dependency ordering
    aicr bundle \
      --recipe recipe.yaml \
      --system-node-selector nodeGroup=system-pool \
      --accelerated-node-selector nodeGroup=gpu-worker \
      --accelerated-node-toleration nvidia.com/gpu=present:NoSchedule \
      --output ./bundles

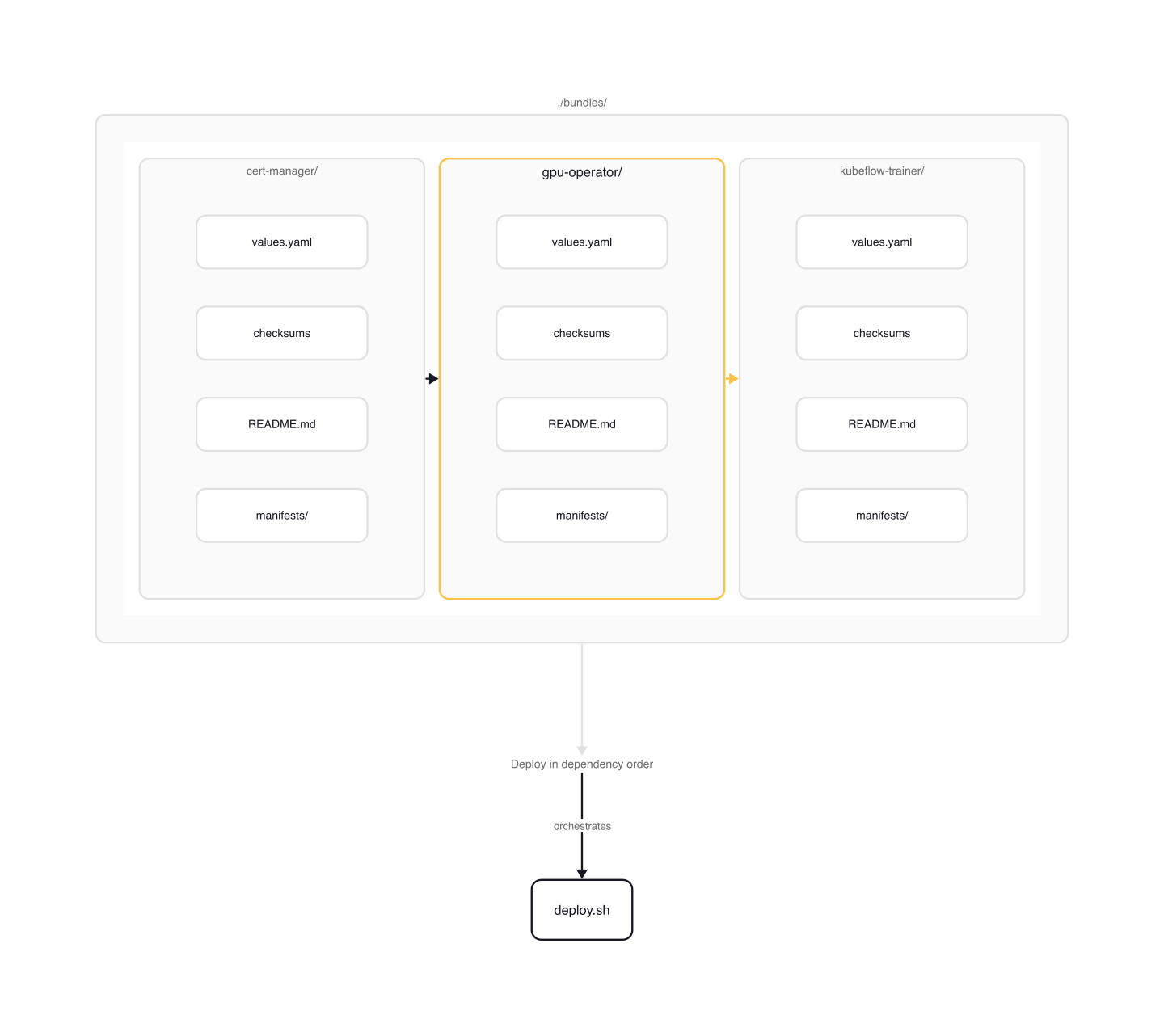

The output is a directory with one folder per component, each containing values.yaml, integrity checksums, a README, and optional custom manifests. Components are ordered by their dependency graph: cert-manager before GPU Operator, GPU Operator before Kubeflow Trainer.
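
Ordering by dependency graph is a topological sort. As a small Python sketch, the standard library's `graphlib` can derive a valid install order from the dependencyRefs shown in the walkthrough recipe. The edge from gpu-operator to cert-manager is inferred from the ordering described above rather than taken from a recipe file, so treat it as an assumption.

```python
from graphlib import TopologicalSorter

# component -> set of components that must be installed first
deps = {
    "cert-manager": set(),
    "kube-prometheus-stack": set(),
    "gpu-operator": {"cert-manager"},  # assumed edge, see lead-in
    "kubeflow-trainer": {"cert-manager", "kube-prometheus-stack", "gpu-operator"},
}

# static_order() yields each component only after all of its dependencies.
order = list(TopologicalSorter(deps).static_order())
print(order)
```

Components with no dependency between them (cert-manager and kube-prometheus-stack here) may come out in either order; only the dependency edges are guaranteed.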

Bundles and Deployment

The bundler supports two deployers that produce different output structures.

Helm deployer (default) generates per-component directories with a root deploy.sh script:

    bundles/
    ├── README.md           # Root deployment guide with ordered steps
    ├── deploy.sh           # Deploys all components in dependency order
    ├── recipe.yaml         # Copy of the input recipe
    ├── checksums.txt       # SHA256 checksums of all files
    ├── cert-manager/
    │   ├── values.yaml
    │   └── README.md
    ├── gpu-operator/
    │   ├── values.yaml
    │   ├── README.md
    │   └── manifests/
    │       └── dcgm-exporter.yaml
    └── kubeflow-trainer/
        ├── values.yaml
        └── README.md
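
Before running deploy.sh it can be worth confirming that nothing in the bundle changed after it was generated. The Python sketch below assumes checksums.txt uses the common `sha256sum` layout (hex digest, whitespace, relative path); that layout is a guess, so adjust the parsing if the real file differs. The temporary directory at the end stands in for a real bundle.

```python
import hashlib
import tempfile
from pathlib import Path


def verify_bundle(bundle_dir: str, manifest: str = "checksums.txt") -> list[str]:
    """Return the relative paths whose SHA256 no longer matches the manifest."""
    root = Path(bundle_dir)
    mismatches: list[str] = []
    for line in (root / manifest).read_text().splitlines():
        if not line.strip():
            continue
        expected, rel_path = line.split(maxsplit=1)
        rel_path = rel_path.lstrip("*")  # sha256sum marks binary mode with '*'
        target = root / rel_path
        actual = hashlib.sha256(target.read_bytes()).hexdigest() if target.is_file() else None
        if actual != expected.lower():
            mismatches.append(rel_path)
    return mismatches


# Self-contained demo against a throwaway directory.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "gpu-operator").mkdir()
    values = root / "gpu-operator" / "values.yaml"
    values.write_text("driver:\n  enabled: true\n")
    digest = hashlib.sha256(values.read_bytes()).hexdigest()
    (root / "checksums.txt").write_text(f"{digest}  gpu-operator/values.yaml\n")

    clean = verify_bundle(tmp)       # nothing changed yet
    values.write_text("driver:\n  enabled: false\n")
    tampered = verify_bundle(tmp)    # file edited after bundling

print(clean, tampered)
```

A missing file is reported the same way as a modified one, which is usually what you want in a pre-deploy gate.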

ArgoCD deployer generates Application manifests with sync-wave annotations that enforce dependency order:

    # Generate ArgoCD Application manifests
    aicr bundle \
      --recipe recipe.yaml \
      --deployer argocd \
      --repo https://github.com/my-org/gpu-infra.git \
      --output ./bundles

Each component gets its own ArgoCD Application. The generated manifests use multi-source Applications to pull the Helm chart from upstream and the values file from your GitOps repo:

    # bundles/gpu-operator/argocd/application.yaml
    apiVersion: argoproj.io/v1alpha1
    kind: Application
    metadata:
      name: gpu-operator
      annotations:
        argocd.argoproj.io/sync-wave: "1"  # After cert-manager (wave 0)
    spec:
      sources:
        - repoURL: https://helm.ngc.nvidia.com/nvidia
          targetRevision: v25.3.3
          chart: gpu-operator
          helm:
            valueFiles:
              - $values/gpu-operator/values.yaml
        - repoURL: https://github.com/my-org/gpu-infra.git
          targetRevision: main
          ref: values

An app-of-apps.yaml at the root ties all components together. For air-gapped environments, bundles can be published as OCI images and pulled without internet access.
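
One simple way to derive sync waves from a dependency graph is to give each component a wave equal to the length of its longest dependency chain. The Python sketch below does that; it reproduces the wave 0 and wave 1 values shown in the manifest above, but it is only a guess at the approach, and both the `sync_waves` helper and the gpu-operator to cert-manager edge are assumptions.

```python
def sync_waves(deps: dict[str, set[str]]) -> dict[str, int]:
    """Wave = length of the longest dependency chain below a component."""
    waves: dict[str, int] = {}

    def wave_of(name: str, path: tuple[str, ...] = ()) -> int:
        if name in path:
            raise ValueError(f"dependency cycle: {' -> '.join(path + (name,))}")
        if name not in waves:
            below = [wave_of(dep, path + (name,)) for dep in deps.get(name, set())]
            waves[name] = 1 + max(below, default=-1)  # no dependencies -> wave 0
        return waves[name]

    for component in deps:
        wave_of(component)
    return waves


# component -> components that must sync first (gpu-operator edge is assumed)
deps = {
    "cert-manager": set(),
    "kube-prometheus-stack": set(),
    "gpu-operator": {"cert-manager"},
    "kubeflow-trainer": {"cert-manager", "kube-prometheus-stack", "gpu-operator"},
}

waves = sync_waves(deps)
for name, wave in sorted(waves.items(), key=lambda item: item[1]):
    print(f'{name}: argocd.argoproj.io/sync-wave: "{wave}"')
```

ArgoCD syncs lower waves first and waits for them to become healthy, so components in the same wave can roll out in parallel.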

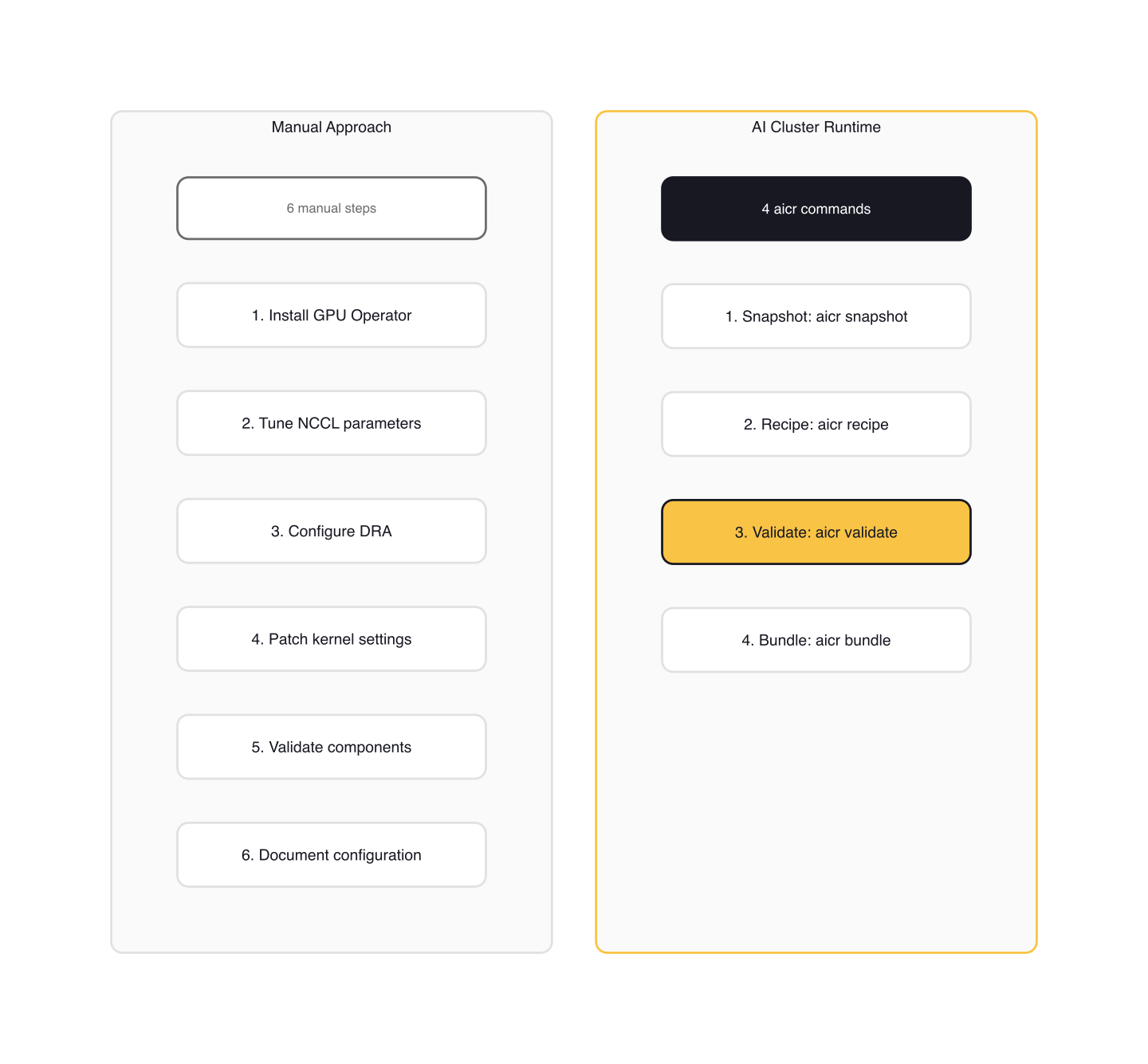

Comparison: Recipes vs Manual Setup

Manual Approach

Setting up a GPU training cluster by hand requires at minimum:

- Install the NVIDIA GPU Operator with the correct driver version for your GPU and kernel

- Tune NCCL parameters for your interconnect topology (NVLink, InfiniBand, EFA)

- Configure DRA (Dynamic Resource Allocation) or the legacy device plugin for GPU scheduling

- Patch kernel settings for GPU passthrough and huge pages

- Install supporting components (cert-manager, Prometheus, node feature discovery)

- Validate each component individually

- Document the configuration for reproducibility

Each step introduces version coupling. The GPU Operator version must match the driver version, which must match the kernel version, which must match the OS. A single mismatch can produce silent failures: workloads schedule but underperform, or schedule on the wrong GPU partition.

AI Cluster Runtime Approach

AICR consolidates this into four commands:

    aicr snapshot --output snapshot.yaml
    aicr recipe --service eks --accelerator h100 --intent training \
      --os ubuntu --platform kubeflow --output recipe.yaml
    aicr validate --recipe recipe.yaml --snapshot snapshot.yaml
    aicr bundle --recipe recipe.yaml --output ./bundles && ./bundles/deploy.sh

The recipe engine resolves all version coupling. Every component version in the recipe has been tested together against real clusters. The validation step catches mismatches before deployment, not after.

Gotchas

Constraint Mismatches

Recipe constraints must match your cluster snapshot or validation fails at the readiness phase. Common mismatches:

- Kubernetes version below minimum (the H100 EKS recipe requires >= 1.32.4)

- OS release mismatch (Ubuntu required, Debian detected)

- Kernel version below threshold (>= 6.8 for GPU passthrough features)

- GPU hardware not matching the recipe's accelerator specification

Readiness failures are the cheapest: they happen before any deployment, so there's nothing to roll back.

Validation Phase Failures

Each validation phase has different remediation paths:

- Readiness failures require upgrading the cluster or selecting a different recipe

- Deployment failures (component health checks) indicate installation problems: check operator logs, node readiness, and resource availability

- Conformance failures suggest configuration gaps against the CNCF AI Conformance requirements (DRA, gang scheduling, job-level networking)

Use --phase readiness to run only pre-deployment checks during development, and --phase all in CI to catch the full spectrum.

MIG Strategy Selection

MIG (Multi-Instance GPU) partitions a physical GPU into isolated instances for multi-tenant workloads. The recipe must specify the correct MIG strategy. The single strategy expects every GPU on a node to use the same MIG profile and advertises the instances as ordinary nvidia.com/gpu resources. The mixed strategy allows different partition sizes on the same node and advertises each profile as its own resource type. Choosing the wrong strategy means workloads either can't schedule or waste GPU capacity.

Private Overlays

If your organization has custom configurations (internal registries, specific kernel modules, non-standard node labels), use the --data flag to overlay external recipe directories at runtime:

    # Overlay organization-specific recipes without forking the repo
    aicr recipe \
      --data ./internal-recipes \
      --service eks --accelerator h100 --intent training \
      --output recipe.yaml

Private overlays merge with public recipes using the same precedence rules. This avoids maintaining a fork while keeping organization-specific tuning.

Alpha Scope

AICR is alpha software with a narrow support matrix. As of this release: Ubuntu only (no RHEL, Rocky, or Bottlerocket), H100 and GB200 only (no A100, L40S, or T4), and three managed Kubernetes services (EKS, AKS 1.34+, GKE) plus Kind. If your production fleet runs A100s on RHEL, AICR won't generate a recipe for you today. The overlay library is designed to grow through community contributions, but current coverage reflects what NVIDIA has validated internally.

The recipe model also assumes you're deploying the full component stack. If you already run cert-manager or Prometheus and just need GPU Operator configuration, you need to extract the relevant component from the bundle rather than deploying everything. The --data flag helps here: you can override specific components while keeping the rest of the validated recipe.

Wrap-up

AI Cluster Runtime turns GPU cluster configuration from a manual process into a reproducible pipeline. The aicr CLI captures cluster state, generates validated recipes, checks them against your environment, and renders deployment-ready artifacts for Helm or ArgoCD.

The alpha scope is narrow: H100 and GB200 on Ubuntu, across EKS, AKS, GKE, and Kind. If that matches your environment, the value is immediate. If not, watch the overlay library. Training recipes target Kubeflow Trainer, inference recipes target NVIDIA Dynamo, and the project is designed for community contributions that expand coverage to new hardware, operating systems, and Kubernetes distributions.

If you're running GPU workloads on Kubernetes and spending time debugging version mismatches, start with aicr snapshot on an existing cluster. The snapshot alone tells you exactly what you're running. Comparing it against a validated recipe shows where your configuration drifts.
