NVIDIA KAI Scheduler: Open-Source GPU-Aware Kubernetes Scheduling

Introduction

GPU workloads are exploding across organizations. Training large language models, running inference APIs, processing video streams—these are all GPU-intensive workloads that demand efficient resource management. Yet Kubernetes treats GPUs as indivisible resources. You either get a whole GPU or you get nothing. This rigidity creates severe fragmentation in multi-tenant GPU clusters.

You've seen it in production. A team requests four GPUs for an interactive debugging session. Another team needs three GPUs for distributed training. The first team holds onto those four GPUs for hours, even though they only need two for most of that time. The second team sits idle, waiting for GPUs that are either allocated but unused or free but scattered across nodes in pieces too small to fit the job.

NVIDIA solved this by open-sourcing KAI Scheduler under the Apache 2.0 license. Built on the kube-batch foundation and originally developed for the Run:ai platform, KAI Scheduler brings enterprise-grade GPU scheduling to the community. It supports fractional GPU allocation, queue-based resource management, bin packing, spread scheduling, and topology awareness.

This article covers how KAI Scheduler manages GPU workloads at scale. You'll learn about queues as scheduling primitives, how to configure resource quotas versus limits, and real production patterns for multi-tenant GPU clusters. I'll also show you how to test custom schedulers safely without risking your production environment.

Section 1: What is KAI Scheduler?

KAI Scheduler is a Kubernetes-native scheduler designed specifically for AI workloads. Unlike the default kube-scheduler, which only counts GPUs as whole-number extended resources, KAI Scheduler understands GPU topology and can split a single GPU among multiple workloads.

The scheduler runs alongside other schedulers on the same cluster. Kubernetes supports multiple schedulers through the schedulerName field on pod specifications. Each scheduler watches the API server for pods that explicitly select it.

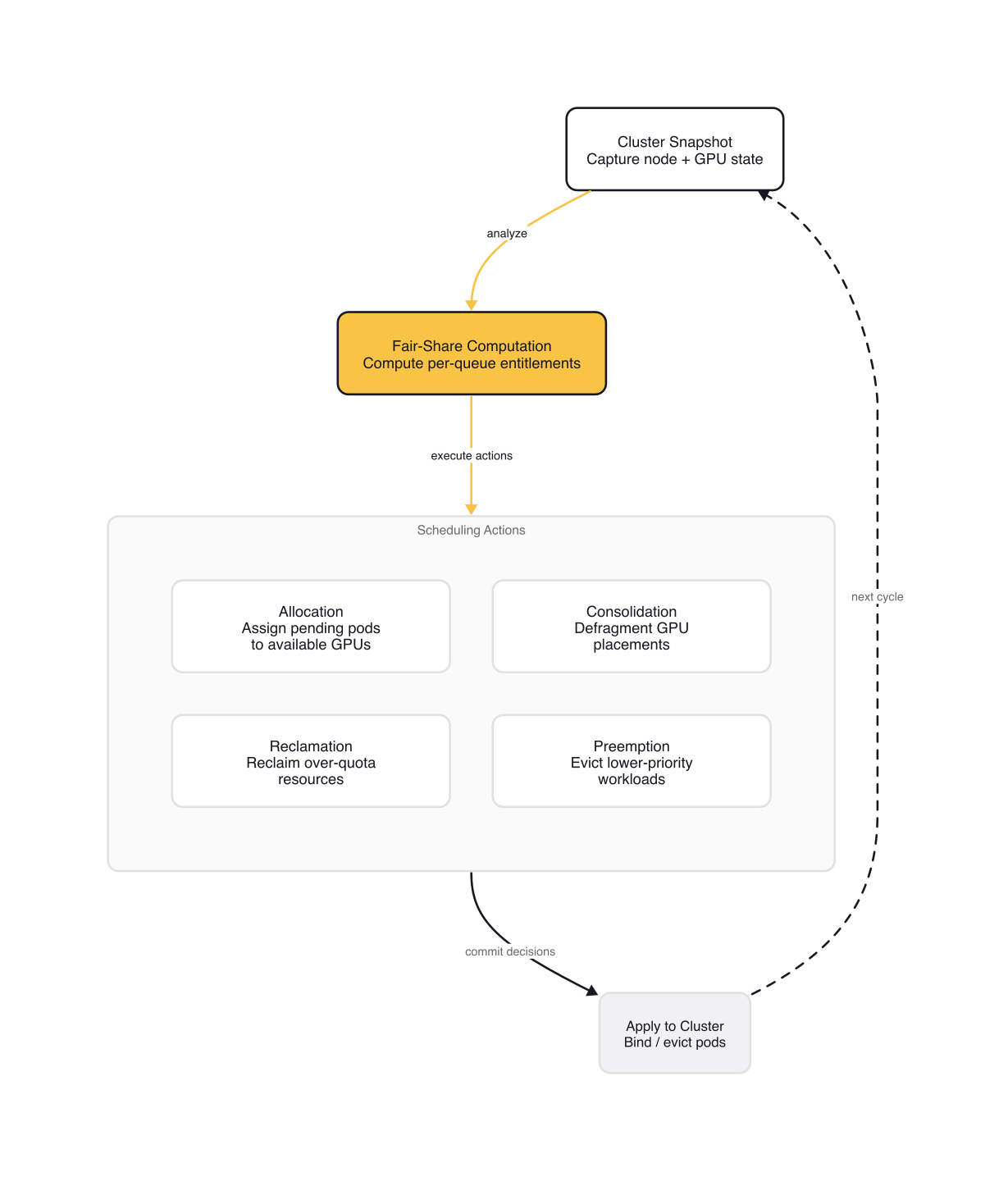

KAI Scheduler operates in an infinite loop. It captures a snapshot of the cluster state, computes optimal resource distribution using fair-share algorithms, then applies targeted scheduling actions. The four main actions are allocation, consolidation, reclamation, and preemption.

Allocation binds pending jobs to nodes when resources are available. KAI Scheduler orders jobs by priority and attempts to fit them into available slots. If a job requires contiguous GPUs, the scheduler finds nodes with sufficient contiguous blocks.
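As a rough illustration of the allocation step, the sketch below orders pending jobs by priority and places each one on the first node with enough free GPUs. The `Job` and `Node` types and the first-fit policy are assumptions made for this example, not KAI Scheduler's actual data structures or placement logic.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    priority: int
    gpus: int


@dataclass
class Node:
    name: str
    free_gpus: int


def allocate(pending, nodes):
    """Bind pending jobs to nodes, highest priority first (first-fit)."""
    bindings = {}
    for job in sorted(pending, key=lambda j: j.priority, reverse=True):
        for node in nodes:
            if node.free_gpus >= job.gpus:
                node.free_gpus -= job.gpus
                bindings[job.name] = node.name
                break  # job placed; move on to the next one
    return bindings


jobs = [Job("notebook", 50, 1), Job("train", 100, 4), Job("batch", 10, 4)]
nodes = [Node("node-a", 4), Node("node-b", 2)]
bindings = allocate(jobs, nodes)
print(bindings)  # {'train': 'node-a', 'notebook': 'node-b'}
```

The `batch` job stays pending because no node has four free GPUs left once the higher-priority jobs are placed.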

Consolidation reallocates running workloads to reduce fragmentation. Suppose a pending job needs four GPUs on a single node, but no node has that many contiguous free GPUs. The scheduler moves running pods from one node to another, freeing up contiguous space. This is the consolidation action.
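The situation that calls for consolidation can be expressed as a simple check: the cluster has enough free GPUs in total, but no single node can host the job. The function name `needs_consolidation` and the per-node dictionary are hypothetical; this sketch only detects the fragmented state and does not model how the scheduler decides which pods to move.

```python
def needs_consolidation(free_gpus_per_node, gpus_needed):
    """True when total free GPUs suffice but no single node can host the job."""
    fits_on_one_node = any(
        free >= gpus_needed for free in free_gpus_per_node.values()
    )
    enough_in_total = sum(free_gpus_per_node.values()) >= gpus_needed
    return enough_in_total and not fits_on_one_node


free = {"node-a": 2, "node-b": 2, "node-c": 1}
print(needs_consolidation(free, 4))  # True: 5 GPUs free, but at most 2 per node
print(needs_consolidation(free, 2))  # False: node-a can take the job as-is
```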

Reclamation evicts jobs from queues consuming more than their fair share. If one team monopolizes resources, reclamation identifies which pods to evict to restore balance across queues.
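To make the idea concrete, here is a minimal sketch that finds the queues a reclamation pass would look at first. The queue names, the fair-share numbers, and the "largest excess first" ordering are all assumptions for illustration; the real scheduler's victim selection is more involved.

```python
def over_share_queues(allocated, fair_share):
    """Return queues using more GPUs than their fair share, largest excess first."""
    excess = {q: allocated[q] - fair_share.get(q, 0) for q in allocated}
    over = [q for q, e in excess.items() if e > 0]
    return sorted(over, key=lambda q: excess[q], reverse=True)


allocated = {"team-a": 6, "team-b": 1, "team-c": 3}
fair_share = {"team-a": 4, "team-b": 3, "team-c": 2.5}
candidates = over_share_queues(allocated, fair_share)
print(candidates)  # ['team-a', 'team-c']
```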

Preemption handles lower-priority jobs within the same queue. High-priority pending jobs can preempt lower-priority running jobs in their queue, ensuring critical workloads aren't starved.
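A simplified view of preemption within one queue: pick the lowest-priority running jobs until enough GPUs are freed for the pending job, and give up if that is not possible. The tuple layout and the function name `pick_victims` are hypothetical, and the sketch ignores node placement entirely.

```python
def pick_victims(running, pending_priority, gpus_needed):
    """Choose lower-priority jobs from the same queue to free gpus_needed GPUs.

    running is a list of (name, priority, gpus) tuples.
    Returns an empty list when preemption cannot free enough GPUs.
    """
    candidates = sorted(
        (job for job in running if job[1] < pending_priority),
        key=lambda job: job[1],  # lowest priority is evicted first
    )
    victims, freed = [], 0
    for name, _priority, gpus in candidates:
        if freed >= gpus_needed:
            break
        victims.append(name)
        freed += gpus
    return victims if freed >= gpus_needed else []


running = [("eval", 20, 1), ("sweep", 10, 2), ("prod-train", 90, 4)]
print(pick_victims(running, pending_priority=50, gpus_needed=3))  # ['sweep', 'eval']
print(pick_victims(running, pending_priority=50, gpus_needed=4))  # []
```

The second call returns nothing because `prod-train` outranks the pending job and the two lower-priority jobs only hold three GPUs between them.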

The scheduling cycle repeats continuously, adapting to changing workload demands in real time. This dynamic approach helps ensure efficient GPU allocation without constant manual intervention.

KAI Scheduler addresses three specific challenges that traditional schedulers struggle with:

Managing fluctuating GPU demands — Workloads change rapidly. An interactive exploration might need one GPU, then suddenly require three for distributed training. KAI Scheduler recalculates fair-share values and adjusts quotas in real time.

Reduced wait times — ML engineers need compute access quickly. The scheduler combines gang scheduling, GPU sharing, and hierarchical queuing so tasks launch as soon as resources align with priorities and fairness.

Resource guarantees — In shared clusters, some researchers secure more GPUs than necessary early in the day. KAI Scheduler enforces resource guarantees while dynamically reallocating idle resources to other workloads.

Section 2: Core Concepts

Queues as Scheduling Primitives

Queues are the foundation of KAI Scheduler's resource management. Each queue has specific properties that guide resource allocation: quota, over-quota weight, limit, and queue priority.

Only leaf queues—queues with no children—can schedule jobs. Parent queues serve as organizational units for resource distribution among their children.

Every queue defines a quota for each resource type. Quota is the baseline guaranteed allocation. For example, a queue with gpu.quota: 1 is guaranteed one GPU. Any remaining resources are distributed among queues based on their over-quota weight.

You can also set a limit on resource consumption. The limit defines the maximum resources a queue can consume. Together, quota and limit give each queue a guaranteed minimum and a hard cap.

Special values exist for flexibility. Set quota: -1 for unlimited quota. Set limit: -1 for no limit. Set quota: 0 or leave it unset for no guaranteed resources. Set limit: 0 or leave it unset to allow no additional resources.

Here's a queue with resource quotas and limits:

```yaml
# queues/README.md
apiVersion: scheduling.run.ai/v2
kind: Queue
metadata:
  name: research-team
spec:
  displayName: "Research Team"
  resources:
    cpu:
      quota: 1000
      limit: 2000
    gpu:
      quota: 1
      limit: 2
```

This queue guarantees one CPU core (1000 millicores) and one GPU, with a hard cap of two CPU cores and two GPUs.

Resource Units and Special Values

Resource units follow Kubernetes conventions. CPU uses millicores where 1000 equals one core. Memory uses megabytes. GPU uses integer units where 1 equals a full GPU device.

The special values provide flexibility for different use cases. -1 means unlimited or no constraint. 0 or unset means no guarantee or no additional allowance.
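The helper below turns raw queue values into readable text using the units and special values just described. The function name `describe` and its output wording are made up for this example; only the conversion rules come from the text above.

```python
def describe(resource, value):
    """Render a raw queue value (quota or limit) in human-readable units."""
    if value == -1:
        return "unlimited"
    if not value:  # 0 or unset (None)
        return "none"
    if resource == "cpu":
        return f"{value / 1000:g} core(s)"  # millicores -> cores
    if resource == "memory":
        return f"{value} MB"
    if resource == "gpu":
        return f"{value:g} GPU(s)"
    raise ValueError(f"unknown resource: {resource}")


print(describe("cpu", 1000))     # 1 core(s)
print(describe("cpu", 500))      # 0.5 core(s)
print(describe("gpu", -1))       # unlimited
print(describe("memory", None))  # none
```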

Here's a queue with hierarchical structure:

```yaml
apiVersion: scheduling.run.ai/v2
kind: Queue
metadata:
  name: ml-team
spec:
  displayName: "ML Team"
  parentQueue: "research-team"
  priority: 200
  resources:
    cpu:
      quota: 500
      overQuotaWeight: 2
    gpu:
      quota: 1
      overQuotaWeight: 1
```

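This ML team queue is a child of the research-team queue and sets a priority of 200. Its CPU quota of 500 millicores is half of the parent's CPU quota, and its GPU quota of 1 equals the parent's entire GPU quota. The over-quota weights (2 for CPU, 1 for GPU) determine how much of any surplus it receives relative to its sibling queues.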

Assigning Pods to Queues

Pods join queues through labels. The kai.scheduler/queue label assigns a pod to a specific scheduling queue. The queue determines which resource pool the pod draws from and which fairness policies apply.

To use KAI Scheduler, you must explicitly select it in the pod specification. The schedulerName field tells Kubernetes which scheduler handles this pod. By default, Kubernetes uses kube-scheduler. Set schedulerName: kai-scheduler to use KAI Scheduler instead.

Here's a GPU pod demonstrating queue assignment and scheduler selection:

```yaml
# docs/quickstart/pods/gpu-pod.yaml
apiVersion: v1
kind: Pod
metadata:
  name: gpu-pod
  labels:
    kai.scheduler/queue: default-queue
spec:
  schedulerName: kai-scheduler
  containers:
    - name: main
      image: ubuntu
      command: ["bash", "-c"]
      args: ["nvidia-smi; trap 'exit 0' TERM; sleep infinity & wait"]
      resources:
        limits:
          nvidia.com/gpu: "1"
```

This pod requests one GPU using NVIDIA's custom resource name nvidia.com/gpu. The kai.scheduler/queue: default-queue label assigns it to the default queue, and schedulerName: kai-scheduler explicitly selects the custom scheduler.
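Forgetting either the label or the scheduler name is an easy mistake, so a small pre-submission check can help. The sketch below inspects a pod manifest that has already been loaded into a Python dictionary. The function name `kai_issues` is hypothetical; the label key and scheduler name are the ones used in the manifest above.

```python
def kai_issues(pod, scheduler="kai-scheduler", queue_label="kai.scheduler/queue"):
    """List the reasons a pod manifest would not be picked up as described above."""
    issues = []
    spec = pod.get("spec") or {}
    labels = (pod.get("metadata") or {}).get("labels") or {}
    if spec.get("schedulerName") != scheduler:
        issues.append(f"spec.schedulerName is not {scheduler!r}")
    if queue_label not in labels:
        issues.append(f"missing label {queue_label!r}")
    return issues


good = {
    "metadata": {"name": "gpu-pod", "labels": {"kai.scheduler/queue": "default-queue"}},
    "spec": {"schedulerName": "kai-scheduler"},
}
bad = {"metadata": {"name": "plain-pod"}, "spec": {}}

print(kai_issues(good))  # []
print(kai_issues(bad))
```

An empty list means both settings are present; it says nothing about whether the named queue actually exists in the cluster.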

You can verify which scheduler is handling a pod with kubectl:

```
$ kubectl get pod gpu-pod -o custom-columns="NAME:.metadata.name,SCHEDULER:.spec.schedulerName"
NAME      SCHEDULER
gpu-pod   kai-scheduler
```

The scheduler decides which nodes to place pods on. KAI Scheduler uses the GPU-Operator to discover available GPU resources. Without GPU-Operator installed, KAI Scheduler can't see GPU capacity.

Section 3: Production Patterns

Multi-Tenant GPU Clusters with Hierarchical Queues

Multi-tenant GPU clusters require careful queue hierarchy design. Each research team gets a leaf queue with its own quotas. Parent queues organize teams into departments or projects.

Hierarchical queues enable flexible resource distribution. A parent queue can guarantee resources to all children, then distribute surplus based on over-quota weights.

Consider this structure for an ML organization:

```
ML Department (parent queue)
├── Research Team (parent queue, quota: 4 GPUs)
│   ├── Project Alpha (leaf queue)
│   └── Project Beta (leaf queue)
└── Engineering Team (leaf queue, quota: 2 GPUs)
```

The ML Department parent queue guarantees 6 GPUs total. Research Team gets 4 GPUs, Engineering Team gets 2 GPUs. If the cluster has 10 GPUs available, the remaining 4 GPUs distribute based on over-quota weights.
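The arithmetic can be worked through in a few lines. The over-quota weights here (3 for Research, 1 for Engineering) are assumed values chosen for the example, since the structure above does not specify them. The sketch also ignores limits and actual demand, both of which affect the real fair-share calculation.

```python
def fair_share(total, quotas, weights):
    """Guaranteed quota first, then surplus split in proportion to over-quota weight."""
    surplus = total - sum(quotas.values())
    weight_sum = sum(weights.values())
    return {q: quotas[q] + surplus * weights[q] / weight_sum for q in quotas}


shares = fair_share(
    total=10,
    quotas={"research": 4, "engineering": 2},
    weights={"research": 3, "engineering": 1},  # assumed weights
)
print(shares)  # {'research': 7.0, 'engineering': 3.0}
```

With these weights, Research receives three of the four surplus GPUs and Engineering receives one.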

Time-based fairshare adds another dimension. Instead of looking only at current allocations, the scheduler factors each queue's historical resource usage into its fair share. A team that has run well over its quota for days gets a smaller slice of surplus GPUs than a team that has been mostly idle, so bursts even out over time.

Topology-Aware Placement

Topology-aware scheduling optimizes placement based on hardware layout. GPUs on the same node typically communicate over faster links than GPUs on different nodes. Distributed training jobs benefit from contiguous GPUs on a single node. Inference workloads might spread across multiple GPUs for fault tolerance.

KAI Scheduler uses topology information from the GPU-Operator to make informed placement decisions. It understands which GPUs are adjacent, which are connected through NVLink, and which share memory domains.

For disaggregated serving architectures, where the prefill and decode phases of inference run on separate workers, topology awareness becomes critical. KAI Scheduler v0.10.0 added Topology-Aware Scheduling (TAS) support, optimizing placement for modern disaggregated serving patterns.

Comparison with Kueue

Kueue is the community standard for batch workload scheduling on Kubernetes. It's a Kubernetes SIG project (kubernetes-sigs/kueue) that provides queue-based resource management similar to KAI Scheduler.

The key difference: KAI Scheduler understands GPU topology and supports fractional allocation. Kueue treats GPUs as indivisible resources. For CPU and memory workloads, Kueue is often the better choice because it has broader community adoption and is part of the Kubernetes SIG ecosystem.

For GPU-specific needs—fractional allocation, bin packing, topology awareness—KAI Scheduler is the right tool. For general batch scheduling, Kueue provides a solid foundation.

Some teams use both. Kueue handles CPU-intensive batch jobs. KAI Scheduler manages GPU workloads. They can coexist on the same cluster because KAI Scheduler only acts on pods that explicitly select it through schedulerName.

Section 4: Safety First — Testing Custom Schedulers

Testing custom schedulers in production is dangerous. A scheduler bug can leave all pods pending. CRD conflicts can corrupt namespaces. Version mismatches cause random failures. Resource leaks exhaust GPU capacity.

Using vCluster for Isolated Testing

vCluster creates a fully functional Kubernetes cluster inside a namespace of your existing cluster. It's not a new EKS or GKE cluster—it's a virtual cluster running inside your current infrastructure.

The architecture consists of an API server that handles Kubernetes API calls independently, a syncer that synchronizes resources between virtual and host clusters, and SQLite or etcd for complete state isolation.

The syncer translates virtual resources to host resources. This means GPU workloads scheduled by KAI inside the vCluster actually run on real GPU nodes in your host cluster, but all scheduling decisions stay isolated.

Here's how to safely test KAI Scheduler without risking production:

- Create a vCluster with virtual scheduler enabled

- Install KAI Scheduler inside the vCluster

- Deploy test workloads with fractional GPU requests

- Observe behavior in complete isolation

- If something fails, delete the vCluster in 40 seconds

Parallel scheduler deployments: Each team can operate their own virtual cluster with their own KAI scheduler version. The ML team tests KAI v0.9.3 while the Research team requires stable v0.7.11. Both run simultaneously without interference.

The practical benefit: blast radius stays in a single namespace. If the scheduler misbehaves, you delete the vCluster and the host cluster is unaffected. No manual pod cleanup, no CRD conflicts to untangle.

The 40-Second Rollback Guarantee

Deleting a vCluster removes all its resources from the host cluster. The syncer cleans up virtual objects, and the host cluster returns to its previous state. This is the 40-second rollback guarantee.

In production, rolling back a scheduler change can take hours. You need to identify which pods failed, understand why, revert configuration changes, and restart affected workloads. With vCluster, you simply delete the namespace where the virtual cluster lives.

Gotchas

Queue Hierarchy Pitfalls

Only leaf queues can schedule jobs. If you submit a pod to a parent queue (one that has child queues), the scheduler will not schedule it. Always assign pods to leaf queues in the hierarchy.

Parent queues exist solely for organizational purposes and resource distribution. They calculate fair-share values for their children but never schedule pods directly.
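A quick way to avoid this pitfall is to compute the set of leaf queues from the hierarchy before choosing a queue label. The sketch below assumes the hierarchy is available as a simple child-to-parent mapping; the queue names mirror the example organization from Section 3, and the function name `leaf_queues` is hypothetical.

```python
def leaf_queues(parent_of):
    """parent_of maps queue name -> parent name (None for a top-level queue)."""
    parents = {p for p in parent_of.values() if p is not None}
    return {q for q in parent_of if q not in parents}


hierarchy = {
    "ml-department": None,
    "research-team": "ml-department",
    "project-alpha": "research-team",
    "project-beta": "research-team",
    "engineering-team": "ml-department",
}
leaves = leaf_queues(hierarchy)
print(sorted(leaves))  # ['engineering-team', 'project-alpha', 'project-beta']
```

Any pod labeled with `research-team` or `ml-department` in this hierarchy would target a parent queue and should be pointed at one of the leaves instead.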

GPU Resource Naming Conventions

NVIDIA uses nvidia.com/gpu as the custom resource name. Some vendors use different conventions. AMD might use amd.com/gpu. Make sure your queue configuration matches the actual resource names your nodes advertise.

The GPU-Operator discovers and advertises GPU resources. Without it, KAI Scheduler can't see GPU capacity. Install GPU-Operator before deploying KAI Scheduler.

Namespace Isolation Requirements

When submitting workloads, use a dedicated namespace. Do not use the kai-scheduler namespace for workload submission. The KAI Scheduler components run in that namespace. Submitting workloads there causes conflicts.

The kai-scheduler namespace holds the scheduler's own components, such as its controllers and admission webhooks. It's not for workloads. Create a separate namespace like gpu-workloads or ml-jobs for your applications.

The kai-scheduler Namespace Restriction

KAI Scheduler enforces namespace restrictions on custom resources. Queue resources must live in the kai-scheduler namespace. Trying to create queues elsewhere fails with an error.

This restriction prevents queue configuration conflicts. Queues are global scheduling primitives, so they live in the scheduler's namespace. Workloads reference them through labels, not through namespace placement.

Wrap-up

KAI Scheduler fills a real gap in the Kubernetes scheduling ecosystem. The default kube-scheduler treats GPUs as indivisible integers. KAI Scheduler treats them as shared, topology-aware resources — and now that it's Apache 2.0 licensed, any team can deploy it without a commercial dependency.

The concepts that matter most: queues as scheduling primitives with quota/limit semantics, the schedulerName field for explicit scheduler selection, and hierarchical queue design for multi-tenant GPU clusters. If you're running GPU workloads at scale, evaluate KAI Scheduler alongside Kueue — they solve different problems and coexist cleanly.

Test custom schedulers in isolated environments before promoting to production. vCluster gives you that isolation at the namespace level, with a fast teardown path when things go wrong.
