Kueue: The Community Standard for Kubernetes AI Batch Scheduling

Three teams share 64 A100 GPUs. Team A submits a fine-tuning job at 2 AM and claims all 64. Team B's training run, scheduled for the morning, sits in Pending for six hours. Team C's inference batch never starts. The Kubernetes scheduler placed every Pod correctly — the problem isn't scheduling. It's that nobody is managing the queue.

Native Kubernetes has no job-level queueing. ResourceQuota caps what a namespace may create, but it rejects excess Pods instead of queueing them, and there is no borrowing between teams and no quota-aware preemption hierarchy. The scheduler sees Pods, not workloads. It doesn't know that Team A already holds all 64 GPUs and Team B has none.

Kueue fills this gap. It's a Kubernetes-native job queueing system that manages resource quotas, enforces fair sharing across teams through cohorts, preempts lower-priority workloads when capacity is exhausted, and dispatches jobs to remote clusters through MultiKueue. You submit a Job or JobSet with a queue label, and Kueue decides when and where to admit it.

ClusterQueues and Resource Flavors

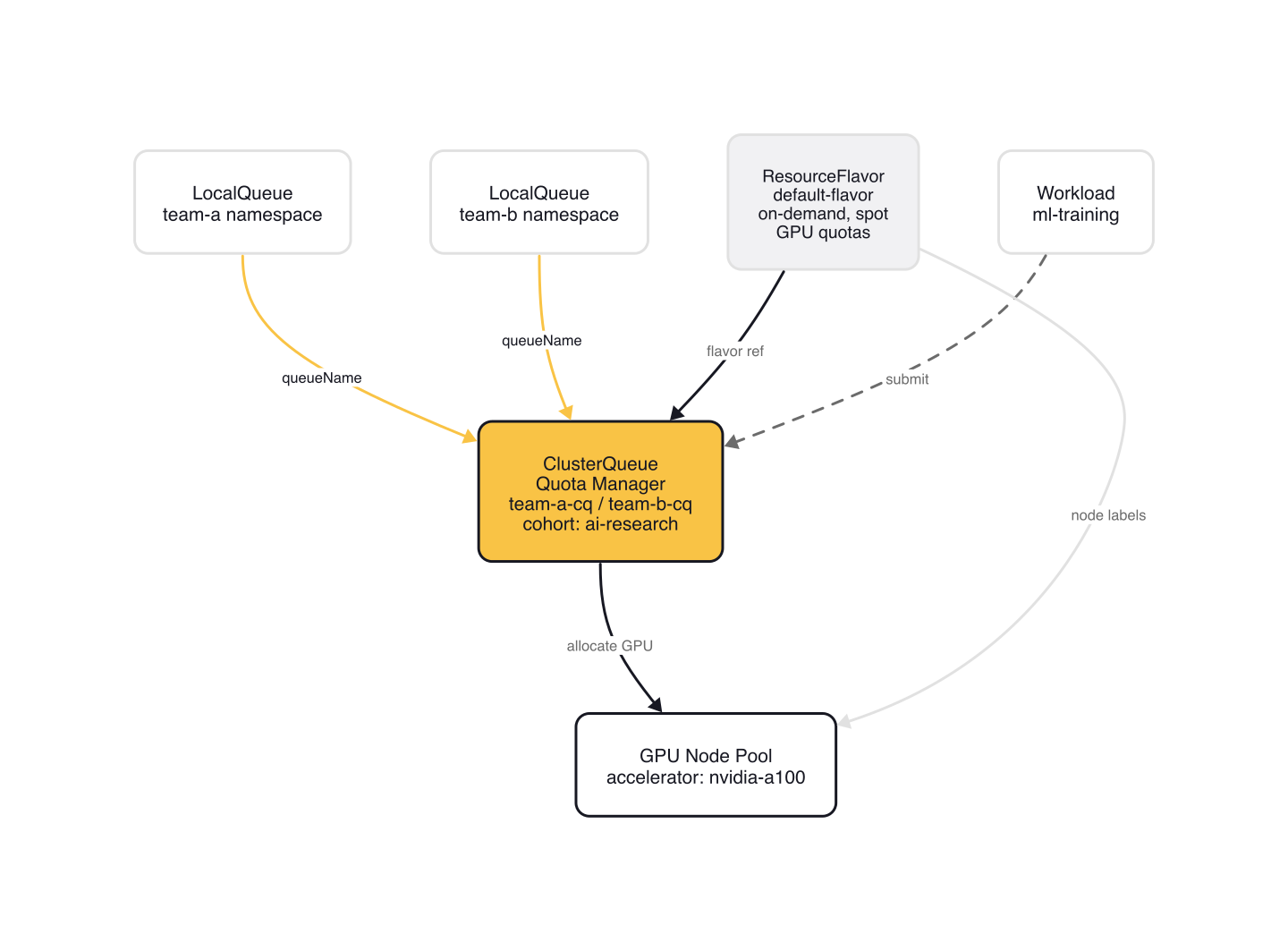

Kueue's resource model has three layers: ResourceFlavors define hardware types, ClusterQueues set quotas per flavor, and LocalQueues give namespaces access to a ClusterQueue.

A minimal setup from the Kueue examples repository defines all three:

    # single-clusterqueue-setup.yaml
    apiVersion: kueue.x-k8s.io/v1beta2
    kind: ResourceFlavor
    metadata:
      name: "default-flavor"
    ---
    apiVersion: kueue.x-k8s.io/v1beta2
    kind: ClusterQueue
    metadata:
      name: "cluster-queue"
    spec:
      namespaceSelector: {} # match all.
      resourceGroups:
      - coveredResources: ["cpu", "memory"]
        flavors:
        - name: "default-flavor"
          resources:
          - name: "cpu"
            nominalQuota: 9
          - name: "memory"
            nominalQuota: 36Gi
    ---
    apiVersion: kueue.x-k8s.io/v1beta2
    kind: LocalQueue
    metadata:
      namespace: "default"
      name: "user-queue"
    spec:
      clusterQueue: "cluster-queue"

In GPU clusters, you'd create separate flavors per accelerator (e.g., nvidia-a100, nvidia-t4) with nodeLabels that map to your node pools. The ClusterQueue sets a hard quota of 9 CPUs and 36Gi memory. The LocalQueue in the default namespace gives workloads a way to target the ClusterQueue.
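As a rough sketch of the per-accelerator flavors described above, the manifests below define one ResourceFlavor per GPU type. The `accelerator` label key and its values are assumptions for illustration; substitute whatever labels your node pools actually carry.

```yaml
# gpu-flavors.yaml: illustrative sketch, not taken from the Kueue examples
apiVersion: kueue.x-k8s.io/v1beta2
kind: ResourceFlavor
metadata:
  name: "nvidia-a100"
spec:
  nodeLabels:
    accelerator: nvidia-a100   # assumed node label; must match your nodes
---
apiVersion: kueue.x-k8s.io/v1beta2
kind: ResourceFlavor
metadata:
  name: "nvidia-t4"
spec:
  nodeLabels:
    accelerator: nvidia-t4     # assumed node label; must match your nodes
```

A ClusterQueue would then list both flavors under a resource group that covers nvidia.com/gpu, with a separate nominalQuota per flavor.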

ClusterQueues can also enforce credits-based quotas. The shared-quota-setup example uses a virtual cpu_credits resource, placed in its own resource group, to cap total consumption across multiple flavors:

    # shared-quota-setup.yaml: ClusterQueue with credits-based quota
    apiVersion: kueue.x-k8s.io/v1beta2
    kind: ClusterQueue
    metadata:
      name: "team-cluster-queue"
    spec:
      namespaceSelector: {}
      resourceGroups:
      - coveredResources: ["cpu"]
        flavors:
        - name: "on-demand"
          resources:
          - name: "cpu"
            nominalQuota: 9
        - name: "spot"
          resources:
          - name: "cpu"
            nominalQuota: 9
      - coveredResources: ["cpu_credits"]
        flavors:
        - name: "credits"
          resources:
          - name: cpu_credits
            nominalQuota: 14

Credits-based quota requires a resource transformation in the Kueue Configuration that maps real CPU requests to the virtual cpu_credits resource. Each CPU requested generates one credit, and the combined quota of 14 credits limits total CPU usage across both on-demand and spot flavors, regardless of which flavor is consumed.
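The transformation mentioned above might look roughly like the following Configuration fragment. This is a sketch that assumes the `resources.transformations` schema (`input`, `strategy`, `outputs`) of the Kueue Configuration API; verify the field names against the Kueue version you run before relying on it.

```yaml
# kueue-config-credits.yaml: illustrative sketch, field names assumed
apiVersion: config.kueue.x-k8s.io/v1beta2
kind: Configuration
resources:
  transformations:
  - input: cpu            # the real resource requested by Pods
    strategy: Retain      # keep the cpu request and add the outputs below
    outputs:
      cpu_credits: 1      # one credit per CPU requested
```

With Retain, a workload requesting 4 CPUs would count as 4 cpu against the on-demand or spot flavor and 4 cpu_credits against the credits flavor, which is what lets the 14-credit quota cap the combined total.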

Fair Sharing with Cohorts

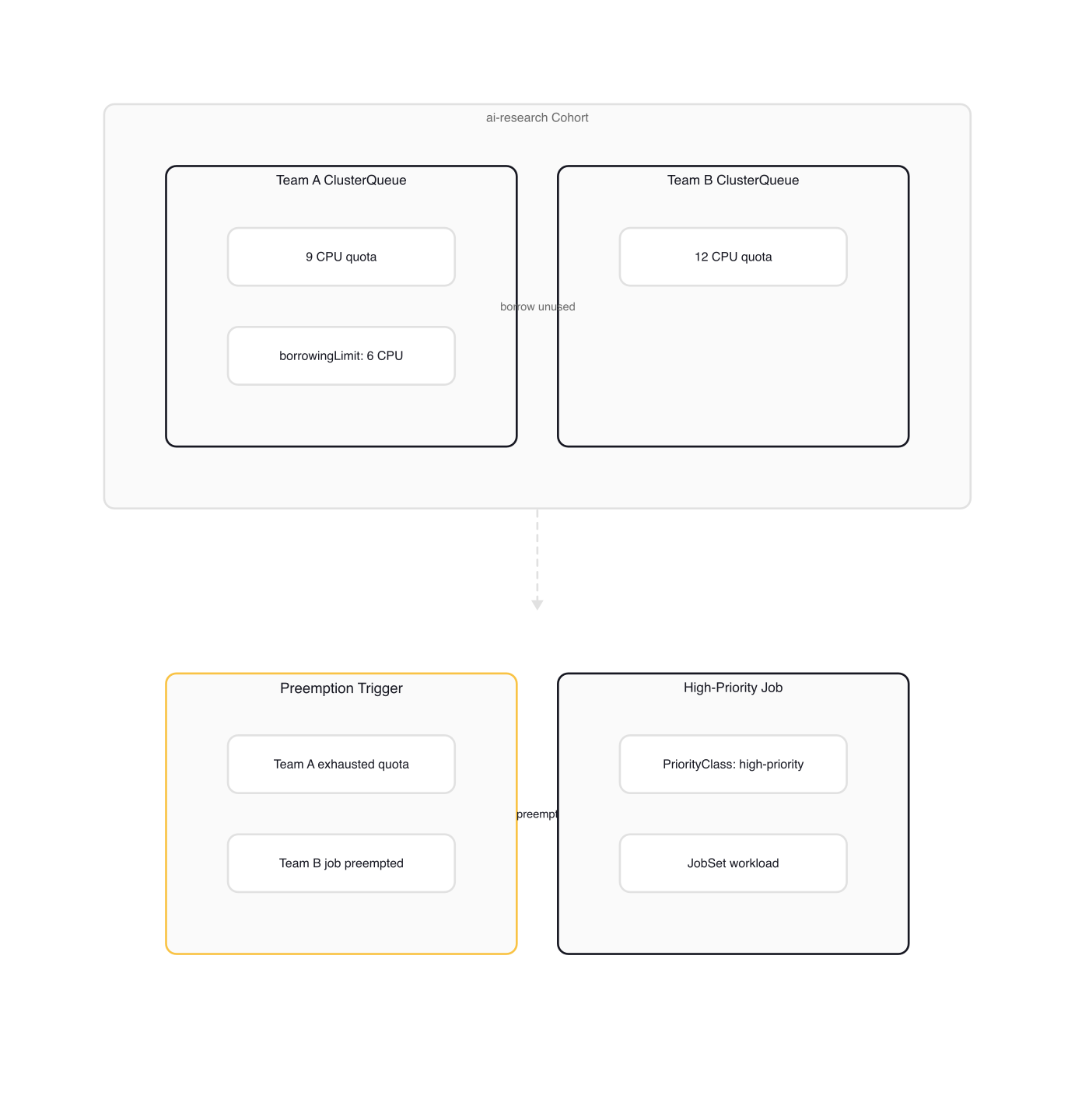

In multi-tenant environments, you need more than simple quotas. Team A might have unused GPU capacity while Team B is starved. A cohort groups ClusterQueues that can borrow unused quota from each other.

The following example from the Kueue ClusterQueue documentation shows two teams sharing resources:

    # team-a-cq.yaml: from Kueue docs borrowing example
    apiVersion: kueue.x-k8s.io/v1beta2
    kind: ClusterQueue
    metadata:
      name: "team-a-cq"
    spec:
      namespaceSelector: {} # match all.
      cohortName: "team-ab"
      resourceGroups:
      - coveredResources: ["cpu", "memory"]
        flavors:
        - name: "default-flavor"
          resources:
          - name: "cpu"
            nominalQuota: 9
          - name: "memory"
            nominalQuota: 36Gi

    # team-b-cq.yaml: from Kueue docs borrowing example
    apiVersion: kueue.x-k8s.io/v1beta2
    kind: ClusterQueue
    metadata:
      name: "team-b-cq"
    spec:
      namespaceSelector: {} # match all.
      cohortName: "team-ab"
      resourceGroups:
      - coveredResources: ["cpu", "memory"]
        flavors:
        - name: "default-flavor"
          resources:
          - name: "cpu"
            nominalQuota: 12
          - name: "memory"
            nominalQuota: 48Gi

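The cohortName: "team-ab" field links both ClusterQueues. When Team B has no admitted workloads, Team A can use up to 9 + 12 = 21 CPUs total. Once Team B submits workloads, its nominal quota takes precedence over further borrowing by Team A. Taking back quota that Team A is already using requires a preemption policy such as reclaimWithinCohort, covered under Preemption Policies.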

Each team gets a LocalQueue pointing to their ClusterQueue:

    # team-a-local-queue.yaml
    apiVersion: kueue.x-k8s.io/v1beta2
    kind: LocalQueue
    metadata:
      namespace: "team-a"
      name: "team-a-queue"
    spec:
      clusterQueue: "team-a-cq"

To cap how much a team can borrow, add borrowingLimit to the flavor's resources:

    # team-a-cq-with-borrowing.yaml: from Kueue docs borrowingLimit example
    apiVersion: kueue.x-k8s.io/v1beta2
    kind: ClusterQueue
    metadata:
      name: "team-a-cq"
    spec:
      namespaceSelector: {} # match all.
      cohortName: "team-ab"
      resourceGroups:
      - coveredResources: ["cpu", "memory"]
        flavors:
        - name: "default-flavor"
          resources:
          - name: "cpu"
            nominalQuota: 9
            borrowingLimit: 1
          # Every covered resource needs a quota entry, so memory is listed too.
          - name: "memory"
            nominalQuota: 36Gi

With borrowingLimit: 1, Team A can use at most 9 + 1 = 10 CPUs — even if Team B has 12 unused CPUs. The limit is a ceiling, not a guarantee. If Team B has already used 11 of their 12 CPUs, only 1 is available to borrow.

The inverse of borrowingLimit is lendingLimit. If Team B sets lendingLimit: 1 on their 12-CPU quota, only 1 CPU is available for borrowing regardless of how much Team B has unused. This protects reserved capacity for burst workloads.
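A minimal sketch of the lending side, assuming Team B's queue covers only cpu for brevity. The file name is hypothetical; the values follow the 12-CPU scenario described above.

```yaml
# team-b-cq-with-lending.yaml: illustrative sketch
apiVersion: kueue.x-k8s.io/v1beta2
kind: ClusterQueue
metadata:
  name: "team-b-cq"
spec:
  namespaceSelector: {} # match all.
  cohortName: "team-ab"
  resourceGroups:
  - coveredResources: ["cpu"]
    flavors:
    - name: "default-flavor"
      resources:
      - name: "cpu"
        nominalQuota: 12
        lendingLimit: 1   # at most 1 of the 12 CPUs may be lent to the cohort
```

Under this setting the remaining 11 CPUs stay reserved for Team B even while idle, so Team A's effective ceiling becomes 9 + 1 = 10 CPUs regardless of its own borrowingLimit.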

Preemption Policies

When quota is exhausted, Kueue can evict lower-priority workloads to admit higher-priority ones. There are two preemption algorithms: Classic and Fair Sharing.

Classic preemption is the default — it preempts lower-priority workloads only when the incoming workload fits within the ClusterQueue's nominal quota. Fair Sharing distributes borrowed resources based on weighted share values, enabling more aggressive rebalancing across the cohort.

To enable Fair Sharing, configure a Kueue Configuration object:

    # kueue-config.yaml: from Kueue preemption docs
    apiVersion: config.kueue.x-k8s.io/v1beta2
    kind: Configuration
    fairSharing:
      preemptionStrategies: [LessThanOrEqualToFinalShare, LessThanInitialShare]

Without this configuration, preemption uses the Classic algorithm only. LessThanOrEqualToFinalShare preempts when the preempting queue's share would be less than or equal to the target queue's share after eviction. LessThanInitialShare is more conservative — it only preempts when the preempting queue's share stays strictly below the target's current share.
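The weighted shares are set per ClusterQueue. The fragment below is a sketch that assumes the `spec.fairSharing.weight` field of the ClusterQueue API; the weight of 2 is an arbitrary illustrative value, and the resourceGroups are omitted for brevity.

```yaml
# team-a-cq-fair-sharing.yaml: illustrative fragment, resourceGroups omitted
apiVersion: kueue.x-k8s.io/v1beta2
kind: ClusterQueue
metadata:
  name: "team-a-cq"
spec:
  cohortName: "team-ab"
  fairSharing:
    weight: 2   # assumed value; a higher weight entitles the queue to a larger share of borrowed capacity
```

Queues that leave the field unset are expected to compete with the default weight, so setting it only on selected queues is enough to tilt the balance.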

To enable preemption on a ClusterQueue, add the preemption field. The following example from the Kueue ClusterQueue documentation shows all available policies:

    # team-a-cq-with-preemption.yaml: from Kueue ClusterQueue docs
    apiVersion: kueue.x-k8s.io/v1beta2
    kind: ClusterQueue
    metadata:
      name: "team-a-cq"
    spec:
      preemption:
        reclaimWithinCohort: Any
        borrowWithinCohort:
          policy: LowerPriority
          maxPriorityThreshold: 100
        withinClusterQueue: LowerPriority

withinClusterQueue: LowerPriority lets Kueue evict lower-priority jobs within the same ClusterQueue. reclaimWithinCohort: Any permits reclaiming borrowed resources from other ClusterQueues in the cohort regardless of priority. borrowWithinCohort allows preemption even when the incoming workload needs to borrow, but only against lower-priority workloads whose priority is at or below maxPriorityThreshold.

Workloads participate in preemption through PriorityClasses:

    # high-priority.yaml
    apiVersion: scheduling.k8s.io/v1
    kind: PriorityClass
    metadata:
      name: high-priority
    value: 1000
    globalDefault: false
    description: "High-priority AI training workloads"

A JobSet with Kueue admission control uses the kueue.x-k8s.io/queue-name label and the priorityClassName in its pod template:

    # sample-jobset.yaml: from Kueue JobSet docs
    apiVersion: jobset.x-k8s.io/v1alpha2
    kind: JobSet
    metadata:
      generateName: sleep-job-
      labels:
        kueue.x-k8s.io/queue-name: user-queue
    spec:
      replicatedJobs:
      - name: workers
        replicas: 1
        template:
          spec:
            parallelism: 1
            completions: 1
            backoffLimit: 0
            template:
              spec:
                priorityClassName: high-priority
                containers:
                - name: sleep
                  image: busybox
                  resources:
                    requests:
                      cpu: 1
                      memory: "200Mi"
                  command: ["sleep"]
                  args: ["100s"]

The kueue.x-k8s.io/queue-name label targets the LocalQueue. Kueue creates a Workload object internally and manages admission. In a GPU training scenario, you'd replace the container image, add nvidia.com/gpu to resource requests, and increase replicas — the Kueue admission flow is identical.
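For comparison, here is a hedged sketch of a GPU workload using a plain batch/v1 Job rather than a JobSet, to keep it short. The image name, parallelism, and resource sizes are placeholders, and it assumes the target ClusterQueue lists nvidia.com/gpu in its coveredResources; without that quota entry the workload would not be admitted.

```yaml
# gpu-training-job.yaml: hypothetical sketch, not from the Kueue docs
apiVersion: batch/v1
kind: Job
metadata:
  generateName: gpu-train-
  namespace: default
  labels:
    kueue.x-k8s.io/queue-name: user-queue   # targets the LocalQueue
spec:
  suspend: true        # stays suspended until Kueue admits the workload
  parallelism: 4
  completions: 4
  template:
    spec:
      priorityClassName: high-priority
      restartPolicy: Never
      containers:
      - name: trainer
        image: registry.example.com/trainer:latest   # placeholder image
        resources:
          requests:
            cpu: 4
            memory: "16Gi"
            nvidia.com/gpu: 1
          limits:
            nvidia.com/gpu: 1   # extended resources need limits equal to requests
```

Because the manifest uses generateName, submit it with kubectl create -f rather than kubectl apply.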

When a high-priority job arrives and quota is exhausted, Kueue evicts lower-priority workloads first. Preempted workloads have an Evicted condition in their status:

    # Based on Kueue preemption docs: workload status after preemption
    status:
      conditions:
      - lastTransitionTime: "2025-03-07T21:19:54Z"
        message: 'Preempted to accommodate a workload (UID: 5c023c28-8533-4927-b266-56bca5e310c1,
          JobUID: 4548c8bd-c399-4027-bb02-6114f3a8cdeb) due to prioritization in the ClusterQueue'
        observedGeneration: 1
        reason: Preempted
        status: "True"
        type: Evicted

The message identifies which workload triggered the preemption. You can find the preempting workload with kubectl get workloads.kueue.x-k8s.io --selector=kueue.x-k8s.io/job-uid=<JobUID> --all-namespaces.

MultiKueue for Cross-Cluster Dispatching

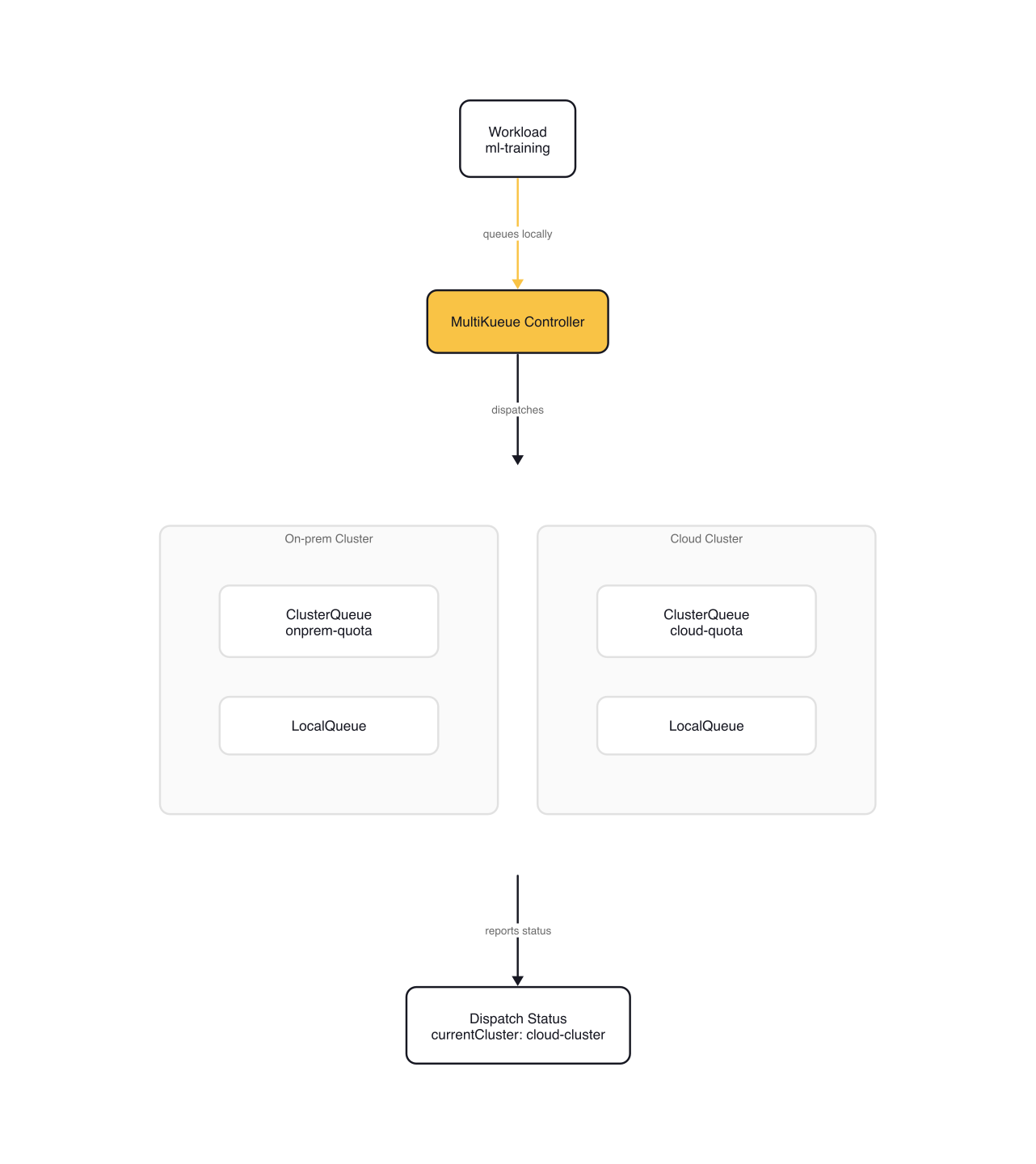

MultiKueue extends Kueue to dispatch jobs across multiple clusters. A manager cluster handles admission and scheduling decisions, while worker clusters run the actual workloads. Instead of submitting a job to a specific cluster, you submit it once to the manager and Kueue picks the worker with available capacity.

This matters for organizations with an on-prem GPU cluster (limited capacity) and a cloud cluster (elastic but expensive). MultiKueue dispatches to whichever cluster can admit the workload first.

The setup requires three CRDs on the manager cluster: AdmissionCheck, MultiKueueConfig, and MultiKueueCluster. The following example is from the MultiKueue setup tutorial:

    # multikueue-admission-check.yaml: adapted from Kueue setup_multikueue docs
    apiVersion: kueue.x-k8s.io/v1beta2
    kind: AdmissionCheck
    metadata:
      name: multikueue-check
    spec:
      controllerName: kueue.x-k8s.io/multikueue
      parameters:
        apiGroup: kueue.x-k8s.io
        kind: MultiKueueConfig
        name: multikueue-config

    # multikueue-config.yaml: adapted from Kueue setup_multikueue docs
    apiVersion: kueue.x-k8s.io/v1beta2
    kind: MultiKueueConfig
    metadata:
      name: multikueue-config
    spec:
      clusters:
      - onprem-worker
      - cloud-worker

    # multikueue-clusters.yaml: adapted from Kueue setup_multikueue docs
    apiVersion: kueue.x-k8s.io/v1beta2
    kind: MultiKueueCluster
    metadata:
      name: onprem-worker
    spec:
      clusterSource:
        kubeConfig:
          locationType: Secret
          location: onprem-worker-secret
    ---
    apiVersion: kueue.x-k8s.io/v1beta2
    kind: MultiKueueCluster
    metadata:
      name: cloud-worker
    spec:
      clusterSource:
        kubeConfig:
          locationType: Secret
          location: cloud-worker-secret

Each MultiKueueCluster points to a Kubernetes Secret containing a kubeconfig for the worker cluster. The MultiKueueConfig groups workers together.
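The referenced Secret could be sketched as follows. This assumes Kueue runs in the kueue-system namespace and reads the kubeconfig from a key named kubeconfig, as in the MultiKueue setup tutorial; the kubeconfig body itself is left as a placeholder.

```yaml
# onprem-worker-secret.yaml: illustrative sketch, kubeconfig content elided
apiVersion: v1
kind: Secret
metadata:
  name: onprem-worker-secret
  namespace: kueue-system     # assumed: the namespace where Kueue is installed
type: Opaque
stringData:
  kubeconfig: |
    # Paste the worker cluster's kubeconfig here. It should use credentials
    # scoped to what MultiKueue needs, not a cluster-admin identity.
```

The cloud-worker-secret would follow the same shape with the cloud cluster's kubeconfig.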

The ClusterQueue on the manager references the AdmissionCheck through admissionChecksStrategy:

    # manager-cluster-queue.yaml: adapted from Kueue setup_multikueue docs
    apiVersion: kueue.x-k8s.io/v1beta2
    kind: ClusterQueue
    metadata:
      name: "cluster-queue"
    spec:
      namespaceSelector: {}
      resourceGroups:
      - coveredResources: ["cpu", "memory"]
        flavors:
        - name: "default-flavor"
          resources:
          - name: "cpu"
            nominalQuota: 9
          - name: "memory"
            nominalQuota: 36Gi
      admissionChecksStrategy:
        admissionChecks:
        - name: multikueue-check

Jobs submitted to this ClusterQueue are regular Kubernetes Jobs or JobSets — no special annotations needed. Kueue handles the cross-cluster dispatch automatically. The dispatching flow works as follows: when a workload gets a quota reservation on the manager, Kueue creates remote Workload objects on the worker clusters. When a worker admits the workload, Kueue deletes it from the other workers, creates the actual Job on the selected worker, and syncs status back to the manager.

You can verify the setup is active:

    $ kubectl get clusterqueues cluster-queue \
        -o jsonpath="{.status.conditions[?(@.type=='Active')].message}"
    Can admit new workloads

    $ kubectl get multikueuecluster onprem-worker \
        -o jsonpath="{.status.conditions[?(@.type=='Active')].message}"
    Connected

Gotchas and Lessons Learned

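ResourceFlavors must match node labels. If you define a flavor with nodeLabels of accelerator: nvidia-a100 but your nodes don't carry that label, Kueue can still admit workloads against the quota, but their Pods receive a node selector that no node satisfies and stay Pending. Label your GPU nodes consistently: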

    $ kubectl label nodes gpu-node-01 accelerator=nvidia-a100
    $ kubectl label nodes gpu-node-02 accelerator=nvidia-t4

Borrowing limits apply to unused quota. The borrowingLimit is a ceiling, not a guarantee. If Team B has already consumed 11 of their 12 CPUs, Team A can only borrow 1 CPU — even if their borrowingLimit allows more.

Cross-queue preemption only works within a cohort. ClusterQueues that aren't in the same cohort can't preempt each other's workloads; a queue on its own is limited to withinClusterQueue preemption. Verify cohort membership with kubectl get clusterqueue <name> -o jsonpath='{.spec.cohortName}' before expecting reclaim or borrowing behavior.

MultiKueue requires matching namespaces and LocalQueues. When Kueue dispatches a workload from the manager to a worker cluster, it expects the job's namespace and LocalQueue to exist on the worker. Mirror the namespace and queue configuration across all clusters in the MultiKueue setup.

MultiKueue requires network connectivity between clusters. If the manager cluster can't reach a worker cluster's API server, dispatch fails. Verify DNS resolution, TLS certificates, and the kubeconfig Secret before relying on cross-cluster dispatch.

Wrap-up

Start with a single ClusterQueue and ResourceFlavor scoped to your GPU nodes. Add cohorts and borrowing limits when a second team arrives. Introduce preemption when SLA-critical jobs need guaranteed admission. Consider MultiKueue when your GPU demand exceeds single-cluster capacity. Each layer builds on the previous one — you don't need the full stack on day one.

The Kueue project moves fast. Keep an eye on lendingLimit (beta since v0.9) for finer-grained capacity protection, Topology-Aware Scheduling for placing a workload's Pods close together in the data center network topology, and the ClusterProfile API (alpha since v0.15) for federated credential discovery in MultiKueue setups.
