HAMi: GPU Virtualization as the Reference Pattern for AI Infrastructure

Introduction

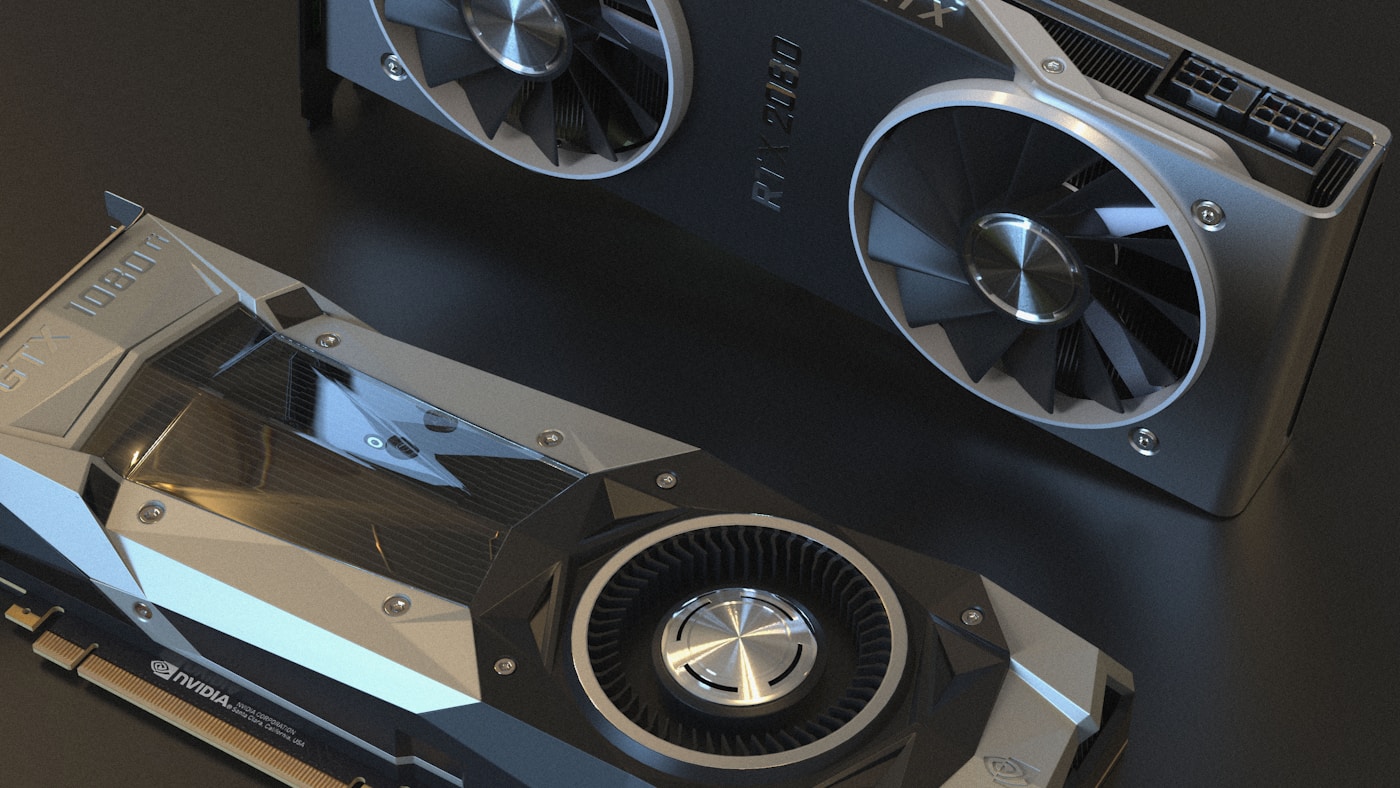

GPUs are the new CPU — the fundamental compute unit for AI workloads. But most Kubernetes clusters still allocate them like whole servers: one pod, one GPU, one tenant. The result is predictable — single-digit utilization, stranded capacity, and inference workloads that can't coexist on the same accelerator.

At KubeCon Europe 2026 in Amsterdam, the signal was unmistakable: HAMi (Heterogeneous AI Computing Virtualization Middleware) moved from "another CNCF project" to a reference implementation for GPU resource management. Invited to present at the Maintainer Summit, discussed in CNCF TOC sessions, and explored for joint integration with vLLM, HAMi is becoming the default pattern for running AI workloads on Kubernetes.

This article covers HAMi's architecture, device sharing mechanics, production deployment patterns, and how it compares to NVIDIA's native approaches (MIG, time-slicing). By the end, you'll understand when HAMi is the right choice and how to deploy it for multi-tenant inference serving.

The GPU Resource Layer Problem

Whole-GPU allocation made sense when GPUs were used exclusively for training: long-running, single-tenant workloads that could saturate an entire A100 or H100. Inference is different. A vLLM replica serving Llama 3.1 8B might use 16GB of GPU memory and 40% of compute cores. On an 80GB card, that leaves 80% of the memory and 60% of the compute idle. Multiply that by dozens of models across your organization, and you're burning capital on stranded capacity.
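To make the stranded-capacity arithmetic concrete, here is a minimal sketch. It assumes the replica sits alone on an 80GB card; the `GpuUsage` and `stranded` names are illustrative, not part of any tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuUsage:
    """Resources one workload actually uses on a GPU it owns exclusively."""
    total_mem_gb: float
    used_mem_gb: float
    used_compute_pct: float  # 0-100


def stranded(usage: GpuUsage) -> dict[str, float]:
    """Return the idle share of memory and compute, as percentages."""
    idle_mem_pct = 100.0 * (usage.total_mem_gb - usage.used_mem_gb) / usage.total_mem_gb
    idle_compute_pct = 100.0 - usage.used_compute_pct
    return {"memory_idle_pct": idle_mem_pct, "compute_idle_pct": idle_compute_pct}


# The replica from the text, assumed to run alone on an 80GB card.
replica = GpuUsage(total_mem_gb=80, used_mem_gb=16, used_compute_pct=40)
print(stranded(replica))
```

Under those assumptions, four fifths of the memory and three fifths of the compute sit unused for every replica deployed this way.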

NVIDIA offers two native sharing mechanisms:

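MIG (Multi-Instance GPU): Hardware-level partitioning available on MIG-capable data center GPUs such as the A100 and H100. An A100 80GB can be split into up to 7 independent instances, each with dedicated memory, compute, and memory bandwidth. MIG provides strong isolation but requires specific GPU models, offers only a fixed set of partition profiles, and doesn't support resizing a partition while workloads run on it.

Time-slicing: Available on any NVIDIA GPU through the device plugin's sharing configuration, including GPUs without MIG support, and it can also be layered on MIG instances. Several pods take turns on the same device according to a configured replica count, but there is no memory or fault isolation between them, and the scheduler doesn't understand GPU topology or utilization.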

Both approaches have gaps:

- Heterogeneous support — Neither MIG nor time-slicing helps if you have a mix of NVIDIA, AMD, Huawei Ascend, or Cambricon accelerators

- Fine-grained memory allocation — MIG uses fixed partitions; you can't request "3GB of GPU memory" without manual partitioning

- Cross-vendor management — Each vendor has its own device plugin, scheduling semantics, and isolation guarantees

Jimmy Song's observation from KubeCon EU captures the shift: "GPUs are no longer just devices — they are now a schedulable, partitionable, and shareable resource layer." The infrastructure layer is moving from exclusive node resources to multi-tenant shared pools.

HAMi Architecture — Scheduler Extender + Device Plugins

HAMi (formerly k8s-vGPU-scheduler) is a heterogeneous device management middleware that sits between kube-scheduler and vendor-specific device plugins. The architecture has four components:

Mutating Webhook: Intercepts pod creation and injects GPU scheduling annotations based on resource requests (nvidia.com/gpu, nvidia.com/gpumem, nvidia.com/gpucores).

Scheduler Extender: Implements predicate and priority functions for GPU-aware scheduling. Understands device topology, binpack/spread policies, and sharing constraints.

Device Plugins: Per-vendor plugins (NVIDIA, Huawei Ascend, Cambricon MLU, Hygon DCU, Iluvatar, Moore Threads, MetaX) that register virtual devices with kubelet and manage in-container virtualization.

In-Container Virtualization: Intercepts CUDA/ROCm calls at runtime to enforce memory and compute limits without code changes.
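The predicate and priority steps of a scheduler extender can be sketched in a few lines. This is a conceptual model, not HAMi's actual scoring code: the `Device` type, the use of free memory as the only score, and the policy names as plain strings are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Device:
    """Free capacity on one physical GPU, as a scheduler might track it."""
    name: str
    free_mem_mb: int
    free_cores_pct: int


def filter_devices(devices: list[Device], mem_mb: int, cores_pct: int) -> list[Device]:
    """Predicate step: keep only devices that can satisfy the request."""
    return [d for d in devices if d.free_mem_mb >= mem_mb and d.free_cores_pct >= cores_pct]


def pick_device(devices: list[Device], mem_mb: int, cores_pct: int,
                policy: str = "binpack") -> Optional[Device]:
    """Priority step: choose among the devices that passed the filter.

    binpack prefers the device with the least free memory (fill GPUs up);
    spread prefers the device with the most free memory (even out load).
    """
    candidates = filter_devices(devices, mem_mb, cores_pct)
    if not candidates:
        return None
    if policy == "binpack":
        return min(candidates, key=lambda d: d.free_mem_mb)
    if policy == "spread":
        return max(candidates, key=lambda d: d.free_mem_mb)
    raise ValueError(f"unknown policy: {policy}")


gpus = [
    Device("gpu-0", free_mem_mb=12000, free_cores_pct=50),
    Device("gpu-1", free_mem_mb=60000, free_cores_pct=100),
    Device("gpu-2", free_mem_mb=2000, free_cores_pct=10),
]
print(pick_device(gpus, mem_mb=10000, cores_pct=25, policy="binpack"))
print(pick_device(gpus, mem_mb=10000, cores_pct=25, policy="spread"))
```

Binpack keeps whole GPUs free for large requests, while spread reduces contention between neighbors. Which one suits a cluster depends on whether fragmentation or interference is the bigger problem.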

Device Sharing Modes

HAMi supports three allocation strategies:

Memory-based — Request a specific amount of GPU memory:

    # Pod requesting 3GB of GPU memory on a shared GPU
    resources:
      limits:
        nvidia.com/gpu: 1        # Number of physical GPUs
        nvidia.com/gpumem: 3000  # Memory in MB

Inside the container, nvidia-smi reports 3000MB of total memory. The limit is a hard cap enforced by HAMi's in-container interception layer, not a hint to the application.

Core-based — Request a percentage of compute cores (streaming multiprocessors):

    resources:
      limits:
        nvidia.com/gpu: 1
        nvidia.com/gpucores: 50 # 50% of SMs

MIG-based — Dynamic MIG partitioning for A100/H100 GPUs. HAMi can create and manage MIG slices on-demand, though currently only "none" and "mixed" modes are supported.
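If you generate manifests programmatically, a small helper keeps the memory and core requests consistent. This is a sketch: `hami_limits` is a hypothetical name, and the validation ranges are assumptions rather than rules taken from HAMi.

```python
from typing import Optional


def hami_limits(gpus: int = 1, mem_mb: Optional[int] = None,
                cores_pct: Optional[int] = None) -> dict[str, int]:
    """Build a resources.limits mapping using the resource names from the text."""
    if gpus < 1:
        raise ValueError("gpus must be at least 1")
    limits = {"nvidia.com/gpu": gpus}
    if mem_mb is not None:
        if mem_mb <= 0:
            raise ValueError("mem_mb must be positive")
        limits["nvidia.com/gpumem"] = mem_mb
    if cores_pct is not None:
        # Assumed range: a percentage of the GPU's streaming multiprocessors.
        if not 1 <= cores_pct <= 100:
            raise ValueError("cores_pct must be between 1 and 100")
        limits["nvidia.com/gpucores"] = cores_pct
    return limits


print(hami_limits(mem_mb=3000))
print(hami_limits(cores_pct=50))
```

The resulting dictionary can be dropped into the `resources.limits` field of a container spec before serializing the manifest.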

Hard Isolation

Unlike time-slicing (which places no memory or compute limits on the workloads sharing a GPU), HAMi enforces hard limits:

- Memory hard limit: Allocations beyond the requested GPU memory fail with an out-of-memory error instead of spilling into a neighbor's share

- SM hard limit: Compute kernels are throttled to the requested core percentage

- Zero code changes: Existing CUDA applications run unmodified

The isolation is strong enough for multi-tenant serving: a vLLM replica can't starve its neighbors by allocating all GPU memory or launching compute-heavy kernels. In-container virtualization adds ~2-5% latency overhead compared to bare metal — acceptable for most inference workloads, but measure for your SLOs.
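The memory hard limit can be pictured as a quota checked on every allocation. The class below is a toy model of that idea, not HAMi's implementation; the name `GpuMemoryQuota` and the use of a Python exception are illustrative assumptions.

```python
class GpuMemoryQuota:
    """Toy model of a per-container GPU memory cap enforced at allocation time."""

    def __init__(self, limit_mb: int) -> None:
        self.limit_mb = limit_mb
        self.used_mb = 0

    def alloc(self, size_mb: int) -> None:
        """Grant the allocation, or fail it if the cap would be exceeded."""
        if self.used_mb + size_mb > self.limit_mb:
            # A real interception layer would return an out-of-memory error
            # from the allocation call instead of raising a Python exception.
            raise MemoryError(
                f"requested {size_mb}MB, only {self.limit_mb - self.used_mb}MB left"
            )
        self.used_mb += size_mb

    def free(self, size_mb: int) -> None:
        """Return memory to the quota, never dropping below zero."""
        self.used_mb = max(0, self.used_mb - size_mb)


quota = GpuMemoryQuota(limit_mb=3000)
quota.alloc(2000)      # fits within the 3000MB cap
try:
    quota.alloc(1500)  # would exceed the cap, so it is refused
except MemoryError as err:
    print("allocation refused:", err)
```

The key property is that a refused allocation leaves the accounting untouched, so one tenant hitting its cap has no effect on what its neighbors can allocate.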

Deploying HAMi in Production

Installation uses a Helm chart with minimal prerequisites (NVIDIA drivers >= 440, Kubernetes >= 1.18, container runtime configured for NVIDIA):

    # Label GPU nodes for HAMi scheduling
    kubectl label nodes <node-name> gpu=on

    # Install HAMi
    helm repo add hami-charts https://project-hami.github.io/HAMi/
    helm install hami hami-charts/hami -n kube-system

    # Verify installation
    kubectl get pods -n kube-system | grep hami
    # hami-device-plugin-xxxxx 1/1 Running
    # hami-scheduler-xxxxx 1/1 Running

The scheduler exposes metrics at http://<scheduler-ip>:31993/metrics. Grafana dashboards are available in the HAMi repository.
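The endpoint serves the Prometheus text exposition format, so it is straightforward to inspect without a full monitoring stack. The parser below is a minimal sketch that handles plain samples only. The metric name and labels in `SAMPLE` are made up for illustration and are not HAMi's real metric names.

```python
import re

# name{label="v",...} value [timestamp]   (labels and timestamp are optional)
_SAMPLE_RE = re.compile(r'^([a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(.*)\})?\s+(\S+)(?:\s+-?\d+)?$')
_LABEL_RE = re.compile(r'([a-zA-Z_][a-zA-Z0-9_]*)="((?:[^"\\]|\\.)*)"')


def parse_metrics(text: str) -> list[tuple[str, dict[str, str], float]]:
    """Parse Prometheus text exposition lines into (name, labels, value)."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):  # skip blanks, HELP and TYPE lines
            continue
        match = _SAMPLE_RE.match(line)
        if match is None:
            continue  # ignore anything that is not a well-formed sample
        name, raw_labels, value = match.groups()
        labels = dict(_LABEL_RE.findall(raw_labels or ""))
        samples.append((name, labels, float(value)))
    return samples


# Hypothetical payload: the metric name and labels are invented for this example.
SAMPLE = '''
# HELP example_gpu_memory_allocated_mb Memory allocated per device
# TYPE example_gpu_memory_allocated_mb gauge
example_gpu_memory_allocated_mb{node="node-a",device="0"} 20000
example_gpu_memory_allocated_mb{node="node-a",device="1"} 10000
'''

for name, labels, value in parse_metrics(SAMPLE):
    print(name, labels, value)
```

In practice you would fetch the text from the scheduler's metrics URL and feed it to `parse_metrics`, then filter by whichever metric names your HAMi version actually exposes.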

Pod Spec Patterns

Partial GPU allocation — Multiple pods share a single physical GPU:

    # Example: 4 vLLM replicas on one A100 80GB
    apiVersion: v1
    kind: Pod
    metadata:
      name: vllm-replica-1
    spec:
      containers:
      - name: vllm
        image: vllm/vllm-openai:latest
        resources:
          limits:
            nvidia.com/gpu: 1
            nvidia.com/gpumem: 20000 # 20GB per replica

Multiple vGPUs per pod — For tensor parallelism across virtual devices:

    # Example: 2 vGPUs for tensor parallelism
    resources:
      limits:
        nvidia.com/gpu: 2        # 2 physical GPUs
        nvidia.com/gpumem: 16000 # 16GB per GPU

Dynamic MIG — A100/H100 GPUs with on-demand partitioning:

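    # Example: HAMi creates a MIG slice dynamically
    # A resource limit must be a numeric quantity, so a profile string such as
    # "1g.10gb" cannot be passed as a limit. Assumption: MIG mode is requested
    # with the pod annotation below; verify the name against your HAMi version.
    metadata:
      annotations:
        nvidia.com/vgpu-mode: "mig"
    # In the container spec:
    resources:
      limits:
        nvidia.com/gpu: 1
        nvidia.com/gpumem: 10000 # Sized to fit a profile such as 1g.10gb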

vLLM Integration

vLLM has become the de facto standard for LLM inference serving, and HAMi integrates naturally. A typical multi-tenant deployment:

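    # Example: 8 vLLM replicas on 2 A100 80GB GPUs (20GB memory, 25% cores each)
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: vllm-multi-tenant
    spec:
      replicas: 8
      # apps/v1 Deployments require a selector that matches the template labels
      selector:
        matchLabels:
          app: vllm-multi-tenant
      template:
        metadata:
          labels:
            app: vllm-multi-tenant
        spec:
          containers:
          - name: vllm
            image: vllm/vllm-openai:latest
            # FP16 weights for an 8B model need about 16GB, so cap the context
            # length to leave room for the KV cache inside the 20GB limit
            args: ["--model", "meta-llama/Llama-3.1-8B-Instruct", "--max-model-len", "8192"]
            resources:
              limits:
                nvidia.com/gpu: 1
                nvidia.com/gpumem: 20000
                nvidia.com/gpucores: 25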

KV-cache isolation follows from HAMi's memory limits: each replica sees only its allocated memory, so vLLM sizes its KV cache within that budget and cannot crowd out its neighbors. Throughput vs. latency trade-offs depend on workload characteristics: latency-sensitive workloads benefit from dedicated slices, while batch inference can tolerate more sharing. Production deployments report 3-4x consolidation ratios compared to whole-GPU allocation.
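Because the memory limit caps everything the replica can allocate, it also sets the KV-cache budget. The sketch below estimates how many tokens of cache fit under a given limit. The weight and overhead figures are assumptions, and the limit is treated as MiB for simplicity.

```python
def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int,
                       dtype_bytes: int = 2) -> int:
    """Bytes of KV cache one token occupies: keys and values for every layer."""
    return 2 * layers * kv_heads * head_dim * dtype_bytes


def kv_token_budget(gpumem_mb: int, weights_mb: int, overhead_mb: int,
                    bytes_per_token: int) -> int:
    """Tokens of KV cache that fit after weights and runtime overhead."""
    free_bytes = (gpumem_mb - weights_mb - overhead_mb) * 1024 * 1024
    return max(0, free_bytes // bytes_per_token)


# Llama 3.1 8B shape: 32 layers, 8 KV heads (grouped-query attention), head size 128.
per_token = kv_bytes_per_token(layers=32, kv_heads=8, head_dim=128)

# Assumed figures: about 16000MB of FP16 weights and 2000MB of runtime overhead.
print(kv_token_budget(gpumem_mb=20000, weights_mb=16000, overhead_mb=2000,
                      bytes_per_token=per_token))
```

The budget is shared by all concurrent sequences in a replica, so a tight memory limit translates directly into a lower maximum context length or fewer requests in flight.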

HAMi vs. Native NVIDIA Approaches

| Feature | MIG | Time-Slicing | HAMi |
|---|---|---|---|
| Hardware requirement | MIG-capable GPUs (e.g. A100, H100) | Any NVIDIA GPU | Any NVIDIA GPU (440+ drivers) |
| Sharing granularity | Fixed partitions (1g.10gb, 2g.20gb, etc.) | Time-based (configurable replica count) | Memory (1MB precision), cores (1% precision) |
| Heterogeneous support | NVIDIA only | NVIDIA only | NVIDIA, AMD, Huawei, Cambricon, Hygon, Iluvatar, Moore Threads, MetaX |
| Code changes required | None | None | None |
| Isolation level | Hardware (strongest) | Time-based, no memory or fault isolation (weakest) | Software-enforced hard limits (strong) |
| Dynamic resizing | No (requires pod restart) | No | Yes (with pod recreation) |
| Scheduling awareness | None (relies on kube-scheduler) | None | Topology-aware, binpack/spread policies |
| MIG support | Native (all modes) | Can be layered on MIG instances | "none" and "mixed" modes only |

When MIG is better:

- Single-tenant workloads requiring maximum isolation

- A100/H100-only clusters

- Compliance requirements mandating hardware partitioning

When HAMi is better:

- Multi-tenant inference serving (multiple models per GPU)

- Heterogeneous clusters (mixed GPU generations or vendors)

- Memory-based allocation (requesting exact memory amounts)

- Cost optimization (higher consolidation ratios)

Real scenario: Running 8 vLLM replicas serving different models. With MIG on an A100, you're limited to at most 7 partitions per GPU, each with a fixed memory size, so a model that needs 12GB has to take a 20GB slice. With HAMi, you can run 8 replicas at 10GB each on 2 A100s and size each replica's memory to its model and KV-cache requirements.
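For capacity planning, it helps to check up front whether a set of replicas fits on the GPUs you have. The sketch below uses first-fit decreasing on memory only. The replica names and sizes are hypothetical, and a real plan would also account for compute shares.

```python
def pack_first_fit_decreasing(requests_mb: dict[str, int], gpu_count: int,
                              gpu_mem_mb: int) -> dict[str, int]:
    """Assign each replica to a GPU index using first-fit decreasing.

    Raises ValueError if some replica fits on no GPU.
    """
    free = [gpu_mem_mb] * gpu_count
    placement: dict[str, int] = {}
    # Place the largest requests first; ties are broken by name for determinism.
    for name, need in sorted(requests_mb.items(), key=lambda kv: (-kv[1], kv[0])):
        for idx in range(gpu_count):
            if free[idx] >= need:
                free[idx] -= need
                placement[name] = idx
                break
        else:
            raise ValueError(f"{name} ({need}MB) does not fit on any GPU")
    return placement


# Hypothetical mix of eight models with different memory needs, in MB.
mixed = {"a": 40000, "b": 30000, "c": 30000, "d": 20000,
         "e": 20000, "f": 10000, "g": 5000, "h": 5000}
print(pack_first_fit_decreasing(mixed, gpu_count=2, gpu_mem_mb=80000))
```

With exact memory requests the eight replicas fill both cards completely, which is the kind of packing that fixed partition sizes make hard to reach.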

Gotchas

Node label requirement: HAMi only schedules pods on nodes labeled gpu=on. Unlabeled nodes are ignored even if they have GPUs.

nodeName field not supported: Pods that set nodeName bypass the scheduler entirely, so HAMi's scheduler extender never gets to place them on a device. Use nodeSelector instead.

nvidia.com/gpu semantics change after installation: Before HAMi, a node's nvidia.com/gpu capacity counts physical GPUs. After installation, it counts virtual GPUs (vGPUs), so the reported number is larger than the physical device count. In a pod spec, nvidia.com/gpu: 1 still means "one physical GPU".

A100 MIG mode limitations: Currently only "none" and "mixed" modes are supported. "Mixed" allows MIG and non-MIG pods on the same GPU, but flexibility is limited compared to native MIG management.

Monitoring starts after first task: HAMi doesn't collect GPU metrics until a pod submits its first GPU task. Empty clusters show no utilization data.
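The nodeName gotcha is easy to catch before a manifest reaches the cluster. Here is a minimal lint sketch that works on a manifest already loaded into a dictionary; the function names are hypothetical and the check covers only this one rule.

```python
HAMI_RESOURCES = ("nvidia.com/gpu", "nvidia.com/gpumem", "nvidia.com/gpucores")


def requests_hami_resources(pod: dict) -> bool:
    """True if any container in the pod manifest sets one of the GPU limits."""
    for container in pod.get("spec", {}).get("containers", []):
        limits = container.get("resources", {}).get("limits", {})
        if any(name in limits for name in HAMI_RESOURCES):
            return True
    return False


def lint_pod(pod: dict) -> list[str]:
    """Return warnings for manifests that would sidestep GPU-aware scheduling."""
    warnings = []
    if requests_hami_resources(pod) and pod.get("spec", {}).get("nodeName"):
        warnings.append("spec.nodeName is set; use spec.nodeSelector instead")
    return warnings


pinned_pod = {
    "spec": {
        "nodeName": "gpu-node-1",
        "containers": [
            {"name": "vllm", "resources": {"limits": {"nvidia.com/gpumem": 20000}}}
        ],
    }
}
print(lint_pod(pinned_pod))
```

A check like this fits naturally into CI for a manifests repository, where it can fail the build before a pinned pod ever lands on a node.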

Wrap-up

HAMi abstracts GPU heterogeneity into a unified scheduling interface with fine-grained sharing — memory, compute cores, and MIG slices — across NVIDIA, AMD, Huawei, and Cambricon accelerators. The hard isolation and topology-aware scheduling make it suitable for multi-tenant inference serving where utilization and cost matter.

GPU virtualization is becoming the default pattern for AI workloads on Kubernetes, shifting accelerators from exclusive node resources to shared infrastructure pools.

What's next in AI infrastructure on Kubernetes: Kueue is emerging as the community standard for batch AI workload scheduling, while Dynamic Resource Allocation (DRA), generally available since Kubernetes 1.34, provides a richer interface for GPU allocation beyond static device plugins.
