Production LLM Serving on Kubernetes: vLLM + KServe Stack

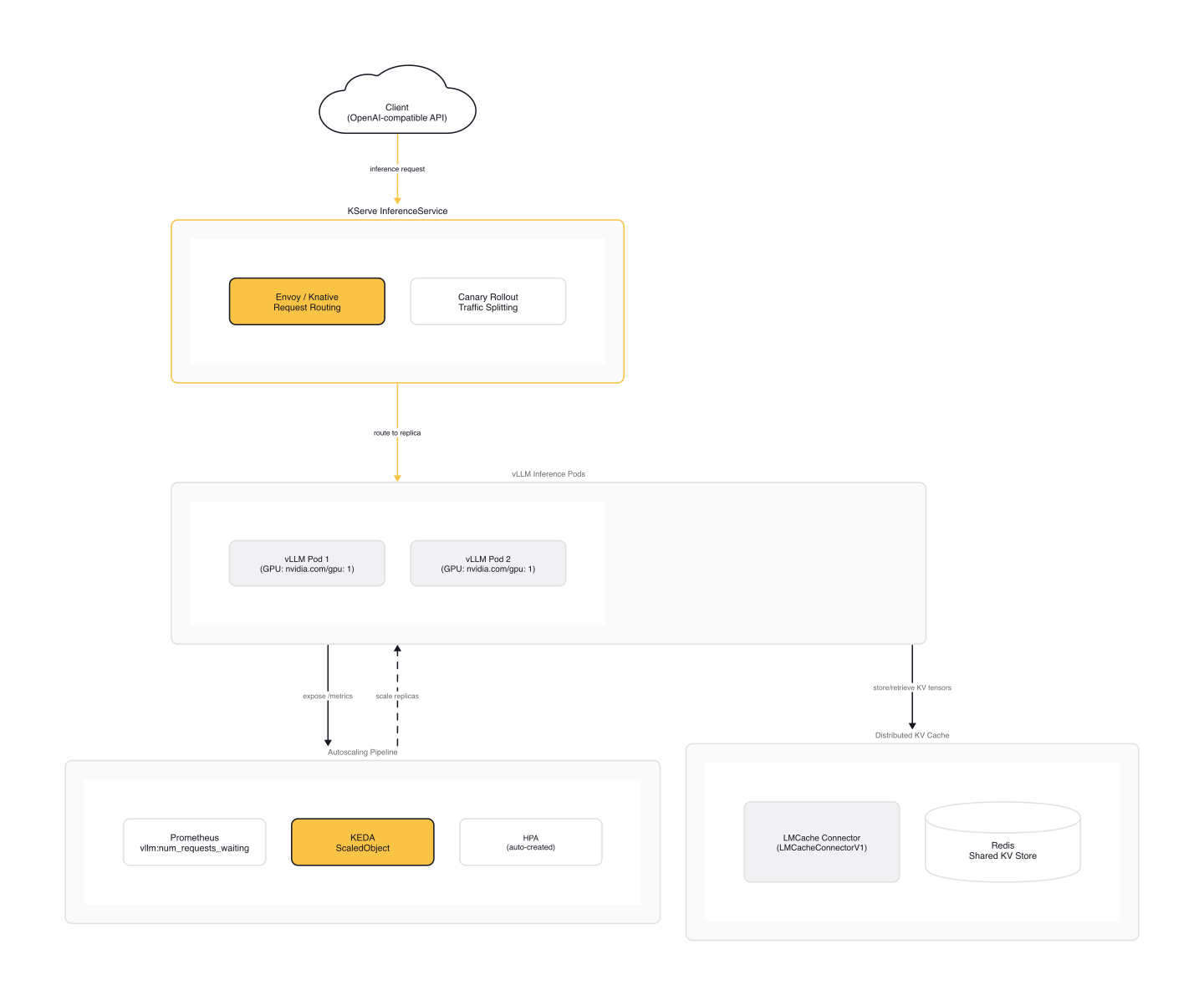

Running vLLM on a single GPU node is the easy part. The hard part starts when you need multiple replicas behind autoscaling, health checks that survive a 70B model load, and KV cache shared across pods so your RAG pipeline's second query doesn't recompute the entire context. KServe v0.15 landed in May 2025 with first-class support for exactly this stack: vLLM backend with KEDA autoscaling and LMCache for distributed KV cache offloading.

Here is how to wire those pieces together, starting from a plain vLLM Deployment.

vLLM on Kubernetes: The Baseline Deployment

Before adding KServe, it helps to understand what a raw vLLM deployment looks like on Kubernetes. The vLLM documentation provides a GPU deployment manifest that covers the essentials:

```yaml
# vllm-deployment.yaml (from vLLM docs)
apiVersion: apps/v1
kind: Deployment
metadata:
  name: mistral-7b
  namespace: default
  labels:
    app: mistral-7b
spec:
  replicas: 1
  selector:
    matchLabels:
      app: mistral-7b
  template:
    metadata:
      labels:
        app: mistral-7b
    spec:
      volumes:
        - name: cache-volume
          persistentVolumeClaim:
            claimName: mistral-7b
        - name: shm
          emptyDir:
            medium: Memory
            sizeLimit: "2Gi"
      containers:
        - name: mistral-7b
          image: vllm/vllm-openai:latest
          command: ["/bin/sh", "-c"]
          args: [
            "vllm serve mistralai/Mistral-7B-Instruct-v0.3 --trust-remote-code --enable-chunked-prefill --max_num_batched_tokens 1024"
          ]
          env:
            - name: HF_TOKEN
              valueFrom:
                secretKeyRef:
                  name: hf-token-secret
                  key: token
          ports:
            - containerPort: 8000
          resources:
            limits:
              cpu: "10"
              memory: 20G
              nvidia.com/gpu: "1"
            requests:
              cpu: "2"
              memory: 6G
              nvidia.com/gpu: "1"
          volumeMounts:
            - mountPath: /root/.cache/huggingface
              name: cache-volume
            - name: shm
              mountPath: /dev/shm
          livenessProbe:
            httpGet:
              path: /health
              port: 8000
            initialDelaySeconds: 60
            periodSeconds: 10
          readinessProbe:
            httpGet:
              path: /health
              port: 8000
            initialDelaySeconds: 60
            periodSeconds: 5
```

Three things to notice.

First, look at the /dev/shm emptyDir with medium: Memory. vLLM needs shared memory for tensor parallel inference, and without this mount the process silently fails or crashes.

Second, both probes use initialDelaySeconds: 60. Loading even a 7B model takes a minute or more, and larger models take much longer.

Third, look at --enable-chunked-prefill. It splits long prompts into chunks that fit the per-step token budget (--max_num_batched_tokens 1024 here). A long prompt's prefill is then interleaved with the decode steps of in-flight requests instead of blocking them.
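The following simplified Python model of a single scheduling step shows why chunking helps. It assumes the behavior the vLLM docs describe for chunked prefill: decode tokens for running requests are scheduled first, and prefill chunks fill whatever remains of the --max_num_batched_tokens budget. The function names are hypothetical. vLLM's real scheduler also handles KV cache block allocation, preemption, and request limits.

```python
def schedule_step(num_decodes, prefill_remaining, max_num_batched_tokens=1024):
    """One simplified scheduler step with chunked prefill (hypothetical model).

    num_decodes: in-flight requests, each needing one decode token this step.
    prefill_remaining: {request_id: prompt tokens not yet prefilled}.
    Returns (decode tokens scheduled, {request_id: prefill tokens scheduled}).
    """
    # Decode tokens for running requests are scheduled first.
    decode = min(num_decodes, max_num_batched_tokens)
    budget = max_num_batched_tokens - decode
    chunks = {}
    # Whatever budget is left goes to pending prefills, chunking any that do not fit.
    for request_id, remaining in prefill_remaining.items():
        if budget == 0:
            break
        take = min(remaining, budget)
        chunks[request_id] = take
        budget -= take
    return decode, chunks


def steps_to_prefill(prompt_tokens, num_decodes, max_num_batched_tokens=1024):
    """Scheduler steps needed to prefill one long prompt while decodes keep running."""
    remaining, steps = prompt_tokens, 0
    while remaining > 0:
        _, chunks = schedule_step(num_decodes, {"long": remaining}, max_num_batched_tokens)
        scheduled = chunks.get("long", 0)
        if scheduled == 0:
            raise ValueError("decode tokens fill the whole budget; prefill cannot progress")
        remaining -= scheduled
        steps += 1
    return steps
```

Take 8 requests that are decoding. A 3,000-token prompt finishes prefill in three steps of at most 1,016 tokens each, and all 8 decodes advance on every one of those steps.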

This Deployment works, but it gives you exactly one replica with no autoscaling, no canary rollouts, no request routing, and no model management abstraction. For production, you need a serving layer.

KServe InferenceService: The Serving Layer

KServe wraps inference workloads in the InferenceService CRD, handling concerns that a raw Deployment cannot: request routing, canary deployments, autoscaling policies, and model format abstraction. KServe v0.15 added a lightweight installation mode specifically for generative inference, dropping the requirement for a full Istio service mesh.

A basic InferenceService with vLLM uses the huggingface model format and pulls directly from Hugging Face:

```yaml
# kserve-vllm-isvc.yaml (based on KServe v0.15 patterns)
apiVersion: serving.kserve.io/v1beta1
kind: InferenceService
metadata:
  name: llm-service
spec:
  predictor:
    model:
      modelFormat:
        name: huggingface
      resources:
        limits:
          cpu: "6"
          memory: 24Gi
          nvidia.com/gpu: "1"
      storageUri: "hf://meta-llama/Llama-3.1-8B-Instruct"
```

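The hf:// prefix tells KServe to pull the model from the Hugging Face Hub at pod startup. KServe handles the download, container lifecycle, and readiness signaling, and exposes an OpenAI-compatible API. Because meta-llama repositories are gated on the Hub, the pod also needs an HF_TOKEN supplied from a Secret, as in the LMCache example later in this article.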

warning: Cold start with hf:// storageUri. An hf:// storageUri downloads the model at pod startup. For an 8B model, that is ~16GB over the network, adding minutes to cold start. With KEDA autoscaling, new replicas sit in NotReady while downloading. For production, use a PVC with pre-downloaded weights or KServe's LocalModelCache to avoid download latency on scale-up.
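The ~16GB figure follows from 8 billion parameters at 2 bytes each (bf16). The sketch below turns that into a rough cold-start estimate. The sustained bandwidth is an assumption; replace it with what your nodes actually get from the Hub. The estimate also ignores disk writes and the time to load weights into GPU memory.

```python
def weight_size_gb(params_billion, bytes_per_param=2):
    """Approximate checkpoint size in GB (1 GB = 1e9 bytes); bf16/fp16 use 2 bytes per parameter."""
    return params_billion * bytes_per_param


def download_minutes(size_gb, bandwidth_gbit_per_s):
    """Minutes to pull size_gb at an assumed sustained bandwidth, ignoring protocol overhead."""
    return size_gb * 8 / bandwidth_gbit_per_s / 60
```

At an assumed 1 Gbit/s, the 8B checkpoint takes a little over 2 minutes just to download. A 70B checkpoint (about 140GB) takes close to 19 minutes, and that cost repeats on every scale-up.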

For models that exceed a single node's GPU memory, KServe v0.15 introduced workerSpec for multi-node inference using pipeline parallelism:

```yaml
# kserve-multi-node.yaml (from KServe v0.15 release)
apiVersion: serving.kserve.io/v1beta1
kind: InferenceService
metadata:
  name: huggingface-llama3
spec:
  predictor:
    model:
      modelFormat:
        name: huggingface
      storageUri: pvc://llama-3-8b-pvc/hf/8b_instruction_tuned
    workerSpec:
      pipelineParallelSize: 2
      tensorParallelSize: 1
```

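The workerSpec splits the model across two nodes with pipeline parallelism (pipelineParallelSize: 2). Tensor parallelism, if any, stays within each node; tensorParallelSize: 1 here means one GPU per node.

Pipeline parallelism adds latency at every stage boundary, because each stage must wait for the previous one and activations cross the network between nodes. It is still the usual choice when a model's weights exceed a single node's aggregate GPU memory. It only exchanges activations at stage boundaries, whereas cross-node tensor parallelism would synchronize on every layer.

The KServe community is working on a distributed inference API to add disaggregated prefill support.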
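Here is a minimal sketch of what pipeline parallelism does with the layers, assuming a contiguous, near-even split. Each stage (node) owns one slice of layers, and activations cross the network once per boundary between slices. The 32-layer count in the usage note matches Llama 3 8B. The helper names are hypothetical, and vLLM's own partitioning may distribute leftover layers differently.

```python
def split_layers(num_layers, pipeline_parallel_size):
    """Contiguous [start, end) layer ranges, one per pipeline stage.

    Layers are spread as evenly as possible; any remainder goes to the
    earliest stages (a simplifying assumption, not vLLM's exact rule).
    """
    base, extra = divmod(num_layers, pipeline_parallel_size)
    ranges, start = [], 0
    for stage in range(pipeline_parallel_size):
        size = base + (1 if stage < extra else 0)
        ranges.append((start, start + size))
        start += size
    return ranges


def total_gpus(pipeline_parallel_size, tensor_parallel_size):
    """GPUs used: one tensor-parallel group of GPUs per pipeline stage."""
    return pipeline_parallel_size * tensor_parallel_size
```

With pipelineParallelSize: 2, each node holds 16 of Llama 3 8B's 32 layers, and activations cross one stage boundary on every forward pass. With tensorParallelSize: 1, the deployment uses 2 GPUs in total.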

Autoscaling with KEDA on LLM Metrics

Standard HPA on CPU utilization is a common mistake for LLM deployments. GPU inference can sit at 5% CPU utilization while the request queue backs up with 50 waiting requests. The GPU is the bottleneck, not the CPU.

vLLM's continuous batching admits new requests as soon as slots open in the running batch. Queue depth therefore reflects real saturation rather than batch-boundary effects. When slots are available, requests enter the batch immediately and the queue stays near zero. It only grows once the batch is full, meaning max_num_seqs is reached or no KV cache blocks are free. That makes queue depth a direct scaling signal. Google's GKE team recommends starting with a queue size threshold of 3 to 5 and adjusting it based on your latency targets.
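A toy simulation makes the "queue only grows when the batch is full" point concrete. It assumes every request needs the same number of decode steps, and it ignores prefill and KV cache limits. The max_num_seqs parameter mirrors the name of vLLM's batch-size limit, but this is not vLLM code.

```python
from collections import deque


def simulate(arrivals_per_step, max_num_seqs, decode_steps_per_request, steps):
    """Toy continuous-batching loop; returns the waiting-queue length after each step."""
    waiting = deque()
    running = []  # remaining decode steps for each request in the batch
    history = []
    for _ in range(steps):
        waiting.extend([decode_steps_per_request] * arrivals_per_step)
        # Continuous batching: queued requests join as soon as a slot is free.
        while waiting and len(running) < max_num_seqs:
            running.append(waiting.popleft())
        # One decode step for the whole batch; finished requests free their slots.
        running = [left - 1 for left in running if left - 1 > 0]
        history.append(len(waiting))
    return history
```

With 8 slots and 4-step requests, the batch drains 2 requests per step. One arrival per step keeps the queue at zero, while three arrivals per step make it grow steadily. That transition is exactly what a waiting-queue threshold detects.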

KServe v0.15 integrates with KEDA for event-driven autoscaling on LLM-specific metrics. The InferenceService manifest uses annotations to configure the autoscaler:

```yaml
# kserve-keda-autoscaling.yaml (from KServe v0.15 release)
apiVersion: serving.kserve.io/v1beta1
kind: InferenceService
metadata:
  name: huggingface-llama3-keda
  annotations:
    serving.kserve.io/autoscalerClass: "keda"
    sidecar.opentelemetry.io/inject: "huggingface-llama3-keda"
spec:
  predictor:
    model:
      modelFormat:
        name: huggingface
      args:
        - --model_name=llama3
        - --model_id=meta-llama/meta-llama-3-70b
    minReplicas: 1
    maxReplicas: 5
    autoScaling:
      metrics:
        - type: PodMetric
          podmetric:
            metric:
              backend: "opentelemetry"
              metricNames:
                - vllm:num_requests_running
              query: "vllm:num_requests_running"
            target:
              type: Value
              value: "4"
```

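The autoscalerClass: "keda" annotation switches from Knative's KPA to KEDA. The sidecar.opentelemetry.io/inject annotation has the OpenTelemetry Operator inject a collector sidecar that gathers vLLM's metrics for the opentelemetry backend. The scaling target is vllm:num_requests_running with a threshold of 4 concurrent requests per pod. When running requests per pod exceed 4, KEDA adds replicas.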

For environments where you manage KEDA directly, the Red Hat approach uses a ScaledObject with a Prometheus trigger:

```yaml
# keda-scaledobject.yaml (adapted from Red Hat Developer guide)
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: llama-32-3b-predictor
  namespace: keda
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: llama-32-3b-predictor
  minReplicaCount: 1
  maxReplicaCount: 5
  pollingInterval: 5
  triggers:
    - type: prometheus
      authenticationRef:
        name: keda-trigger-auth-prometheus
      metadata:
        serverAddress: https://thanos-querier.openshift-monitoring.svc.cluster.local:9092
        query: 'sum(vllm:num_requests_waiting{namespace="keda", pod=~"llama-32-3b-predictor.*"})'
        threshold: '2'
        authModes: "bearer"
        namespace: keda
```

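This scales on vllm:num_requests_waiting instead of num_requests_running. The difference matters: waiting requests are queued and not yet in the batch.

KEDA treats a trigger's threshold as a per-replica average by default (metricType AverageValue). The HPA it creates under the hood therefore aims for about 2 waiting requests per replica, which triggers scale-up before users experience latency spikes. With pollingInterval: 5, KEDA checks the Prometheus trigger every 5 seconds.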
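Assuming the default AverageValue metric type, the replica math the HPA applies to the summed queue can be sketched in Python. The bounds mirror minReplicaCount and maxReplicaCount from the ScaledObject. The function itself is illustrative, not KEDA or Kubernetes code. It also skips the HPA's default 10% tolerance and its stabilization windows.

```python
import math


def desired_replicas(total_metric, threshold, min_replicas=1, max_replicas=5):
    """Replica count an HPA targets for an AverageValue metric (simplified).

    The HPA computes ceil(current_replicas * per_pod_value / target); with
    per_pod_value = total / current_replicas this reduces to ceil(total / target).
    """
    desired = math.ceil(total_metric / threshold)
    # Clamp to the ScaledObject's replica bounds.
    return max(min_replicas, min(max_replicas, desired))
```

Seven waiting requests across the deployment with a threshold of 2 ask for 4 replicas. Thirty waiting requests would ask for 15, which maxReplicaCount caps at 5.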

tip: Knative KPA vs KEDA. Red Hat's performance testing found that Knative's concurrency-based KPA achieved 84.8% request success under homogeneous workloads but failed under heterogeneous traffic (mixed prompt lengths). KEDA with SLO-driven Prometheus metrics correctly scaled to full cluster capacity with 86.9% success. For LLM workloads with variable input sizes, KEDA is the safer choice.

Distributed KV Cache with LMCache

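Transformer inference generates key and value tensors for every token in the context. vLLM's built-in prefix caching can reuse them only within one replica's GPU memory. Once they are evicted, or when the next request lands on a different replica, the full context is recomputed from scratch. For multi-turn conversations or RAG pipelines with shared system prompts, that is wasted GPU compute.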
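This sketch puts a number on that work. Each token stores one key vector and one value vector per layer and per KV head. The sketch assumes bf16 (2 bytes per value) and uses Llama 3.1 8B's published config values: 32 layers, 8 KV heads under grouped-query attention, and head dimension 128. For another model, substitute the values from its config.json.

```python
def kv_bytes_per_token(num_layers, num_kv_heads, head_dim, bytes_per_value=2):
    """KV cache bytes for one token: a key and a value vector per layer per KV head."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value


def kv_bytes_for_context(tokens, **model):
    """KV cache bytes for a whole context of the given length."""
    return tokens * kv_bytes_per_token(**model)


# Llama 3.1 8B config values (assumed bf16 KV cache).
LLAMA_31_8B = dict(num_layers=32, num_kv_heads=8, head_dim=128)
```

That comes to 128 KiB per token. A single 10,000-token context of this model therefore holds about 1.3GB of KV cache, all of which has to be recomputed on a miss.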

LMCache stores KV caches across GPU, CPU, disk, and remote backends like Redis. It integrates directly into vLLM's V1 engine, enabling cache sharing across replicas. LMCache benchmarks claim a 3-10x reduction in time to first token (TTFT) for multi-round QA and RAG workloads. The actual gain depends on prompt overlap between requests. High overlap (shared system prompts, RAG contexts) yields the biggest wins, while unique prompts see minimal benefit.

KServe v0.15 added native LMCache integration via PR #4320. The setup requires three pieces: a Redis backend, an LMCache ConfigMap, and an InferenceService with the connector enabled.

The LMCache config is stored in a ConfigMap:

```yaml
# lmcache-config.yaml (from KServe docs)
apiVersion: v1
kind: ConfigMap
metadata:
  name: lmcache-config
data:
  lmcache_config.yaml: |
    local_cpu: true
    chunk_size: 256
    max_local_cpu_size: 2.0
    remote_url: "redis://redis.default.svc.cluster.local:6379"
    remote_serde: "naive"
```

Setting local_cpu: true enables a CPU RAM cache as a fast first-level tier, capped at max_local_cpu_size (in GB). KV tensors are stored and looked up in chunks of chunk_size tokens. The remote Redis instance acts as a shared second-level cache that all replicas can read from.
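Because of chunk_size, cache lookups happen at chunk granularity. The following hypothetical Python model of prefix-chunk matching illustrates the idea. Each chunk's key hashes the chunk together with the key of the chunk before it, so a chunk only matches when the entire prefix up to it matches. LMCache's real keying scheme and its handling of partial chunks differ in detail. The class and method names are illustrative only.

```python
import hashlib


def chunk_keys(tokens, chunk_size):
    """Yield (prefix_length, key) for each chunk of tokens, including a short final chunk."""
    previous_key = b""
    for start in range(0, len(tokens), chunk_size):
        chunk = tokens[start:start + chunk_size]
        # Chain the previous key in, so a key identifies the whole prefix, not just this chunk.
        key = hashlib.sha256(previous_key + repr(chunk).encode()).digest()
        previous_key = key
        yield start + len(chunk), key


class PrefixChunkStore:
    """Toy shared cache that records which token-prefix chunks have been stored."""

    def __init__(self, chunk_size=256):
        self.chunk_size = chunk_size
        self.keys = set()

    def store(self, tokens):
        for _, key in chunk_keys(tokens, self.chunk_size):
            self.keys.add(key)

    def lookup(self, tokens):
        """Return how many leading tokens can be served from stored chunks."""
        hit = 0
        for prefix_length, key in chunk_keys(tokens, self.chunk_size):
            if key not in self.keys:
                break
            hit = prefix_length
        return hit
```

With a chunk size of 4, two prompts sharing a 10-token prefix reuse only 8 tokens, because the third chunk already differs. With chunk_size: 256, shared system prompts and retrieved RAG passages are usually long enough that this rounding costs little.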

The InferenceService mounts this config and enables the LMCache connector:

```yaml
# kserve-lmcache-isvc.yaml (from KServe docs)
apiVersion: serving.kserve.io/v1beta1
kind: InferenceService
metadata:
  name: huggingface-llama3
spec:
  predictor:
    minReplicas: 2
    model:
      modelFormat:
        name: huggingface
      args:
        - --model_name=llama3
        - --model_id=meta-llama/Llama-3.2-1B-Instruct
        - --max-model-len=10000
        - --kv-transfer-config
        - '{"kv_connector":"LMCacheConnectorV1", "kv_role":"kv_both"}'
        - --enable-chunked-prefill
      env:
        - name: HF_TOKEN
          valueFrom:
            secretKeyRef:
              name: hf-secret
              key: HF_TOKEN
        - name: LMCACHE_USE_EXPERIMENTAL
          value: "True"
        - name: LMCACHE_CONFIG_FILE
          value: /lmcache/lmcache_config.yaml
        - name: LMCACHE_LOG_LEVEL
          value: "INFO"
      resources:
        limits:
          cpu: 6
          memory: 24Gi
          nvidia.com/gpu: "1"
        requests:
          cpu: 6
          memory: 24Gi
          nvidia.com/gpu: "1"
      volumeMounts:
        - name: lmcache-config-volume
          mountPath: /lmcache
          readOnly: true
    volumes:
      - name: lmcache-config-volume
        configMap:
          name: lmcache-config
          items:
            - key: lmcache_config.yaml
              path: lmcache_config.yaml
```

The --kv-transfer-config argument with kv_role: "kv_both" tells vLLM to both store and retrieve KV caches through LMCache. LMCACHE_CONFIG_FILE points LMCache at the ConfigMap mounted under /lmcache. With minReplicas: 2, both replicas share cache through Redis.

You can verify cache hits from the pod logs:

```console
$ kubectl logs -f <pod-name> -c kserve-container
# Cold miss: stores cache
[2025-05-16 08:46:00,417] LMCache INFO: Reqid: chatcmpl-cb800, Total tokens 50, LMCache hit tokens: 0
[2025-05-16 08:46:00,450] LMCache INFO: Storing KV cache for 50 out of 50 tokens
# Warm hit: skips prefill
[2025-05-16 09:09:09,550] LMCache INFO: Reqid: chatcmpl-fabe7, Total tokens 50, LMCache hit tokens: 49
```

A subsequent request with overlapping context hits 49 out of 50 tokens from cache, skipping almost all prefill computation. For RAG workloads where multiple queries share the same retrieved context, this eliminates redundant GPU work across replicas.
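To track this over more than a couple of requests, a small Python helper can aggregate the hit ratio from those log lines. It assumes the log format shown above, which may change between LMCache versions.

```python
import re

# Matches the LMCache log format shown above; other LMCache versions may log differently.
HIT_LINE = re.compile(r"Total tokens (\d+), LMCache hit tokens: (\d+)")


def cache_hit_ratio(lines):
    """Fraction of prompt tokens served from LMCache across the given log lines."""
    total = hits = 0
    for line in lines:
        match = HIT_LINE.search(line)
        if match:
            total += int(match.group(1))
            hits += int(match.group(2))
    return hits / total if total else 0.0
```

Pass it the lines from kubectl logs, for example saved to a file and opened with open(). For the two requests above, it reports 49 of 100 prompt tokens served from cache.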

Gotchas

Startup probe failureThreshold too low. vLLM model loading takes 60+ seconds for a 7B model and several minutes for 70B+. The vLLM docs manifest above uses livenessProbe and readinessProbe with initialDelaySeconds: 60, but lacks a startupProbe. For production, add a dedicated startup probe with a failureThreshold that covers your model's full load time. If failureThreshold * periodSeconds is shorter than the load time, Kubernetes kills the container before it finishes loading, and the pod ends up in a restart loop.
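The sizing rule is simple arithmetic. Here is a minimal Python sketch, assuming you have measured your model's load time and want a safety margin on top. The 1.5 factor is an arbitrary choice, not a Kubernetes or vLLM default.

```python
import math


def startup_failure_threshold(load_seconds, period_seconds=10, safety_factor=1.5):
    """Smallest failureThreshold whose window (failureThreshold * periodSeconds)
    covers the measured model load time with some headroom."""
    return math.ceil(load_seconds * safety_factor / period_seconds)
```

Suppose a 70B model takes about 5 minutes to load on your nodes. A startupProbe with periodSeconds: 10 then needs failureThreshold: 45. The liveness and readiness probes only start running once the startup probe succeeds.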

Missing /dev/shm mount. vLLM needs shared memory for tensor parallel inference. Without an emptyDir volume with medium: Memory mounted at /dev/shm, tensor parallel fails silently or crashes. The default shm size in most container runtimes is 64MB, far too small.

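GPU memory utilization set to 1.0. vLLM's gpu_memory_utilization (--gpu-memory-utilization) sets the fraction of GPU memory the vLLM instance may use. After loading weights and profiling peak activation memory, vLLM fills the rest of that budget with KV cache blocks. Setting it to 1.0 leaves no headroom for the CUDA context and other memory outside vLLM's budget, which causes OOM errors under load. The default is 0.9; treat that as the ceiling.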
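The trade-off can be sketched with round, hypothetical numbers:

- a 24GiB GPU
- 16GiB of weights
- 1GiB of peak activations
- 128KiB of KV cache per token (the bf16 figure for an 8B Llama-style model)

This simplified model ignores vLLM's block granularity and CUDA graph memory.

```python
GiB = 2**30


def kv_cache_tokens(gpu_bytes, gpu_memory_utilization, weight_bytes,
                    activation_bytes, kv_bytes_per_token):
    """Tokens of KV cache that fit in vLLM's memory budget (simplified).

    The budget is gpu_bytes * gpu_memory_utilization; weights and peak
    activations come out first, and the KV cache gets whatever is left.
    """
    budget = gpu_bytes * gpu_memory_utilization - weight_bytes - activation_bytes
    return max(0, int(budget // kv_bytes_per_token))
```

Raising the setting from 0.9 to 1.0 grows the KV pool from roughly 37,700 to 57,344 tokens. The extra ~2.4GiB, however, was the only headroom for memory vLLM does not track, which is where the OOMs come from.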

HPA on CPU utilization. GPU inference workloads can sit at 5% CPU while the inference queue backs up, so an HPA on CPU rarely if ever triggers a scale-up. Use KEDA with vllm:num_requests_waiting or vllm:num_requests_running instead.

ReadWriteOnce PVC for model storage. A ReadWriteOnce volume can be mounted read-write by only one node at a time. It blocks multi-replica scaling as soon as a replica lands on a different node, because that pod cannot attach the volume and never starts. Use ReadWriteMany with NFS or a CSI driver that supports it.

Wrap-Up

The vLLM Production Stack Helm chart bundles this pattern with a request router, Prometheus and Grafana observability, and KV-cache-aware routing out of the box. KServe is also working on Envoy AI Gateway integration for token-level rate limiting and a distributed inference API for disaggregated prefill. The plumbing shown here (InferenceService for serving, KEDA for queue-depth scaling, LMCache for shared KV state) is the baseline. The next layer is routing intelligence.

Mastering the Kubernetes ecosystem — depth-first, no hype.
