HTTP-Based Autoscaling with the KEDA HTTP Add-on

KEDA scales pods on Kafka lag, SQS queue depth, and Prometheus queries. HTTP traffic is conspicuously absent from the core project. There is no built-in HTTP scaler, and for good reason: an HTTP "event" is not well defined, and measuring incoming request volume requires infrastructure that does not exist out of the box in a Kubernetes cluster.

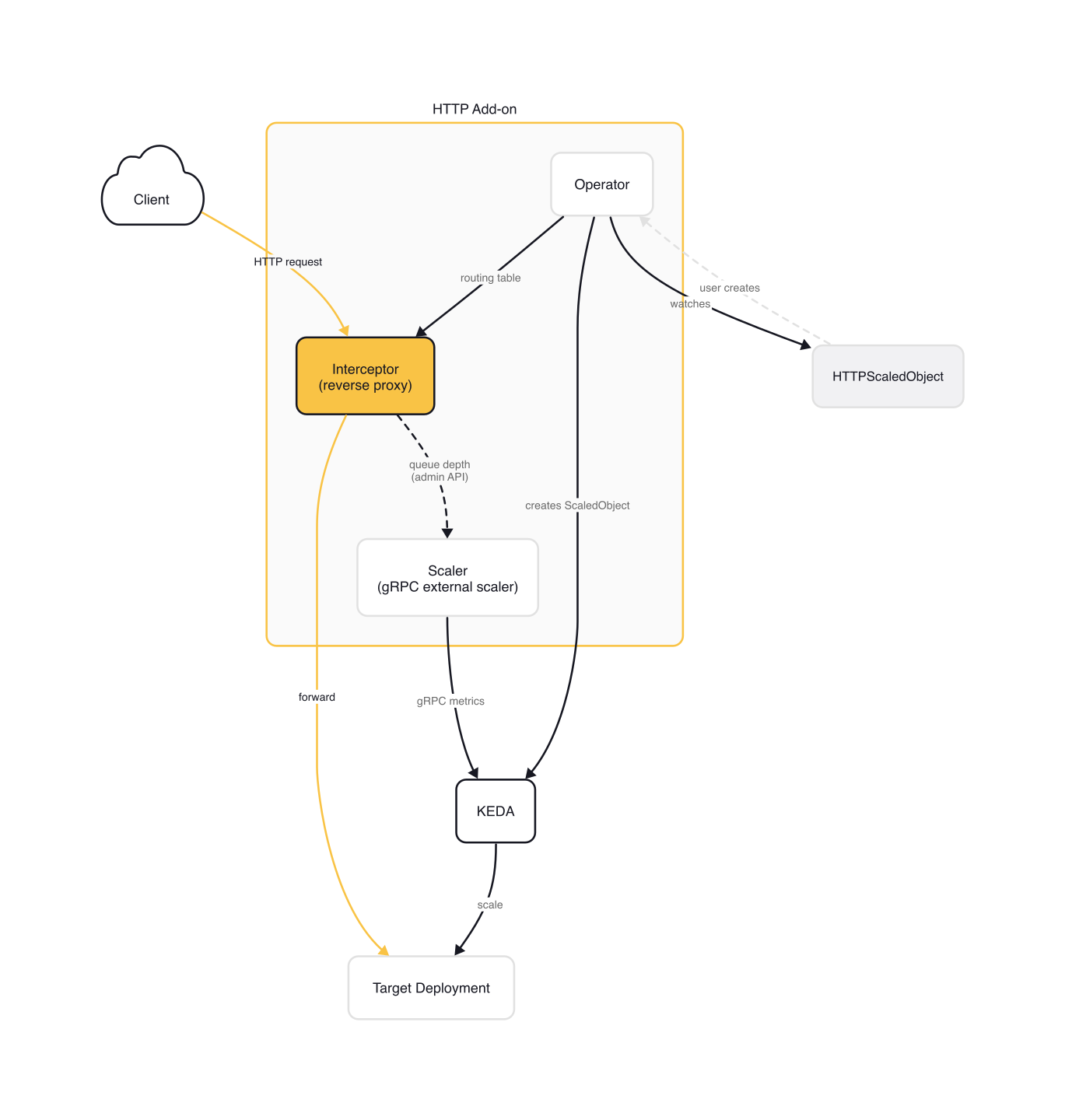

The KEDA HTTP Add-on fills this gap. It places an interceptor proxy in front of your service, counts pending requests, and feeds those metrics to KEDA through an external scaler. This is the only way to get true scale-to-zero for HTTP workloads with KEDA.

The trade-off is real: the add-on is still in beta, it is community-maintained, and every request flows through an extra proxy hop.

The motivation is that CPU-based HPA reacts to resource pressure that has already happened. An API pod handling 200ms P99 requests at 80% CPU can already have queued 40+ requests by the time HPA reacts at the default 15-second sync period. What you want is to scale on request volume directly, before the pod is saturated. Two approaches exist: intercept traffic with a proxy that counts in-flight requests (the HTTP Add-on), or query an external metrics source such as Prometheus, where your ingress controller already exports a request counter such as http_requests_total (the Prometheus scaler). This article covers both, starting with the add-on's architecture and ending with a decision framework for choosing between them.

HTTP Add-on Architecture

The KEDA HTTP Add-on (kedacore/http-add-on) consists of three components that work together but run as independent processes.

Operator

The Operator watches for HTTPScaledObject custom resources. When one is created, it updates an internal routing table that maps hostnames to backend services, furnishes this routing information to the interceptor, and creates a standard KEDA ScaledObject for the target Deployment. When the HTTPScaledObject is deleted, the operator reverses all of these actions.

Interceptor

The Interceptor is a cluster-internal reverse proxy written in Go using controller-runtime. It accepts incoming HTTP traffic, forwards requests to the target service, and tracks the number of pending requests in an in-memory queue.

    // interceptor/main.go: queue and routing table initialization
    queues := queue.NewMemory()
    routingTable := routing.NewTable(ctrlCache, queues)
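To make the idea of tracking pending requests concrete, here is a minimal sketch of a handler wrapper that counts in-flight requests per host. It is not the add-on's code: the names pendingCounter and countPending are hypothetical, and the real interceptor's queue has its own API.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
	"sync"
)

// pendingCounter is a hypothetical in-memory map of host -> in-flight requests.
type pendingCounter struct {
	mu     sync.Mutex
	counts map[string]int
}

func newPendingCounter() *pendingCounter {
	return &pendingCounter{counts: make(map[string]int)}
}

// add adjusts the in-flight count for a host (+1 on arrival, -1 on completion).
func (p *pendingCounter) add(host string, delta int) {
	p.mu.Lock()
	defer p.mu.Unlock()
	p.counts[host] += delta
}

// get returns the current in-flight count for a host.
func (p *pendingCounter) get(host string) int {
	p.mu.Lock()
	defer p.mu.Unlock()
	return p.counts[host]
}

// countPending wraps a handler so each request is counted while it is served.
func countPending(p *pendingCounter, next http.Handler) http.Handler {
	return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		p.add(r.Host, 1)
		defer p.add(r.Host, -1)
		next.ServeHTTP(w, r)
	})
}

func main() {
	counter := newPendingCounter()
	observed := 0
	backend := http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		observed = counter.get(r.Host) // count seen while the request is in flight
		w.WriteHeader(http.StatusOK)
	})
	req := httptest.NewRequest(http.MethodGet, "http://api.example.com/v1/items", nil)
	countPending(counter, backend).ServeHTTP(httptest.NewRecorder(), req)
	// In-flight count during the request, then after it completes.
	fmt.Println(observed, counter.get("api.example.com"))
}
```

The count rises when a request arrives and falls when the response is written, so a request that is still waiting for a backend keeps the count above zero. That is the signal a scaler needs to wake a Deployment from zero.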

The routing table is event-driven. The interceptor watches two CRD types: HTTPScaledObject (the user-facing resource) and InterceptorRoute (an internal resource the operator creates to describe the routing configuration). You never create InterceptorRoute objects directly. Informer event handlers call routingTable.Signal() on every change, so route updates propagate without polling:

    // interceptor/main.go: informer setup for route change detection
    routeSources := []client.Object{
        &v1beta1.InterceptorRoute{},
        &v1alpha1.HTTPScaledObject{},
    }
    for _, obj := range routeSources {
        informer, err := ctrlCache.GetInformer(ctx, obj)
        // ... (error handling omitted for brevity)
        _, err = informer.AddEventHandler(toolscache.ResourceEventHandlerFuncs{
            AddFunc:    func(_ any) { routingTable.Signal() },
            UpdateFunc: func(_, _ any) { routingTable.Signal() },
            DeleteFunc: func(_ any) { routingTable.Signal() },
        })
        // ... (error handling omitted)
    }
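The snippet above does not show how Signal() is implemented. One common Go pattern for this kind of "something changed, rebuild when you can" notification is a buffered channel with a non-blocking send, sketched below. The table type and its drain method are hypothetical and exist only for illustration.

```go
package main

import "fmt"

// table is a hypothetical stand-in for a routing table that rebuilds on demand.
type table struct {
	signal chan struct{}
}

func newTable() *table {
	// Capacity 1 lets one wake-up stay pending; extra signals are dropped.
	return &table{signal: make(chan struct{}, 1)}
}

// Signal requests a rebuild without ever blocking the caller.
func (t *table) Signal() {
	select {
	case t.signal <- struct{}{}:
	default: // a rebuild is already pending; nothing to do
	}
}

// drain reports how many rebuilds are pending (0 or 1) and clears them.
func (t *table) drain() int {
	n := 0
	for {
		select {
		case <-t.signal:
			n++
		default:
			return n
		}
	}
}

func main() {
	t := newTable()
	for i := 0; i < 5; i++ {
		t.Signal() // e.g. five informer events arriving in a burst
	}
	fmt.Println(t.drain()) // the burst collapses into a single pending rebuild
}
```

With this design, informer callbacks stay cheap and a burst of add, update, and delete events triggers one rebuild instead of five.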

The interceptor runs four concurrent goroutines: the controller-runtime cache, the routing table update loop, an admin server on a separate port exposing queue depth metrics, and the proxy server that handles user traffic. It supports TLS, OpenTelemetry tracing, and Prometheus metrics export.

Scaler

The Scaler implements KEDA's external scaler gRPC interface. It periodically pings the interceptor's admin endpoint for the current pending request count, then reports that metric to KEDA:

    // scaler/main.go: queue pinger initialization
    pinger := newQueuePinger(
        ctrl.Log, k8s.EndpointsFuncForControllerClient(ctrlCache),
        namespace, svcName, deplName, targetPortStr,
    )

The gRPC implementation includes StreamIsActive for push-based activation updates. The operator creates a ScaledObject with trigger type external-push, which means KEDA receives scaling signals from the scaler's stream rather than polling on an interval. Health checks propagate the pinger's status: if the time since the last successful ping exceeds twice the tick duration, the scaler reports NOT_SERVING, which prevents KEDA from making scaling decisions on stale data.
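The staleness rule can be written as a single comparison. The sketch below assumes a hypothetical healthy helper and an illustrative 500ms tick; the real scaler's tick value and function names are not shown in this article.

```go
package main

import (
	"fmt"
	"time"
)

// healthy reports whether the last successful ping is recent enough,
// using the "twice the tick duration" rule described above.
func healthy(sinceLastPing, tick time.Duration) bool {
	return sinceLastPing <= 2*tick
}

func main() {
	tick := 500 * time.Millisecond // hypothetical tick interval

	fmt.Println(healthy(800*time.Millisecond, tick)) // within two ticks: serving
	fmt.Println(healthy(3*time.Second, tick))        // stale: would report NOT_SERVING
}
```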

The communication path: interceptor (HTTP admin endpoint) → scaler (gRPC) → KEDA (HPA) → Kubernetes (scale the Deployment).

The HTTPScaledObject CRD

The HTTPScaledObject is the only resource you create. The operator handles everything else.

    # httpscaledobject.yaml
    kind: HTTPScaledObject
    apiVersion: http.keda.sh/v1alpha1
    metadata:
      name: api-service
    spec:
      hosts:
        - api.example.com
      pathPrefixes:
        - /v1
      scaleTargetRef:
        name: api-service
        kind: Deployment
        apiVersion: apps/v1
        service: api-service
        port: 8080
      replicas:
        min: 0
        max: 50
      scalingMetric:
        requestRate:
          targetValue: 100
          granularity: 1s
          window: 1m
      scaledownPeriod: 300

Once you apply this resource, the operator creates a ScaledObject and wires the routing automatically:

    $ kubectl get httpso
    NAME          TARGETWORKLOAD           TARGETSERVICE   MINREPLICAS   MAXREPLICAS   AGE   READY
    api-service   deployment/api-service   api-service     0             50            2m    True

    $ kubectl get scaledobject
    NAME              SCALETARGETKIND      SCALETARGETNAME   MIN   MAX   TRIGGERS        READY   ACTIVE
    api-service-app   apps/v1.Deployment   api-service       0     50    external-push   True    True

The ScaledObject with trigger type external-push is managed entirely by the operator. You do not create it yourself.

Scaling Metrics

Two metrics are available, and they are mutually exclusive.

Request rate scales on the average requests per second per replica over a time window. The HPA algorithm uses the formula desiredReplicas = ceil[currentReplicas * (currentRate / targetValue)], where currentRate is the measured rate per replica. If your target is 100 requests per second and the interceptor measures 300 requests per second in total against a single replica, KEDA scales to three replicas.

    # Request rate configuration
    scalingMetric:
      requestRate:
        targetValue: 100   # requests per second per replica
        granularity: 1s    # measurement resolution (default 1s)
        window: 1m         # aggregation window (default 1m)
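As a worked example of the replica formula for request rate, the sketch below applies it to a few inputs. The function name desiredReplicas is hypothetical, and the sketch leaves out details of the real HPA such as its tolerance band and the clamping to minimum and maximum replicas.

```go
package main

import (
	"fmt"
	"math"
)

// desiredReplicas applies ceil(currentReplicas * perReplicaRate / targetValue).
// perReplicaRate is the average request rate per current replica.
func desiredReplicas(currentReplicas int, perReplicaRate, targetValue float64) int {
	return int(math.Ceil(float64(currentReplicas) * perReplicaRate / targetValue))
}

func main() {
	// 300 req/s hitting a single replica, target 100 req/s per replica.
	fmt.Println(desiredReplicas(1, 300, 100))
	// The same 300 req/s spread over two replicas is 150 req/s each.
	fmt.Println(desiredReplicas(2, 150, 100))
	// 250 req/s on one replica rounds up rather than down.
	fmt.Println(desiredReplicas(1, 250, 100))
}
```

All three calls yield 3: the total load divided by the per-replica target, rounded up.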

Concurrency scales based on the number of in-flight requests per replica. This works better for long-lived connections, streaming responses, or WebSocket traffic where requests per second is not the right metric.

    # Concurrency configuration
    scalingMetric:
      concurrency:
        targetValue: 50   # concurrent requests per replica

If you omit scalingMetric entirely, the add-on defaults to concurrency with a target of 100.

Routing

The hosts field supports exact matches and wildcards (*.example.com). Exact matches take precedence. An empty host or * acts as a catch-all. The pathPrefixes field narrows which request paths count toward scaling. You can also match on custom headers for more granular routing.
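The precedence rules can be illustrated with a small matcher. This is a sketch under assumptions, not the add-on's routing code: matchHost is a hypothetical name, and preferring the longest wildcard when several match is a choice made here for determinism.

```go
package main

import (
	"fmt"
	"strings"
)

// matchHost picks the pattern that should handle host, trying an exact
// match first, then the longest wildcard, then a catch-all ("" or "*").
func matchHost(host string, patterns []string) (string, bool) {
	wildcard := ""
	catchAll, haveCatchAll := "", false
	for _, p := range patterns {
		switch {
		case p == "" || p == "*":
			catchAll, haveCatchAll = p, true
		case p == host:
			return p, true // exact match wins immediately
		case strings.HasPrefix(p, "*.") && strings.HasSuffix(host, p[1:]):
			if len(p) > len(wildcard) {
				wildcard = p
			}
		}
	}
	if wildcard != "" {
		return wildcard, true
	}
	return catchAll, haveCatchAll
}

func main() {
	patterns := []string{"*", "*.example.com", "api.example.com"}
	for _, host := range []string{"api.example.com", "web.example.com", "other.org"} {
		p, _ := matchHost(host, patterns)
		fmt.Printf("%s -> %s\n", host, p)
	}
}
```

Here api.example.com resolves to its exact entry, web.example.com falls through to the wildcard, and other.org lands on the catch-all.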

Combining with Other Scalers

If you need HTTP scaling alongside other triggers (scaling on both request rate and CPU, for example), set the httpscaledobject.keda.sh/skip-scaledobject-creation annotation to "true". The operator skips creating its managed ScaledObject, and you create your own with an external-push trigger pointing to keda-add-ons-http-external-scaler.keda:9090 plus whatever additional triggers you need. See the HTTPScaledObject reference for the required metadata fields.

Scale-to-Zero Flow

Scale-to-zero is the HTTP Add-on's distinguishing feature. Here is the sequence when a request arrives and the Deployment has zero replicas:

- Traffic arrives at the ingress controller, which routes to keda-add-ons-http-interceptor-proxy.
- The interceptor receives the request, finds no healthy backend pods, and queues the request.
- The scaler pings the interceptor, sees pending > 0, and reports the metric to KEDA.
- KEDA activates the Deployment, scaling from 0 to the configured replicas.min value.
- The pod starts, passes readiness probes, and the interceptor detects a healthy endpoint.
- The interceptor forwards the queued request to the now-ready pod.
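The "queue, wait for readiness, then forward" part of this sequence can be sketched as a wait with a deadline. The code below is an illustration only: waitForBackend and errColdStartTimeout are hypothetical names, the ready channel stands in for whatever mechanism detects a healthy endpoint, and the 50ms sleep is a placeholder for real scheduling and startup time.

```go
package main

import (
	"errors"
	"fmt"
	"time"
)

var errColdStartTimeout = errors.New("backend not ready before timeout")

// waitForBackend holds the caller until ready is closed or timeout elapses.
func waitForBackend(ready <-chan struct{}, timeout time.Duration) error {
	timer := time.NewTimer(timeout)
	defer timer.Stop()
	select {
	case <-ready:
		return nil
	case <-timer.C:
		return errColdStartTimeout
	}
}

func main() {
	ready := make(chan struct{})
	go func() {
		time.Sleep(50 * time.Millisecond) // stand-in for scheduling, startup, readiness
		close(ready)
	}()

	if err := waitForBackend(ready, time.Second); err != nil {
		fmt.Println("give up on the queued request:", err)
		return
	}
	fmt.Println("forward queued request to backend")
}
```

The request holds its connection open for the whole wait, so the timeout effectively sets the worst-case latency a caller sees during a cold start.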

Cold start latency is the sum of: interceptor queue hold time + pod scheduling + container image pull + container startup + readiness probe success. For a small Go binary with a 1-second readiness probe, expect 3-5 seconds. For a JVM application pulling a 500MB image, expect 30+ seconds.

Reducing Cold Start Impact

Three levers reduce cold start pain:

- Fast containers. Use small images, avoid JVM startup overhead, and set aggressive readiness probes (initialDelaySeconds: 1, periodSeconds: 1). A Go binary in a distroless image can pass readiness in under 2 seconds.
- Failover service. The coldStartTimeoutFailoverRef field routes requests to a fallback service if the target does not become ready within timeoutSeconds (default 30). Use this to return a cached response or status page while the primary service starts.

      # HTTPScaledObject spec: cold start failover
      coldStartTimeoutFailoverRef:
        service: api-fallback
        port: 8080
        timeoutSeconds: 10

- Run multiple interceptor replicas. Set interceptor.replicas=3 in the Helm values. The interceptor itself must stay available to queue requests during scale-from-zero events.

The Prometheus Alternative

If you already have Prometheus collecting HTTP metrics from your ingress controller or application, you can skip the HTTP Add-on entirely and use KEDA's Prometheus scaler.

NGINX Ingress, for example, exports nginx_ingress_controller_requests out of the box when metrics are enabled. A KEDA ScaledObject with a Prometheus trigger can scale your Deployment based on request rate without any interceptor:

    # scaledobject-prometheus.yaml
    apiVersion: keda.sh/v1alpha1
    kind: ScaledObject
    metadata:
      name: api-service-scaler
    spec:
      scaleTargetRef:
        kind: Deployment
        name: api-service
      minReplicaCount: 1
      maxReplicaCount: 50
      triggers:
        - type: prometheus
          metadata:
            serverAddress: http://prometheus.monitoring:9090
            query: |
              sum(rate(
                nginx_ingress_controller_requests{
                  service="api-service"
                }[2m]
              ))
            threshold: "100"

No interceptor. No proxy hop. No routing changes. The ingress routes directly to your application service, and KEDA queries Prometheus for the request rate. Production queries are rarely this simple: you typically need to exclude error status codes, handle absent() for newly deployed services, and tune the rate window to match your scaling responsiveness. But the operational overhead is still lower than running an interceptor proxy.

The trade-off is straightforward: no scale-to-zero. The Prometheus scaler requires minReplicaCount: 1 because there is no interceptor to queue requests while pods start. If the Deployment is at zero replicas, no requests reach the application, no metrics are generated, and KEDA has nothing to scale on.

Decision Matrix

| Criteria | HTTP Add-on | Prometheus Scaler |
|---|---|---|
| Scale-to-zero | Yes | No (min 1 replica) |
| Proxy overhead | Yes (interceptor in request path) | None |
| Production readiness | Beta, community-maintained | Stable, core KEDA |
| Setup complexity | Helm install + HTTPScaledObject | Prometheus + ScaledObject + PromQL |
| Latency impact | Added proxy hop + cold start | None beyond existing infrastructure |
| Ingress changes | Must route through interceptor | No changes required |

Use the HTTP Add-on for dev/staging environments, cost-sensitive internal APIs with bursty traffic that tolerate cold starts, or any workload where the cost of running a minimum replica exceeds the cost of cold start latency.

Use the Prometheus scaler for production web services where latency matters, when Prometheus is already collecting HTTP metrics, or when you need the stability of a core KEDA scaler without additional infrastructure in the request path.

Gotchas

Beta means beta. The HTTP Add-on README states: "We can't yet recommend it for production usage because we are still developing and testing it." It is community-maintained, not owned by the KEDA core team. Kedify offers a production-grade commercial alternative built on the same concepts but with Envoy-based interceptors, gRPC/WebSocket support, and fallback mechanisms.

Request rate data lives in memory. The interceptor stores request count data in-memory for the configured window. Long windows (greater than 5 minutes) or fine granularity (less than 1 second) increase memory consumption. Changing the window or granularity on a running HTTPScaledObject replaces all stored data, causing temporary scaling inaccuracy until the new window fills.
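To get a feel for how window and granularity interact, the sketch below estimates the number of counters per route. It assumes the window is stored as one bucket per granularity step, which is an assumption made for this illustration and not a documented detail of the interceptor; bucketCount is a hypothetical helper.

```go
package main

import (
	"fmt"
	"time"
)

// bucketCount estimates how many counters one route needs if the window is
// stored as one bucket per granularity step (an assumption of this sketch).
func bucketCount(window, granularity time.Duration) int {
	if granularity <= 0 {
		return 0
	}
	return int((window + granularity - 1) / granularity) // round up
}

func main() {
	fmt.Println(bucketCount(time.Minute, time.Second))            // defaults: 1m / 1s
	fmt.Println(bucketCount(5*time.Minute, time.Second))          // longer window
	fmt.Println(bucketCount(5*time.Minute, 100*time.Millisecond)) // longer and finer
}
```

Under that assumption the defaults need 60 buckets per route, while a 5-minute window at 100ms granularity needs 3000, a 50-fold increase that is multiplied again by the number of routes.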

The interceptor is in the request path. Every HTTP request routed through the add-on passes through the interceptor proxy. If the interceptor goes down, traffic stops. Run multiple interceptor replicas (interceptor.replicas=3 in the Helm values) and monitor interceptor pod health closely.

Ingress misconfiguration silently breaks scaling. Your ingress must route to keda-add-ons-http-interceptor-proxy, not directly to the application service. If traffic bypasses the interceptor, the scaler reports zero pending requests, and KEDA may scale the Deployment to zero even while users are sending requests.

scaledownPeriod is measured by the scaler, not real traffic. The scaler checks pending request counts periodically. Workloads with sparse, random traffic can unexpectedly scale to zero between bursts. Increase scaledownPeriod (default 300 seconds) for services with long gaps between requests.

The HPA scales between 1 and max. KEDA handles 0 ↔ 1. This is a common source of confusion. KEDA activates the Deployment (0 → 1 or more), and the HPA handles scaling between replicas.min (when > 0) and replicas.max. The two systems work together but have different responsibilities.
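The split of responsibilities can be modeled as a small decision function. This is a deliberately simplified sketch: nextReplicas is a hypothetical name, the active flag stands in for the scaler's activation signal, and real KEDA waits for the cooldown period before scaling to zero.

```go
package main

import "fmt"

// nextReplicas models who decides the next replica count: KEDA owns the
// transitions to and from zero, the HPA owns everything from one upward.
func nextReplicas(current int, active bool, hpaDesired, min, max int) (int, string) {
	floor := min
	if floor < 1 {
		floor = 1 // the HPA never goes below one replica
	}
	switch {
	case !active && min == 0:
		return 0, "KEDA" // deactivation (after the cooldown in real KEDA)
	case current == 0:
		return floor, "KEDA" // activation from zero
	case hpaDesired < floor:
		return floor, "HPA"
	case hpaDesired > max:
		return max, "HPA"
	default:
		return hpaDesired, "HPA"
	}
}

func main() {
	fmt.Println(nextReplicas(0, true, 0, 0, 50))  // traffic arrives at zero replicas
	fmt.Println(nextReplicas(1, true, 7, 0, 50))  // load grows while running
	fmt.Println(nextReplicas(3, false, 1, 0, 50)) // traffic stops
}
```

The three calls return (1, KEDA), (7, HPA), and (0, KEDA): activation and deactivation belong to KEDA, and everything in between belongs to the HPA.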

Wrap-up

The HTTP Add-on is the only way to get true scale-to-zero for HTTP workloads with KEDA. Whether that capability justifies the interceptor overhead depends on your environment.

Start by checking what metrics you already have. If Prometheus is collecting nginx_ingress_controller_requests or your application exports its own HTTP metrics, try the Prometheus scaler first. It requires zero new infrastructure in the request path. If you genuinely need scale-to-zero for cost savings or bursty internal workloads, deploy the HTTP Add-on in staging, measure the cold start latency with your actual containers, and decide whether the trade-off works for your SLOs. The add-on is actively evolving. Watch the roadmap for production readiness milestones.

This post is part of the KEDA — Event-Driven Autoscaling from Zero to Production collection (4 of 7)
