Batch Processing with KEDA ScaledJobs

ScaledObject works well for long-running services. Point it at a Deployment, give it a trigger, and KEDA adjusts replicas as demand shifts. But when your workload needs to process one event and exit, Deployments are the wrong abstraction. A pod that pulls a message, runs an ETL job for three hours, and terminates cleanly is a Kubernetes Job, not a Deployment replica.

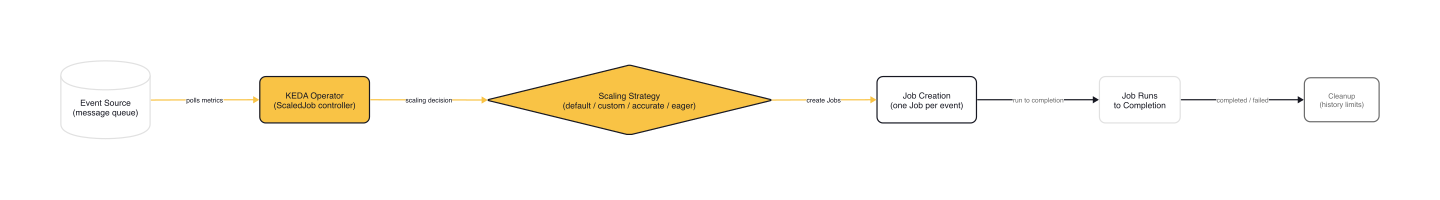

KEDA's ScaledJob bridges event sources to Kubernetes Jobs. No events in the queue means no Jobs running. A message arrives and KEDA creates a Job. The Job pulls that single message, processes it to completion, and terminates on its own. KEDA never kills a running Job to scale down, which is the critical difference from ScaledObject's scale-down behavior.

This article covers the ScaledJob spec, the four scaling strategies that control how many Jobs KEDA creates per polling cycle, rollout policies for safe updates, and a real-world pattern from LeanIX's integration platform that pushed ScaledJob beyond the typical one-job-per-message model.

ScaledJob vs ScaledObject

ScaledObject scales Deployments. Pods are long-lived and process multiple events. ScaledJob creates Kubernetes Jobs that run to completion. KEDA does not terminate Jobs during scale-down; the only thing that stops a running Job is the Job itself finishing, hitting activeDeadlineSeconds, or exceeding backoffLimit.

When to use ScaledJob:

- Event isolation: each message gets its own Job with its own failure domain. One poisoned message crashes one Job, not your entire consumer pool.

- Long execution time: Jobs that run for minutes or hours without interruption risk. An ETL batch that takes 90 minutes cannot tolerate being killed at the 60-minute mark because demand dropped.

- Clean termination: the Job processes exactly one event, writes results, and exits. No shutdown hooks, no draining, no graceful termination period.

When ScaledObject is better: high-throughput, low-latency workloads where Job startup overhead (pulling images, initializing containers) adds unacceptable latency. If your consumer processes 500 messages per second, you want a Deployment with persistent pods, not 500 Jobs per second.

Anatomy of a ScaledJob

Here is the canonical ScaledJob configuration from the KEDA docs, processing messages from a RabbitMQ queue:

```yaml
# ScaledJob: KEDA docs example (keda.sh/docs/2.19/concepts/scaling-jobs)
apiVersion: keda.sh/v1alpha1
kind: ScaledJob
metadata:
  name: rabbitmq-consumer
  namespace: default
spec:
  jobTargetRef:
    template:
      spec:
        containers:
        - name: demo-rabbitmq-client
          image: demo-rabbitmq-client:1
          imagePullPolicy: Always
          command: ["receive", "amqp://user:PASSWORD@rabbitmq.default.svc.cluster.local:5672"]
          envFrom:
          - secretRef:
              name: rabbitmq-consumer-secrets
        restartPolicy: Never
    backoffLimit: 4
  pollingInterval: 10
  maxReplicaCount: 30
  successfulJobsHistoryLimit: 3
  failedJobsHistoryLimit: 2
  scalingStrategy:
    strategy: "custom"
    customScalingQueueLengthDeduction: 1
    customScalingRunningJobPercentage: "0.5"
  triggers:
  - type: rabbitmq
    metadata:
      queueName: hello
      host: RabbitMqHost
      queueLength: '5'
```

The jobTargetRef is a standard batch/v1 JobSpec. Anything you can put in a Job template works here: parallelism, completions, activeDeadlineSeconds, backoffLimit, volumes, init containers.

Key fields on the ScaledJob spec itself:

| Field | Default | Purpose |
|---|---|---|
| pollingInterval | 30s | How often KEDA checks the event source |
| minReplicaCount | 0 | Minimum Jobs kept running (0 = scale to zero) |
| maxReplicaCount | 100 | Cap on Jobs created per polling cycle |
| successfulJobsHistoryLimit | 100 | Completed Job objects to retain |
| failedJobsHistoryLimit | 100 | Failed Job objects to retain |

These defaults come directly from the CRD source:

```go
// scaledjob_types.go (kedacore/keda, line 24-27)
const (
	defaultScaledJobMaxReplicaCount = 100
	defaultScaledJobMinReplicaCount = 0
)
```

Setting minReplicaCount above zero keeps warm Jobs running permanently. That eliminates cold start latency at the cost of continuous resource consumption.

Scaling Strategies

KEDA offers four strategies that determine how many Jobs to create per polling cycle. The base formula is:

maxScale = min(maxReplicaCount, ceil(queueLength / targetAverageValue))

From there, each strategy applies its own logic to produce an effectiveMaxScale, the actual number of Jobs KEDA will create.

One subtlety: getScalingDecision in scale_jobs.go subtracts minReplicaCount from runningJobCount before passing it to the strategy. When minReplicaCount is 0 (the default), this has no effect. If you set minReplicaCount: 2, the strategies see runningJobCount - 2, counting only the Jobs above the minimum floor. The comparison table below assumes minReplicaCount: 0.
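The base formula and the minReplicaCount adjustment can be sketched in a few lines. This is a simplified illustration, not KEDA's code: `maxScale`, `runningAboveFloor`, and `minInt64` are hypothetical helper names, the inputs are integers for readability, and the sketch assumes the running count is at least minReplicaCount.

```go
package main

import "fmt"

// minInt64 avoids relying on the Go 1.21 built-in min.
func minInt64(a, b int64) int64 {
	if a < b {
		return a
	}
	return b
}

// maxScale applies the base formula with integer inputs:
// min(maxReplicaCount, ceil(queueLength / target)). target must be positive.
func maxScale(queueLength, target, maxReplicaCount int64) int64 {
	needed := (queueLength + target - 1) / target // integer ceiling
	return minInt64(maxReplicaCount, needed)
}

// runningAboveFloor is the running count a strategy receives once the
// minReplicaCount floor has been subtracted. Assumes running >= minReplicaCount.
func runningAboveFloor(running, minReplicaCount int64) int64 {
	return running - minReplicaCount
}

func main() {
	fmt.Println(maxScale(10, 1, 30))     // 10 messages, one per Job: 10
	fmt.Println(maxScale(12, 5, 30))     // ceil(12/5): 3
	fmt.Println(maxScale(1000, 1, 30))   // capped at maxReplicaCount: 30
	fmt.Println(runningAboveFloor(5, 2)) // strategies see 3, not 5
}
```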

default

The simplest approach: subtract running Jobs from the target.

```go
// scale_jobs.go: defaultScalingStrategy.GetEffectiveMaxScale (kedacore/keda)
func (s defaultScalingStrategy) GetEffectiveMaxScale(
	maxScale, runningJobCount, _, _, scaleTo int64,
) (int64, int64) {
	return maxScale - runningJobCount, scaleTo
}
```

If the queue has 10 messages, target value is 1, and 3 Jobs are running, KEDA creates 7 new Jobs (capped at maxReplicaCount).

custom

Adds two tuning knobs: customScalingQueueLengthDeduction subtracts a fixed number from the queue length before calculating, and customScalingRunningJobPercentage controls how much weight running Jobs carry.

```go
// scale_jobs.go: customScalingStrategy.GetEffectiveMaxScale (kedacore/keda)
func (s customScalingStrategy) GetEffectiveMaxScale(
	maxScale, runningJobCount, _, maxReplicaCount, scaleTo int64,
) (int64, int64) {
	return min(maxScale-int64(*s.CustomScalingQueueLengthDeduction)-
		int64(float64(runningJobCount)*(*s.CustomScalingRunningJobPercentage)),
		maxReplicaCount), scaleTo
}
```

Setting customScalingRunningJobPercentage: "0.5" means only 50% of running Jobs count toward the deduction. This makes KEDA more aggressive about creating new Jobs when existing ones are slow.
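To see how the percentage changes the outcome, the sketch below evaluates the custom formula for three values of customScalingRunningJobPercentage. `customEffective` is a hypothetical standalone function that restates the arithmetic above with plain arguments. The inputs (maxScale 10, 3 running Jobs, deduction 1, maxReplicaCount 15) are illustrative.

```go
package main

import "fmt"

// customEffective restates the custom strategy arithmetic with plain arguments:
// min(maxScale - deduction - int(running * pct), maxReplicaCount).
func customEffective(maxScale, running, deduction int64, pct float64, maxReplicaCount int64) int64 {
	v := maxScale - deduction - int64(float64(running)*pct) // the conversion truncates toward zero
	if v > maxReplicaCount {
		return maxReplicaCount
	}
	return v
}

func main() {
	for _, pct := range []float64{0, 0.5, 1} {
		fmt.Printf("pct=%.1f -> %d\n", pct, customEffective(10, 3, 1, pct, 15))
	}
	// pct=0.0 -> 9
	// pct=0.5 -> 8
	// pct=1.0 -> 6
}
```

At 0, running Jobs are ignored entirely. At 1, the result matches the default strategy minus the fixed deduction.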

accurate

Factors in pending Jobs (created but not yet running). Recommended for queues where messages are deleted on consume, like Azure Storage Queue, where the queue length already excludes in-flight messages.

```go
// scale_jobs.go: accurateScalingStrategy.GetEffectiveMaxScale (kedacore/keda)
func (s accurateScalingStrategy) GetEffectiveMaxScale(
	maxScale, runningJobCount, pendingJobCount, maxReplicaCount, scaleTo int64,
) (int64, int64) {
	if (maxScale + runningJobCount - pendingJobCount) > maxReplicaCount {
		return maxReplicaCount - runningJobCount, scaleTo
	}
	return maxScale - pendingJobCount, scaleTo
}
```

eager

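Uses every free slot up to maxReplicaCount. Running and pending Jobs are subtracted from maxReplicaCount rather than from the queue-derived target, so waiting messages get Jobs as long as slots remain. Designed for long-running Jobs where messages accumulate faster than Jobs complete.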

```go
// scale_jobs.go: eagerScalingStrategy.GetEffectiveMaxScale (kedacore/keda)
func (s eagerScalingStrategy) GetEffectiveMaxScale(
	maxScale, runningJobCount, pendingJobCount, maxReplicaCount, _ int64,
) (int64, int64) {
	return min(maxReplicaCount-runningJobCount-pendingJobCount, maxScale),
		maxReplicaCount
}
```

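Consider this scenario: maxReplicaCount: 10, target value 1, 3 Jobs running, and 3 new messages arrive. If the scaler still counts the 3 in-flight messages (queue length 6), default creates 6 - 3 = 3 Jobs, one per new message, while eager creates min(10 - 3, 6) = 6, bounded by the 7 free slots. That is more concurrent Jobs than queue messages. If the scaler reports only the 3 waiting messages, default creates 3 - 3 = 0 Jobs and leaves them queued until a running Job finishes, while eager creates min(10 - 3, 3) = 3.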

Side-by-side comparison

Given: queue length = 10, target value = 1, running Jobs = 3, pending Jobs = 1, maxReplicaCount = 15.

| Strategy | effectiveMaxScale | Jobs created | Logic |
|---|---|---|---|
| default | 7 | 7 | 10 - 3 |
| custom (deduction=1, pct=0.5) | 8 | 8 | min(10 - 1 - int(3*0.5), 15) = min(8, 15) |
| accurate | 9 | 9 | 10 - 1 (pending) |
| eager | 10 | 10 | min(15 - 3 - 1, 10) = min(11, 10) |
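The effectiveMaxScale column can be checked by running the four formulas side by side. The sketch below restates them as free functions over a hypothetical `inputs` struct. It drops the second return value (scaleTo) that the KEDA methods also return, and the names are illustrative rather than KEDA's.

```go
package main

import "fmt"

// inputs bundles the values every strategy receives.
type inputs struct {
	maxScale, running, pending, maxReplicaCount int64
}

func minInt64(a, b int64) int64 {
	if a < b {
		return a
	}
	return b
}

// defaultStrategy: target minus running Jobs.
func defaultStrategy(in inputs) int64 {
	return in.maxScale - in.running
}

// customStrategy: fixed deduction plus a weighted share of running Jobs.
func customStrategy(in inputs, deduction int64, pct float64) int64 {
	return minInt64(in.maxScale-deduction-int64(float64(in.running)*pct), in.maxReplicaCount)
}

// accurateStrategy: deducts pending Jobs, or falls back to the free slots
// when the target would push past maxReplicaCount.
func accurateStrategy(in inputs) int64 {
	if in.maxScale+in.running-in.pending > in.maxReplicaCount {
		return in.maxReplicaCount - in.running
	}
	return in.maxScale - in.pending
}

// eagerStrategy: free slots, bounded by the queue-derived target.
func eagerStrategy(in inputs) int64 {
	return minInt64(in.maxReplicaCount-in.running-in.pending, in.maxScale)
}

func main() {
	// Queue length 10, target 1 => maxScale = min(15, 10) = 10.
	in := inputs{maxScale: 10, running: 3, pending: 1, maxReplicaCount: 15}
	fmt.Println("default ", defaultStrategy(in))        // 7
	fmt.Println("custom  ", customStrategy(in, 1, 0.5)) // 8
	fmt.Println("accurate", accurateStrategy(in))       // 9
	fmt.Println("eager   ", eagerStrategy(in))          // 10
}
```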

When multiple scalers are attached to a single ScaledJob, the multipleScalersCalculation field (default: max) determines how to aggregate their metrics. Options: max, min, avg, sum.
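As a rough illustration of the four options, the sketch below combines per-scaler queue lengths into one number. It is a simplification under stated assumptions: `aggregate` is a hypothetical function, it looks at queue lengths only, and rejecting unknown modes is this sketch's choice rather than documented KEDA behavior.

```go
package main

import (
	"fmt"
	"math"
)

// aggregate combines per-scaler queue lengths according to mode.
// An empty mode is treated as "max", matching the documented default.
func aggregate(mode string, queueLengths []float64) (float64, error) {
	if len(queueLengths) == 0 {
		return 0, nil
	}
	total, lowest, highest := 0.0, queueLengths[0], queueLengths[0]
	for _, q := range queueLengths {
		total += q
		lowest = math.Min(lowest, q)
		highest = math.Max(highest, q)
	}
	switch mode {
	case "", "max":
		return highest, nil
	case "min":
		return lowest, nil
	case "avg":
		return total / float64(len(queueLengths)), nil
	case "sum":
		return total, nil
	default:
		return 0, fmt.Errorf("unknown multipleScalersCalculation %q", mode)
	}
}

func main() {
	queues := []float64{4, 10, 7} // three hypothetical scalers
	for _, mode := range []string{"max", "min", "avg", "sum"} {
		v, _ := aggregate(mode, queues)
		fmt.Println(mode, v)
	}
}
```

With these inputs, max yields 10, min 4, avg 7, and sum 21. The choice matters most when triggers watch unrelated queues, where sum reflects total backlog and max reflects only the busiest source.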

Rollout and Lifecycle Management

Rollout strategy

When you update a ScaledJob manifest (new image tag, changed environment variables), KEDA needs to decide what happens to running Jobs.

```yaml
# ScaledJob rollout configuration
spec:
  rollout:
    strategy: gradual              # or "default"
    propagationPolicy: foreground  # or "background"
```

The CRD source defines the enum as gradual and immediate, though the KEDA docs refer to the non-gradual option as default. In practice, omitting the field or using any value other than gradual gives you the terminate-and-recreate behavior: KEDA kills existing Jobs and recreates them with the new spec. For short-lived Jobs this is fine. For a 3-hour ETL job at the 2-hour mark, it is catastrophic.

With strategy: gradual, KEDA leaves running Jobs alone. Only new Jobs use the updated spec. Running Jobs complete with the old configuration, and the transition happens naturally as old Jobs finish and new ones start.

Warning: Always use rollout.strategy: gradual for long-running batch workloads. The default strategy kills running Jobs on ScaledJob manifest updates.

Pausing autoscaling

Add the autoscaling.keda.sh/paused: true annotation to stop KEDA from creating new Jobs without touching running ones. Useful during maintenance windows or incident response.

```yaml
metadata:
  annotations:
    autoscaling.keda.sh/paused: "true"
```

Remove the annotation or set it to "false" to resume. Existing Jobs are unaffected in both directions.

Job cleanup

KEDA's executor handles cleanup internally. Every polling interval, it lists all Jobs owned by the ScaledJob, sorts completed and failed Jobs by completion time, and deletes the oldest entries beyond the history limits. With the defaults of 100 each, that is 200 Job objects lingering in your namespace before cleanup kicks in.

Safety net: activeDeadlineSeconds

Set activeDeadlineSeconds on the jobTargetRef to cap how long any single Job can run. Kubernetes terminates the Job after this duration, regardless of whether the work is finished. This catches runaway processes, deadlocked consumers, and infinite loops.

```yaml
spec:
  jobTargetRef:
    activeDeadlineSeconds: 3600  # 1 hour hard limit
    backoffLimit: 2
    template:
      spec:
        containers:
        - name: worker
          image: batch-processor:v2
        restartPolicy: Never
```

Real-World Pattern: LeanIX Integration Platform

The canonical ScaledJob pattern is one Job per message. LeanIX's integration platform pushed the model further.

Their problem: integration Jobs ranged from 5 seconds to 6 hours depending on customer data volume. The legacy system ran one microservice with a hard limit of 6 parallel jobs per region. At peak times, jobs queued up and customers waited.

Their solution split one codebase into three roles deployed separately on Kubernetes:

- service: REST API, receives webhook callbacks, creates new jobs

- singleton: computes the desired worker count and writes it to a desired_pod_count PostgreSQL table

- worker: polls for queued jobs, processes them, and self-terminates

The KEDA ScaledJob uses a PostgreSQL trigger to poll the desired_pod_count table:

```yaml
# LeanIX integration ScaledJob (engineering.leanix.net)
apiVersion: keda.sh/v1alpha1
kind: ScaledJob
metadata:
  name: integration-servicenow-worker
spec:
  jobTargetRef:
    activeDeadlineSeconds: 32400  # 9 hours
    template:
      # ... worker container template
  pollingInterval: 15
  successfulJobsHistoryLimit: 5
  failedJobsHistoryLimit: 5
  maxReplicaCount: 10
  rolloutStrategy: gradual
  scalingStrategy:
    strategy: default
  triggers:
  - type: postgresql
    metadata:
      host: db.example.com
      port: "5432"
      dbName: integration
      userName: keda
      sslmode: require
      passwordFromEnv: POSTGRES_PASSWORD
      query: "SELECT count FROM desired_pod_count"
      targetQueryValue: '1'
```

The key insight is that workers manage their own lifecycle through a state machine: INACTIVE on startup, ACTIVE for 30 minutes (during which they claim queued jobs), RETIRED (finish current work, accept no new claims), TERMINATING, and TERMINATED. The worker shuts itself down. Kubernetes only intervenes if activeDeadlineSeconds (9 hours) is hit.

Combined with rolloutStrategy: gradual (the older top-level spelling of rollout.strategy), new deployments never kill workers mid-job. Old workers finish their current batch and exit naturally. New workers start with the updated image.

The result: 1-2 workers overnight, scaling to 10 at 5am when cron-triggered synchronizations flood the queue.

Gotchas

History limits default to 100 each. That is up to 200 finished Job objects (100 successful, 100 failed) accumulating in your namespace before cleanup runs. In production, set successfulJobsHistoryLimit and failedJobsHistoryLimit to 5-10.

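maxReplicaCount is applied per polling cycle. It enters the formula on every poll, and what it bounds depends on the strategy. With default, accurate, and eager, running Jobs are deducted first, so a 1000-message backlog at pollingInterval: 10 fills up to maxReplicaCount and then refills slots only as Jobs finish. With custom and customScalingRunningJobPercentage below 1, only part of the running count is deducted, so successive polls can push concurrency past maxReplicaCount. Monitor running Job count to catch runaway scaling.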

No cooldownPeriod. ScaledJob has no equivalent of ScaledObject's cooldownPeriod. Once the event source is empty, KEDA stops creating Jobs, but existing Jobs run to completion on their own timeline.

minReplicaCount > 0 burns resources continuously. Warm Jobs reduce cold start latency but never exit until they hit activeDeadlineSeconds or process an event. Only use this when startup latency is genuinely unacceptable.

The eager strategy can overshoot. It fills all available slots immediately without waiting for running Jobs to finish. If your Jobs are slow and messages arrive in bursts, you will have more concurrent Jobs than queue messages.

Default rollout strategy kills running Jobs. Always use rollout.strategy: gradual for batch workloads where Jobs run longer than a few seconds.

Wrap-up

ScaledJob handles the batch processing half of KEDA's scaling model. One Job per event, four scaling strategies to tune throughput, and rollout policies that protect running workloads from deployment disruptions.

Start with the default scaling strategy and gradual rollout. Lower history limits to single digits. Set activeDeadlineSeconds as a safety net. Monitor running Job count through kubectl get jobs or the KEDA operator metrics to catch runaway batches early.

If your batch Jobs need GPU resources or specialized node types, pair ScaledJob with a node autoscaler so compute capacity appears on demand. The same applies to burst workloads that exceed current cluster capacity: KEDA handles the pod-level scaling decision, and the node autoscaler ensures the infrastructure exists to run them.

This post is part of the KEDA — Event-Driven Autoscaling from Zero to Production collection (5 of 7)
