Message Queue Scaling with KEDA — Kafka and SQS

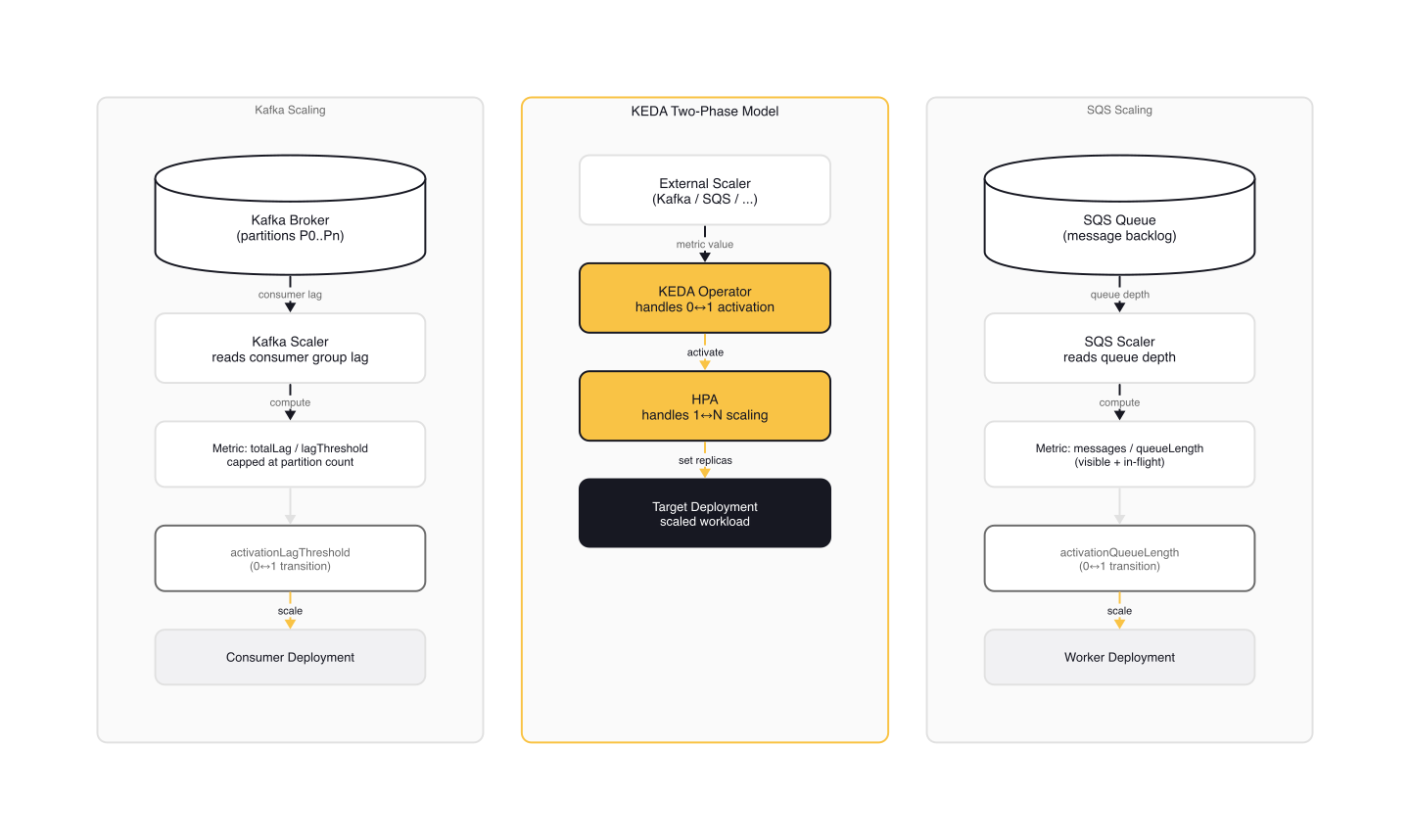

Your Kafka consumer group lag is climbing. Messages pile up in your SQS dead-letter queue. HPA can't see either signal because it only knows about CPU and memory. KEDA fixes this by exposing Kafka consumer lag and SQS queue depth as HPA metrics, but the two scalers work differently under the hood. The Kafka scaler tracks consumer group lag across partitions. The SQS scaler counts approximate messages in the queue. Both feed into the same two-phase model (KEDA operator handles 0↔1, HPA handles 1↔N), but tuning them requires understanding what each scaler actually measures and how the numbers translate to replica counts.

What follows: how KEDA calculates lag and queue depth from the source code, the parameters that control scaling behavior, TriggerAuthentication patterns for secure connections, and the edge cases that catch teams in production.

The Kafka Scaler: Consumer Lag as a Scaling Signal

The Kafka scaler uses consumer group lag as its metric. KEDA queries the Kafka brokers for two things: the latest offset on each partition (where the producer is) and the committed offset for the consumer group (where the consumer is). The difference is lag.

The getTotalLag() function in kafka_scaler.go sums latestOffset - consumerOffset across all partitions in the topic. That total lag becomes the metric value fed to HPA.

// Paraphrased from kafka_scaler.go, getTotalLag()
for topic, partitionsOffsets := range producerOffsets {
	for partition := range partitionsOffsets {
		// Error handling omitted in this paraphrase
		lag, lagWithPersistent, _ := s.getLagForPartition(
			topic, partition, consumerOffsets, producerOffsets,
		)
		totalLag += lag
		totalLagWithPersistent += lagWithPersistent
	}
}
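To make the summation concrete, here is a minimal, self-contained sketch of the same idea. The partitionOffsets type and the sample offsets are assumptions made for illustration; KEDA's real code reads these values from the brokers and also handles invalid offsets, which this sketch ignores.

```go
package main

import "fmt"

// partitionOffsets is a hypothetical stand-in for what KEDA fetches from the
// brokers: the latest (producer) and committed (consumer) offset of a partition.
type partitionOffsets struct {
	latest    int64
	committed int64
}

// totalLag sums latest - committed across every partition of one topic.
func totalLag(partitions map[int32]partitionOffsets) int64 {
	var total int64
	for _, p := range partitions {
		total += p.latest - p.committed
	}
	return total
}

func main() {
	orders := map[int32]partitionOffsets{
		0: {latest: 1200, committed: 1100}, // lag 100
		1: {latest: 900, committed: 900},   // lag 0, fully caught up
		2: {latest: 1500, committed: 1350}, // lag 150
	}
	fmt.Println(totalLag(orders)) // 250
}
```

A partition that is fully caught up contributes nothing, so a single hot partition can account for the entire metric.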

HPA computes desired replicas as totalLag / lagThreshold, rounded up. With a lagThreshold of 10 (the default) and a total lag of 50 across all partitions, HPA targets 5 replicas. But this formula has an implicit ceiling: by default, KEDA caps replicas at the partition count (see Partition-Aware Capping below). A 10-partition topic with lagThreshold: 50 and a total lag of 1000 still produces only 10 replicas, not 20. The cap only lifts when allowIdleConsumers is true.
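The arithmetic in this paragraph can be sketched as a small function. desiredReplicas is an illustrative helper, not a function from KEDA or the HPA controller, and it deliberately ignores minReplicaCount and maxReplicaCount.

```go
package main

import "fmt"

// desiredReplicas models ceil(totalLag / lagThreshold), capped at the
// partition count unless allowIdleConsumers is set.
func desiredReplicas(totalLag, lagThreshold, partitions int64, allowIdleConsumers bool) int64 {
	if totalLag <= 0 {
		return 0
	}
	replicas := (totalLag + lagThreshold - 1) / lagThreshold // integer ceiling
	if !allowIdleConsumers && replicas > partitions {
		replicas = partitions
	}
	return replicas
}

func main() {
	fmt.Println(desiredReplicas(50, 10, 10, false))   // 5
	fmt.Println(desiredReplicas(1000, 50, 10, false)) // 10, capped at the partition count
	fmt.Println(desiredReplicas(1000, 50, 10, true))  // 20, cap lifted
}
```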

Partition-Aware Capping

Kafka consumer groups have a hard constraint: each partition can only be consumed by one consumer in the group. Running more consumers than partitions means idle consumers. KEDA enforces this by default, capping the calculated replica count at the partition count.

A minimal ScaledObject for Kafka:

# kafka-scaledobject.yaml
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: order-processor
  namespace: default
spec:
  scaleTargetRef:
    name: order-consumer
  pollingInterval: 30
  minReplicaCount: 1
  maxReplicaCount: 10
  triggers:
    - type: kafka
      metadata:
        bootstrapServers: kafka.messaging.svc:9092
        consumerGroup: order-processing-group
        topic: orders
        lagThreshold: "50"
        offsetResetPolicy: latest

Once applied, the ScaledObject creates a managed HPA:

$ kubectl get scaledobject order-processor
NAME              SCALETARGETKIND      SCALETARGETNAME   MIN   MAX   TRIGGERS   READY
order-processor   apps/v1.Deployment   order-consumer    1     10    kafka      True

$ kubectl get hpa keda-hpa-order-processor
NAME                       REFERENCE                   TARGETS       MINPODS   MAXPODS   REPLICAS
keda-hpa-order-processor   Deployment/order-consumer   50/50 (avg)   1         10        5

With a total lag of 250 and a lagThreshold of 50, HPA targets 5 replicas. The TARGETS column reports the average per current replica, so 250 spread over 5 replicas reads as 50/50.

Key Tuning Parameters

Several parameters adjust how the scaler counts lag and caps replicas:

allowIdleConsumers (default: false). Set to true when your consumers do work beyond consuming, such as calling external APIs or running batch processing. This lifts the partition cap and lets HPA scale beyond the partition count.

limitToPartitionsWithLag (default: false). Only counts partitions with non-zero lag toward the replica cap. Useful when some partitions are idle. Requires topic to be set. Cannot be combined with allowIdleConsumers.

excludePersistentLag (default: false). Compares the consumer offset to the previous polling cycle. If the offset hasn't moved, KEDA treats that partition's lag as zero for scaling purposes (though it still counts for activation). This prevents scaling up for partitions where the consumer is stuck on a poison pill message.

// Paraphrased from kafka_scaler.go, getLagForPartition(), excludePersistentLag branch
case previousOffset == consumerOffset:
	// Consumer stuck on same offset: don't count for scaling
	return 0, latestOffset - consumerOffset, nil
default:
	// Offset moved: update and count normally
	s.previousOffsets[topic][partitionID] = consumerOffset

ensureEvenDistributionOfPartitions (default: false). Forces the replica count to be a factor of the partition count. A 10-partition topic only scales to 1, 2, 5, or 10 replicas, never 3 or 7. This avoids uneven partition assignment across consumers.
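A rough sketch of the factor rule, assuming the replica count is rounded up to the next divisor of the partition count. Both helpers are hypothetical, and KEDA's exact rounding at the boundaries may differ from this sketch.

```go
package main

import "fmt"

// factorsOf returns the positive divisors of n in ascending order.
func factorsOf(n int64) []int64 {
	var factors []int64
	for i := int64(1); i <= n; i++ {
		if n%i == 0 {
			factors = append(factors, i)
		}
	}
	return factors
}

// roundUpToFactor returns the smallest divisor of partitions that is >= desired.
// Rounding up rather than down is an assumption of this sketch.
func roundUpToFactor(desired, partitions int64) int64 {
	for _, f := range factorsOf(partitions) {
		if f >= desired {
			return f
		}
	}
	return partitions
}

func main() {
	fmt.Println(factorsOf(10))          // [1 2 5 10]
	fmt.Println(roundUpToFactor(3, 10)) // 5
	fmt.Println(roundUpToFactor(7, 10)) // 10
}
```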

partitionLimitation. Scope scaling to specific partition IDs. Accepts comma-separated values and ranges: 1,2,10-20,31. Only those partitions contribute to the lag calculation.
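To show how a value such as 1,2,10-20,31 expands into partition IDs, here is a small parser sketch. parsePartitionList is a hypothetical helper written for this article; KEDA's own parsing may differ in validation details.

```go
package main

import (
	"fmt"
	"sort"
	"strconv"
	"strings"
)

// parsePartitionList expands "1,2,10-12,31" into a sorted, de-duplicated
// list of partition IDs. Each element is either a single ID or a lo-hi range.
func parsePartitionList(spec string) ([]int32, error) {
	seen := map[int32]bool{}
	for _, part := range strings.Split(spec, ",") {
		part = strings.TrimSpace(part)
		lo, hi, isRange := strings.Cut(part, "-")
		if !isRange {
			hi = lo
		}
		start, err := strconv.ParseInt(lo, 10, 32)
		if err != nil {
			return nil, fmt.Errorf("invalid partition %q: %w", part, err)
		}
		end, err := strconv.ParseInt(hi, 10, 32)
		if err != nil {
			return nil, fmt.Errorf("invalid partition %q: %w", part, err)
		}
		if start > end {
			return nil, fmt.Errorf("invalid range %q", part)
		}
		for id := start; id <= end; id++ {
			seen[int32(id)] = true
		}
	}
	ids := make([]int32, 0, len(seen))
	for id := range seen {
		ids = append(ids, id)
	}
	sort.Slice(ids, func(i, j int) bool { return ids[i] < ids[j] })
	return ids, nil
}

func main() {
	ids, err := parsePartitionList("1,2,10-12,31")
	fmt.Println(ids, err) // [1 2 10 11 12 31] <nil>
}
```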

Activation vs. Scaling Thresholds

KEDA has two distinct thresholds. The activationLagThreshold (default: 0) controls the 0↔1 transition, handled by the KEDA operator. The lagThreshold (default: 10) controls 1↔N scaling, handled by HPA. Activation only fires when the metric value is strictly greater than the activation threshold.

This separation matters. You might want a lagThreshold of 50 for smooth scaling but an activationLagThreshold of 10 to avoid waking the deployment for tiny blips. Or set activationLagThreshold to 100 to keep the deployment at zero until a real backlog forms.
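The two thresholds can be modelled as two separate checks. The helpers below are an illustrative sketch for a workload that is allowed to scale to zero; they ignore minReplicaCount, maxReplicaCount, and partition capping.

```go
package main

import "fmt"

// isActive models the 0<->1 decision: the trigger is active only when lag is
// strictly greater than activationLagThreshold.
func isActive(lag, activationLagThreshold int64) bool {
	return lag > activationLagThreshold
}

// replicasFor combines both phases: the activation check first, then the
// HPA-style ceil(lag / lagThreshold) once the workload is active.
func replicasFor(lag, activationLagThreshold, lagThreshold int64) int64 {
	if !isActive(lag, activationLagThreshold) {
		return 0 // the KEDA operator keeps the deployment at zero
	}
	replicas := (lag + lagThreshold - 1) / lagThreshold
	if replicas < 1 {
		replicas = 1 // an active workload runs at least one replica
	}
	return replicas
}

func main() {
	fmt.Println(replicasFor(10, 10, 50))  // 0: lag is not strictly greater than 10
	fmt.Println(replicasFor(11, 10, 50))  // 1: activated, but below one lagThreshold
	fmt.Println(replicasFor(120, 10, 50)) // 3: ceil(120 / 50)
}
```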

Kafka Offset Reset and Edge Cases

The offsetResetPolicy parameter controls what happens when no offset is committed for the consumer group. New consumer groups always start with no committed offset.

With latest (the default) and scaleToZeroOnInvalidOffset: false (also the default), KEDA returns a lag of 1 when the offset is invalid. That single-unit lag keeps the deployment active so consumers can start, connect to Kafka, and commit their first offset. Without this, a cold-start deployment would never wake up.

With earliest, KEDA returns lag equal to the latest offset (the full topic length). The consumer will replay from the beginning, and KEDA scales proportionally to the backlog.

scaleToZeroOnInvalidOffset (default: false) overrides both behaviors. When true, invalid offsets produce zero lag, allowing scale-to-zero even with no committed offset. Use this only when you explicitly want to ignore topics with no consumer history.
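The three rules above fit into one small decision function. This is a sketch of the behavior as described in this section, not a copy of KEDA's code; the invalidOffset sentinel and the function signature are assumptions made for illustration.

```go
package main

import "fmt"

// invalidOffset marks "no committed offset" in this sketch.
const invalidOffset int64 = -1

// lagForPartition models the cold-start rules for a single partition.
func lagForPartition(latestOffset, consumerOffset int64, offsetResetPolicy string, scaleToZeroOnInvalidOffset bool) int64 {
	if consumerOffset != invalidOffset {
		return latestOffset - consumerOffset // normal case: a committed offset exists
	}
	if scaleToZeroOnInvalidOffset {
		return 0 // explicitly ignore partitions with no consumer history
	}
	if offsetResetPolicy == "earliest" {
		return latestOffset // the consumer will replay the whole partition
	}
	return 1 // "latest": report a token lag so the deployment can wake up
}

func main() {
	fmt.Println(lagForPartition(500, invalidOffset, "latest", false))   // 1
	fmt.Println(lagForPartition(500, invalidOffset, "earliest", false)) // 500
	fmt.Println(lagForPartition(500, invalidOffset, "earliest", true))  // 0
	fmt.Println(lagForPartition(500, 420, "latest", false))             // 80
}
```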

The Dead Consumer Trap

There is an edge case in the Kafka scaler that silently breaks scaling. It bites hardest when topic is omitted, because KEDA then discovers topics from the consumer group's committed offsets. If your consumer never commits an offset and minReplicaCount is 0, KEDA scales the deployment to zero during quiet periods. Once at zero, KEDA can't discover the topic because there's no commit history and no active consumer. The deployment stays at zero permanently.

# kafka-scaledobject-safe.yaml
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: order-processor
  namespace: default
spec:
  scaleTargetRef:
    name: order-consumer
  minReplicaCount: 1 # Safety net: always keep one consumer running
  triggers:
    - type: kafka
      metadata:
        bootstrapServers: kafka.messaging.svc:9092
        consumerGroup: order-processing-group
        topic: orders
        lagThreshold: "50"
        offsetResetPolicy: latest
        scaleToZeroOnInvalidOffset: "false"

The fix: set minReplicaCount: 1 until you're confident the consumer group is committing offsets regularly. Alternatively, use a multi-trigger ScaledObject where one trigger explicitly sets the topic to ensure lag is always detected.

The SQS Scaler: Queue Depth as a Scaling Signal

The SQS scaler is simpler than Kafka. There are no partitions, no offsets, no consumer groups. KEDA calls the SQS GetQueueAttributes API and sums the message counts.

The default formula is:

actualMessages = ApproximateNumberOfMessages + ApproximateNumberOfMessagesNotVisible

ApproximateNumberOfMessages is the backlog: messages waiting to be picked up. ApproximateNumberOfMessagesNotVisible is messages currently being processed (in-flight). HPA computes desired replicas as actualMessages / queueLength.

// Paraphrased from aws_sqs_queue_scaler.go, parseAwsSqsQueueMetadata()
meta.awsSqsQueueMetricNames = append(meta.awsSqsQueueMetricNames,
	types.QueueAttributeNameApproximateNumberOfMessages)
if meta.ScaleOnInFlight {
	meta.awsSqsQueueMetricNames = append(meta.awsSqsQueueMetricNames,
		types.QueueAttributeNameApproximateNumberOfMessagesNotVisible)
}
if meta.ScaleOnDelayed {
	meta.awsSqsQueueMetricNames = append(meta.awsSqsQueueMetricNames,
		types.QueueAttributeNameApproximateNumberOfMessagesDelayed)
}

The queueLength parameter (default: 5) is the target messages per replica. If one pod processes 10 messages at a time, set queueLength to 10. With 30 messages in the queue, KEDA targets 3 replicas.
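The queue-depth arithmetic can be sketched end to end. The queueAttributes struct is a hypothetical stand-in for the GetQueueAttributes response, and the numbers are made up; the flags mirror scaleOnInFlight and scaleOnDelayed.

```go
package main

import "fmt"

// queueAttributes holds the three approximate counters the scaler can read.
type queueAttributes struct {
	visible    int64 // ApproximateNumberOfMessages
	notVisible int64 // ApproximateNumberOfMessagesNotVisible (in-flight)
	delayed    int64 // ApproximateNumberOfMessagesDelayed
}

// queueDepth sums the counters selected by the two flags.
func queueDepth(a queueAttributes, scaleOnInFlight, scaleOnDelayed bool) int64 {
	depth := a.visible
	if scaleOnInFlight {
		depth += a.notVisible
	}
	if scaleOnDelayed {
		depth += a.delayed
	}
	return depth
}

// replicasFor models the HPA target: ceil(depth / queueLength).
func replicasFor(depth, queueLength int64) int64 {
	return (depth + queueLength - 1) / queueLength
}

func main() {
	attrs := queueAttributes{visible: 20, notVisible: 10, delayed: 4}

	depth := queueDepth(attrs, true, false) // default flags
	fmt.Println(depth, replicasFor(depth, 10)) // 30 3

	backlog := queueDepth(attrs, false, false) // backlog only
	fmt.Println(backlog, replicasFor(backlog, 10)) // 20 2
}
```

With the same queue, dropping in-flight messages from the count lowers the target from 3 replicas to 2.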

SQS ScaledObject Example

# sqs-scaledobject.yaml
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: invoice-processor
  namespace: default
spec:
  scaleTargetRef:
    name: invoice-worker
  pollingInterval: 15
  minReplicaCount: 0
  maxReplicaCount: 50
  cooldownPeriod: 60
  triggers:
    - type: aws-sqs-queue
      authenticationRef:
        name: aws-credentials
      metadata:
        queueURL: https://sqs.eu-west-1.amazonaws.com/123456789012/invoices
        queueLength: "10"
        awsRegion: eu-west-1
        scaleOnInFlight: "true"
        activationQueueLength: "5"

In-Flight Messages: The Over-Scaling Trap

The default scaleOnInFlight: true counts messages currently being processed. If your consumers take 5 minutes per message, those messages stay "not visible" for 5 minutes. KEDA sees them as load and keeps scaling up, even though they're already being handled.

For slow consumers, set scaleOnInFlight: false. KEDA will only see the actual backlog (messages waiting to be picked up), which is a more accurate signal for "do I need more workers?"

The trade-off: with scaleOnInFlight: false, a sudden spike can temporarily under-scale, because KEDA sees only the waiting backlog and not the full processing load. For most workloads, false is still the safer setting, even though it is not KEDA's default.

scaleOnDelayed (default: false) adds ApproximateNumberOfMessagesDelayed to the count. Enable this if you use SQS delay queues and want to pre-scale before delayed messages become visible.

TriggerAuthentication: Securing Scaler Connections

Both scalers need credentials to connect: Kafka needs broker auth, SQS needs AWS credentials. Embedding secrets directly in ScaledObject metadata is fragile and breaks the separation of concerns between teams.

TriggerAuthentication is a namespace-scoped CRD that references Kubernetes Secrets, environment variables, or pod identity providers. ClusterTriggerAuthentication is the cluster-wide variant, accessible from any namespace.

Kafka with SASL/SCRAM and TLS

# kafka-auth.yaml
apiVersion: v1
kind: Secret
metadata:
  name: kafka-credentials
  namespace: default
data:
  username: b3JkZXItcHJvY2Vzc29y # base64: order-processor
  password: czNjcjN0LXBhc3N3b3Jk # base64: s3cr3t-password
---
apiVersion: keda.sh/v1alpha1
kind: TriggerAuthentication
metadata:
  name: kafka-scram-auth
  namespace: default
spec:
  secretTargetRef:
    - parameter: username
      name: kafka-credentials
      key: username
    - parameter: password
      name: kafka-credentials
      key: password

Reference it from the ScaledObject:

# kafka-scaledobject-with-auth.yaml
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: order-processor
  namespace: default
spec:
  scaleTargetRef:
    name: order-consumer
  triggers:
    - type: kafka
      metadata:
        bootstrapServers: kafka.messaging.svc:9092
        consumerGroup: order-processing-group
        topic: orders
        lagThreshold: "50"
        sasl: scram_sha512
        tls: enable
      authenticationRef:
        name: kafka-scram-auth

SASL mode goes in the ScaledObject metadata (it's a protocol choice, not a secret). Credentials go in TriggerAuthentication. TLS certificates (ca, cert, key) can also go in the TriggerAuthentication if mutual TLS is required.

SQS with AWS Pod Identity (IRSA)

For AWS workloads, Pod Identity is the cleanest approach. No long-lived credentials, no rotation burden.

# sqs-auth.yaml
apiVersion: keda.sh/v1alpha1
kind: TriggerAuthentication
metadata:
  name: aws-credentials
  namespace: default
spec:
  podIdentity:
    provider: aws
    roleArn: arn:aws:iam::123456789012:role/keda-sqs-reader

The KEDA operator pod needs the right service account annotations for IRSA. The roleArn scopes the identity to a specific IAM role with SQS read permissions.

For teams sharing credentials across namespaces, use ClusterTriggerAuthentication:

# cluster-auth.yaml
apiVersion: keda.sh/v1alpha1
kind: ClusterTriggerAuthentication
metadata:
  name: shared-aws-credentials
spec:
  podIdentity:
    provider: aws
    roleArn: arn:aws:iam::123456789012:role/keda-shared-reader

Reference it with kind: ClusterTriggerAuthentication in the ScaledObject's authenticationRef.

Production Checklist

Kafka

- Set minReplicaCount: 1 for new consumer groups until you've confirmed offsets are being committed.
- Enable excludePersistentLag: true to avoid scaling on stuck partitions (poison pills).
- Use ensureEvenDistributionOfPartitions: true if balanced consumption matters for your workload.
- Match pollingInterval to your lag tolerance. The default 30s works for most cases. Lowering it increases load on the Kafka brokers.
- Set cooldownPeriod high enough to avoid premature scale-down after burst processing. 60-120 seconds is a reasonable starting point. It applies only to the final step down to zero; scale-down between N and 1 replicas follows the HPA's own behavior settings.

SQS

- Tune queueLength to match your per-pod throughput. If a pod handles 10 messages/second and your target latency is 5 seconds, queueLength: 50 keeps you within budget.
- Consider scaleOnInFlight: false for slow consumers. It prevents over-provisioning at the cost of slightly delayed scale-out during spikes.
- Set activationQueueLength > 0 to avoid thrashing around zero. A value of 2-5 messages prevents wake-ups for occasional stragglers.
- Use cooldownPeriod to prevent premature scale-down after clearing a burst.

Both Scalers

- Use TriggerAuthentication or ClusterTriggerAuthentication. Never put credentials in ScaledObject metadata.
- Monitor KEDA operator logs (kubectl logs -n keda deployment/keda-operator) for scaler connection errors. A misconfigured auth doesn't prevent the ScaledObject from being created; it just silently fails to scale.
- Use paused annotations (autoscaling.keda.sh/paused: "true") during maintenance windows to prevent unwanted scaling during cluster operations.

Wrap-up

Kafka and SQS represent the two dominant queue scaling patterns in KEDA: lag-based and depth-based. The Kafka scaler is the more complex of the two, with partition-aware capping, offset policies, persistent lag exclusion, and even partition distribution. The SQS scaler is straightforward, but the scaleOnInFlight default catches teams off-guard when consumer processing time is high.

The common thread is understanding what KEDA actually measures. The scaler's metric formula determines how many replicas HPA requests. Tuning lagThreshold or queueLength without understanding the formula means guessing. Reading the source code, specifically getLagForPartition() for Kafka and getAwsSqsQueueLength() for SQS, removes the guesswork.

Next in the series: Prometheus-based custom metrics scaling, where the scaling signal isn't a queue at all but any metric you can express as a PromQL query.

This post is part of the KEDA — Event-Driven Autoscaling from Zero to Production collection (2 of 7)
