Introduction to KEDA and Event-Driven Autoscaling

Kubernetes HPA scales on CPU and memory. Most production workloads care about something else entirely: messages in a queue, HTTP requests per second, rows in a database table, or a custom Prometheus metric that reflects actual business load. Bridging that gap used to mean deploying a custom metrics adapter, wiring it to your event source, and maintaining the plumbing yourself.

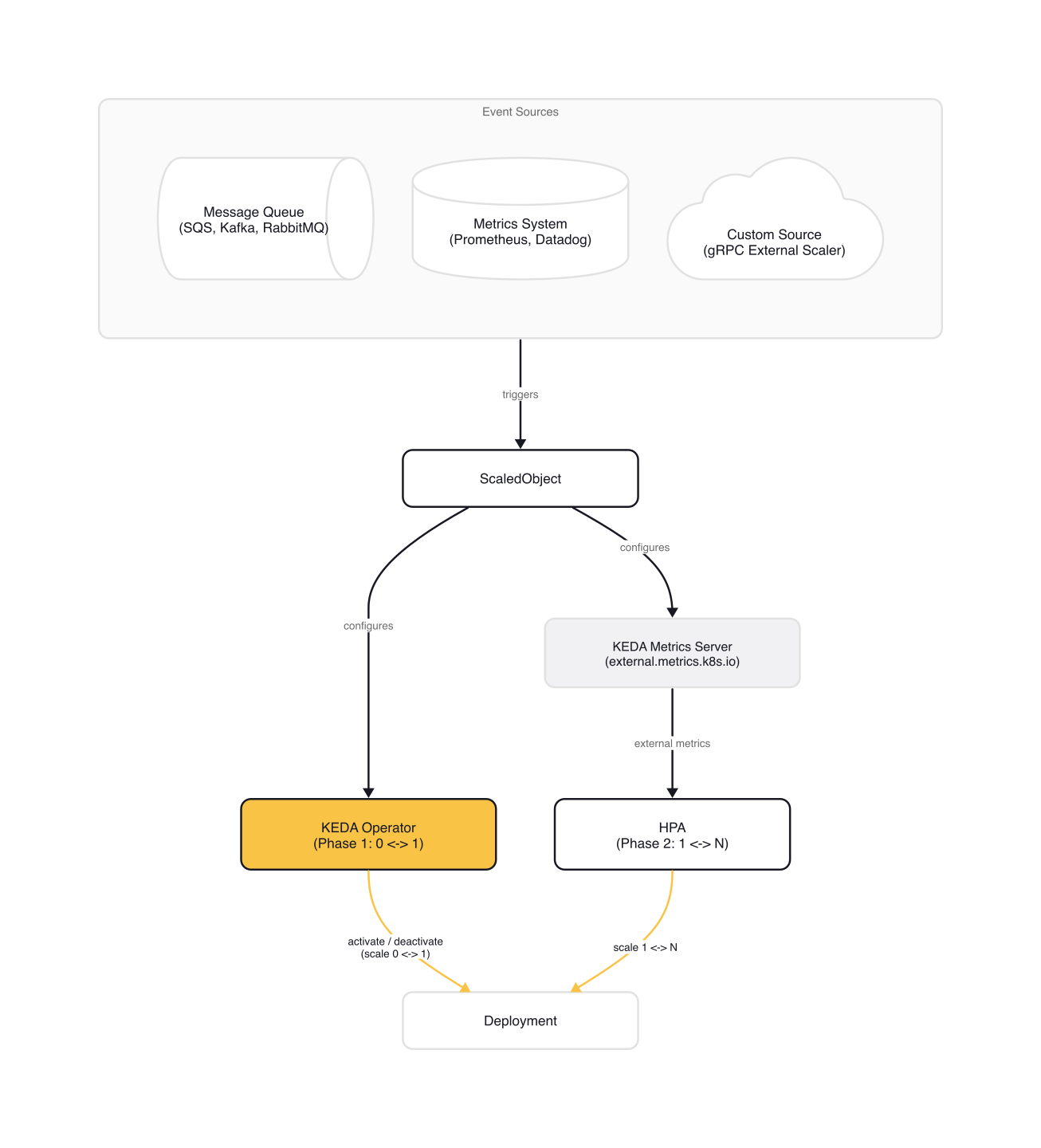

KEDA eliminates that plumbing. It is a lightweight, CNCF-graduated component that connects Kubernetes autoscaling to 65+ external event sources out of the box. It does not replace HPA. It extends it with a clean two-phase architecture: KEDA handles activation (scaling from and to zero), then hands off to the native HPA for replica scaling. The result is event-driven autoscaling that works with Deployments, StatefulSets, Jobs, and custom resources, on any Kubernetes cluster.

This article covers KEDA's architecture, its two-phase scaling model, the four CRDs that define scaling behavior, and the scaler landscape. By the end, you will understand how KEDA fits alongside HPA, when to use scale-to-zero, and what the gotchas are before you deploy your first ScaledObject.

Why HPA Isn't Enough

HPA scales on CPU and memory out of the box. Anything else, whether it is Kafka consumer lag, SQS queue depth, or a Prometheus query, requires a custom metrics adapter that implements the custom.metrics.k8s.io or external.metrics.k8s.io API. Each adapter supports a limited set of sources (Prometheus Adapter only speaks PromQL, the Datadog Cluster Agent only speaks Datadog) and has its own configuration model, and none of them supports scale-to-zero: unless the alpha HPAScaleToZero feature gate is enabled, HPA's minReplicas floor is 1.

KEDA solves all three problems: 65+ built-in scalers, a declarative CRD-based configuration, and scale-to-zero as a first-class feature. It does not replace HPA or any existing metrics pipeline. It installs alongside them and only manages the resources you explicitly target with a ScaledObject.

KEDA Architecture: The Two-Phase Model

KEDA installs three components into your cluster:

- KEDA Operator (keda-operator): watches ScaledObject and ScaledJob resources, manages the 0↔1 scaling transitions, and creates/owns HPA objects for each ScaledObject.

- KEDA Metrics Server (keda-operator-metrics-apiserver): implements the external.metrics.k8s.io API. When HPA asks "what is the current value of this metric?", the metrics server queries the configured scaler and returns the answer.

- Admission Webhooks: validate ScaledObject and ScaledJob configurations at creation time, preventing issues like two ScaledObjects targeting the same Deployment.

The key insight is the two-phase scaling model:

Phase 1 — Activation (0↔1): The KEDA operator polls each scaler at the configured pollingInterval (default: 30 seconds). If the scaler reports that the event source is active (messages in the queue, metrics above the activation threshold), the operator scales the target from 0 to 1 replica. When the source goes quiet and the cooldownPeriod elapses, the operator scales back to 0. This phase is entirely managed by KEDA, not HPA.

Phase 2 — Scaling (1↔N): Once the target has at least 1 replica, the HPA takes over. KEDA's metrics server feeds the external metric values to HPA, which applies its standard scaling algorithm (target average value, stabilization windows, scaling policies) to decide the replica count between minReplicaCount and maxReplicaCount. This is native Kubernetes autoscaling, just with a richer set of metrics.

This separation is deliberate. HPA was never designed to handle the 0↔1 transition (it requires at least 1 replica to compute per-pod averages). KEDA fills that gap without reimplementing HPA's scaling logic.

Here is how it looks in practice with a Prometheus-based trigger:

```yaml
# ScaledObject: Prometheus trigger (adapted from keda.sh/docs/2.19/scalers/prometheus)
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: web-app-scaler
  namespace: default
spec:
  scaleTargetRef:
    name: web-app              # Deployment to scale
  minReplicaCount: 0           # Enable scale-to-zero
  maxReplicaCount: 20
  pollingInterval: 15          # Check every 15 seconds
  cooldownPeriod: 300          # Wait 5 minutes before scaling to zero
  triggers:
    - type: prometheus
      metadata:
        serverAddress: http://prometheus.monitoring:9090
        threshold: '100'           # HPA target: 100 req/s per pod
        activationThreshold: '5'   # Activate when rate > 5 req/s
        query: sum(rate(http_requests_total{deployment="web-app"}[2m]))
```

When http_requests_total rate exceeds 5 req/s, KEDA activates the Deployment (0→1). HPA then scales to as many replicas as needed to keep each pod at roughly 100 req/s. When traffic drops and stays below the activation threshold for 300 seconds, KEDA scales back to zero.

The Four CRDs

KEDA introduces four Custom Resource Definitions:

ScaledObject

The primary resource. It links a Deployment, StatefulSet, or any custom resource with a /scale subresource to one or more event triggers. Key fields:

| Field | Purpose | Default |
|---|---|---|
| scaleTargetRef.name | Target workload name | Required |
| pollingInterval | How often KEDA checks the scaler (seconds) | 30 |
| cooldownPeriod | Idle time before scaling to zero (seconds) | 300 |
| minReplicaCount | Minimum replicas (set to 0 for scale-to-zero) | 0 |
| maxReplicaCount | Maximum replicas | 100 |
| triggers[] | List of event source triggers | Required |

A ScaledObject can have multiple triggers. KEDA evaluates all of them and uses the highest recommended replica count. This means you can scale on both queue depth and CPU utilization simultaneously.
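As a rough illustration of combining triggers, the sketch below pairs a RabbitMQ queue-length trigger with KEDA's cpu trigger on a single ScaledObject. The names worker-scaler and worker, the queue settings, and the 60% utilization target are placeholders, not values from a real deployment. The cpu trigger also assumes the target's containers declare CPU requests.

```yaml
# Sketch only: names and numbers are hypothetical
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: worker-scaler            # hypothetical name
  namespace: default
spec:
  scaleTargetRef:
    name: worker                 # hypothetical Deployment
  minReplicaCount: 1             # keep one replica running in this sketch
  maxReplicaCount: 20
  triggers:
    - type: rabbitmq             # scale on queue depth
      metadata:
        queueName: orders
        host: amqp://rabbitmq.default:5672/
        mode: QueueLength
        value: '10'              # target: 10 messages per replica
    - type: cpu                  # scale on CPU utilization as well
      metricType: Utilization
      metadata:
        value: '60'              # target: 60% of requested CPU
```

With both triggers in place, whichever metric calls for more replicas determines the final count.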

ScaledJob

The batch-processing counterpart. Instead of scaling a long-running Deployment, ScaledJob creates one Kubernetes Job per event. Each Job pulls a single message, processes it to completion, and terminates. This is ideal for long-running tasks where you do not want HPA to terminate a pod mid-processing.

```yaml
# ScaledJob: RabbitMQ trigger (adapted from keda.sh/docs/2.19/concepts/scaling-jobs)
apiVersion: keda.sh/v1alpha1
kind: ScaledJob
metadata:
  name: order-processor
  namespace: default
spec:
  jobTargetRef:
    template:
      spec:
        containers:
          - name: processor
            image: order-processor:latest
            command: ["process", "--single-message"]
        restartPolicy: Never
    backoffLimit: 3
  pollingInterval: 10
  maxReplicaCount: 30
  successfulJobsHistoryLimit: 5
  failedJobsHistoryLimit: 3
  triggers:
    - type: rabbitmq
      metadata:
        queueName: orders
        host: amqp://rabbitmq.default:5672/
        mode: QueueLength
        value: '1'
```

ScaledJobs are covered in depth separately.

TriggerAuthentication and ClusterTriggerAuthentication

These resources decouple credentials from ScaledObject definitions. Instead of embedding secrets in trigger metadata, you reference a TriggerAuthentication that pulls credentials from Kubernetes Secrets, environment variables, pod identity providers (AWS IRSA, Azure Workload Identity, GCP Workload Identity), or HashiCorp Vault.

```yaml
# TriggerAuthentication + ScaledObject (adapted from keda.sh/docs/2.19/scalers/aws-sqs)
apiVersion: keda.sh/v1alpha1
kind: TriggerAuthentication
metadata:
  name: aws-credentials
  namespace: default
spec:
  podIdentity:
    provider: aws
---
# ScaledObject referencing the TriggerAuthentication
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: sqs-scaler
  namespace: default
spec:
  scaleTargetRef:
    name: queue-consumer
  triggers:
    - type: aws-sqs-queue
      authenticationRef:
        name: aws-credentials
      metadata:
        queueURL: https://sqs.eu-west-1.amazonaws.com/123456789/orders
        queueLength: '5'
        awsRegion: eu-west-1
```

ClusterTriggerAuthentication is the cluster-scoped variant. It lets you define credentials once and reference them from ScaledObjects in any namespace.
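A minimal sketch of the cluster-scoped form might look like the following. The resource names (shared-rabbitmq-auth, rabbitmq-connection, orders-scaler, team-a) are hypothetical. It also assumes the referenced Secret lives in the namespace KEDA itself runs in, which, as far as I know, is where KEDA looks by default for cluster-scoped authentication.

```yaml
# Sketch only: all names are hypothetical
apiVersion: keda.sh/v1alpha1
kind: ClusterTriggerAuthentication
metadata:
  name: shared-rabbitmq-auth         # cluster-scoped, so no namespace
spec:
  secretTargetRef:
    - parameter: host                # trigger parameter to populate
      name: rabbitmq-connection      # hypothetical Secret name
      key: host                      # key inside that Secret
---
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: orders-scaler
  namespace: team-a                  # any namespace can reference the shared credentials
spec:
  scaleTargetRef:
    name: orders-consumer
  triggers:
    - type: rabbitmq
      authenticationRef:
        name: shared-rabbitmq-auth
        kind: ClusterTriggerAuthentication   # without this, KEDA looks for a namespaced TriggerAuthentication
      metadata:
        queueName: orders
        mode: QueueLength
        value: '5'
```

The kind field on authenticationRef is what separates the two variants; the rest of the trigger is unchanged.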

The Scaler Landscape

KEDA's scaler catalog is what makes it practical. Each scaler knows how to query a specific event source and return a metric value. As of KEDA 2.19, there are 65+ built-in scalers organized by category:

| Category | Examples |
|---|---|
| Messaging | Apache Kafka, AWS SQS, RabbitMQ, Azure Service Bus, NATS JetStream, GCP Pub/Sub |
| Metrics | Prometheus, Datadog, New Relic, Dynatrace, InfluxDB |
| Cloud services | AWS CloudWatch, Azure Monitor, GCP Cloud Tasks |
| Databases | PostgreSQL, MySQL, MongoDB, Redis, Cassandra |
| Scheduling | Cron (time-based scaling) |
| Kubernetes | Kubernetes workload metrics, resource metrics |
| HTTP | HTTP Add-on (separate component for request-based scaling) |

Each scaler exposes two key thresholds:

- threshold (or scaler-specific equivalent like queueLength): the target value for HPA-driven scaling (1↔N). If threshold: 10 and the queue has 50 messages, HPA targets 5 replicas.

- activationThreshold (or activation + parameter name): the value that triggers the 0↔1 transition. Default is 0, meaning any non-zero metric activates the scaler.

These thresholds are independent. You can set activationThreshold: 50 and threshold: 10, which means the scaler stays inactive (zero replicas) until the metric exceeds 50, then HPA targets 10 per pod once active. This decoupling is useful when you want to absorb small bursts without spinning up pods, but scale aggressively once the threshold is crossed.
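To make the naming pattern concrete, here is how the same split could look on the SQS scaler, where the scaling target is queueLength and the activation parameter is activationQueueLength. This is a trigger fragment only, reusing the queue URL and the aws-credentials reference from the earlier example; the values 10 and 50 are illustrative.

```yaml
# Fragment of a ScaledObject spec; values are illustrative
triggers:
  - type: aws-sqs-queue
    authenticationRef:
      name: aws-credentials
    metadata:
      queueURL: https://sqs.eu-west-1.amazonaws.com/123456789/orders
      awsRegion: eu-west-1
      queueLength: '10'             # 1↔N: HPA targets about 10 messages per replica
      activationQueueLength: '50'   # 0↔1: stay at zero until more than 50 messages are waiting
```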

If none of the built-in scalers fit your event source, KEDA supports external scalers via a gRPC interface. You implement a small gRPC server that returns metric values, and KEDA calls it like any other scaler.
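On the ScaledObject side, an external scaler is wired in with a trigger of type external that points at the gRPC server's address. The sketch below assumes a hypothetical scaler Service named my-scaler listening on port 9090. The queueName key is also hypothetical: any extra metadata is passed through to the scaler, which decides what it means.

```yaml
# Sketch only: the scaler address and metadata keys are hypothetical
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: custom-source-scaler
  namespace: default
spec:
  scaleTargetRef:
    name: custom-consumer
  triggers:
    - type: external
      metadata:
        scalerAddress: my-scaler.default.svc.cluster.local:9090   # gRPC endpoint of your scaler
        queueName: orders                                         # passed through to the scaler as-is
```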

Scale-to-Zero: How It Works

Scale-to-zero is KEDA's headline feature and the primary reason teams adopt it over raw HPA with a custom metrics adapter. The mechanism is straightforward:

- Set minReplicaCount: 0 on the ScaledObject

- When all scalers report inactive (metric at or below activationThreshold), KEDA starts the cooldown timer

- After cooldownPeriod seconds (default: 300), the operator scales the target to 0 replicas

- When a scaler detects activity again on a later poll, the operator scales back to 1

The cooldownPeriod prevents flapping. A brief spike that subsides within 5 minutes will not trigger a scale-to-zero and subsequent cold start. You can also set initialCooldownPeriod to prevent scaling to zero for a period after the ScaledObject is first created, giving your application time to warm up.
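A sketch of how the two timers sit together on a ScaledObject, reusing the Prometheus trigger from earlier. The 120-second initialCooldownPeriod is an arbitrary illustrative value, not a recommendation.

```yaml
# Sketch only: timer values are illustrative
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: web-app-scaler
  namespace: default
spec:
  scaleTargetRef:
    name: web-app
  minReplicaCount: 0
  cooldownPeriod: 300            # idle seconds before scaling to zero
  initialCooldownPeriod: 120     # hold off scale-to-zero for 2 minutes after creation
  triggers:
    - type: prometheus
      metadata:
        serverAddress: http://prometheus.monitoring:9090
        threshold: '100'
        query: sum(rate(http_requests_total{deployment="web-app"}[2m]))
```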

The trade-off is cold start latency. When the first event arrives after a scale-to-zero period, the pod must be scheduled, its image pulled (if not cached), and its containers started, and it must pass readiness checks before it can process the event. For a typical container, this takes roughly 5-30 seconds. For workloads with large images or slow startup, it can be longer.

When to use scale-to-zero:

- Dev and staging environments where idle workloads waste resources

- Batch processors that only run when work arrives (overnight ETL, report generation)

- Multi-tenant platforms where hundreds of low-traffic workloads would otherwise consume a base allocation

When to keep minReplicaCount: 1:

- Latency-sensitive services where cold starts are unacceptable

- Workloads with expensive initialization (ML model loading, large cache warming)

- Services behind health checks that must always respond (load balancer targets)

Gotchas

KEDA owns the HPA. When you create a ScaledObject, KEDA creates a corresponding HPA object. If you already have an HPA targeting the same Deployment, they will conflict. Use the scaledobject.keda.sh/transfer-hpa-ownership: "true" annotation and set advanced.horizontalPodAutoscalerConfig.name to your existing HPA name to let KEDA take over gracefully.
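A sketch of that takeover, assuming an existing HPA named web-app-hpa (a hypothetical name) that already targets the web-app Deployment:

```yaml
# Sketch only: web-app-hpa is a hypothetical, pre-existing HPA
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: web-app-scaler
  namespace: default
  annotations:
    scaledobject.keda.sh/transfer-hpa-ownership: "true"   # let KEDA adopt the existing HPA
spec:
  scaleTargetRef:
    name: web-app
  advanced:
    horizontalPodAutoscalerConfig:
      name: web-app-hpa          # must match the existing HPA's name
  triggers:
    - type: prometheus
      metadata:
        serverAddress: http://prometheus.monitoring:9090
        threshold: '100'
        query: sum(rate(http_requests_total{deployment="web-app"}[2m]))
```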

Activation and scaling thresholds are independent. Setting threshold: 10 and activationThreshold: 50 means the scaler stays inactive at 40 messages, even though HPA would want 4 replicas. This is by design, but it catches people who expect the two values to work together.

cooldownPeriod only applies to the 1→0 transition. It is not the same as HPA's stabilizationWindowSeconds, which controls scale-down from N to a lower N. If you need to slow down HPA scale-down behavior, configure it separately via advanced.horizontalPodAutoscalerConfig.behavior.
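For example, a ScaledObject that keeps the default-style cooldown for the 1→0 step but slows HPA's N→lower-N scale-down might be sketched like this. The 600-second window and the 50%-per-minute policy are illustrative values only.

```yaml
# Sketch only: window and policy values are illustrative
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: web-app-scaler
  namespace: default
spec:
  scaleTargetRef:
    name: web-app
  cooldownPeriod: 300                        # governs only the final step to zero
  advanced:
    horizontalPodAutoscalerConfig:
      behavior:                              # passed through to the HPA that KEDA manages
        scaleDown:
          stabilizationWindowSeconds: 600    # look back 10 minutes before shrinking
          policies:
            - type: Percent
              value: 50                      # remove at most 50% of replicas...
              periodSeconds: 60              # ...per minute
  triggers:
    - type: prometheus
      metadata:
        serverAddress: http://prometheus.monitoring:9090
        threshold: '100'
        query: sum(rate(http_requests_total{deployment="web-app"}[2m]))
```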

One ScaledObject per target. The admission webhook rejects a second ScaledObject targeting the same Deployment. If you need multiple triggers, add them all to a single ScaledObject's triggers[] array.

pollingInterval is a trade-off between detection latency and load on the event source. Lower intervals detect events faster but increase the number of queries against your event source. For a high-traffic Kafka cluster this is fine. For a rate-limited cloud API, set it higher.

activationThreshold uses strict greater-than. With the default activationThreshold: 0, the scaler activates only when the metric value is greater than 0. A metric value of exactly 0 keeps the scaler inactive.

Wrap-up

KEDA bridges the gap between Kubernetes HPA and the external event sources that drive most real workloads. Its two-phase architecture keeps the design clean: KEDA handles activation and deactivation, HPA handles replica scaling. The four CRDs (ScaledObject, ScaledJob, TriggerAuthentication, ClusterTriggerAuthentication) give you a declarative, portable way to define event-driven scaling on any cluster.

The scaler catalog is where KEDA earns its keep. Instead of deploying and maintaining separate metrics adapters for each event source, you get 65+ scalers out of the box with a consistent configuration model. Combined with scale-to-zero and secure credential management via TriggerAuthentication, KEDA makes event-driven autoscaling accessible without the infrastructure overhead.

To get started, add the chart repository (helm repo add kedacore https://kedacore.github.io/charts), install KEDA via Helm (helm install keda kedacore/keda --namespace keda --create-namespace), and deploy a ScaledObject against a Prometheus metric you already export. Start with minReplicaCount: 1 until you understand the activation behavior, then move to scale-to-zero on non-critical workloads. The gotchas above will save you a few hours of debugging.

This post is part of the KEDA — Event-Driven Autoscaling from Zero to Production collection (1 of 7)
