KEDA and Karpenter Together — Pod and Node Scaling Synergy

HPA gets you basic pod autoscaling. Cluster Autoscaler gets you basic node scaling. Put them together and you have a reaction chain that works, but slowly: CPU crosses a threshold, HPA adds replicas, pods go Pending, CAS notices after a polling cycle, picks from a pre-defined node group, and spins up a VM. Minutes pass. For a web service handling steady traffic, that's fine. For an SQS queue that just received 50,000 messages, it's not.

KEDA and Karpenter replace both halves of that chain with faster, more flexible alternatives. KEDA watches your event source directly (queue depth, Prometheus metric, HTTP concurrency) and scales pods accordingly, including to and from zero. Karpenter watches for unschedulable pods and provisions right-sized nodes in seconds via the EC2 Fleet API, choosing from hundreds of instance types without pre-defined node groups.

This article covers how the two-layer scaling chain works, how to design NodePools for event-driven workloads, what scale-to-zero actually looks like when both layers participate, and a concrete SQS-based reference architecture you can adapt. The examples use AWS (EKS, SQS, EC2 Fleet API) because that's where the most mature reference architectures exist, but Karpenter's core scheduling logic is cloud-agnostic and Azure support is in active development.

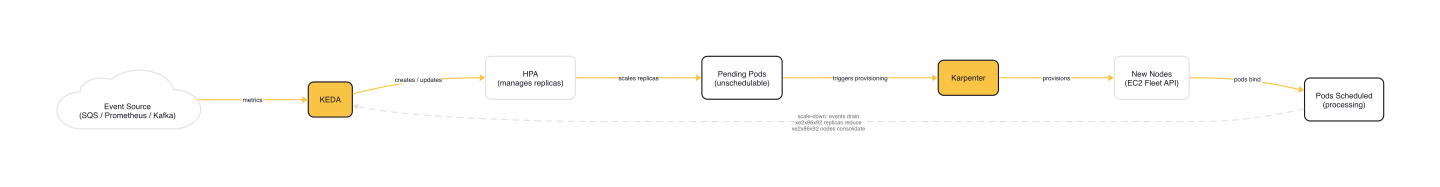

The Two-Layer Scaling Chain

KEDA and Karpenter operate at different layers of the stack. KEDA is the pod layer: it watches external event sources, creates and manages an HPA, and scales Deployment replicas. Karpenter is the node layer: it watches for pods that can't be scheduled and provisions compute to run them.

The scale-up chain looks like this:

- Event source spikes (queue fills, request rate climbs, metric crosses threshold)
- KEDA's metrics adapter reports the new value to the HPA
- HPA increases the Deployment's replica count
- New pods appear as Pending because existing nodes lack capacity
- Karpenter detects unschedulable pods, evaluates their resource requests and scheduling constraints
- Karpenter launches optimal instance types via the cloud provider API
- Nodes join the cluster, pods schedule, processing begins

The scale-down chain is the reverse. Events drain, KEDA reduces replicas, nodes become empty or underutilized, Karpenter's consolidation logic removes them.

Why not HPA + Cluster Autoscaler?

Three reasons. First, CAS uses node groups with pre-defined instance types. You pick m5.xlarge and that's what you get, whether your burst needs 2 vCPUs or 16. Karpenter evaluates the actual pod requirements and selects from the full instance catalog. Second, CAS polls for unschedulable pods on a 10-30 second loop, then triggers ASG scaling, which launches a VM from a fixed instance type. Total time from Pending to schedulable: typically 3-5 minutes. Karpenter bypasses ASG entirely, using the EC2 Fleet API to launch optimal instances in 30-60 seconds. Third, a stock HPA cannot scale a workload to zero replicas, so an idle consumer keeps at least one pod, and the node under it, running. KEDA can remove every pod when the event source is idle, and Karpenter removes the now-empty nodes behind them.

Designing NodePools for Event-Driven Workloads

The key design pattern is separation: one NodePool for always-on services (API servers, databases, monitoring) on On-Demand instances, and another for event-driven burst workers on Spot instances. The Karpenter Blueprints repository demonstrates this split:

```yaml
# karpenter-blueprints/blueprints/od-spot-split/od-spot.yaml
apiVersion: karpenter.sh/v1
kind: NodePool
metadata:
  name: node-spot
spec:
  disruption:
    consolidationPolicy: WhenEmptyOrUnderutilized
    consolidateAfter: 1m
  limits:
    cpu: 1k
    memory: 500Gi
  template:
    metadata:
      labels:
        intent: apps
    spec:
      expireAfter: 168h0m0s
      nodeClassRef:
        group: karpenter.k8s.aws
        name: default
        kind: EC2NodeClass
      requirements:
        - key: capacity-spread
          operator: In
          values: ["2", "3", "4", "5"]
        - key: kubernetes.io/arch
          operator: In
          values: ["amd64"]
        - key: karpenter.sh/capacity-type
          operator: In
          values: ["spot"]
        - key: kubernetes.io/os
          operator: In
          values: ["linux"]
        - key: karpenter.k8s.aws/instance-category
          operator: In
          values: ["c", "m", "r"]
        - key: karpenter.k8s.aws/instance-generation
          operator: Gt
          values: ["2"]
      taints:
        - effect: NoSchedule
          key: intent
          value: workload-split
```

Several things to note. The consolidateAfter: 1m is aggressive: Karpenter will start removing underutilized nodes one minute after the last pod change. That's what you want for burst processing. After KEDA scales pods down, you don't want to pay for idle nodes for ten minutes while the default cooldown expires.

The limits section sets a hard cap. If the NodePool hits 1,000 CPUs, Karpenter stops provisioning regardless of how many pods KEDA is creating. Those pods stay Pending. Size your limits for the maximum burst you're willing to fund.

The taint (intent=workload-split:NoSchedule) ensures only pods that explicitly tolerate this taint land on Spot nodes. Your always-on services run on the On-Demand NodePool; burst workers get the cheaper, interruptible capacity.

Instance categories c, m, and r with generation > 2 give Karpenter a broad selection pool across compute-optimized, general-purpose, and memory-optimized families. This flexibility is critical for Spot availability: the more instance types Karpenter can choose from, the better the price and the lower the interruption rate.
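
To make the effect of those requirements concrete, here is a minimal Python sketch of how In and Gt requirements narrow a candidate list. It is not Karpenter's implementation: the `CANDIDATES` table and its label values are hypothetical sample data, and `satisfies` and `eligible` are illustrative helper names.

```python
# Hypothetical candidates with hand-written labels (illustrative only;
# Karpenter derives the real labels from the cloud provider).
CANDIDATES = {
    "m5.xlarge": {"kubernetes.io/arch": "amd64",
                  "karpenter.k8s.aws/instance-category": "m",
                  "karpenter.k8s.aws/instance-generation": "5"},
    "c6g.large": {"kubernetes.io/arch": "arm64",
                  "karpenter.k8s.aws/instance-category": "c",
                  "karpenter.k8s.aws/instance-generation": "6"},
    "r4.large": {"kubernetes.io/arch": "amd64",
                 "karpenter.k8s.aws/instance-category": "r",
                 "karpenter.k8s.aws/instance-generation": "4"},
    "t3.medium": {"kubernetes.io/arch": "amd64",
                  "karpenter.k8s.aws/instance-category": "t",
                  "karpenter.k8s.aws/instance-generation": "3"},
    "m2.xlarge": {"kubernetes.io/arch": "amd64",
                  "karpenter.k8s.aws/instance-category": "m",
                  "karpenter.k8s.aws/instance-generation": "2"},
}

# A subset of the requirements from the NodePool above.
REQUIREMENTS = [
    {"key": "kubernetes.io/arch", "operator": "In", "values": ["amd64"]},
    {"key": "karpenter.k8s.aws/instance-category", "operator": "In",
     "values": ["c", "m", "r"]},
    {"key": "karpenter.k8s.aws/instance-generation", "operator": "Gt",
     "values": ["2"]},
]


def satisfies(labels, requirement):
    """Return True if one label set meets one requirement."""
    value = labels.get(requirement["key"])
    if value is None:
        return False
    if requirement["operator"] == "In":
        return value in requirement["values"]
    if requirement["operator"] == "Gt":
        # Gt compares the label numerically against a single value.
        return int(value) > int(requirement["values"][0])
    raise ValueError(f"operator not handled in this sketch: {requirement['operator']}")


def eligible(candidates, requirements):
    """Names of candidates that meet every requirement, sorted for stable output."""
    return sorted(
        name for name, labels in candidates.items()
        if all(satisfies(labels, req) for req in requirements)
    )


print(eligible(CANDIDATES, REQUIREMENTS))
```

Each extra requirement shrinks the pool: in this sample the arm64, burstable, and generation-2 entries drop out. That is the trade-off to keep in mind when tightening requirements on a Spot NodePool.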

Scale-to-Zero: The Full Idle Stack

KEDA's minReplicaCount: 0 is the headline feature for event-driven workloads. When the event source goes quiet, KEDA removes every consumer pod. With Karpenter's consolidation set to WhenEmptyOrUnderutilized, the empty worker nodes get terminated shortly after.

The result: zero pods, zero worker nodes, zero infrastructure cost during idle periods. For workloads with clear off-peak windows (batch processing that runs overnight, event pipelines that spike during business hours), the savings are significant.

The trade-off is cold-start latency. When events resume, the full provisioning chain activates:

| Phase | Typical latency |
|---|---|
| KEDA detects events (polling interval) | 15-30s |
| Karpenter provisions node | 30-60s |
| Node joins cluster and becomes Ready | 10-20s |
| Pod pulls image and starts | 5-30s |
| Total cold start | ~60-140s |

For queue-based workloads, this is usually acceptable. Messages wait in the queue while capacity spins up. For latency-sensitive workloads, you have options:

- Reduce KEDA's pollingInterval from the default 30s to 10-15s for faster event detection.
- Keep activationQueueLength at the default of 0 so KEDA activates on the first message. Setting it higher adds a noise filter for sporadic single-message arrivals, but also adds latency for low-volume queues.
- Keep a small always-on node with overprovisioned pause pods. When real pods arrive, they evict the pause pods and schedule instantly while Karpenter provisions additional capacity in the background.
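
The cold-start budget from the table can be checked with a few lines of Python. This is a rough sketch: the phase ranges are copied from the table, and the "faster polling" row assumes that a 10-15s pollingInterval turns the detection phase into a 10-15s range, which is a simplification made here rather than a measured figure.

```python
# (best case, worst case) latency in seconds per phase, taken from the table.
PHASES = {
    "keda_detects_events": (15, 30),
    "karpenter_provisions_node": (30, 60),
    "node_becomes_ready": (10, 20),
    "pod_pulls_image_and_starts": (5, 30),
}


def cold_start_range(phases):
    """Sum the best-case and worst-case latencies across all phases."""
    best = sum(low for low, _ in phases.values())
    worst = sum(high for _, high in phases.values())
    return best, worst


print(cold_start_range(PHASES))  # (60, 140)

# Assumption: a shorter polling interval shortens only the detection phase.
faster_polling = dict(PHASES, keda_detects_events=(10, 15))
print(cold_start_range(faster_polling))  # (55, 125)
```

Under these assumptions, faster polling trims only a few seconds from the total. Node provisioning and image pull dominate the budget, which is why the pause-pod approach helps more for latency-sensitive workloads.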

A Complete SQS Reference Architecture

Here's how all the pieces fit together for an SQS-based event processing pipeline. This pattern comes from the AWS reference architecture and the KEDA SQS scaler documentation.

The ScaledObject

```yaml
# Adapted from KEDA SQS scaler docs (keda.sh/docs/2.19/scalers/aws-sqs)
apiVersion: keda.sh/v1alpha1
kind: TriggerAuthentication
metadata:
  name: keda-aws-credentials
  namespace: processing
spec:
  podIdentity:
    provider: aws
---
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: sqs-consumer-scaler
  namespace: processing
spec:
  scaleTargetRef:
    name: sqs-consumer
  minReplicaCount: 0
  maxReplicaCount: 50
  cooldownPeriod: 60
  pollingInterval: 15
  triggers:
    - type: aws-sqs-queue
      authenticationRef:
        name: keda-aws-credentials
      metadata:
        queueURL: https://sqs.eu-west-1.amazonaws.com/123456789012/orders
        queueLength: "5"
        awsRegion: "eu-west-1"
```

The queueLength: "5" means KEDA targets 5 messages per pod. If the queue holds 250 messages, KEDA scales to 50 replicas (the maxReplicaCount ceiling). The cooldownPeriod: 60 prevents KEDA from scaling back to zero too quickly after a burst, giving the consumer pods time to drain remaining messages.
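
The replica arithmetic can be sketched as follows. This is a simplified model, not KEDA's or the HPA's code: it ignores HPA tolerance, stabilization windows, and how in-flight messages are counted, and the function and parameter names are illustrative.

```python
import math


def desired_replicas(queue_depth, queue_length_target, min_replicas,
                     max_replicas, activation_threshold=0):
    """Approximate replica count for a queue-length trigger.

    At or below the activation threshold the workload stays at min_replicas.
    Above it, the target is ceil(depth / per-pod target), with at least one
    replica and at most max_replicas.
    """
    if queue_depth <= activation_threshold:
        return min_replicas
    wanted = math.ceil(queue_depth / queue_length_target)
    floor = max(min_replicas, 1)  # an active trigger needs at least one pod
    return max(floor, min(wanted, max_replicas))


# Values from the ScaledObject above: queueLength 5, min 0, max 50.
for depth in (0, 1, 12, 250, 1000):
    print(depth, desired_replicas(depth, 5, 0, 50))
```

With these settings, 12 messages ask for 3 pods, 250 messages land exactly on the 50-replica ceiling, and anything deeper stays clamped at 50, so the backlog simply takes longer to drain.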

TriggerAuthentication with podIdentity.provider: aws uses IAM Roles for Service Accounts (IRSA) to authenticate against SQS. No access keys stored in Secrets.

The Consumer Deployment

```yaml
# Adapted from karpenter-blueprints workload pattern for SQS consumer with scale-to-zero
apiVersion: apps/v1
kind: Deployment
metadata:
  name: sqs-consumer
  namespace: processing
spec:
  replicas: 0
  selector:
    matchLabels:
      app: sqs-consumer
  template:
    metadata:
      labels:
        app: sqs-consumer
    spec:
      nodeSelector:
        intent: apps
      tolerations:
        - key: "intent"
          operator: "Equal"
          value: "workload-split"
          effect: "NoSchedule"
      containers:
        - name: consumer
          image: myregistry/sqs-consumer:latest
          resources:
            requests:
              cpu: 256m
              memory: 512Mi
            limits:
              memory: 512Mi
```

The Deployment starts with replicas: 0. KEDA owns the replica count. The nodeSelector and tolerations match the Spot NodePool's labels and taints, ensuring burst pods land on Spot capacity.
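
How the nodeSelector and toleration interact with the NodePool's label and taint can be modeled in a few lines. The sketch below mirrors the standard Kubernetes matching rules in simplified form (it is not the scheduler's code). The `spot_node`, `consumer_pod`, and `api_pod` dictionaries are hypothetical stand-ins built from the manifests above.

```python
def tolerates(toleration, taint):
    """Simplified Kubernetes rule for whether a toleration matches a taint."""
    # An empty toleration effect matches every taint effect.
    if toleration.get("effect") and toleration["effect"] != taint["effect"]:
        return False
    if toleration.get("operator", "Equal") == "Exists":
        # Exists ignores the value; an empty key matches every taint key.
        return not toleration.get("key") or toleration["key"] == taint["key"]
    return (toleration.get("key") == taint["key"]
            and toleration.get("value", "") == taint.get("value", ""))


def can_schedule(pod_spec, node):
    """True if the node's labels satisfy nodeSelector and all blocking taints are tolerated."""
    selector = pod_spec.get("nodeSelector", {})
    labels_ok = all(node["labels"].get(k) == v for k, v in selector.items())
    blocking = [t for t in node["taints"]
                if t["effect"] in ("NoSchedule", "NoExecute")]
    taints_ok = all(
        any(tolerates(tol, taint) for tol in pod_spec.get("tolerations", []))
        for taint in blocking
    )
    return labels_ok and taints_ok


spot_node = {
    "labels": {"intent": "apps"},
    "taints": [{"key": "intent", "value": "workload-split", "effect": "NoSchedule"}],
}
consumer_pod = {
    "nodeSelector": {"intent": "apps"},
    "tolerations": [{"key": "intent", "operator": "Equal",
                     "value": "workload-split", "effect": "NoSchedule"}],
}
api_pod = {"nodeSelector": {"intent": "apps"}}  # no toleration

print(can_schedule(consumer_pod, spot_node))  # True
print(can_schedule(api_pod, spot_node))       # False
```

The two mechanisms do different jobs: the taint keeps pods without the toleration off these nodes, while the nodeSelector steers the consumer toward nodes carrying the label. A toleration alone permits placement but does not require it.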

Resource requests are important here. Karpenter uses them to determine what instance types to provision. If you don't set requests, Karpenter assumes zero resources needed and may bin-pack pods onto undersized instances.

How the pieces connect

When messages arrive in SQS, the chain executes: KEDA polls the queue every 15 seconds, detects the backlog, and scales the Deployment from 0 to N replicas. The pods go Pending because no Spot nodes exist. Karpenter evaluates the aggregate resource requests (N pods x 256m CPU, 512Mi memory), selects instance types from the c/m/r families that fit, and launches Spot instances. Nodes join the cluster, pods schedule, and processing begins.

When the queue drains, KEDA scales pods back to zero after the 60-second cooldown. Karpenter detects the empty Spot nodes and terminates them once they have been empty for one minute (consolidateAfter: 1m). Back to zero cost.

Gotchas

Consolidation fighting KEDA oscillation. During fluctuating load, KEDA might scale down pods, Karpenter removes nodes, then KEDA scales back up and Karpenter has to provision again. Tune consolidateAfter to be slightly longer than your expected event oscillation period. For workloads with spiky, unpredictable patterns, 2-5 minutes is safer than 1 minute.

Spot interruptions during event processing. EC2 gives a 2-minute warning before reclaiming Spot instances. Karpenter (with its interruption queue configured) handles this by pre-provisioning replacement nodes when it detects the interruption signal. For SQS workloads, this is mostly transparent: if a consumer pod is terminated, the message returns to the queue after the visibility timeout expires and another pod picks it up. For ScaledJobs processing one message per Job, set the karpenter.sh/do-not-disrupt: "true" annotation on long-running batch pods so consolidation does not evict them mid-job. The annotation only blocks voluntary disruption; it cannot stop a Spot reclaim, so those jobs still need to be safe to retry.
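
The visibility-timeout behavior that makes interruptions mostly transparent can be illustrated with a small in-memory model. This is a toy stand-in written for this article, not the SQS API or boto3: the `InMemoryQueue` class, its methods, and the explicit `now` clock are all hypothetical.

```python
class InMemoryQueue:
    """Toy queue that imitates SQS visibility-timeout semantics."""

    def __init__(self, visibility_timeout):
        self.visibility_timeout = visibility_timeout
        # message body -> earliest time at which it may be received again
        self._visible_at = {}

    def send(self, body, now=0):
        self._visible_at[body] = now

    def receive(self, now):
        """Return one visible message and hide it for the timeout, or None."""
        for body, visible_at in self._visible_at.items():
            if visible_at <= now:
                self._visible_at[body] = now + self.visibility_timeout
                return body
        return None

    def delete(self, body):
        """Acknowledge a message after successful processing."""
        self._visible_at.pop(body, None)


queue = InMemoryQueue(visibility_timeout=30)
queue.send("order-1")

first = queue.receive(now=0)        # pod A picks up the message
# Pod A's Spot node is reclaimed here, so delete() is never called.
during = queue.receive(now=10)      # still hidden from other consumers
retry = queue.receive(now=30)       # timeout expired, pod B receives it
queue.delete(retry)                 # pod B finishes and acknowledges
after = queue.receive(now=100)      # nothing left

print(first, during, retry, after)
```

The practical consequence is that processing must be idempotent, and the visibility timeout should exceed the normal processing time so that healthy consumers do not see duplicates.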

Mismatched timing parameters. KEDA's cooldownPeriod (default: 300s) and Karpenter's consolidateAfter (default: depends on consolidation policy) need to work together. If KEDA's cooldown is much longer than Karpenter's consolidation delay, idle pods keep their nodes alive and burn money until KEDA finally scales down. If it's much shorter, the pods disappear quickly but empty nodes sit around until Karpenter's delay expires. Align them: a 60-second cooldown pairs well with a 1-minute consolidation delay for burst workloads.
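
A back-of-the-envelope timeline shows how the two timers stack up. The sketch assumes they run strictly in sequence and ignores polling jitter, pod termination grace periods, and node drain time, so treat the numbers as a lower bound rather than a prediction.

```python
def scale_down_timeline(cooldown_period, consolidate_after):
    """Approximate seconds from 'queue empty' to each scale-down milestone."""
    pods_gone = cooldown_period                 # KEDA waits, then scales to zero
    nodes_gone = pods_gone + consolidate_after  # Karpenter waits on the empty nodes
    return [
        (0, "queue reported empty"),
        (pods_gone, "KEDA scales the Deployment to zero"),
        (nodes_gone, "Karpenter removes the empty nodes"),
    ]


# Aligned settings from this article versus KEDA's default cooldown.
for cooldown in (60, 300):
    print(f"cooldownPeriod={cooldown}s, consolidateAfter=60s")
    for seconds, event in scale_down_timeline(cooldown, 60):
        print(f"  t+{seconds:>3}s  {event}")
```

With 60s and 1m, the nodes are gone roughly two minutes after the queue empties. Leaving KEDA's cooldown at its 300s default stretches that tail to about six minutes of paid idle capacity.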

NodePool limits are hard caps. If your NodePool's CPU limit is reached, Karpenter stops provisioning. Pods stay Pending even though KEDA keeps scaling the Deployment. You'll see pods stuck in Pending state with events showing "no nodepool is available." Size your NodePool limits for your expected peak, add headroom, and monitor the karpenter_nodepools_usage metrics.

maxReplicaCount vs. NodePool capacity. The ScaledObject's maxReplicaCount limits pods; the NodePool's spec.limits constrains infrastructure. Both need to be sized for the same burst scenario. If 50 pods need 256m CPU each, that's 12.8 CPUs. If your NodePool limit is 10 CPUs, you'll never run all 50 pods simultaneously.
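
The sizing check can be scripted so it runs whenever either number changes. The sketch below parses only the Kubernetes quantity suffixes used in this article (m, k, Ki, Mi, Gi) and treats the NodePool limit as if it were entirely available to pods. That is optimistic, since the limit counts node capacity and nodes also carry system overhead and DaemonSets. The helper names are illustrative.

```python
from decimal import Decimal

# Only the suffixes that appear in this article; not a full quantity parser.
SUFFIXES = {
    "Ki": Decimal(1024),
    "Mi": Decimal(1024) ** 2,
    "Gi": Decimal(1024) ** 3,
    "k": Decimal(1000),
    "m": Decimal("0.001"),
}


def parse_quantity(quantity):
    """Convert a string like '256m', '1k', or '512Mi' to a Decimal."""
    for suffix, factor in SUFFIXES.items():
        if quantity.endswith(suffix):
            return Decimal(quantity[: -len(suffix)]) * factor
    return Decimal(quantity)


def max_pods(pool_limits, pod_requests):
    """Upper bound on pods that fit under the limits, per the tightest resource."""
    return min(
        int(parse_quantity(pool_limits[name]) // parse_quantity(pod_requests[name]))
        for name in pod_requests
        if name in pool_limits
    )


requests = {"cpu": "256m", "memory": "512Mi"}

# 50 replicas at 256m each.
print(parse_quantity(requests["cpu"]) * 50)                  # 12.800 CPUs

# A 10-CPU limit cannot hold all 50 replicas.
print(max_pods({"cpu": "10"}, requests))                     # 39

# The blueprint limits (cpu: 1k, memory: 500Gi) are memory-bound for this pod shape.
print(max_pods({"cpu": "1k", "memory": "500Gi"}, requests))  # 1000
```

Comparing `max_pods` against maxReplicaCount gives a quick signal that the two caps describe the same burst. If the first number is smaller, some replicas will stay Pending at peak.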

Wrap-up

KEDA and Karpenter solve different halves of the same problem. KEDA makes pod scaling event-aware: scale on queue depth, not CPU utilization. Karpenter makes node scaling fast and flexible: provision the right compute in seconds, consolidate aggressively when it's no longer needed. Together, they create a fully reactive stack that goes from zero to peak capacity and back without manual intervention.

Start with a single event source (SQS, Kafka, Prometheus metric), a ScaledObject with minReplicaCount: 0, and a Spot NodePool with WhenEmptyOrUnderutilized consolidation. Tune the timing parameters (pollingInterval, cooldownPeriod, consolidateAfter) for your latency tolerance, then expand to additional event sources as the pattern proves out. The infrastructure cost during idle periods should be close to zero. The burst capacity should be limited only by what you're willing to fund.

This post is part of the KEDA — Event-Driven Autoscaling from Zero to Production collection (6 of 7)
