Observability and Troubleshooting for KEDA

Your queue depth is climbing, pods aren't scaling, and the KEDA operator logs show nothing obvious. Without metrics, you're guessing. With them, you know exactly which scaler failed, why, and how long ago.

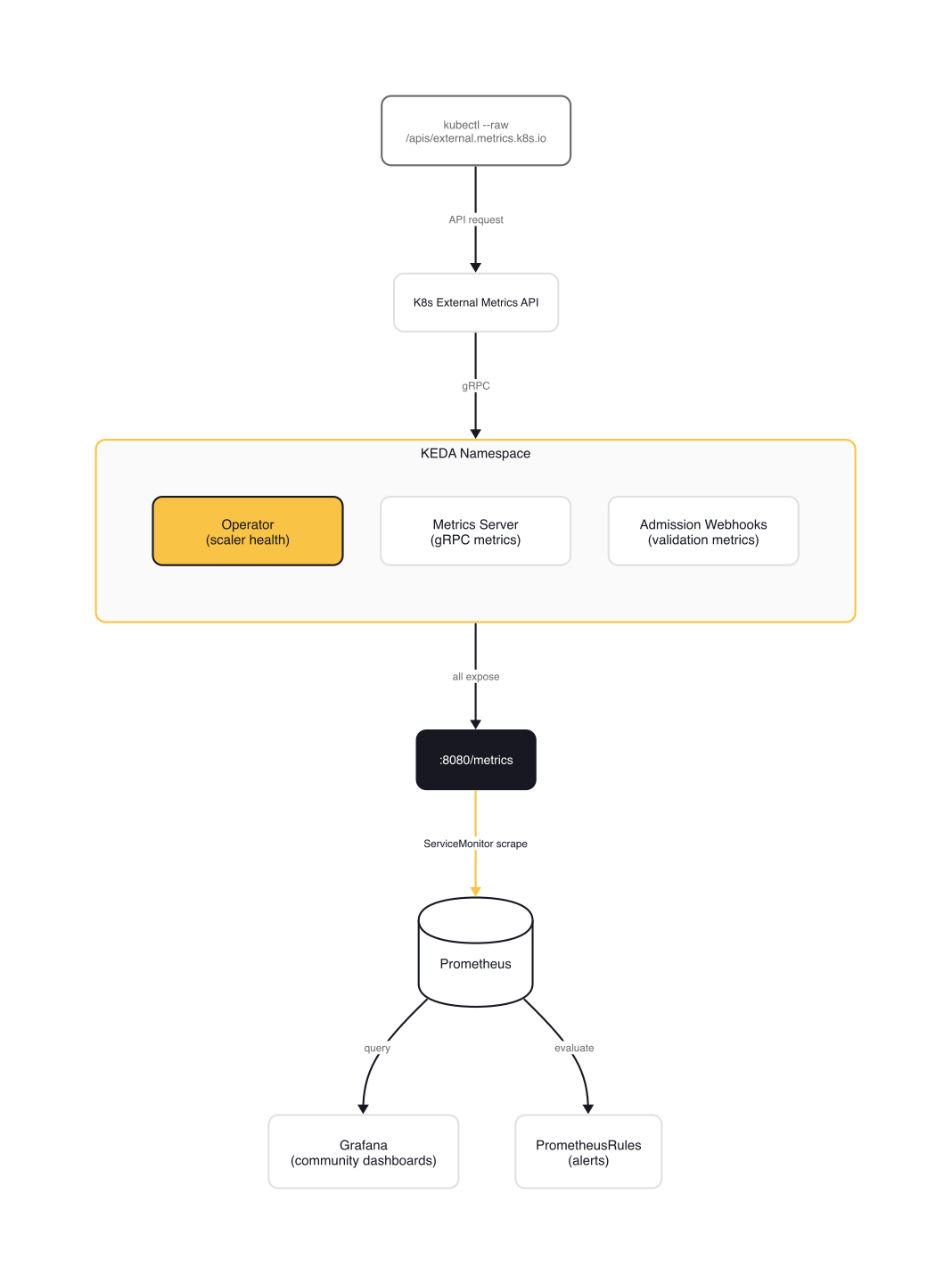

KEDA exposes Prometheus metrics across its three components: the operator, the metrics server, and the admission webhooks. Each serves a different role in the scaling pipeline, and each has its own set of metrics worth monitoring. The goal: make KEDA's scaling decisions visible, alertable, and debuggable before your users notice something is wrong.

Enabling Prometheus Metrics

KEDA ships with Prometheus metrics disabled by default. Every prometheus.*.enabled flag in the Helm chart is false out of the box. Deploy KEDA with default values and you get zero metrics, not even from the operator.

To enable scraping across all three components:

```yaml
# kedacore/charts/keda/values.yaml (prometheus section)
prometheus:
  metricServer:
    enabled: true
    port: 8080
    serviceMonitor:
      enabled: true
      additionalLabels:
        release: prometheus # must match your Prometheus operator's serviceMonitorSelector
  operator:
    enabled: true
    port: 8080
    serviceMonitor:
      enabled: true
      additionalLabels:
        release: prometheus
  webhooks:
    enabled: true
    port: 8080
    serviceMonitor:
      enabled: true
      additionalLabels:
        release: prometheus
```

Each component (operator, metrics server, webhooks) has independent configuration for metric exposure, ServiceMonitor creation, and PodMonitor creation. The additionalLabels field is critical: if your Prometheus instance uses a serviceMonitorSelector (most Prometheus Operator installations do), the labels must match or the ServiceMonitor will be silently ignored.

warning: Setting prometheus.operator.enabled: true without prometheus.operator.serviceMonitor.enabled: true exposes the metrics endpoint but does not create a ServiceMonitor. Prometheus won't scrape it unless you create the ServiceMonitor manually or configure Pod annotation-based scraping.
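The following sketch makes the additionalLabels rule concrete. It shows how a matchLabels-style selector decides whether a ServiceMonitor is picked up. It is an illustration, not Prometheus Operator code. The function name selector_matches and the example labels are hypothetical, and the sketch models only matchLabels. Real Kubernetes selectors can also carry matchExpressions.

```python
# Hypothetical sketch: matchLabels-only selection, as used by a Prometheus
# resource's serviceMonitorSelector. matchExpressions are not modeled here.


def selector_matches(match_labels: dict[str, str], object_labels: dict[str, str]) -> bool:
    """True if every key/value pair in the selector is present on the object."""
    return all(object_labels.get(key) == value for key, value in match_labels.items())


if __name__ == "__main__":
    selector = {"release": "prometheus"}  # example serviceMonitorSelector.matchLabels

    # ServiceMonitor rendered with additionalLabels: {release: prometheus}
    with_labels = {"app.kubernetes.io/name": "keda-operator", "release": "prometheus"}
    # ServiceMonitor rendered without additionalLabels
    without_labels = {"app.kubernetes.io/name": "keda-operator"}

    print(selector_matches(selector, with_labels))     # True: Prometheus scrapes it
    print(selector_matches(selector, without_labels))  # False: silently ignored
```

A label that matches on the key but not the value counts as a miss. For example, release: kube-prometheus does not satisfy release: prometheus. That is the usual reason a correctly deployed ServiceMonitor never gets scraped.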

If you're not using the Prometheus Operator, you can skip ServiceMonitors and use Pod annotations instead:

```yaml
# Pod annotation-based scraping (alternative to ServiceMonitor)
podAnnotations:
  keda:
    prometheus.io/scrape: "true"
    prometheus.io/port: "8080"
    prometheus.io/path: "/metrics"
```
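The prometheus.io/* annotations are a convention, not a built-in Prometheus feature. They only take effect if your scrape configuration has a Kubernetes Pod job that relabels on them.

The Python sketch below shows the decision such a job typically makes. Several details are assumptions modeled on the widely used example job, not KEDA behavior: the function name, the http scheme, and the fallback to the container's declared port.

```python
from typing import Optional

# Hypothetical sketch of annotation-driven scraping. Prometheus itself ignores these
# annotations unless a kubernetes_sd_configs Pod job relabels on them.


def scrape_url(pod_ip: str, container_port: int, annotations: dict[str, str]) -> Optional[str]:
    """Return the URL a typical annotation-driven job would scrape, or None if skipped."""
    if annotations.get("prometheus.io/scrape") != "true":
        return None  # the pod has not opted in
    # The port annotation overrides the declared container port (assumed behavior).
    port = annotations.get("prometheus.io/port", str(container_port))
    path = annotations.get("prometheus.io/path", "/metrics")
    return f"http://{pod_ip}:{port}{path}"


if __name__ == "__main__":
    keda_annotations = {
        "prometheus.io/scrape": "true",
        "prometheus.io/port": "8080",
        "prometheus.io/path": "/metrics",
    }
    print(scrape_url("10.0.0.12", 9000, keda_annotations))  # http://10.0.0.12:8080/metrics
    print(scrape_url("10.0.0.12", 9000, {}))                # None
```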

Key Metrics Reference

All three KEDA components expose metrics on port 8080 at /metrics. The operator metrics are the ones you'll reference most often.

Operator Metrics

| Metric | Type | What it tells you |
|---|---|---|
| keda_scaler_active | Gauge | Whether a scaler is active (1) or inactive (0). First thing to check when scaling stops. |
| keda_scaler_metrics_value | Gauge | The current metric value per scaler. This is what the HPA sees when computing target replicas. |
| keda_scaler_metrics_latency_seconds | Gauge | Time to retrieve the metric from each scaler. Spikes here often mean the external system is slow, not KEDA. |
| keda_scaler_detail_errors_total | Counter | Errors per individual scaler: auth failures, unreachable endpoints, malformed responses. |
| keda_scaled_object_errors_total | Counter | Errors aggregated per ScaledObject. |
| keda_scaled_job_errors_total | Counter | Errors aggregated per ScaledJob. |
| keda_scaled_object_paused | Gauge | Whether a ScaledObject is paused (1) or not paused (0). Catches forgotten maintenance pauses. |
| keda_internal_scale_loop_latency_seconds | Gauge | Deviation between expected and actual scale loop execution. Growing values mean the operator is overloaded. |
| keda_resource_registered_total | Gauge | Total KEDA custom resources (ScaledObjects, ScaledJobs, and so on) per namespace. Useful for inventory and capacity planning. |
| keda_trigger_registered_total | Gauge | Total triggers per type. Shows your scaler distribution. |
| keda_build_info | Info | Static build information (version, git commit, Go runtime). |

note: When you deploy KEDA without any ScaledObjects or ScaledJobs, the only metric visible is keda_build_info. Scaler-related metrics only appear once at least one ScaledObject with active triggers is deployed. This catches people off guard: they enable Prometheus scraping, see only keda_build_info, and assume the export is broken.
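If you are not sure which case you are in, look at the raw /metrics payload rather than at dashboards. Below is a minimal Python sketch. It assumes you have already fetched the text, for example with kubectl port-forward to the operator's metrics port followed by an HTTP GET on /metrics. keda_metric_names is a hypothetical helper, and the sample payload is made up.

```python
def keda_metric_names(exposition: str) -> set[str]:
    """Collect the distinct keda_* metric names in a Prometheus text-format payload."""
    names = set()
    for line in exposition.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and # HELP / # TYPE comments
        # The metric name ends at the first "{" (labels) or space (value).
        name = line.split("{", 1)[0].split(" ", 1)[0]
        if name.startswith("keda_"):
            names.add(name)
    return names


if __name__ == "__main__":
    payload = (
        "# HELP keda_build_info Info metric\n"
        "# TYPE keda_build_info gauge\n"
        'keda_build_info{version="2.x"} 1\n'
        "go_goroutines 42\n"
    )
    found = keda_metric_names(payload)
    if found == {"keda_build_info"}:
        print("Only build info: no ScaledObjects yet, or scaler metrics not populated")
```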

Metrics Server Metrics

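The metrics server exposes gRPC client metrics for its internal communication with the operator. The metrics server fetches the metric values it serves through the external metrics API from the operator over this channel:

- keda_internal_metricsservice_grpc_client_started_total and keda_internal_metricsservice_grpc_client_handled_total: request counts
- keda_internal_metricsservice_grpc_client_handling_seconds: response latency histogram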

These matter when debugging metric delivery failures. If the operator collects metrics but the HPA doesn't see them, the gRPC channel between operator and metrics server is the next place to look.

Admission Webhooks Metrics

- keda_webhook_scaled_object_validation_total: total validation requests
- keda_webhook_scaled_object_validation_errors: validation failures

These are mostly relevant during rollout of new ScaledObject configurations. A spike in validation errors means manifests are being rejected. The cause is either a schema problem or a rule the webhook enforces, such as two ScaledObjects targeting the same workload.

Grafana Dashboards

KEDA offers a premade Grafana dashboard, and the community has built richer alternatives.

Official Dashboard

The KEDA project maintains a dashboard at kedacore/keda/config/grafana/keda-dashboard.json. It has two sections: metrics server visualization (gRPC request rates, latency) and scale target replica changes over time. Template variables include datasource, namespace, scaledObject, scaledJob, scaler, and metric.

The limitation: it focuses on the metrics server's internal gRPC metrics, not on the operator-level scaler health metrics that you need for day-to-day monitoring. You won't see keda_scaler_active or keda_scaler_detail_errors_total on this dashboard.

Community Dashboards

The kubernetes-autoscaling-mixin project provides two dashboards that are more useful for operations:

Scaled Object dashboard (Grafana ID 23951):

- Total ScaledObject count and status overview

- Per-object scaler metric values and collection latency

- Error rates per ScaledObject

- Direct links to the HPA dashboard for deeper scaling insights

Scaled Job dashboard (Grafana ID 23952):

- Same structure as Scaled Object, tailored for ScaledJobs

- Links to the Kubernetes Workload dashboard for job resource consumption

Import them directly from Grafana Labs. They require the KEDA operator metrics to be enabled (the Helm prometheus.operator.enabled: true flag covered earlier).

If you're building your own panels, these PromQL queries cover the basics:

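```promql
# Scaler health overview: which scalers are active?
# (KEDA's namespace label arrives as exported_namespace, as in the alerts below.)
keda_scaler_active{exported_namespace="$namespace", scaledObject="$scaledObject"}

# Metric collection latency per scaler (p99 over 5m).
# keda_scaler_metrics_latency_seconds is a gauge, not a histogram,
# so use quantile_over_time rather than histogram_quantile.
quantile_over_time(0.99, keda_scaler_metrics_latency_seconds[5m])

# Errors per ScaledObject over the last hour
sum(increase(keda_scaled_object_errors_total[1h])) by (scaledObject, exported_namespace)
```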

Start with the community dashboards for daily operations. Import the official dashboard if you need to debug the gRPC channel between operator and metrics server.
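The error query relies on increase() because the raw counter value is not an error rate. A counter that reached 7 yesterday and has not moved since still reads 7.

The Python sketch below illustrates the core idea behind increase(): sum the deltas between samples, and treat any drop as a counter reset (for example, after the operator pod restarts). It is deliberately simplified. Real Prometheus also extrapolates to the edges of the range window, so its results can be non-integer. counter_increase is a hypothetical name.

```python
def counter_increase(samples: list[float]) -> float:
    """Sum the growth of a counter across samples, handling resets (no extrapolation)."""
    total = 0.0
    for prev, cur in zip(samples, samples[1:]):
        if cur >= prev:
            total += cur - prev
        else:
            # Counter reset: the process restarted and counted up from zero to cur.
            total += cur
    return total


if __name__ == "__main__":
    print(counter_increase([7, 7, 7]))        # 0.0: old errors, nothing new
    print(counter_increase([3, 3, 5, 1, 2]))  # 4.0: +2, reset to 1, +1
```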

Prometheus Alerts

The same kubernetes-autoscaling-mixin project provides five production-ready alerts for KEDA. Here are the PromQL expressions you'll deploy:

```yaml
# adinhodovic/kubernetes-autoscaling-mixin/prometheus_alerts.yaml (KEDA rules)
- alert: KedaScaledObjectErrors
  expr: |
    sum(
      increase(
        keda_scaled_object_errors_total{
          job="keda-operator"
        }[10m]
      )
    ) by (cluster, job, exported_namespace, scaledObject) > 0
  for: 1m
  labels:
    severity: warning
  annotations:
    description: >-
      KEDA scaled objects are experiencing errors.
      Check the scaled object {{ $labels.scaledObject }}
      in namespace {{ $labels.exported_namespace }}.
- alert: KedaScalerLatencyHigh
  expr: |
    avg(
      keda_scaler_metrics_latency_seconds{
        job="keda-operator"
      }
    ) by (cluster, job, exported_namespace, scaledObject, scaler) > 5
  for: 10m
  labels:
    severity: warning
  annotations:
    description: >-
      Metric latency for scaler {{ $labels.scaler }}
      for the object {{ $labels.scaledObject }}
      has exceeded acceptable limits.
- alert: KedaScaledObjectPaused
  expr: |
    max(
      keda_scaled_object_paused{
        job="keda-operator"
      }
    ) by (cluster, job, exported_namespace, scaledObject) > 0
  for: 25h
  labels:
    severity: warning
  annotations:
    description: >-
      The scaled object {{ $labels.scaledObject }}
      in namespace {{ $labels.exported_namespace }}
      is paused for longer than 25h.
- alert: KedaScalerDetailErrors
  expr: |
    sum(
      increase(
        keda_scaler_detail_errors_total{
          job="keda-operator"
        }[10m]
      )
    ) by (cluster, job, exported_namespace, scaledObject, type, scaler) > 0
  for: 1m
  labels:
    severity: warning
  annotations:
    description: >-
      Errors in KEDA scaler {{ $labels.scaler }}
      for {{ $labels.scaledObject }}
      in namespace {{ $labels.exported_namespace }}.
```

The full set also includes KedaScaledJobErrors (same pattern as KedaScaledObjectErrors but for ScaledJobs).

Tuning these thresholds: The 5-second latency threshold on KedaScalerLatencyHigh works for fast scalers like Prometheus and Redis. SQS and Kafka scalers involve API calls that can take longer under load. Adjust per scaler type. The 25-hour KedaScaledObjectPaused threshold catches maintenance pauses that were never unpaused. Shorten it if you don't routinely pause ScaledObjects for full-day windows.
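The for: field is what makes the 25-hour pause threshold work. The expression has to be true on every evaluation for the whole duration before the alert fires, and a single false evaluation resets the clock.

Below is a minimal Python sketch of that pending-to-firing logic. It assumes evaluations arrive as (timestamp in seconds, expression result) pairs. firing_times is a hypothetical name, and the sketch ignores the per-series label tracking the real rule evaluator does.

```python
from typing import Iterable, Iterator, Tuple


def firing_times(evaluations: Iterable[Tuple[int, bool]], for_seconds: int) -> Iterator[int]:
    """Yield the evaluation timestamps at which the alert is firing."""
    pending_since = None
    for ts, expr_true in evaluations:
        if not expr_true:
            pending_since = None  # any false evaluation resets the pending timer
            continue
        if pending_since is None:
            pending_since = ts  # alert becomes pending
        if ts - pending_since >= for_seconds:
            yield ts  # held long enough: firing


if __name__ == "__main__":
    hour = 3600
    # ScaledObject paused continuously, evaluated hourly for 27 hours.
    paused = [(h * hour, True) for h in range(27)]
    print(list(firing_times(paused, 25 * hour)))  # [90000, 93600]

    # Unpaused briefly at hour 24: the timer resets, nothing fires by hour 26.
    blip = [(h * hour, h != 24) for h in range(27)]
    print(list(firing_times(blip, 25 * hour)))  # []
```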

You can deploy these using the Helm chart's built-in PrometheusRules support:

```yaml
# kedacore/charts/keda/values.yaml (prometheusRules section)
prometheus:
  operator:
    prometheusRules:
      enabled: true
      alerts:
        - alert: KedaScaledObjectErrors
          expr: sum(increase(keda_scaled_object_errors_total[10m])) by (scaledObject) > 0
          for: 1m
          labels:
            severity: warning
```

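This simplified example groups only by scaledObject. Two ScaledObjects with the same name in different namespaces therefore collapse into one series, and the alert can't tell you which one is failing. The community version groups by (cluster, job, exported_namespace, scaledObject). Keep that full label set if you have ScaledObjects in more than one namespace or cluster.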

Or import the community alerts file directly into your Prometheus deployment.
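The Python sketch below shows why the label set matters. It applies what sum ... by (...) does to two ScaledObjects that share a name in different namespaces. The sum_by helper and the sample series are hypothetical. The point is only that grouping by scaledObject alone merges them.

```python
from collections import defaultdict


def sum_by(series: list[tuple[dict[str, str], float]], labels: list[str]) -> dict[tuple[str, ...], float]:
    """Group series by the given label names and sum their values, like PromQL sum by (...)."""
    grouped: dict[tuple[str, ...], float] = defaultdict(float)
    for series_labels, value in series:
        # A missing label groups as the empty string, as it does in PromQL.
        key = tuple(series_labels.get(name, "") for name in labels)
        grouped[key] += value
    return dict(grouped)


if __name__ == "__main__":
    # increase(keda_scaled_object_errors_total[10m]) for two same-named objects
    errors = [
        ({"exported_namespace": "team-a", "scaledObject": "worker"}, 3.0),
        ({"exported_namespace": "team-b", "scaledObject": "worker"}, 0.0),
    ]
    print(sum_by(errors, ["scaledObject"]))
    # {('worker',): 3.0}  -> fires, but which namespace?
    print(sum_by(errors, ["exported_namespace", "scaledObject"]))
    # {('team-a', 'worker'): 3.0, ('team-b', 'worker'): 0.0}
```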

Querying Metrics with kubectl

You don't always need Prometheus to check what KEDA sees. The external metrics API is queryable directly with kubectl.

List all external metrics KEDA exposes:

```shell
$ kubectl get --raw "/apis/external.metrics.k8s.io/v1beta1"
{
  "kind": "APIResourceList",
  "apiVersion": "v1",
  "groupVersion": "external.metrics.k8s.io/v1beta1",
  "resources": [
    {
      "name": "externalmetrics",
      "singularName": "",
      "namespaced": true,
      "kind": "ExternalMetricValueList",
      "verbs": ["get"]
    }
  ]
}
```

Query a specific metric value for a ScaledObject:

```shell
$ kubectl get --raw "/apis/external.metrics.k8s.io/v1beta1/namespaces/production/s0-prometheus-http_requests_per_second?labelSelector=scaledobject.keda.sh%2Fname%3Dapi-scaler"
{
  "kind": "ExternalMetricValueList",
  "apiVersion": "external.metrics.k8s.io/v1beta1",
  "metadata": {},
  "items": [
    {
      "metricName": "s0-prometheus-http_requests_per_second",
      "metricLabels": null,
      "timestamp": "2026-03-31T10:15:00Z",
      "value": "42"
    }
  ]
}
```
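The value field is a Kubernetes quantity string, so fractional metric values can come back with a suffix, for example 500m rather than 0.5. If you script these checks, parse the value instead of calling float() on it directly.

Below is a minimal Python sketch that handles a common subset of quantity suffixes. It is not a full implementation of the Kubernetes quantity grammar. parse_quantity and metric_values are hypothetical names.

```python
import json
from decimal import Decimal

# Subset of Kubernetes quantity suffixes: common binary ones and decimal SI.
_BINARY = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40}
_DECIMAL = {"n": "1e-9", "u": "1e-6", "m": "1e-3", "k": "1e3", "M": "1e6", "G": "1e9", "T": "1e12"}


def parse_quantity(quantity: str) -> Decimal:
    """Convert a quantity string such as "42", "500m" or "2Ki" to a Decimal."""
    for suffix, factor in _BINARY.items():  # check two-letter suffixes first
        if quantity.endswith(suffix):
            return Decimal(quantity[: -len(suffix)]) * factor
    if quantity and quantity[-1] in _DECIMAL:
        return Decimal(quantity[:-1]) * Decimal(_DECIMAL[quantity[-1]])
    return Decimal(quantity)  # plain number, including exponent form like "1e3"


def metric_values(payload: str) -> list[tuple[str, Decimal]]:
    """Extract (metricName, value) pairs from an ExternalMetricValueList JSON document."""
    document = json.loads(payload)
    return [(item["metricName"], parse_quantity(item["value"])) for item in document.get("items", [])]


if __name__ == "__main__":
    # Trimmed copy of the response shown above.
    sample = '{"kind": "ExternalMetricValueList", "items": [{"metricName": "s0-prometheus-http_requests_per_second", "value": "42"}]}'
    for name, value in metric_values(sample):
        print(name, value)  # s0-prometheus-http_requests_per_second 42
    print(parse_quantity("500m"))  # 0.500
```

To run it against a live cluster, pipe the kubectl get --raw output into a small script that calls metric_values(sys.stdin.read()).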

To find the metric names KEDA generates for a ScaledObject:

```shell
$ kubectl get scaledobject api-scaler -n production -o jsonpath='{.status.externalMetricNames}'
["s0-prometheus-http_requests_per_second"]
```

warning: CPU and memory scalers do not register as external metrics. They use the metrics.k8s.io API (provided by metrics-server), not external.metrics.k8s.io. Querying the external metrics endpoint for CPU/memory scalers returns nothing.

These kubectl queries are useful for quick sanity checks: if KEDA reports a metric value of 0 but your Prometheus shows 42, the problem is in the scaler configuration (wrong query, wrong server address, auth failure), not in the HPA.

Troubleshooting Common Failures

ScaledObject Not Scaling

Check keda_scaler_active first. A value of 0 means the scaler is inactive. Either the metric is at or below its activation threshold (there is genuinely no work), or the external metric source is unreachable or returning errors.

```shell
$ kubectl describe scaledobject api-scaler -n production
# Look for:
# Conditions:
#   Type    Status  Reason
#   Active  False   FailedGetExternalMetric
#   Ready   True    ScaledObjectReady
```

If Active is False, check the operator logs:

```shell
$ kubectl logs deployment/keda-operator -n keda | grep "api-scaler"
```

Common causes: expired credentials in a TriggerAuthentication, wrong serverAddress in a Prometheus trigger, network policies blocking egress from the KEDA namespace.
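When you check many ScaledObjects, reading kubectl describe output by eye gets slow. The minimal Python sketch below summarizes status.conditions from kubectl get scaledobject <name> -o json output. summarize_conditions is a hypothetical helper. The sample object only reuses the condition values from the describe output above.

```python
import json


def summarize_conditions(scaled_object: dict) -> dict[str, tuple[str, str]]:
    """Map each condition type (Ready, Active, ...) to its (status, reason)."""
    conditions = scaled_object.get("status", {}).get("conditions", [])
    return {c["type"]: (c.get("status", ""), c.get("reason", "")) for c in conditions}


if __name__ == "__main__":
    # Trimmed, illustrative object; in practice load the kubectl JSON output instead.
    sample = json.loads("""
    {"metadata": {"name": "api-scaler", "namespace": "production"},
     "status": {"conditions": [
        {"type": "Ready", "status": "True", "reason": "ScaledObjectReady"},
        {"type": "Active", "status": "False", "reason": "FailedGetExternalMetric"}]}}
    """)
    summary = summarize_conditions(sample)
    if summary.get("Active", ("", ""))[0] == "False":
        print("inactive:", summary["Active"][1])  # inactive: FailedGetExternalMetric
```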

"Only keda_build_info Visible"

This is the most frequently reported KEDA metrics issue (GitHub issue #5599). Two possible causes:

- No scalers deployed yet. Scaler metrics only appear after at least one ScaledObject with active triggers exists. Deploy a ScaledObject and the remaining metrics populate within one scale loop interval (default 30s).

- Helm prometheus.*.enabled not set. The default is false for all three components. Verify with helm get values keda -n keda and confirm the prometheus section is configured.

Scaler Errors Spiking

Watch keda_scaler_detail_errors_total increasing. This counter increments per scaler, with labels for the scaler name and ScaledObject.

Common causes:

- Expired credentials: TriggerAuthentication referencing a Secret with rotated credentials

- Endpoint unreachable: Prometheus server moved, SQS endpoint changed, DNS resolution failing

- Query returning no data: PromQL query valid but matching zero time series (label mismatch after a deployment change)

```shell
$ kubectl get triggerauthentication -n production -o yaml
# Verify secretTargetRef points to a current, valid Secret
```
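Eyeballing the YAML works for one TriggerAuthentication. For a namespace full of them, a small script catches references to Secrets or keys that no longer exist.

Below is a minimal Python sketch. It assumes you have already collected the TriggerAuthentication object and a map of Secret names to their data keys, for example from kubectl get secret -o json. check_secret_refs is a hypothetical helper, and the object and Secret names are illustrative. The script cannot tell whether a credential that is present is still valid, so rotated-but-wrong values still need a manual check.

```python
def check_secret_refs(trigger_auth: dict, secret_keys: dict[str, set[str]]) -> list[str]:
    """Report secretTargetRef entries whose Secret or key is missing."""
    problems = []
    for ref in trigger_auth.get("spec", {}).get("secretTargetRef", []):
        param, name, key = ref.get("parameter"), ref.get("name"), ref.get("key")
        if name not in secret_keys:
            problems.append(f"{param}: Secret {name!r} not found")
        elif key not in secret_keys[name]:
            problems.append(f"{param}: key {key!r} missing from Secret {name!r}")
    return problems


if __name__ == "__main__":
    # Illustrative objects; all names are hypothetical.
    trigger_auth = {
        "spec": {
            "secretTargetRef": [
                {"parameter": "bearerToken", "name": "prom-auth", "key": "token"},
                {"parameter": "ca", "name": "prom-ca", "key": "ca.crt"},
            ]
        }
    }
    secrets = {"prom-auth": {"token-v2"}}  # key renamed during rotation; prom-ca deleted
    for problem in check_secret_refs(trigger_auth, secrets):
        print(problem)
    # bearerToken: key 'token' missing from Secret 'prom-auth'
    # ca: Secret 'prom-ca' not found
```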

Scale Loop Latency Growing

keda_internal_scale_loop_latency_seconds measures how far behind the scale loop is running compared to its expected interval. A value of 0 means on-time. Growing values mean the operator is spending too long collecting metrics.

Check per-scaler latency to find the bottleneck:

```promql
# PromQL: which scaler is slow?
topk(5, keda_scaler_metrics_latency_seconds)
```

Common causes: too many ScaledObjects per operator instance, slow external APIs, network latency between KEDA and the metric source. Consider splitting workloads across namespaces, or raising pollingInterval on the ScaledObjects whose scalers are slow.

ScaledObject Stuck at minReplicaCount

The scaler returns a value, but the deployment either sits at minReplicaCount instead of scaling up, or stays at one replica instead of scaling to zero. Use the kubectl metric query to see what KEDA actually sees:

```shell
$ kubectl get --raw "/apis/external.metrics.k8s.io/v1beta1/namespaces/production/s0-prometheus-http_requests_per_second?labelSelector=scaledobject.keda.sh%2Fname%3Dapi-scaler"
```

Compare this value against your ScaledObject's threshold. With the default AverageValue metric type, the HPA asks for roughly the metric value divided by the threshold, rounded up. A metric value of 1 against a threshold of 50 therefore leaves you at minReplicaCount.

If the metric value is 0 and pods are still running, check minReplicaCount first. KEDA defaults it to 0, so a value of 1 was set explicitly. Also remember that KEDA waits for cooldownPeriod (300 seconds by default) before scaling to zero.
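The threshold arithmetic fits in a few lines. This is a simplified model that assumes the AverageValue metric type. The HPA asks for ceil(metric value / threshold) replicas, clamped to the min and max. KEDA only takes the workload to zero when minReplicaCount is 0 and the metric is at or below the trigger's activation threshold.

The sketch ignores the HPA's tolerance band, stabilization windows, and KEDA's cooldownPeriod. desired_replicas is a hypothetical name.

```python
import math


def desired_replicas(
    metric_value: float,
    threshold: float,
    min_replicas: int,
    max_replicas: int,
    activation_threshold: float = 0.0,
) -> int:
    """Simplified replica target for one AverageValue trigger (no tolerance or cooldown)."""
    if min_replicas == 0 and metric_value <= activation_threshold:
        return 0  # inactive trigger with minReplicaCount 0: KEDA scales to zero
    desired = math.ceil(metric_value / threshold)  # HPA AverageValue formula
    # Once active, at least one replica; then clamp to [min_replicas, max_replicas].
    return max(min_replicas, min(max_replicas, max(desired, 1)))


if __name__ == "__main__":
    print(desired_replicas(1, 50, min_replicas=1, max_replicas=10))    # 1: stuck at min
    print(desired_replicas(120, 50, min_replicas=1, max_replicas=10))  # 3
    print(desired_replicas(0, 50, min_replicas=0, max_replicas=10))    # 0
```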

Gotchas

- All Prometheus flags default to false. Deploying KEDA with default Helm values gives you zero metrics. Enable all three: prometheus.operator.enabled, prometheus.metricServer.enabled, prometheus.webhooks.enabled.
- keda_build_info is a red herring. It's the only metric visible until you deploy at least one ScaledObject. People see it and assume the rest is broken. It's not. Deploy a ScaledObject and wait 30 seconds.
- The official Grafana dashboard focuses on gRPC metrics. The premade keda-dashboard.json visualizes the metrics server's internal gRPC communication, not the operator-level scaler health metrics you'll need daily. The community dashboards from kubernetes-autoscaling-mixin are more operationally useful.
- Scaler latency != KEDA latency. A spike in keda_scaler_metrics_latency_seconds usually means the external system (Prometheus, SQS, Kafka) is slow, not that KEDA has a bug. Check the external system first.
- CPU/memory scalers use a different API. They go through metrics.k8s.io, not external.metrics.k8s.io. Querying the external metrics endpoint for them returns nothing, which looks like a broken ScaledObject but is actually expected behavior.

Wrap-up

Enable metrics scraping from day one. Deploy the community dashboards and alerts before you have your first scaling incident, not after.

With these metrics and alerts in place, the next step is building runbooks. Each alert in the community set maps to a diagnostic sequence: KedaScalerLatencyHigh leads you to check the external metric source, KedaScaledObjectErrors leads you to TriggerAuthentication secrets and operator logs, KedaScaledObjectPaused leads you to whoever paused it and forgot. Document those sequences for your on-call team. Event-driven autoscaling is only as trustworthy as the operational playbook behind it.

This post is part of the KEDA — Event-Driven Autoscaling from Zero to Production collection (7 of 7)
