Custom Metrics and Prometheus-Based Scaling with KEDA

Out of the box, Kubernetes HPA scales on CPU and memory. Most production workloads need to scale on something else entirely: request rate, queue depth, error count, p95 latency, active sessions, orders per second. The metric that reflects real demand is almost never CPU utilization.

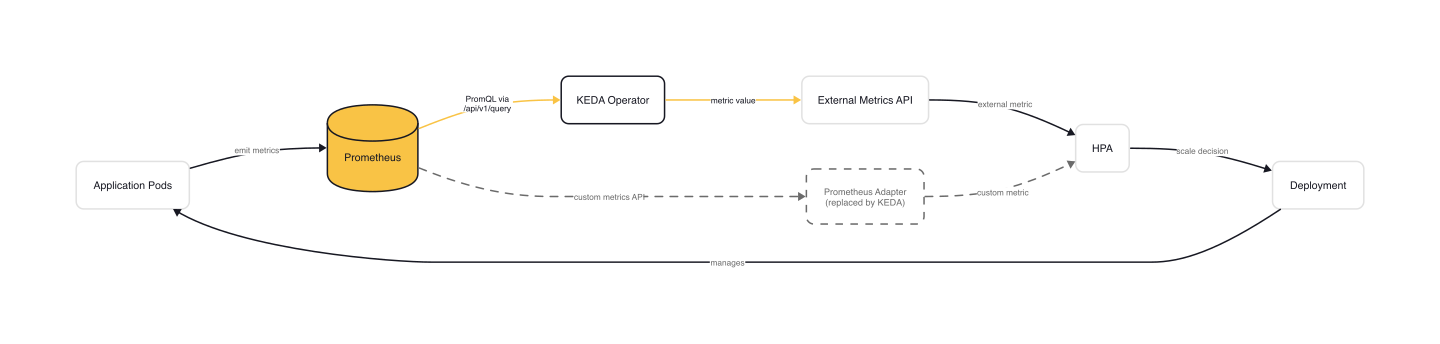

The traditional bridge between Prometheus and HPA is the Prometheus Adapter. It works, technically. But configuring it has driven more than a few teams to question their career choices. KEDA's Prometheus scaler replaces that adapter with a single ScaledObject: one PromQL query, one threshold, and the same scale-to-zero capability that makes KEDA worth running in the first place.

This article covers the Prometheus scaler in depth: how it works under the hood, the critical distinction between activation and scaling thresholds, authentication patterns, managed cloud Prometheus integration, and production-ready PromQL query design.

The Problem with Prometheus Adapter

Before KEDA, the standard path from Prometheus to HPA was the Prometheus Adapter, a Kubernetes SIG project that registers as a custom metrics API server. You define rules in a ConfigMap that tell the adapter how to discover Prometheus metrics, associate them with Kubernetes resources, rename them for the metrics API, and construct the PromQL query.

Here is what a real Prometheus Adapter rule looks like:

```yaml
# prometheus-adapter ConfigMap rules
rules:
  - seriesQuery: '{__name__=~"^container_.*",container!="POD",namespace!="",pod!=""}'
    resources:
      overrides:
        namespace: { resource: 'namespace' }
        pod: { resource: 'pod' }
    name:
      matches: '^container_(.*)_seconds_total$'
    metricsQuery: 'sum(rate(<<.Series>>{<<.LabelMatchers>>,container!="POD"}[2m])) by (<<.GroupBy>>)'
```

Each rule has four parts: discovery (which Prometheus series to find), association (which Kubernetes resources they map to), naming (how to expose them in the custom metrics API), and querying (how to transform them into PromQL). The template variables <<.Series>>, <<.LabelMatchers>>, and <<.GroupBy>> are adapter-specific syntax that most teams find cryptic on first (and second, and third) encounter.
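To make the naming and querying steps less abstract, here is a small Python sketch that mimics them with plain string operations. It is not the adapter's implementation: it assumes the adapter exposes the single capture group as the metric name, and the series name and label matcher values are hypothetical inputs chosen for illustration.

```python
import re

# Rule fields copied from the adapter rule above.
NAME_MATCHES = r"^container_(.*)_seconds_total$"
METRICS_QUERY = (
    'sum(rate(<<.Series>>{<<.LabelMatchers>>,container!="POD"}[2m])) '
    "by (<<.GroupBy>>)"
)


def exposed_name(series: str) -> str:
    """Naming step: keep only the capture group as the API metric name (assumed default)."""
    return re.sub(NAME_MATCHES, r"\1", series)


def expand_query(series: str, label_matchers: str, group_by: str) -> str:
    """Querying step: substitute the three adapter placeholders."""
    return (
        METRICS_QUERY.replace("<<.Series>>", series)
        .replace("<<.LabelMatchers>>", label_matchers)
        .replace("<<.GroupBy>>", group_by)
    )


# Hypothetical inputs: one discovered series and the pods HPA asks about.
series = "container_cpu_usage_seconds_total"
matchers = 'namespace="default",pod=~"web-1|web-2"'

print(exposed_name(series))
print(expand_query(series, matchers, "pod"))
```

Seeing the expanded PromQL next to the template makes the contrast with KEDA clearer: with a ScaledObject you write the final query directly instead of a template that produces it.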

The pain compounds at scale:

- Single ConfigMap: All rules live in one ConfigMap. Hit the 1MB etcd size limit and you're stuck. Every team shares the same file, and one broken rule can take down metrics for every workload.

- Single Prometheus instance: The adapter connects to exactly one Prometheus server. If your metrics span multiple Prometheus instances or you run Thanos, you need additional infrastructure.

- No scale-to-zero: The adapter feeds metrics to HPA, and HPA doesn't scale below minReplicas. There is no activation phase.

- Operational burden: The adapter is another deployment to run, monitor, and upgrade. Its failure mode is silent: metrics stop updating, HPA decisions go stale, and nobody notices until latency spikes.

KEDA eliminates all of these by moving the Prometheus query from a shared ConfigMap into a per-workload ScaledObject.

How the Prometheus Scaler Works

KEDA's Prometheus scaler is an HTTP client. On each polling interval, the KEDA operator sends a GET request to the Prometheus /api/v1/query endpoint, parses the response, and feeds the resulting float value to HPA as an external metric.

The source code in prometheus_scaler.go shows the flow clearly. The ExecutePromQuery function builds the URL:

<serverAddress>/api/v1/query?query=<url-encoded-query>&time=<RFC3339-now>

It sends the request with the configured authentication headers, parses the JSON response, and returns a single float64 value. If the result set is empty and ignoreNullValues is true (the default), the scaler returns 0. If the result set contains more than one element, the scaler returns an error. Your PromQL query must return exactly one value.
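The flow above can be sketched in a few lines of Python. This is an illustration of the described behavior, not a port of prometheus_scaler.go: the function names are hypothetical, only vector results are handled, and the Inf branch follows the behavior described under Gotchas later in this article.

```python
import json
import math
from datetime import datetime, timezone
from urllib.parse import quote


def build_query_url(server_address: str, query: str, now: datetime) -> str:
    """Build an instant-query URL in the shape shown above."""
    timestamp = now.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    return f"{server_address}/api/v1/query?query={quote(query, safe='')}&time={timestamp}"


def parse_metric_value(body: str, ignore_null_values: bool = True) -> float:
    """Reduce a Prometheus instant-query response to exactly one float."""
    results = json.loads(body)["data"]["result"]
    if len(results) > 1:
        raise ValueError("query returned multiple elements")
    if not results:
        if ignore_null_values:
            return 0.0  # empty result treated as "no demand"
        raise ValueError("query returned an empty result")
    # A vector element looks like {"metric": {...}, "value": [<ts>, "<float>"]}.
    value = float(results[0]["value"][1])
    if math.isinf(value):
        if ignore_null_values:
            return 0.0
        raise ValueError("query returned Inf")
    return value


body = '{"status":"success","data":{"resultType":"vector","result":[{"metric":{},"value":[1700000000,"42.5"]}]}}'
print(build_query_url("http://prometheus-server.monitoring:9090", "sum(up)",
                      datetime(2024, 1, 1, tzinfo=timezone.utc)))
print(parse_metric_value(body))
```

The part worth internalizing is the three-way branch on the result length: zero elements, one element, or many. Everything else is ordinary HTTP and JSON handling.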

Here is a minimal ScaledObject that scales a deployment based on HTTP request rate:

```yaml
# keda-prometheus-scaledobject.yaml
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: prometheus-scaledobject
  namespace: default
spec:
  scaleTargetRef:
    name: my-deployment
  minReplicaCount: 0
  maxReplicaCount: 20
  triggers:
    - type: prometheus
      metadata:
        serverAddress: http://prometheus-server.monitoring:9090
        query: sum(rate(http_requests_total{deployment="my-deployment"}[2m]))
        threshold: '100'
        activationThreshold: '5'
```

The key parameters:

| Parameter | Purpose | Required |
|---|---|---|
| serverAddress | Prometheus endpoint URL (supports VictoriaMetrics, Thanos) | Yes |
| query | PromQL query returning a single scalar/vector element | Yes |
| threshold | Target value for 1→N scaling (float) | Yes |
| activationThreshold | Target value for 0→1 scaling (float, default 0) | No |
| namespace | For namespaced queries (Thanos setups) | No |
| ignoreNullValues | Treat empty results as 0 (default true) | No |
| unsafeSsl | Skip TLS certificate validation | No |
| timeout | Per-trigger HTTP client timeout (overrides global) | No |
| customHeaders | Additional HTTP headers for the query | No |

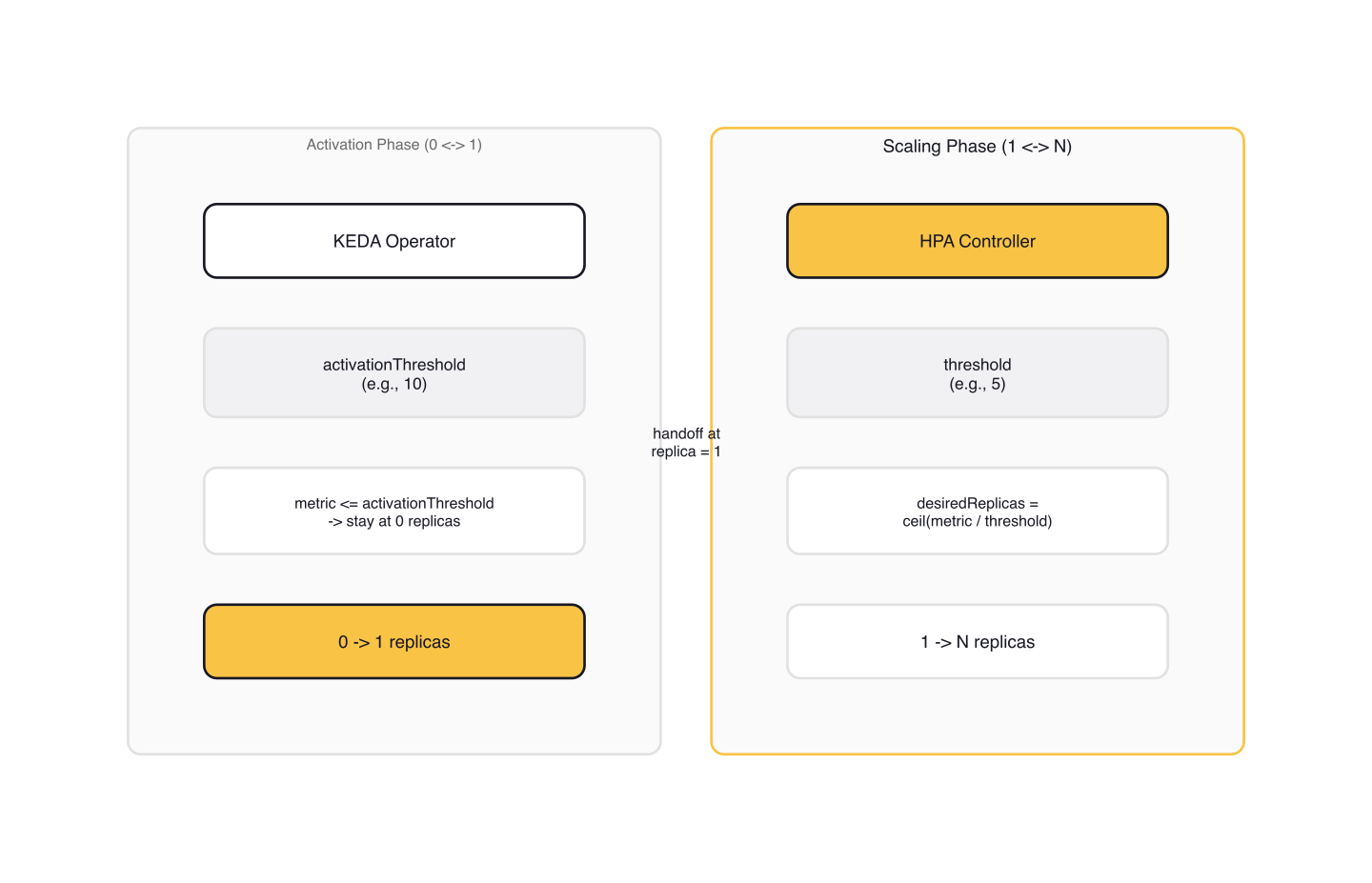

Activation vs Scaling Thresholds

This is the single most important concept for the Prometheus scaler, and the one most commonly misconfigured.

KEDA operates in two phases:

- Activation phase (0↔1): The KEDA operator decides whether to scale the workload from zero to one replica (or back to zero). This decision uses the activationThreshold.

- Scaling phase (1↔N): Once at least one replica is running, HPA takes over and scales between 1 and maxReplicaCount. HPA uses the threshold value as its target metric.

The activationThreshold defaults to 0, which means any metric value greater than 0 activates the deployment. The critical detail: activation occurs when the metric is strictly greater than the activationThreshold, not greater-than-or-equal. With the default of 0, a metric value of exactly 0 keeps the deployment scaled to zero, while 0.001 activates it.

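The threshold works differently. KEDA uses the AverageValue metric target type by default, so HPA calculates desired replicas as ceil(currentMetricValue / threshold). If your threshold is 100 and the current metric value is 450, HPA targets 5 replicas. (If you set metricType: Value on the trigger instead, HPA scales the current replica count by the ratio of the metric to the threshold, which behaves differently.)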

Warning: The activation threshold takes priority over the scaling threshold. If you set threshold: 10 and activationThreshold: 50, a metric value of 40 keeps the deployment at zero replicas, even though HPA would want 4 instances. The scaler is not active, so HPA never gets consulted.

When minReplicaCount is 1 or higher, the scaler is always active and activationThreshold is irrelevant. The two-threshold model only matters when you're using scale-to-zero.

A practical example: you scale on sum(rate(http_requests_total{...}[2m])). Set activationThreshold: 5 to ignore background noise (health checks, monitoring scrapers). Set threshold: 100 to target one pod per 100 requests per second. The deployment stays at zero during quiet hours, wakes up when real traffic arrives, and HPA handles the rest.
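The two-phase model can be condensed into one function. The sketch below is a deliberate simplification in Python: it uses the ceil(currentMetricValue / threshold) formula from the text and ignores HPA tolerance, stabilization windows, and KEDA's cooldownPeriod. The function name and defaults are hypothetical.

```python
import math


def desired_replicas(
    metric_value: float,
    threshold: float,
    activation_threshold: float = 0.0,
    min_replicas: int = 0,
    max_replicas: int = 20,
) -> int:
    """Simplified two-phase decision: activation first, then HPA-style scaling."""
    # Activation phase: only relevant when scale-to-zero is allowed.
    # Note the strict comparison: equal to the activation threshold is NOT active.
    if min_replicas == 0 and metric_value <= activation_threshold:
        return 0
    # Scaling phase: HPA never goes below one replica once the scaler is active.
    wanted = math.ceil(metric_value / threshold)
    floor = max(1, min_replicas)
    return max(floor, min(wanted, max_replicas))


print(desired_replicas(450, threshold=100, activation_threshold=5))  # 5
print(desired_replicas(40, threshold=10, activation_threshold=50))   # 0
print(desired_replicas(0.001, threshold=100))                         # 1
print(desired_replicas(0, threshold=100))                             # 0
```

The second call reproduces the warning above: HPA's formula alone would ask for 4 replicas, but the activation check short-circuits before that formula is ever evaluated.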

Authentication and TLS

Production Prometheus instances are rarely open. KEDA supports four authentication modes through TriggerAuthentication, and they can be combined (e.g., authModes: "tls,basic").

Bearer token

```yaml
# keda-prometheus-bearer-auth.yaml
apiVersion: v1
kind: Secret
metadata:
  name: keda-prom-secret
stringData:
  bearerToken: "your-prometheus-bearer-token"
---
apiVersion: keda.sh/v1alpha1
kind: TriggerAuthentication
metadata:
  name: keda-prom-creds
spec:
  secretTargetRef:
    - parameter: bearerToken
      name: keda-prom-secret
      key: bearerToken
---
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: prometheus-scaledobject
spec:
  scaleTargetRef:
    name: my-deployment
  triggers:
    - type: prometheus
      metadata:
        serverAddress: http://prometheus-server:9090
        query: sum(rate(http_requests_total{deployment="my-deployment"}[2m]))
        threshold: '100'
        authModes: "bearer"
      authenticationRef:
        name: keda-prom-creds
```

TLS with client certificates

For mutual TLS, set authModes: "tls" and provide cert, key, and ca through the TriggerAuthentication. This is common in enterprise environments with internal CAs.

You can also set a CA certificate without any other auth mode. If your Prometheus endpoint uses a self-signed certificate or an enterprise CA, provide the ca parameter and KEDA will trust it regardless of the selected authModes.

Custom auth headers

For Prometheus behind a reverse proxy or API gateway that expects a custom header:

```yaml
# keda-prometheus-custom-auth.yaml
apiVersion: keda.sh/v1alpha1
kind: TriggerAuthentication
metadata:
  name: keda-prom-custom
spec:
  secretTargetRef:
    - parameter: customAuthHeader
      name: keda-prom-secret
      key: customAuthHeader # e.g., "X-Auth-Token"
    - parameter: customAuthValue
      name: keda-prom-secret
      key: customAuthValue # the token value
```

One TriggerAuthentication can be referenced by multiple ScaledObjects, so a team with 20 workloads scaling from the same Prometheus instance defines credentials once.

Cloud-Managed Prometheus Services

All three major cloud providers offer managed Prometheus, and KEDA integrates with each using cloud-native identity mechanisms. No static credentials needed.

Amazon Managed Prometheus (AMP)

```yaml
# keda-amp-scaledobject.yaml
apiVersion: keda.sh/v1alpha1
kind: TriggerAuthentication
metadata:
  name: keda-trigger-auth-aws
spec:
  podIdentity:
    provider: aws
---
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: amp-scaledobject
spec:
  scaleTargetRef:
    name: my-deployment
  triggers:
    - type: prometheus
      authenticationRef:
        name: keda-trigger-auth-aws
      metadata:
        serverAddress: "https://aps-workspaces.us-east-1.amazonaws.com/workspaces/ws-abc123"
        query: sum(rate(http_requests_total{deployment="my-deployment"}[2m]))
        threshold: '100'
        awsRegion: us-east-1
        identityOwner: operator
```

The identityOwner: operator tells KEDA that the operator pod (not the workload pod) assumes the IAM role. Under the hood, the scaler uses AWS SigV4 request signing via NewSigV4RoundTripper to authenticate every query to AMP.

Azure Monitor Managed Prometheus

```yaml
# keda-azure-prometheus-scaledobject.yaml
apiVersion: keda.sh/v1alpha1
kind: TriggerAuthentication
metadata:
  name: azure-managed-prometheus-auth
spec:
  podIdentity:
    provider: azure-workload
---
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: azure-prometheus-scaledobject
spec:
  scaleTargetRef:
    name: my-deployment
  triggers:
    - type: prometheus
      metadata:
        serverAddress: https://my-workspace.eastus.prometheus.monitor.azure.com
        query: sum(rate(http_requests_total{deployment="my-deployment"}[2m]))
        threshold: '100'
      authenticationRef:
        name: azure-managed-prometheus-auth
```

The identity needs the Monitoring Data Reader role on the Azure Monitor Workspace. No other authModes can be combined with Azure Workload Identity.

Google Managed Prometheus

```yaml
# keda-gcp-prometheus-scaledobject.yaml
apiVersion: keda.sh/v1alpha1
kind: ClusterTriggerAuthentication
metadata:
  name: google-workload-identity-auth
spec:
  podIdentity:
    provider: gcp
---
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: gcp-prometheus-scaledobject
spec:
  scaleTargetRef:
    name: my-deployment
  triggers:
    - type: prometheus
      metadata:
        serverAddress: https://monitoring.googleapis.com/v1/projects/my-project/location/global/prometheus
        query: sum(rate(http_requests_total{deployment="my-deployment"}[2m]))
        threshold: '100'
      authenticationRef:
        kind: ClusterTriggerAuthentication
        name: google-workload-identity-auth
```

GCP uses ClusterTriggerAuthentication for cluster-wide scope. The Google Service Account needs the roles/monitoring.viewer role. The source code resolves GCP credentials through GetGCPOAuth2HTTPTransport with the monitoring.read OAuth scope.

Production Patterns and PromQL Design

Fallback replicas

When Prometheus is unreachable, you don't want your workload to scale to zero because the scaler can't get metrics. The fallback mechanism provides a safety net:

```yaml
# keda-prometheus-fallback.yaml
spec:
  fallback:
    failureThreshold: 3
    replicas: 6
```

After 3 consecutive failures to query Prometheus, KEDA scales the deployment to 6 replicas. This keeps the workload running at a known-safe capacity until Prometheus recovers.

ignoreNullValues

The default (true) treats empty Prometheus responses as a metric value of 0. This is safe for most cases: if nobody is sending requests, the rate is genuinely 0.

Set it to false when a missing metric means the target is down, not idle. If your deployment is supposed to always emit metrics and Prometheus suddenly returns nothing, that's a monitoring failure, not a scaling signal. With ignoreNullValues: false, the scaler returns an error, which prevents scaling decisions based on phantom zeros.

PromQL query design

Your query must return a single value. This means aggregation is almost always required:

```promql
# Good: aggregated to a single value
sum(rate(http_requests_total{deployment="my-deployment"}[2m]))

# Bad: returns one value per pod (multiple results → scaler error)
rate(http_requests_total{deployment="my-deployment"}[2m])
```

The rate() window matters. A 30-second window is noisy and can cause rapid scaling oscillation. A 10-minute window is sluggish and misses traffic bursts. Two minutes ([2m]) is a reasonable default for most HTTP workloads. Match it to your application's traffic pattern.
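A toy calculation shows why the window length matters. The Python sketch below uses synthetic counter samples (hypothetical data, scraped every 15 seconds) and a simplified rate that divides the counter increase by the elapsed time. Real rate() also extrapolates to the window edges and handles counter resets, which this sketch does not.

```python
import math

# Synthetic counter: steady 100 req/s for 105 s, then a 15 s burst at 1000 req/s.
SAMPLES = [
    (0, 0), (15, 1500), (30, 3000), (45, 4500), (60, 6000),
    (75, 7500), (90, 9000), (105, 10500), (120, 25500),
]


def simple_rate(samples, window_seconds):
    """Per-second increase between the first and last sample inside the window."""
    end = samples[-1][0]
    in_window = [(t, v) for t, v in samples if t >= end - window_seconds]
    (t0, v0), (t1, v1) = in_window[0], in_window[-1]
    return (v1 - v0) / (t1 - t0)


for window in (30, 120):
    rate = simple_rate(SAMPLES, window)
    replicas = math.ceil(rate / 100)  # threshold of 100 req/s per replica
    print(f"[{window}s] rate={rate} req/s -> {replicas} replicas")
```

With this data the 30-second window reports 550 req/s (6 replicas at a threshold of 100), while the 2-minute window reports 212.5 req/s (3 replicas). The same 15-second burst doubles the replica target depending only on the window you chose.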

RED metrics as scaling signals

The RED method (Rate, Errors, Duration) maps naturally to scaling triggers:

```promql
# Rate: scale on throughput (requests per second)
sum(rate(http_requests_total{deployment="my-deployment"}[2m]))

# Errors: scale on error rate (5xx responses per second)
sum(rate(http_requests_total{deployment="my-deployment",status=~"5.."}[2m]))

# Duration: scale on p95 latency
histogram_quantile(0.95,
  sum(rate(http_request_duration_seconds_bucket{deployment="my-deployment"}[2m])) by (le)
)
```

Scaling on latency is the most nuanced. A rising p95 often means your pods are saturated, so adding replicas helps. But latency spikes caused by downstream dependencies (a slow database, an external API) won't improve with more replicas. Use latency-based scaling when you've verified the bottleneck is in the scaled workload itself.

Scaling modifiers

When scaling on multiple signals, KEDA's scalingModifiers feature lets you compose trigger values with a formula:

```yaml
# keda-scaling-modifiers-example.yaml
advanced:
  scalingModifiers:
    formula: "error_rate < 0.05 ? request_rate : request_rate * 2"
    target: "100"
triggers:
  - type: prometheus
    name: request_rate
    metadata:
      serverAddress: http://prometheus-server:9090
      query: sum(rate(http_requests_total{deployment="my-deployment"}[2m]))
      threshold: '100'
  - type: prometheus
    name: error_rate
    metadata:
      serverAddress: http://prometheus-server:9090
      query: |
        sum(rate(http_requests_total{deployment="my-deployment",status=~"5.."}[2m]))
        / sum(rate(http_requests_total{deployment="my-deployment"}[2m]))
      threshold: '0.05'
```

This scales on request rate normally, but doubles the scaling signal when the error rate exceeds 5%. The formula language supports arithmetic, ternary operators, and aggregation functions across named triggers.
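To sanity-check a formula before deploying it, it can help to replay it with sample values. The Python sketch below re-expresses the ternary from the example and applies the target of 100 using the ceil(value / target) rule discussed earlier. The function names and input numbers are hypothetical, and KEDA evaluates the real formula with its own expression engine, not Python.

```python
import math


def composite_metric(request_rate: float, error_rate: float) -> float:
    """Python rendering of: error_rate < 0.05 ? request_rate : request_rate * 2"""
    return request_rate if error_rate < 0.05 else request_rate * 2


def replicas_for(request_rate: float, error_rate: float, target: float = 100) -> int:
    """Replica count implied by the composite metric and the modifier target."""
    return math.ceil(composite_metric(request_rate, error_rate) / target)


print(replicas_for(300, 0.02))  # healthy: 300 / 100 -> 3 replicas
print(replicas_for(300, 0.08))  # degraded: 600 / 100 -> 6 replicas
print(replicas_for(300, 0.05))  # exactly 5% is not "< 0.05", so doubled -> 6
```

The last case is the one to watch: the comparison is strict, so an error rate of exactly 5% already takes the doubled branch.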

Metric caching

KEDA's metrics server normally queries the scaler on every HPA poll (roughly every 15 seconds). Enable useCachedMetrics: true in the trigger spec to cache the value between polling intervals, reducing load on your Prometheus instance:

```yaml
# keda-cached-metrics.yaml
triggers:
  - type: prometheus
    useCachedMetrics: true
    metadata:
      serverAddress: http://prometheus-server:9090
      query: sum(rate(http_requests_total{deployment="my-deployment"}[2m]))
      threshold: '100'
```

This is especially valuable when your Prometheus is resource-constrained or when the query is expensive (high-cardinality labels, long range vectors).
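The saving is easy to estimate with a toy model. The Python sketch below treats caching as a simple time-based cache keyed on the polling interval, which is an assumption made for illustration. KEDA's actual mechanism differs in detail, and the class and helper names are hypothetical.

```python
from typing import Callable, Iterable, Optional


class CachedMetric:
    """Serve one fetched value to every caller until the polling interval elapses."""

    def __init__(self, fetch: Callable[[], float], polling_interval: float,
                 clock: Callable[[], float]) -> None:
        self._fetch = fetch
        self._interval = polling_interval
        self._clock = clock
        self._value: Optional[float] = None
        self._fetched_at = 0.0

    def get(self) -> float:
        now = self._clock()
        if self._value is None or now - self._fetched_at >= self._interval:
            self._value = self._fetch()  # the only place Prometheus would be queried
            self._fetched_at = now
        return self._value


def count_queries(hpa_poll_times: Iterable[float], polling_interval: float) -> int:
    """Count backend queries triggered by a series of HPA metric requests."""
    calls = 0
    current = [0.0]

    def fake_fetch() -> float:
        nonlocal calls
        calls += 1
        return 120.0  # stand-in for a Prometheus query result

    metric = CachedMetric(fake_fetch, polling_interval, lambda: current[0])
    for t in hpa_poll_times:
        current[0] = t
        metric.get()
    return calls


print(count_queries([0, 15, 30, 45], polling_interval=30))  # cached
print(count_queries([0, 15, 30, 45], polling_interval=0))   # effectively uncached
```

In this model, four HPA requests over one minute cost two Prometheus queries with a 30-second interval instead of four. The trade-off is that HPA may act on a value up to one interval old.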

Gotchas

Your query must return exactly one value. A rate() without sum() returns one value per pod. KEDA errors on multiple results. Always aggregate with sum(), avg(), or another function that collapses to a single element.

Inf values are treated as null. If Prometheus returns +Inf (typically from a division by zero in a ratio query), the scaler returns 0 when ignoreNullValues is true, or errors when it's false. If your scaling decisions seem stuck at zero, check whether your PromQL can produce infinity edge cases.

Pod identity is exclusive. When using podIdentity (AWS, Azure, or GCP), you cannot combine it with any authModes. The source code enforces this in checkAuthConfigWithPodIdentity. If you need TLS on top of pod identity, the TLS termination has to happen at the network layer (a service mesh or load balancer), not in KEDA's HTTP client.

The timeout parameter accepts human-readable durations, not just milliseconds. Values like "30s" or "2m" work and override the global KEDA_HTTP_DEFAULT_TIMEOUT. Set this when your Prometheus instance is slow to respond under load, rather than letting the scaler fail with a timeout error.

activationThreshold is strictly greater-than, not greater-than-or-equal. With the default of 0, a metric value of exactly 0 does not activate the scaler. A value of 0.001 does. If you're debugging why your workload isn't waking up, check whether the metric is returning exactly your activation value.

Wrap-up

KEDA's Prometheus scaler replaces Prometheus Adapter's shared ConfigMap with per-workload ScaledObjects, adds scale-to-zero, and integrates natively with managed cloud Prometheus. The two numbers to get right: activationThreshold for when your workload wakes up, threshold for how many replicas HPA targets once it's running.

For workloads that need to scale on HTTP traffic specifically, KEDA also offers a dedicated HTTP Add-on with built-in traffic interception and scale-to-zero. That's a different approach with its own trade-offs, covered in the next article in this collection.

This post is part of the KEDA — Event-Driven Autoscaling from Zero to Production collection (3 of 7)
