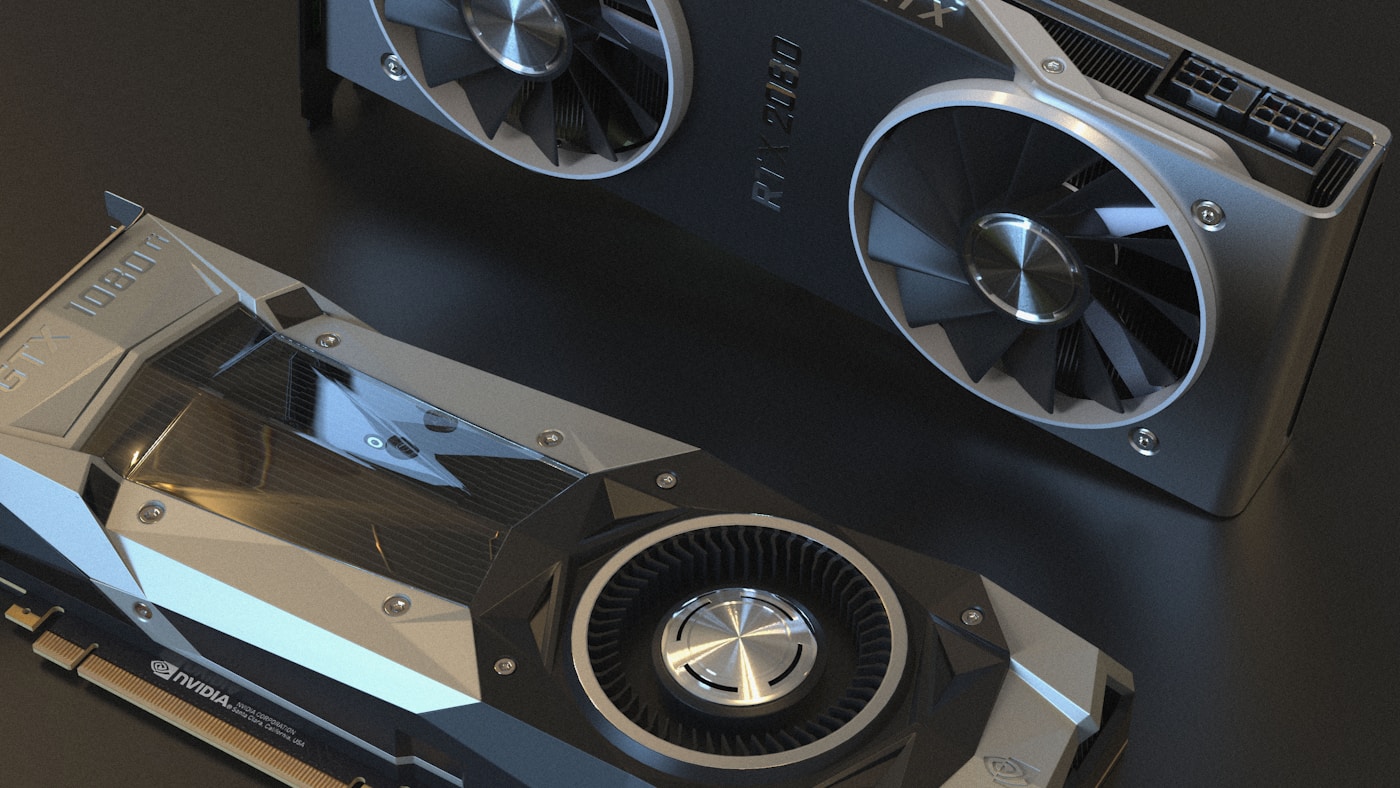

GPU Sharing Strategies for Multi-Tenant Kubernetes: MIG, Time-Slicing, and MPS

NVIDIA's GPU sharing mechanisms — MIG, time-slicing, and MPS — are gaining traction as teams run multiple inference workloads per GPU.

NVIDIA released AI Cluster Runtime, an open-source project providing validated, version-locked Kubernetes configurations for GPU infrastructure.

Kueue manages GPU quotas, enforces fair sharing across teams, and dispatches jobs to remote HPC clusters — the standard for production AI batch scheduling.

CNCF launched v1.0 of the Kubernetes AI Conformance Program defining baseline capabilities for running AI workloads across conformant clusters.

Deploy vLLM with KServe on Kubernetes: InferenceService CRD, KEDA autoscaling on queue depth, and distributed KV cache with LMCache for production inference.

NVIDIA open-sourced KAI Scheduler (Apache 2.0), a Kubernetes-native GPU scheduling solution originally from the Run:ai platform.

HAMi (CNCF Sandbox) emerged at KubeCon EU 2026 as the reference implementation for GPU resource management across NVIDIA, AMD, Huawei, and Cambricon accelerators.

DRA went GA in Kubernetes v1.34 and continues evolving — replacing Device Plugins with richer semantics including DeviceClass, ResourceClaim, CEL-based filtering, and topology awareness.

Armada treats multiple Kubernetes clusters as a single resource pool for GPU-intensive AI workloads, with global queue management, gang scheduling, and production-scale throughput.

Dedicated GPU NodePools, cold start fixes for 10GB+ AI images, disruption protection for training jobs, and gang scheduling for distributed workloads.