Dynamic Resource Allocation (DRA) GA: The New GPU Interface for Kubernetes

If you've operated GPU workloads on Kubernetes, you know the gap. You request nvidia.com/gpu: 1 and hope the scheduler places your Pod on a node with an available GPU. You can't ask for a specific GPU model. You can't express "two GPUs on the same PCIe root complex." You can't share a GPU across containers without resorting to time-slicing hacks configured outside Kubernetes.

Dynamic Resource Allocation changed this. DRA went GA in Kubernetes v1.34, replacing the count-based Device Plugin model with a claim-based API that treats GPUs as first-class, attribute-aware resources. Instead of resources.limits, you create a ResourceClaim that references a DeviceClass with CEL filters. The scheduler matches claims to ResourceSlices published by DRA drivers, then places Pods on nodes that can access the allocated devices.

This article walks through the DRA architecture, the migration path from Device Plugins, and real examples from the NVIDIA and AMD DRA drivers. You'll see how to write CEL filters for GPU selection, set up DeviceClasses, and deploy workloads that share GPUs across containers.

DRA Architecture: DeviceClass, ResourceClaim, ResourceSlice

DRA introduces four API kinds in the resource.k8s.io/v1 group: DeviceClass, ResourceSlice, ResourceClaim, and ResourceClaimTemplate. Understanding how they interact is the foundation for migrating from Device Plugins.

DeviceClass

A DeviceClass defines a category of devices. Think of it as a StorageClass for GPUs — it tells workload operators what types of devices exist and how to request them.

# deviceclass.yaml (k8s.io/examples/dra/deviceclass.yaml)
apiVersion: resource.k8s.io/v1
kind: DeviceClass
metadata:
  name: nvidia-gpu
spec:
  selectors:
  - cel:
      expression: |-
        device.driver == "gpu.nvidia.com"

This DeviceClass selects all devices managed by the gpu.nvidia.com driver. The CEL expression can filter on any attribute published in the ResourceSlice — driver name, device model, memory capacity, topology hints.
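As a sketch of a narrower class, the DeviceClass below combines the driver check with attribute checks. The class name amd-mi300x-full is hypothetical; the attribute names and values come from the representative AMD ResourceSlice shown in the ResourceSlice section, so verify them against what your driver actually publishes.

```yaml
# Hypothetical DeviceClass: unpartitioned MI300X GPUs only.
# Attribute names follow the representative AMD ResourceSlice in this article.
apiVersion: resource.k8s.io/v1
kind: DeviceClass
metadata:
  name: amd-mi300x-full   # illustrative name, not created by any driver
spec:
  selectors:
  - cel:
      expression: |-
        device.driver == "gpu.amd.com" &&
        device.attributes["gpu.amd.com"].type == "amdgpu" &&
        device.attributes["gpu.amd.com"].productName == "AMD_Instinct_MI300X_OAM"
```

Workloads that reference this class would then only ever be offered whole MI300X devices, without repeating the filter in every claim.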

Cluster admins typically create DeviceClass objects during driver installation. The NVIDIA DRA driver, for example, creates gpu.nvidia.com and mig.nvidia.com classes out of the box.

ResourceSlice

A ResourceSlice represents one or more devices attached to nodes. DRA drivers create and manage these objects — you don't create them manually. Each slice publishes device attributes that CEL filters can match against.

Here's a representative ResourceSlice from the AMD GPU DRA driver advertising an MI300X (attributes based on github.com/ROCm/k8s-gpu-dra-driver docs/driver-attributes.md):

# ResourceSlice example (representative, based on github.com/ROCm/k8s-gpu-dra-driver driver attributes)
apiVersion: resource.k8s.io/v1
kind: ResourceSlice
metadata:
  name: gpu-worker-2-gpu.amd.com-5fddf
spec:
  driver: gpu.amd.com
  nodeName: gpu-worker-2
  devices:
  - name: gpu-56-184
    attributes:
      cardIndex:
        int: 56
      deviceID:
        string: "8462659767828489944"
      driverVersion:
        version: 6.12.12
      family:
        string: AI
      partitionProfile:
        string: spx_nps1
      pciAddr:
        string: "0003:00:04.0"
      productName:
        string: AMD_Instinct_MI300X_OAM
      resource.kubernetes.io/pcieRoot:
        string: pci0003:00
      type:
        string: amdgpu
    capacity:
      computeUnits:
        value: "304"
      simdUnits:
        value: "1216"
      memory:
        value: 196592Mi

Every GPU gets this treatment — PCI address, partition profile, driver version, memory, compute units. The scheduler sees the entire cluster's GPU landscape, not just a count per node.

ResourceClaim vs ResourceClaimTemplate

You request devices using either a ResourceClaim or a ResourceClaimTemplate. The choice depends on whether you want to share devices across Pods or give each Pod its own device.

ResourceClaim — manual creation, shared access, lifecycle independent of Pods:

# resourceclaim.yaml (k8s.io/examples/dra/resourceclaim.yaml)
apiVersion: resource.k8s.io/v1
kind: ResourceClaim
metadata:
  name: shared-gpu-claim
spec:
  devices:
    requests:
    - name: single-gpu-claim
      exactly:
        deviceClassName: nvidia-gpu
        allocationMode: All
        selectors:
        - cel:
            expression: |-
              device.attributes["gpu.nvidia.com"].type == "gpu"

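Multiple Pods can reference shared-gpu-claim and share whatever it allocates. Note that allocationMode: All allocates every device matching the selectors; to share exactly one GPU, omit allocationMode, since the default is ExactCount with a count of 1. Sharing suits inference workloads where several replicas can use the same device.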
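To make the sharing concrete, here is a minimal sketch of two Pods that reference the same pre-created claim through resourceClaimName. The Pod names and image are placeholders, not taken from any upstream example.

```yaml
# Two Pods referencing the same existing ResourceClaim (names are placeholders).
apiVersion: v1
kind: Pod
metadata:
  name: inference-a
spec:
  containers:
  - name: app
    image: my-gpu-app
    resources:
      claims:
      - name: gpu                          # must match an entry in spec.resourceClaims
  resourceClaims:
  - name: gpu
    resourceClaimName: shared-gpu-claim    # an existing claim, not a template
---
apiVersion: v1
kind: Pod
metadata:
  name: inference-b
spec:
  containers:
  - name: app
    image: my-gpu-app
    resources:
      claims:
      - name: gpu
  resourceClaims:
  - name: gpu
    resourceClaimName: shared-gpu-claim
```

Because the claim's lifecycle is independent of the Pods, deleting both Pods leaves shared-gpu-claim in place until you delete it yourself.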

ResourceClaimTemplate — automatic per-Pod generation, dedicated devices, lifecycle bound to Pod:

# resourceclaimtemplate.yaml (k8s.io/examples/dra/resourceclaimtemplate.yaml)
apiVersion: resource.k8s.io/v1
kind: ResourceClaimTemplate
metadata:
  name: per-pod-gpu
spec:
  spec:
    devices:
      requests:
      - name: gpu-claim
        exactly:
          deviceClassName: nvidia-gpu
          selectors:
          - cel:
              expression: |-
                device.attributes["gpu.nvidia.com"].type == "gpu" &&
                device.capacity["gpu.nvidia.com"].memory == quantity("64Gi")

Kubernetes generates a fresh ResourceClaim for each Pod created from a Deployment or Job. Each Pod gets its own GPU. Use this for training workloads where each replica needs dedicated hardware.
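A minimal sketch of a Deployment that uses the per-pod-gpu template might look like the following. The Deployment name, labels, and image are illustrative assumptions; only the template name comes from the example above.

```yaml
# Hypothetical Deployment: each replica gets its own generated ResourceClaim.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: trainer
spec:
  replicas: 2
  selector:
    matchLabels:
      app: trainer
  template:
    metadata:
      labels:
        app: trainer
    spec:
      containers:
      - name: app
        image: my-gpu-app
        resources:
          claims:
          - name: gpu                            # refers to spec.resourceClaims below
      resourceClaims:
      - name: gpu
        resourceClaimTemplateName: per-pod-gpu   # one claim generated per Pod
```

With two replicas, two separate claims are generated, and each is removed along with the Pod that owns it.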

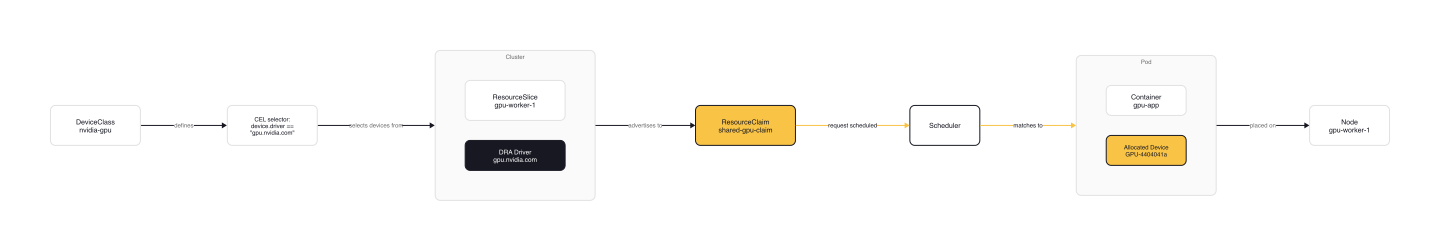

DRA Workflow

The DRA workflow ties these components together:

- Admin creates DeviceClass — defines GPU categories with CEL selectors

- DRA Driver creates ResourceSlice — advertises GPU attributes to the cluster

- Operator creates ResourceClaim — requests devices for a workload

- Scheduler matches Claim to Slice — finds nodes with matching devices

- Pod scheduled on node — kubelet attaches allocated device to container
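Once the scheduler has matched a claim to a device, the result is recorded in the claim's status. The fragment below is an illustrative sketch of roughly what kubectl get resourceclaim -o yaml might show for an allocated claim. The pool, device, node, and Pod names are made up, and the fields actually present depend on your cluster version and driver.

```yaml
# Illustrative status of an allocated ResourceClaim (all names hypothetical).
status:
  allocation:
    devices:
      results:
      - request: gpu-claim        # request name from the claim spec
        driver: gpu.nvidia.com
        pool: gpu-worker-1        # assumed pool name
        device: gpu-0             # assumed device name
    nodeSelector:                 # nodes from which the device is reachable
      nodeSelectorTerms:
      - matchFields:
        - key: metadata.name
          operator: In
          values: ["gpu-worker-1"]
  reservedFor:                    # consumers currently allowed to use the claim
  - resource: pods
    name: my-gpu-pod
    uid: 00000000-0000-0000-0000-000000000000   # placeholder
```

An empty status usually means the claim has not been allocated yet, which is the first thing to check when a Pod stays Pending.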

Migration: From Device Plugin to DRA

The migration from Device Plugins to DRA is more about changing how you request devices than replacing your entire GPU stack. The Device Plugin model isn't going away — but DRA is the future for attribute-aware scheduling.

What changes

Request syntax — from count-based to claim-based:

# Device Plugin (old)
containers:
- name: app
  image: my-gpu-app
  resources:
    limits:
      nvidia.com/gpu: 1

# DRA (new)
containers:
- name: app
  image: my-gpu-app
  resources:
    claims:
    - name: gpu-claim
resourceClaims:
- name: gpu-claim
  resourceClaimTemplateName: per-pod-gpu

Scheduling semantics — the scheduler now matches claims to ResourceSlices using CEL filters. You can express constraints that were impossible before: "two GPUs from the same parent device," "GPUs with at least 64Gi memory," "GPUs on the same PCIe root complex."

What stays

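Device Plugin metrics: the kubelet still exposes the PodResources gRPC API (the PodResourcesLister service) that monitoring agents use to map devices to Pods. kubectl top is unaffected because it reports only CPU and memory. For GPU exporters, confirm that the version you run reports DRA-allocated devices and not only Device Plugin resources.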

nvidia-smi visibility — once the Pod is running, nvidia-smi -L shows the same GPU list. The allocation mechanism changed, not the runtime behavior.

When to migrate

New GPU workloads — start with DRA. The claim-based model gives you more flexibility and better scheduling.

Existing Device Plugin deployments — no rush. Device Plugins continue working. Migrate when you need attribute-based scheduling or GPU sharing across containers.

When DRA is worth the migration

Migrate to DRA when:

- You need GPU sharing across containers or Pods

- You're running MIG workloads and want partition-aware scheduling

- You have multi-vendor GPUs and want a unified scheduling model

- You need topology constraints (same PCIe root, same NVLink domain)

Stay on Device Plugins when:

- Your workloads only need whole-GPU allocation

- You're on Kubernetes < v1.34

- Your GPU operator doesn't yet support DRA

Real example: NVIDIA GPU Operator with MIG + DRA on AKS

An Azure AKS walkthrough demonstrates the migration path. The GPU Operator still manages the NVIDIA driver and container toolkit, but you disable its Device Plugin so that the separately installed DRA driver handles allocation:

# operator-install.yaml (blog.aks.azure.com/2026/03/03/multi-instance-gpu-with-dra-on-aks)
mig:
  strategy: single
devicePlugin:
  enabled: false # DRA replaces Device Plugin
driver:
  enabled: true
toolkit:
  env:
  - name: ACCEPT_NVIDIA_VISIBLE_DEVICES_ENVVAR_WHEN_UNPRIVILEGED
    value: "false"

The DRA driver installation then points to the driver root managed by the Operator:

# dra-install.yaml (blog.aks.azure.com/2026/03/03/multi-instance-gpu-with-dra-on-aks)
gpuResourcesEnabledOverride: true
resources-computeDomains:
  enabled: false
controller:
  affinity:
    nodeAffinity:
      requiredDuringSchedulingIgnoredDuringExecution:
        nodeSelectorTerms:
        - matchExpressions:
          - key: kubernetes.azure.com/mode
            operator: In
            values:
            - system
nvidiaDriverRoot: "/run/nvidia/driver"

With MIG enabled and DRA managing allocation, you create a ResourceClaimTemplate for MIG partitions:

# mig-gpu-1g ResourceClaimTemplate (blog.aks.azure.com/2026/03/03/multi-instance-gpu-with-dra-on-aks)
apiVersion: resource.k8s.io/v1
kind: ResourceClaimTemplate
metadata:
  name: mig-gpu-1g
spec:
  spec:
    devices:
      requests:
      - name: gpu
        exactly:
          deviceClassName: nvidia-mig
          count: 1

A TensorFlow Job then references the template:

# samples-tf-mnist-demo Job (blog.aks.azure.com/2026/03/03/multi-instance-gpu-with-dra-on-aks, adapted from k8s.io/examples/dra/dra-example-job.yaml)
apiVersion: batch/v1
kind: Job
metadata:
  name: samples-tf-mnist-demo
spec:
  template:
    spec:
      restartPolicy: OnFailure
      containers:
      - name: samples-tf-mnist-demo
        image: mcr.microsoft.com/azuredocs/samples-tf-mnist-demo:gpu
        args: ["--max_steps", "500"]
        resources:
          claims:
          - name: gpu
      resourceClaims:
      - name: gpu
        resourceClaimTemplateName: mig-gpu-1g

The Job runs on a MIG partition, not a full GPU. Multiple Jobs can share the same physical GPU, each with isolated memory and compute.

Setting Up DRA: NVIDIA and AMD Drivers

Setting up DRA requires installing the DRA driver for your GPU vendor. The drivers handle ResourceSlice creation, device allocation, and CDI injection.

NVIDIA k8s-dra-driver-gpu

The NVIDIA DRA driver manages two resource types:

GPUs — traditional GPU allocation with MIG support (beta, not yet officially supported as of v25.3.0)

ComputeDomains — abstraction for Multi-Node NVLink (MNNVL), officially supported

Installation uses Helm. For a local trial, the repository ships demo scripts that build the driver, create a kind cluster, and install the driver into it:

git clone https://github.com/NVIDIA/k8s-dra-driver-gpu.git
cd k8s-dra-driver-gpu
export KIND_CLUSTER_NAME="kind-dra-1"
./demo/clusters/kind/build-dra-driver-gpu.sh
./demo/clusters/kind/create-cluster.sh
./demo/clusters/kind/install-dra-driver-gpu.sh

The driver creates ResourceSlices automatically. Verify with:

kubectl get resourceslices

AMD k8s-gpu-dra-driver

The AMD driver integrates with ROCm and publishes ResourceSlices with detailed attributes. Each MI300X gets advertised with:

- PCI address (pciAddr: "0003:00:04.0")

- Partition profile (partitionProfile: "spx_nps1")

- Memory capacity (memory: 196592Mi)

- Compute units (computeUnits: "304")

The driver also supports partitioned GPUs. A child device references its parent:

# Partitioned GPU ResourceSlice (representative, based on github.com/ROCm/k8s-gpu-dra-driver driver attributes)
apiVersion: resource.k8s.io/v1
kind: ResourceSlice
metadata:
  name: gpu-worker-2-gpu.amd.com-5fddf
spec:
  driver: gpu.amd.com
  nodeName: gpu-worker-2
  devices:
  - name: gpu-4-132
    attributes:
      parentDeviceID:
        string: "16912319329091163297"
      parentPciAddr:
        string: "0002:00:01.0"
      partitionProfile:
        string: cpx_nps1
      productName:
        string: AMD_Instinct_MI300X_OAM
      type:
        string: amdgpu-partition
    capacity:
      computeUnits:
        value: "38"
      memory:
        value: 24574Mi

GPU sharing across containers

The NVIDIA driver demo shows two containers sharing one GPU via a ResourceClaimTemplate:

# gpu-test2.yaml (github.com/NVIDIA/k8s-dra-driver-gpu/demo/specs/quickstart/v1/)
apiVersion: v1
kind: Pod
metadata:
  name: pod
  namespace: gpu-test2
spec:
  containers:
  - name: ctr0
    image: ubuntu:22.04
    command: ["bash", "-c"]
    args: ["nvidia-smi -L; trap 'exit 0' TERM; sleep 9999 & wait"]
    resources:
      claims:
      - name: shared-gpu
  - name: ctr1
    image: ubuntu:22.04
    command: ["bash", "-c"]
    args: ["nvidia-smi -L; trap 'exit 0' TERM; sleep 9999 & wait"]
    resources:
      claims:
      - name: shared-gpu
  resourceClaims:
  - name: shared-gpu
    resourceClaimTemplateName: single-gpu

Both containers reference the same claim. When they run nvidia-smi -L, they see the same UUID:

[pod/pod/ctr0] GPU 0: NVIDIA A100-SXM4-40GB (UUID: GPU-4404041a-04cf-1ccf-9e70-f139a9b1e23c)

[pod/pod/ctr1] GPU 0: NVIDIA A100-SXM4-40GB (UUID: GPU-4404041a-04cf-1ccf-9e70-f139a9b1e23c)
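The Pod above references a template named single-gpu that is not shown. A minimal sketch of what such a template could look like follows. It assumes the gpu.nvidia.com DeviceClass mentioned earlier and a request name of gpu, so compare it with the manifest in the NVIDIA repository before using it.

```yaml
# Sketch of the single-gpu template referenced by the Pod (assumed contents).
apiVersion: resource.k8s.io/v1
kind: ResourceClaimTemplate
metadata:
  name: single-gpu
  namespace: gpu-test2        # must be in the same namespace as the Pod
spec:
  spec:
    devices:
      requests:
      - name: gpu             # assumed request name
        exactly:
          deviceClassName: gpu.nvidia.com
```

The template yields one claim per Pod, and both containers list that one claim under resources.claims, which is why they see the same device.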

CEL Filters: Expressive GPU Selection

CEL (Common Expression Language) filters are the heart of DRA's expressiveness. You can filter on any attribute published in the ResourceSlice.

Basic filter

Select all devices from a driver:

selectors:
- cel:
    expression: device.driver == "gpu.nvidia.com"

Attribute filter

Select a specific GPU model:

selectors:
- cel:
    expression: |-
      device.attributes["gpu.amd.com"].productName == "AMD_Instinct_MI300X_OAM" &&
      device.attributes["gpu.amd.com"].partitionProfile == "spx_nps1"

Capacity filter

Request GPUs with minimum memory:

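# compareTo(...) >= 0 selects devices with at least 64Gi;
# == quantity("64Gi") would match only devices with exactly 64Gi.
selectors:
- cel:
    expression: |-
      device.attributes["driver.example.com"].type == "gpu" &&
      device.capacity["driver.example.com"].memory.compareTo(quantity("64Gi")) >= 0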

Topology constraint

Allocate two GPU partitions from the same parent device:

# ResourceClaim with constraints (github.com/ROCm/k8s-gpu-dra-driver)
apiVersion: resource.k8s.io/v1
kind: ResourceClaim
metadata:
  name: two-partitions-same-parent
  namespace: gpu-test
spec:
  devices:
    constraints:
    - matchAttribute: "gpu.amd.com/deviceID"
      requests: ["gpu1", "gpu2"]
    requests:
    - name: gpu1
      exactly:
        deviceClassName: gpu.amd.com
        selectors:
        - cel:
            expression: device.attributes["gpu.amd.com"].type == "amdgpu-partition"
    - name: gpu2
      exactly:
        deviceClassName: gpu.amd.com
        selectors:
        - cel:
            expression: device.attributes["gpu.amd.com"].type == "amdgpu-partition"

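The matchAttribute constraint ensures both gpu1 and gpu2 report the same value for gpu.amd.com/deviceID, which this example treats as identifying the shared physical GPU. The partition sample shown earlier lists parentDeviceID rather than deviceID, so check which attribute your driver version publishes on partitions and match on that one.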
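The same mechanism should cover the "same PCIe root complex" case, using the resource.kubernetes.io/pcieRoot attribute that appears in the representative ResourceSlice earlier. The following is a sketch under that assumption; the claim and request names are hypothetical, and it only works if the driver publishes that attribute on every candidate device.

```yaml
# Hypothetical claim: two GPUs that share a PCIe root complex.
apiVersion: resource.k8s.io/v1
kind: ResourceClaim
metadata:
  name: two-gpus-same-pcie-root
spec:
  devices:
    constraints:
    - matchAttribute: "resource.kubernetes.io/pcieRoot"   # must be equal across both requests
      requests: ["gpu-a", "gpu-b"]
    requests:
    - name: gpu-a
      exactly:
        deviceClassName: gpu.amd.com
    - name: gpu-b
      exactly:
        deviceClassName: gpu.amd.com
```

If no two free devices share a value for the attribute, the claim stays unallocated and any Pod that references it remains Pending.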

Gotchas

DRA requires Kubernetes v1.34+ — check before deploying. Run kubectl get deviceclasses. If you get error: the server doesn't have a resource type "deviceclasses", your cluster is too old or the API group is disabled.

ResourceClaim must exist before Pod references it — unless you use ResourceClaimTemplate. If you manually create a ResourceClaim and reference it in a Pod, the Pod won't schedule until the claim exists. ResourceClaimTemplate avoids this by generating claims automatically.

Admin access requires namespace labeling — the adminAccess field in a ResourceClaim grants privileged device access. Starting with Kubernetes v1.34, you can only use this field in namespaces labeled resource.kubernetes.io/admin-access: "true" (case-sensitive).
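A minimal sketch of that setup is shown below. The namespace, template, and request names are hypothetical, the nvidia-gpu class is the one defined earlier in this article, and depending on your Kubernetes version the DRAAdminAccess feature gate may also need to be enabled.

```yaml
# Hypothetical namespace labeled to allow adminAccess claims.
apiVersion: v1
kind: Namespace
metadata:
  name: gpu-monitoring
  labels:
    resource.kubernetes.io/admin-access: "true"   # value is case-sensitive
---
# Hypothetical template for a monitoring agent that inspects every GPU.
apiVersion: resource.k8s.io/v1
kind: ResourceClaimTemplate
metadata:
  name: gpu-admin-access
  namespace: gpu-monitoring
spec:
  spec:
    devices:
      requests:
      - name: all-gpus
        exactly:
          deviceClassName: nvidia-gpu
          allocationMode: All
          adminAccess: true     # rejected in namespaces without the label
```

Restrict who can create claims in that namespace, since adminAccess grants access to devices that may already be in use by other workloads.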

Device taints can block scheduling — DRA supports device taints (alpha in v1.33). A device with a NoSchedule taint won't be allocated. A NoExecute taint evicts Pods already using the device. Taints are applied via DeviceTaintRule objects.
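For illustration, a DeviceTaintRule that keeps new claims off a single device might look like the sketch below. It assumes the alpha API is served as resource.k8s.io/v1alpha3 and that the DRADeviceTaints feature gate is enabled; the rule name, pool name, and taint key are hypothetical, and the device name is borrowed from the representative AMD ResourceSlice.

```yaml
# Hypothetical DeviceTaintRule: block new allocations of one device.
apiVersion: resource.k8s.io/v1alpha3   # assumed alpha version; verify with kubectl api-resources
kind: DeviceTaintRule
metadata:
  name: drain-gpu-56-184
spec:
  deviceSelector:
    driver: gpu.amd.com
    pool: gpu-worker-2                 # assumed pool name; check the ResourceSlice
    device: gpu-56-184
  taint:
    key: example.com/maintenance       # illustrative key
    value: planned
    effect: NoSchedule                 # NoExecute would also evict Pods using the device
```

Deleting the rule removes the taint, so the device becomes allocatable again without touching the driver.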

Pre-scheduled Pods bypass the scheduler — if you set spec.nodeName directly, the scheduler doesn't run. ResourceClaims may not be allocated. Use a node selector instead:

spec:
  nodeSelector:
    kubernetes.io/hostname: name-of-the-intended-node

Binding conditions wait up to 600 seconds — alpha feature in v1.34. External device preparation (e.g., fabric-attached GPUs) can delay binding. The scheduler waits for conditions like dra.example.com/is-prepared to become True. Configure the timeout in KubeSchedulerConfiguration.
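Binding conditions are declared by the driver on each device in its ResourceSlice, not by the workload. The fragment below is a sketch of how a device entry might declare them. The device name and the failure condition name are assumptions, and the fields are only honored when the alpha feature is enabled.

```yaml
# Fragment of a driver-published ResourceSlice device entry (illustrative).
devices:
- name: gpu-1
  bindsToNode: true
  bindingConditions:                   # all must become True before the Pod is bound
  - dra.example.com/is-prepared
  bindingFailureConditions:            # any becoming True aborts the binding
  - dra.example.com/preparing-failed   # hypothetical condition name
```

The driver or an external controller is expected to set these conditions on the allocated claim while the scheduler waits.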

Wrap-up

DRA replaces the count-based Device Plugin model with a claim-based API that treats GPUs as first-class, attribute-aware resources. You create DeviceClass objects to categorize devices, ResourceClaim or ResourceClaimTemplate to request them, and CEL filters to express precise requirements.

The migration path is straightforward: install the DRA driver, create or use existing DeviceClass objects, and update your workload manifests to use resources.claims instead of resources.limits. Check each driver's support status before relying on it in production: as noted above, the NVIDIA driver officially supports ComputeDomains for MNNVL while its GPU allocation is still beta as of v25.3.0, and the AMD driver publishes detailed per-GPU attributes.

For AI workloads, DRA is the foundation for more advanced scheduling patterns. Kueue uses DRA for batch workload management. HAMi provides GPU virtualization on top of DRA. The Gateway API inference extension integrates with DRA for model-aware routing.

Start by deploying the NVIDIA or AMD DRA driver on a test cluster. Migrate a single GPU workload and compare the scheduling behavior against your current Device Plugin setup. The claim-based model takes more YAML upfront, but you get back something Device Plugins never offered: the ability to ask for exactly what you need.

The claim-based model restores Kubernetes' core promise: you declare what you need, and the scheduler handles the rest. No more node labels for GPU models. No more manual coordination between cluster admins and workload operators. Just declarative, attribute-aware GPU allocation.
