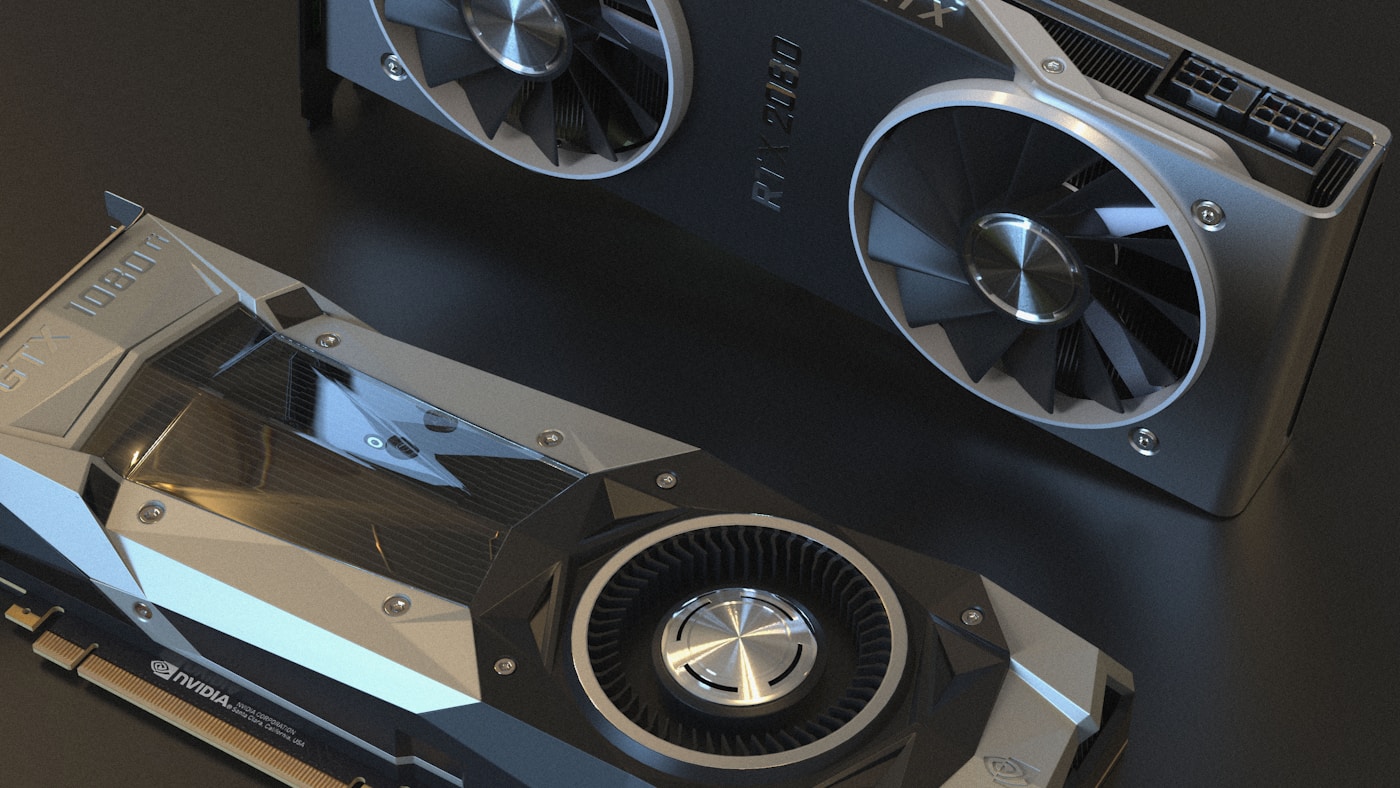

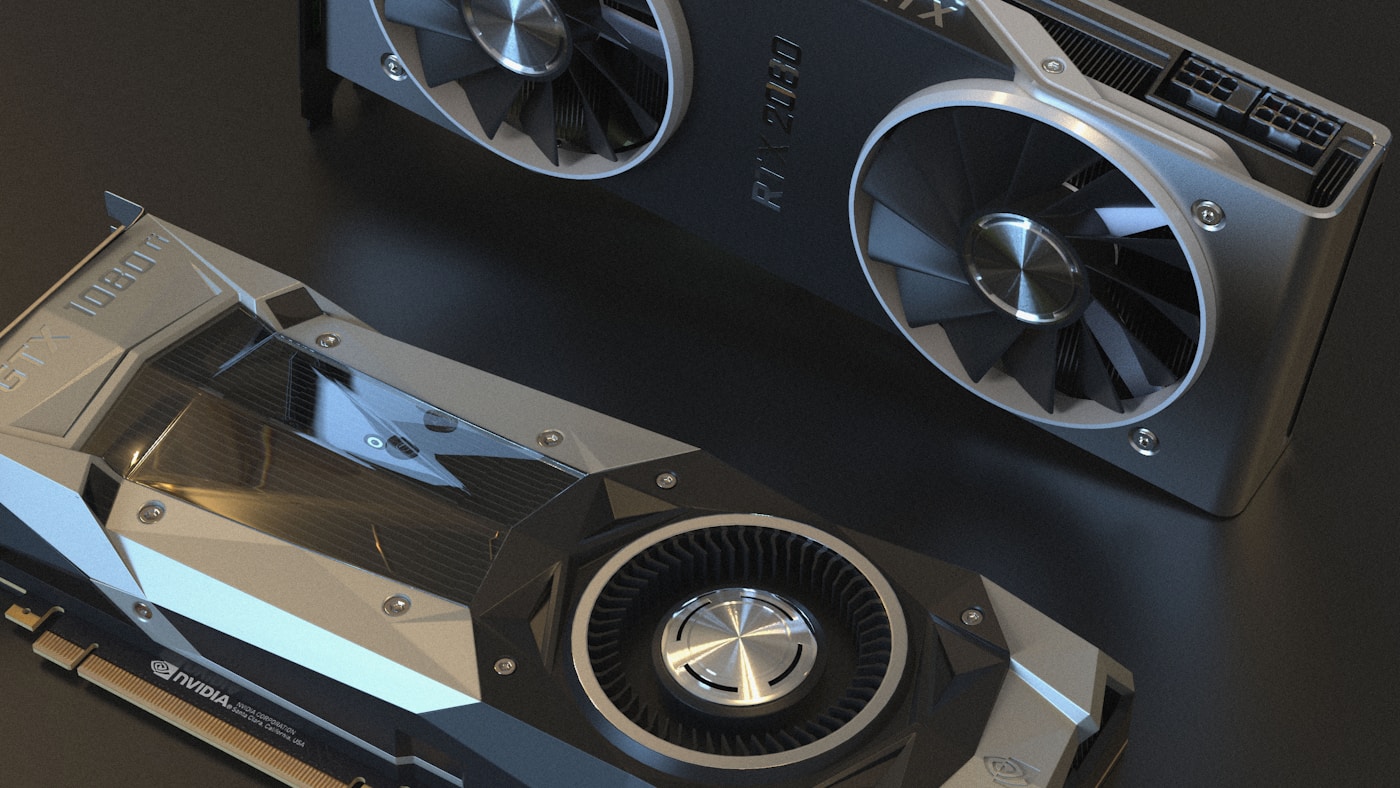

GPU Sharing Strategies for Multi-Tenant Kubernetes: MIG, Time-Slicing, and MPS

NVIDIA's GPU sharing mechanisms — MIG, time-slicing, and MPS — are gaining traction as teams run multiple inference workloads per GPU.

by KubeDojo

5 posts

NVIDIA's GPU sharing mechanisms — MIG, time-slicing, and MPS — are gaining traction as teams run multiple inference workloads per GPU.

NVIDIA released AI Cluster Runtime, an open-source project providing validated, version-locked Kubernetes configurations for GPU infrastructure.

NVIDIA open-sourced KAI Scheduler (Apache 2.0), a Kubernetes-native GPU scheduling solution originally from the Run:ai platform.

Production deployment patterns for NVIDIA Dynamo 1.0 on EKS and GKE — disaggregated serving, KV-aware routing, and gotchas from real deployments.

Dedicated GPU NodePools, cold start fixes for 10GB+ AI images, disruption protection for training jobs, and gang scheduling for distributed workloads.