GPU Sharing Strategies for Multi-Tenant Kubernetes: MIG, Time-Slicing, and MPS

Introduction

Three GPU sharing mechanisms. Three isolation models. One ecosystem where cost pressure forces hard choices.

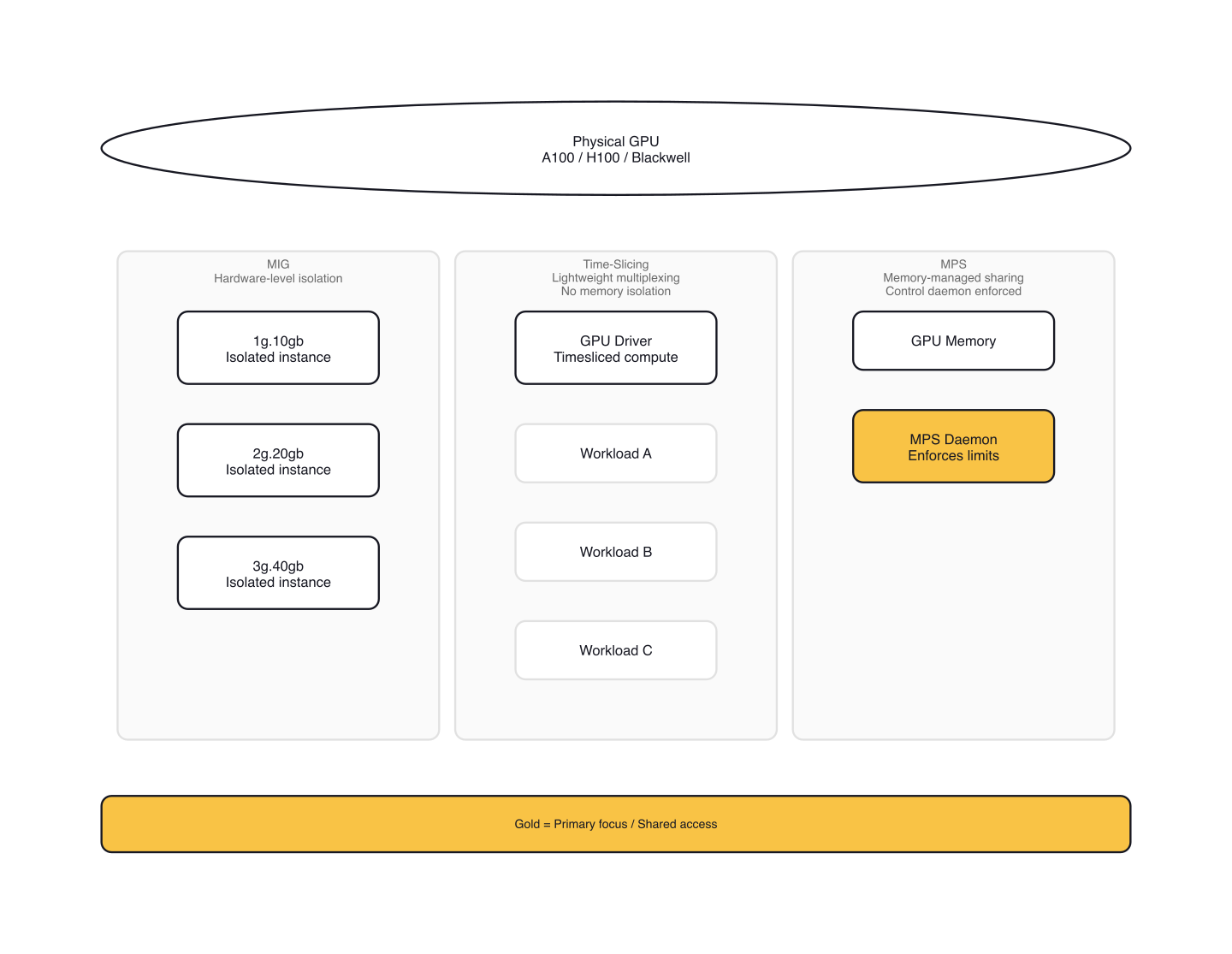

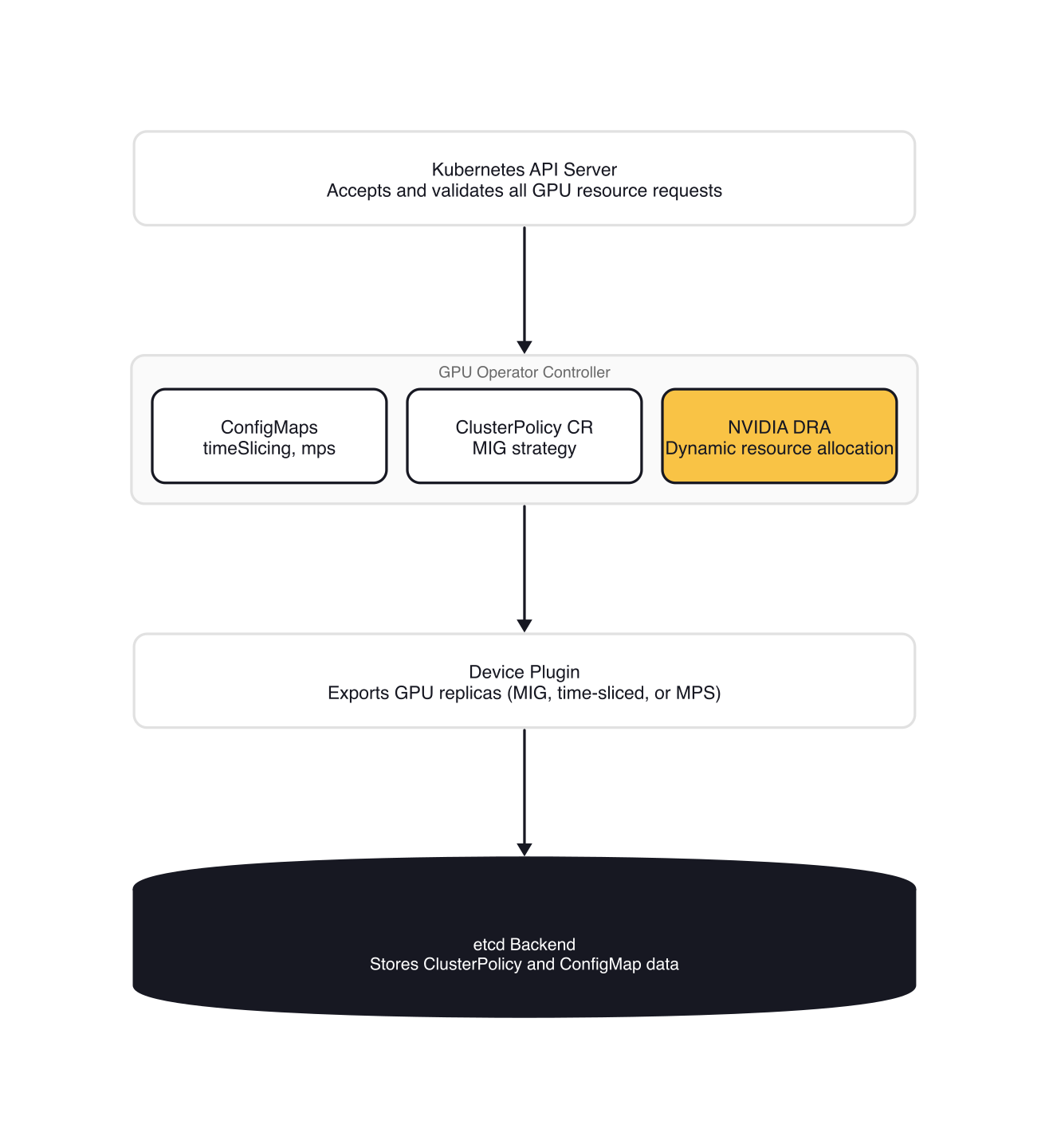

When teams run multiple inference workloads per GPU, they need to understand the trade-offs between isolation, throughput, and resource utilization. MIG partitioning provides hardware-level isolation. Time-slicing multiplexes workloads with no memory isolation. MPS shares memory across processes with a control daemon. All three are orchestrated through the NVIDIA GPU Operator with DRA integration.

MIG Partitioning: Hardware-Level Isolation

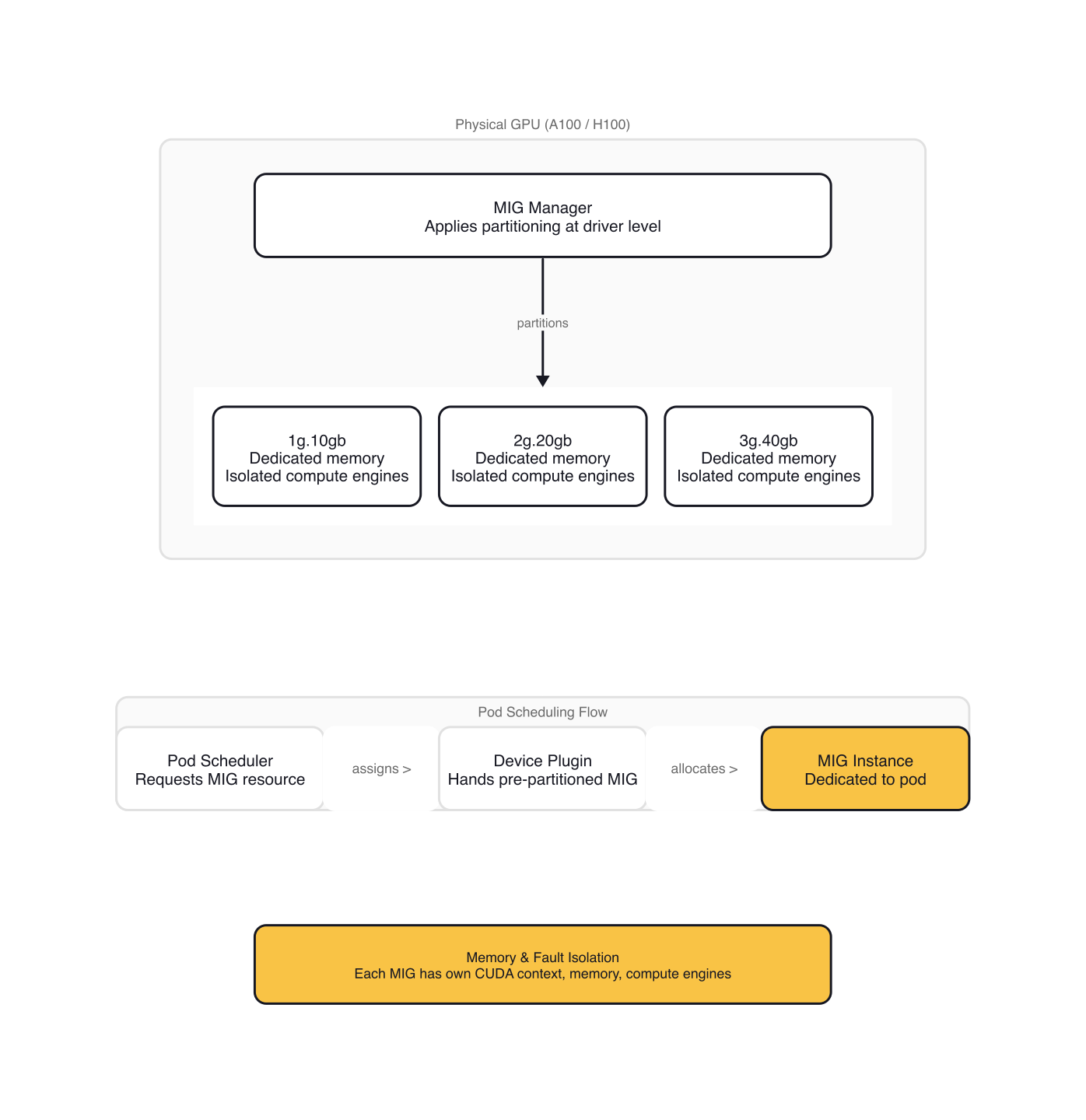

Multi-Instance GPU (MIG) partitions a single physical GPU into smaller, isolated instances with their own memory, compute engines, and I/O paths. This is the only GPU sharing mechanism that provides true hardware-level isolation. MIG is available on supported data-center GPUs from the Ampere architecture onward, including A100, H100, H200, and Blackwell GPUs.

MIG Manager, deployed by the GPU Operator, applies the partitioning at the driver level. You then request MIG-sliced resources like nvidia.com/mig-1g.10gb from your application. Kubernetes schedules the pod on a node with that MIG profile, and the device plugin hands the pod a pre-partitioned MIG instance.

Single vs Mixed MIG Strategy

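The GPU Operator supports two MIG strategies: single and mixed. Single MIG applies one profile to all GPUs on a node and advertises the resulting slices under the plain nvidia.com/gpu resource name. Mixed MIG applies multiple profiles, letting you share a node's GPUs in different ways, and advertises each profile under its own resource name, such as nvidia.com/mig-1g.10gb.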

Set the strategy via clusterpolicies.nvidia.com/cluster-policy:

# Configure single MIG strategy: one profile per GPU
apiVersion: nvidia.com/v1
kind: ClusterPolicy
metadata:
  name: cluster-policy
spec:
  mig:
    strategy: single # or: mixed
  migManager:
    config:
      name: default-mig-parted-config
  devicePlugin:
    enabled: true
    config:
      name: ""
      default: ""
  gfd:
    enabled: true # Enables GPU Feature Discovery for automatic node labeling

Single MIG applies one profile, like all-1g.10gb. Label nodes with the profile name:

$ kubectl label nodes <node-name> nvidia.com/mig.config=all-1g.10gb --overwrite

MIG Manager applies the configuration, which terminates all GPU pods and requires a node reboot in some cases (especially on cloud providers). Monitor the MIG Manager pod:

$ kubectl logs -n gpu-operator -l app=nvidia-mig-manager -c nvidia-mig-manager

Applying the selected MIG config to the node
time="2024-05-14T18:31:26Z" level=debug msg="Parsing config file..."
time="2024-05-14T18:31:26Z" level=debug msg="Selecting specific MIG config..."
time="2024-05-14T18:31:26Z" level=debug msg="Running apply-start hook"
time="2024-05-14T18:31:26Z" level=debug msg="Checking current MIG mode..."
time="2024-05-14T18:31:26Z" level=debug msg="Walking MigConfig for (devices=all)"
MIG configuration applied successfully
Restarting validator pod to re-run all validations
pod "nvidia-operator-validator-kmncw" deleted
node/node-name labeled
Changing the 'nvidia.com/mig.config.state' node label to 'success'

Mixed MIG applies multiple profiles. Label nodes with all-balanced or a custom profile:

$ kubectl label nodes <node-name> nvidia.com/mig.config=all-balanced --overwrite

This creates MIG slices like 1g.10gb, 2g.20gb, and 3g.40gb from each GPU. Kubernetes advertises them as separate extended resources:

$ kubectl get node <node-name> -o jsonpath='{.metadata.labels}' | jq .

{
  "nvidia.com/gpu.present": "true",
  "nvidia.com/gpu.product": "NVIDIA-H100-80GB-HBM3",
  "nvidia.com/gpu.sharing-strategy": "none",
  "nvidia.com/mig-1g.10gb.count": "2",
  "nvidia.com/mig-1g.10gb.engines.copy": "1",
  "nvidia.com/mig-1g.10gb.engines.decoder": "1",
  "nvidia.com/mig-1g.10gb.engines.encoder": "0",
  "nvidia.com/mig-1g.10gb.engines.jpeg": "1",
  "nvidia.com/mig-1g.10gb.engines.ofa": "0",
  "nvidia.com/mig-1g.10gb.memory": "9984",
  "nvidia.com/mig-1g.10gb.multiprocessors": "16",
  "nvidia.com/mig-1g.10gb.product": "NVIDIA-H100-80GB-HBM3-MIG-1g.10gb",
  "nvidia.com/mig-2g.20gb.count": "1",
  "nvidia.com/mig-3g.40gb.count": "1",
  "nvidia.com/mig.config.state": "success",
  "nvidia.com/mig.strategy": "mixed",
  "nvidia.com/mps.capable": "false"
}
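
When a node carries this many labels, it can help to pull out just the per-profile device counts. The following is a minimal sketch, assuming the labels have already been loaded into a Python dict (for example from the jq output above); the helper name mig_counts is hypothetical and not part of any NVIDIA tooling.

```python
import re

# Labels copied from the sample output above, trimmed for brevity.
labels = {
    "nvidia.com/gpu.product": "NVIDIA-H100-80GB-HBM3",
    "nvidia.com/mig-1g.10gb.count": "2",
    "nvidia.com/mig-1g.10gb.memory": "9984",
    "nvidia.com/mig-2g.20gb.count": "1",
    "nvidia.com/mig-3g.40gb.count": "1",
    "nvidia.com/mig.strategy": "mixed",
}

# Matches keys such as "nvidia.com/mig-1g.10gb.count" and captures the profile name.
COUNT_LABEL = re.compile(r"^nvidia\.com/mig-(\d+g\.\d+gb)\.count$")


def mig_counts(node_labels):
    """Return {profile: count} for every MIG count label found on a node."""
    counts = {}
    for key, value in node_labels.items():
        match = COUNT_LABEL.match(key)
        if match:
            counts[match.group(1)] = int(value)
    return counts


print(mig_counts(labels))
```

The result maps each profile to the number of instances the node advertises, which is the figure to compare against the pods you plan to schedule.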

Request MIG resources in your pod spec:

# Request a MIG-sliced GPU
apiVersion: v1
kind: Pod
metadata:
  name: mig-inference-pod
spec:
  restartPolicy: OnFailure
  containers:
  - name: inference
    image: nvidia/samples:vectoradd-cuda11.2.1
    resources:
      limits:
        # Per-profile resource name, advertised under the mixed strategy;
        # under the single strategy the same slice is requested as nvidia.com/gpu
        nvidia.com/mig-1g.10gb: 1
  nodeSelector:
    nvidia.com/mig.config: all-1g.10gb

MIG provides memory and fault isolation. Each MIG instance has its own CUDA context, memory space, and compute engines. A crash or kernel fault in one MIG instance doesn't affect others on the same GPU.

Custom MIG Configurations

The default MIG profiles come from the default-mig-parted-config ConfigMap. Create a custom ConfigMap for fine-grained control:

apiVersion: v1
kind: ConfigMap
metadata:
  name: custom-mig-config
data:
  config.yaml: |
    version: v1
    mig-configs:
      all-disabled:
        - devices: all
          mig-enabled: false
      five-1g-one-2g:
        - devices: all
          mig-enabled: true
          mig-devices:
            "1g.10gb": 5
            "2g.20gb": 1

Patch the ClusterPolicy to use your custom ConfigMap:

$ kubectl patch clusterpolicies.nvidia.com/cluster-policy \
    --type='json' \
    -p='[{"op":"replace", "path":"/spec/migManager/config/name", "value":"custom-mig-config"}]'

Label nodes with your custom profile:

$ kubectl label nodes <node-name> nvidia.com/mig.config=five-1g-one-2g --overwrite

MIG Manager reads the custom ConfigMap and applies the geometry. The node labels reflect the new MIG devices:

$ kubectl get node <node-name> -o jsonpath='{.metadata.labels}' | jq .

{
  "nvidia.com/gpu.count": "6",
  "nvidia.com/gpu.product": "NVIDIA-H100-80GB-HBM3",
  "nvidia.com/mig-1g.10gb.count": "5",
  "nvidia.com/mig-2g.20gb.count": "1",
  "nvidia.com/mig.capable": "true",
  "nvidia.com/mig.config.state": "success",
  "nvidia.com/mig.strategy": "mixed",
  "nvidia.com/mps.capable": "false"
}
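
Before labeling a node with a custom geometry such as five-1g-one-2g, a quick arithmetic check can catch configurations that cannot fit. The sketch below assumes a GPU with 7 compute slices and 80 GB of memory (the H100 80GB used in this article); the constants and function names are illustrative. Passing this check is necessary but not sufficient, because the driver also restricts where each profile can be placed.

```python
import re

# Assumption: limits for the 80 GB H100 used in this article.
MAX_COMPUTE_SLICES = 7
MAX_MEMORY_GB = 80

PROFILE = re.compile(r"(\d+)g\.(\d+)gb")


def parse_profile(name):
    """Split a profile name such as '2g.20gb' into (compute_slices, memory_gb)."""
    match = PROFILE.fullmatch(name)
    if match is None:
        raise ValueError(f"unrecognized MIG profile: {name}")
    return int(match.group(1)), int(match.group(2))


def geometry_totals(mig_devices):
    """Sum compute slices and memory over a {profile: count} mapping."""
    compute = memory = 0
    for name, count in mig_devices.items():
        slices, gigabytes = parse_profile(name)
        compute += slices * count
        memory += gigabytes * count
    return compute, memory


def fits(mig_devices):
    """True when the totals stay within the assumed per-GPU limits."""
    compute, memory = geometry_totals(mig_devices)
    return compute <= MAX_COMPUTE_SLICES and memory <= MAX_MEMORY_GB


five_1g_one_2g = {"1g.10gb": 5, "2g.20gb": 1}
print(geometry_totals(five_1g_one_2g), fits(five_1g_one_2g))
```

For five-1g-one-2g the totals are 7 compute slices and 70 GB, so the geometry uses every compute slice and leaves 10 GB of memory unassigned.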

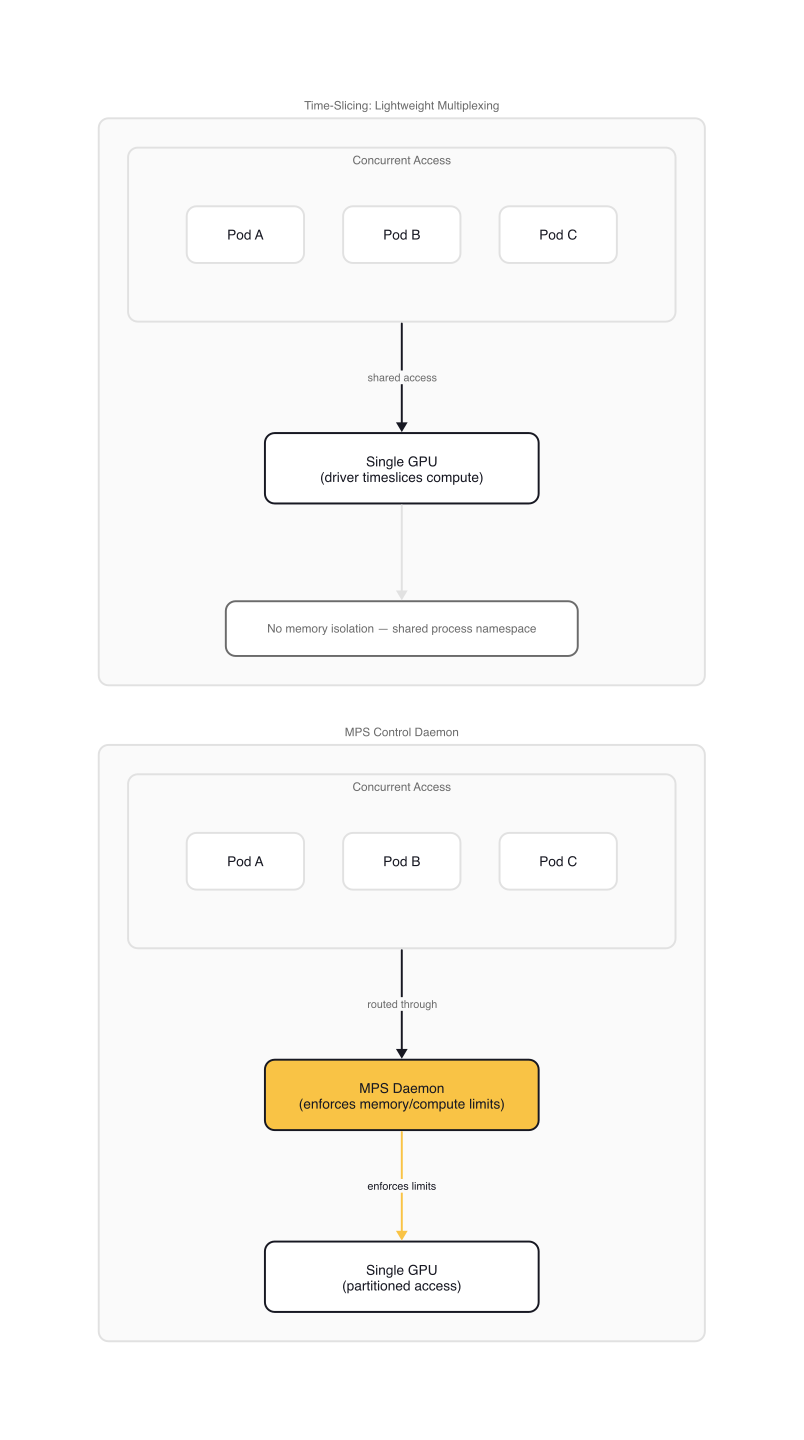

Time-Slicing: Lightweight Multiplexing

Time-slicing oversubscribes GPUs by interleaving workloads across time. Multiple pods share the same GPU, and the GPU driver time-slices compute between them. There is no memory or fault isolation between those pods: they all allocate from the same GPU memory, and a fault in one workload can affect the others.

Time-slicing works on all GPU generations, not just Ampere and later. It suits inference workloads that can tolerate variable latency and don't need strict isolation.

Configuring Time-Slicing

Create a ConfigMap with time-slicing settings:

apiVersion: v1
kind: ConfigMap
metadata:
  name: time-slicing-config
data:
  any: |-
    version: v1
    flags:
      migStrategy: none
    sharing:
      timeSlicing:
        renameByDefault: false
        failRequestsGreaterThanOne: false
        resources:
        - name: nvidia.com/gpu
          replicas: 4

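The any key is an arbitrary config name; it applies to every GPU node once it is set as the default in the ClusterPolicy. Add further keys, such as a100-40gb, for configurations that you select per node with the nvidia.com/device-plugin.config label.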

Key settings:

renameByDefault=false: Uses nvidia.com/gpu with a -SHARED suffix on the product label

failRequestsGreaterThanOne=false: Allows requesting multiple replicas (not recommended)

Apply the ConfigMap to the GPU Operator namespace:

$ kubectl create -n gpu-operator -f time-slicing-config.yaml

configmap/time-slicing-config created

Patch the device plugin to use the ConfigMap:

$ kubectl patch clusterpolicies.nvidia.com/cluster-policy -n gpu-operator --type=merge \
    -p '{"spec": {"devicePlugin": {"config": {"name": "time-slicing-config", "default": "any"}}}}'

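To override the default for a specific node, label it with the name of a key in the ConfigMap. This example assumes the ConfigMap also defines a tesla-t4 key: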

$ kubectl label node <node-name> nvidia.com/device-plugin.config=tesla-t4

Verifying Time-Slicing

Check the node labels after applying the config:

$ kubectl describe node <node-name>
...
Labels:
  nvidia.com/gpu.count=4
  nvidia.com/gpu.product=Tesla-T4-SHARED
  nvidia.com/gpu.replicas=4
Capacity:
  nvidia.com/gpu: 16
Allocatable:
  nvidia.com/gpu: 16
...

The nvidia.com/gpu.replicas label shows the oversubscription factor. The -SHARED suffix on the product label indicates time-slicing is active.
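
The relationship between these values is simple multiplication: the advertised capacity is the physical GPU count times the replica factor. A small sketch, assuming the label values have been read into a dict (the function name expected_capacity is hypothetical), makes the check explicit:

```python
# Values copied from the `kubectl describe node` output above.
labels = {
    "nvidia.com/gpu.count": "4",
    "nvidia.com/gpu.replicas": "4",
}
reported_capacity = 16


def expected_capacity(node_labels):
    """Physical GPU count multiplied by the time-slicing replica factor."""
    physical = int(node_labels["nvidia.com/gpu.count"])
    replicas = int(node_labels["nvidia.com/gpu.replicas"])
    return physical * replicas


# True when the advertised capacity matches the labels.
print(expected_capacity(labels) == reported_capacity)
```

If the two numbers disagree, the device plugin has most likely not picked up the time-slicing config yet.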

Deploy a workload to validate:

apiVersion: apps/v1
kind: Deployment
metadata:
  name: time-slicing-verification
  labels:
    app: time-slicing-verification
spec:
  replicas: 5
  selector:
    matchLabels:
      app: time-slicing-verification
  template:
    metadata:
      labels:
        app: time-slicing-verification
    spec:
      restartPolicy: Always
      tolerations:
      - key: nvidia.com/gpu
        operator: Exists
        effect: NoSchedule
      containers:
      - name: cuda-sample-vector-add
        image: "nvcr.io/nvidia/k8s/cuda-sample:vectoradd-cuda11.7.1-ubuntu20.04"
        command: ["/bin/bash", "-c", "--"]
        args:
        - while true; do /cuda-samples/vectorAdd; done
        resources:
          limits:
            nvidia.com/gpu: 1

All five replicas run concurrently on the node's shared GPUs, and pods that land on the same physical GPU take turns on its compute time. The vectorAdd sample completes successfully in each pod:

$ kubectl logs deploy/time-slicing-verification
Found 5 pods, using pod/time-slicing-verification-7cdc7f87c5-s8qwk
[Vector addition of 50000 elements]
Copy input data from the host memory to the CUDA device
CUDA kernel launch with 196 blocks of 256 threads
Copy output data from the CUDA device to the host memory
Test PASSED
Done

Time-Slicing Limitations

warning: DCGM-Exporter doesn't support container metrics when GPU time-slicing is enabled. You lose per-container GPU metrics, which complicates observability for production deployments.

When failRequestsGreaterThanOne=true, pods requesting more than one GPU replica fail with UnexpectedAdmissionError, so the failure is explicit. When it's false (the default), such requests succeed, but a pod that asks for more replicas does not get a proportionally larger share of compute time.

tip: Set failRequestsGreaterThanOne=true for production deployments to catch misconfigurations early. Request exactly one replica per pod.
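
The two behaviors of the flag can be summarized as a small decision function. This is only a model of the behavior described in this section, not the device plugin's actual code, and the function name admit is hypothetical:

```python
def admit(requested_replicas, fail_requests_greater_than_one):
    """Model the documented outcome of a time-sliced GPU request."""
    if requested_replicas > 1 and fail_requests_greater_than_one:
        # Explicit failure: the pod is rejected at admission time.
        return "UnexpectedAdmissionError"
    # The request is accepted; asking for more than one replica
    # does not buy a proportionally larger share of compute time.
    return "admitted"


print(admit(1, True))
print(admit(2, True))
print(admit(2, False))
```

Only the middle case is rejected, which is why enabling the flag surfaces oversized requests instead of letting them pass silently.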

MPS Sharing: Memory-Managed Oversubscription

Multi-Process Service (MPS) lets multiple processes share one GPU concurrently. The MPS control daemon enforces memory and compute limits per partition. MPS doesn't partition the GPU at the hardware level. Instead, it manages memory allocations across multiple CUDA contexts.

warning: MPS and MIG are mutually exclusive on the same GPU. MPS requires native GPU access to enforce memory limits. If you enable MIG, MPS cannot run on MIG-partitioned GPUs. Choose based on your isolation requirements: use MIG for hardware isolation, MPS for memory-managed sharing on non-MIG GPUs.

Enabling MPS

Enable MPS via the device plugin ConfigMap:

apiVersion: v1
kind: ConfigMap
metadata:
  name: mps-config
data:
  any: |-
    version: v1
    flags:
      migStrategy: none
    sharing:
      mps:
        resources:
        - name: nvidia.com/gpu
          replicas: 10

The MPS control daemon runs in its own pod in the GPU Operator namespace, alongside the device plugin. It enforces memory and compute limits per partition, throttling processes that exceed their allocation. This prevents one workload from exhausting GPU memory and starving others.
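
For capacity planning, it helps to estimate what each partition receives. The sketch below assumes the limits are split evenly across replicas, which is an assumption made for illustration rather than something this article's configuration states; the 80 GB figure and the function name per_replica_share are also illustrative.

```python
def per_replica_share(total_memory_gb, replicas):
    """Return (memory_gb, compute_percent) per replica under an even split.

    Assumption: memory and compute are divided equally across replicas.
    """
    return total_memory_gb / replicas, 100 / replicas


# An 80 GB GPU shared with replicas: 10, as in the ConfigMap above.
memory_gb, compute_percent = per_replica_share(80, 10)
print(memory_gb, compute_percent)
```

Under that assumption, each of the ten replicas would be limited to roughly 8 GB of memory and 10% of compute, so a model that needs more than that per process would not fit this replica count.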

MPS works best for inference workloads with predictable memory usage. Training workloads with volatile memory spikes are a poor fit — the daemon throttles aggressively when limits are hit. MPS and time-slicing are mutually exclusive on the same GPU — configure one or the other per node.

MPS vs MIG

MPS and MIG target different isolation levels. MIG partitions at the hardware level — dedicated memory, compute engines, and fault domains per instance. MPS manages memory allocation in software across CUDA contexts sharing the same GPU.

The two are mutually exclusive on a given GPU. MIG partitions the hardware; MPS needs full GPU access to manage memory across processes. In a cluster, you can run MIG on some nodes and MPS on others, but never both on the same GPU.

Gotchas and Lessons Learned

MIG Requires Node Reboot

Changing MIG geometry requires a node reboot on some platforms, especially cloud providers. MIG Manager sets nvidia.com/mig.config.state: rebooting when a reboot is required.

Plan MIG configuration changes during maintenance windows. Cordon nodes before applying MIG changes to avoid scheduling surprises.

Time-Slicing Request Behavior

When failRequestsGreaterThanOne=true, pods requesting more than one replica fail with UnexpectedAdmissionError. When false (the default), requests succeed silently but without guaranteed proportional compute time — multiple replicas share the same GPU context with no fairness guarantees.

DRA Requires Kubernetes 1.34+

The NVIDIA DRA Driver for GPUs requires Kubernetes 1.34.2 or newer. Earlier versions don't support the DeviceClass resources that DRA uses for GPU and MIG allocation. After installing the NVIDIA DRA driver, verify the device classes exist:

$ kubectl get deviceclass
NAME             AGE
gpu.nvidia.com   5m
mig.nvidia.com   5m

Resource Request Confusion

Under the mixed strategy, you request MIG resources by profile name, such as nvidia.com/mig-1g.10gb, not nvidia.com/gpu. The device plugin doesn't advertise nvidia.com/gpu for GPUs that are partitioned this way.

$ kubectl describe node <node-name>
...
Capacity:
  nvidia.com/mig-1g.10gb: 5
  nvidia.com/mig-2g.20gb: 1
...

Request MIG resources in your pod spec, not the base nvidia.com/gpu resource.

Choosing the Right Strategy

| | MIG | Time-Slicing | MPS |

|---|---|---|---|

| Isolation | Hardware (dedicated memory, compute, I/O) | None (shared GPU context) | Software (memory limits enforced) |

| GPU generations | Ampere+ (A100, H100, H200, Blackwell) | All | All |

| Memory isolation | Yes — per-instance memory space | No — shared memory | Enforced limits per process |

| Reboot required | Sometimes (geometry changes) | No | No |

| Observability | Full DCGM metrics per instance | No per-container metrics | Limited |

| Best for | Multi-tenant, strict SLA | Throughput, inference batching | Latency-sensitive, predictable memory |
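
The table can be condensed into a rough decision helper. This is a simplified sketch of the guidance in this article, not an official selection rule; the function and parameter names are hypothetical, and real decisions should also weigh observability and reboot tolerance.

```python
def choose_strategy(needs_hard_isolation, mig_capable_gpu, needs_memory_limits):
    """Pick a sharing strategy from three yes/no requirements."""
    if needs_hard_isolation:
        # Only MIG offers hardware isolation; without MIG-capable
        # hardware, none of the three sharing mechanisms qualifies.
        return "MIG" if mig_capable_gpu else None
    if needs_memory_limits:
        # Software-enforced limits on a non-MIG GPU.
        return "MPS"
    # Default: works on any GPU, no isolation.
    return "time-slicing"


print(choose_strategy(True, True, False))
print(choose_strategy(False, False, True))
print(choose_strategy(False, False, False))
```

A return value of None signals that the workload needs a dedicated GPU rather than a shared one.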

Wrap-up

Start with time-slicing for inference workloads where throughput matters more than isolation — it works on any GPU and requires zero reboots. Move to MIG when you need hard isolation between tenants or SLA-bound workloads on Ampere+ hardware. Use MPS when you need memory-managed sharing on non-MIG GPUs with predictable allocation patterns.

Configure each mechanism through the NVIDIA GPU Operator, validate with kubectl describe node, and request the appropriate resources in your pod specs. Benchmark with your actual workloads before production — GPU utilization improvements of 2-4x are typical when moving from whole-GPU allocation to shared strategies.
