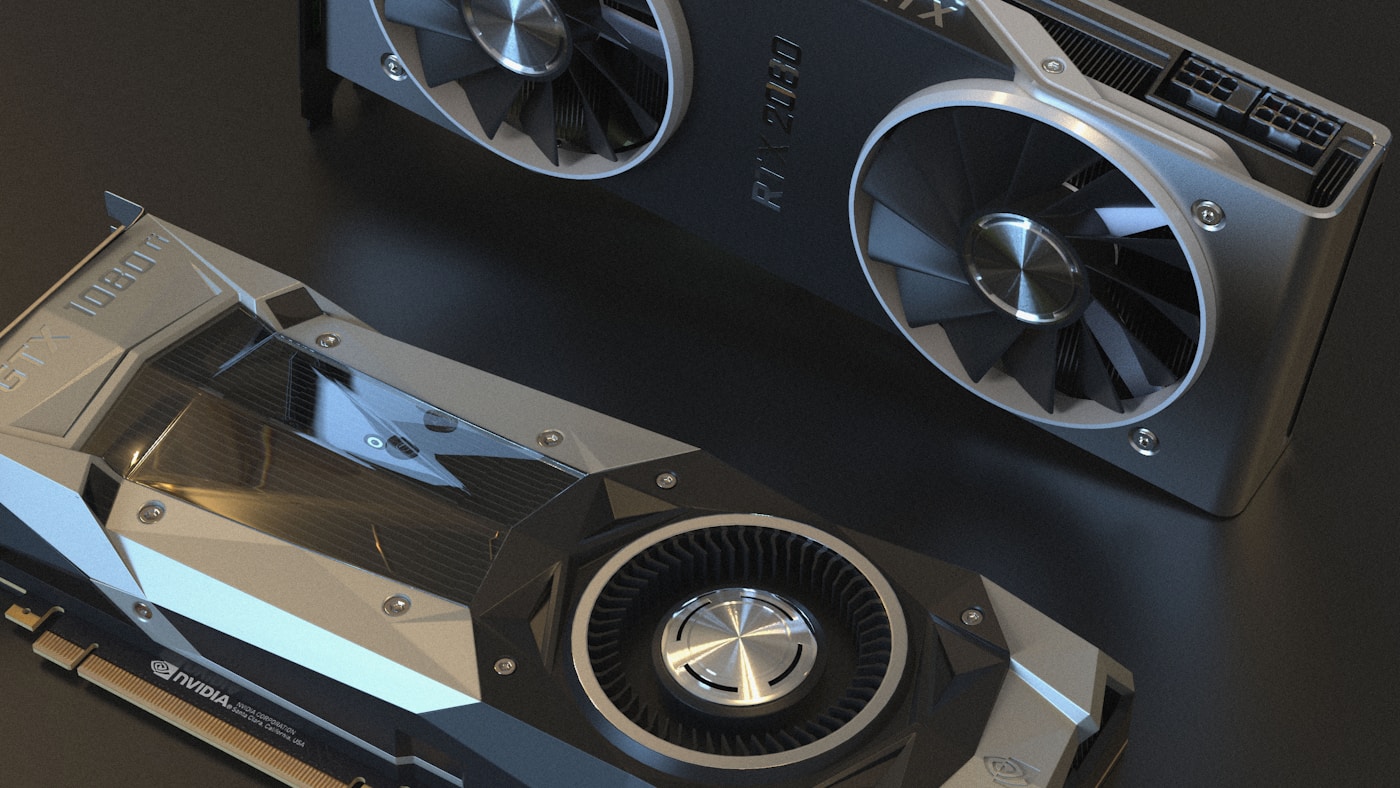

NVIDIA Dynamo 1.0: The Inference Operating System for AI Factories

NVIDIA Dynamo 1.0 entered production at GTC 2026 as the first datacenter-scale inference operating system for AI factories. If you're running LLMs across multiple GPUs or nodes — especially agentic workloads with multi-turn conversations and long contexts — Dynamo solves the coordination problem that single-node inference engines can't touch.

The reported numbers are concrete: 7x higher throughput per GPU on DeepSeek R1 running on GB200 NVL72 (SemiAnalysis benchmark), 2x faster time-to-first-token (TTFT) with KV-aware routing (Baseten, production), and 7x faster model startup via weight streaming (NVIDIA internal measurement). AWS, Google Cloud, CoreWeave, Pinterest, and ByteDance have all deployed Dynamo in production over the past year.

This article breaks down what Dynamo actually does (and doesn't do), walks through the architecture components that matter, shows you how to deploy on EKS and GKE, and surfaces the gotchas that bit early adopters.

What Dynamo Actually Is (and Isn't)

Dynamo sits above inference engines like vLLM, SGLang, and TensorRT-LLM — it doesn't replace them. Think of it as the orchestration layer that turns a cluster of independent GPU workers into a coordinated inference system with shared state.

The distinction matters: if you're serving a single model on a single GPU, Dynamo adds complexity you don't need. Your inference engine alone is sufficient. But once you're running multi-node deployments with KV cache reuse patterns, independent prefill/decode scaling, or automatic scaling to meet SLAs, Dynamo becomes the control plane.

Core capabilities:

- Disaggregated serving — Split prefill (token-parallel, compute-heavy) and decode (autoregressive, latency-sensitive) onto separate GPU pools that scale independently

- KV-aware routing — Track which workers have relevant KV cache blocks, route requests to avoid recomputation

- KV Block Manager (KVBM) — Tiered cache offload: GPU HBM → CPU memory → local SSD → remote S3/Azure blob storage

- Planner autoscaling — Profile workloads against SLA targets (TTFT, ITL), right-size GPU pools for minimum TCO

- Grove — Kubernetes operator for topology-aware gang scheduling on rack-scale systems like NVL72

- ModelExpress — Stream model weights GPU-to-GPU over NVLink/NIXL instead of downloading per-replica

Dynamo's modular design means you can adopt components incrementally. KVBM installs as a standalone pip module into vLLM or TensorRT-LLM without requiring the full stack. Grove integrates with KAI Scheduler or other advanced AI schedulers. The KV-aware router runs as a plugin inside the Kubernetes Inference Gateway.

These capabilities exist because multi-node inference has three hard problems: coordination (how do GPUs share state?), scheduling (which GPU handles which request?), and resource management (how do you minimize TCO while meeting SLAs?). Dynamo's architecture addresses each.

Architecture Deep Dive

Disaggregated Prefill/Decode

LLM inference has two phases with fundamentally different resource profiles. Prefill processes the whole input prompt in parallel: it is compute-bound and benefits from high tensor parallelism. Decode generates tokens autoregressively, one per step: it is latency-sensitive and limited by memory bandwidth and interconnect speed rather than raw compute.

Traditional serving co-locates both phases on the same GPU, creating contention. Long prefills block decode queues. Decode latency spikes when prefill hogs memory.

Dynamo separates them:

```yaml
# Disaggregated deployment topology
prefill:
  replicas: 2
  tensor_parallelism: 8
  gpu_type: NVIDIA GB200
decode:
  replicas: 8
  tensor_parallelism: 4
  gpu_type: NVIDIA GB200
```

The Planner monitors queue depths and request characteristics. If a burst of long-prompt requests arrives, it scales prefill workers independently. Decode-heavy workloads (like multi-turn agents with short inputs, long outputs) get more decode replicas.
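To make the independent scaling concrete, here is a minimal Python sketch of a queue-depth-driven sizing rule. It is not the Planner's actual algorithm: `PoolState`, `desired_replicas`, and the per-replica queue target are hypothetical names and numbers chosen only for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class PoolState:
    """Snapshot of one worker pool (all fields are hypothetical)."""
    replicas: int
    queued_requests: int
    target_queue_per_replica: int  # queue depth one replica is expected to absorb


def desired_replicas(pool: PoolState, min_replicas: int = 1, max_replicas: int = 16) -> int:
    """Return the replica count that brings per-replica queue depth back to target."""
    needed = math.ceil(pool.queued_requests / pool.target_queue_per_replica)
    return max(min_replicas, min(max_replicas, needed))


# A burst of long prompts fills the prefill queue while decode stays quiet.
prefill = PoolState(replicas=2, queued_requests=40, target_queue_per_replica=8)
decode = PoolState(replicas=8, queued_requests=30, target_queue_per_replica=8)

print(desired_replicas(prefill))  # 5
print(desired_replicas(decode))   # 4
```

Each pool is sized from its own queue, so the prefill pool can grow from 2 to 5 replicas while the decode pool shrinks from 8 to 4 in the same decision cycle.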

SemiAnalysis benchmarks show 7x higher throughput per GPU on DeepSeek R1 with disaggregated serving plus wide expert parallelism on GB200 NVL72.

KV-Aware Router

The router tracks KV cache blocks across all workers. When a request arrives, it calculates an overlap score — how much relevant context already lives in each worker's cache.

For multi-turn agents and RAG workloads, this eliminates redundant prefill computation. The same system prompt reused across 20 turns doesn't get recomputed 20 times.

```text
Request: User turn #5 in conversation
Router checks overlap scores:
  Worker A: 85% cache overlap (has turns 1-4)
  Worker B: 0% cache overlap (cold)
  Worker C: 40% cache overlap (has turns 3-4, evicted 1-2)
→ Route to Worker A, skip recomputation
```

Baseten measured 2x faster TTFT on Qwen3-Coder 480B with KV-aware routing enabled. The router also factors in queue depth and expected output sequence length for load balancing.
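The overlap calculation can be sketched as prefix matching over hashed token blocks. This is an illustration under stated assumptions, not Dynamo's router code: the block size, the chained-hash scheme, the `load_weight` penalty, and every function name below are hypothetical. The sketch credits only a contiguous cached prefix, because a KV block is reusable only when every token before it matches.

```python
from typing import Dict, List, Set

BLOCK_SIZE = 4  # tokens per KV block; deliberately tiny for readability


def block_hashes(tokens: List[int], block_size: int = BLOCK_SIZE) -> List[int]:
    """Hash each full block chained with its predecessor, so equal hashes imply equal prefixes."""
    hashes: List[int] = []
    prev = 0
    for start in range(0, len(tokens) - block_size + 1, block_size):
        prev = hash((prev, tuple(tokens[start:start + block_size])))
        hashes.append(prev)
    return hashes


def overlap_score(request_hashes: List[int], cached: Set[int]) -> float:
    """Fraction of the request's blocks covered by the longest cached prefix."""
    if not request_hashes:
        return 0.0
    matched = 0
    for block_hash in request_hashes:
        if block_hash not in cached:
            break
        matched += 1
    return matched / len(request_hashes)


def pick_worker(
    request_hashes: List[int],
    caches: Dict[str, Set[int]],
    queue_depth: Dict[str, int],
    load_weight: float = 0.05,
) -> str:
    """Choose the worker with the best overlap after subtracting a queue-depth penalty."""
    def score(worker: str) -> float:
        return overlap_score(request_hashes, caches[worker]) - load_weight * queue_depth[worker]
    return max(caches, key=score)


request = block_hashes(list(range(20)))  # 5 blocks
caches = {"A": set(request[:4]), "B": set(), "C": set(request[:2])}

print(pick_worker(request, caches, {"A": 2, "B": 0, "C": 0}))   # A
print(pick_worker(request, caches, {"A": 10, "B": 0, "C": 0}))  # C
```

The second call shows the load-balancing side: once Worker A's queue is deep enough, a worker with less cached context but no backlog wins.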

KV Block Manager

KV cache grows linearly with context length and batch size. At 1M+ token contexts, storing everything in GPU HBM becomes prohibitively expensive — both in memory cost and opportunity cost (less room for weights, activations).
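A back-of-the-envelope calculation shows how quickly this adds up. The sketch below uses the standard sizing formula for multi-head or grouped-query attention (one key and one value vector per layer, per KV head, per token). The example numbers assume Llama-3-70B's published shape (80 layers, 8 KV heads, head dimension 128) with an FP16 cache; models that compress their KV cache, such as DeepSeek's MLA-based models, need a different formula.

```python
def kv_cache_bytes(
    num_layers: int,
    num_kv_heads: int,
    head_dim: int,
    seq_len: int,
    batch_size: int = 1,
    bytes_per_elem: int = 2,  # FP16/BF16
) -> int:
    """KV cache size for standard attention: 2 tensors (K and V) per layer."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem * seq_len * batch_size


# Assumed model shape: Llama-3-70B (80 layers, 8 KV heads, head_dim 128), FP16 cache.
per_token = kv_cache_bytes(80, 8, 128, seq_len=1)
one_million = kv_cache_bytes(80, 8, 128, seq_len=1_000_000)

print(f"{per_token / 1024:.0f} KiB per token")            # 320 KiB per token
print(f"{one_million / 2**30:.1f} GiB at 1M tokens")      # 305.2 GiB at 1M tokens
```

Under these assumptions a single 1M-token sequence needs more cache than any one GPU's HBM holds, before counting weights or activations.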

KVBM implements tiered eviction:

- GPU HBM — Active blocks, pinned blocks for multi-turn agents

- CPU memory — Recently evicted blocks, fast recall

- Local NVMe SSD — Cold blocks, LRU eviction

- Remote object storage (S3/Azure blob) — Archive tier, petabyte-scale

KVBM emits events whenever blocks move between tiers. The router's indexer consumes these events to maintain a cluster-wide view of block locations. This enables cross-replica cache reuse even after eviction from the original worker.
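The event-driven index can be pictured with a small Python sketch. It is a simplified model, not KVBM's event schema or the router's indexer: `BlockEvent`, `BlockIndex`, and the tier names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

TIERS = ("gpu", "cpu", "ssd", "remote")  # ordered fastest to slowest


@dataclass(frozen=True)
class BlockEvent:
    """Hypothetical event: a block now lives in `tier` on `worker` (None means dropped)."""
    block_hash: int
    worker: str
    tier: Optional[str]


class BlockIndex:
    """Cluster-wide view of where each KV block currently lives."""

    def __init__(self) -> None:
        self._locations: Dict[int, Dict[str, str]] = {}

    def apply(self, event: BlockEvent) -> None:
        """Update the index from one tier-movement event."""
        if event.tier is not None and event.tier not in TIERS:
            raise ValueError(f"unknown tier: {event.tier}")
        workers = self._locations.setdefault(event.block_hash, {})
        if event.tier is None:
            workers.pop(event.worker, None)
            if not workers:
                del self._locations[event.block_hash]
        else:
            workers[event.worker] = event.tier

    def fastest_copy(self, block_hash: int) -> Optional[Tuple[str, str]]:
        """Return (worker, tier) for the copy in the fastest tier, or None if unknown."""
        workers = self._locations.get(block_hash)
        if not workers:
            return None
        worker = min(workers, key=lambda w: TIERS.index(workers[w]))
        return worker, workers[worker]


index = BlockIndex()
index.apply(BlockEvent(block_hash=1, worker="A", tier="gpu"))
index.apply(BlockEvent(block_hash=1, worker="B", tier="cpu"))
index.apply(BlockEvent(block_hash=1, worker="A", tier="ssd"))  # A offloads the block
print(index.fastest_copy(1))  # ('B', 'cpu')
```

After Worker A offloads the block to SSD, the index points at Worker B's CPU copy instead, which is the cross-replica reuse described above.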

Cloudian, DDN, Dell, Pure Storage, HPE, IBM, NetApp, VAST, and WEKA have all integrated KVBM into their storage solutions for AI factories.

Planner and AIConfigurator

The Planner is Dynamo's SLA-driven autoscaler. It continuously monitors:

- Request rates and sequence length distributions

- GPU capacity and queue wait times

- TTFT/ITL percentiles against SLA targets

- Cost signals (GPU type, power, TCO)

Based on these signals, the Planner decides whether to serve requests in aggregated mode (prefill+decode together) or disaggregated mode, and adjusts replica counts for each pool.
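The percentile check against SLA targets can be sketched as follows. This is a minimal illustration using the nearest-rank percentile method, not the Planner's implementation; the function names are hypothetical, and the 200 ms TTFT and 20 ms ITL defaults are example targets.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def sla_breached(
    ttft_ms: Sequence[float],
    itl_ms: Sequence[float],
    ttft_target_ms: float = 200.0,
    itl_target_ms: float = 20.0,
    pct: float = 99.0,
) -> bool:
    """True if either latency percentile exceeds its target."""
    return percentile(ttft_ms, pct) > ttft_target_ms or percentile(itl_ms, pct) > itl_target_ms


itl = [10.0] * 100
print(sla_breached([150.0] * 99 + [500.0], itl))      # False
print(sla_breached([150.0] * 98 + [500.0] * 2, itl))  # True
```

With 100 samples, one slow request sits above the p99 rank and does not count as a breach, while two slow requests push p99 over the target. A scaling decision would then be triggered only in the second case.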

AIConfigurator complements the Planner by simulating 10K+ deployment configurations in seconds. Instead of burning GPU-hours profiling every topology, you run AIConfigurator first to narrow the search space, then let the Planner fine-tune on-cluster.

Alibaba reported 80% fewer SLA breaches with Planner autoscaling at 5% lower TCO (APSARA 2025).

ModelExpress: Weight Streaming

Large MoE models like DeepSeek-V3 take minutes to load per replica. In a cluster that scales aggressively, cold starts compound — every new replica downloads the same weights from storage, loads them into GPU memory, compiles kernels, builds CUDA graphs.

ModelExpress changes the flow:

- First replica boots normally: download weights → load to GPU → compile → ready

- Capture the "ready-to-serve" state as a checkpoint

- New replicas restore from checkpoint OR stream weights GPU-to-GPU over NVLink/NIXL

Weight streaming eliminates repeated disk I/O. DeepSeek-V3 on H200 sees 7x faster startup — critical when Karpenter or Cluster Autoscaler is spinning up replicas to meet traffic spikes.

Grove: Topology-Aware Scheduling

NVL72 racks have non-uniform topology: GPUs within a rack communicate over NVLink fabric (high bandwidth, low latency), while cross-rack traffic goes through Ethernet/InfiniBand.

Grove is a Kubernetes operator that understands this hierarchy. It places workloads to minimize cross-rack traffic:

- Prefill and decode on the same NVL72 rack for KV cache transfers

- Frontend services on nearby CPU-only nodes

- Multi-replica deployments spread across racks for fault tolerance

Grove integrates with KAI Scheduler and other AI schedulers to enforce placement constraints. The unified topology API replaces manual compute domain definitions.
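The placement goals above (keep one replica's prefill and decode workers inside a rack, spread replicas across racks, place everything or nothing) can be sketched in a few lines of Python. This is a toy model, not Grove's scheduler or API: `place_replicas` and the rack names are hypothetical, and the 48 GPUs per replica come from the example topology earlier in this article (2 prefill workers at TP 8 plus 8 decode workers at TP 4).

```python
from typing import Dict, List, Optional


def place_replicas(
    free_gpus: Dict[str, int],
    gpus_per_replica: int,
    replicas: int,
) -> Optional[List[str]]:
    """Assign each replica to a single rack, spreading replicas across racks.

    Returns one rack name per replica, or None if any replica cannot be
    placed (gang semantics: all replicas are placed or none are).
    """
    remaining = dict(free_gpus)
    placed = {rack: 0 for rack in free_gpus}
    placement: List[str] = []
    for _ in range(replicas):
        candidates = [rack for rack in remaining if remaining[rack] >= gpus_per_replica]
        if not candidates:
            return None
        # Prefer racks hosting fewer replicas, then racks with more free GPUs, then name order.
        rack = min(candidates, key=lambda r: (placed[r], -remaining[r], r))
        remaining[rack] -= gpus_per_replica
        placed[rack] += 1
        placement.append(rack)
    return placement


racks = {"rack-a": 72, "rack-b": 72}
print(place_replicas(racks, gpus_per_replica=48, replicas=2))  # ['rack-a', 'rack-b']
print(place_replicas(racks, gpus_per_replica=48, replicas=3))  # None
```

The third replica is rejected outright rather than split across the 24 GPUs left on each rack, since splitting would push KV cache transfers onto the slower cross-rack network.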

Deploying Dynamo on Kubernetes

Amazon EKS Deployment

AWS published a production-ready blueprint on the AI-on-EKS repository. The architecture uses:

- Karpenter for GPU autoscaling — provisions g6 instances in <60 seconds when queues fill

- EFA (Elastic Fabric Adapter) for low-latency inter-node communication — required for disaggregated serving

- EFS CSI driver for shared model repository — all pods mount the same PVC

- NVIDIA Dynamo Operator — manages DynamoGraphDeploymentRequest CRDs

Deployment flow:

```bash
# Clone AI-on-EKS repo
git clone https://github.com/awslabs/ai-on-eks.git && cd ai-on-eks

# Deploy infrastructure (VPC, EKS, ECR, monitoring)
cd infra/nvidia-dynamo
./install.sh

# Build base images (vLLM backend example)
# Go up two levels to the repo root, then into the blueprint directory
cd ../../blueprints/inference/nvidia-dynamo
source dynamo_env.sh
./build-base-image.sh vllm --push

# Deploy inference graph (choose architecture: agg, disagg, multinode)
./deploy.sh

# Validate deployment
./test.sh
```

The install.sh script provisions the complete stack in 15-30 minutes: VPC with public/private subnets, EKS cluster with GPU node groups, ECR repositories, Prometheus+Grafana monitoring, and Dynamo platform components (Operator, API Store, NATS, PostgreSQL, MinIO).

Critical EKS considerations:

- EFA requires specific instance types (g6e, p5, p6) and VPC configuration — enable EFA during cluster creation

- IRSA (IAM Roles for Service Accounts) must be configured for KVBM S3 offload

- EFS throughput modes matter — provisioned throughput recommended for large model repositories

Google GKE Integration

Google Cloud announced Dynamo integration with GKE Inference Gateway at GTC 2026. Key differentiators:

- Fractional G4 VMs — 1/2, 1/4, 1/8 GPU slices using NVIDIA vGPU technology, managed via GKE binpacking

- A4X VMs with GB200 NVL72 — Rack-scale systems with NVLink fabric, Dynamic Workload Scheduler for reservation

- Inference Gateway plugin — KV-aware routing inside the standard Kubernetes gateway API

Fractional GPU slicing is unique to GKE. We've had success with 1/4 GPU slices for models under 7B parameters — anything larger needs dedicated GPU. Set resource requests at 80% of slice capacity to avoid OOM. GKE's binpacking can leave GPU slices stranded without careful tuning; use pod topology spread constraints to pack workloads efficiently.
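The 80% rule is simple arithmetic, sketched below. The numbers are assumptions for illustration only: 96 GB of GPU memory per full GPU (check the actual figure for your VM type) and FP16 weights at 2 bytes per parameter. The helper names are hypothetical, and the check covers weights only, so KV cache and activations must fit in whatever budget remains.

```python
def slice_budget_gb(gpu_memory_gb: float, slice_fraction: float, headroom: float = 0.8) -> float:
    """Memory to request for one slice: slice capacity scaled by the headroom factor."""
    return gpu_memory_gb * slice_fraction * headroom


def weights_gb(params_billions: float, bytes_per_param: float = 2.0) -> float:
    """Approximate weight footprint in GB (1e9 params times bytes per param, over 1e9)."""
    return params_billions * bytes_per_param


def weights_fit(params_billions: float, gpu_memory_gb: float, slice_fraction: float) -> bool:
    """True if the weights alone fit inside the 80% budget of the slice."""
    return weights_gb(params_billions) <= slice_budget_gb(gpu_memory_gb, slice_fraction)


ASSUMED_GPU_MEMORY_GB = 96.0  # assumption, not a verified spec for any VM type

budget = slice_budget_gb(ASSUMED_GPU_MEMORY_GB, slice_fraction=0.25)
print(f"1/4 slice budget: {budget:.1f} GB")              # 1/4 slice budget: 19.2 GB
print(weights_fit(7, ASSUMED_GPU_MEMORY_GB, 0.25))       # True
print(weights_fit(13, ASSUMED_GPU_MEMORY_GB, 0.25))      # False
```

Under these assumptions a 7B model leaves roughly 5 GB of the budget for KV cache, which is consistent with treating 7B as the practical ceiling for a 1/4 slice.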

Dynamic Workload Scheduler supports Calendar Mode and Flex Start for A4X/A4X Max reservations. G4 VMs also support Flex Start for cost optimization.

Google published scaling recipes for MoE models on A4X VMs with Dynamo — overcoming memory and interconnect bottlenecks on GB200 NVL72.

Zero-Config Deployment

Dynamo 1.0 introduced the DynamoGraphDeploymentRequest (DGDR) CRD — specify model, hardware, and SLA in one YAML, Dynamo handles the rest:

```yaml
apiVersion: nvidia.com/v1beta1
kind: DynamoGraphDeploymentRequest
metadata:
  name: deepseek-r1
spec:
  model: deepseek-ai/DeepSeek-R1-Distill-Llama-8B
  backend: vllm
  sla:
    ttft: 200.0  # ms, time to first token
    itl: 20.0    # ms, inter-token latency
    p99: true    # enforce at p99 percentile
  compute:
    minReplicas: 2
    maxReplicas: 20
    gpuType: NVIDIA GB200
  kvCache:
    offloadEnabled: true
    storageClass: s3
    maxGpuMemoryPercent: 80
  autoApply: true
```

Behind the scenes:

- AIConfigurator runs simulation-based recommendations

- Planner profiles the workload on-cluster

- Dynamo generates and applies the optimal deployment graph

No hand-tuning tensor parallelism, replica counts, or KV cache sizes. The system finds the configuration that meets your SLA at minimum TCO.

Pre-built recipes exist for common models: Llama-3-70B (vLLM, aggregated), DeepSeek-R1 (SGLang, disaggregated), Qwen3-32B-FP8 (TensorRT-LLM, aggregated).

Performance Benchmarks

Production benchmarks from Dynamo adopters:

| Metric | Result | Workload | Source |
|---|---|---|---|
| Throughput per GPU | 7x higher | DeepSeek R1, GB200 NVL72 | SemiAnalysis InferenceX |
| Time to first token | 2x faster | Qwen3-Coder 480B, KV routing | Baseten production |
| Model startup | 7x faster | DeepSeek-V3, weight streaming | NVIDIA internal |
| SLA breaches | 80% reduction | Multi-tenant inference | Alibaba APSARA 2025 |
| Throughput (peak) | 750x higher vs. single-GPU | DeepSeek-R1, GB300 NVL72 (72 GPUs) | InferenceXv2 |

Context matters: these benchmarks assume disaggregated serving with KV-aware routing on NVL72 racks. Single-node aggregated deployments see more modest gains (1.5-2x) from Planner autoscaling and KV cache management alone.

The 750x throughput number on GB300 NVL72 reflects rack-scale parallelism — 72 GPUs coordinated as a single inference engine with NVLink fabric. That's not comparable to single-GPU benchmarks.

Production Gotchas

EFA/libfabric version mismatches on EKS — Inter-node KV transfers fail silently if libfabric versions don't match across nodes. Verify with fi_info -p efa before enabling disaggregated mode.

KVBM S3 offload requires IRSA — IAM Roles for Service Accounts must be configured with S3 permissions. Without IRSA, KVBM falls back to local SSD only, losing the archive tier.

Planner profiling burns GPU-hours — Letting Planner profile every topology from scratch costs real money. Run AIConfigurator simulation first to narrow the search space, then let Planner fine-tune.

Fractional G4 VMs need binpacking tuned — GKE's default scheduler can leave GPU slices stranded. Set resource requests/limits carefully and use pod topology spread constraints.

Disaggregated mode adds network hops — Prefill and decode on separate workers means KV cache transfer latency. Measure TTFT trade-offs on your workload before enabling — short-prompt workloads may not benefit.

Service discovery choice matters — K8s-native discovery works for single-cluster deployments. Multi-cluster or hybrid cloud needs etcd-backed discovery for cross-cluster routing.

Failure Modes

Dynamo adds complexity — more moving parts means more failure domains. The KV router is a potential bottleneck; if it goes down, routing decisions stall. Dynamo mitigates this with canary health checks and request migration, but you should run the router with high availability (3+ replicas, pod disruption budgets).

Etcd-backed discovery is another failure domain for multi-cluster deployments. If etcd goes down, new workers can't register and the router loses its cluster-wide KV cache view. For single-cluster deployments, use K8s-native discovery to avoid this dependency.

In our testing, the most common failure mode is EFA misconfiguration on EKS — inter-node KV transfers fail silently, and the Planner keeps scheduling to workers that can't communicate. The symptom is sudden throughput collapse when scaling beyond single-node. The fix: validate EFA with fi_info -p efa and run a multi-node NCCL test before enabling disaggregated mode.

Rollback strategy: keep your previous inference engine deployment running in parallel. Dynamo is compatible with vLLM, SGLang, and TensorRT-LLM standalone — you can route traffic back to the non-Dynamo deployment if needed.

Wrap-up

NVIDIA Dynamo 1.0 solves a specific problem: coordinating multi-node LLM inference with shared state. It's not for everyone: single-GPU serving doesn't need an orchestration layer. But if you're running multi-turn agentic workloads or long-context RAG on Kubernetes, Dynamo's KV-aware routing and disaggregated serving deliver real production gains.

Start with aggregated mode and profile your workload. If prefill/decode imbalance is real (long prompts, bursty traffic), enable disaggregation. Use the zero-config DGDR for your first deployment — hand-tune only after measuring SLA gaps.

When you hit the limits of single-node serving (KV cache contention, prefill/decode imbalance, cold start latency), that's when Dynamo becomes relevant. AWS, Google Cloud, and Azure all have production integrations, and storage vendors from Cloudian to WEKA have integrated KVBM. The adoption signal is clear, and the decision rule is simple: if you're not running multi-node yet, start with vLLM or TensorRT-LLM standalone. Add Dynamo when the operational pain forces the conversation.
