Armada: Multi-Cluster GPU Scheduling as a Single Resource Pool

When a 100-GPU training job saturates your cluster, inference queues up and data processing stalls. A single Kubernetes cluster hits hard limits: roughly 5,000 to 15,000 nodes, etcd bottlenecks, and a kube-scheduler that was not built for batch. For GPU-intensive workloads at that scale, multi-cluster scheduling stops being optional.

Armada (CNCF Sandbox) treats multiple Kubernetes clusters as a single resource pool. Built on event-sourcing with Apache Pulsar, it handles millions of queued jobs across tens of thousands of nodes. G-Research runs it in production processing millions of jobs daily.

This article walks through Armada's event-sourcing architecture, queue setup, job submission with armadactl, and how it compares to MultiKueue for multi-cluster scheduling.

Why Multi-Cluster Scheduling for AI Workloads

The GPU economy creates competing demands: training saturates capacity, inference waits, data processing stalls.

Single cluster limits are real. Tuned clusters max out around 5,000-15,000 nodes. Etcd becomes the bottleneck. The default kube-scheduler places pods one at a time and lacks batch features such as queue-level fair sharing and gang scheduling; it does support priority-based preemption, but not preemption driven by fair share across queues.

Armada sidesteps these limits with out-of-cluster scheduling. Apache Pulsar serves as a durable event log and PostgreSQL holds the materialized views. This design handles millions of queued jobs across many clusters.

G-Research deployed Armada to manage tens of thousands of nodes across multiple clusters, processing millions of jobs per day.

Armada Architecture and Core Components

Armada separates concerns:

- Worker clusters: Kubernetes clusters running workloads

- Control plane: Submit API, Scheduler

- Executors: One per worker cluster

Event-sourcing is key. Apache Pulsar is the source of truth — all state transitions flow through it. Each component maintains its own PostgreSQL view, replaying from the log as needed.
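The replay idea can be illustrated with a minimal sketch. It assumes an in-memory list standing in for the Pulsar topic and a dictionary standing in for a PostgreSQL view; `JobEvent` and `JobStateView` are hypothetical names for illustration, not Armada's schema or API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class JobEvent:
    """One state transition in the log (hypothetical shape, not Armada's schema)."""
    job_id: str
    state: str


@dataclass
class JobStateView:
    """A materialized view: the latest known state per job."""
    states: dict[str, str] = field(default_factory=dict)
    applied: int = 0  # number of log entries this view has consumed

    def catch_up(self, log: list[JobEvent]) -> None:
        # Apply only the events this view has not seen yet.
        for event in log[self.applied:]:
            self.states[event.job_id] = event.state
            self.applied += 1


# The list stands in for the durable event log.
log = [
    JobEvent("job-1", "queued"),
    JobEvent("job-2", "queued"),
    JobEvent("job-1", "running"),
]

scheduler_view = JobStateView()
scheduler_view.catch_up(log)

log.append(JobEvent("job-1", "completed"))

# A view built later replays the whole log from the start, while the
# existing view only consumes the new entry. Both converge on the same state.
lookout_view = JobStateView()
lookout_view.catch_up(log)
scheduler_view.catch_up(log)
```

Because every view is derived from the same ordered log, a component that falls behind or loses its database can rebuild its state by replaying, which is the property the architecture relies on.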

# armada-crs.yaml (line 1-90): ArmadaServer, Scheduler, and Executor Custom Resources (trimmed)
apiVersion: install.armadaproject.io/v1alpha1
kind: ArmadaServer
metadata:
  name: armada-server
  namespace: armada
spec:
  replicas: 1
  image:
    repository: gresearch/armada-server
    tag: latest
  applicationConfig:
    httpNodePort: 30001
    grpcNodePort: 30002
    schedulerApiConnection:
      armadaUrl: "armada-scheduler.armada.svc.cluster.local:50051"
      forceNoTls: true
    pulsar:
      URL: pulsar://pulsar-broker.data.svc.cluster.local:6650
  # ... pulsarInit, ingress, corsAllowedOrigins, auth, eventsApiRedis, postgres, queryapi, redis omitted
---
apiVersion: install.armadaproject.io/v1alpha1
kind: Scheduler
metadata:
  name: armada-scheduler
  namespace: armada
spec:
  replicas: 1
  image:
    repository: gresearch/armada-scheduler
    tag: latest
  applicationConfig:
    grpc:
      port: 50051
    pulsar:
      URL: pulsar://pulsar-broker.data.svc.cluster.local:6650
  # ... ingress, auth, armadaApi, postgres omitted
---
apiVersion: install.armadaproject.io/v1alpha1
kind: Executor
metadata:
  name: armada-executor
  namespace: armada
spec:
  image:
    repository: gresearch/armada-executor
    tag: latest
  applicationConfig:
    executorApiConnection:
      armadaUrl: armada-scheduler.armada.svc.cluster.local:50051
      forceNoTls: true
  # ... metric port, storage, pulsar omitted

ArmadaServer handles submissions, Scheduler makes placement decisions, Executor creates Kubernetes resources. Each reads from Pulsar and maintains its own PostgreSQL view.

Job flow:

- Submission: Client → Submit API validates → publishes to Pulsar

- Queued: Scheduler reads, stores with fair-share accounting

- Scheduled: Scheduler assigns to cluster/node → publishes to log

- Leased: Executor reads from database view

- Pending: Executor creates Kubernetes Pods

- Running: Kubernetes starts containers

- Completed: Executor reports → Scheduler publishes → Lookout UI updates

Lookout UI provides a dashboard. Eventual consistency means job state updates appear within seconds.
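The job flow above can be read as a small state machine. The sketch below encodes only the happy path listed in this article; the state names are lowercased labels from that list, and failure, cancellation, and preemption paths are deliberately left out. It is an illustration, not Armada's internal state model.

```python
# Allowed transitions, taken from the job flow listed in this article.
# Failure, cancellation, and preemption paths are deliberately omitted.
TRANSITIONS = {
    "submitted": {"queued"},
    "queued": {"scheduled"},
    "scheduled": {"leased"},
    "leased": {"pending"},
    "pending": {"running"},
    "running": {"completed"},
    "completed": set(),
}


def advance(current: str, target: str) -> str:
    """Return the new state, or raise if the flow does not allow the move."""
    if target not in TRANSITIONS[current]:
        raise ValueError(f"illegal transition: {current} -> {target}")
    return target


def run_happy_path() -> list[str]:
    """Walk from submission to completion and record every state visited."""
    state = "submitted"
    visited = [state]
    while TRANSITIONS[state]:
        (next_state,) = TRANSITIONS[state]  # exactly one successor per state here
        state = advance(state, next_state)
        visited.append(state)
    return visited
```

Calling `advance("queued", "running")` raises `ValueError`, which mirrors the point that a job cannot run before it has been scheduled and leased to an executor.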

Setting Up Queues and Submitting Jobs

Armada organizes jobs into queues for fair scheduling. Create a queue before submitting jobs.

# Create queue
armadactl create queue example

# Submit job
armadactl submit dev/quickstart/example-job.yaml

# Watch progress
armadactl watch example job-set-1

The job spec uses standard Kubernetes podSpec:

# example-job.yaml (line 1-24): Basic Armada job submission
queue: example
jobSetId: job-set-1
jobs:
  - namespace: default
    priority: 0
    podSpec:
      priorityClassName: armada-default
      terminationGracePeriodSeconds: 0
      restartPolicy: Never
      containers:
        - name: sleeper
          image: alpine:latest
          command:
            - sh
          args:
            - -c
            - sleep $(( (RANDOM % 60) + 10 ))
          resources:
            limits:
              memory: 128Mi
              cpu: 2
            requests:
              memory: 128Mi
              cpu: 2

A PriorityClass is required. The job spec references armada-default, so that class must exist in the worker cluster:

# priority-class.yaml (line 1-7): Required PriorityClass for Armada jobs
apiVersion: scheduling.k8s.io/v1
kind: PriorityClass
metadata:
  name: armada-default
value: 1000
globalDefault: false
description: "This priority class should be used as a default for Armada jobs."

Apply this before submitting jobs, or they'll be rejected.

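Gang scheduling is critical for distributed training. Jobs that belong to the same gang schedule atomically: all start together or none do. A job set on its own is only a grouping for tracking and watching jobs; Armada's documentation describes marking gang members with annotations such as armadaproject.io/gangId and armadaproject.io/gangCardinality. Atomic placement prevents GPUs from sitting idle while they wait for stragglers.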
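The all-or-nothing rule can be sketched as a tentative placement that is committed only if every member fits. This is a simplified illustration that assumes GPUs are the only resource and uses a first-fit heuristic; `place_gang` and the node names are hypothetical, and Armada's real scheduler is considerably more involved.

```python
from typing import Optional


def place_gang(
    requests: list[int], free_gpus: dict[str, int]
) -> Optional[list[tuple[int, str]]]:
    """Place every gang member or none of them.

    requests  -- GPUs needed by each gang member
    free_gpus -- free GPUs per node; updated only if the whole gang fits
    Returns (gpus, node) pairs, or None when any member cannot be placed.
    """
    remaining = dict(free_gpus)  # tentative copy, so a failed attempt leaves no trace
    placement = []
    # Largest members first: a simple first-fit-decreasing heuristic.
    for gpus in sorted(requests, reverse=True):
        node = next((n for n, free in remaining.items() if free >= gpus), None)
        if node is None:
            return None  # one member does not fit, so nothing is placed
        remaining[node] -= gpus
        placement.append((gpus, node))
    free_gpus.update(remaining)  # commit only after every member has a node
    return placement


nodes = {"node-a": 8, "node-b": 4}
too_big = place_gang([8, 8], nodes)  # second member cannot fit anywhere
fits = place_gang([4, 8], nodes)     # both members fit, so capacity is consumed
```

In the first call no capacity is consumed even though one member would have fit, which is exactly what keeps a partial gang from holding GPUs while the rest waits.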

Fair Scheduling and Resource Management

Armada implements Dominant Resource Fairness across queues. Each queue gets a fair share based on priority factor and historical usage.
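To make the term concrete: under Dominant Resource Fairness, a queue's share is measured by the resource it uses the largest fraction of. The sketch below computes that dominant share and picks the queue that is furthest behind. The capacities, queue names, and usage figures are made-up illustration values, and the sketch ignores priority factors and historical usage.

```python
def dominant_share(
    usage: dict[str, float], capacity: dict[str, float]
) -> tuple[str, float]:
    """Return the resource a queue uses the largest fraction of, and that fraction."""
    shares = {name: usage.get(name, 0.0) / capacity[name] for name in capacity}
    resource = max(shares, key=shares.get)
    return resource, shares[resource]


def pick_next(queues: dict[str, dict[str, float]], capacity: dict[str, float]) -> str:
    """DRF serves the queue with the smallest dominant share next."""
    return min(queues, key=lambda q: dominant_share(queues[q], capacity)[1])


# Hypothetical pool and per-queue usage.
capacity = {"gpu": 100, "cpu": 1600, "memory_gib": 6400}
training = {"gpu": 40, "cpu": 160, "memory_gib": 640}   # GPU-heavy
etl = {"gpu": 0, "cpu": 800, "memory_gib": 1600}        # CPU-heavy

queues = {"training": training, "etl": etl}
```

Here the training queue's dominant resource is GPU at 40% and the ETL queue's is CPU at 50%, so `pick_next` favors the training queue even though it holds all the GPUs in use.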

Fair Scheduling in Practice

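Two teams share a 100-GPU cluster. Team A's queue has priority factor 1.0 and Team B's has 2.0. Armada weights a queue by the inverse of its priority factor, so a lower factor earns a larger share and Team A's weight is twice Team B's. When both submit jobs simultaneously: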

- Team A receives ~67 GPUs (2/3 of capacity)

- Team B receives ~33 GPUs (1/3 of capacity)

If Team A's queue goes idle, Team B can burst to 100%. When Team A submits again, preemption reclaims the fair share within seconds.
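The arithmetic behind this example is a weighted split among the queues that currently have work. The sketch below takes the weights as given inputs (a 2:1 ratio between the two teams); how Armada derives a queue's weight from its priority factor and usage is not modeled, and `fair_shares` is a hypothetical helper.

```python
def fair_shares(
    capacity: float, weights: dict[str, float], active: set[str]
) -> dict[str, float]:
    """Split capacity among active queues in proportion to their weights.

    Idle queues get nothing, so their share flows to the active ones.
    """
    total = sum(weights[q] for q in active)
    return {
        q: (capacity * weights[q] / total if q in active else 0.0)
        for q in weights
    }


weights = {"team-a": 2.0, "team-b": 1.0}  # hypothetical 2:1 weighting

both_busy = fair_shares(100, weights, {"team-a", "team-b"})
a_idle = fair_shares(100, weights, {"team-b"})
```

With both teams active the split rounds to 67 and 33 GPUs. With Team A idle, Team B's target becomes the full 100, which is the burst behavior described above.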

Preemption lets urgent jobs start promptly. When a high-priority job arrives, Armada can preempt lower-priority jobs, balancing fairness with urgency.

Analytics integrate via Prometheus — metrics on resource allocation, queue depth, job latency. Automatic node failure detection removes failed nodes and reschedules jobs.

Production Deployment: TLS and Ingress

The quickstart uses NodePort for simplicity, but production deployments need TLS and ingress:

# armada-server-prod.yaml (excerpt): TLS configuration for ArmadaServer
spec:
  tls:
    enabled: true
    certSecret: armada-server-tls
  ingress:
    enabled: true
    host: armada.example.com
    className: nginx
    annotations:
      cert-manager.io/cluster-issuer: letsencrypt-prod

The TLS secret contains the certificate and key for armada.example.com. Use cert-manager to automate renewal.

Armada vs. MultiKueue — Choosing the Right Tool

MultiKueue (Kubernetes SIGs) also enables multi-cluster scheduling, but with different goals.

Armada is a purpose-built batch meta-scheduler: event-sourcing with Pulsar, millions of queued jobs, fair queuing and gang scheduling built-in, production at G-Research scale (CNCF Sandbox).

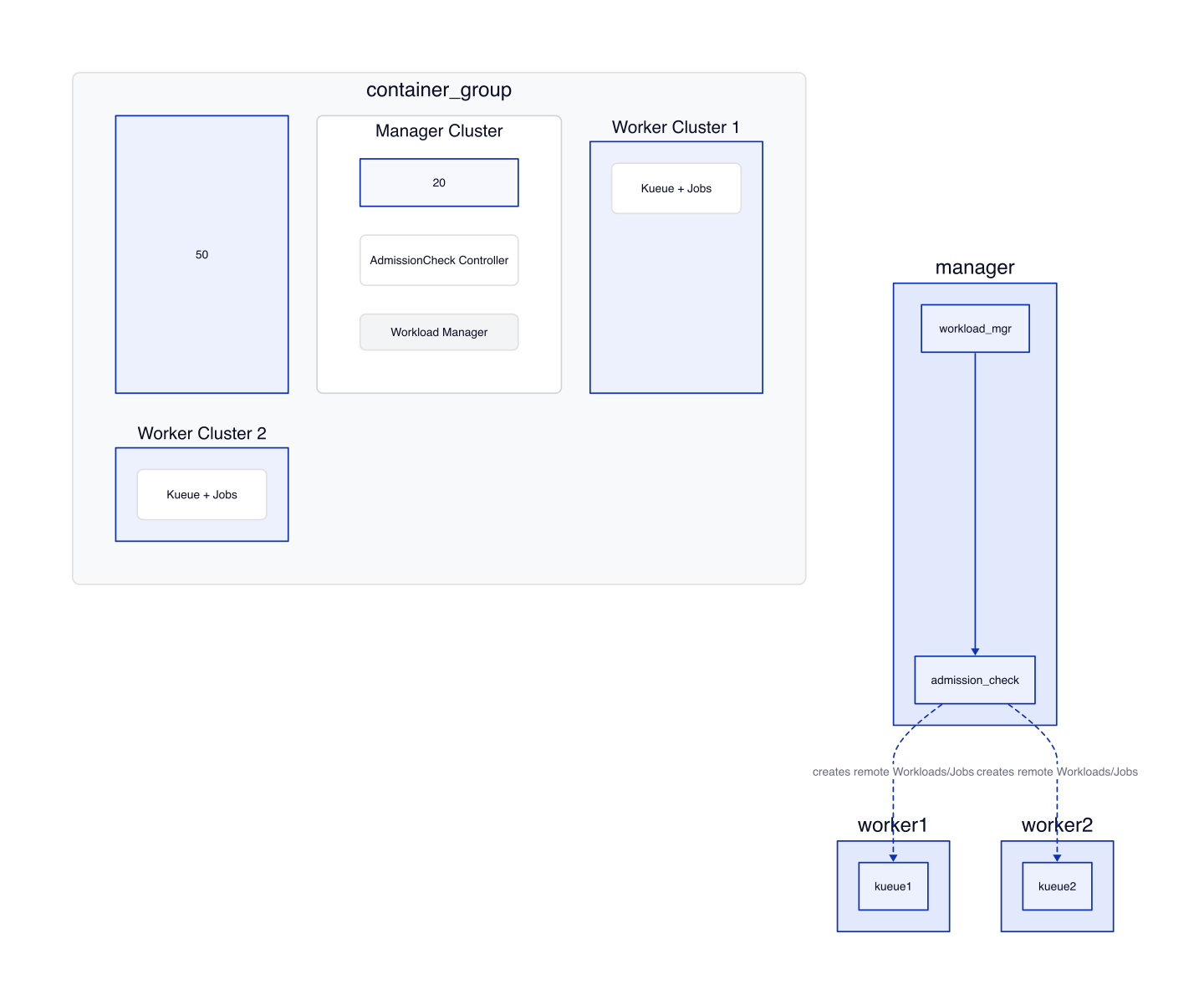

MultiKueue is a multi-cluster dispatcher for Kueue users: AdmissionCheck controller pattern, manager cluster maintains connections to workers, dispatches Workloads (AllAtOnce, Incremental, External), best for existing Kueue users (Kubernetes SIGs project).

Figure 1: MultiKueue manager cluster maintains connections to worker clusters and creates remote Workloads/Jobs via the AdmissionCheck controller.

Key differences:

| Aspect | Armada | MultiKueue |
|---|---|---|
| Purpose | Batch meta-scheduler for massive scale | Multi-cluster dispatch for Kueue users |
| Architecture | Event-sourcing (Pulsar) | AdmissionCheck controller |
| Scale | Millions of jobs, many clusters | Kueue-scale, manager-to-workers |
| Best for | HPC, AI training at scale | Kueue users needing multi-cluster |

Warning (MultiKueue limitation): The manager cluster cannot serve as a worker for itself. Role sharing isn't supported, so you need dedicated worker clusters.

Gotchas

PriorityClass required. Jobs rejected without default PriorityClass. Apply armada-default first.

Queue creation first. Create queue with armadactl create queue <name> before submitting. Submit API validates existence.

Eventual consistency delays. Materialized views update independently from Pulsar. Job state transitions appear in Lookout UI within seconds. Use armadactl watch for real-time updates.

Executor-cluster binding. One executor per worker cluster. Can't manage multiple clusters with one executor.

MultiKueue manager limitation. Manager cluster cannot serve as worker for itself.

NodePort quickstart. Quickstart uses NodePort (Lookout :30000, REST :30001, gRPC :30002, matching httpNodePort and grpcNodePort in the ArmadaServer config). Fine for Kind; production needs ingress and TLS.

Wrap-up

Single clusters hit limits at 5,000-15,000 nodes. Armada keeps queuing and scheduling decisions outside any one cluster's etcd and kube-scheduler: event-sourcing with Pulsar handles millions of jobs across tens of thousands of nodes, while executors create the pods in each worker cluster. For AI platforms running GPU-intensive batch workloads, it treats many clusters as one.

Fair queuing, gang scheduling, and preemption are built-in, not bolted on. G-Research runs it at scale: millions of jobs per day across tens of thousands of nodes.

If you're already using Kueue, MultiKueue handles simpler multi-cluster dispatch. If you need massive throughput or purpose-built batch features, Armada is the mature choice. Both projects are active — Armada in CNCF Sandbox, MultiKueue as a Kubernetes SIGs project.
