Workload-Aware Scheduling in Kubernetes 1.36: The Decoupled PodGroup Model

Introduction

Kubernetes 1.35 shipped gang scheduling, and it worked: create a Workload, reference it from your Pods via workloadRef, and the scheduler enforces all-or-nothing placement through barriers at PreEnqueue and Permit. Correctness, solved. Performance, not so much. Every Pod still entered the standard scheduling queue individually. For a 64-worker training job, that meant 64 separate scheduling cycles, 64 node evaluations, and 64 Permit checks before the group could bind.

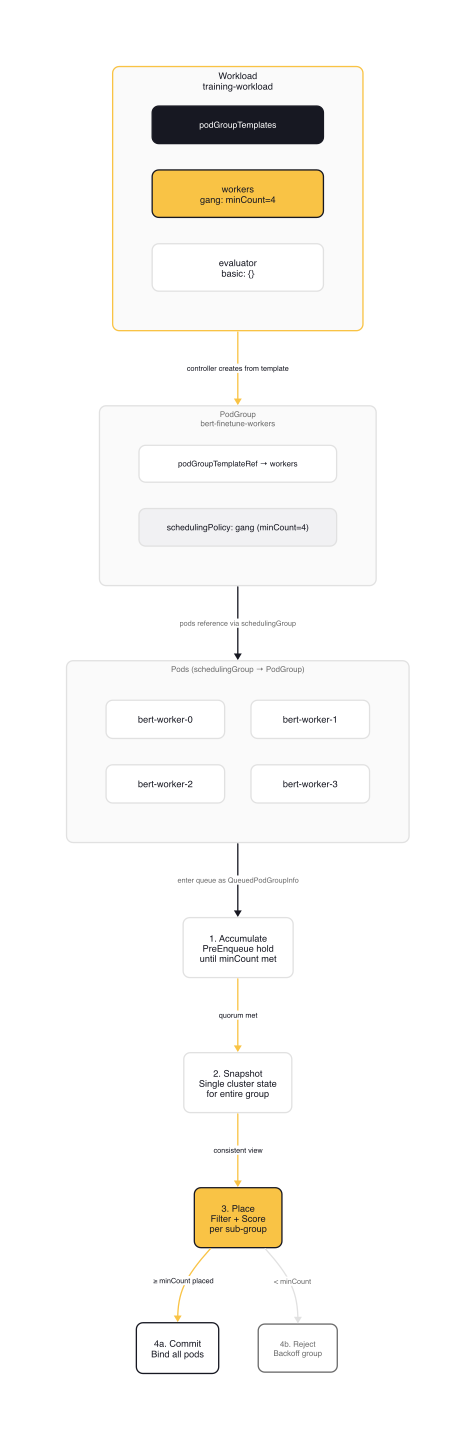

v1.36 rewrites the foundation. The Workload API moves to v1alpha2 with a decoupled two-object model: static Workload templates define scheduling policies, standalone PodGroup objects represent runtime instances, and a new schedulingGroup field on Pods replaces the old workloadRef. The scheduler gains a dedicated Workload Scheduling Cycle that processes an entire PodGroup in a single batch. Combined with opportunistic batching for identical pods, this turns gang scheduling from a correctness feature into a performance feature.

From v1alpha1 to v1alpha2

The v1alpha2 API makes three structural changes from v1alpha1.

workloadRef becomes schedulingGroup. In v1alpha1, Pods linked to their Workload directly via spec.workloadRef.name and spec.workloadRef.podGroup. This coupled the Pod to the static Workload definition. In v1alpha2, Pods reference a runtime PodGroup object through spec.schedulingGroup.podGroupName. The Pod no longer needs to know about the Workload at all.

podGroups becomes podGroupTemplates. The Workload's field changed from podGroups to podGroupTemplates to clarify its role: these are templates, not runtime instances. A Workload defines the shape and policy. Controllers instantiate actual PodGroup objects from these templates.

PodGroup becomes a standalone resource. In v1alpha1, pod groups existed only as inline definitions within the Workload. In v1alpha2, PodGroup is a separate API object in scheduling.k8s.io/v1alpha2. Workload controllers (Job, JobSet, Kubeflow) create PodGroup objects from Workload templates, each representing one runtime instance. This decoupling lets the scheduler track PodGroup lifecycle independently from the Workload definition.

note: The WorkloadReference field in PodSpec is tombstoned in v1alpha2. Existing v1alpha1 Pods referencing workloadRef will need migration when upgrading.

Workload and PodGroup APIs

Both resources live in the scheduling.k8s.io/v1alpha2 API group. The GenericWorkload feature gate must be enabled on both kube-apiserver and kube-scheduler.

Workload

You define one or more pod group templates in a Workload, each with its own scheduling policy. The controllerRef field optionally links back to your workload controller (a Job, TrainingJob, or custom resource) for tooling integration.

```yaml
# workload.yaml
apiVersion: scheduling.k8s.io/v1alpha2
kind: Workload
metadata:
  name: training-workload
  namespace: ml-jobs
spec:
  controllerRef:
    apiGroup: batch.kubeflow.org
    kind: TrainingJob
    name: bert-finetune
  podGroupTemplates:
    - name: workers
      schedulingPolicy:
        gang:
          minCount: 4
    - name: evaluator
      schedulingPolicy:
        basic: {}
```

The podGroupTemplates field is immutable and limited to 8 entries per Workload (WorkloadMaxPodGroups = 8). Each template has a unique name and a schedulingPolicy that selects either gang or basic.

Gang policy enforces all-or-nothing placement. The scheduler will not bind any pods in the group unless at least minCount can be placed simultaneously.

Basic policy uses standard pod-by-pod scheduling. Pods in a basic group are scheduled independently, without group constraints.
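The constraints described above (at most 8 templates, unique names, exactly one of gang or basic) can be sketched as a small validation routine. This is an illustration only: the struct types are simplified, hypothetical mirrors of the YAML fields rather than the real API types, and the rule that minCount must be at least 1 is an assumption.

```go
package main

import (
	"errors"
	"fmt"
)

// Hypothetical, simplified mirrors of the Workload fields shown in the YAML.
// These are NOT the real Kubernetes API types.
type GangPolicy struct{ MinCount int }
type BasicPolicy struct{}

type SchedulingPolicy struct {
	Gang  *GangPolicy
	Basic *BasicPolicy
}

type PodGroupTemplate struct {
	Name   string
	Policy SchedulingPolicy
}

// maxPodGroupTemplates mirrors the WorkloadMaxPodGroups = 8 limit quoted in the text.
const maxPodGroupTemplates = 8

// validateTemplates checks the count limit, name uniqueness, and that each
// template selects exactly one policy.
func validateTemplates(templates []PodGroupTemplate) error {
	if len(templates) == 0 {
		return errors.New("at least one pod group template is required")
	}
	if len(templates) > maxPodGroupTemplates {
		return fmt.Errorf("got %d templates, limit is %d", len(templates), maxPodGroupTemplates)
	}
	seen := map[string]bool{}
	for _, t := range templates {
		if seen[t.Name] {
			return fmt.Errorf("duplicate template name %q", t.Name)
		}
		seen[t.Name] = true
		hasGang, hasBasic := t.Policy.Gang != nil, t.Policy.Basic != nil
		if hasGang == hasBasic {
			return fmt.Errorf("template %q must set exactly one of gang or basic", t.Name)
		}
		// Assumption: a gang needs a positive minCount.
		if hasGang && t.Policy.Gang.MinCount < 1 {
			return fmt.Errorf("template %q: minCount must be at least 1", t.Name)
		}
	}
	return nil
}

func main() {
	templates := []PodGroupTemplate{
		{Name: "workers", Policy: SchedulingPolicy{Gang: &GangPolicy{MinCount: 4}}},
		{Name: "evaluator", Policy: SchedulingPolicy{Basic: &BasicPolicy{}}},
	}
	fmt.Println("validation error:", validateTemplates(templates))
}
```

In a real cluster the API server performs this validation; the sketch is only meant to make the rules concrete.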

PodGroup

A PodGroup represents a runtime instance that your workload controller creates from a Workload template. It references the template via podGroupTemplateRef and carries a copy of the scheduling policy.

```yaml
# podgroup.yaml
apiVersion: scheduling.k8s.io/v1alpha2
kind: PodGroup
metadata:
  name: bert-finetune-workers
  namespace: ml-jobs
spec:
  podGroupTemplateRef:
    workloadName: training-workload
    podGroupTemplateName: workers
  schedulingPolicy:
    gang:
      minCount: 4
```

The schedulingPolicy is copied from the template at creation time. The PodGroup tracks its own status through Conditions, reporting whether the group has been scheduled, is pending, or was rejected.

Pod SchedulingGroup

Pods reference their PodGroup through the new spec.schedulingGroup field, which replaces workloadRef from v1alpha1.

```yaml
# worker-pod.yaml
apiVersion: v1
kind: Pod
metadata:
  name: bert-worker-0
  namespace: ml-jobs
spec:
  schedulingGroup:
    podGroupName: bert-finetune-workers
  containers:
    - name: trainer
      image: pytorch/pytorch:2.5.0-cuda12.4-cudnn9-runtime
      resources:
        requests:
          nvidia.com/gpu: 1
          memory: 32Gi
          cpu: 8
        limits:
          nvidia.com/gpu: 1
```

The schedulingGroup field is immutable. The referenced PodGroup does not need to exist when you create the Pod. The scheduler holds the Pod in PreEnqueue until the PodGroup appears and enough peers accumulate to meet minCount.
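The hold-until-quorum behavior can be pictured with a toy gate. This is a minimal sketch, not scheduler code: the gate type and its methods are hypothetical, and it assumes readiness depends only on the PodGroup existing and the number of waiting Pods reaching minCount.

```go
package main

import "fmt"

// gate is a hypothetical stand-in for the scheduler's PreEnqueue bookkeeping.
type gate struct {
	minCount map[string]int      // PodGroups that exist, with their gang minCount
	pending  map[string][]string // Pods waiting, keyed by PodGroup name
}

func newGate() *gate {
	return &gate{minCount: map[string]int{}, pending: map[string][]string{}}
}

// ready reports whether the group exists and has enough waiting Pods.
func (g *gate) ready(group string) bool {
	need, exists := g.minCount[group]
	return exists && len(g.pending[group]) >= need
}

// addPod records a waiting Pod and reports whether its group may now be queued.
func (g *gate) addPod(group, pod string) bool {
	g.pending[group] = append(g.pending[group], pod)
	return g.ready(group)
}

// addPodGroup records a newly created PodGroup and reports whether it may now be queued.
func (g *gate) addPodGroup(group string, minCount int) bool {
	g.minCount[group] = minCount
	return g.ready(group)
}

func main() {
	g := newGate()
	// Pods arrive before the PodGroup exists, so each one waits.
	for i := 0; i < 4; i++ {
		pod := fmt.Sprintf("bert-worker-%d", i)
		fmt.Println(pod, "ready:", g.addPod("bert-finetune-workers", pod))
	}
	// Once the PodGroup appears and four Pods are waiting, the group is released.
	fmt.Println("PodGroup created, ready:", g.addPodGroup("bert-finetune-workers", 4))
}
```

The order of arrival does not matter in this model: whichever event completes the quorum releases the group.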

The Workload Scheduling Cycle

This is the biggest change in 1.36. Instead of processing gang pods through the standard pod-by-pod queue with barriers at Permit, the scheduler now has a dedicated scheduling phase for PodGroups.

How It Works

The scheduling queue becomes workload-aware. It can hold both individual Pods and QueuedPodGroupInfo objects. When Pods with a schedulingGroup arrive, the scheduler aggregates them into a QueuedPodGroupInfo instead of queuing them individually.

The cycle proceeds in four steps:

1. Accumulation. Pods arriving with a schedulingGroup reference are held in PreEnqueue. Once the referenced Workload and PodGroup exist and the number of pending Pods meets minCount, the QueuedPodGroupInfo moves to the active queue.

2. Snapshot. When the scheduler pops a PodGroup from the queue, it takes a single cluster state snapshot for the entire group operation. All placement decisions within the cycle use this consistent view.

3. Placement. The scheduler iterates through the group's pods, grouped into homogeneous sub-groups by scheduling signature (from KEP-5598). For each pod, it runs standard filtering and scoring against nodes. If a pod fits, it is temporarily assumed on the selected node. If it doesn't fit, the scheduler attempts preemption. Victims are nominated for removal but not actually preempted yet.

4. Commit or reject. If at least minCount pods found valid placements, all of them proceed directly to binding. If preemption was needed, the PodGroup returns to the queue to wait for preempted pods to vacate. If minCount cannot be met even with preemption, the entire group is rejected and enters backoff.

warning: The single-cycle approach means placement decisions are order-dependent. The scheduler processes higher-priority sub-groups first. For homogeneous groups this is predictable. For heterogeneous groups, the processing order may prevent finding a valid placement that exists in theory.
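The snapshot, placement, and commit-or-reject steps can be illustrated with a deliberately simplified model. The sketch below is not the scheduler's algorithm: it uses first-fit on a single GPU dimension in place of real filtering and scoring, omits preemption, and every type and function name is hypothetical.

```go
package main

import "fmt"

type node struct {
	name     string
	freeGPUs int
}

type pod struct {
	name string
	gpus int
}

// scheduleGroup places pods against a private copy of the node state (the
// "snapshot"). It returns the assumed pod-to-node assignments and true when at
// least minCount pods fit; otherwise it returns nil and false, and nothing binds.
func scheduleGroup(nodes []node, pods []pod, minCount int) (map[string]string, bool) {
	snapshot := append([]node(nil), nodes...) // copy, so live state stays untouched
	assumed := map[string]string{}
	for _, p := range pods {
		for i := range snapshot {
			if snapshot[i].freeGPUs >= p.gpus {
				snapshot[i].freeGPUs -= p.gpus // temporarily assume the pod on this node
				assumed[p.name] = snapshot[i].name
				break
			}
		}
	}
	if len(assumed) < minCount {
		return nil, false // reject the whole group
	}
	return assumed, true // commit: all assumed pods proceed to binding
}

func main() {
	nodes := []node{{"node-a", 2}, {"node-b", 2}}
	pods := []pod{{"bert-worker-0", 1}, {"bert-worker-1", 1}, {"bert-worker-2", 1}, {"bert-worker-3", 1}}
	placements, ok := scheduleGroup(nodes, pods, 4)
	fmt.Println("committed:", ok, "placements:", len(placements))
}
```

Because pods are tried in slice order, the model also shows why ordering matters: a large pod processed late can fail to fit even when a different order would have worked.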

Delayed Preemption

v1.36 introduces delayed preemption for gang-scheduled workloads. When the scheduler determines that preemption is needed during the Workload Scheduling Cycle, it does not immediately evict victim pods. Instead, victims are marked as nominated for removal. The actual preemption happens only after the entire group's placement is confirmed.

This prevents unnecessary disruption. If the scheduler finds valid placements for some pods but ultimately cannot meet minCount, no preemption occurs. Only confirmed, complete placements trigger evictions.
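A minimal sketch of the delayed-preemption rule, under the assumption that each placement attempt yields a list of nominated victims: the victims are collected during the cycle but returned for eviction only when the group reaches minCount. The placement type and victimsToEvict function are hypothetical names for illustration.

```go
package main

import "fmt"

// placement is a hypothetical record of one pod's result within the cycle.
type placement struct {
	pod     string
	fits    bool     // a node was found, possibly after nominating victims
	victims []string // pods nominated for removal; empty when none are needed
}

// victimsToEvict returns the nominated victims only if the group's placement
// is confirmed. If fewer than minCount pods fit, it returns nil: the
// nominations are dropped and nothing is evicted.
func victimsToEvict(placements []placement, minCount int) []string {
	placed := 0
	var nominated []string
	for _, p := range placements {
		if !p.fits {
			continue
		}
		placed++
		nominated = append(nominated, p.victims...)
	}
	if placed < minCount {
		return nil
	}
	return nominated
}

func main() {
	cycle := []placement{
		{pod: "bert-worker-0", fits: true, victims: []string{"low-priority-1"}},
		{pod: "bert-worker-1", fits: true},
		{pod: "bert-worker-2", fits: false},
	}
	fmt.Println("minCount 3:", victimsToEvict(cycle, 3))
	fmt.Println("minCount 2:", victimsToEvict(cycle, 2))
}
```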

Opportunistic Batching

Opportunistic batching is orthogonal to gang scheduling but works particularly well with it. Enabled by default since v1.35 (Beta), it optimizes scheduling for identical pods by reusing filtering and scoring results.

Pod Signatures

When a Pod enters the queue, each scheduler plugin computes a SignFragment capturing the pod attributes relevant to that plugin's decisions. The framework combines all fragments into a PodSignature. Pods with matching signatures are guaranteed to receive identical scheduling results.

The signature captures:

- Container images and resource requests

- Node affinity and node selectors

- Tolerations

- Volume requirements (except ConfigMaps and Secrets)

- Host ports

```go
// staging/src/k8s.io/kubernetes/pkg/scheduler/framework/interface.go
type SignPlugin interface {
	Plugin

	// SignPod returns SignFragments for this pod.
	// Success: plugin can sign the pod, returns signature fragments
	// Unschedulable: plugin cannot sign pod (not eligible for batching)
	// Error: unexpected failure (not eligible for batching, error logged)
	SignPod(ctx context.Context, pod *v1.Pod) ([]SignFragment, *Status)
}
```
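To make the idea of combining fragments concrete, here is a rough sketch. The shape of SignFragment is not shown above, so the fragment struct, the sorting step, and the SHA-256 hash are all assumptions chosen for illustration, not the framework's actual encoding.

```go
package main

import (
	"crypto/sha256"
	"encoding/hex"
	"fmt"
	"sort"
	"strings"
)

// fragment is a hypothetical stand-in for a plugin's signature fragment.
type fragment struct {
	plugin string // name of the plugin that produced the fragment
	value  string // the pod attributes that plugin cares about, serialized
}

// combine folds fragments into one signature. Sorting first makes the result
// independent of the order in which plugins ran.
func combine(frags []fragment) string {
	parts := make([]string, 0, len(frags))
	for _, f := range frags {
		parts = append(parts, f.plugin+"="+f.value)
	}
	sort.Strings(parts)
	sum := sha256.Sum256([]byte(strings.Join(parts, "\n")))
	return hex.EncodeToString(sum[:])
}

func main() {
	// Fragment values here are made up for the example.
	worker0 := []fragment{{"NodeResourcesFit", "gpu=1,mem=32Gi"}, {"NodeAffinity", "none"}}
	worker1 := []fragment{{"NodeAffinity", "none"}, {"NodeResourcesFit", "gpu=1,mem=32Gi"}}
	fmt.Println("same signature:", combine(worker0) == combine(worker1))
}
```

Two pods whose fragments match get the same signature regardless of plugin order, which is the property batching relies on.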

The Fast Path

When the scheduler processes a pod and finds a valid placement, it stores the sorted list of remaining feasible nodes keyed by the pod's signature. For the next pod with a matching signature, the scheduler tries the top node from the cached list first. If it passes all filters, the scheduler skips scoring entirely. One cache lookup instead of evaluating every node.

The cache is short-lived and in-memory only. Reusing it requires two conditions: cycle continuity (no other pods were scheduled between the cached result and the current pod), and the previously selected node no longer having capacity for another pod with the same signature. If either condition fails, the scheduler falls back to full evaluation.
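The fast path can be sketched as a one-entry cache keyed by signature. This is a simplified model under stated assumptions: the nodeCache type is hypothetical, each cached node is consumed by one pod, and any miss (a different signature or a failed filter) invalidates the cache to mimic the cycle-continuity requirement.

```go
package main

import "fmt"

// nodeCache is a hypothetical model of the short-lived batching cache.
type nodeCache struct {
	signature string
	nodes     []string // remaining feasible nodes, best first
}

// next returns the top cached node for a matching signature if it still passes
// the filters. On any miss it clears the cache and reports false, signalling a
// fallback to full filtering and scoring.
func (c *nodeCache) next(signature string, passesFilters func(node string) bool) (string, bool) {
	if c.signature != signature || len(c.nodes) == 0 {
		c.nodes = nil
		return "", false
	}
	top := c.nodes[0]
	if !passesFilters(top) {
		c.nodes = nil
		return "", false
	}
	c.nodes = c.nodes[1:] // the node is consumed by this pod
	return top, true
}

func main() {
	cache := &nodeCache{signature: "sig-worker", nodes: []string{"node-b", "node-c"}}
	stillFits := func(string) bool { return true }

	node, hit := cache.next("sig-worker", stillFits)
	fmt.Println("fast path:", hit, node)

	_, hit = cache.next("sig-evaluator", stillFits)
	fmt.Println("different signature hits cache:", hit)
}
```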

What Disables Batching

Certain features make pods unsignable:

- Pod anti-affinity or pod affinity — placement depends on other pods, not just node attributes

- Topology spread constraints — placement depends on current pod distribution

- Dynamic resource allocation — resource claims are node-specific

- Plugins that don't implement the SignPlugin interface

If any enabled scoring/filtering plugin cannot sign a pod, batching is disabled for that pod entirely.
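As a quick pre-flight check, the pod-level rules listed above can be expressed as a function that reports why a pod would be unsignable. The podSpec struct is a hypothetical, flattened summary of a Pod rather than the real v1.PodSpec, and the check ignores the plugin-level condition (plugins lacking SignPlugin).

```go
package main

import "fmt"

// podSpec is a hypothetical, flattened summary of the fields that matter here.
type podSpec struct {
	hasPodAffinity      bool
	hasPodAntiAffinity  bool
	topologySpreadCount int // number of topology spread constraints
	resourceClaimCount  int // number of DRA resource claims
}

// unsignableReasons lists the features that would disable batching for the pod.
// An empty result means none of the pod-level blockers apply.
func unsignableReasons(p podSpec) []string {
	var reasons []string
	if p.hasPodAffinity {
		reasons = append(reasons, "pod affinity")
	}
	if p.hasPodAntiAffinity {
		reasons = append(reasons, "pod anti-affinity")
	}
	if p.topologySpreadCount > 0 {
		reasons = append(reasons, "topology spread constraints")
	}
	if p.resourceClaimCount > 0 {
		reasons = append(reasons, "dynamic resource allocation")
	}
	return reasons
}

func main() {
	worker := podSpec{}
	spread := podSpec{topologySpreadCount: 1, hasPodAntiAffinity: true}
	fmt.Println("worker blockers:", unsignableReasons(worker))
	fmt.Println("spread blockers:", unsignableReasons(spread))
}
```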

Putting It Together: Feature Gates and Resources

To use the Workload API, you need two feature gates set explicitly on your control plane (a third, for opportunistic batching, is on by default):

```shell
# kube-apiserver flags
kube-apiserver \
  --feature-gates=GenericWorkload=true

# kube-scheduler flags
kube-scheduler \
  --feature-gates=GangScheduling=true,GenericWorkload=true
```

GenericWorkload enables the Workload and PodGroup APIs on both components. GangScheduling activates the gang scheduling plugin in the scheduler. Opportunistic batching (SchedulerOpportunisticBatching) is enabled by default since v1.35.

With the Workload from the previous section in place, you create a PodGroup for each runtime instance and Pods that reference it.

PodGroup and Worker Pods

```yaml
# podgroup-workers.yaml
apiVersion: scheduling.k8s.io/v1alpha2
kind: PodGroup
metadata:
  name: bert-finetune-workers
  namespace: ml-jobs
spec:
  podGroupTemplateRef:
    workloadName: training-workload
    podGroupTemplateName: workers
  schedulingPolicy:
    gang:
      minCount: 4
---
# worker-pod.yaml
apiVersion: v1
kind: Pod
metadata:
  name: bert-worker-0
  namespace: ml-jobs
  labels:
    app: bert-training
    role: worker
spec:
  schedulingGroup:
    podGroupName: bert-finetune-workers
  containers:
    - name: trainer
      image: pytorch/pytorch:2.5.0-cuda12.4-cudnn9-runtime
      command: ["torchrun"]
      args: ["--nnodes=4", "--node-rank=0", "train.py"]
      resources:
        requests:
          nvidia.com/gpu: 1
          memory: 32Gi
          cpu: 8
        limits:
          nvidia.com/gpu: 1
```

You create one Pod per worker (bert-worker-0 through bert-worker-3), each pointing to the same PodGroup. The scheduler holds all four in PreEnqueue until the quorum is met, then processes them together in a single Workload Scheduling Cycle.

Verification

```shell
# Verify pods reference the correct group
$ kubectl get pods -n ml-jobs \
    -o custom-columns=NAME:.metadata.name,GROUP:.spec.schedulingGroup.podGroupName,STATUS:.status.phase
NAME             GROUP                   STATUS
bert-worker-0    bert-finetune-workers   Running
bert-worker-1    bert-finetune-workers   Running
bert-worker-2    bert-finetune-workers   Running
bert-worker-3    bert-finetune-workers   Running
bert-evaluator   <none>                  Running
```

All four workers bind simultaneously once the scheduler confirms placements for the group. The evaluator schedules independently through basic policy.

Gotchas

Heterogeneous Pod Groups May Not Find Placements

The scheduling algorithm groups pods by signature and processes sub-groups sequentially. For homogeneous groups (identical resource requests, images, affinities), it finds a valid placement whenever one exists. For heterogeneous groups, the processing order may prevent finding a placement that theoretically exists.

If your workload has pods with different GPU types, different memory requirements, or different node affinities within the same PodGroup, you may see scheduling failures even when the cluster has sufficient resources. The rejection message will indicate that the failure may be due to algorithmic limitations, distinct from a generic Unschedulable status.

Split your heterogeneous workloads into separate pod group templates within the Workload.

Inter-Pod Dependencies Break Deterministic Processing

If one pod in a gang has an affinity rule targeting another pod in the same gang, the deterministic processing order may prevent scheduling. Pod A needs Pod B to be placed first to satisfy its affinity, but Pod B gets processed after Pod A.

The workaround: assign lower priority to dependent pods. The algorithm processes higher-priority pods first, so setting the required pod's priority higher ensures it schedules before pods that depend on it.
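The effect of the priority workaround can be shown with a small ordering sketch. It assumes only what the text states, that higher-priority pods are processed first; the gangPod type is hypothetical and a stable sort stands in for whatever tie-breaking the scheduler actually uses.

```go
package main

import (
	"fmt"
	"sort"
)

// gangPod is a hypothetical summary of a pod in a gang.
type gangPod struct {
	name     string
	priority int32
}

// processingOrder returns pod names sorted by descending priority. The input
// slice is copied so the caller's order is left unchanged.
func processingOrder(pods []gangPod) []string {
	sorted := append([]gangPod(nil), pods...)
	sort.SliceStable(sorted, func(i, j int) bool {
		return sorted[i].priority > sorted[j].priority
	})
	names := make([]string, 0, len(sorted))
	for _, p := range sorted {
		names = append(names, p.name)
	}
	return names
}

func main() {
	pods := []gangPod{
		{name: "pod-a-needs-b", priority: 100}, // has affinity to pod-b
		{name: "pod-b", priority: 200},         // must be placed first
	}
	fmt.Println(processingOrder(pods))
}
```

With the required pod at the higher priority, it is placed before the pod whose affinity rule depends on it.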

Topology Spread Constraints Are Unsupported

Topology spread constraints evaluate pods individually against current pod distribution. Gang scheduling evaluates the entire group as a unit against a snapshot. These approaches conflict. The scheduler cannot simultaneously guarantee spread requirements and all-or-nothing placement.

If you need rack-aware or zone-aware placement for gang-scheduled workloads, wait for topology-aware scheduling (TAS), which is on the roadmap as a dedicated integration with the Workload Scheduling Cycle.

8 Templates Per Workload

The podGroupTemplates field is capped at 8 entries (WorkloadMaxPodGroups = 8). If you have more than 8 distinct pod roles, consolidate roles with identical scheduling requirements into a single template or split across multiple Workload objects.

All Pods Must Use the Same Scheduler

Every pod in a PodGroup must share the same spec.schedulerName. If the scheduler detects a mismatch, it rejects the entire group as unschedulable. This prevents conflicting placement decisions from multiple schedulers operating on the same group.
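A controller can catch this mismatch before creating Pods. The following is a minimal sketch with hypothetical names; it compares every pod's scheduler name against the first pod's and does not model API-server defaulting of an empty schedulerName.

```go
package main

import "fmt"

// groupPod is a hypothetical summary of a pod that belongs to a PodGroup.
type groupPod struct {
	name          string
	schedulerName string
}

// checkSchedulerNames returns an error on the first pod whose schedulerName
// differs from that of the first pod in the group.
func checkSchedulerNames(pods []groupPod) error {
	for i := 1; i < len(pods); i++ {
		if pods[i].schedulerName != pods[0].schedulerName {
			return fmt.Errorf("pod %s uses scheduler %q, but pod %s uses %q",
				pods[i].name, pods[i].schedulerName, pods[0].name, pods[0].schedulerName)
		}
	}
	return nil
}

func main() {
	pods := []groupPod{
		{"bert-worker-0", "default-scheduler"},
		{"bert-worker-1", "default-scheduler"},
		{"bert-worker-2", "custom-scheduler"},
	}
	fmt.Println("check:", checkSchedulerNames(pods))
}
```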

Wrap-up

The v1alpha2 API establishes a clean separation: Workloads define policy, PodGroups track runtime state, and the scheduler processes groups atomically through a dedicated cycle. This is a foundation, not a finished product.

If you run homogeneous GPU training jobs today, the Workload API is ready for testing. Start with a single PodGroup template using gang scheduling and minCount equal to your worker count. You get all-or-nothing placement with the performance benefits of the Workload Scheduling Cycle. If you run heterogeneous workloads with mixed pod types, wait for the algorithm to mature. The current limitations around processing order mean you may hit scheduling failures that do not exist with external schedulers like Kueue or Volcano.

The tracking issue outlines what comes next: topology-aware scheduling for rack-level placement, workload-level preemption that evicts entire groups instead of individual pods, and tighter integration between scheduling and cluster autoscaling. The APIs are alpha and will change. Report your experience on the tracking issue or SIG Scheduling Slack.
