What Is Karpenter and Why It Replaced Cluster Autoscaler

Cluster Autoscaler worked. For years, it was the default answer to "how do I scale nodes in Kubernetes?" You defined Auto Scaling groups, picked your instance types, and CAS adjusted the desired count when pods couldn't schedule. Then your team needed GPU nodes, and you added another ASG. Then arm64, another ASG. Then spot instances across three availability zones. Suddenly you had dozens of node groups, each locked to a specific instance type and configuration, and scaling took minutes instead of seconds.

Karpenter takes a fundamentally different approach. Instead of managing node groups, it provisions individual instances directly from cloud APIs based on what your pods actually need. No ASGs, no managed node groups, no pre-defined instance type lists. Karpenter watches for unschedulable pods, evaluates their requirements against your constraints, and launches right-sized compute in seconds.

This article covers the architectural decisions that make Karpenter work: the three core resources you need to understand, the layered constraints model that governs provisioning, and how the provisioning loop actually executes. If you're evaluating Karpenter or about to deploy it, this is the mental model you need first.

Where Cluster Autoscaler Falls Short

Cluster Autoscaler's core design ties node scaling to Auto Scaling groups. Each ASG defines a fixed set of instance types, a min/max count, and a target availability zone. CAS watches for unschedulable pods, finds an ASG that could fit them, and bumps its desired count. The ASG handles the rest.

This works at small scale. At enterprise scale, it breaks down in predictable ways.

Node group proliferation. Every combination of instance type, architecture, capacity type, and AZ needs its own ASG. Salesforce, running 1,000+ EKS clusters, accumulated thousands of node groups across their fleet. Each group needed configuration, monitoring, and lifecycle management. The operational overhead grew faster than the clusters themselves.

Scaling latency. CAS runs on a periodic scan loop. When it detects unschedulable pods, it adjusts an ASG's desired count and waits for the ASG to fulfill the request. That fulfillment path goes through the cloud provider's auto scaling abstraction layer, adding communication overhead. During demand spikes, this meant multi-minute delays before pods could run.

Right-sizing limitations. ASGs lock instance types at definition time. If a pod needs 4 CPUs and the matching ASG only offers m5.2xlarge (8 CPUs), you get an 8-CPU node with half its capacity wasted. CAS can't select a better-fitting instance type on the fly because it doesn't interact with the instance type catalog directly.

Limited cost optimization. CAS can delete empty nodes, but it doesn't proactively replace underutilized ones with cheaper alternatives. If three half-empty nodes could be consolidated into one, CAS won't make that move. Teams often kept spare nodes running to avoid scheduling delays, driving up costs further.

Salesforce's migration tells the story in numbers: after switching to Karpenter, they reduced manual operational overhead by 80%, cut scaling latency from minutes to seconds, and achieved 5% cost savings in FY2026 with 5-10% projected for FY2027 as the rollout completes.

| | Karpenter | Cluster Autoscaler |
|---|---|---|
| Scaling mechanism | Direct cloud API (EC2 CreateFleet) | ASG desired count adjustment |
| Provisioning speed | Seconds (direct API call, fast retry) | Minutes (ASG fulfillment loop) |
| Instance selection | Right-sized per pod batch from full catalog | Locked to ASG-defined instance types |
| Cost optimization | Active consolidation (replace with cheaper) | Delete empty nodes only |
| Configuration | NodePool + NodeClass (2 objects) | N node groups x M instance types |
| Cloud support | Provider-agnostic core, 10+ providers | Bundled project, broad environment support |
| Node lifecycle | Drift, expiration, interruption, consolidation | TTL-based, limited lifecycle management |

Karpenter's Architecture: Three Objects, One Loop

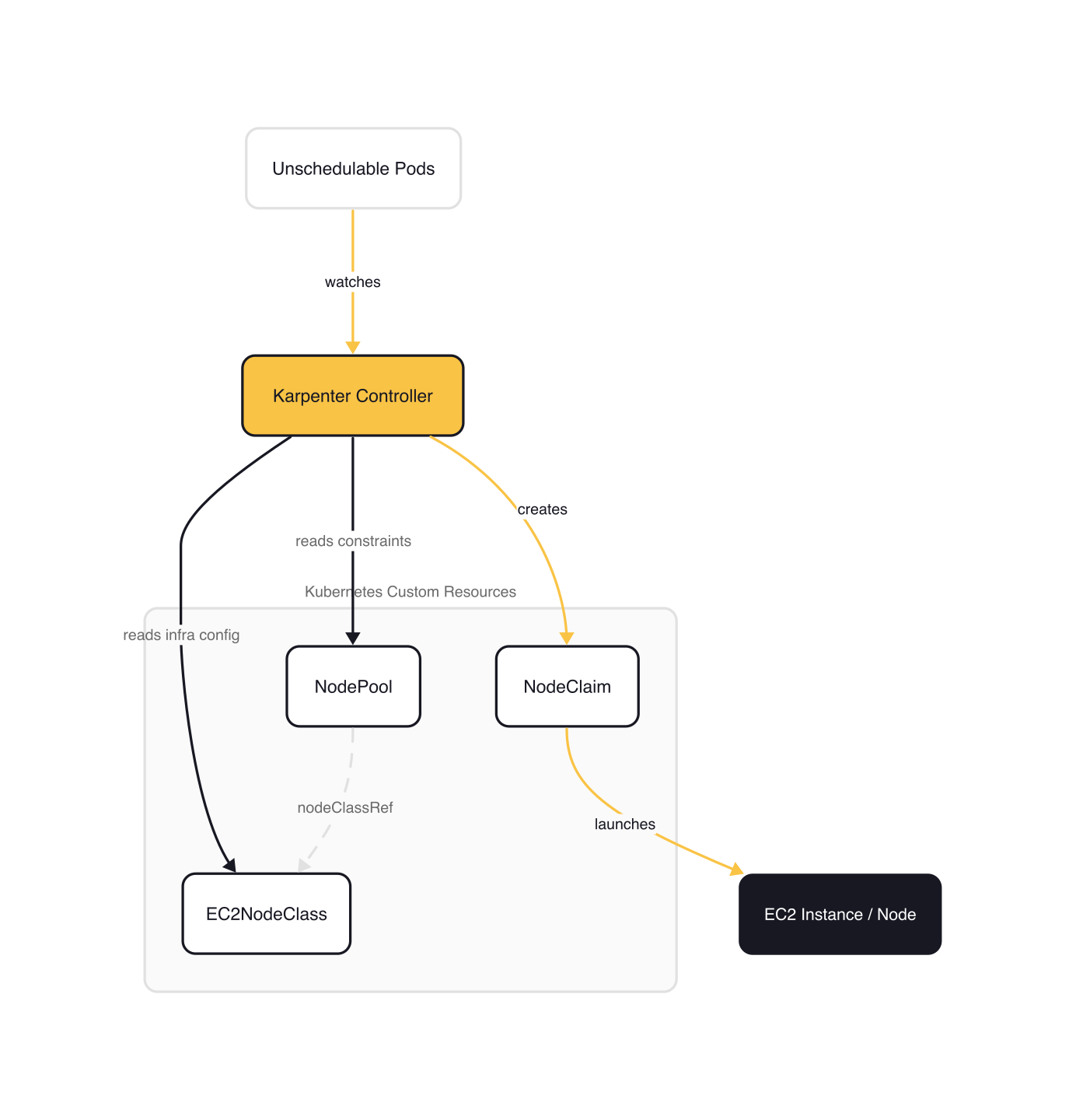

Karpenter replaces the ASG abstraction with three Kubernetes custom resources that separate concerns cleanly: what to provision, how to provision it, and the concrete instance request.

NodePool: The Policy Layer

A NodePool is provider-agnostic. It defines the constraints and behaviors for a group of nodes: which instance categories are allowed, which architectures, which capacity types, how aggressively to consolidate, and resource limits for the pool.

```yaml
# Adapted from karpenter.sh/docs/concepts/nodepools
apiVersion: karpenter.sh/v1
kind: NodePool
metadata:
  name: default
spec:
  template:
    spec:
      nodeClassRef:
        group: karpenter.k8s.aws
        kind: EC2NodeClass
        name: default
      requirements:
        - key: kubernetes.io/arch
          operator: In
          values: ["amd64"]
        - key: karpenter.sh/capacity-type
          operator: In
          values: ["reserved", "spot", "on-demand"]
        - key: karpenter.k8s.aws/instance-category
          operator: In
          values: ["c", "m", "r"]
        - key: karpenter.k8s.aws/instance-generation
          operator: Gte
          values: ["3"]
  limits:
    cpu: "1000"
    memory: 1000Gi
  disruption:
    consolidationPolicy: WhenEmptyOrUnderutilized
    consolidateAfter: 1m
```

The requirements field is where the real flexibility lives. Instead of defining a fixed list of instance types, you specify categories (c, m, r), minimum generations, architectures, and capacity types. Karpenter evaluates the full instance type catalog within those constraints and picks the best fit for each batch of pending pods.

The disruption block controls how aggressively Karpenter optimizes your fleet after initial provisioning. WhenEmptyOrUnderutilized tells Karpenter to actively look for cheaper replacements, not just delete empty nodes.

NodeClass: The Infrastructure Layer

A NodeClass is provider-specific. On AWS, the EC2NodeClass defines how instances are launched: which AMIs, which subnets, which security groups, and what IAM role nodes assume.

```yaml
# Adapted from karpenter.sh/docs/concepts/nodeclasses
apiVersion: karpenter.k8s.aws/v1
kind: EC2NodeClass
metadata:
  name: default
spec:
  amiSelectorTerms:
    - alias: al2023@latest
  subnetSelectorTerms:
    - tags:
        karpenter.sh/discovery: my-cluster
  securityGroupSelectorTerms:
    - tags:
        karpenter.sh/discovery: my-cluster
  role: "KarpenterNodeRole-my-cluster"
  tags:
    Team: "platform"
    Environment: "production"
```

This separation is deliberate. The NodePool says "provision amd64 instances from the c, m, or r families, generation 3+, on reserved, spot, or on-demand capacity." The EC2NodeClass says "use Amazon Linux 2023, deploy into subnets tagged for this cluster, assign this IAM role." Changing your AMI strategy doesn't touch your scaling policy. Changing your instance type constraints doesn't touch your networking config.

Karpenter includes a controller that resolves the high-level selectors in EC2NodeClass into concrete subnet IDs, security group IDs, and AMI IDs by querying AWS APIs. These resolved values are cached so Karpenter can compose launch requests immediately when pods need capacity.

NodeClaim: The Concrete Request

A NodeClaim is created by Karpenter, not by you. It represents a specific node through its entire lifecycle. The full provisioning flow has six steps (find provisionable pods, compute the NodeClaim shape, create the NodeClaim object, launch the instance, register the node, initialize resources), but as an operator you mostly see the resulting NodeClaim objects and their four status conditions. Listing NodeClaims shows each one's current state:

```
$ kubectl get nodeclaims
NAME            TYPE               ZONE         NODE                                        READY   AGE
default-m6pzn   c7i-flex.2xlarge   us-west-1a   ip-10-0-42-137.us-west-1.compute.internal   True    7m50s
```

The lifecycle stages, visible in the NodeClaim's status conditions:

- Launched: Karpenter called the cloud provider API and got a response

- Registered: the instance joined the cluster and Karpenter applied labels, taints, and annotations

- Initialized: all requested resources are available and startup taints removed

- Ready: the node is fully operational

If any stage fails (capacity unavailable, registration timeout), Karpenter deletes the NodeClaim and retries with a different instance type. The retry decision happens in milliseconds because Karpenter talks directly to the cloud API, but the replacement instance still needs time to boot and register.
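
To make the ordering of those conditions concrete, here is a minimal Python sketch that reports how far a NodeClaim has progressed. It assumes the conditions arrive as a list of dictionaries shaped like `.status.conditions` in the output of `kubectl get nodeclaim <name> -o json`. The function name `furthest_stage` and the sample data are hypothetical, and real NodeClaims may carry additional condition types that this sketch ignores.

```python
# Lifecycle stages in the order listed above.
STAGES = ["Launched", "Registered", "Initialized", "Ready"]


def furthest_stage(conditions):
    """Return the last stage reached without a gap, or None if not launched.

    `conditions` is a list of dicts with at least "type" and "status" keys,
    where status is the string "True", "False", or "Unknown".
    """
    true_types = {c["type"] for c in conditions if c.get("status") == "True"}
    reached = None
    for stage in STAGES:
        if stage not in true_types:
            break  # stop at the first stage that has not completed
        reached = stage
    return reached


# Hypothetical sample: the instance joined the cluster but is still initializing.
sample = [
    {"type": "Launched", "status": "True"},
    {"type": "Registered", "status": "True"},
    {"type": "Initialized", "status": "False"},
]
print(furthest_stage(sample))  # prints "Registered"
```

A NodeClaim that stays at "Launched" for a long time usually points at a registration problem, which is a different failure from one that never launches at all.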

How Provisioning Actually Works

The provisioning loop has five steps, and understanding them explains why Karpenter is faster and more efficient than CAS.

Step 1: Watch for unschedulable pods. When no existing node can run a pod, the Kubernetes scheduler sets the pod's PodScheduled condition to False with reason Unschedulable. Karpenter watches for pods in this state.

Step 2: Batch pending pods. Instead of reacting to each pod individually, Karpenter waits briefly to collect a batch. The idle duration (default 1 second) resets each time a new pod arrives. A maximum duration (default 10 seconds) caps the window. This prevents overprovisioning: five pods that arrive within a second get one well-packed node instead of five separate ones.

Step 3: Run scheduling simulations. Karpenter evaluates the batch against all configured NodePools, considering each pod's resource requests, node selectors, affinities, tolerations, and topology spread constraints. It finds the smallest set of instances that can run all pending pods.

Step 4: Create NodeClaims and call the cloud API. Karpenter creates a NodeClaim for each node it needs, then calls the cloud provider's instance API directly. On AWS, this is the EC2 CreateFleet API. If a capacity error occurs (e.g., insufficient spot capacity for that instance type), Karpenter caches the failure for 3 minutes and retries with a different instance type within milliseconds.

Step 5: Track in-flight nodes. Karpenter maintains awareness of nodes that have been requested but haven't registered yet. If new pods arrive while a node is launching, Karpenter accounts for the pending capacity instead of launching duplicates.
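
The batching window from Step 2 can be modeled in a few lines. The sketch below is a simplified simulation, not Karpenter's actual batcher: it takes pod arrival times in seconds and groups them using the idle and maximum durations described above. The function name `batch_arrivals` and its parameters are hypothetical.

```python
def batch_arrivals(arrivals, idle=1.0, max_window=10.0):
    """Group pod arrival times (seconds) into provisioning batches.

    A batch closes when no pod has arrived for `idle` seconds, or when
    `max_window` seconds have passed since the first pod in the batch,
    whichever comes first.
    """
    batches = []
    current = []
    window_start = last_seen = None
    for t in sorted(arrivals):
        if current:
            closes_at = min(last_seen + idle, window_start + max_window)
            if t >= closes_at:
                # The window closed before this pod arrived.
                batches.append(current)
                current = []
        if not current:
            window_start = t  # this pod opens a new window
        current.append(t)
        last_seen = t
    if current:
        batches.append(current)
    return batches


# Five pods within about a second of each other end up in one batch.
print(batch_arrivals([0.0, 0.2, 0.5, 0.9, 1.3]))
# A late straggler opens a second batch.
print(batch_arrivals([0.0, 0.4, 3.0]))
```

The maximum duration matters under sustained load: a steady stream of pods arriving every half second would otherwise keep resetting the idle timer and never trigger a launch.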

When multiple capacity types are allowed, Karpenter follows a strict priority: reserved capacity first, then spot, then on-demand. If spot capacity is unavailable, it falls back to on-demand automatically.
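
As a rough illustration of that priority order, the sketch below picks a capacity type from what the NodePool allows and what currently has capacity. It models only the ordering described in the paragraph above. The function name and the `available` set are hypothetical stand-ins for information Karpenter gets from the cloud provider.

```python
# Priority order as described above: reserved, then spot, then on-demand.
PRIORITY = ("reserved", "spot", "on-demand")


def pick_capacity_type(allowed, available):
    """Return the highest-priority capacity type that is both allowed by the
    NodePool and currently available, or None if nothing matches."""
    for capacity_type in PRIORITY:
        if capacity_type in allowed and capacity_type in available:
            return capacity_type
    return None


allowed = {"reserved", "spot", "on-demand"}
print(pick_capacity_type(allowed, {"spot", "on-demand"}))  # prints "spot"
print(pick_capacity_type(allowed, {"on-demand"}))          # prints "on-demand"
```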

Layered Constraints

Karpenter's provisioning decisions are shaped by three layers of constraints, and only the intersection of all three determines what gets launched.

Layer 1: Cloud provider inventory. The universe of available instance types, sizes, AZs, and pricing. This is fixed by the provider.

Layer 2: Cluster administrator guardrails. The NodePool and NodeClass define what the admin allows. Instance categories, architectures, capacity types, resource limits, subnet placement, AMI selection. This narrows the cloud provider's full inventory to a curated subset.

Layer 3: Workload developer intent. Pod specs express what the workload needs: resources.requests, nodeSelector, nodeAffinity, topologySpreadConstraints. These further narrow the admin's allowed set. A developer can tighten constraints (require GPU, request a specific zone) but can never loosen them beyond what the NodePool permits.

This is a meaningful shift from CAS. With Cluster Autoscaler, developers either targeted specific node groups by label or got whatever instance type the matching ASG provided. With Karpenter, developers express intent ("I need 4 CPUs and 8Gi of memory, prefer spot") and the system finds the cheapest instance from the allowed set that satisfies all three layers.
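
The three layers can be sketched as successive filters over a catalog. This is a toy model under stated assumptions, not Karpenter's scheduler: the catalog is a tiny hand-written sample, the `price` values are made-up relative costs rather than real pricing, fit is checked against raw instance capacity (real allocatable capacity is lower), and the pod's `selector` uses this sketch's own field names instead of real Kubernetes label keys.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offering:
    instance_type: str
    arch: str
    capacity_type: str
    zone: str
    cpu: int         # raw vCPUs; real allocatable capacity is lower
    memory_gib: int
    price: float     # made-up relative cost, NOT real pricing

    @property
    def category(self) -> str:
        # First letter of the type name, e.g. "c" for c5.xlarge.
        return self.instance_type[0]


# Layer 1: provider inventory (illustrative sample only).
CATALOG = [
    Offering("c5.xlarge", "amd64", "spot", "us-west-2a", 4, 8, 0.07),
    Offering("c5.xlarge", "amd64", "on-demand", "us-west-2a", 4, 8, 0.17),
    Offering("m5.2xlarge", "amd64", "on-demand", "us-west-2b", 8, 32, 0.38),
    Offering("t3.xlarge", "amd64", "spot", "us-west-2a", 4, 16, 0.05),
    Offering("c6g.xlarge", "arm64", "spot", "us-west-2a", 4, 8, 0.04),
]

# Layer 2: administrator guardrails, mirroring the NodePool requirements idea.
POOL = {
    "arch": {"amd64"},
    "capacity_type": {"spot", "on-demand"},
    "category": {"c", "m", "r"},
}


def allowed_by_pool(offering, pool):
    return (
        offering.arch in pool["arch"]
        and offering.capacity_type in pool["capacity_type"]
        and offering.category in pool["category"]
    )


def satisfies_pod(offering, pod):
    # Layer 3: workload intent (resource requests plus optional selectors).
    if offering.cpu < pod["cpu"] or offering.memory_gib < pod["memory_gib"]:
        return False
    selector = pod.get("selector", {})
    return all(getattr(offering, key) == value for key, value in selector.items())


def cheapest_fit(catalog, pool, pod):
    """Return the cheapest offering in the intersection of all three layers."""
    candidates = [o for o in catalog if allowed_by_pool(o, pool) and satisfies_pod(o, pod)]
    return min(candidates, key=lambda o: o.price, default=None)


pod = {"cpu": 3, "memory_gib": 6, "selector": {}}
print(cheapest_fit(CATALOG, POOL, pod))
```

Note what happens when the pod asks for `{"arch": "arm64"}`: the cheapest offering in the catalog is arm64, but the pool only allows amd64, so the result is `None`. The developer can narrow the allowed set but cannot widen it.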

Beyond Provisioning: Disruption and Fleet Management

Karpenter doesn't just add nodes. It actively manages your fleet to keep costs down and configurations current.

Consolidation is the headline feature. Karpenter continuously evaluates whether nodes can be removed or replaced with cheaper alternatives. It runs three checks in order: delete empty nodes, consolidate multiple underutilized nodes into fewer ones, and replace individual nodes with cheaper instance types. All consolidation respects PodDisruptionBudgets to avoid availability issues.
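
The core question behind consolidation is whether a node's pods could run somewhere else. The sketch below is a deliberately small approximation of that question: a first-fit check on CPU requests only. It is not Karpenter's consolidation algorithm, and it ignores memory, PodDisruptionBudgets, affinities, and pricing. The function name and inputs are hypothetical.

```python
def pods_fit_elsewhere(pod_cpus, free_cpus):
    """Return True if every pod on a candidate node could be placed on the
    remaining nodes, given each remaining node's free CPU.

    Uses first-fit with the largest pods placed first. An empty candidate
    node (no pods) is trivially removable.
    """
    free = list(free_cpus)  # copy so the caller's list is not modified
    for need in sorted(pod_cpus, reverse=True):
        for i, available in enumerate(free):
            if available >= need:
                free[i] = available - need
                break
        else:
            return False  # no remaining node has room for this pod
    return True


# Two 1-CPU pods can move onto nodes with 1.5 and 1.0 CPU free.
print(pods_fit_elsewhere([1, 1], [1.5, 1.0]))
# A single 2-CPU pod cannot.
print(pods_fit_elsewhere([2], [1.5, 1.0]))
```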

Drift detection handles configuration changes. When you update a NodePool or NodeClass, existing nodes aren't patched in place. Karpenter detects that running nodes have diverged from the desired spec (different AMI, different requirements) and gradually replaces them. This keeps your fleet converging toward your intended configuration without manual node rotation.

Expiration enforces maximum node lifetime. The default is 720 hours (30 days). Karpenter begins draining expired nodes immediately, provisioning replacements as needed. This is useful for security compliance and preventing issues from long-running nodes (memory leaks, file fragmentation).

Interruption handling covers involuntary events: spot interruption warnings, health events, instance termination notices. On AWS, Karpenter watches an SQS queue for these events and starts replacement nodes before the interruption hits, minimizing workload disruption.

Each of these mechanisms deserves its own deep dive. For now, the key insight is that Karpenter treats nodes as disposable compute, not permanent infrastructure, and its disruption logic is designed around that assumption.

Gotchas

Karpenter is not a drop-in CAS replacement. The mental model is different. There are no node groups. Configuration is two objects (NodePool + NodeClass), not N ASGs. Migration requires rethinking how you express capacity requirements, not just swapping controllers.

Multi-cloud maturity varies. AWS support is production-grade with the full feature set. Azure is GA on AKS. Other providers (GCP, AlibabaCloud, IBM Cloud, Proxmox, OCI) are community-maintained with varying feature parity. Check your provider's implementation status before committing.

PDB misconfiguration blocks everything. Overly restrictive PodDisruptionBudgets prevent consolidation, drift replacement, and node rotation. Salesforce called this their top migration challenge: services with maxUnavailable: 0 or minAvailable: 100% blocked node replacements entirely. Audit your PDBs before enabling Karpenter.

Karpenter needs its own stable nodes. It runs as a Deployment and should not manage the nodes it runs on. On AWS, run Karpenter on Fargate or a small static managed node group. Self-eviction is a real failure mode if you skip this.

Aggressive consolidation can surprise you. WhenEmptyOrUnderutilized actively moves pods to cheaper nodes. Without proper PDBs, this can cause unexpected restarts. Start with WhenEmpty if you're not confident in your disruption budget configuration.
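
For the PDB gotcha above, a first-pass audit can be scripted. The sketch below is a minimal example that assumes input shaped like the output of `kubectl get pdb -A -o json` (a dictionary with an `items` list). It flags only the two patterns named above, `maxUnavailable: 0` and `minAvailable: 100%`. It does not catch an integer `minAvailable` equal to the replica count, which blocks disruption just as effectively but requires comparing against the workload. The function names are hypothetical.

```python
def blocks_all_disruption(pdb):
    """Return True if the PDB spec forbids any voluntary eviction outright."""
    spec = pdb.get("spec", {})
    if spec.get("maxUnavailable") in (0, "0", "0%"):
        return True
    if spec.get("minAvailable") == "100%":
        return True
    return False


def find_blocking_pdbs(pdb_list):
    """Return "namespace/name" for every PDB that blocks all disruption."""
    return [
        f'{p["metadata"]["namespace"]}/{p["metadata"]["name"]}'
        for p in pdb_list.get("items", [])
        if blocks_all_disruption(p)
    ]


# Hypothetical sample data in the same shape as the kubectl JSON output.
sample = {
    "items": [
        {"metadata": {"namespace": "payments", "name": "api"}, "spec": {"maxUnavailable": 0}},
        {"metadata": {"namespace": "search", "name": "indexer"}, "spec": {"minAvailable": "100%"}},
        {"metadata": {"namespace": "web", "name": "frontend"}, "spec": {"maxUnavailable": 1}},
    ]
}
print(find_blocking_pdbs(sample))  # prints ['payments/api', 'search/indexer']
```

To run it against a real cluster, you could save the kubectl output to a file and load it with `json.load` before calling `find_blocking_pdbs`.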

Wrap-up

Karpenter's groupless architecture eliminates the ASG abstraction layer that made Cluster Autoscaler slow, inflexible, and operationally expensive at scale. Three resources replace potentially thousands of node groups: a NodePool defines what you allow, a NodeClass defines how to launch it, and NodeClaims represent the concrete instances Karpenter manages. The layered constraints model lets administrators set guardrails while developers express intent, and Karpenter finds the optimal intersection.

Karpenter is not the answer to every autoscaling problem. It does not yet support Kubernetes Dynamic Resource Allocation (DRA), has no built-in multi-cluster awareness, and feature parity varies across cloud providers. CAS remains a reasonable choice for steady-state workloads on providers where Karpenter support is immature. Evaluate the fit before committing.

Before you install anything, do two things: audit your current node groups to understand how many ASG/instance-type combinations you maintain, and review your PodDisruptionBudgets for services that block with maxUnavailable: 0. Both will determine how smooth your migration is.

This post is part of the Karpenter — Kubernetes Node Autoscaling from Setup to Optimization collection (1 of 6)
