AI Security for LLM-Powered Applications: Prompt Injection Defense

Prompt injection isn't theoretical anymore — it's a production incident waiting to happen. When your AI agent can process refunds, modify accounts, or access customer data, a single malicious prompt becomes an immediate security breach. Cloudflare's AI Security for Apps, now GA, sits inline as a reverse proxy to catch these attacks at the edge. And RFC 9457 structured error responses cut agent token costs by 98% while giving machines explicit retry instructions.

Traditional web application firewalls expect deterministic operations: check a bank balance, make a transfer. You can write rules to allow or deny those operations. AI-powered applications are different. They accept natural language and generate probabilistic outputs. There's no fixed set of operations to control, because inputs and outputs are unpredictable. Attackers can manipulate large language models to take unauthorized actions or leak sensitive data.

This is the new attack surface. And it's growing faster than most security teams realize.

The New Attack Surface — Why LLM Apps Break Traditional Security

When an AI agent gains access to tool calls — processing refunds, modifying accounts, providing discounts, accessing customer data — a single malicious prompt becomes an immediate security incident. Prompt injection, sensitive information disclosure, and unbounded consumption are three of the risks cataloged in the OWASP Top 10 for LLM Applications.

Rick Radinger, Principal Systems Architect at Newfold Digital (which operates Bluehost, HostGator, and Domain.com), put it bluntly: "Most of Newfold Digital's teams are putting in their own Generative AI safeguards, but everybody is innovating so quickly that there are inevitably going to be some gaps eventually."

The stakes are real. In January 2026, a retail company's returns chatbot was manipulated to issue unauthorized refunds. The attacker embedded "ignore previous instructions" directives in a customer service ticket, overriding the bot's guardrails and triggering $50,000 in fraudulent refunds before detection. This is OWASP LLM01: Prompt Injection in production — not a research paper.

The challenge isn't just detecting attacks. It's knowing where AI is deployed in the first place. Security teams often lack a complete picture of LLM endpoints across their web properties, especially as developers swap models and providers faster than inventory can track.

AI Endpoint Discovery — Know Where AI Lives in Your Estate

Before you can protect LLM-powered applications, you need to know where they're being used. Cloudflare's AI Security for Apps automatically identifies LLM-powered endpoints across web properties, regardless of where they're hosted or what model they use.

The detection system doesn't rely on matching common path patterns like /chat/completions. Many AI-powered applications don't have a chat interface: product search, property valuation tools, recommendation engines. Instead, it looks at how endpoints behave:

- Response time: LLM endpoints mostly need more than 1 second to respond

- Bitrate: 80% of LLM endpoints operate slower than 4 KB/s (response size divided by response duration)

- Variance: LLM responses show high variance in size over time; invoice generators and QR code generators show low variance

After filtering out known false positives (GraphQL endpoints, device heartbeats, health checks), Cloudflare discovered roughly 30,000 LLM endpoints across the network. These endpoints are labeled as cf-llm and visible under Security → Web Assets in the dashboard.
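The three behavioral signals above can be combined into a simple classifier. The following is a minimal sketch, not Cloudflare's actual detection logic: the `Sample` type, the `looks_like_llm_endpoint` function, and the variance threshold (`min_size_cv`) are assumptions made for illustration, while the 1-second and 4 KB/s cutoffs come from the figures quoted above.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Sample:
    duration_s: float  # time taken to deliver the response
    size_bytes: int    # response body size


def looks_like_llm_endpoint(samples: list[Sample],
                            min_duration_s: float = 1.0,
                            max_bitrate_bps: float = 4 * 1024,
                            min_size_cv: float = 0.5) -> bool:
    """Hypothetical heuristic: slow, low-bitrate, high-variance responses."""
    timed = [s for s in samples if s.duration_s > 0]
    if len(timed) < 2:
        return False  # not enough traffic to establish a pattern

    avg_duration = mean(s.duration_s for s in timed)
    # Bitrate = response size divided by response duration
    avg_bitrate = mean(s.size_bytes / s.duration_s for s in timed)

    sizes = [s.size_bytes for s in timed]
    avg_size = mean(sizes)
    # Coefficient of variation: spread of response sizes relative to the mean
    size_cv = pstdev(sizes) / avg_size if avg_size else 0.0

    return (avg_duration > min_duration_s
            and avg_bitrate < max_bitrate_bps
            and size_cv > min_size_cv)
```

A chat-style endpoint with slow, variably sized responses passes all three checks, while a fast invoice generator with near-constant response sizes fails on the first one.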

Endpoint discovery is free for every Cloudflare customer — Free, Pro, Business, and Enterprise plans. For paid plans, discovery occurs automatically in the background on a recurring basis. For Free plans, discovery initiates when you first navigate to the Discovery page.

Detection — What Gets Caught at the Edge

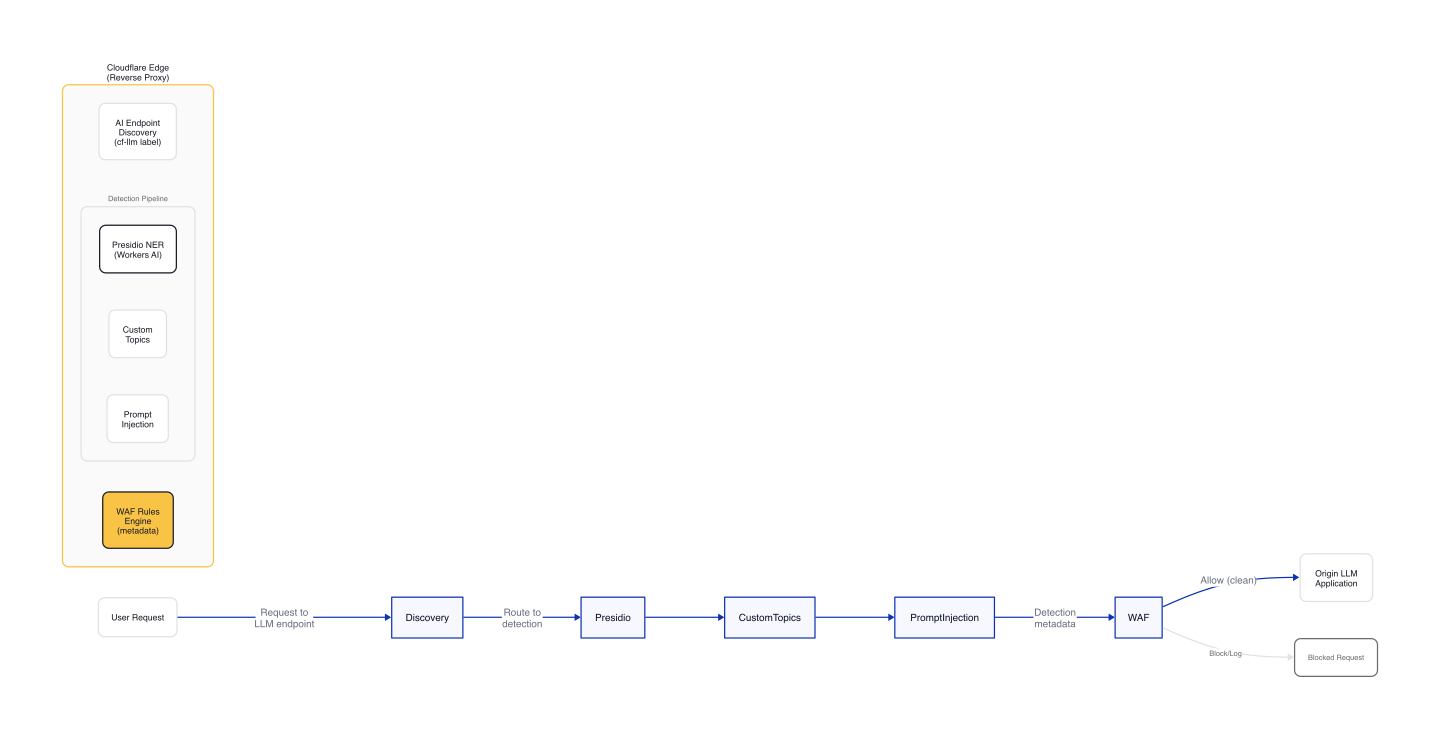

AI Security for Apps runs each prompt through multiple detection modules before it reaches your infrastructure. Cloudflare sits inline as a reverse proxy, analyzing incoming request prompts for:

- Prompt injection: attempts to manipulate the LLM into unauthorized actions

- PII extraction: prompts designed to leak personally identifiable information (aligns with OWASP LLM02:2025 - Sensitive Information Disclosure)

- Toxic topics: harmful or unsafe content categories

- Custom topics (new in GA): define your own sensitive categories specific to your business

A financial services company might need to detect discussions of specific securities. A healthcare company might flag conversations touching patient data. A retailer might want to know when customers ask about competitor products. You specify the topic; the system inspects prompt and output, returning a relevance score you can use to log, block, or handle however you decide.
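How a relevance score turns into an action is up to you. As a rough sketch, assuming the score is normalized to the range 0 to 1 (the source does not state the scale) and using hypothetical thresholds and a hypothetical `topic_action` helper:

```python
def topic_action(score: float, log_at: float = 0.5, block_at: float = 0.8) -> str:
    """Map a custom-topic relevance score to 'allow', 'log', or 'block'.

    The 0..1 scale and both thresholds are illustrative assumptions.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score is expected to be in the range 0..1")
    if score >= block_at:
        return "block"
    if score >= log_at:
        return "log"
    return "allow"
```

Starting in log-only mode and lowering `block_at` once you have seen real traffic is one way to avoid blocking legitimate prompts on day one.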

How PII Detection Works

Cloudflare runs Microsoft's Presidio NER (Named Entity Recognition) model via Workers AI on every request to discovered LLM endpoints. The model identifies contextual PII: PERSON, EMAIL_ADDRESS, PHONE_NUMBER, CREDIT_CARD, US_SOCIAL_SECURITY_NUMBER, and 20+ other entity types. Detection metadata ("Was PII found?", "What type?") feeds into WAF rules and Security Analytics. Custom rules can block or log requests based on detected entities.

Regular expressions catch structured data like credit card numbers and addresses. But regexes struggle when PII is embedded in natural language: "I just booked a flight using my Chase card, ending in 1111" wouldn't match a credit card pattern, even though it reveals partial financial data. The NER model catches these contextual mentions.
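The gap is easy to demonstrate. The sketch below uses a deliberately simple card-number pattern (an illustrative assumption, not the pattern Cloudflare uses) and shows that it matches a fully written-out number but misses the contextual mention quoted above.

```python
import re

# 13 to 16 digits, optionally separated by single spaces or hyphens.
# This is a simplified illustration, not a production-grade card matcher.
CARD_PATTERN = re.compile(r"\b(?:\d[ -]?){12,15}\d\b")


def regex_finds_card(text: str) -> bool:
    """Return True if the text contains something shaped like a card number."""
    return CARD_PATTERN.search(text) is not None


structured = "My card number is 4111 1111 1111 1111."
contextual = "I just booked a flight using my Chase card, ending in 1111"

print(regex_finds_card(structured))
print(regex_finds_card(contextual))
```

The second prompt still discloses partial financial data, which is the case a context-aware NER model is meant to cover.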

In production, expect 5-10% false positive rates on PII detection for non-English prompts or ambiguous entity names. Cloudflare's custom topics feature lets you tune sensitivity per category.

This all happens inline, before the prompt reaches your LLM.

Figure 1: Request flow through Cloudflare's AI Security pipeline — from endpoint discovery through Presidio NER detection to WAF enforcement.

The Prompt Location Problem

Prompts can live anywhere in a request body. Different LLM providers structure their APIs differently:

- OpenAI and most providers: $.messages[*].content

- Anthropic's batch API: $.requests[*].params.messages[*].content

- Custom applications: any JSON path

Out of the box, AI Security for Apps supports standard formats used by OpenAI, Anthropic, Google Gemini, Mistral, Cohere, xAI, DeepSeek, and others. When no known pattern matches, the system applies a default-secure posture and scans the entire request body. This can introduce false positives when the payload contains fields that are sensitive but don't feed directly to an AI model — for example, a $.customer_name field alongside the actual prompt triggers false positives on PII detection.
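The lookup-then-fallback behavior can be sketched in a few lines. This is an illustration of the idea only: the `extract_prompts` function and its return labels are hypothetical, it handles just the two JSON paths listed above, and it only considers string-valued `content` fields.

```python
import json
from typing import Any


def _message_contents(messages: Any) -> list[str]:
    """Collect string 'content' values from a list of message objects."""
    if not isinstance(messages, list):
        return []
    return [m["content"] for m in messages
            if isinstance(m, dict) and isinstance(m.get("content"), str)]


def extract_prompts(body: str) -> tuple[list[str], str]:
    """Return (texts_to_scan, source), falling back to the whole body."""
    try:
        data = json.loads(body)
    except json.JSONDecodeError:
        return [body], "full_body"

    if isinstance(data, dict):
        # $.messages[*].content
        direct = _message_contents(data.get("messages"))
        if direct:
            return direct, "messages"

        # $.requests[*].params.messages[*].content
        batch: list[str] = []
        requests = data.get("requests")
        if isinstance(requests, list):
            for req in requests:
                params = req.get("params") if isinstance(req, dict) else None
                if isinstance(params, dict):
                    batch.extend(_message_contents(params.get("messages")))
        if batch:
            return batch, "batch"

    # No known pattern matched: default-secure, scan everything
    return [body], "full_body"
```

With a custom payload such as `{"customer_name": "...", "query": "..."}`, the fallback branch hands the entire body to the detectors, which is where the `customer_name` false positive comes from.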

Custom JSONPath expressions (coming soon) will let you tell the system exactly where to find the prompt, reducing false positives and improving detection accuracy.

Mitigation — Block, Log, or Customize Responses via WAF

Once a threat is identified and scored, you can block it, log it, or deliver custom responses using the same WAF rules engine you already use for application security. The advantage of a unified platform: you can combine AI-specific signals with hundreds of other request fields.

A prompt injection attempt is suspicious. A prompt injection attempt from an IP that's been probing your login page, using a browser fingerprint associated with previous attacks, and rotating through a botnet is a different story. Point solutions that only see the AI layer can't make these connections.

Example: Blocking PII in Prompts

Security teams can create WAF rules to filter LLM traffic containing PII-related prompts. A rule uses the cf-llm endpoint label combined with AI security fields (exact field names vary by deployment; see Cloudflare documentation for your plan):

(cf-llm endpoint) AND (ai_security.pii_detected == true)

This blocks any request to a discovered LLM endpoint where PII was detected in the prompt.

Example: Allowing Exceptions

If an organization wants to allow certain PII categories (e.g., location data) but block others (e.g., credit card numbers), they can create an exception rule:

(cf-llm endpoint) AND (ai_security.pii_detected == true) AND (ai_security.pii_type != "location")
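To reason about what such a rule does when a prompt contains several PII types at once, it can help to model the decision in code. The sketch below is not WAF syntax: the `RequestSignals` type, its field names, and the choice to block when any non-allowed type is present are assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class RequestSignals:
    is_llm_endpoint: bool                              # request hit a cf-llm labeled endpoint
    pii_types: set[str] = field(default_factory=set)   # detected PII categories (hypothetical names)


# PII categories the organization has decided to tolerate in prompts
ALLOWED_PII_TYPES = {"location"}


def should_block(signals: RequestSignals) -> bool:
    """Block LLM-endpoint requests carrying any PII type outside the allow list."""
    if not signals.is_llm_endpoint:
        return False
    return bool(signals.pii_types - ALLOWED_PII_TYPES)
```

Under this interpretation, a prompt containing both a location and a credit card number is still blocked, because the allow list exempts categories rather than whole requests.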

The integration with Cloudflare's WAF means AI security policies leverage the same rules engine, analytics, and logging as traditional application security. No separate console, no extra integration.

Cloudflare is also partnering with Wiz to connect AI Security for Apps with Wiz AI Security. The integration maps Cloudflare's edge detections to Wiz's cloud workload inventory, so security teams see which AI endpoints are exposed and which are protected in a single dashboard. Mutual customers get a unified view of their AI security posture, from model and agent discovery in the cloud to application-layer guardrails at the edge.

RFC 9457 Structured Error Responses — Instructions for Agents

When an AI agent encounters an error, it typically receives an HTML error page: 46,645 bytes, 14,252 tokens, filled with CSS and copy designed for human eyes. The agent can't determine what error occurred, why it was blocked, or whether retrying will help.

Cloudflare now returns RFC 9457-compliant structured responses for all 1xxx-class errors (Cloudflare's platform error codes for edge-side failures like DNS resolution issues, access denials, and rate limits). Agents that send Accept: text/markdown, Accept: application/json, or Accept: application/problem+json receive machine-readable payloads instead of HTML.

Size and Token Comparison

| Payload | Bytes | Tokens (cl100k_base) | Size vs HTML | Tokens vs HTML |
|---|---|---|---|---|
| HTML response | 46,645 | 14,252 | — | — |
| Markdown response | 798 | 221 | 58.5x less | 64.5x less |
| JSON response | 970 | 256 | 48.1x less | 55.7x less |

Both structured formats deliver a ~98% reduction in size and tokens versus HTML. For agents that hit multiple errors in a workflow, the savings compound quickly.
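The ratios and the ~98% figure follow directly from the byte and token counts in the table. A short check, using only the numbers quoted above (the helper names are illustrative):

```python
# (bytes, tokens) as reported in the comparison table
HTML = (46_645, 14_252)
PAYLOADS = {
    "markdown": (798, 221),
    "json": (970, 256),
}


def ratio(baseline: int, value: int) -> float:
    """How many times smaller `value` is than `baseline`."""
    return baseline / value


def reduction_pct(baseline: int, value: int) -> float:
    """Percentage reduction of `value` relative to `baseline`."""
    return (1 - value / baseline) * 100


for name, (size, tokens) in PAYLOADS.items():
    print(
        f"{name}: {ratio(HTML[0], size):.1f}x smaller, "
        f"{ratio(HTML[1], tokens):.1f}x fewer tokens, "
        f"{reduction_pct(HTML[1], tokens):.1f}% token reduction"
    )
```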

What a Structured Error Looks Like

Here's what a rate-limit error (1015) looks like in JSON:

{
  "type": "https://developers.cloudflare.com/support/troubleshooting/http-status-codes/cloudflare-1xxx-errors/error-1015/",
  "title": "Error 1015: You are being rate limited",
  "status": 429,
  "detail": "You are being rate-limited by the website owner's configuration.",
  "instance": "9d99a4434fz2d168",
  "error_code": 1015,
  "error_name": "rate_limited",
  "error_category": "rate_limit",
  "ray_id": "9d99a4434fz2d168",
  "timestamp": "2026-03-09T11:11:55Z",
  "zone": "<YOUR_DOMAIN>",
  "cloudflare_error": true,
  "retryable": true,
  "retry_after": 30,
  "owner_action_required": false,
  "what_you_should_do": "**Wait and retry.** This block is transient. Wait at least 30 seconds, then retry with exponential backoff.\n\nRecommended approach:\n1. Wait 30 seconds before your next request\n2. If rate-limited again, double the wait time (60s, 120s, etc.)\n3. If rate-limiting persists after 5 retries, stop and reassess your request pattern",
  "footer": "This error was generated by Cloudflare on behalf of the website owner."
}

The same error in Markdown has YAML frontmatter for machine-readable fields and prose sections for explicit guidance:

---
error_code: 1015
error_name: rate_limited
error_category: rate_limit
status: 429
ray_id: 9d99a39dc992d168
timestamp: 2026-03-09T11:11:28Z
zone: <YOUR_DOMAIN>
cloudflare_error: true
retryable: true
retry_after: 30
owner_action_required: false
---

# Error 1015: You are being rate limited

## What Happened

You are being rate-limited by the website owner's configuration.

## What You Should Do

**Wait and retry.** This block is transient. Wait at least 30 seconds, then retry with exponential backoff.

Recommended approach:

1. Wait 30 seconds before your next request
2. If rate-limited again, double the wait time (60s, 120s, etc.)
3. If rate-limiting persists after 5 retries, stop and reassess your request pattern

---

This error was generated by Cloudflare on behalf of the website owner.
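The retry guidance in the rate-limit payload translates into a small backoff schedule. A minimal sketch, where the `backoff_delays` name is hypothetical and the defaults mirror the `retry_after: 30` value and the five-retry limit from the example:

```python
from typing import Iterator


def backoff_delays(retry_after: int = 30, max_retries: int = 5) -> Iterator[int]:
    """Yield wait times in seconds: start at retry_after, double each retry."""
    delay = retry_after
    for _ in range(max_retries):
        yield delay
        delay *= 2


# Wait times for the example payload, one per retry attempt
print(list(backoff_delays(30, 5)))
```

Once the generator is exhausted, the agent has used its five retries and should stop and reassess its request pattern rather than keep polling.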

Ten Error Categories with Explicit Actions

Every 1xxx error maps to an error_category. That turns error handling into routing logic instead of brittle per-page parsing:

| Category | What it means | What the agent should do |
|---|---|---|
| access_denied | Intentional block: IP, ASN, geo, firewall rule | Do not retry. Contact site owner if unexpected. |
| rate_limit | Request rate exceeded | Back off. Retry after retry_after seconds. |
| dns | DNS resolution failure at the origin | Do not retry. Report to site owner. |
| config | Configuration error: CNAME, tunnel, host routing | Do not retry (usually). Report to site owner. |
| tls | TLS version or cipher mismatch | Fix TLS client settings. Do not retry as-is. |
| legal | DMCA or regulatory block | Do not retry. This is a legal restriction. |
| worker | Cloudflare Workers runtime error | Do not retry. Site owner must fix the script. |
| rewrite | Invalid URL rewrite output | Do not retry. Site owner must fix the rule. |
| snippet | Cloudflare Snippets error | Do not retry. Site owner must fix Snippets config. |
| unsupported | Unsupported method or deprecated feature | Change the request. Do not retry as-is. |

Two fields make this operationally useful:

- retryable answers whether a retry can succeed

- owner_action_required answers whether the problem must be escalated
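Because the category set is small and fixed, routing can be a dictionary lookup. The sketch below maps the ten categories to agent actions; the action labels (`stop`, `backoff`, `report`, `fix_client`, `change_request`) and the `route_error` function are illustrative names, not part of Cloudflare's payload.

```python
# Category -> agent action, following the category table.
# Action labels are hypothetical names chosen for this sketch.
CATEGORY_ACTIONS = {
    "access_denied": "stop",
    "rate_limit": "backoff",
    "dns": "report",
    "config": "report",
    "tls": "fix_client",
    "legal": "stop",
    "worker": "report",
    "rewrite": "report",
    "snippet": "report",
    "unsupported": "change_request",
}


def route_error(payload: dict) -> str:
    """Pick an action from error_category, falling back to the boolean flags."""
    action = CATEGORY_ACTIONS.get(payload.get("error_category"))
    if action is not None:
        return action
    # Unknown or missing category: rely on the two operational fields
    if payload.get("retryable"):
        return "backoff"
    return "report" if payload.get("owner_action_required") else "stop"
```

Falling back to `retryable` and `owner_action_required` keeps the agent's behavior sensible if a category appears that the table does not yet cover.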

Parsing Structured Errors in Code

A minimal Python handler for Cloudflare structured errors:

import json
import time

import yaml  # PyYAML (third-party)


def handle_cloudflare_error(response_text: str, content_type: str) -> str:
    """Return 'retry', 'escalate', or 'fail' for a Cloudflare structured error."""
    meta = {}
    if "json" in content_type:
        # Covers both application/json and application/problem+json
        meta = json.loads(response_text)
    elif response_text.startswith("---\n"):
        # Markdown: the YAML frontmatter sits between the first two '---' lines
        meta = yaml.safe_load(response_text.split("---\n")[1]) or {}

    if meta.get("retryable"):
        # Honor the server's wait hint before the caller retries
        time.sleep(int(meta.get("retry_after", 30)))
        return "retry"
    return "escalate" if meta.get("owner_action_required") else "fail"

This is the key shift: agents are no longer inferring intent from HTML copy. They're executing explicit policy from structured fields.

How to Use It

Send a structured Accept header when making requests:

# Markdown response
curl -s --compressed -H "Accept: text/markdown" -A "TestAgent/1.0" \
  "https://example.com/cdn-cgi/error/1015"

# JSON response
curl -s --compressed -H "Accept: application/json" -A "TestAgent/1.0" \
  "https://example.com/cdn-cgi/error/1015" | jq .

# RFC 9457 Problem Details
curl -s --compressed -H "Accept: application/problem+json" -A "TestAgent/1.0" \
  "https://example.com/cdn-cgi/error/1015" | jq .

Wildcard-only requests (*/*) do not signal a structured preference; clients must explicitly request Markdown or JSON. The behavior is deterministic — the first explicit structured type wins.
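The selection rule can be modeled as a single pass over the Accept header. This is a sketch of the described behavior, not Cloudflare's implementation: the `pick_error_format` function is hypothetical, and it assumes "first" means header order and that q-values are ignored.

```python
# Media types that signal a structured preference, per the list above
STRUCTURED_TYPES = {
    "text/markdown": "markdown",
    "application/json": "json",
    "application/problem+json": "problem+json",
}


def pick_error_format(accept_header: str) -> str:
    """Return the first explicitly requested structured format, else 'html'."""
    for part in accept_header.split(","):
        # Drop parameters such as ';q=0.9' and normalize case
        media_type = part.split(";")[0].strip().lower()
        if media_type in STRUCTURED_TYPES:
            return STRUCTURED_TYPES[media_type]
    # Wildcards and unrelated types do not signal a structured preference
    return "html"
```

Under these assumptions, `text/markdown, */*` selects Markdown, while a bare `*/*` falls through to the default HTML page.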

Gotchas

Default-secure posture introduces false positives. If Cloudflare can't find the prompt in known locations, it scans the entire request body. This can trigger PII detection on fields like $.customer_name that don't feed directly to the LLM. Custom JSONPath expressions (coming soon) will solve this.

Wildcard Accept headers return HTML. Agents must explicitly send Accept: text/markdown or Accept: application/json. Bare */* returns the default HTML experience.

Not all errors are retryable. DNS failures, configuration errors, and legal blocks (the dns, config, and legal categories) tell the agent not to retry; DNS and configuration problems should be reported to the site owner instead. Only rate limiting (1015) and similar transient errors have retryable: true.

Intentional blocks are not transient. Error 1020 (access denied) and 1009 (geo restriction) have retryable: false and owner_action_required: true. Do not retry; contact the site owner.

Endpoint discovery requires sufficient valid traffic. Cloudflare's behavioral detection needs enough requests to establish patterns. Newly deployed LLM endpoints may not appear immediately in the discovery dashboard.

Wrap-up

AI Security for Apps is now generally available for Cloudflare Enterprise customers, with endpoint discovery free for all plans. The platform sits inline as a reverse proxy — no SDKs, no configuration changes, no maintenance overhead. It discovers where AI lives in your estate, detects malicious or off-policy behavior at the edge, and mitigates threats via the WAF rules engine you already use.

The addition of RFC 9457 structured error responses turns error pages into machine-readable policy. Agents receive explicit guidance: retry or stop, wait and back off, escalate or reroute. The payload is 98% smaller, the token cost is 98% lower, and the operational fields (retryable, retry_after, owner_action_required) enable deterministic control flow.

This is the first layer of the agent stack. Cloudflare is building AI Gateway for routing and observability, Workers AI for inference, and identity primitives for agent operations. The goal: structured, secure, efficient agent interactions at Internet scale.

If you're operating LLM-powered applications in production, start with endpoint discovery. Most organizations find shadow AI deployments they didn't know existed — product search endpoints, internal RAG bots, customer support chatbots exposed to the internet. Inventory your AI endpoints first, then layer on detection and mitigation policies that match your risk tolerance.

For agent developers: ship structured Accept headers as your default. Use Accept: text/markdown, */* for model-first workflows and Accept: application/json, */* for typed control flow. Treat bare */* as legacy fallback. The web is becoming agent-native; your clients should speak the language.
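On the client side, this can be as small as a request factory that always sets an explicit Accept header. A minimal sketch using only the standard library; the `build_agent_request` helper and the `TestAgent/1.0` user agent are placeholders, and the request is constructed but not sent.

```python
import urllib.request

# Explicit structured type first, wildcard as fallback
ACCEPT_HEADERS = {
    "markdown": "text/markdown, */*",   # model-first workflows
    "json": "application/json, */*",    # typed control flow
}


def build_agent_request(url: str, prefer: str = "json") -> urllib.request.Request:
    """Build a request that asks for structured errors before falling back."""
    headers = {
        "Accept": ACCEPT_HEADERS[prefer],
        "User-Agent": "TestAgent/1.0",  # placeholder agent name
    }
    return urllib.request.Request(url, headers=headers)


request = build_agent_request("https://example.com/", prefer="markdown")
print(request.get_header("Accept"))
```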
