Agentic AI Workloads on Kubernetes

Introduction

AI agents are no longer stateless request/response services. They run multi-step reasoning loops, spawn sub-agents in parallel, maintain conversation state across hours, and call external tools autonomously. A single user request can trigger dozens of LLM calls, tool invocations, and intermediate reasoning steps before producing a result.

Standard Kubernetes primitives struggle with this workload pattern. Out of the box, HPA reacts to CPU and memory, but agent demand is event-driven: queue depth, request rate, or the number of pending agent tasks. Deployments treat pods as disposable, but agents need persistent memory. And when an agent can autonomously modify databases or call external APIs, you need admission-time guardrails, not just RBAC.

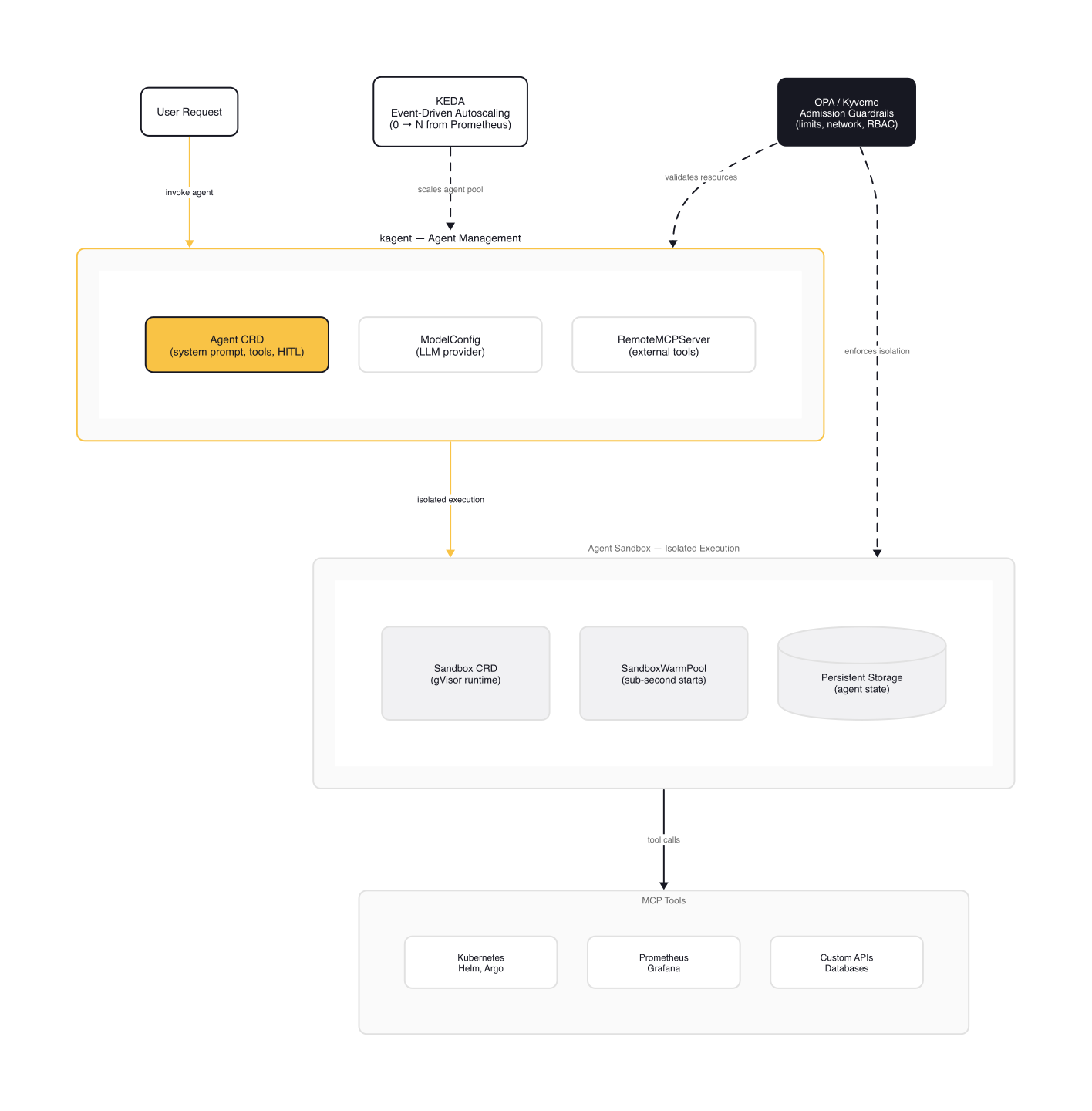

Two new Kubernetes-native projects address this directly. kagent (CNCF Sandbox) provides declarative CRDs for managing AI agents, their tools, and LLM configurations. Agent Sandbox (kubernetes-sigs) introduces a purpose-built CRD for isolated, stateful, singleton workloads designed for agent code execution. Combined with KEDA for event-driven autoscaling and OPA/Kyverno for policy enforcement, these tools form the core stack for production agentic workloads.

The Agent Stack

kagent: Declarative Agent Management

kagent is an open-source framework created at Solo.io in 2025, now a CNCF Sandbox project. It brings agent management into the Kubernetes control plane with custom resources.

The architecture has three layers. A Go-based controller watches custom resources. An engine runs the agent conversation loop using either the Python ADK or Go ADK runtime. And MCP tools let agents invoke external systems.

The core CRD is the Agent resource:

```yaml
# kagent Agent CRD with MCP tools and HITL approval
# Adapted from: https://kagent.dev/docs/kagent/concepts/agents
apiVersion: kagent.dev/v1alpha2
kind: Agent
metadata:
  name: k8s-troubleshooter
  namespace: kagent
spec:
  type: Declarative
  declarative:
    runtime: go
    modelConfig: default-model-config
    systemMessage: |
      You are a Kubernetes troubleshooting agent.
      Diagnose connectivity issues, check pod health,
      and suggest remediation steps.
    tools:
      - type: McpServer
        mcpServer:
          name: kagent-tool-server
          kind: RemoteMCPServer
          apiGroup: kagent.dev
          toolNames:
            - k8s_get_resources
            - k8s_describe_resource
            - k8s_delete_resource
            - k8s_apply_manifest
          requireApproval:
            - k8s_delete_resource
            - k8s_apply_manifest
      - type: Agent
        agent:
          name: promql-agent
    context:
      compaction:
        compactionInterval: 5
```

Several things to note. The runtime: go field selects the Go ADK, which starts in ~2 seconds vs ~15 seconds for the Python ADK. The requireApproval list gates destructive tools behind human approval in the UI. And context.compaction automatically summarizes older messages when conversations exceed the LLM context window.

kagent ships with built-in MCP tools for Kubernetes, Istio, Helm, Argo, Prometheus, Grafana, and Cilium. You can reference other agents as tools too: the promql-agent above is called whenever the troubleshooter needs a PromQL query.

Agent Sandbox: Isolated Execution Environments

Agent Sandbox is a kubernetes-sigs project announced at KubeCon NA 2025 by Google. It fills a gap that neither Deployments nor StatefulSets address. The goal: manage a single, isolated, stateful pod with lifecycle controls like hibernation and warm pools.

```yaml
# Agent Sandbox CRD: isolated singleton workload
# Adapted from: https://github.com/kubernetes-sigs/agent-sandbox
apiVersion: agents.x-k8s.io/v1alpha1
kind: Sandbox
metadata:
  name: code-executor
spec:
  podTemplate:
    spec:
      runtimeClassName: gvisor
      containers:
        - name: agent-runtime
          image: agent-runtime:latest
          securityContext:
            privileged: false
            allowPrivilegeEscalation: false
            capabilities:
              drop:
                - ALL
```

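The runtimeClassName: gvisor setting runs each sandbox under gVisor, a userspace kernel that intercepts the container's syscalls instead of passing them straight to the host kernel. It refers to a RuntimeClass object named gvisor, which must already exist in the cluster. Kata Containers is also supported for lightweight VM-based isolation.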

Agent Sandbox includes three extension CRDs:

- SandboxTemplate for reusable sandbox configurations

- SandboxClaim for requesting sandboxes from templates (similar to PVC/PV binding)

- SandboxWarmPool for pre-warmed pools that deliver sub-second allocation, significantly faster than cold starts
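To illustrate why a warm pool shortens allocation, here is a toy Python model of the idea. It is a conceptual sketch only: the WarmPool class and its methods are invented for this example and do not mirror the Agent Sandbox controller or its API.

```python
from collections import deque
from itertools import count


class WarmPool:
    """Toy model of a warm pool: hand out pre-started sandboxes, then refill."""

    def __init__(self, size):
        self._ids = count()
        self.size = size
        self.ready = deque(self._start() for _ in range(size))

    def _start(self):
        # Stands in for the slow part: scheduling a pod and booting the runtime.
        return f"sandbox-{next(self._ids)}"

    def claim(self):
        """Return (sandbox name, served_from_pool)."""
        if self.ready:
            return self.ready.popleft(), True
        return self._start(), False  # pool exhausted: pay the cold-start cost

    def refill(self):
        # A controller would do this in the background after each claim.
        while len(self.ready) < self.size:
            self.ready.append(self._start())


pool = WarmPool(size=2)
claims = [pool.claim() for _ in range(3)]
print(claims)  # [('sandbox-0', True), ('sandbox-1', True), ('sandbox-2', False)]
```

The third claim misses the pool and has to start a sandbox from scratch, which is the case a correctly sized pool is meant to make rare.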

warning: Agent Sandbox is for singleton isolated workloads, not replicated stateful services. Each Sandbox manages exactly one pod. If you need N replicas with persistent storage, use a StatefulSet. If you need isolated code execution per task, use Agent Sandbox.

Event-Driven Autoscaling with KEDA

By default, HPA watches CPU and memory. Agents don't saturate CPU while waiting for LLM responses. They saturate queues, rack up pending requests, and spike external metric counters. You need KEDA (Kubernetes Event-Driven Autoscaling) to scale based on what actually matters.

KEDA operates in two distinct phases:

- Activation (0↔1): The KEDA operator monitors the scaler's IsActive function. When the metric is strictly greater than the activationThreshold, KEDA creates the first replica. When it drops back, KEDA scales to zero.

- Scaling (1↔N): Once active, the HPA controller takes over. It scales based on the threshold value using standard HPA algorithms.
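The two phases can be sketched in a few lines of Python. This is an illustrative model rather than KEDA's code: the desired_replicas function is made up for this example, it covers only the scale-to-zero case (minReplicaCount: 0), it assumes an AverageValue-style target where the HPA asks for ceil(metric / threshold) replicas, and it ignores cooldown and HPA tolerance.

```python
import math


def desired_replicas(metric, threshold, activation_threshold, max_replicas):
    """Illustrative two-phase scaling decision (not KEDA's actual code)."""
    # Phase 1, activation (0 <-> 1): active only when the metric is
    # strictly greater than the activation threshold.
    if metric <= activation_threshold:
        return 0
    # Phase 2, scaling (1 <-> N): HPA-style ceil(metric / target),
    # at least one replica while active, capped at the maximum.
    return max(1, min(math.ceil(metric / threshold), max_replicas))


# Example values chosen for illustration.
for metric in (3, 3.5, 120, 5000):
    print(metric, desired_replicas(metric, threshold=50,
                                   activation_threshold=3, max_replicas=20))
# 3 0
# 3.5 1
# 120 3
# 5000 20
```

A metric of exactly 3 leaves the workload at zero, while 3.5 is enough to start the first replica.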

Here's a ScaledObject using the Prometheus scaler:

```yaml
# KEDA ScaledObject with Prometheus scaler
# Adapted from: https://keda.sh/docs/2.19/scalers/prometheus/
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: agent-scaler
  namespace: agentic-ai
spec:
  scaleTargetRef:
    kind: StatefulSet        # defaults to Deployment when omitted
    name: agent-pool
  minReplicaCount: 0
  maxReplicaCount: 20
  cooldownPeriod: 600
  triggers:
    - type: prometheus
      metadata:
        serverAddress: http://prometheus.monitoring:9090
        query: |
          sum(rate(agent_requests_total{service="agent-pool"}[5m]))
        threshold: '50'
        activationThreshold: '3'
        authModes: "bearer"  # tells the scaler to use the bearer token from the TriggerAuthentication
      authenticationRef:
        name: prometheus-auth
```

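The activationThreshold: '3' means KEDA activates the workload when the metric is strictly greater than 3 (not at 3). The cooldownPeriod: 600 makes KEDA wait 10 minutes after the last trigger reported active before scaling the workload back to zero, which avoids repeated cold starts under bursty agent traffic. It applies only to that final step to zero; scale-down between N and 1 replicas follows the HPA's own behavior settings, which KEDA exposes under advanced.horizontalPodAutoscalerConfig.behavior.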

Once deployed, you can verify the scaler is working:

```console
$ kubectl get scaledobjects -n agentic-ai
NAME           SCALETARGETKIND       SCALETARGETNAME   MIN   MAX   TRIGGERS     READY   ACTIVE   AGE
agent-scaler   apps/v1.StatefulSet   agent-pool        0     20    prometheus   True    True     3d

$ kubectl get pods -n agentic-ai -l app=agent-pool
NAME           READY   STATUS    RESTARTS   AGE
agent-pool-0   1/1     Running   0          2h
agent-pool-1   1/1     Running   0          2h
agent-pool-2   1/1     Running   0          45m
```

Authentication is handled through a separate TriggerAuthentication CRD:

```yaml
# TriggerAuthentication for Prometheus with Bearer token
# Adapted from: https://keda.sh/docs/2.19/scalers/prometheus/
apiVersion: v1
kind: Secret
metadata:
  name: prometheus-secret
  namespace: agentic-ai
data:
  bearerToken: "dG9rZW4tZ29lcy1oZXJl"
---
apiVersion: keda.sh/v1alpha1
kind: TriggerAuthentication
metadata:
  name: prometheus-auth
  namespace: agentic-ai
spec:
  secretTargetRef:
    - parameter: bearerToken
      name: prometheus-secret
      key: bearerToken
```

For composite scaling decisions, KEDA supports scaling modifiers with formula expressions:

```yaml
# ScaledObject scaling modifiers (partial spec: add to a full ScaledObject)
# Adapted from: https://keda.sh/docs/2.19/concepts/scaling-deployments/
advanced:
  scalingModifiers:
    formula: "(queue_depth + request_rate) / 2"
    target: "10"
    activationTarget: "2"
triggers:
  - type: prometheus
    name: request_rate
    metadata:
      query: sum(rate(agent_requests_total[5m]))
  - type: rabbitmq
    name: queue_depth
    metadata:
      queueName: agent-tasks
      # The rabbitmq scaler takes mode/value rather than threshold.
      mode: QueueLength
      value: '5'
```

This averages queue depth and request rate into a single metric, giving you more nuanced scaling behavior than either metric alone.
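As a worked example of that formula, the sketch below computes the composite metric and the replica count an HPA-style calculation would derive from it. The input values are invented for illustration, the helper names are hypothetical, and the ceil(metric / target) step assumes the composite metric is treated as an AverageValue target.

```python
import math


def composite_metric(queue_depth, request_rate):
    # Same expression as the formula field in the scaling modifiers spec.
    return (queue_depth + request_rate) / 2


def replicas_for(metric, target):
    # HPA-style calculation for an AverageValue target.
    return math.ceil(metric / target)


metric = composite_metric(queue_depth=40, request_rate=12)  # (40 + 12) / 2
print(metric, replicas_for(metric, target=10))  # 26.0 3
```

Because the two inputs have different units (messages and requests per second), it is worth checking that their typical magnitudes are comparable before averaging them; otherwise one of them dominates the result.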

Long-Running Agent Executions

Agents can run reasoning loops for minutes or hours. When HPA decides to scale down, it may terminate a pod that's mid-reasoning. KEDA's documentation recommends two approaches:

Lifecycle hooks: Use preStop hooks and terminationGracePeriodSeconds to delay termination until the current task completes. The pod stays in Terminating state during this grace period.

Jobs instead of Deployments: For discrete agent tasks with clear start/end points, use KEDA's ScaledJob CRD. Each job runs to completion before being cleaned up.

note: For agents that maintain long conversations, lifecycle hooks are the better choice. For batch-style agent tasks (generate a report, process a dataset), Jobs are cleaner. Don't force long-running conversational agents into Jobs; they don't map well to the run-to-completion model.

Choosing the Right Workload Primitive

The right workload primitive depends on state requirements, isolation needs, and execution patterns.

| Primitive | Use case | State | Replicas | Isolation |
|---|---|---|---|---|
| Deployment | Stateless tool agents, API gateways | None | N | Standard container |
| StatefulSet | Agent pools with persistent memory | Per-pod PVC | N | Standard container |
| Agent Sandbox | Untrusted code execution, isolated runtimes | Per-sandbox PV | 1 (singleton) | gVisor/Kata |
| Job / CronJob | Discrete agent tasks, scheduled runs | None | 1 per task | Standard container |

For StatefulSets with persistent agent state, use volumeClaimTemplates so each pod gets its own PVC:

```yaml
# StatefulSet with per-pod persistent storage for agent state
# Adapted from: https://kubernetes.io/docs/concepts/workloads/controllers/statefulset/
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: agent-pool
  namespace: agentic-ai
spec:
  serviceName: agent-pool
  replicas: 3
  selector:
    matchLabels:
      app: agent-pool
  template:
    metadata:
      labels:
        app: agent-pool
    spec:
      containers:
        - name: agent
          image: agent-runtime:latest
          ports:
            - containerPort: 8080
          volumeMounts:
            - name: agent-state
              mountPath: /data/state
          lifecycle:
            preStop:
              exec:
                command: ["/bin/sh", "-c", "while curl -sf http://localhost:8080/healthz; do sleep 5; done"]
      terminationGracePeriodSeconds: 300
  volumeClaimTemplates:
    - metadata:
        name: agent-state
      spec:
        accessModes: ["ReadWriteOnce"]
        storageClassName: fast-ssd
        resources:
          requests:
            storage: 10Gi
```

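The terminationGracePeriodSeconds: 300 gives agents 5 minutes to complete their current task before shutdown. The preStop hook polls an HTTP endpoint and keeps the pod alive for as long as that endpoint returns success. This only works if /healthz reports success while a task is in flight and fails once the agent is idle; a conventional health endpoint that stays green would hold every pod for the full grace period. Kubernetes sends SIGTERM after the hook exits, so the agent process receives the signal only after its current task finishes or the grace period expires.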
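On the application side, the agent process still has to treat SIGTERM as "finish the current task, then exit". The following is a minimal Python sketch of that pattern. The Worker class and the step functions are hypothetical stand-ins for a real agent loop, and the demo simulates the signal by calling the handler directly instead of sending a real SIGTERM.

```python
import signal


class Worker:
    """Hypothetical agent loop that drains gracefully on SIGTERM."""

    def __init__(self, tasks):
        self.tasks = list(tasks)
        self.completed = []
        self.stopping = False

    def request_stop(self, signum=None, frame=None):
        # Signal handler: only set a flag so the running task is not interrupted.
        self.stopping = True

    def install(self):
        # Call once from the main thread of the real process.
        signal.signal(signal.SIGTERM, self.request_stop)

    def run(self):
        # Stop picking up new tasks once a stop has been requested.
        while self.tasks and not self.stopping:
            task = self.tasks.pop(0)
            task(self)
            self.completed.append(task.__name__)
        return self.completed


def step_one(worker):
    pass


def step_two(worker):
    worker.request_stop()  # simulate SIGTERM arriving while this task runs


def step_three(worker):
    pass


worker = Worker([step_one, step_two, step_three])
completed = worker.run()
print(completed)  # ['step_one', 'step_two']
```

The task that was running when the stop request arrived still completes, and step_three stays queued, so it can be picked up by another replica or after a restart.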

Inter-Agent Communication

When running multiple agent types (planner, executor, reviewer), deploy each as a separate Deployment or StatefulSet. A single pod running all agents is a single point of failure. For production multi-agent systems, a service mesh (Istio, Linkerd) adds mTLS, retries, and distributed tracing. That tracing matters: agent behavior is non-deterministic, and you need to follow the full chain from user request through planner decisions to tool invocations when debugging.

Security and Policy Enforcement

Agents act autonomously. An agent with broad permissions can modify databases, call external APIs, or create Kubernetes resources. Security requires defense at multiple layers.

Workload Identity and RBAC

Every agent gets its own ServiceAccount with minimal permissions. No shared credentials, no default service accounts.

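```yaml
# Minimal RBAC for an agent ServiceAccount
# Adapted from: https://kubernetes.io/docs/reference/access-authn-authz/rbac/
apiVersion: v1
kind: ServiceAccount
metadata:
  name: troubleshooter-agent
  namespace: agentic-ai
---
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: troubleshooter-role
  namespace: agentic-ai
rules:
  - apiGroups: [""]
    resources: ["pods", "pods/log", "services", "events"]
    verbs: ["get", "list", "watch"]
  - apiGroups: ["apps"]
    resources: ["deployments", "statefulsets"]
    verbs: ["get", "list"]
---
# Without a RoleBinding the ServiceAccount has none of the permissions above.
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: troubleshooter-binding
  namespace: agentic-ai
subjects:
  - kind: ServiceAccount
    name: troubleshooter-agent
    namespace: agentic-ai
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: troubleshooter-role
```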

This troubleshooter agent can read pods, logs, services, and events, but cannot create, update, or delete anything. The principle: start with read-only access, expand only when a specific tool requires it.
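To make the effect of those rules concrete, here is a small Python model of how a rule list like this one is evaluated. It is a simplification written for illustration: the is_allowed helper is hypothetical and ignores resourceNames, wildcards, and ClusterRoles.

```python
RULES = [
    {"apiGroups": [""], "resources": ["pods", "pods/log", "services", "events"],
     "verbs": ["get", "list", "watch"]},
    {"apiGroups": ["apps"], "resources": ["deployments", "statefulsets"],
     "verbs": ["get", "list"]},
]


def is_allowed(verb, api_group, resource, rules=RULES):
    # RBAC is purely additive: a request is allowed if any rule matches it.
    return any(
        api_group in rule["apiGroups"]
        and resource in rule["resources"]
        and verb in rule["verbs"]
        for rule in rules
    )


print(is_allowed("list", "", "pods"))               # True
print(is_allowed("watch", "apps", "deployments"))   # False
print(is_allowed("delete", "", "pods"))             # False
```

On a real cluster, kubectl auth can-i with --as=system:serviceaccount:agentic-ai:troubleshooter-agent gives the authoritative answer for the same questions.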

Runtime Sandboxing

For agents executing untrusted code (user-provided scripts, LLM-generated code), use Agent Sandbox with gVisor or Kata Containers. gVisor intercepts syscalls in userspace, providing kernel-level isolation without full VM overhead. Kata Containers run each container in a lightweight VM for even stronger isolation.

Policy Engines: OPA and Kyverno

OPA/Gatekeeper (CNCF Graduated) and Kyverno (CNCF Incubating) enforce policies at admission time, before resources are persisted to the cluster.

Here's a Kyverno policy requiring resource limits on all agent pods:

```yaml
# Kyverno ClusterPolicy enforcing resource limits on agent workloads
# Adapted from: https://nirmata.com/2025/02/07/kubernetes-policy-comparison-kyverno-vs-opa-gatekeeper/
apiVersion: kyverno.io/v1
kind: ClusterPolicy
metadata:
  name: require-agent-resource-limits
spec:
  validationFailureAction: Enforce
  rules:
    - name: check-resource-limits
      match:
        any:
          - resources:
              kinds:
                - Pod
              namespaceSelector:
                matchLabels:
                  workload-type: agentic-ai
      validate:
        message: "Agent pods must specify CPU and memory limits."
        pattern:
          spec:
            containers:
              - resources:
                  limits:
                    memory: "?*"
                    cpu: "?*"
```

And a NetworkPolicy enforcing default-deny with explicit allow for agent-to-LLM traffic:

```yaml
# Default-deny NetworkPolicy with explicit allow for LLM access
# Adapted from: https://kubernetes.io/docs/concepts/services-networking/network-policies/
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: agent-network-policy
  namespace: agentic-ai
spec:
  podSelector:
    matchLabels:
      app: agent-pool
  policyTypes:
    - Egress
  egress:
    - to:
        - namespaceSelector:
            matchLabels:
              # Label that Kubernetes sets automatically on every namespace;
              # a plain "name" label only matches if you added it yourself.
              kubernetes.io/metadata.name: llm-serving
      ports:
        - protocol: TCP
          port: 8080
    - to:
        - namespaceSelector:
            matchLabels:
              kubernetes.io/metadata.name: kube-system
      ports:
        - protocol: UDP
          port: 53
```

This restricts egress from agent pods to DNS resolution and the LLM serving namespace. No direct internet access, no outbound cross-namespace communication unless explicitly allowed. Ingress is unaffected, because the policy lists only Egress in policyTypes.

tip: Start with 3-5 critical policies: resource limits, no privileged containers, network isolation, namespace restrictions, and image allowlists. Test in audit mode first (validationFailureAction: Audit in Kyverno, enforcementAction: dryrun in Gatekeeper), then enforce after validating there are no false positives.

Gotchas

KEDA Activation Threshold Semantics

activationThreshold: 0 means the scaler activates when the metric value is strictly greater than 0. Set it to 0 and the workload scales from zero as soon as the metric rises above 0. Set it to 5 and the workload stays at zero while the metric is 5 or lower; with a queue-length metric, the sixth pending message is the one that wakes it. This is "greater than," not "greater than or equal to." Getting this wrong means agents either never wake up or never sleep.

HPA Oscillation with Bursty Traffic

Agent traffic comes in waves. A batch of users triggers agents simultaneously, KEDA scales to 15 replicas, the burst processes, and HPA scales back to 2. The next wave hits and the cycle repeats. This sawtooth pattern wastes resources and creates startup latency.

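Note that cooldownPeriod only delays the final scale-to-zero step, so raising it to 600-900 seconds reduces cold starts but does not stop the 15-to-2 sawtooth. To dampen that, lengthen the HPA scale-down stabilization window (advanced.horizontalPodAutoscalerConfig.behavior.scaleDown.stabilizationWindowSeconds, 300 seconds by default). For more control, use scaling modifiers with dampening formulas that smooth out metric spikes.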
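One way to see why a longer scale-down window reduces the sawtooth: when scaling down, the HPA applies the highest replica recommendation it has seen within its stabilization window. The Python sketch below models that rule on an invented series of recommendations; the stabilized function is a simplified illustration, with the window expressed in evaluation ticks rather than seconds.

```python
def stabilized(recommendations, window):
    """Apply an HPA-style scale-down stabilization window.

    recommendations: desired replica counts, one per evaluation tick.
    window: number of most recent ticks to consider, including the current one.
    """
    applied = []
    for i in range(len(recommendations)):
        start = max(0, i - window + 1)
        # Use the highest recommendation seen within the window, so
        # scale-ups apply immediately and scale-downs are delayed.
        applied.append(max(recommendations[start:i + 1]))
    return applied


# Two bursts separated by a quiet period (values invented for illustration).
bursty = [2, 15, 15, 2, 2, 2, 15, 15, 2, 2]
print(stabilized(bursty, window=1))  # [2, 15, 15, 2, 2, 2, 15, 15, 2, 2]
print(stabilized(bursty, window=5))  # [2, 15, 15, 15, 15, 15, 15, 15, 15, 15]
```

With the longer window the replicas stay up through the quiet gap, trading some idle capacity for the absence of a second scale-up and its startup latency.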

kagent Go vs Python Runtime

The Go ADK starts in ~2 seconds vs ~15 seconds for Python. If your agents scale from zero frequently, those 13 seconds matter. But the Python runtime integrates with LangGraph, CrewAI, and Google ADK-native features. Choose Go when cold-start latency drives user experience. Choose Python when you need framework integrations.

StatefulSet vs Agent Sandbox

Agent Sandbox manages exactly one pod per sandbox. It's designed for isolated code execution environments, not replicated agent pools. If you need three replicas of the same agent with persistent storage, use a StatefulSet. If you need 100 isolated sandboxes for running user-submitted code, use Agent Sandbox with SandboxWarmPool.

OPA Policy Complexity Creep

It's tempting to write policies for every edge case on day one. Don't. Start with the five critical policies listed above. Run in audit mode for a week. Review the violations. Then add policies incrementally based on real incidents, not hypothetical risks.

Wrap-up

kagent, Agent Sandbox, KEDA, and OPA/Kyverno form the production stack for agentic AI workloads on Kubernetes. kagent makes agents declarative. Agent Sandbox provides the isolation. KEDA scales based on actual demand. Policy engines enforce guardrails at admission time.

The landscape is converging fast. Agent Sandbox launched at KubeCon NA 2025. kagent entered CNCF Sandbox. KEDA 2.19 added OpenTelemetry metrics. These projects are building toward an agent-native platform layer where agents, tools, and LLM providers are first-class Kubernetes citizens.

Try deploying a kagent agent with KEDA scaling in a test cluster. The CRDs are straightforward, the documentation is solid, and you'll quickly see where your existing Kubernetes patterns need adaptation. The shift from stateless microservices to autonomous agent workloads is the next infrastructure challenge, and the tools to meet it are already here.
