Production Cost Optimization Patterns with Karpenter

Your Karpenter NodePools are configured. Pods get the right instance types. But check your cluster utilization after business hours: nodes sitting at 30% CPU, Spot instances locked to expensive types long after cheaper capacity opened up, pods requesting 2Gi that use 400Mi. Provisioning is half the cost equation. The other half is what happens after pods scale down, workloads shift, and Spot prices fluctuate.

This article covers the patterns that close that gap: Spot instance diversification, Spot-to-Spot consolidation, the On-Demand/Spot split, right-sizing pod requests, and the safety nets that keep costs predictable. These patterns compound. Tinybird cut AWS compute costs by 20% overall and 90% on CI/CD workloads. Grover runs 80% of production on Spot. The starting point for all of them is the same: broad instance flexibility and accurate pod resource requests.

Spot Instance Diversification

Karpenter uses the price-capacity-optimized (PCO) allocation strategy when launching Spot instances. PCO balances two competing goals: lowest price and lowest interruption risk. It selects from the deepest capacity pools among your eligible instance types, then picks the cheapest option within those pools. The broader your instance selection, the more pools PCO can draw from, and the better the price-availability tradeoff.

A narrow instance selection defeats this. If you constrain your NodePool to three instance types, PCO has almost no room to optimize. Worse, if all three types get reclaimed simultaneously, your pods sit in Pending until capacity returns.

The standard pattern uses category-based selection across multiple families and sizes:

# nodepool.yaml
apiVersion: karpenter.sh/v1
kind: NodePool
metadata:
  name: default
spec:
  template:
    spec:
      requirements:
        - key: karpenter.sh/capacity-type
          operator: In
          values: ["spot"]
        - key: karpenter.k8s.aws/instance-category
          operator: In
          values: ["c", "m", "r"]
        - key: karpenter.k8s.aws/instance-size
          operator: NotIn
          values: ["nano", "micro", "small", "medium"]
        - key: karpenter.k8s.aws/instance-hypervisor
          operator: In
          values: ["nitro"]
  limits:
    cpu: 100
    memory: 100Gi
  disruption:
    consolidationPolicy: WhenEmptyOrUnderutilized

This configuration pulls from compute-optimized (c), general-purpose (m), and memory-optimized (r) families while excluding the smallest sizes. The nitro hypervisor filter restricts to modern instance types. The result: dozens of eligible instance types across multiple availability zones, giving PCO a deep pool to work with.

You can enforce minimum diversity with the minValues constraint. This guarantees Karpenter considers at least N distinct values for a given key before launching:

# nodepool.yaml (requirements section)
- key: karpenter.k8s.aws/instance-family
  operator: Exists
  minValues: 5

This prevents a scenario where scheduling constraints accidentally narrow the selection to a single family. If fewer than 5 families can satisfy the pod's requirements, Karpenter won't launch at all, surfacing the constraint problem early rather than launching into a shallow capacity pool.

Spread across availability zones matters too. Spot capacity pools are per-AZ, so a c6i.xlarge in us-east-1a is a different pool than c6i.xlarge in us-east-1b. Topology spread constraints in your Deployments handle this at the pod level, and Karpenter respects them when selecting nodes.
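
A minimal sketch of such a zone spread, assuming a Deployment whose pods carry the hypothetical label app: web. The topology key is the standard Kubernetes well-known zone label. Choosing DoNotSchedule over the softer ScheduleAnyway is an assumption about how strictly you want the spread enforced:

```yaml
# deployment.yaml (spec.template.spec section)
# Assumption: "app: web" is a placeholder label, not taken from the article.
topologySpreadConstraints:
  - maxSkew: 1                                # zones may differ by at most one pod
    topologyKey: topology.kubernetes.io/zone  # one topology domain per AZ
    whenUnsatisfiable: DoNotSchedule          # hard constraint; ScheduleAnyway is the soft form
    labelSelector:
      matchLabels:
        app: web
```

With a hard constraint like this, pending pods that cannot be placed in an under-represented zone give Karpenter a reason to launch capacity in that zone rather than stacking everything in one Spot pool.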

Spot-to-Spot Consolidation

Before Karpenter v0.34.0, Spot nodes had a limitation: they could be deleted when empty, but never replaced with a cheaper Spot instance. If you had four 24cpu pods on a 96cpu Spot node and three of them completed, the node sat at 25% utilization indefinitely. Karpenter's consolidation engine could delete it and move the remaining pod elsewhere, but it couldn't launch a smaller Spot replacement.

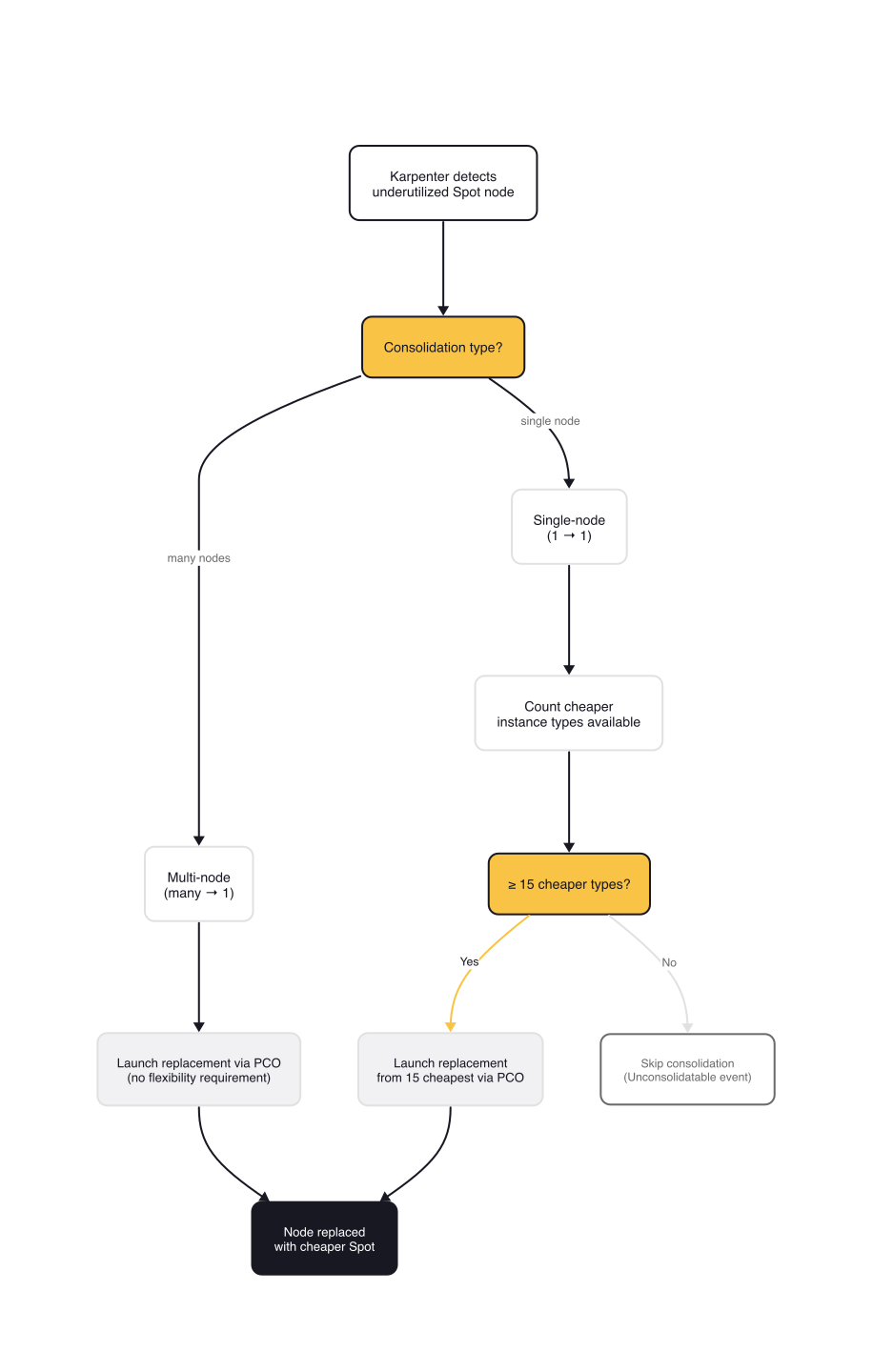

The challenge is what the Karpenter design documents call the "race to the bottom." On-Demand consolidation is straightforward: replace with the cheapest type that fits. For Spot, doing the same thing is dangerous. PCO deliberately avoids the cheapest instance type because it often has the highest interruption rate. If consolidation kept replacing nodes with cheaper options, it would walk down the PCO decision ladder until only the cheapest, most interruptible instance remained, triggering repeated interruptions.

The solution is the Minimum Flexibility design. Karpenter will replace a Spot node with a cheaper Spot node only if there are at least 15 instance types cheaper than the current one. This ensures the replacement launch request sent to PCO has enough diversity to balance price and availability, just like the initial launch did.

Key details of how this works:

- Single-node consolidation (1 node replaced by 1 cheaper node): requires 15+ cheaper instance types in the candidate set

- Multi-node consolidation (many nodes replaced by 1 node): has no flexibility requirement, because consolidating multiple nodes into one doesn't cause the laddering problem

- The feature requires the SpotToSpotConsolidation feature gate, enabled at install time:

# Helm install with Spot-to-Spot consolidation enabled
helm upgrade --install karpenter oci://public.ecr.aws/karpenter/karpenter \
  --set settings.featureGates.spotToSpotConsolidation=true

When consolidation fires, the Karpenter logs show the replacement decision with the candidate instance types:

# Karpenter controller logs (wrapped for readability)
{"level":"INFO","time":"2024-02-19T12:09:50.299Z","logger":"controller.disruption",
 "message":"disrupting via consolidation replace, terminating 1 candidates
 ip-10-0-12-181.eu-west-1.compute.internal/c6g.2xlarge/spot and replacing with
 spot node from types m6gd.xlarge, m5dn.large, c7a.xlarge, r6g.large, r6a.xlarge
 and 10 other(s)"}

When flexibility is insufficient, you get an Unconsolidatable event on the NodeClaim:

# kubectl describe nodeclaim
Events:
  Normal  Unconsolidatable  31s  karpenter  SpotToSpotConsolidation requires 15 cheaper instance type options than the current candidate to consolidate, got 1

This is your signal to broaden instance type selection. If you see this event across most of your nodes, your NodePool requirements are too narrow for Spot consolidation to work.

The On-Demand/Spot Split Pattern

Not every workload tolerates interruption. Stateful services, leader-elected controllers, and databases need On-Demand reliability. Stateless HTTP servers, batch jobs, and CI runners thrive on Spot pricing. The common pattern is splitting capacity: On-Demand for the critical baseline, Spot for everything else.
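
For a workload that must never land on Spot, one simple option is a nodeSelector on Karpenter's well-known capacity-type label. This is a minimal sketch, assuming the cluster has a NodePool that allows on-demand capacity and that the pod can otherwise schedule onto it:

```yaml
# statefulset.yaml (spec.template.spec section)
# Assumption: some NodePool in the cluster permits "on-demand" capacity
# and has no taints this pod fails to tolerate.
nodeSelector:
  karpenter.sh/capacity-type: on-demand
```

This pins individual workloads. It does not produce a ratio across replicas, which is what the split pattern addresses.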

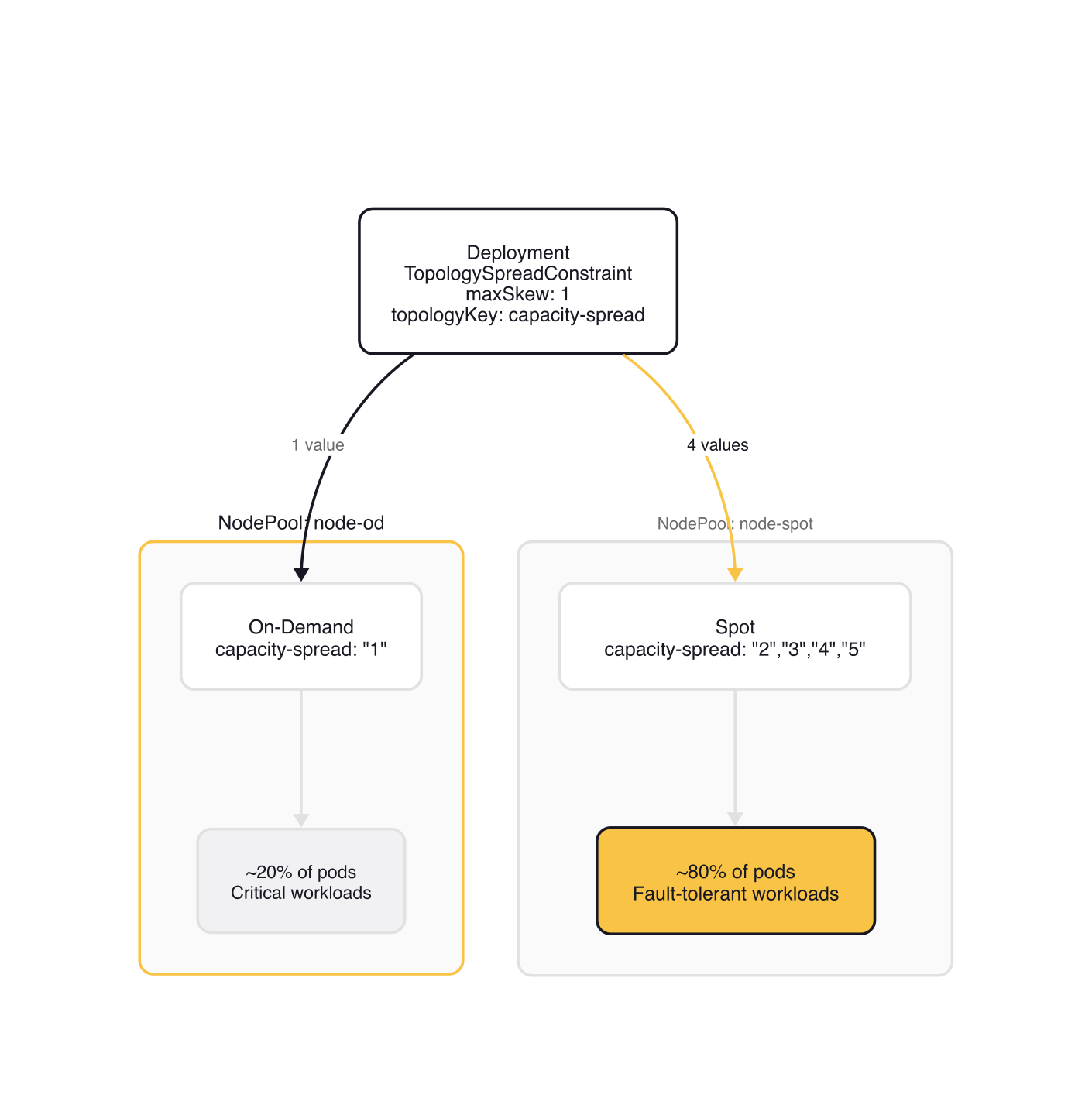

The Karpenter Blueprints repository demonstrates this with a capacity-spread label and topology spread constraints. You create two NodePools, one for On-Demand and one for Spot, with disjoint values for a custom label:

# od-nodepool.yaml (trimmed from aws-samples/karpenter-blueprints)
apiVersion: karpenter.sh/v1
kind: NodePool
metadata:
  name: node-od
spec:
  template:
    spec:
      nodeClassRef:
        group: karpenter.k8s.aws
        kind: EC2NodeClass
        name: default
      requirements:
        - key: karpenter.sh/capacity-type
          operator: In
          values: ["on-demand"]
        - key: capacity-spread
          operator: In
          values: ["1"]
      taints:
        - key: intent
          value: workload-split
          effect: NoSchedule

# spot-nodepool.yaml (trimmed from aws-samples/karpenter-blueprints)
apiVersion: karpenter.sh/v1
kind: NodePool
metadata:
  name: node-spot
spec:
  template:
    spec:
      nodeClassRef:
        group: karpenter.k8s.aws
        kind: EC2NodeClass
        name: default
      requirements:
        - key: karpenter.sh/capacity-type
          operator: In
          values: ["spot"]
        - key: capacity-spread
          operator: In
          values: ["2", "3", "4", "5"]
      taints:
        - key: intent
          value: workload-split
          effect: NoSchedule

Both NodePools use a NoSchedule taint so that only workloads with a matching toleration land on these nodes. Without the taint, any pod in the cluster could schedule onto these NodePools and skew the split ratio.

The Deployment uses a topology spread constraint on the capacity-spread key with maxSkew: 1, plus a toleration for the taint. With five topology values total (1 On-Demand + 4 Spot) and maxSkew: 1, the scheduler distributes pods evenly across all values, producing a roughly 80/20 Spot-to-OD ratio:

# workload.yaml (spec.template.spec section, trimmed)
tolerations:
  - key: "intent"
    operator: "Equal"
    value: "workload-split"
    effect: "NoSchedule"
topologySpreadConstraints:
  - labelSelector:
      matchLabels:
        app: workload-split
    maxSkew: 1
    topologyKey: capacity-spread
    whenUnsatisfiable: DoNotSchedule

The ratio is configurable by adjusting the number of values. Three Spot values and two On-Demand values gives you 60/40. Equal values gives 50/50. The topology spread constraint distributes pods evenly across all capacity-spread values, so the ratio follows directly from the value count on each side.
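
As a sketch of the 60/40 variant, only the capacity-spread requirement changes in each NodePool. The specific value strings are arbitrary placeholders. What matters is that the two sets are disjoint and that their counts are 2 and 3:

```yaml
# od-nodepool.yaml (requirements entry): 2 of 5 values, so roughly 40% of pods
- key: capacity-spread
  operator: In
  values: ["1", "2"]

# spot-nodepool.yaml (requirements entry): 3 of 5 values, so roughly 60% of pods
- key: capacity-spread
  operator: In
  values: ["3", "4", "5"]
```

The ratio is approximate at low replica counts: with maxSkew: 1 and five values, a 3-replica Deployment can only occupy three of the five domains.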

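Karpenter also has a built-in capacity type priority when a NodePool allows more than one: reserved, then Spot, then On-Demand. The ordering itself is not something you set in YAML, but the reserved tier only covers EC2 capacity reservations that Karpenter has been configured to use, and support for it arrived in later v1 releases. Savings Plans work differently: they are a billing discount that AWS applies to matching On-Demand usage, so Karpenter neither sees nor prioritizes them.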
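
The priority only comes into play when a single NodePool permits more than one capacity type. A minimal sketch of such a requirement, as an alternative to the two-NodePool split above:

```yaml
# nodepool.yaml (requirements entry)
# With both values allowed, Karpenter tries Spot first and falls back to
# On-Demand when no suitable Spot capacity is available.
- key: karpenter.sh/capacity-type
  operator: In
  values: ["spot", "on-demand"]
```

This gives you opportunistic Spot usage with a fallback, but no guaranteed On-Demand baseline, which is the tradeoff against the capacity-spread pattern.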

Right-Sizing Pod Requests

Karpenter provisions nodes based on pod requests, not limits, and not actual usage. If your pods request 2 CPU and 4Gi memory but use 0.3 CPU and 500Mi, Karpenter provisions nodes sized for the requests. Consolidation packs pods based on requests. The entire cost optimization chain starts with accurate resource specifications.

This makes right-sizing a shared responsibility. Karpenter optimizes infrastructure. You optimize pod specifications. Neither can compensate for the other.

For non-compressible resources like memory, set requests equal to limits. Here's why: consolidation packs pods onto fewer nodes based on allocatable capacity minus total requests. If a pod's memory limit exceeds its request, it can burst above the request on a tightly packed node. When multiple pods burst simultaneously, the kernel's OOM killer intervenes. Consolidation makes this more likely by reducing the slack between total requests and total allocatable memory.

# deployment.yaml (resources section)
resources:
  requests:
    cpu: 250m       # CPU can burst (compressible)
    memory: 512Mi   # Memory can't. Set equal
  limits:
    cpu: "1"        # Allow CPU bursting
    memory: 512Mi   # Match request to prevent OOM

For CPU, setting requests below limits is fine. CPU is compressible: when a container exceeds its request, it gets throttled rather than killed. The cost tradeoff is between accurate bin-packing (higher requests) and burst headroom (lower requests).

If some teams in your cluster don't set resource requests at all, use LimitRange as a namespace-level default:

# limitrange.yaml
apiVersion: v1
kind: LimitRange
metadata:
  name: default-resources
  namespace: workloads
spec:
  limits:
    - default:
        cpu: 500m
        memory: 256Mi
      defaultRequest:
        cpu: 100m
        memory: 128Mi
      type: Container

For ongoing right-sizing, run the Vertical Pod Autoscaler in recommendation mode (it observes usage and suggests request values without modifying pods) or deploy Goldilocks, which creates VPA objects for each Deployment in a namespace and surfaces recommendations in a dashboard. Kubecost adds dollar-value visibility, showing the gap between requested and used resources as wasted spend.
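
A minimal sketch of both options, assuming the VPA CRDs and recommender are already installed and that a Deployment named web exists in the workloads namespace (both names are placeholders). The first object is a VPA in recommendation-only mode. The second shows the namespace label that Goldilocks watches for when deciding where to create VPA objects on your behalf:

```yaml
# vpa.yaml: recommendation only, pods are never evicted or mutated
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: web-vpa            # hypothetical name
  namespace: workloads
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: web              # hypothetical Deployment
  updatePolicy:
    updateMode: "Off"      # "Off" = compute recommendations, apply nothing
---
# namespace.yaml: opt the namespace in to Goldilocks
apiVersion: v1
kind: Namespace
metadata:
  name: workloads
  labels:
    goldilocks.fairwinds.com/enabled: "true"
```

You would normally pick one of the two, since Goldilocks creates its own VPA objects. Recommendations appear in the VPA's status (kubectl describe vpa web-vpa) after the recommender has observed some usage history.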

Safety Nets for Cost Control

Broad instance selection and Spot consolidation can save money. They can also scale your cluster faster than your budget if you're not careful. Karpenter provisions nodes as fast as pods demand them, and there's no cluster-wide spending cap built in.

NodePool resource limits are your first line of defense. The spec.limits field sets a ceiling on total CPU and memory that a NodePool can provision:

# nodepool.yaml (spec section)
limits:
  cpu: 1000
  memory: 1000Gi

When the limit is reached, Karpenter stops provisioning for that NodePool and logs a message such as "memory resource usage of 1001 exceeds limit of 1000". These limits are per-NodePool, not cluster-wide. If you have three NodePools, each with a 1000-CPU limit, your theoretical maximum is 3000 CPUs.
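
One way to approximate a cluster-wide ceiling is to divide it across NodePools by hand. The sketch below assumes a hypothetical 1000-CPU ceiling shared between the node-od and node-spot NodePools from the split pattern, in the same 20/80 proportion. The numbers are illustrative only:

```yaml
# od-nodepool.yaml (spec section): 20% share of the 1000-CPU ceiling
limits:
  cpu: 200
---
# spot-nodepool.yaml (spec section): remaining 80% share
limits:
  cpu: 800
```

The shares are static, so an idle NodePool's headroom is not available to a busy one. That rigidity is the price of having no global cap.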

Disruption budgets control how aggressively consolidation reshuffles your cluster. The default is nodes: 10%, meaning Karpenter can disrupt up to 10% of a NodePool's nodes simultaneously. During business hours or deploy windows, you can restrict this to zero:

# nodepool.yaml (spec.disruption section)
disruption:
  consolidationPolicy: WhenEmptyOrUnderutilized
  budgets:
    - nodes: "20%"
    - nodes: "0"
      schedule: "@daily"
      duration: 10m

When multiple budgets apply, Karpenter takes the most restrictive (minimum) value. Here, the nodes: "0" budget blocks all voluntary disruption for the first 10 minutes of each day (UTC), while the nodes: "20%" budget caps disruption at 20% the rest of the time. Budgets only affect voluntary disruption: consolidation, drift, and emptiness. Spot interruption handling and node expiration are not affected. Schedule-based budgets accept cron syntax for more complex windows.
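
As a sketch of a business-hours freeze using cron syntax, assuming your working day runs 09:00 to 17:00 UTC on weekdays (adjust the hour for your timezone, since schedules are evaluated in UTC):

```yaml
# nodepool.yaml (spec.disruption section)
disruption:
  consolidationPolicy: WhenEmptyOrUnderutilized
  budgets:
    - nodes: "10%"                  # always-on cap outside the freeze window
    - nodes: "0"                    # no voluntary disruption...
      schedule: "0 9 * * mon-fri"   # ...starting 09:00 UTC, Monday to Friday
      duration: 8h                  # ...for eight hours
```

Outside the window only the 10% budget is active. Inside it, both apply and the zero wins, so consolidation effectively runs overnight and at weekends.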

eks-node-viewer gives you real-time cost visibility during testing. It's a CLI tool that shows each node's instance type, utilization, and hourly price, plus an estimated total for the cluster:

# Simplified output (actual tool uses a TUI with color bars)
$ eks-node-viewer
ip-10-0-104-249  c7i-flex.large  spot  cpu: 62%  $0.04/hr
ip-10-0-47-113   m7g.xlarge      spot  cpu: 78%  $0.08/hr
ip-10-0-83-213   c6a.large       od    cpu: 45%  $0.08/hr

For production spend monitoring, set up CloudWatch billing alarms for threshold alerts and enable AWS Cost Anomaly Detection for ML-based unusual spend detection. These are external to Karpenter but essential. NodePool limits prevent runaway provisioning. Billing alarms catch everything else.
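
A minimal CloudFormation sketch of such a billing alarm. The threshold and resource names are placeholders. It assumes billing alerts are enabled for the account and that the stack is deployed in us-east-1, where AWS publishes billing metrics:

```yaml
# billing-alarm.yaml (CloudFormation template, deploy in us-east-1)
AWSTemplateFormatVersion: "2010-09-09"
Description: Billing alarm sketch; threshold and names are placeholders
Resources:
  BillingTopic:
    Type: AWS::SNS::Topic            # subscribe an email or chat endpoint separately
  BillingAlarm:
    Type: AWS::CloudWatch::Alarm
    Properties:
      AlarmDescription: Estimated month-to-date charges exceeded the threshold
      Namespace: AWS/Billing
      MetricName: EstimatedCharges
      Dimensions:
        - Name: Currency
          Value: USD
      Statistic: Maximum
      Period: 21600                  # 6 hours; billing metrics update infrequently
      EvaluationPeriods: 1
      Threshold: 5000                # placeholder monthly amount in USD
      ComparisonOperator: GreaterThanThreshold
      AlarmActions:
        - !Ref BillingTopic
```

The alarm covers the whole account, not just the cluster, which is what makes it a backstop for anything NodePool limits miss.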

Gotchas

Spot-to-Spot consolidation is off by default. The SpotToSpotConsolidation feature gate must be explicitly enabled. Without it, Spot nodes are only consolidated via deletion (when the node is empty or its pods fit on other existing nodes), never via replacement. Many teams miss this and wonder why their Spot nodes never right-size.

The 15-type threshold is single-node only. Multi-node consolidation (merging several underutilized nodes into one) has no flexibility requirement. If you see Unconsolidatable events about 15 instance types, it's specifically the single-node replacement path that's blocked.

Narrow instance selection silently kills Spot consolidation. If your NodePool requirements yield fewer than 15 eligible instance types cheaper than the current node, single-node Spot consolidation never fires. There's no warning at deploy time. You only discover it through Unconsolidatable events or by noticing that Spot nodes never shrink.

Pods without requests look free to the scheduler. Karpenter treats pods with no resource requests as requiring zero resources. This leads to aggressive bin-packing where many "zero-resource" pods land on the same node, all competing for actual CPU and memory. Use LimitRange to prevent this.

Consolidation packs by requests, not limits. If your memory limits exceed requests, consolidation can pack pods onto a node where total limits exceed total allocatable memory. Multiple pods bursting simultaneously triggers OOM kills. Set requests == limits for memory.

NodePool limits are not cluster-wide. There is no global resource cap across all NodePools. If you need a cluster-wide ceiling, choose the individual NodePool limits so that they sum to it. Billing alarms are your actual cluster-wide safety net.

Wrap-up

Cost optimization with Karpenter is a layered strategy, not a single toggle. Broad instance diversification gives PCO room to optimize. Spot-to-Spot consolidation right-sizes Spot nodes that would otherwise sit underutilized. The OD/Spot split pattern keeps critical workloads on reliable capacity while pushing everything else to Spot pricing. Accurate pod resource requests make all of these patterns effective, because every optimization in the chain operates on requests, not actual usage.

Start with pod right-sizing. If your requests don't reflect reality, no amount of infrastructure optimization compensates. Then broaden your instance selection and enable Spot-to-Spot consolidation. Finally, layer on NodePool limits and billing alarms so you can sleep well at night. The savings that companies like Tinybird and Grover report don't come from a single feature. They come from getting all of these patterns working together.

This post is part of the Karpenter — Kubernetes Node Autoscaling from Setup to Optimization collection (5 of 6)
