Welcome to KubeDojo

What KubeDojo is and what you'll find here: deep dives into real code, honest explorations of the Kubernetes ecosystem, and structured learning paths to master every certification.

Deep-dive guides, real code, and structured learning paths — from core workloads to AI infrastructure.

Featured

A practical map of the five CNCF Kubernetes certifications — what each one covers, how exams work, and which path fits your career.

llm-d was accepted as a CNCF Sandbox project, providing Kubernetes-native distributed inference with KV-cache-aware routing, prefill/decode disaggregation, and accelerator-agnostic serving.

5 certification tracks · 196 lessons · Real code, real manifests, real exam prep.

Master every CKA exam competency — from cluster setup to troubleshooting. Real cluster operations, kubectl workflows, and hands-on exercises across 5 exam domains.

5 modules · 63 lessons

Master every CKS exam competency — from cluster hardening to runtime security. Real policies, security tools, attack scenarios, and hands-on exercises across 6 exam domains.

6 modules · 25 lessons

Recent Posts

How KEDA extends Kubernetes HPA with 65+ scalers, scale-to-zero, and a two-phase architecture for event-driven pod autoscaling.

How KEDA's Kafka and SQS scalers calculate lag and queue depth, with TriggerAuthentication patterns and production edge cases.

Using KEDA's Prometheus scaler to drive autoscaling from any PromQL query — replacing Prometheus Adapter with a simpler, more flexible approach.

How the KEDA HTTP Add-on intercepts traffic to scale HTTP workloads to zero, and when the Prometheus scaler is better.

ScaledJob creates one Kubernetes Job per event, scales dynamically, and lets long-running batch workloads terminate cleanly.

Combining KEDA's event-driven pod scaling with Karpenter's just-in-time node provisioning for a fully reactive, cost-efficient Kubernetes autoscaling stack.

Scraping KEDA operator metrics, building Grafana dashboards for scaling events, and diagnosing common ScaledObject issues in production.

How kagent, Agent Sandbox, KEDA, and OPA/Kyverno form the production stack for agentic AI on Kubernetes.

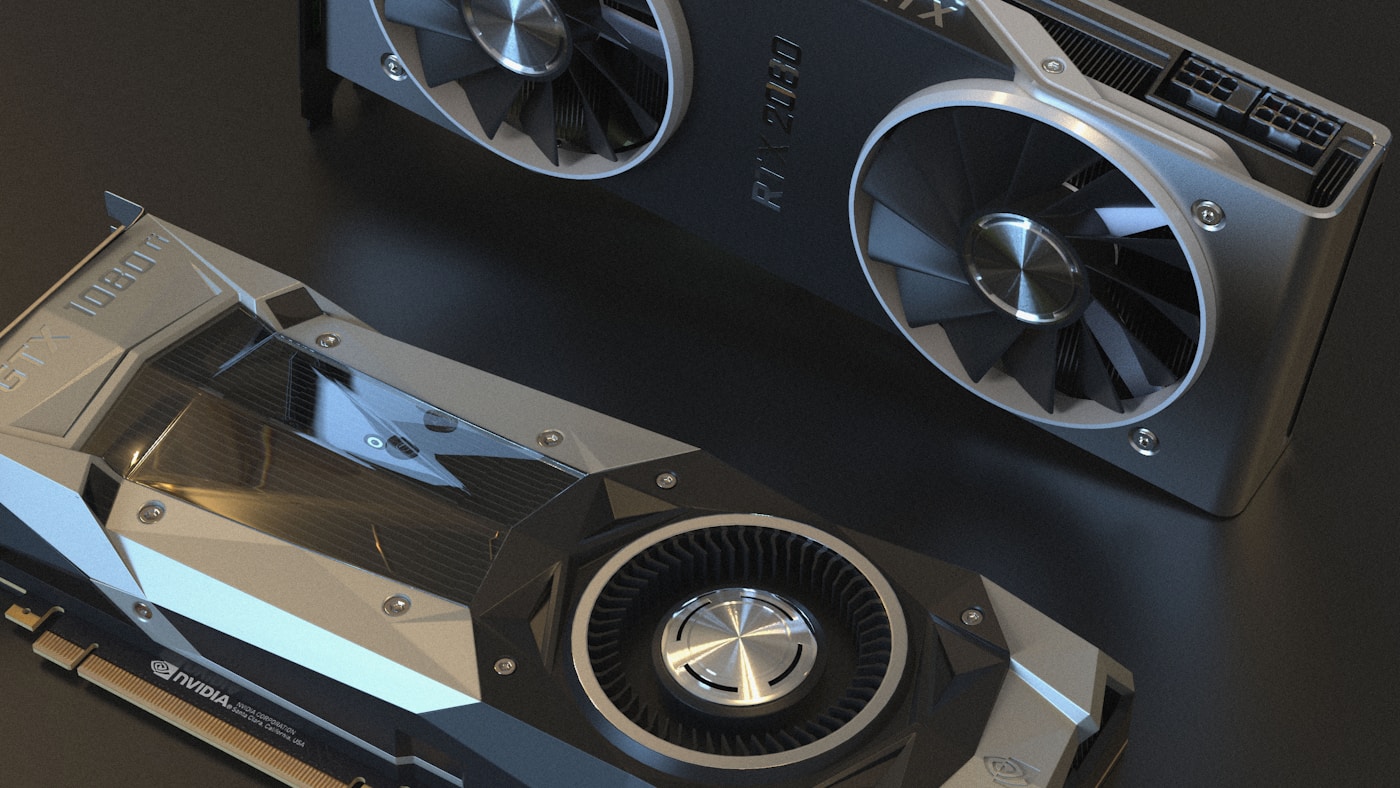

NVIDIA's GPU sharing mechanisms — MIG, time-slicing, and MPS — are gaining traction as teams run multiple inference workloads per GPU.