Deployments: Rolling Updates and Rollbacks

Three deployments. kubectl get deployments -A shows them stuck at 2/5 READY. UP-TO-DATE doesn't match AVAILABLE. You're on-call and need to understand fast: is this a rollout in progress, a stalled rollout, or a degraded deployment?

This is exactly what the CKA Workloads & Scheduling domain tests. Not "what field controls rolling update behavior," but "why is this rollout stuck, what does the deployment status tell you, and how do you fix it?" That spans both Workloads (15%) and Troubleshooting (30%) simultaneously. The same diagnostic process you use here applies to many of the troubleshooting scenarios on the exam.

This article covers how rolling updates work under the hood, how to read deployment status conditions precisely, the full kubectl rollout toolkit, and the five most common reasons rollouts stall.

The Rolling Update Mechanism

A rolling update is a choreography between two ReplicaSets. When you trigger a rollout, the Deployment controller creates a new ReplicaSet and begins scaling it up while scaling down the old one. The rate of that exchange is controlled by two fields: maxSurge and maxUnavailable.

maxSurge is how many pods above the desired count can exist during the rollout. maxUnavailable is how many pods below the desired count can be unavailable at the same time. Both default to 25%, but they round differently: maxSurge rounds up, maxUnavailable rounds down. For a 4-replica deployment, that means at most 5 pods running at peak (ceil(0.25 × 4) = 1 surge) and at most 1 unavailable (floor(0.25 × 4) = 1). The rounding difference matters more at low replica counts. For a 3-replica deployment, floor(0.25 × 3) = 0, so the rollout cannot terminate an old pod until a new one is ready. Combined with ceil(0.25 × 3) = 1 surge, it proceeds by creating one new pod first, then removing one old pod each time a new pod becomes available. With maxUnavailable: 0, surge capacity is required to make progress.
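The rounding rules above can be checked with a short Python sketch. scaled_value and resolve_fenceposts are hypothetical helpers written for illustration, modelled on how the Deployment controller resolves the two fields; the final branch reflects the controller's fallback when both values resolve to zero.

```python
def scaled_value(value, replicas, round_up):
    """Resolve an absolute int or an 'NN%' string against the replica count."""
    if isinstance(value, int):
        return value
    numerator = int(value.rstrip("%")) * replicas
    if round_up:
        return -(-numerator // 100)  # ceiling division, used for maxSurge
    return numerator // 100          # floor division, used for maxUnavailable


def resolve_fenceposts(max_surge, max_unavailable, replicas):
    surge = scaled_value(max_surge, replicas, round_up=True)
    unavailable = scaled_value(max_unavailable, replicas, round_up=False)
    if surge == 0 and unavailable == 0:
        # Both rounded to zero: allow one unavailable pod so the rollout can still progress.
        unavailable = 1
    return surge, unavailable


for n in (1, 3, 4, 10):
    surge, unavailable = resolve_fenceposts("25%", "25%", n)
    print(f"replicas={n}: maxSurge={surge} maxUnavailable={unavailable} "
          f"peak pods={n + surge} min available={n - unavailable}")
```

At 10 replicas the defaults resolve to a surge of 3 and 2 unavailable, so the rollout moves in larger steps than the 3- and 4-replica cases.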

Not every change to a Deployment triggers a rollout. Only changes to .spec.template do. Scaling replicas, adding labels to the Deployment metadata, changing annotations on the Deployment object: none of those create a new ReplicaSet. This matters when you're wondering why a metadata change didn't cycle your pods.
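To see why only .spec.template matters, the sketch below hashes just the template, loosely the way the pod-template-hash label keys each ReplicaSet. It is a simplified stand-in: SHA-256 over sorted JSON is an assumption for illustration, not the hash Kubernetes computes, and the deployment dicts (including the "team" annotation) are hypothetical.

```python
import copy
import hashlib
import json


def template_hash(deployment):
    """Hash only .spec.template; anything outside it cannot change the result."""
    blob = json.dumps(deployment["spec"]["template"], sort_keys=True).encode()
    return hashlib.sha256(blob).hexdigest()[:10]


base = {
    "metadata": {"name": "web", "annotations": {}},
    "spec": {
        "replicas": 3,
        "template": {
            "metadata": {"labels": {"app": "web"}},
            "spec": {"containers": [{"name": "nginx", "image": "nginx:1.24"}]},
        },
    },
}

scaled = copy.deepcopy(base)
scaled["spec"]["replicas"] = 10  # scaling: same template, no new ReplicaSet

annotated = copy.deepcopy(base)
annotated["metadata"]["annotations"]["team"] = "payments"  # Deployment metadata only

new_image = copy.deepcopy(base)
new_image["spec"]["template"]["spec"]["containers"][0]["image"] = "nginx:1.25"  # template change

for name, d in [("scaled", scaled), ("annotated", annotated), ("new_image", new_image)]:
    print(name, "triggers rollout:", template_hash(d) != template_hash(base))
```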

What production deployments actually configure

CoreDNS is a good example of an explicit, conservative strategy. As cluster DNS, it cannot afford unnecessary unavailability during a rollout:

```yaml
# coredns/deployment: kubernetes/coredns.yaml.sed (lines 84-91)
spec:
  # replicas: not specified here:
  # 1. Default is 1.
  # 2. Will be tuned in real time if DNS horizontal auto-scaling is turned on.
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
```

maxUnavailable: 1 (absolute, not a percentage) means at most one CoreDNS pod can be down during a rollout. maxSurge is unset, so it defaults to 25% rounded up. The replicas field is intentionally omitted and managed by the DNS autoscaler. CoreDNS also runs with priorityClassName: system-cluster-critical and podAntiAffinity using requiredDuringSchedulingIgnoredDuringExecution on kubernetes.io/hostname. That anti-affinity allows at most one CoreDNS pod per node, so when every eligible node already runs one, a surge pod has nowhere to schedule. maxUnavailable: 1 lets the controller remove an old pod to free a node, so the rollout keeps progressing while DNS capacity drops by at most one pod.

Compare that to prometheus-operator, a leader-elected controller that runs as a single replica:

```yaml
# prometheus-operator/kube-prometheus: manifests/prometheusOperator-deployment.yaml (lines 11-12)
spec:
  replicas: 1
  # no strategy field: Kubernetes defaults (25%/25%) apply
```

No strategy field. The default 25%/25% works fine for a single-replica controller: with one replica, maxSurge rounds up to 1 and maxUnavailable rounds down to 0, so during a rollout the new pod starts and becomes ready before the old one terminates. Both pods run briefly, and leader election ensures only one of them acts as the active operator. The manifest's real operational investment is elsewhere: explicit resources (cpu: 200m limit / 100m request, memory: 200Mi limit / 100Mi request) and full security hardening (allowPrivilegeEscalation: false, readOnlyRootFilesystem: true, capabilities: drop: ALL). Rolling strategy is secondary when availability is already limited to one replica.

cert-manager's values.yaml, for a controller that also runs as a single leader-elected replica by default, documents an alternative strategy explicitly:

```yaml
# cert-manager/cert-manager: deploy/charts/cert-manager/values.yaml (lines 122-131)
# Deployment update strategy for the cert-manager controller deployment.
# For more information, see the [Kubernetes documentation](https://kubernetes.io/docs/concepts/workloads/controllers/deployment/#strategy).
#
# For example:
#  strategy:
#    type: RollingUpdate
#    rollingUpdate:
#      maxSurge: 0
#      maxUnavailable: 1
strategy: {}
```

maxSurge: 0 with maxUnavailable: 1 is the no-surge pattern: the old pod terminates before the new one starts. No extra capacity consumed, no surge-related scheduling pressure, at the cost of a brief window with no running pod. cert-manager ships with an empty strategy: {} (Kubernetes defaults) and documents this override as an example, which suits resource-constrained environments where an extra pod won't fit.
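The difference between the default strategy and the no-surge pattern is clearest as a sequence of (old, new) pod counts. The simulation below is a simplified model, not controller code: it assumes every new pod becomes ready immediately and takes already-resolved surge and unavailable values. simulate_rollout is a hypothetical helper.

```python
def simulate_rollout(replicas, surge, unavailable):
    """Return (old, new) pod counts after each scaling step.

    Simplified model: new pods become ready instantly; each iteration does one
    scale-up of the new ReplicaSet, then one scale-down of the old one.
    """
    old, new = replicas, 0
    states = [(old, new)]
    while old > 0 or new < replicas:
        # Scale up the new ReplicaSet, never exceeding replicas + surge pods in total.
        up = min(max(replicas + surge - (old + new), 0), replicas - new)
        new += up
        if up:
            states.append((old, new))
        # Scale down the old ReplicaSet, never dropping below replicas - unavailable ready pods.
        down = min(old, max(old + new - (replicas - unavailable), 0))
        old -= down
        if down:
            states.append((old, new))
        if not up and not down:
            raise RuntimeError("no progress possible with these fenceposts")
    return states


print("default 25%/25% on 1 replica:", simulate_rollout(1, surge=1, unavailable=0))
print("no-surge (0/1) on 1 replica: ", simulate_rollout(1, surge=0, unavailable=1))
```

With one replica, the default passes through (1, 1), two pods briefly, while the no-surge pattern passes through (0, 0), the brief window with no pod running. With both values at zero the model raises, which is why the Kubernetes API rejects setting maxSurge and maxUnavailable to 0 together.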

revisionHistoryLimit and its cost

Every rollout creates a new ReplicaSet. By default, Kubernetes retains 10 old ReplicaSets in etcd. cert-manager exposes revisionHistoryLimit as a global Helm value (global.revisionHistoryLimit) applied via the controller deployment template:

```yaml
# cert-manager/cert-manager: deploy/charts/cert-manager/templates/deployment.yaml (lines 19-21)
# ... (line 18: template comment on if-statement semantics, omitted for brevity)
{{- if not (has (quote .Values.global.revisionHistoryLimit) (list "" (quote ""))) }}
revisionHistoryLimit: {{ .Values.global.revisionHistoryLimit }}
{{- end }}
```

The commented-out default in values.yaml is revisionHistoryLimit: 1. For high-churn controllers, trimming history reduces etcd noise. Setting it to 0 eliminates rollback entirely. That's a permanent trade-off: once the old ReplicaSets are gone, kubectl rollout undo has nothing to restore.
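The trade-off is easier to reason about with a model of the cleanup pass. The sketch assumes, as the Deployment controller does, that only old ReplicaSets already scaled to zero are deleted, oldest revision first. revisions_to_prune and the revision data are hypothetical.

```python
def revisions_to_prune(replicasets, current_revision, history_limit):
    """Return the revisions a cleanup pass would delete.

    replicasets: list of (revision, replicas) tuples. The current revision is
    never a candidate, and a candidate that still owns pods is skipped.
    """
    old = sorted((rs for rs in replicasets if rs[0] != current_revision), key=lambda rs: rs[0])
    excess = max(len(old) - history_limit, 0)
    pruned = []
    for revision, replicas in old[:excess]:
        if replicas == 0:  # never delete a ReplicaSet that still runs pods
            pruned.append(revision)
    return pruned


history = [(1, 0), (2, 0), (3, 0), (4, 0), (5, 3)]  # revision 5 is live
print(revisions_to_prune(history, current_revision=5, history_limit=2))  # [1, 2]
print(revisions_to_prune(history, current_revision=5, history_limit=0))  # [1, 2, 3, 4]
```

With a limit of 0, every old revision is gone once it scales down, leaving kubectl rollout undo nothing to restore.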

Reading Deployment Status

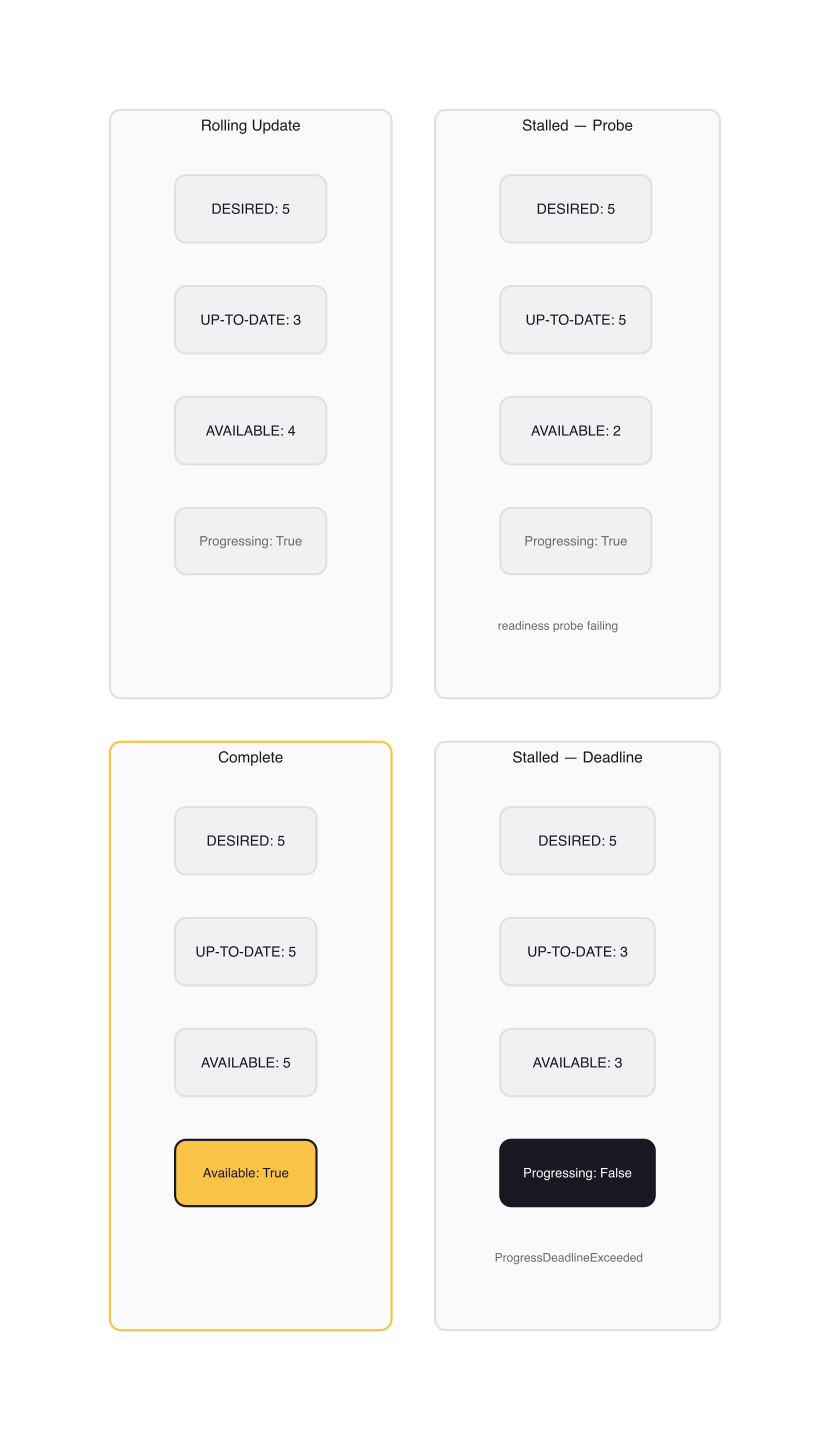

kubectl get deployments shows three status columns that are not synonyms:

- READY (ready/desired): pods that have passed their readiness probes, counted across old and new ReplicaSets
- UP-TO-DATE: pods running the new pod template
- AVAILABLE: pods that have been ready for at least minReadySeconds (default 0, so effectively equal to READY unless you've set it)

A healthy rolling deployment in progress shows UP-TO-DATE climbing while READY may temporarily dip below desired, by at most maxUnavailable. When UP-TO-DATE, READY, and AVAILABLE all equal the desired count and no old pods remain, the rollout is complete.

The summary columns tell you what's happening. The .status.conditions field tells you why:

```console
$ kubectl get deployment argocd-server -n argocd \
    -o jsonpath='{.status.conditions}' | jq .
[
  {
    "lastTransitionTime": "2026-03-01T12:00:00Z",
    "message": "Deployment has minimum availability.",
    "reason": "MinimumReplicasAvailable",
    "status": "True",
    "type": "Available"
  },
  {
    "lastTransitionTime": "2026-03-01T12:00:00Z",
    "message": "ReplicaSet \"argocd-server-6d9f7b8c9\" has successfully progressed.",
    "reason": "NewReplicaSetAvailable",
    "status": "True",
    "type": "Progressing"
  }
]
```

Three condition types matter:

| Condition | Status | Meaning |
|---|---|---|
| Available | True | Minimum replicas are available |
| Progressing | True | Rollout underway or completed successfully |
| Progressing | False | Stalled past progressDeadlineSeconds (reason: ProgressDeadlineExceeded) |
| ReplicaFailure | True | ReplicaSet can't create pods (quota exceeded, admission rejection); unschedulable pods are still created and sit in Pending instead |

progressDeadlineSeconds defaults to 600. When a rollout hasn't made progress for that window, Kubernetes sets Progressing=False. This is a diagnostic signal, not a control action.

Warning: progressDeadlineSeconds does not trigger an automatic rollback. Kubernetes marks the rollout failed and keeps retrying. You must run kubectl rollout undo yourself, or fix the underlying cause and wait for the rollout to complete.
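Putting the table and the warning together, a triage helper can map the conditions JSON shown earlier to a coarse state. This is a hypothetical sketch: the state names are invented for illustration, while the condition types and reasons it checks (ProgressDeadlineExceeded, DeploymentPaused, NewReplicaSetAvailable) are ones Kubernetes reports.

```python
import json


def rollout_state(conditions):
    """Map a Deployment's .status.conditions list to a coarse rollout state."""
    by_type = {c["type"]: c for c in conditions}
    progressing = by_type.get("Progressing", {})
    available = by_type.get("Available", {})
    failure = by_type.get("ReplicaFailure", {})

    if failure.get("status") == "True":
        return "replica-failure"   # pods could not be created at all
    if progressing.get("reason") == "ProgressDeadlineExceeded":
        return "stalled"           # Progressing=False; nothing rolls back automatically
    if progressing.get("reason") == "DeploymentPaused":
        return "paused"
    if progressing.get("reason") == "NewReplicaSetAvailable" and available.get("status") == "True":
        return "complete"
    if available.get("status") == "False":
        return "degraded"          # below minimum availability
    return "in-progress"


raw = """[
  {"type": "Available", "status": "True", "reason": "MinimumReplicasAvailable"},
  {"type": "Progressing", "status": "True", "reason": "NewReplicaSetAvailable"}
]"""
print(rollout_state(json.loads(raw)))  # complete
```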

kubectl describe deployment surfaces this through Events and Conditions in human-readable form. On the CKA, describe is often faster than inspecting raw JSON conditions.

The kubectl rollout Toolkit

Status and CI/CD gating

kubectl rollout status watches the rollout and exits 0 on success, non-zero on failure. That exit code makes it useful as a pipeline gate:

```console
$ kubectl set image deployment/argocd-server \
    argocd-server=quay.io/argoproj/argocd:v2.12.0 -n argocd
$ kubectl rollout status deployment/argocd-server -n argocd --timeout=5m
Waiting for deployment "argocd-server" rollout to finish: 1 out of 3 new replicas have been updated...
Waiting for deployment "argocd-server" rollout to finish: 2 out of 3 new replicas have been updated...
Waiting for deployment "argocd-server" rollout to finish: 1 old replicas are pending termination...
deployment "argocd-server" successfully rolled out
```

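Without --timeout, it waits until the rollout completes or the Deployment reports ProgressDeadlineExceeded, which by default means ten minutes without progress. In CI, always set an explicit timeout.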
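The progress messages above come from a fixed order of checks. The sketch below is modelled on how kubectl rollout status evaluates a Deployment; it is a reimplementation for illustration, not kubectl's source, and rollout_status is a hypothetical name. Status fields use the Deployment API names (replicas, updatedReplicas, availableReplicas).

```python
def rollout_status(name, spec_replicas, status, generation, observed_generation):
    """Return (message, done) for a Deployment, checking conditions in a fixed order."""
    if generation > observed_generation:
        return "Waiting for deployment spec update to be observed...", False
    for cond in status.get("conditions", []):
        if cond["type"] == "Progressing" and cond.get("reason") == "ProgressDeadlineExceeded":
            # This is what ends the wait with a non-zero exit when the rollout stalls.
            raise RuntimeError(f'deployment "{name}" exceeded its progress deadline')
    updated = status.get("updatedReplicas", 0)
    total = status.get("replicas", 0)
    available = status.get("availableReplicas", 0)
    prefix = f'Waiting for deployment "{name}" rollout to finish: '
    if updated < spec_replicas:
        return prefix + f"{updated} out of {spec_replicas} new replicas have been updated...", False
    if total > updated:
        return prefix + f"{total - updated} old replicas are pending termination...", False
    if available < updated:
        return prefix + f"{available} of {updated} updated replicas are available...", False
    return f'deployment "{name}" successfully rolled out', True


status = {"replicas": 4, "updatedReplicas": 3, "availableReplicas": 3}
print(rollout_status("argocd-server", 3, status, generation=2, observed_generation=2))
```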

History and revision tracking

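Revision history lives in ReplicaSet annotations, not in the Deployment itself: each ReplicaSet carries its revision number in the deployment.kubernetes.io/revision annotation. The CHANGE-CAUSE column in rollout history comes from the kubernetes.io/change-cause annotation, which the Deployment controller copies from the Deployment onto the ReplicaSet for the current revision: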

```console
$ kubectl rollout history deployment/argocd-server -n argocd
REVISION  CHANGE-CAUSE
1         initial deploy
2         upgrade to v2.12.0
3         <none>
```
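Since history lives on the ReplicaSets, rollout history is essentially a sort over their annotations. A minimal sketch, assuming ReplicaSets are plain dicts shaped like API objects; rollout_history and the ReplicaSet names are hypothetical, while the annotation keys are the ones Kubernetes uses.

```python
REVISION = "deployment.kubernetes.io/revision"
CHANGE_CAUSE = "kubernetes.io/change-cause"


def rollout_history(replicasets):
    """Build (revision, change-cause) rows from ReplicaSet objects, oldest first."""
    rows = []
    for rs in replicasets:
        annotations = rs.get("metadata", {}).get("annotations", {})
        if REVISION not in annotations:
            continue  # not part of a Deployment's revision history
        rows.append((int(annotations[REVISION]), annotations.get(CHANGE_CAUSE, "<none>")))
    return sorted(rows)


# Hypothetical ReplicaSets, returned by the API in no particular order.
replicasets = [
    {"metadata": {"name": "argocd-server-7c4d9f6b5", "annotations": {REVISION: "3"}}},
    {"metadata": {"name": "argocd-server-5b8c7d4f2",
                  "annotations": {REVISION: "1", CHANGE_CAUSE: "initial deploy"}}},
    {"metadata": {"name": "argocd-server-6d9f7b8c9",
                  "annotations": {REVISION: "2", CHANGE_CAUSE: "upgrade to v2.12.0"}}},
]
print("REVISION  CHANGE-CAUSE")
for revision, cause in rollout_history(replicasets):
    print(f"{revision:<9} {cause}")
```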

The --record flag that used to set this annotation was deprecated in Kubernetes 1.22 and removed in 1.28. Set it manually after updating the image:

```console
$ kubectl annotate deployment/argocd-server \
    kubernetes.io/change-cause="upgrade to v2.12.0" -n argocd
```

Inspect what a specific revision contains:

```console
$ kubectl rollout history deployment/argocd-server -n argocd --revision=2
deployment.apps/argocd-server with revision #2
Pod Template:
  Labels:       app.kubernetes.io/name=argocd-server
                pod-template-hash=6d9f7b8c9
  Annotations:  kubernetes.io/change-cause=upgrade to v2.12.0
  Containers:
   argocd-server:
    Image:      quay.io/argoproj/argocd:v2.12.0
```

Rollback

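kubectl rollout undo copies the pod template of the target revision back into the Deployment. Because that template matches an existing ReplicaSet, the controller scales that ReplicaSet back up and scales the current one down under the same maxSurge and maxUnavailable rules. It's the rolling update mechanism in reverse: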

```console
# Roll back to the previous revision
$ kubectl rollout undo deployment/argocd-server -n argocd

# Roll back to a specific revision
$ kubectl rollout undo deployment/argocd-server -n argocd --to-revision=1
```

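After a rollback, the restored template gets a new revision number instead of reclaiming its old one. If the current revision is 3 and you roll back to revision 1, that template becomes revision 4 and revision 1 disappears from the history. Revision numbers are not reused.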
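The renumbering rule fits in a few lines. In this hypothetical sketch, history maps each revision to an image tag standing in for the full pod template; argocd:v2.11.0 is an invented earlier version, and rollback is not a real client function.

```python
def rollback(history, to_revision=None):
    """Return a new {revision: template} map after an undo.

    With no to_revision, roll back to the revision before the current (highest) one.
    """
    if to_revision is None:
        if len(history) < 2:
            raise ValueError("no previous revision to roll back to")
        to_revision = sorted(history)[-2]
    if to_revision not in history:
        raise KeyError(f"revision {to_revision} not found")
    updated = dict(history)
    new_revision = max(updated) + 1  # revision numbers only ever increase
    updated[new_revision] = updated.pop(to_revision)
    return updated


history = {1: "argocd:v2.11.0", 2: "argocd:v2.12.0", 3: "argocd:v2.12.1"}
print(rollback(history, to_revision=1))
# {2: 'argocd:v2.12.0', 3: 'argocd:v2.12.1', 4: 'argocd:v2.11.0'}
```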

Pause and resume

Pausing a Deployment stops pod template changes from triggering rollouts. This lets you make multiple changes without an intermediate rollout for each:

```console
$ kubectl rollout pause deployment/argocd-server -n argocd
$ kubectl set image deployment/argocd-server \
    argocd-server=quay.io/argoproj/argocd:v2.12.1 -n argocd
$ kubectl set resources deployment/argocd-server \
    -c argocd-server --limits=cpu=500m,memory=512Mi -n argocd
# One rollout with all changes
$ kubectl rollout resume deployment/argocd-server -n argocd
```

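While paused, the Deployment's Progressing condition reports reason DeploymentPaused (status Unknown), and progressDeadlineSeconds is not enforced until you resume. If you pause during an active rollout, that rollout also pauses mid-flight, leaving the current mix of old and new pods in place.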
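Why pausing saves rollouts: a paused Deployment still accepts template edits but doesn't act on them until it is resumed. ToyDeployment below is a hypothetical in-memory model that only counts how many distinct templates would turn into rollouts; it ignores ReplicaSets, pods, and timing.

```python
import hashlib
import json


class ToyDeployment:
    """Hypothetical model: records one rollout per template the controller acts on."""

    def __init__(self, template):
        self.template = dict(template)
        self.paused = False
        self.rollouts = [self._hash()]

    def _hash(self):
        return hashlib.sha256(json.dumps(self.template, sort_keys=True).encode()).hexdigest()[:10]

    def _reconcile(self):
        # Template edits are ignored while paused and picked up on resume.
        if not self.paused and self._hash() != self.rollouts[-1]:
            self.rollouts.append(self._hash())

    def edit(self, **changes):
        self.template.update(changes)
        self._reconcile()

    def pause(self):
        self.paused = True

    def resume(self):
        self.paused = False
        self._reconcile()


d = ToyDeployment({"image": "argocd:v2.12.0", "cpu_limit": None})
d.pause()
d.edit(image="argocd:v2.12.1")
d.edit(cpu_limit="500m")
d.resume()
print(len(d.rollouts) - 1, "rollout(s) triggered")  # 1 rollout(s) triggered
```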

Restart without spec change

kubectl rollout restart cycles all pods without you editing the pod template yourself. Useful for picking up rotated secrets that the application only reads at startup, refreshing in-memory state, or forcing a recycle after node-level changes:

```console
$ kubectl rollout restart deployment/argocd-server -n argocd
```

Under the hood, it adds a kubectl.kubernetes.io/restartedAt annotation to .spec.template.metadata, which counts as a template change and triggers a normal rolling update.
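The restart amounts to a small patch on the pod template. The sketch builds such a patch and applies it with a minimal JSON merge patch (RFC 7386), showing that .spec.template changes while replicas don't. restart_patch and merge_patch are hypothetical helpers, and formatting the timestamp in UTC is an assumption for illustration.

```python
import copy
from datetime import datetime, timezone


def restart_patch(now=None):
    """Merge patch that stamps the pod template with a restart timestamp (UTC)."""
    now = (now or datetime.now(timezone.utc)).astimezone(timezone.utc)
    return {"spec": {"template": {"metadata": {"annotations": {
        "kubectl.kubernetes.io/restartedAt": now.strftime("%Y-%m-%dT%H:%M:%SZ"),
    }}}}}


def merge_patch(target, patch):
    """Minimal JSON merge patch: dicts merge recursively, None deletes a key."""
    result = copy.deepcopy(target)
    for key, value in patch.items():
        if value is None:
            result.pop(key, None)
        elif isinstance(value, dict):
            base = result.get(key)
            result[key] = merge_patch(base if isinstance(base, dict) else {}, value)
        else:
            result[key] = value
    return result


deployment = {
    "spec": {
        "replicas": 3,
        "template": {"metadata": {"labels": {"app": "argocd-server"}}, "spec": {}},
    }
}
patch = restart_patch(datetime(2026, 3, 1, 12, 0, tzinfo=timezone.utc))
restarted = merge_patch(deployment, patch)
print(restarted["spec"]["template"]["metadata"])
```

Because the annotation lives inside .spec.template, the pod template hash changes and the controller runs an ordinary rolling update.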

Diagnosing Stuck Rollouts

When a rollout stalls, pod state is the first place to look. kubectl get pods -n <ns> shows you the new pods; their status tells you almost everything.

1. Image pull failure. New pods stuck in ImagePullBackOff or ErrImagePull. The event from kubectl describe pod reads: Failed to pull image "quay.io/argoproj/argocd:bad-tag": ... not found. The old ReplicaSet stays scaled at its current count; the new RS can't reach ready. Fix the image reference and the rollout continues automatically.

2. Insufficient resources. New pods stuck in Pending with event 0/3 nodes are available: 3 Insufficient memory. The scheduler can't place them. Check node capacity against pod resource requests; the new pod template may have higher requests than the old one. kubectl describe nodes | grep -A5 "Allocated resources" shows what's committed per node.

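3. Resource quota exceeded. No new pods appear at all: the quota admission check rejects them before they exist, so kubectl get pods shows nothing new. kubectl describe deployment shows condition ReplicaFailure=True with a message containing exceeded quota, and kubectl describe rs on the new ReplicaSet shows FailedCreate events. Often the namespace quota doesn't have headroom for surge pods. Either raise the quota or set maxSurge: 0 to avoid creating additional pods during the rollout.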

4. Readiness probe failing. New pods start and run, but never become Ready: kubectl get pods shows 0/1 READY for new pods. kubectl describe pod shows the readiness probe failing with its specific error. From the Deployment's perspective, this is silent until progressDeadlineSeconds fires: UP-TO-DATE climbs but AVAILABLE doesn't. This is the hardest failure to spot from the Deployment view alone.

5. Taint/toleration mismatch. New pods in Pending with event node(s) had untolerated taint {node.kubernetes.io/unreachable: NoExecute}. The pod template doesn't tolerate a taint that now exists on all schedulable nodes. Check whether taint conditions on nodes changed since the last successful rollout.

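The diagnostic sequence: kubectl get pods to identify state, kubectl describe pod <failing-pod> to read events, kubectl describe deployment to read conditions. When the new ReplicaSet has no pods at all (quota or other admission rejections), go straight to the Deployment conditions and kubectl describe rs, since there is no pod to describe.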
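The five patterns above reduce to a handful of checks. likely_cause is a hypothetical helper, and its input shape (phase, waiting_reason, ready, and events as plain strings) is an assumption standing in for what you would read from kubectl get pods and kubectl describe pod. It treats an empty list of new pods as the quota case, because rejected pods are never created.

```python
def likely_cause(new_pods, conditions):
    """Guess which of the five stall patterns applies.

    new_pods: hypothetical dicts with 'phase', 'waiting_reason', 'ready', 'events'.
    conditions: the Deployment's .status.conditions list.
    """
    if not new_pods:
        for cond in conditions:
            if (cond["type"] == "ReplicaFailure" and cond.get("status") == "True"
                    and "exceeded quota" in cond.get("message", "")):
                return "resource quota exceeded"
        return "no new pods: check kubectl describe rs events"
    for pod in new_pods:
        events = " ".join(pod.get("events", []))
        if pod.get("waiting_reason") in ("ImagePullBackOff", "ErrImagePull"):
            return "image pull failure"
        if pod.get("phase") == "Pending" and "Insufficient" in events:
            return "insufficient resources"
        if pod.get("phase") == "Pending" and "untolerated taint" in events:
            return "taint/toleration mismatch"
        if pod.get("phase") == "Running" and not pod.get("ready", False):
            return "readiness probe failing"
    return "unknown: read the pod and ReplicaSet events"


pods = [{"phase": "Running", "ready": False, "events": ["Readiness probe failed"]}]
print(likely_cause(pods, []))  # readiness probe failing
```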

Gotchas

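The single-replica surge trap. With the defaults (maxSurge: 25%, maxUnavailable: 25%) and a 1-replica deployment, maxUnavailable rounds down to 0 and maxSurge rounds up to 1. The rollout cannot terminate the old pod first, so it must schedule a second pod alongside it. If quota or node capacity can't fit that extra pod, the new pod sits in Pending (or is never created, in the quota case) and the rollout stalls. Kubernetes does prevent a true deadlock: the API rejects maxSurge and maxUnavailable both set to 0, and if rounding makes both resolve to 0 at runtime, the controller treats maxUnavailable as 1. For single-replica deployments, choose explicitly: maxSurge: 0, maxUnavailable: 1 (terminate before replacement, brief downtime, no extra capacity) or maxSurge: 1, maxUnavailable: 0 (replace before termination, needs room for one extra pod).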

Scaling during a rollout doesn't reset it. If you kubectl scale deployment/foo --replicas=10 while a rollout is in progress, Kubernetes spreads the new replicas proportionally across old and new ReplicaSets. The rollout continues at the new scale, not from scratch.
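A rough model of that proportional split is below. It is a simplification, not the controller's algorithm (which works from a max-replicas annotation and also handles scale-down): shares are rounded half up, capped by what remains to add, and any leftover goes to the largest ReplicaSet. scale_proportionally and the ReplicaSet names are hypothetical.

```python
def scale_proportionally(replicasets, new_total):
    """Spread a scale-up across ReplicaSets in proportion to their current size.

    replicasets: list of (name, replicas), oldest first. Scale-down is not modelled.
    """
    current = sum(size for _, size in replicasets)
    to_add = new_total - current
    if current == 0 or to_add <= 0:
        raise ValueError("this sketch only models scaling up from a non-empty state")
    # Largest first; on equal size, the later (newer) ReplicaSet comes first.
    order = sorted(range(len(replicasets)), key=lambda i: (replicasets[i][1], i), reverse=True)
    result = dict(replicasets)
    added = 0
    for i in order:
        name, size = replicasets[i]
        share = min(int(size * to_add / current + 0.5), to_add - added)  # round half up
        result[name] += share
        added += share
    result[replicasets[order[0]][0]] += to_add - added  # leftover to the largest
    return result


# Mid-rollout: 3 old pods and 2 new pods, then someone scales to 10 replicas.
print(scale_proportionally([("foo-old", 3), ("foo-new", 2)], 10))  # {'foo-old': 6, 'foo-new': 4}
```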

revisionHistoryLimit: 0 permanently eliminates rollback. Once old ReplicaSets are deleted, there's no recovery from a bad deploy short of redeploying. For production deployments, keep at least 2-3 revisions.

Only .spec.template changes trigger rollouts. kubectl annotate deployment/foo kubernetes.io/change-cause="..." does not trigger a rollout. It modifies the Deployment metadata, not the pod template. Annotate after updating the image, not as a separate operation expecting pod cycling.

progressDeadlineSeconds fires, nothing happens automatically. Already covered above, but worth repeating as a separate mental model: a failed progress condition is information only. You own the remediation.

Practice Scenarios

These cover the CKA exam patterns for deployments and rollouts:

Scenario 1: Update image and document the change

```shell
kubectl set image deployment/web-server nginx=nginx:1.25 -n production
kubectl annotate deployment/web-server \
  kubernetes.io/change-cause="upgrade nginx to 1.25" -n production
kubectl rollout status deployment/web-server -n production
```

Scenario 2: Roll back to a specific revision

```shell
kubectl rollout history deployment/web-server -n production
kubectl rollout undo deployment/web-server --to-revision=2 -n production
kubectl rollout status deployment/web-server -n production
```

Scenario 3: Debug a stalled rollout

```shell
kubectl get deployments -n production
kubectl describe deployment/web-server -n production   # read Conditions and Events
kubectl get pods -n production                          # identify pod states
kubectl describe pod <failing-pod> -n production        # read pod events
```

Scenario 4: Configure strategy for a single-replica deployment so the replacement is ready before the old pod terminates

```shell
kubectl patch deployment/web-server -n production --type=merge -p \
  '{"spec":{"strategy":{"type":"RollingUpdate","rollingUpdate":{"maxSurge":1,"maxUnavailable":0}}}}'
```

maxUnavailable: 0 with maxSurge: 1 ensures the single replica is never terminated before its replacement is ready. The new pod starts, passes readiness, then the old one terminates.

Wrap-up

Rolling updates are the intersection of Workloads and Troubleshooting on the CKA. The mechanism is simple; the operational skill is reading status conditions accurately and knowing which kubectl commands surface the right information quickly.

When a rollout stalls: read the deployment conditions first, then the ReplicaSet state, then the pod events. That sequence works for every failure mode listed above.

Next up: ConfigMaps and Secrets, which cover injecting runtime configuration into the same pods you just deployed, without triggering unnecessary rollouts.

Workloads and Scheduling (1 of 18)

Languages (Rust, Go & Python), container orchestration (Kubernetes), data and cloud providers (AWS & GCP) lover. Runner & Cyclist.

Subscribe to KubeDojo
