The 4Cs of Cloud Native Security

You can harden your application code, scan every container image, and set readOnlyRootFilesystem: true on every Pod. None of it matters if the cloud account running your cluster has an IAM role that grants * on *. Security doesn't start at the application layer. It starts at the infrastructure.

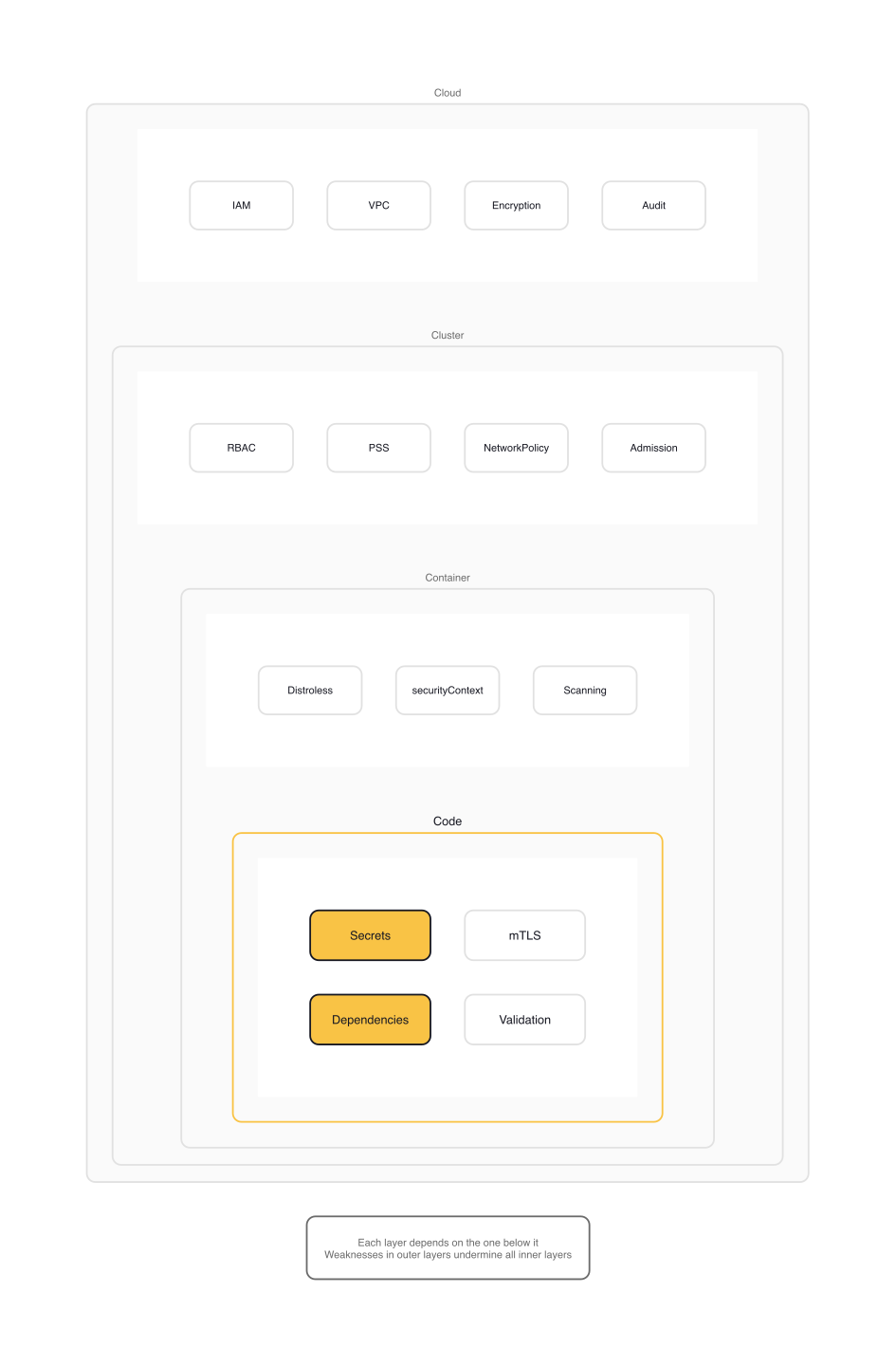

The 4Cs of Cloud Native Security formalize this as a mental model: four nested layers, each depending on the one below it. Cloud sits at the foundation. Cluster wraps around it. Container narrows the boundary further. Code runs at the center. A weakness at any outer layer undermines every inner layer, no matter how well those inner layers are hardened.

This is the first topic in the "Overview of Cloud Native Security" domain of the KCSA (Kubernetes and Cloud Native Security Associate) exam, a domain worth 14% of the exam. Understanding the 4Cs isn't just exam prep. It's the organizing principle for every security decision you'll make in a Kubernetes environment.

The 4Cs Model

The model is straightforward: four concentric layers of security, from broadest (Cloud) to narrowest (Code).

Cloud is the infrastructure provider: AWS, GCP, Azure, or your own data center. It controls the network perimeter, identity management, and physical security.

Cluster is Kubernetes itself: the API server, RBAC, admission controllers, NetworkPolicy, and Pod Security Standards.

Container is the runtime boundary: the image you ship, the user it runs as, the capabilities it holds, and the filesystem it can write to.

Code is your application: the dependencies it pulls, the secrets it handles, and the inputs it trusts.

The critical principle: you cannot compensate for a weak outer layer by hardening an inner one. If your cloud IAM policy grants broad access to every node, no amount of Pod securityContext hardening saves you. If your cluster lets anyone create privileged Pods, container image scanning is irrelevant.

Security isn't a phase. It's present at every stage of the lifecycle, from development through deployment to runtime.

Cloud: The Foundation Layer

The Cloud layer covers everything below Kubernetes: the compute instances, the network fabric, the identity provider, and the storage backend. Whether you're on a public cloud or running bare metal in a colo, this layer defines the trust boundary for everything above it.

On managed services (EKS, GKE, AKS), your provider handles the control plane and physical infrastructure. You own everything else: IAM policies, network configuration, encryption settings, and audit logging. The key controls:

- IAM policies with least-privilege scoping. Node instance roles should only grant what the kubelet needs, not broad EC2 or storage access.

- Network perimeter: VPCs, security groups, and firewall rules that restrict who can reach the API server and etcd.

- Encryption at rest for etcd and persistent volumes. Most managed services offer this as a toggle, but it's not always enabled by default.

- Audit logging: CloudTrail (AWS), Cloud Audit Logs (GCP), Activity Log (Azure).

A common misconfiguration: giving node instance roles broad permissions on S3 buckets or secrets managers, then assuming the container layer will prevent unauthorized access. It won't. Unless access to the instance metadata service is blocked, any Pod on that node can query it and obtain the node role's credentials. Workload identity solutions (IRSA on AWS, Workload Identity on GCP) exist specifically to bind IAM roles to individual ServiceAccounts instead of entire nodes:

```yaml
# ServiceAccount annotated for IAM Roles for Service Accounts (IRSA) on EKS
apiVersion: v1
kind: ServiceAccount
metadata:
  name: app-sa
  namespace: production
  annotations:
    eks.amazonaws.com/role-arn: arn:aws:iam::123456789012:role/app-s3-readonly
```

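With this annotation, only Pods using serviceAccountName: app-sa can assume the app-s3-readonly role, provided the role's trust policy only accepts tokens whose subject is system:serviceaccount:production:app-sa. Other Pods on the same node don't receive this role's credentials. This is the Cloud layer done right: identity scoped to the workload, not the infrastructure.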
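On the IAM side, the role's trust policy is what ties it to a single ServiceAccount. A minimal sketch that builds an IRSA-style trust policy as a Python dict, assuming the standard web-identity flow: the cluster's OIDC provider is the federated principal, and the sub and aud condition keys pin the token to one namespace/ServiceAccount pair. The account ID and OIDC issuer URL are placeholders, not real values.

```python
import json


def irsa_trust_policy(account_id: str, oidc_issuer: str, namespace: str, sa_name: str) -> dict:
    """Build an IAM trust policy letting exactly one Kubernetes ServiceAccount
    assume the role via sts:AssumeRoleWithWebIdentity."""
    # The issuer appears without its "https://" scheme in the ARN and condition keys.
    issuer = oidc_issuer.removeprefix("https://")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Federated": f"arn:aws:iam::{account_id}:oidc-provider/{issuer}"},
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    "StringEquals": {
                        # Only tokens minted for this namespace/ServiceAccount match.
                        f"{issuer}:sub": f"system:serviceaccount:{namespace}:{sa_name}",
                        f"{issuer}:aud": "sts.amazonaws.com",
                    }
                },
            }
        ],
    }


# Placeholder issuer: substitute your cluster's actual OIDC issuer URL.
policy = irsa_trust_policy(
    "123456789012",
    "https://oidc.eks.us-east-1.amazonaws.com/id/EXAMPLE0123456789",
    "production",
    "app-sa",
)
print(json.dumps(policy, indent=2))
```

Widening the sub condition (for example with a wildcard) quietly turns a per-workload role back into a shared one, so it is the line worth reviewing most carefully.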

Cluster: The Kubernetes Layer

The Cluster layer is where Kubernetes-specific security controls live. The API server is the single point of entry for every operation in the cluster. Securing it means getting three things right: authentication (who is making the request), authorization (what are they allowed to do), and admission control (what mutations or validations should be applied).

Pod Security Standards

Kubernetes defines three security profiles for Pods: Privileged (unrestricted), Baseline (blocks known privilege escalations), and Restricted (current hardening best practices). You enforce them by labeling namespaces:

```yaml
# podsecurity-restricted.yaml (kubernetes/website/examples/security)
apiVersion: v1
kind: Namespace
metadata:
  name: my-restricted-namespace
  labels:
    pod-security.kubernetes.io/enforce: restricted
    pod-security.kubernetes.io/enforce-version: latest
    pod-security.kubernetes.io/warn: restricted
    pod-security.kubernetes.io/warn-version: latest
```

The enforce mode rejects Pods that violate the policy. The warn mode admits them but returns a warning to the client, which kubectl prints. The audit mode, not used in this example, admits them and records the violation in the API server's audit log.

The Restricted profile is where most production workloads should land. It requires runAsNonRoot: true, mandates dropping all Linux capabilities, enforces a Seccomp profile, and blocks privilege escalation. The Baseline profile is a reasonable starting point for workloads that can't yet meet Restricted requirements. It still blocks the most dangerous configurations: hostNetwork, hostPID, privileged containers, and hostPath volumes.
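To make the Restricted requirements concrete, here is a minimal sketch that checks a Pod spec, represented as a plain Python dict, against a subset of them. It is an illustration of the rules, not the Pod Security Admission controller's implementation: it only inspects regular containers and only the four requirements named above, plus the rule that NET_BIND_SERVICE is the one capability Restricted lets you add back.

```python
def restricted_violations(pod_spec: dict) -> list[str]:
    """Check a Pod spec dict against a SUBSET of the Restricted profile.

    Covers runAsNonRoot, allowPrivilegeEscalation, capabilities, and seccomp for
    regular containers only; the real admission controller checks more fields.
    """
    violations = []
    pod_ctx = pod_spec.get("securityContext", {})
    for container in pod_spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        ctx = container.get("securityContext", {})
        caps = ctx.get("capabilities", {})

        # A container-level value overrides the pod-level one.
        if ctx.get("runAsNonRoot", pod_ctx.get("runAsNonRoot")) is not True:
            violations.append(f"{name}: runAsNonRoot must be true")
        if ctx.get("allowPrivilegeEscalation") is not False:
            violations.append(f"{name}: allowPrivilegeEscalation must be false")
        if "ALL" not in caps.get("drop", []):
            violations.append(f"{name}: capabilities.drop must include ALL")
        extra = set(caps.get("add", [])) - {"NET_BIND_SERVICE"}
        if extra:
            violations.append(f"{name}: may only add NET_BIND_SERVICE, found {sorted(extra)}")
        seccomp = ctx.get("seccompProfile", pod_ctx.get("seccompProfile", {}))
        if seccomp.get("type") not in ("RuntimeDefault", "Localhost"):
            violations.append(f"{name}: seccompProfile.type must be RuntimeDefault or Localhost")
    return violations


pod = {
    "securityContext": {"runAsNonRoot": True, "seccompProfile": {"type": "RuntimeDefault"}},
    "containers": [{
        "name": "app",
        "image": "example/app:1.0",  # hypothetical image
        "securityContext": {"allowPrivilegeEscalation": False, "capabilities": {"drop": ["ALL"]}},
    }],
}
print(restricted_violations(pod))
```

A bare container with no securityContext at all fails four of these checks, which is roughly what you see when a namespace is switched to enforce: restricted before its workloads are updated.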

NetworkPolicy

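NetworkPolicy is the cluster-level firewall, enforced by the network (CNI) plugin; a plugin without NetworkPolicy support silently ignores these objects. By default, all Pods can talk to all other Pods. The standard production pattern is default-deny, then explicitly allow what's needed: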

```yaml
# default-deny-all.yaml (from kubernetes.io/docs/concepts/services-networking/network-policies)
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: default-deny-all
spec:
  podSelector: {}
  policyTypes:
  - Ingress
  - Egress
```

An empty podSelector matches every Pod in the namespace. Listing both Ingress and Egress in policyTypes without any rules means all traffic is denied. You then add specific allow rules for legitimate communication paths.
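The allow-list semantics are easier to see in code. A minimal sketch of how ingress decisions combine across policies, under simplifying assumptions: every Pod is in one namespace, selectors use matchLabels only, ports are ignored, and only pure podSelector peers are modelled (namespaceSelector and ipBlock peers are skipped). Each policy is represented by its spec dict.

```python
def selects(selector: dict, labels: dict) -> bool:
    """matchLabels-only label selector; an empty selector ({}) matches every Pod."""
    return all(labels.get(k) == v for k, v in selector.get("matchLabels", {}).items())


def policy_types(spec: dict) -> list[str]:
    # If policyTypes is omitted, Ingress is assumed, plus Egress when egress rules exist.
    if "policyTypes" in spec:
        return spec["policyTypes"]
    return ["Ingress"] + (["Egress"] if spec.get("egress") else [])


def ingress_allowed(policies: list[dict], dst_labels: dict, src_labels: dict) -> bool:
    applicable = [p for p in policies
                  if selects(p["podSelector"], dst_labels) and "Ingress" in policy_types(p)]
    if not applicable:
        return True  # No policy selects the destination, so it is not isolated.
    for spec in applicable:
        for rule in spec.get("ingress", []):
            peers = rule.get("from")
            if not peers:
                return True  # A rule with no "from" allows every source.
            if any(peer.keys() == {"podSelector"} and selects(peer["podSelector"], src_labels)
                   for peer in peers):
                return True
    return False  # Isolated, and no rule in any selecting policy allows this source.


deny_all = {"podSelector": {}, "policyTypes": ["Ingress", "Egress"]}
allow_web_to_api = {  # hypothetical allow rule layered on top of default-deny
    "podSelector": {"matchLabels": {"app": "api"}},
    "policyTypes": ["Ingress"],
    "ingress": [{"from": [{"podSelector": {"matchLabels": {"app": "web"}}}]}],
}
print(ingress_allowed([deny_all, allow_web_to_api], {"app": "api"}, {"app": "web"}))
```

Note that policies only ever add allowed paths: adding allow_web_to_api opens web to api without touching deny_all, and no policy can subtract a path another one allows.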

RBAC, audit logging, and admission control round out the Cluster layer. The short version: avoid cluster-admin ClusterRoleBindings for anything that doesn't absolutely need them, enable audit logging so you have forensic evidence when something goes wrong, and use admission webhooks (or the built-in Pod Security Admission controller) to enforce policy before resources are created.
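As a starting point for the RBAC review, a minimal sketch that lists every subject bound to the cluster-admin ClusterRole. It assumes input shaped like the JSON from kubectl get clusterrolebindings -o json; the sample below is trimmed by hand, and the ci-deployer binding is hypothetical.

```python
import json


def cluster_admin_subjects(crb_list: dict) -> list[tuple]:
    """Return (binding, subject kind, subject name) for every ClusterRoleBinding
    whose roleRef points at the cluster-admin ClusterRole."""
    hits = []
    for item in crb_list.get("items", []):
        ref = item.get("roleRef", {})
        if ref.get("kind") == "ClusterRole" and ref.get("name") == "cluster-admin":
            for subject in item.get("subjects") or []:
                hits.append((item["metadata"]["name"], subject.get("kind"), subject.get("name")))
    return hits


# Hand-trimmed sample shaped like `kubectl get clusterrolebindings -o json`.
sample = json.loads("""
{"items": [
  {"metadata": {"name": "cluster-admin"},
   "roleRef": {"apiGroup": "rbac.authorization.k8s.io", "kind": "ClusterRole", "name": "cluster-admin"},
   "subjects": [{"kind": "Group", "name": "system:masters"}]},
  {"metadata": {"name": "ci-deployer"},
   "roleRef": {"apiGroup": "rbac.authorization.k8s.io", "kind": "ClusterRole", "name": "cluster-admin"},
   "subjects": [{"kind": "ServiceAccount", "name": "ci", "namespace": "ci"}]}
]}
""")
for binding, kind, name in cluster_admin_subjects(sample):
    print(f"{binding}: {kind}/{name}")
```

On many clusters the built-in cluster-admin binding to system:masters is expected; anything else on the list deserves a justification. Namespaced RoleBindings can also reference the cluster-admin ClusterRole, and this sketch does not look at those.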

Container: The Runtime Boundary

The Container layer is about what's inside the image and how it runs. Two principles dominate: minimize the attack surface (ship less) and restrict runtime capabilities (grant less).

Minimal Images

A container image that includes a shell, a package manager, and a full operating system gives an attacker everything they need after an initial compromise. Distroless images strip all of that away, leaving only the application binary and its runtime dependencies.

CoreDNS demonstrates this pattern:

```dockerfile
# Dockerfile (coredns/coredns)
ARG DEBIAN_IMAGE=debian:stable-slim
ARG BASE=gcr.io/distroless/static-debian12:nonroot

FROM --platform=$BUILDPLATFORM ${DEBIAN_IMAGE} AS build
ARG DEBIAN_FRONTEND=noninteractive
RUN apt-get -qq update \
    && apt-get -qq --no-install-recommends install libcap2-bin
COPY coredns /coredns
RUN setcap cap_net_bind_service=+ep /coredns

FROM ${BASE}
COPY --from=build /coredns /coredns
USER nonroot:nonroot
WORKDIR /
EXPOSE 53 53/udp
ENTRYPOINT ["/coredns"]
```

The build stage installs libcap2-bin to run setcap, granting the binary the CAP_NET_BIND_SERVICE capability. This lets CoreDNS bind to port 53 without running as root. The runtime stage uses gcr.io/distroless/static-debian12:nonroot and runs as USER nonroot:nonroot. No shell, no package manager, no extra utilities. If an attacker gains code execution inside this container, there's almost nothing to work with.
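Only the final stage of a multi-stage build ships, so that is where the USER directive has to land. A minimal lint-style sketch that reports the USER set in the last stage of a Dockerfile. It is deliberately simplified and hypothetical, not a real linter: it ignores line continuations, heredocs, and ARG/ENV substitution, and it cannot see what user the base image itself configures, so a stage without USER is flagged rather than assumed safe.

```python
from typing import Optional


def final_stage_user(dockerfile: str) -> Optional[str]:
    """Return the USER set in the last build stage, or None if that stage sets none."""
    user: Optional[str] = None
    for raw in dockerfile.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        instruction, _, args = line.partition(" ")
        instruction = instruction.upper()
        if instruction == "FROM":
            # A new stage begins from its base image's config, which this sketch can't see.
            user = None
        elif instruction == "USER":
            user = args.strip()
    return user


def may_run_as_root(user: Optional[str]) -> bool:
    # No USER in the final stage means the base image's default applies,
    # which is root for many images, so treat it as a finding.
    if user is None:
        return True
    return user.split(":")[0] in ("root", "0")


# Abridged from the CoreDNS Dockerfile shown above.
COREDNS_DOCKERFILE = """\
ARG BASE=gcr.io/distroless/static-debian12:nonroot
FROM --platform=$BUILDPLATFORM debian:stable-slim AS build
RUN setcap cap_net_bind_service=+ep /coredns
FROM ${BASE}
COPY --from=build /coredns /coredns
USER nonroot:nonroot
ENTRYPOINT ["/coredns"]
"""
user = final_stage_user(COREDNS_DOCKERFILE)
print(user, may_run_as_root(user))
```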

Security Context Hardening

The securityContext fields in a Pod spec control what a container can do at runtime. Well-maintained CNCF projects ship hardened defaults. cert-manager's Helm chart is a good reference:

```yaml
# values.yaml (cert-manager/cert-manager/deploy/charts/cert-manager)
securityContext:
  runAsNonRoot: true
  seccompProfile:
    type: RuntimeDefault

containerSecurityContext:
  allowPrivilegeEscalation: false
  capabilities:
    drop:
    - ALL
  readOnlyRootFilesystem: true
```

These five fields are the baseline for any production workload. The combination of runAsNonRoot, allowPrivilegeEscalation: false, and capabilities.drop: [ALL] means the kubelet refuses to start the container as UID 0, the process can't gain privileges through setuid binaries, and it holds zero Linux capabilities. If a specific capability is needed (like NET_BIND_SERVICE for CoreDNS), add it back explicitly rather than running with the runtime's default capability set. readOnlyRootFilesystem prevents writing to the container's root filesystem, and seccompProfile: RuntimeDefault applies the container runtime's default syscall filter.
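The chart splits these settings between a pod-level securityContext and a container-level one. For fields that exist at both levels, Kubernetes applies the container's value over the pod's. A minimal sketch of that merge rule, assuming the chart renders securityContext into the Pod spec and containerSecurityContext into each container; SHARED_FIELDS below is a representative subset, not the full list.

```python
# Fields defined on both PodSecurityContext and a container's SecurityContext
# (a representative subset, not the full list).
SHARED_FIELDS = ("runAsUser", "runAsGroup", "runAsNonRoot", "seccompProfile", "seLinuxOptions")


def effective_security_context(pod_ctx: dict, container_ctx: dict) -> dict:
    """Approximate what a container ends up with: pod-level values for shared
    fields, overridden by anything the container sets itself."""
    effective = {k: pod_ctx[k] for k in SHARED_FIELDS if k in pod_ctx}
    effective.update(container_ctx)  # container-level settings take precedence
    return effective


# The cert-manager values from above, split the way the chart splits them.
pod_ctx = {"runAsNonRoot": True, "seccompProfile": {"type": "RuntimeDefault"}}
container_ctx = {
    "allowPrivilegeEscalation": False,
    "capabilities": {"drop": ["ALL"]},
    "readOnlyRootFilesystem": True,
}
print(effective_security_context(pod_ctx, container_ctx))
```

The precedence rule cuts both ways: a container that sets runAsNonRoot: false overrides a hardened pod-level default, which is why admission policies check the container level too.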

Image Scanning and Runtime Monitoring

Container image scanning (Trivy, Grype, Snyk) catches known CVEs in base images and dependencies. It's a necessary baseline, but it only covers known vulnerabilities. Runtime monitoring fills the gap. Falco, for example, watches for anomalous behavior at the kernel level: unexpected process execution, unauthorized file access, network connections to suspicious destinations.
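Why scanning only covers known vulnerabilities becomes obvious once you reduce it to its core: a join between the packages found in an image and an advisory database. A minimal sketch of that idea; the package names and advisory ID are hypothetical, and only plain dotted numeric versions are handled, whereas real scanners understand distro-specific version schemes.

```python
from typing import NamedTuple


class Advisory(NamedTuple):
    package: str
    fixed_in: str      # first version containing the fix
    advisory_id: str   # placeholder ID for illustration


def parse_version(version: str) -> tuple[int, ...]:
    # Plain dotted numeric versions only, e.g. "1.4.2" -> (1, 4, 2).
    return tuple(int(part) for part in version.split("."))


def scan(installed: dict[str, str], advisories: list[Advisory]) -> list[str]:
    findings = []
    for adv in advisories:
        version = installed.get(adv.package)
        if version is not None and parse_version(version) < parse_version(adv.fixed_in):
            findings.append(f"{adv.package} {version}: {adv.advisory_id}")
    return findings


ADVISORIES = [Advisory("libexample", "1.4.2", "EXAMPLE-0001")]
installed = {"libexample": "1.4.1", "libother": "2.0.0"}
print(scan(installed, ADVISORIES))
```

libother produces no finding, but that only means no advisory mentions it. A bug nobody has reported yet is invisible to this lookup, which is the gap runtime monitoring is meant to cover.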

There's an inherent trade-off here. Falco itself needs privileged access to load its eBPF driver or kernel module. From its Helm chart defaults:

```yaml
# values.yaml (falcosecurity/charts/charts/falco), driver loader
containerSecurityContext:
  privileged: true
```

```yaml
# values.yaml (falcosecurity/charts/charts/falco-exporter), exporter
securityContext:
  allowPrivilegeEscalation: false
  readOnlyRootFilesystem: true
  capabilities:
    drop:
    - ALL
```

The driver-loader runs privileged: true to access the kernel. The exporter, which only reads Falco's gRPC output, ships fully locked down. You're granting elevated access to one component so it can detect unauthorized elevated access by other components. This is a legitimate pattern, but you should understand what you're trading.

Code: The Application Layer

The Code layer is the innermost ring. It covers everything your application does: how it handles secrets, what dependencies it pulls, how it validates input, and how it communicates with other services.

Secrets management is the most common failure point. Kubernetes Secret resources are base64-encoded, not encrypted. Anyone with RBAC read access to Secrets in a namespace can decode them. For production, use an external secrets manager (HashiCorp Vault, AWS Secrets Manager, GCP Secret Manager) with the External Secrets Operator or CSI Secrets Store driver. Enable encryption at rest for etcd to add a layer of protection for Secrets stored in the control plane.
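A short demonstration of why base64 offers no protection: decoding needs no key. The Secret below is shaped like the output of kubectl get secret -o json, with a hypothetical name and value.

```python
import base64

# Shaped like `kubectl get secret db-creds -o json` output (hypothetical name and value).
secret = {
    "apiVersion": "v1",
    "kind": "Secret",
    "metadata": {"name": "db-creds", "namespace": "production"},
    "data": {"password": base64.b64encode(b"hunter2").decode()},
}


def decode_secret(secret: dict) -> dict[str, str]:
    # base64 is an encoding, not encryption: reversing it requires no key at all.
    return {k: base64.b64decode(v).decode() for k, v in secret.get("data", {}).items()}


print(secret["data"]["password"])
print(decode_secret(secret))
```

Anyone whose RBAC permissions let them read this object can run the equivalent of decode_secret, which is why the controls that matter are RBAC on Secrets and encryption of etcd, not the encoding.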

Dependency scanning matters because your container image is only as secure as its weakest library. A single vulnerable transitive dependency in your Go module or npm package can negate every infrastructure control you've built. Integrate dependency scanning into CI/CD and treat critical vulnerabilities as build failures.
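Treating critical vulnerabilities as build failures usually comes down to a small gate that turns scanner findings into an exit code. A minimal sketch, assuming findings have already been flattened into dicts with id, package, and severity keys; that shape is hypothetical and not any particular scanner's report schema, and the severity names follow the common UNKNOWN through CRITICAL scale.

```python
import sys

# Severity levels in increasing order; adjust to your scanner's naming.
SEVERITIES = ["UNKNOWN", "LOW", "MEDIUM", "HIGH", "CRITICAL"]


def gate(findings: list[dict], fail_at: str = "CRITICAL") -> int:
    """Return a process exit code: 1 if any finding is at or above fail_at, else 0."""
    threshold = SEVERITIES.index(fail_at)
    blocking = [f for f in findings if SEVERITIES.index(f["severity"]) >= threshold]
    for f in blocking:
        print(f"blocking: {f['id']} in {f['package']} ({f['severity']})", file=sys.stderr)
    return 1 if blocking else 0


# In a CI step you would call sys.exit(gate(findings)) so the job fails on a nonzero code.
findings = [{"id": "EXAMPLE-0002", "package": "libexample", "severity": "HIGH"}]
print(gate(findings))
```

Keeping the threshold a parameter lets you start at CRITICAL and tighten to HIGH once the backlog is under control, without changing the pipeline.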

Encryption in transit: NetworkPolicy operates at L3/L4. It controls which Pods can talk to which other Pods, but it doesn't encrypt traffic between them. For encryption in transit within the cluster, you need either mTLS, typically via a service mesh like Istio or Linkerd, or transparent network-layer encryption such as Cilium's WireGuard or IPsec mode. The Code layer is where you decide whether to trust the network or encrypt regardless.
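If you choose to encrypt in the application itself rather than rely on a mesh, the essential piece of mTLS is a server that refuses clients without a certificate from your CA. A minimal sketch using Python's standard ssl module; the file paths are optional parameters here, and any paths you pass (for example files mounted from a Secret) are your own assumption.

```python
import ssl
from typing import Optional


def mtls_server_context(cert_file: Optional[str] = None,
                        key_file: Optional[str] = None,
                        ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Server-side TLS context that rejects clients lacking a certificate signed by ca_file."""
    # PROTOCOL_TLS_SERVER does not load the system CA store, so only ca_file is trusted.
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Requiring a client certificate is what makes the TLS "mutual".
    ctx.verify_mode = ssl.CERT_REQUIRED
    if cert_file and key_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if ca_file:
        ctx.load_verify_locations(cafile=ca_file)
    return ctx


ctx = mtls_server_context()
print(ctx.verify_mode)
```

In a real server you would pass the certificate, key, and CA paths and wrap the listening socket with ctx.wrap_socket(sock, server_side=True). The hard part a mesh takes off your hands is issuing and rotating those certificates, not the handshake itself.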

Input validation doesn't change because you're running in Kubernetes. SQL injection, XSS, and deserialization vulnerabilities exist regardless of how hardened your infrastructure is. The outer layers protect your application from external threats. They don't protect it from itself.
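SQL injection is a good example of a bug no infrastructure layer can see, because the malicious request is a perfectly ordinary query over an allowed network path. A self-contained sketch using an in-memory SQLite database with a hypothetical users table, contrasting string formatting with a bound parameter.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
conn.executemany("INSERT INTO users VALUES (?, ?)", [("alice", "admin"), ("bob", "viewer")])


def find_user_unsafe(name: str) -> list:
    # String formatting splices attacker input into the SQL text itself.
    return conn.execute(f"SELECT name, role FROM users WHERE name = '{name}'").fetchall()


def find_user_safe(name: str) -> list:
    # A bound parameter is always treated as data, never as SQL.
    return conn.execute("SELECT name, role FROM users WHERE name = ?", (name,)).fetchall()


payload = "nobody' OR '1'='1"
print(find_user_unsafe(payload))  # returns every row
print(find_user_safe(payload))    # returns []
```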

Gotchas

Pod Security Standards require namespace labels. If you don't label a namespace, it defaults to the Privileged profile. This means unenforced namespaces accept any Pod configuration, including privileged containers with host networking. Audit your namespaces: kubectl get namespaces --selector='!pod-security.kubernetes.io/enforce' shows you which namespaces have no enforcement.
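The kubectl selector above finds namespaces with no enforce label; a namespace explicitly labeled enforce: privileged is just as open. A minimal sketch that catches both cases from the JSON of kubectl get namespaces -o json, assuming the cluster-wide PodSecurity defaults have not been changed from privileged. The sample namespaces are hypothetical.

```python
ENFORCE = "pod-security.kubernetes.io/enforce"


def effectively_privileged(ns_list: dict) -> list[str]:
    """Namespaces whose enforce level is missing (defaults to privileged) or explicitly privileged.

    Assumes the cluster's PodSecurity admission defaults are left at privileged.
    """
    names = []
    for ns in ns_list.get("items", []):
        labels = ns["metadata"].get("labels") or {}
        if labels.get(ENFORCE, "privileged") == "privileged":
            names.append(ns["metadata"]["name"])
    return names


# Trimmed sample shaped like `kubectl get namespaces -o json` (hypothetical namespaces).
sample = {"items": [
    {"metadata": {"name": "default"}},
    {"metadata": {"name": "prod", "labels": {ENFORCE: "restricted"}}},
    {"metadata": {"name": "legacy", "labels": {ENFORCE: "privileged"}}},
]}
print(effectively_privileged(sample))
```

Some namespaces, such as those running node agents like Falco's driver loader, legitimately need privileged; the point of the audit is that each one on the list is a deliberate choice.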

NetworkPolicy has no deny rules. It's an allow-list model. Once you add a NetworkPolicy that selects a Pod, all traffic not explicitly allowed is denied. The most common mistake: forgetting to allow DNS egress to kube-system (port 53 UDP/TCP). Every Pod that needs name resolution, which is almost every Pod, breaks silently.
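The usual fix is a namespace-wide policy that allows DNS egress to the cluster DNS Pods. A minimal sketch that generates one as JSON (kubectl apply accepts JSON as well as YAML). It assumes the DNS Pods carry the k8s-app: kube-dns label, which is common on kubeadm and many managed clusters but worth checking on yours, and it relies on the kubernetes.io/metadata.name label that Kubernetes sets on every namespace. Setups such as NodeLocal DNSCache need a different destination.

```python
import json


def allow_dns_egress(namespace: str) -> dict:
    """NetworkPolicy letting every Pod in `namespace` reach cluster DNS on port 53."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "allow-dns-egress", "namespace": namespace},
        "spec": {
            "podSelector": {},  # every Pod in the namespace
            "policyTypes": ["Egress"],
            "egress": [{
                # namespaceSelector and podSelector in the SAME peer entry are ANDed.
                "to": [{
                    "namespaceSelector": {"matchLabels": {"kubernetes.io/metadata.name": "kube-system"}},
                    "podSelector": {"matchLabels": {"k8s-app": "kube-dns"}},  # assumed label
                }],
                "ports": [{"protocol": "UDP", "port": 53}, {"protocol": "TCP", "port": 53}],
            }],
        },
    }


print(json.dumps(allow_dns_egress("production"), indent=2))
```

Pipe the output to kubectl apply -f - alongside the default-deny policy. Putting both selectors in one peer entry matters: as two separate list entries they would be ORed, opening port 53 to every Pod in kube-system and to every Pod labeled k8s-app: kube-dns in the policy's own namespace.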

readOnlyRootFilesystem: true breaks applications that write to /tmp. Many frameworks and libraries write temporary files to the root filesystem. The fix is simple: mount an emptyDir volume at /tmp. But if you don't know about it beforehand, the failure mode is confusing. The application starts, runs for a while, then crashes when it tries to write a temp file.
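The emptyDir fix, expressed as a transformation on a Pod spec dict. A minimal sketch; the volume name tmp is an illustrative choice and would collide with an existing volume of the same name, which this version does not check.

```python
import copy


def add_tmp_emptydir(pod_spec: dict, container_name: str) -> dict:
    """Return a copy of pod_spec with an emptyDir volume mounted at /tmp in one container."""
    spec = copy.deepcopy(pod_spec)  # leave the caller's spec untouched
    spec.setdefault("volumes", []).append({"name": "tmp", "emptyDir": {}})
    for container in spec["containers"]:
        if container["name"] == container_name:
            container.setdefault("volumeMounts", []).append({"name": "tmp", "mountPath": "/tmp"})
    return spec


spec = {"containers": [{
    "name": "app",
    "image": "example/app:1.0",  # hypothetical image
    "securityContext": {"readOnlyRootFilesystem": True},
}]}
print(add_tmp_emptydir(spec, "app"))
```

The root filesystem stays read-only; only /tmp becomes writable scratch space that lives as long as the Pod. If temp files can grow large, an emptyDir sizeLimit keeps one container from filling the node's disk.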

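Cloud IAM misconfigurations feature prominently in real-world cloud-native breach reports. In many public post-mortems, the initial vector isn't a container escape or kernel exploit. It's cloud credentials with far more reach than the workload needed, such as an overly permissive IAM role on a node instance that lets any Pod on the node access cloud resources it shouldn't. The Capital One breach (an SSRF that pulled an over-privileged EC2 role's credentials from the instance metadata service) and the Tesla cryptomining incident (an unauthenticated Kubernetes console that exposed AWS credentials) both turned on exposed cloud credentials, as have numerous smaller incidents.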

Image scanning catches known CVEs, not logic bugs. Scanning tells you if your base image has a vulnerable version of OpenSSL. It doesn't tell you that your application logs passwords to stdout or trusts unsigned JWTs. Scanning is a necessary baseline, not a complete solution.

Wrap-up

The 4Cs are a reasoning tool, not a checklist: Cloud, Cluster, Container, Code, each layer only as strong as the one below it. Work from the outside in. Get the Cloud layer right first, then Cluster, then Container, then Code.

Next up: Cloud Provider and Infrastructure Security, where we dig into the Cloud layer with real IAM configurations for AWS, GCP, and Azure, and examine how managed Kubernetes services handle the shared responsibility model.

Overview of Cloud Native Security (1 of 6)

Languages (Rust, Go & Python), container orchestration (Kubernetes), data and cloud providers (AWS & GCP) lover. Runner & Cyclist.
