What Is Kubernetes and Why It Exists

You can run a container on your laptop with a single command. Building an image, pushing it to a registry, starting a process inside an isolated namespace: Docker made all of that straightforward. But running hundreds of containers across a fleet of machines, keeping them healthy, routing traffic between them, and rolling out updates without downtime? That is a different problem entirely.

That gap is exactly what Kubernetes fills, and it is why Kubernetes Fundamentals carries 46% of the KCNA exam. This lesson traces the path from physical servers to containers, from Google's internal Borg system to open-source Kubernetes, and explains the declarative model that holds it all together.

The Problem: From Physical Servers to Container Orchestration

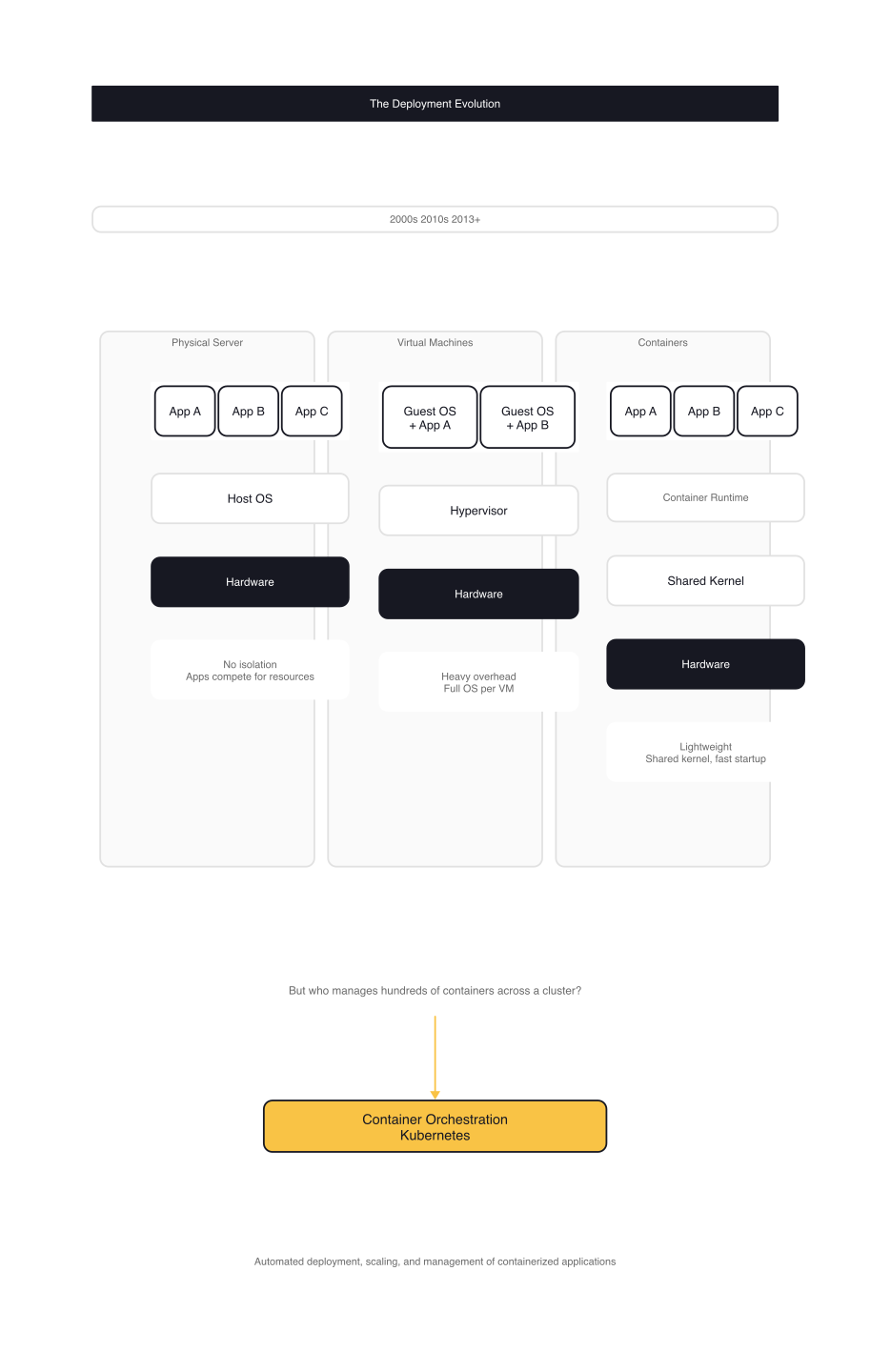

The way we deploy software has evolved through three distinct eras, each solving the limitations of the previous one.

Physical servers came first. You ran applications directly on bare metal. If two applications needed the same machine, they competed for CPU, memory, and disk. There was no isolation boundary. One noisy process could starve another. The workaround was dedicating a whole server to each application, which solved contention but created massive underutilization. Expensive hardware sat mostly idle.

Virtual machines fixed the isolation problem. A hypervisor carved one physical machine into multiple VMs, each running its own operating system. Applications could share hardware without stepping on each other. But VMs are heavy: each one boots a full OS, consumes its own block of memory, and takes minutes to start. Scaling up means provisioning new VMs, which is faster than racking servers but still slow compared to what was coming next.

Containers changed the equation. Instead of virtualizing hardware, containers virtualize at the operating system level. They share the host kernel, isolate processes with Linux namespaces, cap their CPU and memory with cgroups, and start in seconds rather than minutes. A container image packages the application, its dependencies, and its runtime configuration into a single portable artifact. The same image runs consistently on a developer's laptop, in CI, and in production.

Containers solved packaging and isolation. What they did not solve was the operational layer on top: if you have 200 containers running across 20 machines, who decides which container runs where? When a container crashes, who restarts it? When you push a new version, who rolls it out gradually and rolls it back if health checks fail? When one service needs to talk to another, how does it find the right address?

That set of problems, collectively called container orchestration, is what Kubernetes was built to handle.

How Kubernetes Came to Be: From Borg to Open Source

Kubernetes did not appear out of nowhere. Its architecture and core concepts trace directly back to systems Google built over a decade of running containers internally.

Google's Borg System

Starting around 2003, Google developed Borg, an internal cluster management system that ran virtually everything at the company as containers: Bigtable, Spanner, MapReduce, web front-ends, batch jobs. Borg managed hundreds of thousands of jobs across tens of thousands of machines. It introduced several ideas that would later define Kubernetes.

Allocs were resource envelopes that grouped related processes on the same machine. A common pattern: a web server running alongside a log collector that shipped logs to a cluster filesystem. This became Kubernetes Pods.

Naming and load balancing gave services a stable identity that mapped to a dynamic set of tasks. Clients connected to a name, and the system routed traffic to healthy instances. This became Kubernetes Services.

Labels emerged because Borg's rigid Job/Task model was too limiting. Operators wanted to group processes across job boundaries: all front-ends across canary and stable releases, or a subset of tasks during a rolling update. Kubernetes adopted labels as flexible key-value pairs that can be attached to any object and queried with selectors.
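Selector matching is simple enough to sketch. The Python below models only equality-based matchLabels semantics: an object matches when it carries every key/value pair in the selector, and extra labels are ignored. The matches function and the Pod names are hypothetical, and set-based matchExpressions are not modeled.

```python
def matches(selector: dict[str, str], labels: dict[str, str]) -> bool:
    """Equality-based selection (matchLabels): every selector pair must be present.

    Extra labels on the object are ignored; a missing key or a different value
    means no match.
    """
    return all(labels.get(key) == value for key, value in selector.items())


# Hypothetical Pods spanning a stable and a canary release.
pods = {
    "frontend-stable-1": {"app": "frontend", "track": "stable"},
    "frontend-canary-1": {"app": "frontend", "track": "canary"},
    "backend-stable-1": {"app": "backend", "track": "stable"},
}

# All front-ends across both releases, then only the canary.
all_frontends = [name for name, labels in pods.items() if matches({"app": "frontend"}, labels)]
canary_only = [
    name for name, labels in pods.items()
    if matches({"app": "frontend", "track": "canary"}, labels)
]

print(all_frontends)  # ['frontend-stable-1', 'frontend-canary-1']
print(canary_only)    # ['frontend-canary-1']
```

The same label set answers both questions, which is exactly the cross-cutting grouping Borg's Job/Task hierarchy could not express.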

IP-per-Pod came from a Borg pain point. In Borg, all tasks on a machine shared the host's IP address and had to coordinate port usage. Kubernetes gives every Pod its own IP address on a flat cluster network (implemented by a network plugin, often but not necessarily as an overlay), so two Pods can both listen on port 80 without conflict.

Docker and the Open-Source Moment

In 2013, Docker made containers accessible to individual developers. It provided a clean build-ship-run workflow that dramatically lowered the barrier to containerization. But Docker ran on a single machine. Google engineers Joe Beda, Brendan Burns, and Craig McLuckie saw the opportunity to build an orchestrator that could manage containers across a fleet of machines, applying the lessons from Borg in an open-source context.

Google announced Kubernetes in mid-2014. The internal codename was Project 7, a reference to Star Trek's Seven of Nine, a character who was formerly Borg. The seven spokes on the Kubernetes logo are a nod to that codename.

Kubernetes v1.0 shipped in 2015, and Google donated the project to the newly formed Cloud Native Computing Foundation (CNCF), part of the Linux Foundation. Microsoft, Red Hat, IBM, and Docker joined as early contributors. The project grew rapidly because it was solving a real problem that every organization running containers at scale was hitting independently.

What Kubernetes Actually Does: The Declarative Model

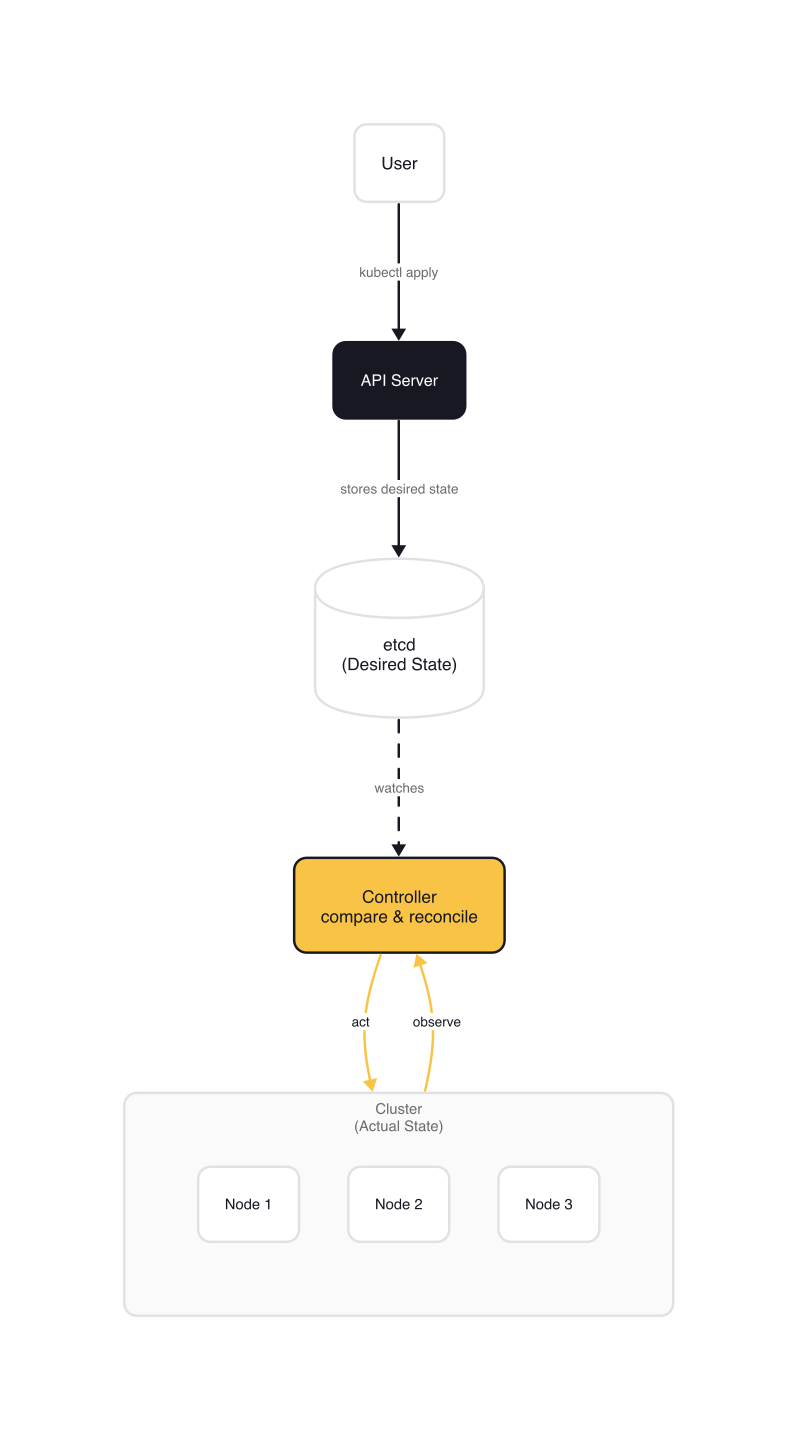

The core idea behind Kubernetes is deceptively simple: you declare what you want your system to look like, and Kubernetes continuously works to make reality match that declaration.

This is the declarative model. Instead of telling the system "create three Pods," you tell it "there should always be three Pods running this container image." The difference matters. In an imperative approach, if one Pod crashes, nothing happens automatically. In a declarative approach, Kubernetes detects the drift between desired state (three Pods) and actual state (two Pods), and creates a new one.
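A toy version of that loop fits in a few lines of Python. This is a sketch under loose assumptions, not how the real controllers work (they are Go programs that watch the API server): the Cluster class and reconcile function are hypothetical, and "actual state" is just an in-memory set of Pod names.

```python
import itertools
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """Toy stand-in for the cluster's actual state: a set of Pod names."""
    pods: set[str] = field(default_factory=set)


_ids = itertools.count(1)  # hypothetical Pod name suffixes


def reconcile(desired_replicas: int, cluster: Cluster) -> list[str]:
    """One pass of a reconciliation loop: compare desired vs actual, then act.

    Returns the actions taken so the caller can see what changed.
    """
    actions = []
    actual = len(cluster.pods)
    # Too few Pods: create the missing ones (range is empty otherwise).
    for _ in range(desired_replicas - actual):
        name = f"nginx-{next(_ids)}"
        cluster.pods.add(name)
        actions.append(f"create {name}")
    # Too many Pods: delete the surplus, in sorted order for determinism.
    for name in sorted(cluster.pods)[: max(0, actual - desired_replicas)]:
        cluster.pods.remove(name)
        actions.append(f"delete {name}")
    return actions


cluster = Cluster()
print(reconcile(3, cluster))  # ['create nginx-1', 'create nginx-2', 'create nginx-3']
cluster.pods.discard("nginx-2")  # simulate a crashed Pod
print(reconcile(3, cluster))  # ['create nginx-4']
print(reconcile(3, cluster))  # [] (no drift, nothing to do)
```

Each pass is idempotent: when actual state already matches desired state, reconcile does nothing, which is what makes it safe to run the same loop over and over.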

Here is what that looks like in practice. This is the canonical nginx Deployment from the official Kubernetes documentation:

```yaml
# nginx-deployment.yaml (kubernetes/website: examples/controllers/nginx-deployment.yaml)
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
  labels:
    app: nginx
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.14.2
        ports:
        - containerPort: 80
```

This manifest says: "I want three replicas of an nginx container, labeled app: nginx, listening on port 80." You submit it to the Kubernetes API server (typically with kubectl apply -f nginx-deployment.yaml), which stores the desired state in etcd, a distributed key-value store. The Deployment controller watches Deployment objects and creates a ReplicaSet for the Pod template; the ReplicaSet controller then compares desired and actual state and creates or removes Pods until they converge.

If a node goes down and takes a Pod with it, the ReplicaSet controller notices the replica count dropped below three and creates a replacement Pod, which the scheduler places on a healthy node. If you update the image from nginx:1.14.2 to nginx:1.25.4, the Deployment controller performs a rolling update: it spins up new Pods with the new image, waits for them to become Ready (pass their readiness checks), then terminates old ones.
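How many Pods change at once is governed by the Deployment's rolling-update settings, maxSurge and maxUnavailable, both 25% by default. The nginx Deployment above sets neither, so the defaults apply. The sketch below (the function name is hypothetical) shows the documented conversion from percentages to Pod counts: maxSurge rounds up and maxUnavailable rounds down.

```python
import math


def rolling_update_bounds(replicas: int, max_surge_pct: int = 25,
                          max_unavailable_pct: int = 25) -> tuple[int, int]:
    """Return (max total Pods, min available Pods) during a rolling update.

    Percentages convert to absolute counts the way the Deployment docs
    describe: maxSurge rounds up, maxUnavailable rounds down.
    """
    max_surge = math.ceil(replicas * max_surge_pct / 100)
    max_unavailable = math.floor(replicas * max_unavailable_pct / 100)
    return replicas + max_surge, replicas - max_unavailable


print(rolling_update_bounds(3))   # (4, 3)
print(rolling_update_bounds(10))  # (13, 8)
```

For three replicas, the controller may run at most four Pods and keeps all three available throughout, which is why it must wait for each new Pod to become Ready before terminating an old one.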

This reconciliation loop, where controllers continuously drive actual state toward desired state, is the single architectural pattern that explains nearly everything Kubernetes does. Deployments, Services, Jobs, network policies: they all follow the same pattern. Declare what you want, and a controller makes it so.

The Capabilities: What You Get Out of the Box

Kubernetes is not just a container scheduler. It provides a set of primitives that, taken together, handle the operational concerns of running distributed applications.

Service discovery and load balancing. When a Pod needs to reach another service, it uses a DNS name. Most clusters run CoreDNS, the default DNS add-on, which resolves Service names to cluster-internal IP addresses. Its kubernetes plugin implements the Kubernetes DNS specification, serving records for the cluster domain (cluster.local by default):

```
# CoreDNS Corefile, adapted from coredns/coredns plugin/kubernetes/README.md examples
10.0.0.0/17 cluster.local {
    kubernetes {
        pods verified
    }
}
```

Any Pod in the cluster can reach a Service by name, such as my-service.my-namespace.svc.cluster.local. Kubernetes automatically load-balances connections across the Pods backing that Service.
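The naming scheme is regular enough to build by hand. This sketch assumes the default cluster.local domain; the helper names and the example IP are hypothetical. Pod records use the Pod's IPv4 address with dashes in place of dots, and with pods verified in the Corefile above, CoreDNS answers such a name only if a Pod with that IP exists in the given namespace.

```python
import ipaddress


def service_fqdn(service: str, namespace: str, cluster_domain: str = "cluster.local") -> str:
    """Fully qualified DNS name that cluster DNS serves for a Service."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


def pod_dns_name(pod_ip: str, namespace: str, cluster_domain: str = "cluster.local") -> str:
    """Pod A-record name: the IPv4 address with dots replaced by dashes."""
    ip = ipaddress.IPv4Address(pod_ip)  # raises ValueError on a malformed address
    return f"{str(ip).replace('.', '-')}.{namespace}.pod.{cluster_domain}"


print(service_fqdn("my-service", "my-namespace"))
# my-service.my-namespace.svc.cluster.local
print(pod_dns_name("10.0.3.7", "default"))
# 10-0-3-7.default.pod.cluster.local
```

Inside the same namespace, the short name my-service also resolves, because each Pod's DNS search path includes its own namespace's svc domain.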

Automated rollouts and rollbacks. The Deployment controller performs rolling updates designed to avoid downtime. You change the desired state (new image, new environment variable), and the controller rolls out the change incrementally. If the new Pods fail their readiness checks, the rollout stalls rather than replacing every old Pod, and you can roll back to the previous revision with kubectl rollout undo.

Self-healing. Kubernetes restarts containers that crash, replaces Pods when nodes fail, and removes Pods that fail their readiness checks from Service endpoints so traffic stops reaching them. Liveness, readiness, and startup probes give you fine-grained control over what "healthy" means for your application.
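Probes are deliberately tolerant of one-off blips. The sketch below replays a series of liveness-probe results under the default failureThreshold of 3 (the kubelet probes every periodSeconds, 10 by default). It is a simplified, hypothetical model, not kubelet code: it ignores initial delays, timeouts, and restart back-off.

```python
def probe_outcomes(results: list[bool], failure_threshold: int = 3) -> list[str]:
    """Replay probe results (True = success) and report what happens after each.

    Only `failure_threshold` consecutive failures trigger a restart;
    any success resets the failure counter.
    """
    consecutive_failures = 0
    states = []
    for ok in results:
        if ok:
            consecutive_failures = 0
            states.append("healthy")
        else:
            consecutive_failures += 1
            if consecutive_failures >= failure_threshold:
                states.append("restart")
                consecutive_failures = 0  # the restarted container starts fresh
            else:
                states.append("failing")
    return states


# One blip is tolerated; three failures in a row trigger a restart.
print(probe_outcomes([True, False, True, False, False, False, True]))
# ['healthy', 'failing', 'healthy', 'failing', 'failing', 'restart', 'healthy']
```

A readiness probe counts failures the same way, but crossing the threshold marks the Pod not ready (removing it from Service endpoints) instead of restarting the container.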

Horizontal scaling. Scale a Deployment from 3 replicas to 10 with a single field change, or configure a HorizontalPodAutoscaler to scale based on CPU, memory, or custom metrics.
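The HorizontalPodAutoscaler's core rule, as documented, is desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue), skipped when the ratio is within a tolerance (0.1 by default) of 1.0. Here is a simplified Python rendering; the function name is hypothetical, and it ignores per-Pod averaging, missing metrics, and the scale-down stabilization window that the real controller applies.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int, max_replicas: int, tolerance: float = 0.1) -> int:
    """Core HPA scaling rule: ceil(current * currentMetric / targetMetric).

    No change if the ratio is within `tolerance` of 1.0; otherwise the
    result is clamped to [min_replicas, max_replicas].
    """
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    proposed = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, proposed))


print(desired_replicas(3, 90, 50, 1, 10))   # 6 (90% CPU vs a 50% target)
print(desired_replicas(3, 52, 50, 1, 10))   # 3 (within tolerance, no change)
print(desired_replicas(4, 20, 50, 1, 10))   # 2 (scale down)
print(desired_replicas(8, 100, 50, 1, 10))  # 10 (16 clamped to maxReplicas)
```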

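Secret and configuration management. Secrets and ConfigMaps decouple sensitive data and configuration from container images. You can change configuration without rebuilding the image: ConfigMaps and Secrets mounted as volumes are eventually refreshed inside running Pods, while values injected as environment variables take effect only when the Pods restart.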

Storage orchestration. Kubernetes supports pluggable storage backends through PersistentVolumes and StorageClasses. Local disk, NFS, cloud block storage, and distributed filesystems like Ceph all work through the same interface.

Batch execution. Jobs and CronJobs manage one-off and scheduled workloads. A Job runs a task to completion and tracks success or failure. A CronJob schedules Jobs on a repeating interval.

Ecosystem integration. Kubernetes exposes its internal state through well-defined APIs that ecosystem tools consume natively. A clear example is Prometheus, which uses Kubernetes service discovery to find scrape targets automatically:

```yaml
# prometheus-kubernetes.yml (prometheus/prometheus: documentation/examples, trimmed)
scrape_configs:
  - job_name: "kubernetes-apiservers"
    kubernetes_sd_configs:
      - role: endpoints  # Discover targets via Kubernetes endpoint objects
    # ... scheme, tls_config, and authorization omitted for brevity
    relabel_configs:
      # Filter to only the default/kubernetes/https endpoint
      - source_labels:
          [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
        action: keep
        regex: default;kubernetes;https
```

Prometheus does not need a static list of targets. It discovers API servers, nodes, pods, and services through the Kubernetes API, using kubernetes_sd_configs with different roles. This is the pattern: Kubernetes provides the platform, and CNCF ecosystem projects like Prometheus, Envoy, Helm, and Argo plug in through its APIs.
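The keep rule in the scrape config above joins the listed source label values with a semicolon (the default separator) and discards any target whose joined value does not fully match the regex. Below is a small Python model of that behavior with hypothetical target data; Prometheus itself uses RE2 syntax, and Python's re module is only a stand-in for this simple pattern.

```python
import re


def keep(target_labels: dict[str, str], source_labels: list[str], regex: str,
         separator: str = ";") -> bool:
    """Model of Prometheus `action: keep`: join source label values (missing
    labels count as empty strings) and keep the target only on a full match."""
    value = separator.join(target_labels.get(name, "") for name in source_labels)
    return re.fullmatch(regex, value) is not None


SOURCE = ["__meta_kubernetes_namespace", "__meta_kubernetes_service_name",
          "__meta_kubernetes_endpoint_port_name"]

# Hypothetical endpoints discovered through the Kubernetes API.
targets = [
    {"__meta_kubernetes_namespace": "default",
     "__meta_kubernetes_service_name": "kubernetes",
     "__meta_kubernetes_endpoint_port_name": "https"},
    {"__meta_kubernetes_namespace": "kube-system",
     "__meta_kubernetes_service_name": "kube-dns",
     "__meta_kubernetes_endpoint_port_name": "dns"},
]

kept = [t for t in targets if keep(t, SOURCE, "default;kubernetes;https")]
print(len(kept))  # 1 (only the API server endpoint survives)
```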

What Kubernetes Is Not

Understanding what Kubernetes is not is just as important for the KCNA exam as understanding what it is.

Not a Platform as a Service. Kubernetes does not deploy your source code, build your container images, or provide application-level middleware like message queues or databases. It operates at the container level, not the application level. CI/CD pipelines, logging stacks, and monitoring systems are separate concerns that run on top of Kubernetes or integrate with it.

Not a traditional orchestrator. The word "orchestration" implies a sequential workflow: do A, then B, then C. Kubernetes does not work that way. It is a set of independent control loops, each watching a slice of the desired state and driving the actual state to match. The API server does not coordinate a sequence of steps. Controllers act independently and concurrently. This design makes the system resilient: if one controller falls behind, the others keep working.

Not opinionated about tooling. Kubernetes does not mandate a specific logging solution, monitoring stack, or configuration language. It provides hooks and APIs. You choose Prometheus or Datadog for monitoring, Fluentd or Vector for logging, Helm or Kustomize for packaging. The CNCF ecosystem, with over 200 projects, fills these gaps. The KCNA exam tests your awareness of this ecosystem, not just Kubernetes itself.

Not limited to microservices. Monoliths, stateful databases, batch processing pipelines, machine learning training jobs, and GPU-accelerated inference workloads all run on Kubernetes. If an application can run in a container, Kubernetes can manage it.

Gotchas

A few misconceptions worth clearing up before moving on.

Kubernetes does not fix application design. Wrapping a poorly designed monolith in a container and deploying it on Kubernetes does not magically make it scalable or resilient. Kubernetes manages the lifecycle of containers. Your application design determines whether that lifecycle management is useful.

The complexity is inherent, not incidental. "Kubernetes is complex" is a common complaint, but it misses the point. The alternative is building scheduling, service discovery, self-healing, secret management, and rolling updates yourself, or accepting the limitations of not having them. The complexity was always there. Kubernetes makes it explicit and manageable.

The declarative model requires a mental shift. If your background is imperative scripting ("create this, then configure that, then restart the other thing"), the Kubernetes approach feels unfamiliar at first. You describe the end state, not the steps to get there. The system figures out the steps. This is one of the most important conceptual shifts the KCNA tests.

KCNA Exam Note: The exam frequently tests the difference between imperative and declarative approaches. Understand that Kubernetes controllers use a reconciliation loop: they watch desired state, compare it with actual state, and take corrective action. This is the core operating model.

Wrap-up

Kubernetes is a declarative platform born from Google's decade of running containers at scale with Borg. It automates the scheduling, scaling, networking, and lifecycle management of containerized workloads across a cluster of machines. Its design is built around a single pattern: controllers that continuously drive actual state toward desired state.

The reconciliation loop is the one concept that ties everything together. Deployments, Services, storage, scaling: they all work because a controller somewhere is watching the desired state you declared and making corrections when reality drifts. If you take one idea from this article into the rest of the KCNA curriculum, make it this one.

Next up: Kubernetes Architecture: Control Plane and Worker Nodes, where you will see how the components that implement this reconciliation loop (the API server, etcd, the scheduler, controllers, and the kubelet) are organized into a control plane and worker nodes.

Kubernetes Fundamentals (1 of 8)

