The Kubernetes Networking Model

You kubectl apply a Deployment and your Pods start talking to each other across nodes. No port mappings, no NAT rules, no manual route configuration. It just works. But "it just works" hides a set of deliberate design decisions that shape how every packet moves through your cluster.

Everything in Kubernetes networking builds on this model: Services, NetworkPolicy, Ingress, and service mesh all assume it is in place. It is also a core topic in the KCNA Container Orchestration domain (22% of the exam). Get the fundamentals right and the rest follows.

This article covers the four rules Kubernetes mandates for networking, how CNI plugins implement those rules, the trade-offs between overlay and native routing, and where kube-proxy fits into the picture.

The Four Rules of Kubernetes Networking

Kubernetes defines a networking model with four non-negotiable requirements. Every cluster must satisfy all of them, regardless of the underlying infrastructure.

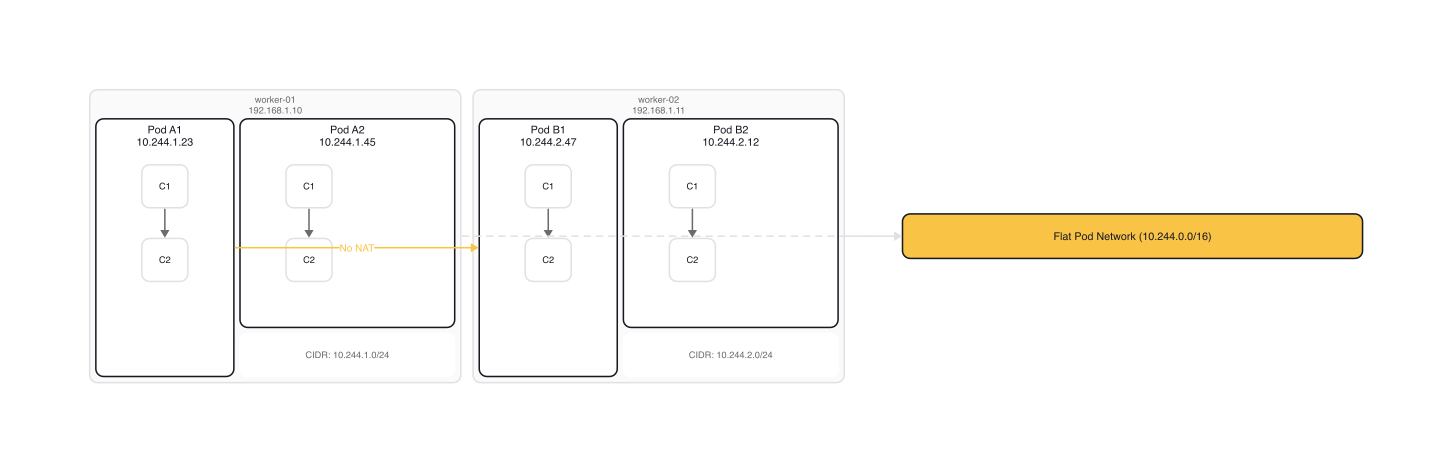

Rule 1: Every Pod gets its own IP address. Each Pod receives a unique, cluster-wide IP. Containers within the same Pod share that IP and communicate over localhost. This is possible because all containers in a Pod share a single network namespace.

Rule 2: All Pods can reach all other Pods without NAT. A Pod on Node A can send a packet to a Pod on Node B using the destination Pod's IP directly. No network address translation, no port mapping, no proxies. The source IP the receiver sees is the real IP of the sender.

Rule 3: Agents on a node can reach all Pods on that node. The kubelet, kube-proxy, and system daemons running on a node can communicate with every Pod scheduled to that node.

Rule 4: Container-to-container communication within a Pod happens over localhost. Since all containers in a Pod share a network namespace, they communicate through 127.0.0.1 on different ports, just like processes on the same machine.
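Rule 4 can be tried without a cluster. The sketch below is a stand-in rather than Kubernetes code: two threads in one Python process play the roles of two containers in the same Pod, which is a fair analogy only because both would see the same loopback interface. The function names are made up for this illustration.

```python
import socket
import threading


def serve_once(listener: socket.socket) -> None:
    """Accept one connection and send back what arrives, upper-cased."""
    conn, _ = listener.accept()
    with conn:
        conn.sendall(conn.recv(1024).upper())


def localhost_round_trip(message: bytes) -> bytes:
    # "Container A": listen on loopback. Port 0 lets the OS pick a free port.
    listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    listener.bind(("127.0.0.1", 0))
    listener.listen(1)
    port = listener.getsockname()[1]
    worker = threading.Thread(target=serve_once, args=(listener,))
    worker.start()

    # "Container B": reach the sibling over 127.0.0.1, no Pod IP involved.
    with socket.create_connection(("127.0.0.1", port)) as client:
        client.sendall(message)
        reply = client.recv(1024)

    worker.join()
    listener.close()
    return reply


if __name__ == "__main__":
    print(localhost_round_trip(b"ping"))
```

In a real Pod the two sides would be separate containers, and the only thing they need to agree on is the port number.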

Why this matters

These four rules create what is effectively a flat network. Every Pod behaves like a host on a shared LAN segment. You don't need to coordinate port allocations across teams. You don't need dynamic port remapping. Applications can use well-known ports (80, 443, 5432) without collisions because each Pod has its own IP.

This is a sharp departure from pre-Kubernetes Docker networking, where by default each container sat on a private, host-local bridge network and was reachable from other machines only through ports published on the host. Running two containers on port 80 meant one had to be published on a different host port. Kubernetes eliminated that coordination problem entirely.

    $ kubectl get pods -o wide
    NAME                       READY   STATUS    RESTARTS   AGE   IP            NODE
    frontend-6b7f6d4bc-x2k9p   1/1     Running   0          12m   10.244.1.23   worker-01
    backend-8d9f7c3ab-r8m3q    1/1     Running   0          12m   10.244.2.47   worker-02

In this output, frontend on worker-01 can reach backend at 10.244.2.47 directly. No NAT, no port translation. The networking model guarantees it.

How CNI Plugins Implement the Model

Kubernetes defines what the network must look like but does not implement it. That job belongs to Container Network Interface (CNI) plugins.

What CNI is

CNI is a CNCF specification that defines a standard interface between container runtimes and network plugins. When the kubelet asks the container runtime to create a Pod, the runtime calls the configured CNI plugin to set up networking. The specification defines five core operations:

- ADD: attach a container to the network, assign an IP

- DEL: detach a container from the network, release the IP

- CHECK: verify the container's network configuration is correct

- VERSION: report supported CNI spec versions

- GC: garbage-collect stale network resources

The runtime communicates with the plugin through environment variables (CNI_COMMAND, CNI_CONTAINERID, CNI_NETNS) and passes the network configuration as JSON on stdin. The plugin returns results on stdout.
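To make the calling convention concrete, here is a minimal sketch of the plugin side of that exchange. It is a toy, not a working plugin: it creates no interface, the returned address is hard-coded, and `handle_cni_call` is a hypothetical name. `CNI_IFNAME` is another variable defined by the CNI specification that is not listed above, and the result shows only a subset of the fields the spec allows.

```python
import json

# Versions this toy plugin claims to support (illustrative values).
SUPPORTED_VERSIONS = ["0.4.0", "1.0.0"]


def handle_cni_call(env: dict, stdin_text: str) -> str:
    """Return what a toy plugin would print on stdout for one invocation."""
    command = env["CNI_COMMAND"]
    if command == "VERSION":
        return json.dumps(
            {"cniVersion": "1.0.0", "supportedVersions": SUPPORTED_VERSIONS}
        )

    net_conf = json.loads(stdin_text)  # network configuration arrives on stdin
    if command == "ADD":
        return json.dumps({
            "cniVersion": net_conf["cniVersion"],
            "interfaces": [
                {"name": env["CNI_IFNAME"], "sandbox": env["CNI_NETNS"]}
            ],
            # Hypothetical fixed address; a real plugin asks its IPAM component.
            "ips": [{"address": "10.244.1.23/24", "interface": 0}],
        })
    if command in ("DEL", "CHECK", "GC"):
        return ""  # this sketch keeps no state to release or verify
    raise ValueError(f"unsupported CNI_COMMAND: {command}")


# Example invocation with made-up values for the container ID and netns path.
example_env = {
    "CNI_COMMAND": "ADD",
    "CNI_CONTAINERID": "abc123",
    "CNI_NETNS": "/var/run/netns/demo",
    "CNI_IFNAME": "eth0",
}
example_conf = '{"cniVersion": "1.0.0", "name": "demo-net", "type": "toy"}'

if __name__ == "__main__":
    print(handle_cni_call(example_env, example_conf))
```

A real plugin is an executable that reads `os.environ` and `sys.stdin` directly. Taking them as arguments here just keeps the sketch easy to run and test.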

CNI configuration in practice

Here is the CNI configuration from Flannel's deployment manifest (flannel-io/flannel/Documentation/kube-flannel.yml, ConfigMap kube-flannel-cfg). This gets written to /etc/cni/net.d/10-flannel.conflist on each node:

    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }

This is a plugin chain. When a Pod is created, the runtime executes the flannel plugin first (which creates the network interface and assigns an IP), then the portmap plugin (which configures any hostPort mappings). CNI plugins come in two categories: interface plugins that create the network interface inside the container, and chained plugins that modify an already-created interface.
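The ordering of a chain can be read straight from the configuration. The sketch below parses the conflist shown above and lists the plugin types in the order a runtime would invoke them. It relies on the CNI specification's rule that ADD walks the list front to back and DEL walks it in reverse. `execution_order` is a hypothetical helper, not part of any CNI library.

```python
import json

# The Flannel conflist from above, embedded so the sketch is self-contained.
FLANNEL_CONFLIST = """
{
  "name": "cbr0",
  "cniVersion": "0.3.1",
  "plugins": [
    {"type": "flannel",
     "delegate": {"hairpinMode": true, "isDefaultGateway": true}},
    {"type": "portmap",
     "capabilities": {"portMappings": true}}
  ]
}
"""


def execution_order(conflist: dict, command: str) -> list:
    """Plugin types in the order the runtime would run them."""
    types = [plugin["type"] for plugin in conflist["plugins"]]
    if command == "ADD":
        return types                  # set up: first plugin to last
    if command == "DEL":
        return list(reversed(types))  # tear down: last plugin to first
    raise ValueError(f"this sketch only covers ADD and DEL, got {command}")


if __name__ == "__main__":
    conf = json.loads(FLANNEL_CONFLIST)
    print("ADD:", execution_order(conf, "ADD"))
    print("DEL:", execution_order(conf, "DEL"))
```

Reversing the order on DEL means chained plugins undo their changes before the interface they modified is removed.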

IPAM: how Pods get IP addresses

Every CNI plugin needs an IP Address Management (IPAM) strategy. The IPAM component allocates and tracks which IPs are assigned to which Pods. Common approaches:

- host-local: allocates IPs from a predefined subnet range, storing state on the local filesystem. Used by Flannel.

- calico-ipam: allocates IPs from IP pools, dynamically assigning small blocks of IPs per node as needed. Used by Calico.

- Cloud provider IPAM: allocates IPs from the cloud provider's VPC (e.g., AWS VPC CNI assigns real VPC IPs to Pods).
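The core of a node-local allocator fits in a few lines. The sketch below is loosely modelled on the host-local idea, with loud simplifications: leases live in an in-memory dict instead of on the filesystem, the first host address is assumed to be reserved for a gateway, and the lowest free address always wins. That is not necessarily the allocation order any real plugin uses. `NodeIpam` is a hypothetical name.

```python
import ipaddress


class NodeIpam:
    """Toy per-node allocator: hands out addresses from one subnet."""

    def __init__(self, subnet: str):
        self.network = ipaddress.ip_network(subnet)
        self.leases = {}  # container ID -> allocated address

    def allocate(self, container_id: str):
        if container_id in self.leases:  # repeated ADD: return the same IP
            return self.leases[container_id]
        in_use = set(self.leases.values())
        hosts = self.network.hosts()
        next(hosts)  # assumption: first host address is kept for the gateway
        for candidate in hosts:
            if candidate not in in_use:
                self.leases[container_id] = candidate
                return candidate
        raise RuntimeError(f"no free addresses left in {self.network}")

    def release(self, container_id: str) -> None:
        self.leases.pop(container_id, None)  # releasing twice is harmless


if __name__ == "__main__":
    ipam = NodeIpam("10.244.1.0/24")
    print(ipam.allocate("pod-a"))
    print(ipam.allocate("pod-b"))
    ipam.release("pod-a")
    print(ipam.allocate("pod-c"))
```

Because state is tracked per node, two nodes never need to coordinate as long as their subnets do not overlap.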

Kubernetes requires a CNI plugin compatible with spec version 0.4.0 or later, and recommends v1.0.0 compatibility.

Overlay vs. Native Routing

The biggest architectural decision in Kubernetes networking is how packets travel between Pods on different nodes. There are two fundamental approaches.

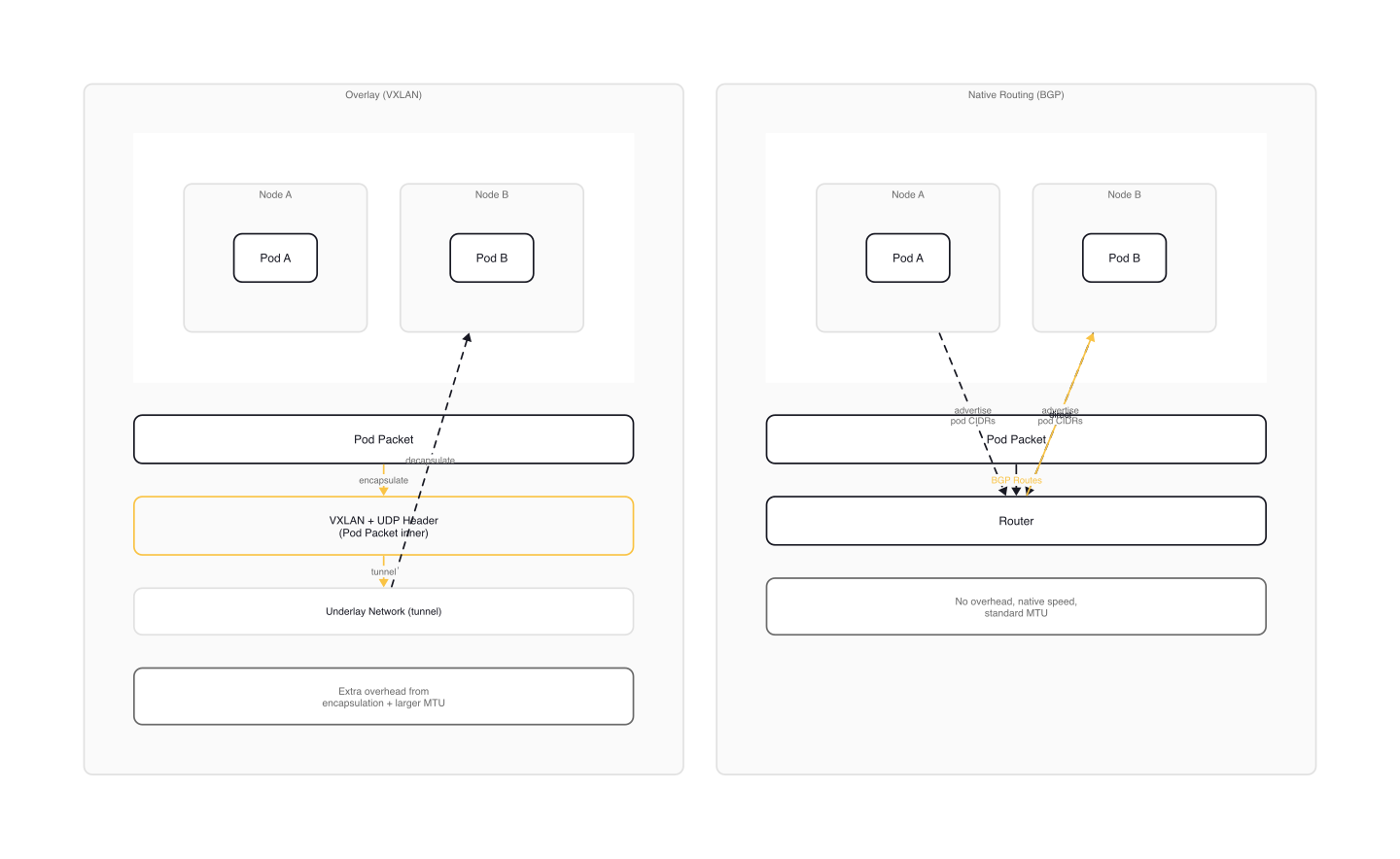

Overlay networks (encapsulated)

An overlay network wraps each Pod-to-Pod packet inside another packet for transport across the underlying node network. The most common encapsulation protocol is VXLAN, which wraps Layer 2 Ethernet frames inside UDP packets.

Flannel's network configuration (flannel-io/flannel/Documentation/kube-flannel.yml, ConfigMap net-conf.json) shows a typical VXLAN setup:

    {
      "Network": "10.244.0.0/16",
      "EnableNFTables": false,
      "Backend": {
        "Type": "vxlan"
      }
    }

By default, each node gets a /24 slice of the 10.244.0.0/16 range (e.g., node-01 gets 10.244.1.0/24, node-02 gets 10.244.2.0/24). When a Pod on node-01 sends a packet to a Pod on node-02, the VXLAN device that Flannel configures on node-01 wraps it in a VXLAN header and sends it as a UDP packet to node-02's real IP. The VXLAN device on node-02 decapsulates it and delivers the inner packet to the destination Pod.
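The subnet arithmetic is easy to check with Python's standard `ipaddress` module. This sketch splits the cluster range into /24 slices and finds the slice that contains a given Pod IP. Which node ends up with which slice is decided by the cluster, so the sketch deliberately stops at the subnet. `subnet_for` is a hypothetical helper.

```python
import ipaddress

CLUSTER_CIDR = ipaddress.ip_network("10.244.0.0/16")

# Every /24 that can be carved out of the cluster range.
NODE_SUBNETS = list(CLUSTER_CIDR.subnets(new_prefix=24))


def subnet_for(pod_ip: str):
    """Return the per-node /24 that contains this Pod IP."""
    address = ipaddress.ip_address(pod_ip)
    for subnet in NODE_SUBNETS:
        if address in subnet:
            return subnet
    raise ValueError(f"{pod_ip} is outside {CLUSTER_CIDR}")


if __name__ == "__main__":
    print(len(NODE_SUBNETS))          # number of /24 slices available
    print(subnet_for("10.244.2.47"))  # slice holding the backend Pod's IP
```

A /16 split into /24s gives 256 slices of 256 addresses each, which bounds both the node count and the number of Pod IPs per node under this layout.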

The advantage: overlay networks work on any underlying infrastructure. The nodes just need basic IP connectivity between them. Cloud VPCs, bare-metal data centers, even mixed environments.

The cost: each packet carries ~50 bytes of additional encapsulation overhead, reducing the effective MTU.
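The ~50 bytes break down as follows when the outer packet is IPv4: everything added around the Pod's original IP packet has to fit inside the link MTU. The sketch below does that arithmetic. The header sizes are the standard ones for Ethernet, IPv4, UDP, and VXLAN without optional extensions. `pod_mtu` is a hypothetical helper, and the 9000-byte jumbo-frame value is just an example.

```python
# Bytes wrapped around the Pod's original IP packet inside the outer packet.
INNER_ETHERNET_HEADER = 14  # the Pod's Layer 2 frame header, carried as payload
VXLAN_HEADER = 8
OUTER_UDP_HEADER = 8
OUTER_IPV4_HEADER = 20

VXLAN_OVERHEAD = (
    INNER_ETHERNET_HEADER + VXLAN_HEADER + OUTER_UDP_HEADER + OUTER_IPV4_HEADER
)


def pod_mtu(link_mtu: int, overhead: int = VXLAN_OVERHEAD) -> int:
    """Largest IP packet a Pod can send without exceeding the link MTU."""
    return link_mtu - overhead


if __name__ == "__main__":
    print(VXLAN_OVERHEAD)  # 50
    print(pod_mtu(1500))   # 1450
    print(pod_mtu(9000))   # 8950
```

An IPv6 underlay has a 40-byte outer header instead of 20, so the same calculation would leave less room for Pod traffic.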

Native routing (unencapsulated)

Native routing skips encapsulation entirely. Instead, it programs the network infrastructure to route Pod CIDRs directly. BGP (Border Gateway Protocol) is the standard mechanism: each node announces its Pod subnet to the network, and routers learn where to forward packets.

Calico in BGP mode is the canonical example. Each node runs a BIRD BGP daemon that peers with the network's routers (or other nodes). When a packet destined for 10.244.2.47 arrives at a router, the router already knows to forward it to node-02.

No encapsulation means no overhead, no reduced MTU, and full visibility into packet headers for network-level firewalls and monitoring tools. The trade-off: your network infrastructure must support BGP, or at minimum your cloud provider must support custom route tables.
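What the router does with those announcements is a longest-prefix match. The sketch below models a routing table whose entries map Pod subnets to the node that announced them. The node addresses (192.168.10.x) are invented for illustration, and a real router does this in hardware or in the kernel rather than by scanning a dict.

```python
import ipaddress

# Pod subnet -> next hop (node IP). Node addresses here are hypothetical.
ROUTES = {
    "10.244.1.0/24": "192.168.10.11",  # announced by node-01
    "10.244.2.0/24": "192.168.10.12",  # announced by node-02
}


def next_hop(destination: str):
    """Pick the most specific route containing the destination, if any."""
    address = ipaddress.ip_address(destination)
    best_prefix, best_hop = -1, None
    for cidr, hop in ROUTES.items():
        network = ipaddress.ip_network(cidr)
        if address in network and network.prefixlen > best_prefix:
            best_prefix, best_hop = network.prefixlen, hop
    return best_hop


if __name__ == "__main__":
    print(next_hop("10.244.2.47"))  # forwarded to node-02
    print(next_hop("10.99.0.1"))    # None: no Pod route matches
```

The packet that leaves the router is the Pod's original packet, untouched, which is why firewalls and monitoring tools see the real Pod addresses.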

Choosing between them

| Factor | Overlay (VXLAN) | Native routing (BGP) |
|---|---|---|
| Infrastructure requirements | Any IP connectivity between nodes | BGP-capable routers or cloud route tables |
| Packet overhead | ~50 bytes per packet | None |
| MTU impact | Reduced (typically 1450 vs. 1500) | None |
| Network visibility | Encapsulated packets obscure inner headers | Full visibility |
| Setup complexity | Low (works out of the box) | Medium (BGP peering configuration) |
| Cloud compatibility | All providers | Requires provider-specific setup |

For most clusters, especially those getting started or running in cloud environments, overlay networking with VXLAN is the pragmatic choice. For bare-metal clusters where performance matters or where you need full packet visibility, native routing with BGP is worth the additional setup.

kube-proxy and Service Networking

The CNI plugin handles Pod-to-Pod networking. But when you create a Service, something else needs to translate that Service's virtual IP (ClusterIP) into the actual Pod IPs behind it. That is kube-proxy's job.

kube-proxy runs on every node as a DaemonSet (or static Pod) and watches the Kubernetes API for Service and EndpointSlice changes. It does not sit in the data path itself; it programs kernel rules that redirect traffic addressed to a Service's ClusterIP to one of the backing Pods. For example, a Service with ClusterIP 10.96.42.10 backed by three Pods gets three DNAT rules, one for each Pod IP. When a packet arrives at 10.96.42.10:80, the rules DNAT it to one of the Pod endpoints at random.
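As a mental model, the translation behaves like a lookup table keyed by virtual IP and port. The sketch below is only that model: the real work happens in kernel rules, not in Python. The two Pod IPs from the earlier kubectl output are reused, while the third endpoint and the target port 8080 are assumptions made for this example.

```python
import random

# (ClusterIP, port) -> backing Pod endpoints. The third endpoint and the
# target port 8080 are hypothetical values for illustration.
SERVICES = {
    ("10.96.42.10", 80): [
        ("10.244.1.23", 8080),
        ("10.244.2.47", 8080),
        ("10.244.2.48", 8080),
    ],
}


def dnat(dst_ip: str, dst_port: int):
    """Rewrite a Service destination to one backend chosen at random."""
    endpoints = SERVICES.get((dst_ip, dst_port))
    if endpoints is None:
        return (dst_ip, dst_port)  # not a Service VIP: leave the packet alone
    return random.choice(endpoints)


if __name__ == "__main__":
    for _ in range(3):
        print(dnat("10.96.42.10", 80))    # one of the three Pod endpoints
    print(dnat("10.244.1.23", 8080))      # direct Pod traffic is unchanged
```

Only the destination is rewritten. Traffic sent straight to a Pod IP passes through untouched, which is how the flat Pod network and Service virtual IPs coexist.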

Proxy modes

kube-proxy supports three modes on Linux, each using different kernel mechanisms:

iptables (current default): installs iptables rules that perform DNAT (destination NAT) to redirect Service traffic to Pod endpoints. For each Service, kube-proxy creates a chain of rules. Random selection is used by default to pick a backend. The rule count grows linearly with the number of Services and endpoints: O(n).

nftables (stable since v1.33): the modern successor to iptables mode. It programs the kernel through the nftables API, which performs and scales better as the number of Services grows. The project positions it as the eventual replacement for iptables mode, which remains the default for now.

IPVS (deprecated in v1.35): uses the kernel's IP Virtual Server for load balancing, with hash table lookups instead of linear rule chains. Offers O(1) connection processing and supports advanced scheduling algorithms (round-robin, least connections, weighted). However, it still falls back to iptables for SNAT and packet filtering. The nftables mode has superseded it.
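The random selection in iptables mode is worth a closer look, because rules are evaluated top to bottom and the first match wins. To give each of n endpoints an equal share, the rules carry increasing match probabilities: 1/n for the first, 1/(n-1) for the second, and so on, with the last rule matching unconditionally. The sketch below checks that arithmetic with exact fractions. It models the probabilities only and generates no iptables rules. Both function names are hypothetical.

```python
from fractions import Fraction


def rule_probabilities(n: int) -> list:
    """Match probability of each rule in an n-endpoint chain, top to bottom."""
    return [Fraction(1, n - i) for i in range(n)]


def effective_shares(n: int) -> list:
    """Share of all traffic that each endpoint actually receives."""
    shares = []
    remaining = Fraction(1)  # traffic that has not matched an earlier rule
    for probability in rule_probabilities(n):
        shares.append(remaining * probability)
        remaining *= 1 - probability
    return shares


if __name__ == "__main__":
    print(rule_probabilities(3))  # 1/3, then 1/2, then 1
    print(effective_shares(3))    # 1/3 each
```

The same walk explains the O(n) cost: a packet for the last endpoint has to be tested against every earlier rule in the chain first.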

    $ kubectl get pods -n kube-system -l k8s-app=kube-proxy
    NAME               READY   STATUS    RESTARTS   AGE
    kube-proxy-7h4kt   1/1     Running   0          3d
    kube-proxy-b9x2l   1/1     Running   0          3d
    kube-proxy-m5r8w   1/1     Running   0          3d

Tip: Some CNI plugins like Cilium and Calico (in eBPF mode) can replace kube-proxy entirely, implementing Service load balancing directly in eBPF programs attached to the kernel. This eliminates iptables/nftables overhead and provides better performance at scale.

Gotchas

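No CNI plugin means no networking. On a freshly bootstrapped cluster without a CNI plugin, nodes report NotReady, and Pods that need Pod networking, such as CoreDNS, stay Pending. If a CNI configuration is present but the plugin is missing or broken, Pods are scheduled but sit in ContainerCreating indefinitely, waiting for a network interface that never arrives. Installing a CNI plugin is the first thing to do after kubeadm init.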

Three CIDR ranges that must not overlap. Pod CIDR (e.g., 10.244.0.0/16), Service CIDR (e.g., 10.96.0.0/12), and Node CIDR (your host network) are three separate address ranges. If they overlap, routing breaks in subtle and painful ways. Plan your IP address space before deploying.
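A quick pre-flight check catches overlaps before they reach a cluster. The sketch below compares every pair of ranges with the standard `ipaddress` module. The Pod and Service ranges are the examples from the text, the node range 192.168.0.0/24 is an assumed host network, and `overlapping_ranges` is a hypothetical helper.

```python
import ipaddress
from itertools import combinations


def overlapping_ranges(ranges: dict) -> list:
    """Return every pair of named CIDR ranges that share addresses."""
    networks = {name: ipaddress.ip_network(cidr) for name, cidr in ranges.items()}
    return [
        (first, second)
        for first, second in combinations(networks, 2)
        if networks[first].overlaps(networks[second])
    ]


if __name__ == "__main__":
    plan = {
        "pod": "10.244.0.0/16",
        "service": "10.96.0.0/12",
        "node": "192.168.0.0/24",  # assumed host network
    }
    print(overlapping_ranges(plan))  # [] means the plan is safe

    plan["node"] = "10.244.5.0/24"   # host network placed inside the Pod CIDR
    print(overlapping_ranges(plan))  # [('pod', 'node')]
```

Note that 10.96.0.0/12 spans 10.96.0.0 through 10.111.255.255, so it is wider than it looks. A check like this is more reliable than eyeballing the prefixes.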

Overlay networks reduce MTU. VXLAN adds ~50 bytes of encapsulation overhead. The default Ethernet MTU of 1500 bytes drops to ~1450 for Pod traffic. Applications sending maximum-sized packets may experience fragmentation or drops if the MTU is not configured correctly.

NetworkPolicy requires CNI support. The NetworkPolicy API is always available in any cluster, but it only does something if your CNI plugin implements it. Flannel alone does not support NetworkPolicy. If you need network segmentation, you need Calico, Cilium, or a similar plugin that enforces policies.

Changing CNI plugins is not trivial. Kubernetes itself offers no mechanism for migrating a running cluster from one CNI plugin to another. The new plugin may use different CIDR ranges, different routing rules, or different IPAM backends. Some plugins document their own migration procedures, but these are involved and carry risk, so in practice a switch often means rebuilding the cluster. Choose your CNI plugin before you deploy workloads.

Wrap-up

Kubernetes networking is a contract: every Pod gets an IP, every Pod can reach every other Pod, and NAT is not allowed. CNI plugins fulfill that contract, and your choice of plugin determines NetworkPolicy support, packet overhead, and whether you can replace kube-proxy with eBPF. The model is simple. The implementations vary. Choose your CNI plugin carefully, because it is one of the most consequential infrastructure decisions for a cluster.

Next up: Service Types: ClusterIP, NodePort, and LoadBalancer, where we look at how traffic reaches your workloads from inside and outside the cluster, and why Kubernetes uses virtual IPs instead of DNS round-robin.

Container Orchestration (1 of 7)
