Dockerfiles: Instructions, Layers, and Best Practices

You can copy a Dockerfile from a tutorial and get a working image. But when the build takes ten minutes, the image weighs 1.2 GB, your container runs as root, and a SIGTERM from Kubernetes never reaches your process, that working image becomes a production liability.

The gap between "it builds" and "it belongs in production" comes down to understanding what each Dockerfile instruction actually does. Not the syntax. The consequences. Every instruction carries intent: FROM picks your attack surface, RUN builds your layers, USER sets your security boundary. The CKAD exam tests this understanding directly. The "Application Design and Build" domain (20% of the exam) lists "Define, build and modify container images" as its first competency, and the exam expects you to write and modify Dockerfiles from scratch under time pressure.

Dockerfile Instructions: The Building Blocks

FROM: Choosing Your Base

Every Dockerfile starts with FROM. The choice of base image determines your attack surface, image size, and available tooling.

Three strategies dominate production Dockerfiles:

Distroless images strip out nearly everything except what the application needs at run time. No shell, no package manager, no coreutils. CoreDNS uses gcr.io/distroless/static-debian12:nonroot, and etcd uses the same family pinned by digest:

# github.com/etcd-io/etcd/Dockerfile
ARG ARCH=amd64
FROM --platform=linux/${ARCH} gcr.io/distroless/static-debian12@sha256:20bc6c...

Alpine images (~5 MB) provide a minimal Linux userland with apk for package management. cert-manager's webhook example uses Alpine for the final stage when it needs ca-certificates:

# github.com/cert-manager/webhook-example/Dockerfile (final stage)
FROM alpine:3.23@sha256:25109184c71b...
RUN apk add --no-cache ca-certificates

Debian-slim images are larger but include apt, bash, and standard GNU tools. NGINX uses debian:trixie-slim because it needs system packages for GPG verification and complex setup:

# github.com/nginx/docker-nginx/stable/debian/Dockerfile
FROM debian:trixie-slim

Alpine's musl libc occasionally breaks glibc-linked binaries, which is one reason projects with complex native dependencies (NGINX, in its default image) stay on Debian-slim. Distroless has no shell at all, so you cannot exec a shell in a running container to debug it; in Kubernetes, the usual workaround is an ephemeral debug container started with kubectl debug. That is a feature for security and a constraint for troubleshooting.

RUN: Executing Build Commands

RUN executes commands during the build and creates a new filesystem layer for each invocation. The NGINX Dockerfile demonstrates why this matters. It chains user creation, package installation, GPG verification, and cleanup into a single RUN:

# github.com/nginx/docker-nginx/stable/debian/Dockerfile
RUN set -x \
    && groupadd --system --gid 101 nginx \
    && useradd --system --gid nginx --no-create-home --home /nonexistent \
        --comment "nginx user" --shell /bin/false --uid 101 nginx \
    && apt-get update \
    && apt-get install --no-install-recommends --no-install-suggests \
        -y gnupg1 ca-certificates \
    # ... GPG key verification, package installation ...
    && apt-get remove --purge --auto-remove -y \
    && rm -rf /var/lib/apt/lists/* \
    # ...
    && ln -sf /dev/stdout /var/log/nginx/access.log \
    && ln -sf /dev/stderr /var/log/nginx/error.log \
    && mkdir /docker-entrypoint.d

Every && in that chain is deliberate. If any command fails, the entire layer fails. And critically, the cleanup (rm -rf /var/lib/apt/lists/*) happens in the same layer as the installation. If cleanup were a separate RUN, the deleted files would still occupy space in the previous layer.
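
A minimal sketch of the difference, assuming a Debian-based image and using curl purely as a placeholder package (neither detail comes from the NGINX Dockerfile). The commented-out variant and the active one leave the same files visible in the container, but only the second keeps the apt package lists out of the image layers.

```dockerfile
FROM debian:trixie-slim

# Anti-pattern: three layers. The package lists written by `apt-get update`
# are stored in the first layer, so the later `rm` only hides them.
# RUN apt-get update
# RUN apt-get install -y --no-install-recommends curl
# RUN rm -rf /var/lib/apt/lists/*

# Preferred: one layer. The lists are created and removed before the
# layer is committed, so they never reach the image.
RUN apt-get update \
    && apt-get install -y --no-install-recommends curl \
    && rm -rf /var/lib/apt/lists/*
```

Running docker history on each variant should show the size difference layer by layer.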

COPY vs ADD

COPY copies files from the build context into the image. ADD does the same but also auto-extracts local tar archives and can fetch remote URLs. etcd uses ADD for its binaries, but ADD offers no advantage there because the files are plain binaries:

# github.com/etcd-io/etcd/Dockerfile
ADD etcd /usr/local/bin/
ADD etcdctl /usr/local/bin/
ADD etcdutl /usr/local/bin/

Use COPY unless you specifically need tar extraction. COPY makes your intent explicit.
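
The contrast is easiest to see with the same archive passed to both instructions. This is an illustrative sketch: release.tar.gz and both destination paths are hypothetical names, and the file is assumed to sit in the build context.

```dockerfile
FROM alpine:3.23

# COPY places the archive in the image unchanged:
# /opt/archive/release.tar.gz
COPY release.tar.gz /opt/archive/

# ADD recognizes the local tar archive and unpacks its contents
# into /opt/unpacked/ instead of copying the file itself.
ADD release.tar.gz /opt/unpacked/
```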

ENV and ARG

ENV sets variables that persist into the running container. ARG sets build-time variables that disappear after the build. NGINX uses ENV to pin package versions so rebuilds are reproducible:

# github.com/nginx/docker-nginx/stable/debian/Dockerfile
ENV NGINX_VERSION 1.28.3
ENV NJS_VERSION 0.9.6
ENV NJS_RELEASE 1~trixie
ENV ACME_VERSION 0.3.1
ENV PKG_RELEASE 1~trixie
ENV DYNPKG_RELEASE 1~trixie

CoreDNS uses ARG before FROM to parameterize the base image, making it swappable at build time without editing the Dockerfile:

# github.com/coredns/coredns/Dockerfile
ARG BASE=gcr.io/distroless/static-debian12:nonroot
# ... (build stage) ...
FROM ${BASE}
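
To make the build-time versus run-time split concrete, here is a small sketch that is not taken from any of the projects above. The names VERSION and APP_VERSION and the alpine base are assumptions chosen for illustration.

```dockerfile
FROM alpine:3.23

# Build-time only. Override with: docker build --build-arg VERSION=2.0.0 .
ARG VERSION=1.0.0

# Copy the build argument into an environment variable so the value
# is still set when the container runs.
ENV APP_VERSION=${VERSION}

# During the build, RUN can read both the ARG and the ENV.
RUN echo "building ${VERSION} (APP_VERSION=${APP_VERSION})"

# At run time only APP_VERSION exists; VERSION is unset.
CMD ["sh", "-c", "echo running version $APP_VERSION"]
```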

WORKDIR, EXPOSE, USER, LABEL

WORKDIR sets the working directory for subsequent instructions. etcd uses a double WORKDIR trick: each invocation creates the directory if it doesn't exist, so WORKDIR /var/etcd/ followed by WORKDIR /var/lib/etcd/ creates both paths, with the last one becoming the active directory.
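
The two paths below come from the description above; the alpine base is an assumption, used only so the sketch has a shell to verify the result (etcd's real image is distroless and has none).

```dockerfile
FROM alpine:3.23

# Each WORKDIR creates its directory if it is missing.
WORKDIR /var/etcd/
WORKDIR /var/lib/etcd/

# Both directories now exist; commands run from the last one.
RUN pwd && ls -d /var/etcd /var/lib/etcd
```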

EXPOSE documents which ports the container listens on. CoreDNS exposes both TCP and UDP: EXPOSE 53 53/udp.

USER sets the user (and optionally the group) for all subsequent RUN, CMD, and ENTRYPOINT instructions and for the running container. Production images use it to drop root. CoreDNS runs as nonroot:nonroot (a user baked into distroless images). Prometheus uses the built-in nobody user. Grafana creates a custom user with a configurable UID:

# github.com/grafana/grafana/Dockerfile (final stage)
ARG GF_UID="472"
ARG GF_GID="0"
# ...
RUN if [ ! $(getent group "$GF_GID") ]; then \
      # Alpine: addgroup -S, Debian: groupadd --system
      addgroup -S -g $GF_GID grafana; \
    fi && \
    GF_GID_NAME=$(getent group $GF_GID | cut -d':' -f1) && \
    # Alpine: adduser -S, Debian: useradd --system --no-create-home
    adduser -S -u $GF_UID -G "$GF_GID_NAME" grafana && \
    mkdir -p "$GF_PATHS_DATA" "$GF_PATHS_LOGS" && \
    chown -R "grafana:$GF_GID_NAME" "$GF_PATHS_DATA" "$GF_PATHS_LOGS"
USER "$GF_UID"

LABEL adds metadata to the image. Prometheus uses the standard OCI annotation keys (org.opencontainers.image.*) for registry traceability:

# github.com/prometheus/prometheus/Dockerfile
LABEL org.opencontainers.image.authors="The Prometheus Authors" \
      org.opencontainers.image.vendor="Prometheus" \
      org.opencontainers.image.title="Prometheus" \
      org.opencontainers.image.description="The Prometheus monitoring system ..." \
      org.opencontainers.image.source="https://github.com/prometheus/prometheus" \
      org.opencontainers.image.documentation="https://prometheus.io/docs" \
      org.opencontainers.image.licenses="Apache License 2.0" \
      # ... (additional: url, variant)

VOLUME, STOPSIGNAL, and HEALTHCHECK

VOLUME marks a path for external storage. When Docker runs this image, it creates an anonymous volume at that path, and any writes to it after the VOLUME instruction during the build are discarded. In Kubernetes, you mount storage via a volumeMount in the Pod spec. The Dockerfile VOLUME instruction has no effect on Kubernetes volume binding. Prometheus declares /prometheus as a volume:

# github.com/prometheus/prometheus/Dockerfile
VOLUME [ "/prometheus" ]

STOPSIGNAL changes the signal sent when the container is stopped. The default is SIGTERM, but NGINX uses SIGQUIT for graceful shutdown, allowing in-flight requests to complete:

# github.com/nginx/docker-nginx/stable/debian/Dockerfile
STOPSIGNAL SIGQUIT

HEALTHCHECK defines a command Docker runs periodically to check if the container is healthy. Kubernetes ignores Dockerfile HEALTHCHECK instructions (it uses its own livenessProbe and readinessProbe in the Pod spec), but Docker Compose and standalone Docker respect them:

# docs.docker.com/reference/dockerfile/#healthcheck
HEALTHCHECK --interval=30s --timeout=5s --retries=3 \
    CMD wget -qO- http://localhost:9090/-/healthy || exit 1

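Note that the shell-form HEALTHCHECK CMD shown above cannot work in a distroless image: there is no shell to run the command and no wget to call. An exec-form check (a JSON array) avoids the shell but still needs a binary inside the image that can perform the probe.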

How Layers and Caching Work

Every RUN, COPY, and ADD instruction creates a new filesystem layer. Metadata-only instructions (ENV, EXPOSE, USER, LABEL, ARG) modify the image configuration but don't create filesystem layers.

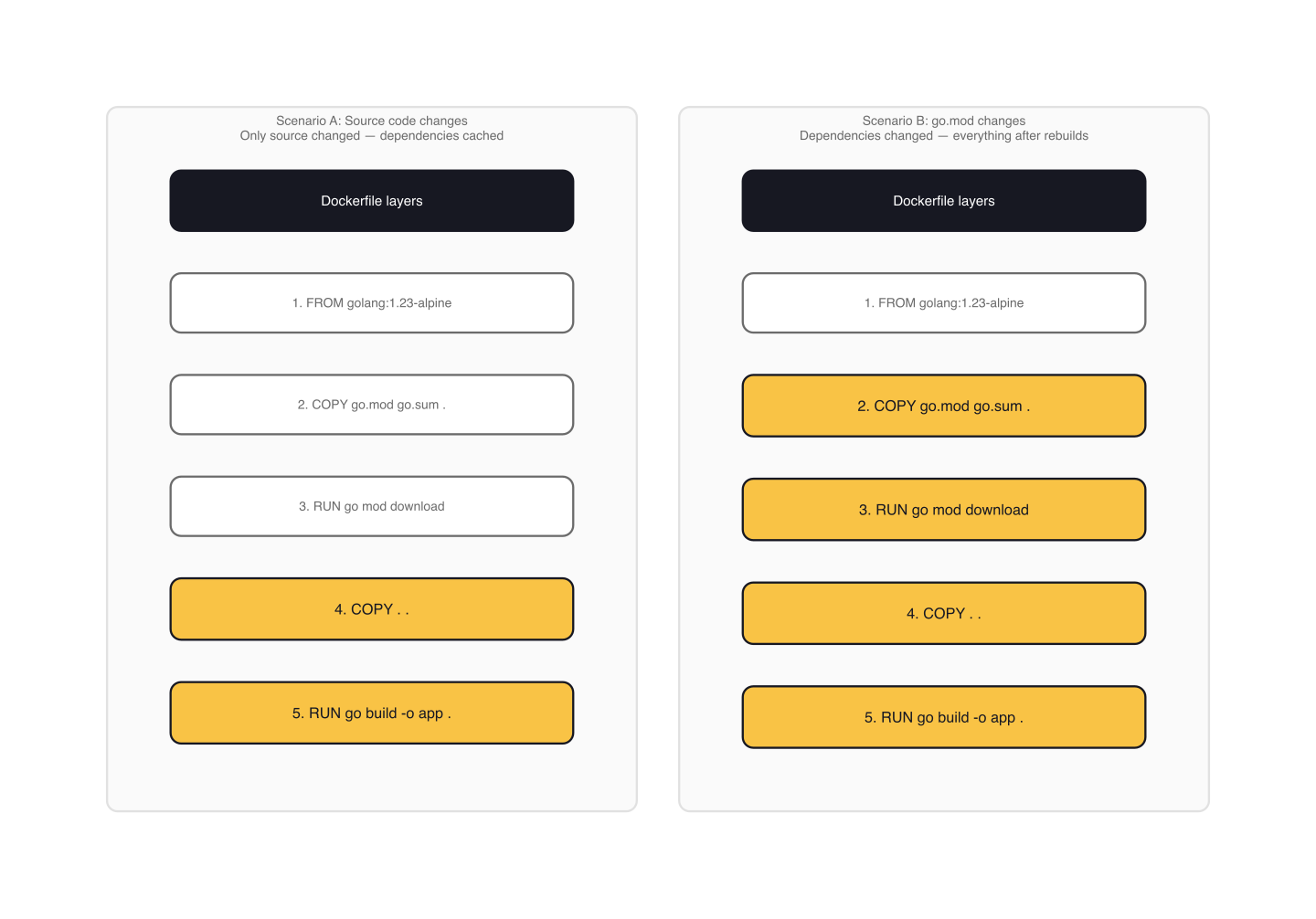

Docker caches each layer and reuses it on subsequent builds if nothing has changed. The cache check is sequential: if layer 3 is invalidated (because a source file changed), layers 4, 5, and everything after rebuild from scratch. This is why instruction ordering matters.

cert-manager's webhook Dockerfile demonstrates the dependency-first pattern. It copies go.mod and go.sum before the source code, then runs go mod download:

# github.com/cert-manager/webhook-example/Dockerfile
FROM golang:1.23-alpine3.19@sha256:5f333688... AS build_deps
RUN apk add --no-cache git
WORKDIR /workspace
COPY go.mod .
COPY go.sum .
RUN go mod download

FROM build_deps AS build
COPY . .
RUN CGO_ENABLED=0 go build -o webhook -ldflags '-w -extldflags "-static"' .

If you change your application code, only the COPY . . layer and the build step re-execute. The dependency download stays cached because go.mod and go.sum didn't change. Grafana applies the same pattern for both its Node.js and Go dependencies.

The NGINX pattern takes the opposite approach: pack everything into a single RUN so that install artifacts and cleanup coexist in one layer. Both strategies are valid. Use dependency-first ordering for builds where you want incremental caching. Use the single-layer pattern for installation steps where you need to clean up immediately.
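
The dependency-first ordering carries over to other ecosystems. The sketch below applies it to a Node.js project; it is not Grafana's actual Dockerfile, and the node:22-alpine tag and the server.js file are assumptions for illustration.

```dockerfile
FROM node:22-alpine

WORKDIR /app

# Dependency manifests change rarely, so copy them first.
COPY package.json package-lock.json ./

# This layer stays cached until one of the two manifest files changes.
RUN npm ci

# Source changes invalidate the cache only from this point on.
COPY . .

CMD ["node", "server.js"]
```

Editing server.js and rebuilding should reuse the cached npm ci layer and redo only the final COPY.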

Build Context and .dockerignore

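When you run docker build ., the directory you pass becomes the build context. The legacy builder sends the entire tree to the daemon; BuildKit transfers only the files the build actually references, but a broad COPY . . still pulls in everything that is not excluded. Without a .dockerignore, that includes .git/, node_modules/, test fixtures, and anything else in the directory.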

A .dockerignore file works like .gitignore: list patterns to exclude from the context. At minimum, exclude .git, build artifacts, and anything that contains secrets.

ENTRYPOINT vs CMD

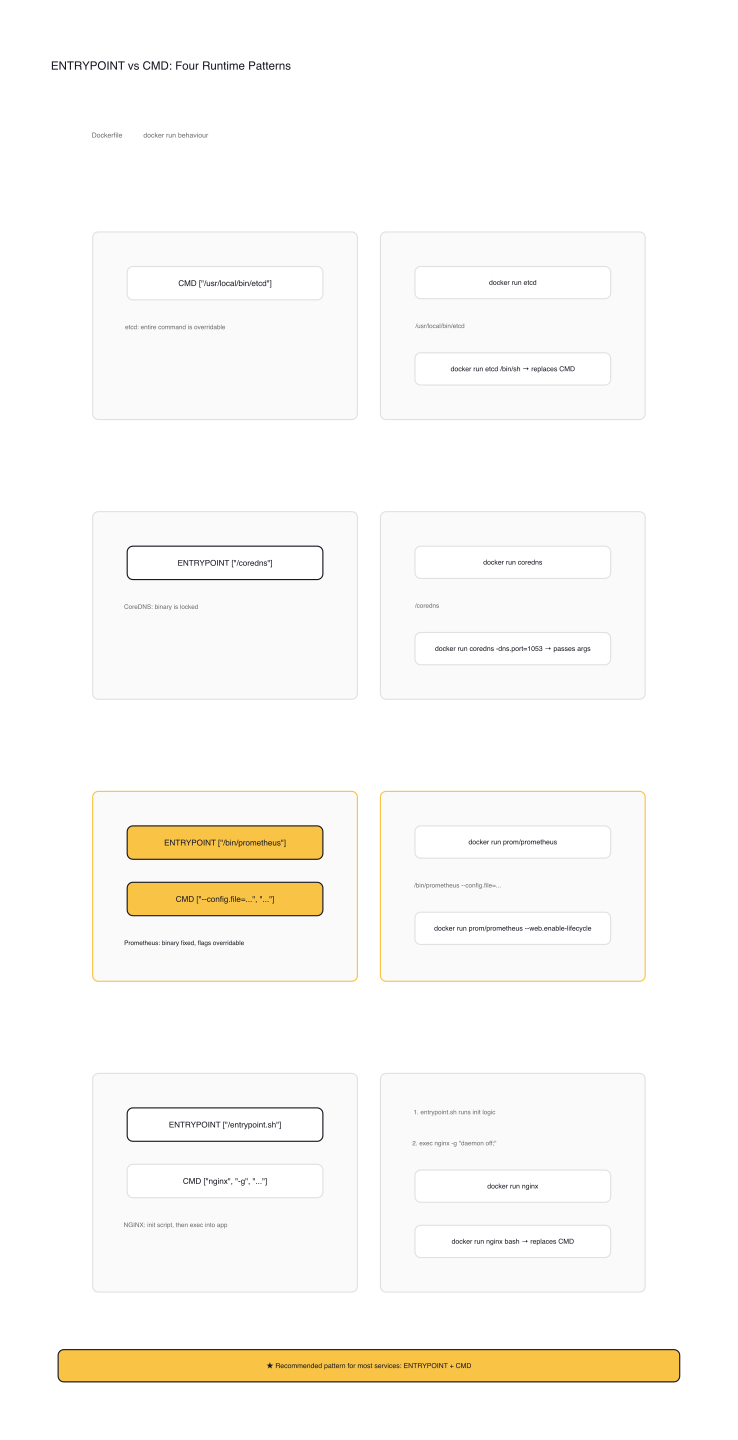

This is the single most confused pair of instructions, and the CKAD exam will test your understanding.

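CMD alone makes the entire command overridable. etcd uses CMD ["/usr/local/bin/etcd"], which means anything you pass after the image name replaces the etcd binary: docker run etcd /usr/local/bin/etcdctl version runs etcdctl instead. (docker run etcd /bin/sh would also replace the command, but it fails on this image because the distroless base has no shell.)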

ENTRYPOINT alone locks the binary. CoreDNS uses ENTRYPOINT ["/coredns"]. Anything passed at runtime becomes arguments to /coredns. You cannot replace the binary without --entrypoint.

ENTRYPOINT + CMD together is the most flexible pattern. Prometheus demonstrates it:

# github.com/prometheus/prometheus/Dockerfile
ENTRYPOINT [ "/bin/prometheus" ]
CMD [ "--config.file=/etc/prometheus/prometheus.yml", \
      "--storage.tsdb.path=/prometheus" ]

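The binary (/bin/prometheus) is fixed. The default flags come from CMD. Running docker run prom/prometheus --config.file=/custom.yml replaces the CMD portion and keeps the entrypoint. Note that the replacement is wholesale: the default --storage.tsdb.path flag is dropped too, so pass every flag you still need.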

NGINX combines an entrypoint script with CMD. The entrypoint script runs numbered drop-in scripts from /docker-entrypoint.d/, then execs into whatever CMD provides:

# github.com/nginx/docker-nginx/stable/debian/Dockerfile
COPY docker-entrypoint.sh /
COPY 10-listen-on-ipv6-by-default.sh /docker-entrypoint.d
COPY 15-local-resolvers.envsh /docker-entrypoint.d
COPY 20-envsubst-on-templates.sh /docker-entrypoint.d
COPY 30-tune-worker-processes.sh /docker-entrypoint.d
ENTRYPOINT ["/docker-entrypoint.sh"]
# ... EXPOSE 80, STOPSIGNAL SIGQUIT ...
CMD ["nginx", "-g", "daemon off;"]

Shell Form vs Exec Form

This distinction matters more than most people realize. Shell form (CMD node app.js) wraps the command in /bin/sh -c, so the shell becomes PID 1 and your process typically runs as its child. When Kubernetes sends SIGTERM to stop the container, the signal goes to the shell (PID 1), which may not forward it to your process. The process then keeps running until the grace period expires and it is killed with SIGKILL.

Exec form (CMD ["node", "app.js"]) runs the process directly as PID 1. It receives signals correctly. Every project in this article uses exec form. Do the same.
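
A minimal sketch of the two forms side by side, assuming a hypothetical app.js in the build context and node:22-alpine as a stand-in base image. Only one CMD takes effect per image, so the shell form is left commented out.

```dockerfile
FROM node:22-alpine
WORKDIR /app
COPY app.js .

# Shell form: Docker runs /bin/sh -c "node app.js".
# The shell is PID 1 and node is usually started as its child.
# CMD node app.js

# Exec form: node itself is PID 1 and receives SIGTERM directly.
CMD ["node", "app.js"]
```

One way to compare them is to build each variant, run docker stop on the container, and watch how long it takes to exit.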

| Scenario | Use | Example |
|---|---|---|
| Fixed binary, configurable flags | ENTRYPOINT + CMD | Prometheus |
| Single-purpose container | ENTRYPOINT only | CoreDNS, cert-manager |
| General-purpose / overridable | CMD only | etcd |
| Init script + app command | ENTRYPOINT script + CMD | NGINX |

Patterns from Production Dockerfiles

Run as Non-Root

Three approaches appear in the projects above: CoreDNS uses distroless's built-in nonroot user, Prometheus uses the system nobody account, and Grafana creates a dedicated user with a configurable UID. All three are valid. The important thing is that your container does not run as root. The Restricted profile of the Kubernetes Pod Security Standards requires it, and the CKAD exam environment expects it.
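
For a Debian-based image, the dedicated-user approach can be sketched as follows. The user name app, the ID 10001, and the binary path are all hypothetical; none of them come from the projects above.

```dockerfile
FROM debian:trixie-slim

# Hypothetical user name and IDs; pick values that suit your cluster policy.
RUN groupadd --system --gid 10001 app \
    && useradd --system --uid 10001 --gid app \
        --no-create-home --shell /usr/sbin/nologin app

# Hypothetical binary from the build context. It stays owned by root,
# so the unprivileged user can execute it but not modify it.
COPY app /usr/local/bin/app

# A numeric UID lets Kubernetes verify runAsNonRoot without resolving a name.
USER 10001:10001

ENTRYPOINT ["/usr/local/bin/app"]
```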

Pin Base Images by Digest

Tags are mutable. Someone can push a new image to alpine:3.23 at any time. etcd pins by SHA digest: gcr.io/distroless/static-debian12@sha256:20bc6c.... Digests are content-addressable and immutable. If the upstream image changes, your build still uses the exact same base. cert-manager pins both its builder and runtime images the same way.

Minimize the Final Image

Distroless images have no shell and no package manager, giving you the smallest attack surface. Alpine is ~5 MB and suitable when you need apk. Debian-slim is the fallback when you need apt and system-level tooling. The next article covers multi-stage builds, which let you use a full build environment and copy only the compiled binary to a minimal final image.

Clean Up in the Same Layer

Files deleted in a later RUN instruction still exist in the earlier layer. NGINX's pattern of chaining apt-get install, configuration, and rm -rf /var/lib/apt/lists/* in a single RUN ensures the apt cache never persists in the image.

Redirect Logs to stdout/stderr

NGINX symlinks its log files to /dev/stdout and /dev/stderr:

# github.com/nginx/docker-nginx/stable/debian/Dockerfile (inside RUN)
ln -sf /dev/stdout /var/log/nginx/access.log
ln -sf /dev/stderr /var/log/nginx/error.log

This lets Docker and Kubernetes collect logs natively via docker logs or kubectl logs. Containers that write to log files instead of standard streams require extra tooling to ship those logs.

Gotchas

ADD silently extracts tar archives. ADD archive.tar.gz /app/ doesn't copy the tar file; it extracts it into /app/. If you want the archive itself, use COPY.

ARG values don't persist into the running container, but they do appear in docker history output. Never pass secrets as build arguments.
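
BuildKit's secret mounts are one alternative. The sketch below assumes a builder with BuildKit enabled; the secret id api_token and the source file name are hypothetical.

```dockerfile
# syntax=docker/dockerfile:1
FROM alpine:3.23

# Anti-pattern: the value would be recorded in the image history.
# ARG API_TOKEN
# RUN echo "token is ${API_TOKEN}"

# The secret is mounted at /run/secrets/api_token for this RUN only
# and is not written to any layer.
RUN --mount=type=secret,id=api_token \
    TOKEN="$(cat /run/secrets/api_token)" \
    && echo "token length: ${#TOKEN}"
```

The secret is supplied at build time, for example with docker build --secret id=api_token,src=./api_token.txt .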

Each RUN instruction starts a fresh shell. RUN cd /app changes nothing for the next RUN. Use WORKDIR to set the directory persistently.
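
A short sketch of that behavior, using alpine as an assumed base and /app as a hypothetical directory:

```dockerfile
FROM alpine:3.23

RUN mkdir /app

# The cd applies only inside this one RUN.
RUN cd /app && pwd

# A new shell starts in the image's working directory again (/ here).
RUN pwd

# WORKDIR persists for every instruction that follows.
WORKDIR /app
RUN pwd
```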

Shell form CMD (CMD node app.js) wraps your process in /bin/sh -c, so the shell is PID 1 and your process is its child. Kubernetes sends SIGTERM to PID 1 (the shell), which may not forward it. Your process never learns it's time to shut down. Always use exec form.

ARG declared before FROM is only available in FROM lines. To use it inside a build stage, you must re-declare it (without a default) after the FROM. This catches people who parameterize their base image with ARG and then try to reference the same variable in a RUN instruction.
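
The scoping rule looks like this in practice. ALPINE_VERSION is a hypothetical argument name chosen for the sketch.

```dockerfile
# Global scope: usable only in FROM lines.
ARG ALPINE_VERSION=3.23

FROM alpine:${ALPINE_VERSION}

# Re-declare without a default to bring the global value into this stage.
# Without this line, ${ALPINE_VERSION} expands to an empty string below.
ARG ALPINE_VERSION

RUN echo "base image tag: ${ALPINE_VERSION}"
```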

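EXPOSE is documentation. It doesn't publish ports at runtime. With Docker you still need -p 8080:8080. In Kubernetes, containerPort in the Pod spec is likewise informational, and a Service is what exposes the port to clients.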

Deleting files in a separate RUN layer doesn't reduce image size. The file still exists in the layer where it was created. Combine installation and cleanup in a single RUN instruction.

Wrap-up

A Dockerfile is a contract between the image author and the runtime environment. FROM sets your security surface. USER sets your privilege boundary. ENTRYPOINT sets your signal handling. When you can explain why each instruction is there, not just what it does, you can write Dockerfiles under exam pressure and audit them in production. The projects in this article make deliberate choices because they have learned the cost of not doing so.

Next up: Multi-Stage Builds and Image Optimization. You will learn how to use multiple FROM stages to separate build dependencies from runtime, dramatically reducing image size and attack surface.

Application Design and Build (1 of 13)
