Managing Role-Based Access Control (RBAC)

RBAC sits in the Cluster Architecture, Installation and Configuration domain, which carries 25% of the CKA exam weight, and it is the authorization layer for every API call in the cluster. Get the scope wrong on a single binding and you've granted cluster-wide access when you intended per-namespace. The exam tests both failure modes: missing bindings that cause 403s, and overly broad bindings you're asked to tighten.

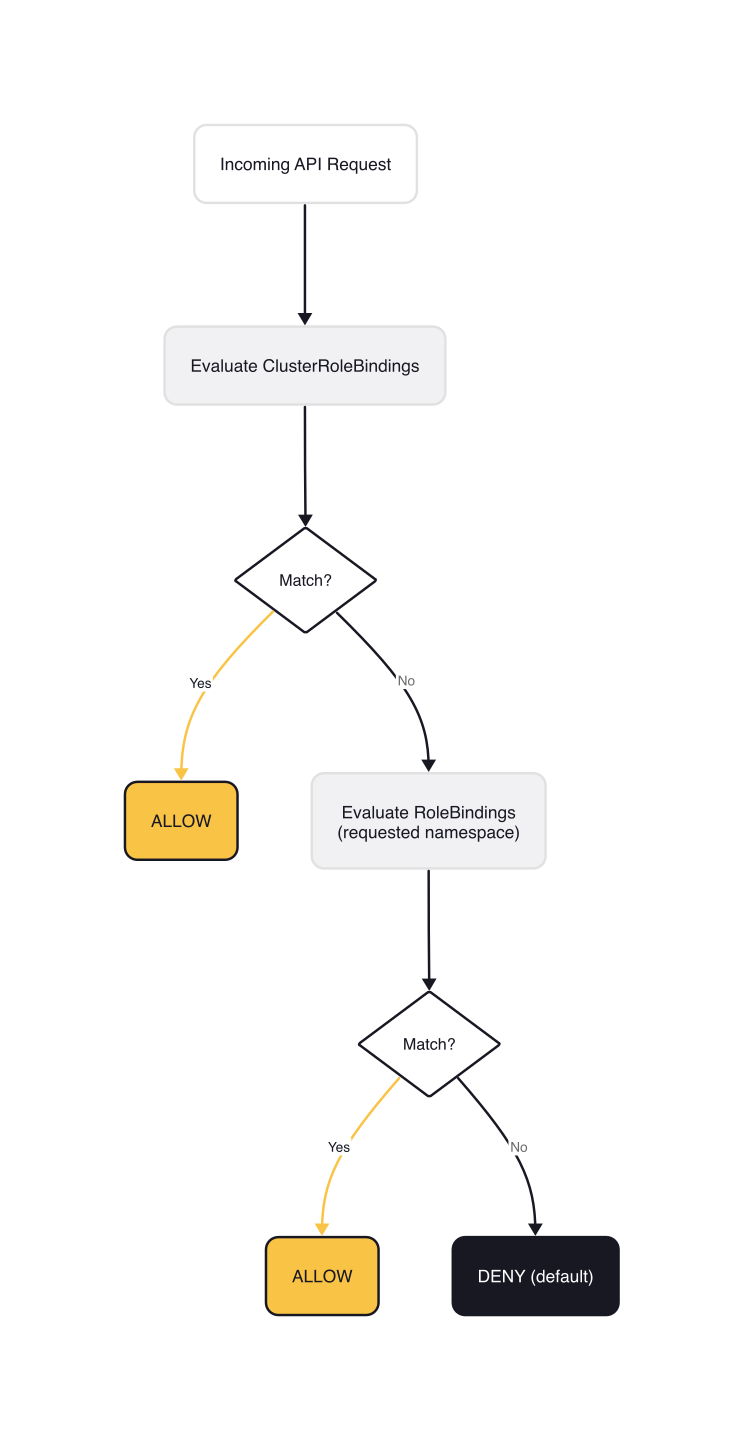

RBAC in Kubernetes is purely additive. There are no deny rules. You either have a permission or you don't. Every request goes through: authentication → authorization (RBAC) → admission. The authorization step evaluates ClusterRoleBindings first, then RoleBindings in the requested namespace, and defaults to deny:

    // kubernetes/staging/src/k8s.io/api/rbac/v1/types.go (lines 23-26)

    // Authorization is calculated against
    // 1. evaluation of ClusterRoleBindings - short circuit on match
    // 2. evaluation of RoleBindings in the namespace requested - short circuit on match
    // 3. deny by default
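The evaluation order in that comment can be sketched in a few lines of Go. This is a toy model, not the API server's authorizer: the `Binding` type and `Authorize` function are hypothetical, and each binding is assumed to be already resolved to a single verb and resource.

```go
package main

import "fmt"

// Binding is a hypothetical, simplified stand-in for a (Cluster)RoleBinding
// that has already been resolved to the single permission it grants.
type Binding struct {
	Subject   string
	Namespace string // empty means the binding is a ClusterRoleBinding
	Verb      string
	Resource  string
}

// Authorize follows the documented order: cluster-wide bindings first,
// then bindings in the requested namespace, then deny by default.
func Authorize(bindings []Binding, subject, verb, resource, namespace string) bool {
	// 1. ClusterRoleBindings: short circuit on match.
	for _, b := range bindings {
		if b.Namespace == "" && b.Subject == subject && b.Verb == verb && b.Resource == resource {
			return true
		}
	}
	// 2. RoleBindings in the requested namespace: short circuit on match.
	for _, b := range bindings {
		if b.Namespace != "" && b.Namespace == namespace &&
			b.Subject == subject && b.Verb == verb && b.Resource == resource {
			return true
		}
	}
	// 3. Deny by default. There is no rule that can subtract a permission.
	return false
}

func main() {
	bindings := []Binding{
		{Subject: "jane", Namespace: "staging", Verb: "list", Resource: "pods"},
		{Subject: "ops", Namespace: "", Verb: "get", Resource: "nodes"},
	}
	fmt.Println(Authorize(bindings, "jane", "list", "pods", "staging"))
	fmt.Println(Authorize(bindings, "jane", "list", "pods", "prod"))
	fmt.Println(Authorize(bindings, "ops", "get", "nodes", ""))
}
```

Because the model is additive, the only way to remove access is to delete or narrow the binding that grants it.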

This article covers the RBAC data model, the imperative kubectl workflow the exam demands, built-in ClusterRoles and the aggregation pattern, and the gotchas that cause real failures. ServiceAccount identity, token mechanics, and workload federation are covered in the dedicated ServiceAccounts, Tokens, and Identity lesson.

The RBAC Data Model

RBAC has four API objects. Role and RoleBinding are namespaced. ClusterRole and ClusterRoleBinding are cluster-scoped. The naming maps directly to their scope.

Permissions are expressed as PolicyRules. Each rule is a combination of API groups, resource types, and allowed verbs:

    // kubernetes/staging/src/k8s.io/api/rbac/v1/types.go (PolicyRule struct, comments adapted for clarity)

    type PolicyRule struct {
        // Verbs: get, list, watch, create, update, patch, delete, deletecollection
        Verbs []string `json:"verbs"`

        // APIGroups: "" for core API, "apps" for Deployments, "rbac.authorization.k8s.io" for RBAC objects
        // "*" means all groups
        APIGroups []string `json:"apiGroups,omitempty"`

        // Resources: plural names such as "pods", "secrets", "deployments"
        // "*" means all resources in the listed groups
        Resources []string `json:"resources,omitempty"`

        // ResourceNames: optional allowlist to restrict access to specific named objects
        ResourceNames []string `json:"resourceNames,omitempty"`

        // NonResourceURLs: paths like "/metrics", "/healthz"; only meaningful in ClusterRoles
        // (requires a ClusterRoleBinding to take effect)
        NonResourceURLs []string `json:"nonResourceURLs,omitempty"`
    }

The apiGroups field trips people up until it clicks. The core API group (Pods, Services, Secrets, ConfigMaps, Nodes) uses "". The apps group covers Deployments, StatefulSets, DaemonSets, ReplicaSets. Use kubectl api-resources -o wide to see the API group for any resource.
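To see why the API group matters, here is a minimal sketch of how a rule could be matched against a request. The trimmed `PolicyRule` type and the `Allows` method are illustrative assumptions, not the upstream matcher, which also handles subresources and non-resource URLs.

```go
package main

import "fmt"

// PolicyRule is a trimmed copy of the fields discussed above.
type PolicyRule struct {
	Verbs         []string
	APIGroups     []string
	Resources     []string
	ResourceNames []string
}

// has reports whether list contains want or the "*" wildcard.
func has(list []string, want string) bool {
	for _, v := range list {
		if v == "*" || v == want {
			return true
		}
	}
	return false
}

// Allows is a simplified matcher: verb, group, and resource must all match,
// and an empty ResourceNames list means "any object name".
func (r PolicyRule) Allows(verb, group, resource, name string) bool {
	if !has(r.Verbs, verb) || !has(r.APIGroups, group) || !has(r.Resources, resource) {
		return false
	}
	if len(r.ResourceNames) == 0 {
		return true
	}
	for _, n := range r.ResourceNames {
		if n == name {
			return true
		}
	}
	return false
}

func main() {
	rule := PolicyRule{
		Verbs:     []string{"get", "list"},
		APIGroups: []string{"apps"},
		Resources: []string{"deployments"},
	}
	// Deployments live in the "apps" group, so this matches.
	fmt.Println(rule.Allows("get", "apps", "deployments", "web"))
	// The same resource name under the core group "" does not match.
	fmt.Println(rule.Allows("get", "", "deployments", "web"))
}
```

A rule that lists `deployments` under `apiGroups: [""]` is accepted by the API server but matches nothing, which is the usual cause of a 403 that looks like it should work.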

The RoleRef field in every binding is immutable after creation. You cannot change which role a binding references. If you need to change the role, delete the binding and recreate it.

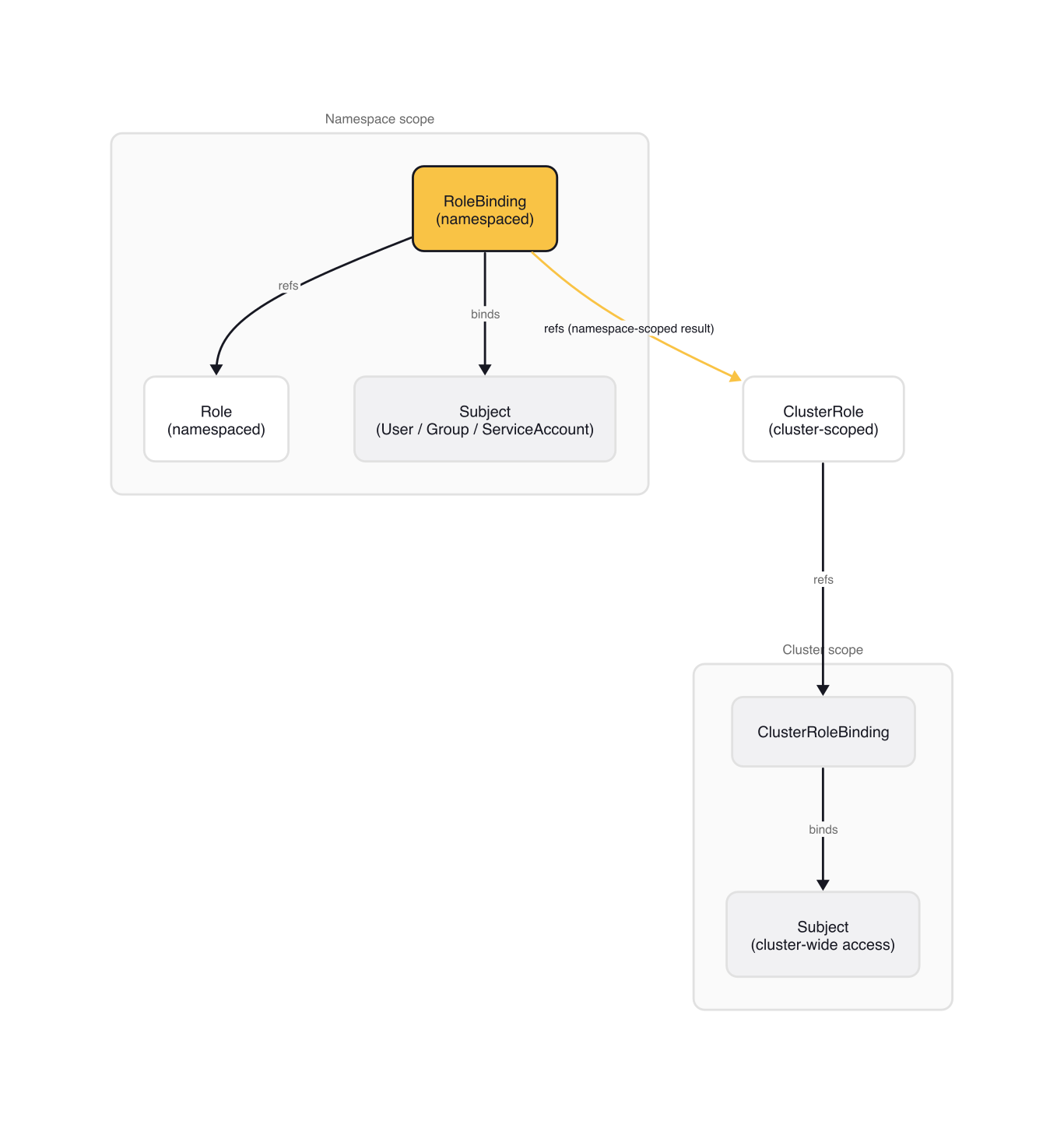

The binding matrix

The scope of permissions depends on the type of binding and whether it references a Role or ClusterRole.

| Binding type | Role type | Result |
|---|---|---|
| RoleBinding | Role | Permissions in that namespace |
| RoleBinding | ClusterRole | ClusterRole permissions scoped to that namespace |
| ClusterRoleBinding | ClusterRole | ClusterRole permissions across all namespaces |

The second row is what confuses people. A RoleBinding can reference a ClusterRole, which is useful for reusing common permission sets across namespaces without redefining them. The scope is still the namespace the RoleBinding lives in. A ClusterRoleBinding is what grants cluster-wide permissions.
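The matrix can be written as a small lookup. The `Scope` function below is a hypothetical helper for illustration; the one combination missing from the table, a ClusterRoleBinding that references a Role, is rejected by the API server, and the sketch returns an error for it.

```go
package main

import "fmt"

// Scope returns where the permissions of a binding apply.
// bindingNamespace is only meaningful for a RoleBinding.
func Scope(bindingKind, roleKind, bindingNamespace string) (string, error) {
	switch {
	case bindingKind == "RoleBinding" && (roleKind == "Role" || roleKind == "ClusterRole"):
		// The binding's namespace decides the scope, not the role kind.
		return "namespace " + bindingNamespace, nil
	case bindingKind == "ClusterRoleBinding" && roleKind == "ClusterRole":
		return "all namespaces", nil
	default:
		// For example, ClusterRoleBinding -> Role is not a valid combination.
		return "", fmt.Errorf("%s cannot reference a %s", bindingKind, roleKind)
	}
}

func main() {
	fmt.Println(Scope("RoleBinding", "Role", "staging"))
	fmt.Println(Scope("RoleBinding", "ClusterRole", "staging"))
	fmt.Println(Scope("ClusterRoleBinding", "ClusterRole", ""))
	fmt.Println(Scope("ClusterRoleBinding", "Role", ""))
}
```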

The Exam Workflow

The CKA exam is a performance-based terminal environment. You write RBAC YAML by hand only when the imperative flags can't express what you need. Most tasks use kubectl create role, kubectl create clusterrole, kubectl create rolebinding, and kubectl create clusterrolebinding.

    # Create a namespaced Role
    kubectl create role pod-reader \
      --verb=get,list,watch \
      --resource=pods \
      -n staging

    # Bind it to a user
    kubectl create rolebinding dev-pod-reader \
      --role=pod-reader \
      --user=jane \
      -n staging

    # Create a ClusterRole and bind to a ServiceAccount
    kubectl create clusterrole node-reader \
      --verb=get,list,watch \
      --resource=nodes

    kubectl create clusterrolebinding monitoring-node-reader \
      --clusterrole=node-reader \
      --serviceaccount=monitoring:prometheus

The --serviceaccount flag takes the format namespace:name. Omit the namespace and kubectl rejects the command. The silent failure is a typo in either part: kubectl does not check that the ServiceAccount exists, so the binding is created but never matches.

The get,list,watch triple appears in every example because most Kubernetes clients use informers: list populates the initial cache, watch keeps it current, and get handles direct lookups. Granting only get without list+watch breaks any controller that relies on a shared informer.
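A quick way to reason about this when reviewing a Role is to check the granted verbs against what an informer-style client needs. The helper below is a hypothetical sketch that assumes such a client requires both list and watch.

```go
package main

import "fmt"

// MissingInformerVerbs returns the verbs an informer-style client still lacks.
// Assumption for this sketch: the client needs list (initial cache fill)
// and watch (keeping the cache current); "*" grants both.
func MissingInformerVerbs(granted []string) []string {
	have := map[string]bool{}
	for _, v := range granted {
		have[v] = true
	}
	var missing []string
	for _, need := range []string{"list", "watch"} {
		if !have[need] && !have["*"] {
			missing = append(missing, need)
		}
	}
	return missing
}

func main() {
	fmt.Println(MissingInformerVerbs([]string{"get"}))
	fmt.Println(MissingInformerVerbs([]string{"get", "list", "watch"}))
}
```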

After creating bindings, verify with kubectl auth can-i:

    # Test as a specific user
    $ kubectl auth can-i list pods --as=jane -n staging
    yes

    # Test something the SA does NOT have: node-reader grants nodes, not pods
    $ kubectl auth can-i list pods \
        --as=system:serviceaccount:monitoring:prometheus
    no

    # List all permissions for a subject in a namespace
    $ kubectl auth can-i --list --as=jane -n staging
    Resources   Non-Resource URLs   Resource Names   Verbs
    pods        []                  []               [get list watch]

The --as=system:serviceaccount:namespace:name format is the standard way to impersonate a ServiceAccount for testing. Use this every time you debug "why can't this pod call the API?".
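The two formats are related: the `namespace:name` pair given to `--serviceaccount` identifies the same ServiceAccount that authenticates as `system:serviceaccount:namespace:name`. A minimal sketch of that mapping follows; `ServiceAccountUsername` is a hypothetical helper, and its input validation is an assumption of this example.

```go
package main

import (
	"fmt"
	"strings"
)

// ServiceAccountUsername converts a "namespace:name" pair into the username
// the API server sees for that ServiceAccount.
func ServiceAccountUsername(pair string) (string, error) {
	parts := strings.Split(pair, ":")
	if len(parts) != 2 || parts[0] == "" || parts[1] == "" {
		return "", fmt.Errorf("expected <namespace>:<name>, got %q", pair)
	}
	return "system:serviceaccount:" + parts[0] + ":" + parts[1], nil
}

func main() {
	user, err := ServiceAccountUsername("monitoring:prometheus")
	fmt.Println(user, err)

	// A bare name has no namespace and cannot be mapped.
	_, err = ServiceAccountUsername("prometheus")
	fmt.Println(err)
}
```

The resulting string is what goes after `--as=` when impersonating the ServiceAccount.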

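The imperative flags go further than most people expect: --resource-name restricts a rule to named objects, --resource=pods/log addresses a subresource, and --resource=deployments.apps qualifies the API group. What they cannot express is a Role with several rules that carry different verb sets. For that, use --dry-run=client -o yaml to generate a base manifest and edit it:

    kubectl create role pod-log-reader \
      --verb=get \
      --resource=pods,pods/log \
      -n staging \
      --dry-run=client -o yaml > role.yaml

    # Then edit role.yaml to add further rules (for example, list and watch on pods only) and apply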
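The reason pods/log needs its own entry is that a rule on a resource does not extend to its subresources. The sketch below shows a simplified version of that check; `resourceAllowed` is a hypothetical function that handles only exact matches and the `*` wildcard.

```go
package main

import "fmt"

// resourceAllowed is a simplified check: a rule entry matches only the exact
// "resource" or "resource/subresource" string, or the "*" wildcard.
func resourceAllowed(ruleResources []string, resource, subresource string) bool {
	requested := resource
	if subresource != "" {
		requested = resource + "/" + subresource
	}
	for _, r := range ruleResources {
		if r == "*" || r == requested {
			return true
		}
	}
	return false
}

func main() {
	// A rule on "pods" alone does not cover the log subresource.
	fmt.Println(resourceAllowed([]string{"pods"}, "pods", "log"))
	// Listing "pods/log" explicitly does.
	fmt.Println(resourceAllowed([]string{"pods", "pods/log"}, "pods", "log"))
}
```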

Built-in ClusterRoles and Aggregation

Kubernetes ships four default ClusterRoles for human access: cluster-admin, admin, edit, and view. The bootstrap policy in the Kubernetes source defines the verb sets these roles use:

    // kubernetes/plugin/pkg/auth/authorizer/rbac/bootstrappolicy/policy.go (bootstrap verb sets)

    var (
        Write     = []string{"create", "update", "patch", "delete", "deletecollection"}
        ReadWrite = []string{"get", "list", "watch", "create", "update", "patch", "delete", "deletecollection"}
        Read      = []string{"get", "list", "watch"}
        // ReadUpdate, Label, Annotation omitted
    )

cluster-admin is bound to the system:masters group at bootstrap through an ordinary ClusterRoleBinding, but that binding is not what makes the group powerful: the API server treats system:masters as a hardcoded superuser group that bypasses the authorization layer entirely. Membership cannot be revoked by removing bindings. Never add users to system:masters.

The admin, edit, and view roles use aggregation. Instead of hardcoding their full permission set, they pull rules from other ClusterRoles that match a label selector:

    // kubernetes/plugin/pkg/auth/authorizer/rbac/bootstrappolicy/policy.go

    func ClusterRolesToAggregate() map[string]string {
        return map[string]string{
            "admin": "system:aggregate-to-admin",
            "edit":  "system:aggregate-to-edit",
            "view":  "system:aggregate-to-view",
        }
    }

The values in this map are the names of bootstrap ClusterRoles that hold the default rules, not label keys. What the aggregation controller matches on is a label: rbac.authorization.k8s.io/aggregate-to-admin, rbac.authorization.k8s.io/aggregate-to-edit, or rbac.authorization.k8s.io/aggregate-to-view, each set to "true". Add the right aggregation labels to a new ClusterRole and the controller picks it up automatically.
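Conceptually, aggregation is a union of rules over every ClusterRole that carries the matching label. The following is a rough sketch of that idea, not the controller's actual code: the `ClusterRole` type is simplified, and each rule is flattened to a plain string for brevity.

```go
package main

import (
	"fmt"
	"sort"
)

// ClusterRole is a hypothetical, simplified model for this sketch.
type ClusterRole struct {
	Name   string
	Labels map[string]string
	Rules  []string // each rule flattened to "verb resource"
}

// Aggregate returns the union of rules from every ClusterRole that carries
// labelKey set to "true", which is what ends up in the aggregated role.
func Aggregate(roles []ClusterRole, labelKey string) []string {
	seen := map[string]bool{}
	var out []string
	for _, cr := range roles {
		if cr.Labels[labelKey] != "true" {
			continue // not selected by the aggregation rule
		}
		for _, r := range cr.Rules {
			if !seen[r] {
				seen[r] = true
				out = append(out, r)
			}
		}
	}
	sort.Strings(out)
	return out
}

func main() {
	const viewLabel = "rbac.authorization.k8s.io/aggregate-to-view"
	roles := []ClusterRole{
		{Name: "system:aggregate-to-view", Labels: map[string]string{viewLabel: "true"}, Rules: []string{"get pods", "list pods"}},
		{Name: "custom-crd-view", Labels: map[string]string{viewLabel: "true"}, Rules: []string{"get widgets"}},
		{Name: "unlabeled", Rules: []string{"delete pods"}},
	}
	fmt.Println(Aggregate(roles, viewLabel))
}
```

The practical consequence is that labeling a ClusterRole widens every existing binding to view, edit, or admin, so review those labels as carefully as the rules themselves.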

Flux2 does exactly this for all its CRD types:

    # fluxcd/flux2/manifests/rbac/view.yaml
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: flux-view
      labels:
        rbac.authorization.k8s.io/aggregate-to-admin: "true"
        rbac.authorization.k8s.io/aggregate-to-edit: "true"
        rbac.authorization.k8s.io/aggregate-to-view: "true"
    rules:
      - apiGroups:
          - notification.toolkit.fluxcd.io
          - source.toolkit.fluxcd.io
          - source.extensions.fluxcd.io
          - helm.toolkit.fluxcd.io
          - image.toolkit.fluxcd.io
          - kustomize.toolkit.fluxcd.io
        resources: ["*"]
        verbs:
          - get
          - list
          - watch

Once this ClusterRole is installed, kubectl get helmreleases works for any user or ServiceAccount bound to the view, edit, or admin ClusterRole, within whatever scope that binding grants.

Some workloads genuinely need cluster-scoped access. The Prometheus ClusterRole from kube-prometheus shows why: nodes/metrics is a subresource on a cluster-scoped resource, and nonResourceURLs can only appear in a ClusterRole:

    # prometheus-operator/kube-prometheus/manifests/prometheus-clusterRole.yaml
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      # labels omitted
      name: prometheus-k8s
    rules:
      - apiGroups: [""]
        resources: ["nodes/metrics"]
        verbs: ["get"]
      - nonResourceURLs:
          - /metrics
          - /metrics/slis
        verbs: ["get"]

If you tried to express /metrics access in a namespaced Role, the API server would reject it. nonResourceURLs rules are valid only in ClusterRoles, and they take effect only through a ClusterRoleBinding.
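The rejection amounts to a validation step that depends on whether the role is namespaced. A minimal sketch under that assumption follows; the `Rule` type and `ValidateRule` function are hypothetical, and the real validation performs further checks not shown here.

```go
package main

import (
	"errors"
	"fmt"
)

// Rule is a hypothetical, trimmed-down PolicyRule for this sketch.
type Rule struct {
	Resources       []string
	NonResourceURLs []string
	Verbs           []string
}

// ValidateRule rejects non-resource URLs when the rule belongs to a
// namespaced Role; a URL path such as /metrics has no namespace.
func ValidateRule(r Rule, namespaced bool) error {
	if len(r.NonResourceURLs) > 0 && namespaced {
		return errors.New("namespaced rules cannot apply to non-resource URLs")
	}
	return nil
}

func main() {
	metrics := Rule{NonResourceURLs: []string{"/metrics"}, Verbs: []string{"get"}}
	fmt.Println(ValidateRule(metrics, true))  // as part of a Role
	fmt.Println(ValidateRule(metrics, false)) // as part of a ClusterRole
}
```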

Gotchas

RoleRef is immutable. Once a binding is created, you cannot change which role it references. The API server returns a clear error if you try to update roleRef, but the fix is not obvious: delete the binding and recreate it. In the exam, this means you sometimes need to delete an existing binding before you can fix it.

list secrets gives you everything. The Kubernetes docs flag this explicitly: a subject with list on Secrets gets the full object data, including secret values, in the API response. Granting list is functionally equivalent to granting get for data exfiltration. Only grant the verb combination you actually need.

ClusterRoleBinding scopes to all namespaces, always. A ClusterRoleBinding to the view ClusterRole gives read access across every namespace in the cluster, including namespaces created in the future. The correct pattern for per-namespace access is RoleBinding → ClusterRole (not ClusterRoleBinding).

The escalate and bind verbs are privilege escalation vectors. A subject with escalate on roles can create or update Roles with more permissions than they themselves hold. A subject with bind on a role can create bindings to it without holding its permissions, which lets them grant that role to themselves. The RBAC good practices guide recommends never granting these outside tightly controlled automation. Treat them like cluster-admin.
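The escalation check that these verbs bypass can be sketched as a coverage test: without escalate, a subject may only write a Role whose rules they already hold. The functions below are hypothetical, and permissions are flattened to plain strings for brevity.

```go
package main

import "fmt"

// covers reports whether every requested permission is already held.
// Permissions are flattened to "verb resource" strings in this sketch.
func covers(held, requested []string) bool {
	have := map[string]bool{}
	for _, p := range held {
		have[p] = true
	}
	for _, p := range requested {
		if !have[p] {
			return false
		}
	}
	return true
}

// CanWriteRole models the escalation check: the write is allowed if the
// subject holds the escalate verb or already holds every rule in the Role.
func CanWriteRole(held []string, hasEscalate bool, newRules []string) bool {
	return hasEscalate || covers(held, newRules)
}

func main() {
	held := []string{"get pods", "list pods"}
	wanted := []string{"get pods", "get secrets"}

	fmt.Println(CanWriteRole(held, false, wanted)) // blocked: "get secrets" is not held
	fmt.Println(CanWriteRole(held, true, wanted))  // escalate skips the coverage check
}
```

The same shape applies to bind: without it, creating a binding requires already holding the permissions of the referenced role.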

Wrap-up

RBAC reduces to three decisions per permission grant: what (a PolicyRule with verbs, API groups, and resources), where (namespace scope via RoleBinding, or cluster scope via ClusterRoleBinding), and who (a Subject: User, Group, or ServiceAccount). The exam tests all three, almost always in the form of a workload that's failing because a binding is missing or incorrectly scoped.

Scope is the decision with the highest error rate. A RoleBinding that references a ClusterRole gives you reusability with namespace isolation. A ClusterRoleBinding removes that isolation entirely. Getting that distinction wrong in production means granting cluster-wide access when you intended per-namespace.

Tip (deep dive): For the full treatment of ServiceAccount identity, token mechanics, and workload federation, see ServiceAccounts, Tokens, and Identity.

Next in the Cluster Architecture module: preparing the infrastructure before running kubeadm, covering network prerequisites, container runtime configuration, and the kernel settings that determine whether kubeadm init succeeds or fails with a preflight error.

Cluster Architecture, Installation and Configuration (1 of 18)
