Pod-to-Pod Communication and the Networking Model

The pod can't reach its database. Same namespace, different node. kubectl exec confirms the pod is Running, its IP is assigned, routes look sane from the source node. Packets are simply not arriving at the destination. This is the moment when "Kubernetes networking just works" stops being good enough, and you need to understand what's actually happening beneath the surface.

Kubernetes makes three specific promises about pod networking: every pod gets a unique cluster-wide IP address, pods can reach all other pods without NAT, and node agents can reach all pods on that node. These are guarantees, not aspirations. But they're implemented by a CNI plugin, usually installed and kept current by a DaemonSet on every node, not by Kubernetes core. When the plugin or its DaemonSet fails, the guarantees can break together, and the failure mode looks exactly like the scenario above.

This article covers the IP-per-Pod model, how CNI plugins use veth pairs and IPAM to wire each pod into the network, how cross-node routing works with VXLAN and direct routing backends, and how to diagnose connectivity failures from first principles.

The IP-per-Pod Model

Kubernetes addresses four distinct networking problems, each at a different layer. Container-to-container communication is handled by shared network namespaces: containers within the same pod share one network stack (the same eth0) and communicate over localhost. Pod-to-pod communication is handled by the CNI plugin and the cluster network it builds. Pod-to-service communication is handled by kube-proxy (or eBPF programs in implementations like Cilium). External-to-service communication is handled by LoadBalancer Services and Ingress. This article focuses on the second problem: pod-to-pod communication.

The Kubernetes networking model specifies three guarantees:

- Every pod gets a unique IP address across the entire cluster.

- Any pod can reach any other pod directly by IP, without NAT.

- Node agents (kubelet, kube-proxy) can reach all pods on their node.

The design intent is to make pods look like VMs from a networking perspective. No port mapping. No NAT gymnastics. Applications listen on their natural port, and other pods connect to that port directly. This is why you can run a Redis cluster in Kubernetes with the exact same configuration you'd use on bare metal: the network topology is flat, and the IP is stable for the pod's lifetime.

Docker's default model wraps all of this in NAT: containers get private IPs, external access requires explicit port mapping, and two containers on different hosts wanting port 6379 require coordination. That breaks at cluster scale. Kubernetes's flat model eliminates the entire problem class.

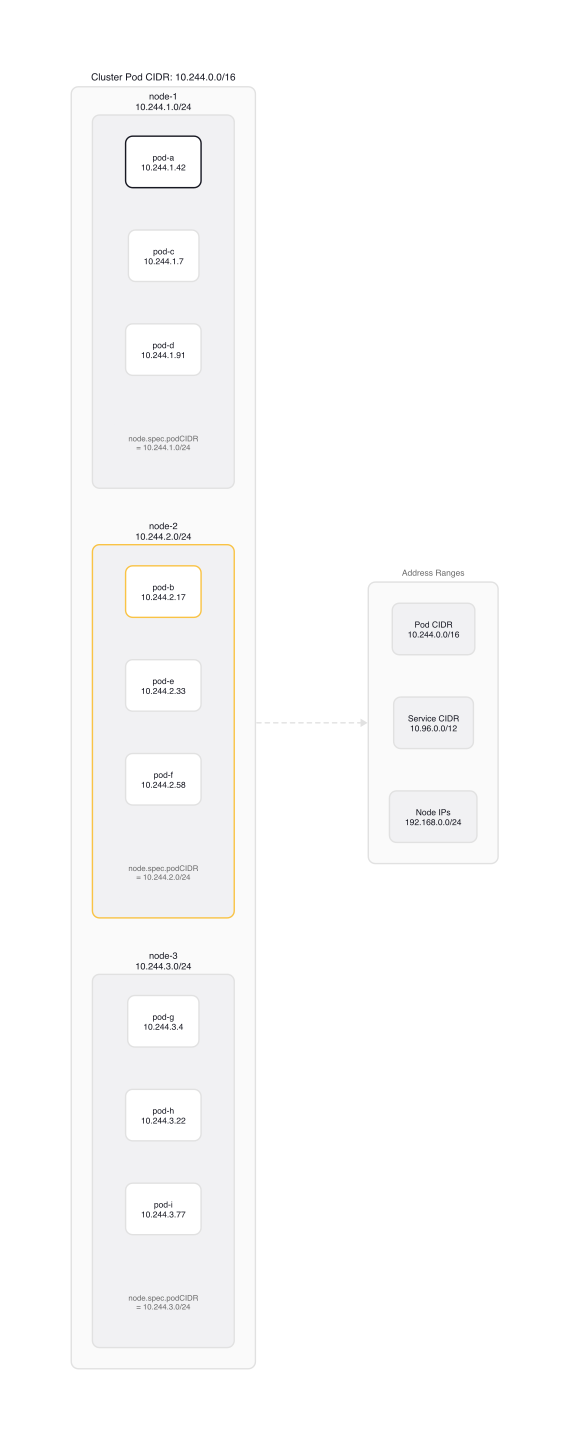

IP address space is divided into three non-overlapping ranges: the pod CIDR (Flannel defaults to 10.244.0.0/16, Calico to 192.168.0.0/16), the service CIDR (kubeadm defaults to 10.96.0.0/12), and the node addresses (your physical network). The pod CIDR is subdivided into per-node blocks: each node gets a /24 (or smaller) range for its pods, visible in node.spec.podCIDR:

    $ kubectl get nodes -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.spec.podCIDR}{"\n"}{end}'
    node-1    10.244.1.0/24
    node-2    10.244.2.0/24
    node-3    10.244.3.0/24

The per-node CIDR is the foundation: each node owns a specific block, and CNI plugins use that block to allocate IPs to pods and program cross-node routes.
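To make the subdivision concrete, here is a minimal Go sketch that carves a cluster pod CIDR into fixed-size per-node blocks. The function name `nodeSubnet` and the idea of picking a block by index are assumptions made for illustration; real clusters assign blocks through the controller manager or the CNI plugin's own IPAM, not with this code.

```go
package main

import (
	"fmt"
	"net/netip"
)

// nodeSubnet returns the index-th /bits block inside the cluster CIDR.
// Hypothetical helper for illustration only; IPv4 only.
func nodeSubnet(cluster string, bits, index int) (netip.Prefix, error) {
	c, err := netip.ParsePrefix(cluster)
	if err != nil {
		return netip.Prefix{}, err
	}
	if !c.Addr().Is4() || bits < c.Bits() || bits > 32 {
		return netip.Prefix{}, fmt.Errorf("cannot split %s into /%d blocks", cluster, bits)
	}
	if index < 0 || index >= 1<<(bits-c.Bits()) {
		return netip.Prefix{}, fmt.Errorf("block index %d out of range", index)
	}
	// Treat the base address as a 32-bit integer and add index * blockSize.
	b := c.Masked().Addr().As4()
	n := uint32(b[0])<<24 | uint32(b[1])<<16 | uint32(b[2])<<8 | uint32(b[3])
	n += uint32(index) << (32 - bits)
	addr := netip.AddrFrom4([4]byte{byte(n >> 24), byte(n >> 16), byte(n >> 8), byte(n)})
	return netip.PrefixFrom(addr, bits), nil
}

func main() {
	for i := 1; i <= 3; i++ {
		p, err := nodeSubnet("10.244.0.0/16", 24, i)
		if err != nil {
			panic(err)
		}
		fmt.Printf("node-%d\t%s\n", i, p)
	}
}
```

With the Flannel default of 10.244.0.0/16 and /24 blocks, indexes 1 to 3 reproduce the three node CIDRs listed above.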

CNI: The Contract Between Runtime and Network Plugin

CNI (Container Network Interface) is a specification, not an implementation. It defines a protocol between a container runtime (containerd, CRI-O) and a network plugin binary. The plugin is short-lived: it's executed by the runtime each time a pod sandbox is created or deleted, then exits. The DaemonSet that ships with most CNI plugins exists to install and update the binary on each node, and to maintain cluster-level state like VXLAN forwarding tables and BGP routes.

The runtime passes context to the plugin via environment variables and JSON on stdin. The CmdArgs struct in pkg/skel/skel.go defines exactly what the plugin receives:

    // pkg/skel/skel.go (lines 38-46); field comments added for clarity, the original has none
    type CmdArgs struct {
        ContainerID   string // unique container identifier from the runtime
        Netns         string // path to the pod's network namespace, e.g. /var/run/netns/cni-xxx
        IfName        string // interface name to create inside the pod, typically eth0
        Args          string // optional key=value pairs from CNI_ARGS env variable
        Path          string // colon-separated directories to search for plugin binaries
        NetnsOverride string
        StdinData     []byte // the full CNI config JSON from /etc/cni/net.d/
    }

The environment variables CNI_CONTAINERID, CNI_NETNS, CNI_IFNAME, CNI_ARGS, and CNI_PATH populate this struct, and CNI_COMMAND selects the operation. The three operations that matter for the pod lifecycle are ADD (attach pod to network), DEL (detach and clean up), and CHECK (verify network state is consistent with what ADD set up). The spec also defines VERSION, which reports the spec versions the plugin supports.
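The shape of that contract can be sketched in a few lines of Go. This is not the skel package: `cniArgs`, `loadArgs`, and `describe` are hypothetical names, and the per-command validation is deliberately simpler than what the real library does. It only shows how the environment and stdin turn into a dispatch decision.

```go
package main

import (
	"fmt"
	"io"
	"os"
)

// cniArgs is a hypothetical, trimmed-down stand-in for skel.CmdArgs.
type cniArgs struct {
	Command, ContainerID, Netns, IfName, Path string
	StdinData                                 []byte
}

// loadArgs copies the CNI_* variables into a struct. getenv is injected
// (os.Getenv in real use) so the function can run without a container runtime.
func loadArgs(getenv func(string) string, stdin []byte) (cniArgs, error) {
	a := cniArgs{
		Command:     getenv("CNI_COMMAND"),
		ContainerID: getenv("CNI_CONTAINERID"),
		Netns:       getenv("CNI_NETNS"),
		IfName:      getenv("CNI_IFNAME"),
		Path:        getenv("CNI_PATH"),
		StdinData:   stdin,
	}
	switch a.Command {
	case "ADD", "DEL", "CHECK":
		// Simplification: the real skel package validates a different
		// set of variables for each command.
		if a.ContainerID == "" || a.IfName == "" {
			return a, fmt.Errorf("%s requires CNI_CONTAINERID and CNI_IFNAME", a.Command)
		}
	case "VERSION":
		// No per-pod context needed.
	default:
		return a, fmt.Errorf("unknown CNI_COMMAND %q", a.Command)
	}
	return a, nil
}

// describe reports what a plugin would do for the parsed command.
func describe(a cniArgs) string {
	switch a.Command {
	case "ADD":
		return "attach " + a.IfName + " in " + a.Netns
	case "DEL":
		return "detach " + a.IfName + " for " + a.ContainerID
	case "CHECK":
		return "verify " + a.IfName + " in " + a.Netns
	default:
		return "report supported versions"
	}
}

func main() {
	stdin, err := io.ReadAll(os.Stdin)
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	a, err := loadArgs(os.Getenv, stdin)
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	fmt.Println(describe(a))
}
```

Running the binary with CNI_COMMAND=ADD, CNI_CONTAINERID, CNI_NETNS, and CNI_IFNAME set, and a config on stdin, mimics what the runtime does for every new pod sandbox.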

What ADD Does

The simplest complete CNI plugin to read is ptp, which creates a point-to-point link between the host and a single pod. setupContainerVeth in plugins/main/ptp/ptp.go shows the complete wiring:

    // plugins/main/ptp/ptp.go: setupContainerVeth
    err := netns.Do(func(hostNS ns.NetNS) error {
        // Create the veth pair: one end (ifName/eth0) stays in the container namespace,
        // the other (hostVeth) is moved back to the host namespace.
        hostVeth, contVeth0, err := ip.SetupVeth(ifName, mtu, "", hostNS)
        ...
        // Assign the IP address and configure the interface inside the container.
        if err = ipam.ConfigureIface(ifName, pr); err != nil {
            return err
        }
        // Delete the automatically-added broad subnet route, then add:
        //   - A /32 host route to the gateway via eth0 (scope: link)
        //   - A subnet route (e.g., 10.244.1.0/24) via that gateway, covering the pod CIDR
        ...
    })

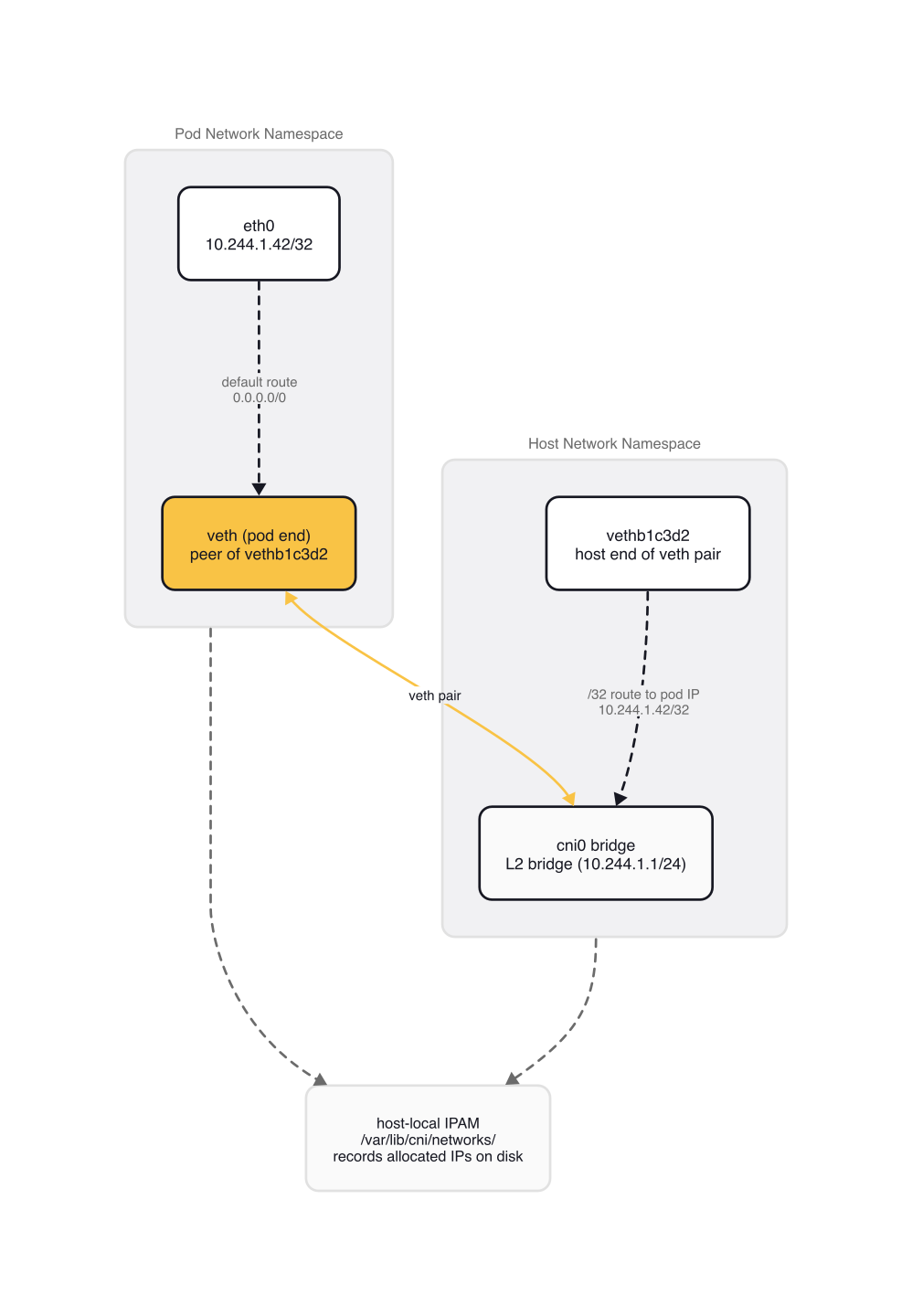

setupHostVeth mirrors this on the host side: it assigns the gateway IP to the host-end veth and adds a /32 route pointing to the pod IP. The result is a direct point-to-point path: the kernel routes pod IP traffic out the specific veth, not through a broadcast domain.

The IPAM step calls a separate plugin. The host-local IPAM plugin allocates an IP from the node's pod CIDR and persists the allocation to disk. From plugins/ipam/host-local/main.go:

    // plugins/ipam/host-local/main.go: cmdAdd
    store, err := disk.New(ipamConf.Name, ipamConf.DataDir)
    // DataDir defaults to /var/lib/cni/networks/<name>/
    // Each file is named by IP address and contains the container ID holding that lease.
    ...
    for idx, rangeset := range ipamConf.Ranges {
        allocator := allocator.NewIPAllocator(&rangeset, store, idx)
        ipConf, err := allocator.Get(args.ContainerID, args.IfName, requestedIP)
        ...
        result.IPs = append(result.IPs, ipConf)
    }

Allocations survive pod restarts (the file persists on disk) but are entirely node-local. On DEL, cmdDel calls ipAllocator.Release(args.ContainerID, args.IfName) to remove the file and return the IP to the pool.
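The file-per-IP idea is simple enough to sketch from scratch. The following is a toy model, not host-local's code: `leaseDir`, `reserve`, `allocate`, `release`, and `demo` are hypothetical names, and the sketch skips locking, range sets, and the interface name that the real store also records. It shows why an exclusive file create is enough to make an allocation stick.

```go
package main

import (
	"errors"
	"fmt"
	"net/netip"
	"os"
	"path/filepath"
)

// leaseDir is a directory holding one file per allocated IP.
type leaseDir string

// reserve creates a file named after ip holding the owner ID. O_EXCL makes
// the create fail if the address is already leased.
func (d leaseDir) reserve(ip netip.Addr, owner string) (bool, error) {
	f, err := os.OpenFile(filepath.Join(string(d), ip.String()),
		os.O_WRONLY|os.O_CREATE|os.O_EXCL, 0o600)
	if errors.Is(err, os.ErrExist) {
		return false, nil
	}
	if err != nil {
		return false, err
	}
	defer f.Close()
	if _, err := f.WriteString(owner); err != nil {
		return false, err
	}
	return true, nil
}

// allocate hands out the first free address in [first, last].
func (d leaseDir) allocate(first, last netip.Addr, owner string) (netip.Addr, error) {
	for ip := first; ip.IsValid() && ip.Compare(last) <= 0; ip = ip.Next() {
		ok, err := d.reserve(ip, owner)
		if err != nil {
			return netip.Addr{}, err
		}
		if ok {
			return ip, nil
		}
	}
	return netip.Addr{}, fmt.Errorf("no free address in %s-%s", first, last)
}

// release deletes every lease file whose content matches owner.
func (d leaseDir) release(owner string) error {
	entries, err := os.ReadDir(string(d))
	if err != nil {
		return err
	}
	for _, e := range entries {
		path := filepath.Join(string(d), e.Name())
		data, err := os.ReadFile(path)
		if err != nil {
			return err
		}
		if string(data) == owner {
			if err := os.Remove(path); err != nil {
				return err
			}
		}
	}
	return nil
}

// demo fills a two-address pool, frees one lease, and allocates again.
func demo() ([]string, error) {
	dir, err := os.MkdirTemp("", "leases")
	if err != nil {
		return nil, err
	}
	defer os.RemoveAll(dir)
	d := leaseDir(dir)
	first, last := netip.MustParseAddr("10.244.1.2"), netip.MustParseAddr("10.244.1.3")
	var out []string
	for _, owner := range []string{"ctr-a", "ctr-b"} {
		ip, err := d.allocate(first, last, owner)
		if err != nil {
			return nil, err
		}
		out = append(out, ip.String())
	}
	if _, err := d.allocate(first, last, "ctr-c"); err == nil {
		return nil, errors.New("expected the two-address pool to be exhausted")
	}
	if err := d.release("ctr-a"); err != nil {
		return nil, err
	}
	ip, err := d.allocate(first, last, "ctr-c")
	if err != nil {
		return nil, err
	}
	return append(out, ip.String()), nil
}

func main() {
	ips, err := demo()
	if err != nil {
		panic(err)
	}
	fmt.Println(ips)
}
```

The third allocation reuses the address that ctr-a released, which is the same pool-return behavior described for DEL above.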

The Bridge Variant

Many CNI deployments, including Flannel's default configuration, use the bridge plugin rather than ptp. Instead of a direct host-to-pod veth, each pod's veth connects to a Linux bridge (cni0 by default). All pods on the same node share this bridge, which acts as their layer-2 switch. The bridge.go NetConf struct shows the key fields:

    // plugins/main/bridge/bridge.go: NetConf
    const defaultBrName = "cni0"

    type NetConf struct {
        types.NetConf
        BrName      string `json:"bridge"`      // defaults to "cni0"
        IsGW        bool   `json:"isGateway"`   // bridge gets the gateway IP
        // ...
        IPMasq      bool   `json:"ipMasq"`      // outbound SNAT for traffic leaving cluster
        // ...
        MTU         int    `json:"mtu"`
        HairpinMode bool   `json:"hairpinMode"`
        // ...
    }

IPAM is delegated identically to ptp: the bridge itself knows nothing about IP addresses, it just switches frames. When isGateway is set to true in the config (the IsGW field), the bridge interface receives the gateway IP for the node's pod CIDR, and all pods on that node default-route through it.

The CNI config file that orchestrates this lives in /etc/cni/net.d/. A typical Flannel configuration uses a plugin chain:

/etc/cni/net.d/10-flannel.conflist:

    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": { "portMappings": true }
        }
      ]
    }

The runtime calls each plugin in sequence. The portmap plugin runs after flannel to handle hostPort mappings. Each plugin in the chain can read the previous plugin's result from prevResult in stdin.
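How a conflist becomes per-plugin stdin is easier to see in code. The sketch below is an approximation of what the runtime's CNI library does, not its implementation: `stdinFor` is a hypothetical helper that copies one plugin entry, stamps in the list-level name and cniVersion, and attaches the previous plugin's result under prevResult.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// stdinFor builds the JSON handed to plugin i of a conflist. prevResult is
// the raw JSON result of the previous plugin, or "" for the first plugin.
// Hypothetical helper for illustration only.
func stdinFor(conflist string, i int, prevResult string) (string, error) {
	var list struct {
		Name       string                       `json:"name"`
		CNIVersion string                       `json:"cniVersion"`
		Plugins    []map[string]json.RawMessage `json:"plugins"`
	}
	if err := json.Unmarshal([]byte(conflist), &list); err != nil {
		return "", err
	}
	if i < 0 || i >= len(list.Plugins) {
		return "", fmt.Errorf("plugin index %d out of range", i)
	}
	conf := map[string]any{}
	for k, v := range list.Plugins[i] {
		conf[k] = v // plugin-specific keys pass through untouched
	}
	conf["name"] = list.Name
	conf["cniVersion"] = list.CNIVersion
	if prevResult != "" {
		conf["prevResult"] = json.RawMessage(prevResult)
	}
	out, err := json.Marshal(conf) // map keys are emitted in sorted order
	return string(out), err
}

func main() {
	list := `{"name":"cbr0","cniVersion":"0.3.1","plugins":[{"type":"flannel"},{"type":"portmap"}]}`
	first, err := stdinFor(list, 0, "")
	if err != nil {
		panic(err)
	}
	fmt.Println(first)

	// Pretend the first plugin returned this result.
	second, err := stdinFor(list, 1, `{"ips":[{"address":"10.244.1.5/24"}]}`)
	if err != nil {
		panic(err)
	}
	fmt.Println(second)
}
```

The second line of output is what lets portmap see the IP that the flannel plugin assigned, without the two plugins knowing about each other.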

Cross-Node Routing: Overlay vs. Direct

The veth pair and bridge handle intra-node communication. For cross-node traffic, a pod on node-1 (IP 10.244.1.5) sending to a pod on node-2 (IP 10.244.2.7) exits the bridge, hits the host kernel routing table, and needs a route to 10.244.2.0/24. That route doesn't exist by default. Installing and maintaining those routes across all nodes is the job of the CNI plugin's DaemonSet.

The approach determines whether you need L2 adjacency between nodes and what the per-packet overhead is.

Overlay with Flannel VXLAN

VXLAN encapsulates the original pod IP packet inside a UDP packet. Each node runs a VXLAN device (flannel.1) that handles encapsulation and decapsulation. The Flannel DaemonSet watches the Kubernetes API for node subnet lease events. When a new node joins, handleSubnetEvents in pkg/backend/vxlan/vxlan_network.go programs three pieces of kernel state in sequence:

    // pkg/backend/vxlan/vxlan_network.go: handleSubnetEvents (EventAdded)
    // (simplified for clarity; actual source wraps each call in retry.Do() for transient kernel failures)

    // Step 1: ARP entry mapping the remote pod subnet IP to the remote VTEP MAC.
    // The kernel uses this to resolve the next-hop MAC without broadcasting ARP.
    nw.dev.AddARP(neighbor{IP: sn.IP, MAC: net.HardwareAddr(vxlanAttrs.VtepMAC)})

    // Step 2: FDB entry mapping the remote VTEP MAC to the remote node's physical IP.
    // The VXLAN device uses this to know which physical host to send the UDP packet to.
    nw.dev.AddFDB(neighbor{IP: attrs.PublicIP, MAC: net.HardwareAddr(vxlanAttrs.VtepMAC)})

    // Step 3: Kernel route: 10.244.2.0/24 via 10.244.2.0 dev flannel.1
    // This is the route that directs pod-CIDR traffic into the VXLAN device.
    netlink.RouteReplace(&vxlanRoute)

When node-1 sends to 10.244.2.7, the kernel routes it to flannel.1, which looks up the FDB entry to find the physical IP of node-2, wraps the packet in UDP on port 8472, and sends it over the physical network. node-2's flannel.1 device decapsulates and delivers the original packet.

VXLAN works across L3 boundaries: cloud VPCs, different racks, any infrastructure where nodes aren't on the same L2 segment. The cost is 50 bytes of encapsulation overhead per packet (the encapOverhead = 50 constant in vxlan_network.go). With a standard 1500-byte NIC MTU, pod interface MTU must be set to 1450 or lower.

If directRouting: true is set, Flannel's VXLAN mode decides per remote node. For a node on the same subnet as the local node, it skips the ARP/FDB programming and installs a direct kernel route, just as host-gw does. For a node on a different subnet, it keeps using VXLAN encapsulation. This means you can run a single Flannel deployment that routes directly wherever nodes share a subnet and tunnels everywhere else.
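The per-node decision boils down to a subnet membership test. Here is a minimal sketch of that test; `backendFor` is a hypothetical name and the exact check Flannel performs is not shown in this article, so treat this as the idea rather than the implementation.

```go
package main

import (
	"fmt"
	"net/netip"
)

// backendFor picks how to reach a remote node: a direct route when the
// remote address falls inside the local interface's subnet, VXLAN otherwise.
// Hypothetical helper for illustration only.
func backendFor(localIface, remoteNode string) (string, error) {
	local, err := netip.ParsePrefix(localIface) // e.g. "192.168.1.11/24"
	if err != nil {
		return "", err
	}
	remote, err := netip.ParseAddr(remoteNode)
	if err != nil {
		return "", err
	}
	if local.Masked().Contains(remote) {
		return "direct", nil
	}
	return "vxlan", nil
}

func main() {
	for _, remote := range []string{"192.168.1.12", "192.168.2.12"} {
		b, err := backendFor("192.168.1.11/24", remote)
		if err != nil {
			panic(err)
		}
		fmt.Printf("%s -> %s\n", remote, b)
	}
}
```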

Direct Routing with Flannel host-gw

Host-gw skips encapsulation entirely. For each remote pod CIDR, Flannel programs a kernel route pointing at the physical IP of the node that owns it. From pkg/backend/hostgw/hostgw.go:

    // pkg/backend/hostgw/hostgw.go: RegisterNetwork
    n.GetRoute = func(lease *lease.Lease) *netlink.Route {
        return &netlink.Route{
            Dst:       lease.Subnet.ToIPNet(),       // 10.244.2.0/24
            Gw:        lease.Attrs.PublicIP.ToIP(),  // physical IP of the node owning that subnet
            LinkIndex: n.LinkIndex,
        }
    }

Zero encapsulation overhead, full MTU available, simpler to debug with ip route show. The requirement is L2 adjacency between all nodes: every node must be reachable at layer 2, which means the same physical subnet with no NAT between them.

The New() function rejects the NAT case at startup:

    // pkg/backend/hostgw/hostgw.go: New
    if !extIface.ExtAddr.Equal(extIface.IfaceAddr) {
        return nil, fmt.Errorf("your PublicIP differs from interface IP, " +
            "meaning that probably you're on a NAT, which is not supported by host-gw backend")
    }

You hit this error when the public IP that Flannel is configured with differs from the address on the node's interface, which is the usual situation when instances on AWS, GCP, or Azure reach each other through NAT-mapped public addresses. Host-gw is a good fit for bare metal clusters or cloud VMs where all nodes share a single subnet.

BGP and eBPF

Calico takes direct routing further with BGP: each node runs a BGP speaker that advertises its pod CIDR prefix. Peer nodes install kernel routes automatically, without requiring L2 adjacency, as long as there's a functioning BGP fabric. Calico also supports IPIP or VXLAN encapsulation as a fallback when direct routing isn't possible.

Cilium can replace iptables and kube-proxy with eBPF programs attached at tc ingress/egress hooks. The difference is architectural: iptables rule evaluation scales O(n) with rule count, while Cilium's eBPF datapath does a single BPF hash map lookup regardless of cluster size. On a node with 500 services (not unusual in a large microservices cluster), iptables-save | wc -l can return 40,000+ lines. Cilium collapses that to one lookup. It also supports L7 network policies and distributed packet tracing built directly into the dataplane. The operational complexity is genuinely higher than Flannel or basic Calico.

For the CKA exam: know the overlay vs. direct routing trade-off (L3 compatibility vs. encapsulation overhead), and when each breaks.

Diagnosing Pod Connectivity Failures

When two pods can't communicate, establish the basic facts before looking at kernel-level networking.

Confirm both pods are running and get their IPs and node assignments:

    $ kubectl get pod pod-a pod-b -o wide
    NAME    READY   STATUS    IP            NODE
    pod-a   1/1     Running   10.244.1.42   node-1
    pod-b   1/1     Running   10.244.2.17   node-2

Test reachability directly from the source pod:

    $ kubectl exec -it pod-a -- curl -sS --max-time 5 http://10.244.2.17:8080
    curl: (28) Connection timed out after 5001 milliseconds

The two failure modes mean different things. A timeout means packets aren't arriving (or replies aren't coming back), which points to a routing or filtering problem. A connection refused means the destination is reachable but nothing is listening on that port.
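If curl isn't available in the image, the same distinction can be drawn with a tiny Go probe. This is a sketch, assuming a Linux node where a refused connection surfaces as ECONNREFUSED; `probe` and `demo` are hypothetical names, and the demo only exercises the "open" and "refused" cases against a local listener.

```go
package main

import (
	"errors"
	"fmt"
	"net"
	"os"
	"syscall"
	"time"
)

// probe dials addr over TCP and classifies the outcome.
func probe(addr string, timeout time.Duration) string {
	conn, err := net.DialTimeout("tcp", addr, timeout)
	if err == nil {
		conn.Close()
		return "open"
	}
	var nerr net.Error
	switch {
	case errors.Is(err, syscall.ECONNREFUSED):
		return "refused" // host reachable, nothing listening
	case errors.As(err, &nerr) && nerr.Timeout():
		return "timeout" // packets or replies are being lost
	default:
		return "error: " + err.Error()
	}
}

// demo probes a local listener before and after closing it.
func demo() (open, refused string, err error) {
	ln, err := net.Listen("tcp", "127.0.0.1:0")
	if err != nil {
		return "", "", err
	}
	addr := ln.Addr().String()
	open = probe(addr, time.Second)
	ln.Close()
	refused = probe(addr, time.Second)
	return open, refused, nil
}

func main() {
	if len(os.Args) == 2 {
		// Usage: probe 10.244.2.17:8080
		fmt.Println(probe(os.Args[1], 5*time.Second))
		return
	}
	open, refused, err := demo()
	if err != nil {
		panic(err)
	}
	fmt.Println(open, refused)
}
```

A "timeout" result from the source pod is the signal to keep going down the checklist below; "refused" means the network path is fine and the problem is in the destination container.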

Check node pod CIDR assignments:

    $ kubectl get nodes -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.spec.podCIDR}{"\n"}{end}'
    node-1    10.244.1.0/24
    node-2    10.244.2.0/24

Then inspect the routing table on node-1:

    $ ip route show
    default via 192.168.1.1 dev eth0
    10.244.1.0/24 dev cni0 proto kernel scope link src 10.244.1.1
    10.244.2.0/24 via 10.244.2.0 dev flannel.1 onlink

If the route to 10.244.2.0/24 is missing, the Flannel DaemonSet isn't maintaining state. Check it:

    $ kubectl get pods -n kube-flannel -o wide
    NAME                    READY   STATUS             NODE
    kube-flannel-ds-4vr9x   0/1     CrashLoopBackOff   node-1
    kube-flannel-ds-mk2pn   1/1     Running            node-2

A crashed Flannel pod on node-1 means it hasn't installed the VXLAN forwarding entries for any nodes that joined after its last successful run. Pods on node-1, old or new, won't be able to reach pods on any node added to the cluster since then.

Check the veth interfaces on the host to confirm a pod is properly wired:

    $ ip link show type veth
    5: vethb1c3d2@if4: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qlen 1000
        link/ether 6a:7b:8c:9d:0e:1f brd ff:ff:ff:ff:ff:ff link-netns cni-abc123

The MTU of 1450 confirms the VXLAN overhead is accounted for. An MTU of 1500 on a VXLAN cluster is a misconfiguration.

For pods stuck in ContainerCreating, the CNI error appears in pod events:

    $ kubectl describe pod pod-name | grep -A5 Events
    Events:
      Warning  FailedCreatePodSandBox  Failed to create pod sandbox:
        rpc error: code = Unknown desc = failed to setup network for sandbox:
        failed to find plugin "flannel" in path [/opt/cni/bin]

This specific error means the CNI binary is missing from the node. The Flannel DaemonSet's initContainer copies the binary to /opt/cni/bin/. If that initContainer failed, new pods on this node can't start.

Gotchas

MTU mismatch with VXLAN is the most common silent failure. The host NIC has MTU 1500. VXLAN adds 50 bytes of overhead, so pod interface MTU must be ≤1450. If it's set to 1500, packets smaller than ~1450 bytes (most control plane traffic, small API calls) appear to work normally. Packets above that threshold, including large file transfers and connections that negotiate a high TCP MSS, are silently dropped. The symptom: applications that work fine under light load and fail mysteriously under real workloads.
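The arithmetic behind that threshold is short enough to write down. The sketch below assumes an IPv4 underlay, where the 50 bytes break down as outer IPv4 header (20), UDP header (8), VXLAN header (8), and the inner Ethernet header (14). The function names are hypothetical.

```go
package main

import "fmt"

// vxlanOverhead assumes an IPv4 underlay:
// outer IPv4 (20) + UDP (8) + VXLAN (8) + inner Ethernet (14).
const vxlanOverhead = 20 + 8 + 8 + 14

// podMTU is the largest pod interface MTU that still fits after encapsulation.
func podMTU(nicMTU int) int { return nicMTU - vxlanOverhead }

// fitsUnderlay reports whether an inner IP packet of innerLen bytes can be
// encapsulated and sent over a NIC with the given MTU without fragmentation.
func fitsUnderlay(innerLen, nicMTU int) bool {
	return innerLen+vxlanOverhead <= nicMTU
}

// tcpMSS is the TCP payload per segment for a given MTU, assuming IPv4 and
// no TCP options (20-byte IP header + 20-byte TCP header).
func tcpMSS(mtu int) int { return mtu - 40 }

func main() {
	fmt.Println(podMTU(1500))             // 1450
	fmt.Println(fitsUnderlay(1450, 1500)) // true
	fmt.Println(fitsUnderlay(1500, 1500)) // false: 1550 bytes on a 1500-byte link
	fmt.Println(tcpMSS(podMTU(1500)))     // 1410
}
```

A pod interface left at MTU 1500 lets TCP negotiate an MSS of 1460, so full-size segments become 1550-byte outer packets that a 1500-byte NIC cannot carry.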

hostNetwork pods bypass CNI-assigned IPs. Pods with hostNetwork: true share the node's network namespace and use the node's IP address, not a pod CIDR address. NetworkPolicy enforcement is unreliable for these pods. On the CKA exam, a hostNetwork pod shows the node's IP in kubectl get pod -o wide, not a pod CIDR IP.

CNI version mismatch can leave orphaned network namespaces. containerd v1.6.0-v1.6.3 had a bug where an empty or missing cniVersion field in the CNI config caused "Failed to destroy network for sandbox" on pod deletion. Network namespaces accumulated until the node ran out of namespace slots. If you're seeing sandbox cleanup errors and pod deletion hanging on an older containerd version, check the cniVersion field in /etc/cni/net.d/.

IP exhaustion on nodes with small pod CIDRs. A /24 gives 254 usable addresses. With maxPods: 110 (the kubelet default), that leaves headroom for churn. Clusters that allocate a /28 per node (16 addresses, 14 usable) hit the limit at 14 pods or fewer. The node appears healthy, and because the scheduler has no view of IPAM state it keeps placing pods there. Those pods then sit in ContainerCreating, and the exhaustion only shows up in the sandbox-creation error from the IPAM plugin (host-local reports "no IP addresses available in range set").
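A quick capacity check catches this before the cluster is built. The helper names below are hypothetical, and the sketch assumes the usual IPv4 convention of excluding the network and broadcast addresses; a bridge gateway, where used, takes one more.

```go
package main

import "fmt"

// usableAddrs returns the host addresses in an IPv4 block of the given
// prefix length, excluding the network and broadcast addresses.
// Prefix lengths outside 2..30 are treated as unsupported and return 0.
func usableAddrs(prefixLen int) int {
	if prefixLen < 2 || prefixLen > 30 {
		return 0
	}
	return 1<<(32-prefixLen) - 2
}

// fitsMaxPods reports whether a per-node block can hold maxPods pods
// plus `reserved` extra addresses (for example, one for a bridge gateway).
func fitsMaxPods(prefixLen, maxPods, reserved int) bool {
	return usableAddrs(prefixLen)-reserved >= maxPods
}

func main() {
	for _, bits := range []int{24, 26, 28} {
		fmt.Printf("/%d: %d usable, fits 110 pods: %v\n",
			bits, usableAddrs(bits), fitsMaxPods(bits, 110, 1))
	}
}
```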

NetworkPolicy without a supporting CNI is silently ignored. You can create NetworkPolicy objects on any Kubernetes cluster: the API accepts them regardless of whether the installed CNI enforces them. Flannel doesn't implement NetworkPolicy. Objects created on a Flannel cluster are syntactically valid and completely ignored. No error, no warning. If you're relying on NetworkPolicy for security isolation, verify your CNI supports it before deploying those policies.

Wrap-up

The IP-per-Pod model guarantees a flat address space where every pod is directly routable from anywhere in the cluster. CNI plugins enforce that guarantee by creating veth pairs, allocating IPs from per-node CIDRs via IPAM, and programming kernel routes or VXLAN forwarding tables for cross-node traffic. The choice of backend determines both the infrastructure constraints (VXLAN for L3 compatibility, host-gw for zero-overhead direct routing, BGP for large-scale direct routing, eBPF for high-performance policy enforcement) and the specific failure modes you'll encounter.

When pod-to-pod connectivity fails, the CNI plugin is always in the call chain. Check its DaemonSet status before looking anywhere else.

Next: kube-proxy: iptables, IPVS, and Service Routing covers how Kubernetes translates Service virtual IPs into actual pod endpoints — the layer that sits on top of the flat network described here. After that, Network Policies shows how to control exactly who can reach whom.

Services and Networking (1 of 10)

